Dataphin Data Integration's offline pipeline feature lets you develop pipelines visually from components. After you create an offline pipeline script, you can drag components from the component library onto the canvas. This visual approach simplifies development and makes it easier to organize source and destination data sources. This topic describes how to develop an offline pipeline task using the component library.

Prerequisites

Before you develop an offline pipeline, create the corresponding development script. For more information, see "Create an integration task through a single pipeline".

Offline pipeline component development entry

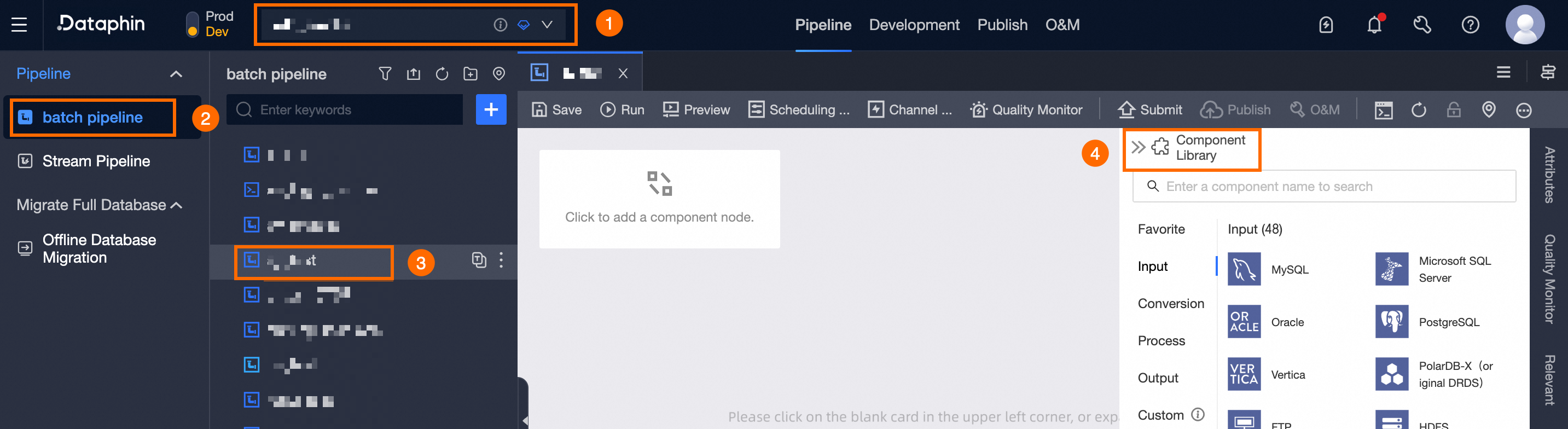

Navigate to the Dataphin home page and select Development -> Data Integration from the top menu bar.

To access the Offline Pipeline Component development page, follow these steps:

Choose a Project (Dev-Prod mode requires selecting an environment) -> click Batch Pipeline -> select and click the offline pipeline you wish to develop -> click Component Library.

Offline component library development instructions

Typically, a complete offline pipeline is composed of one or more Inputs, zero or more Transforms and Flows, and one or more Outputs.

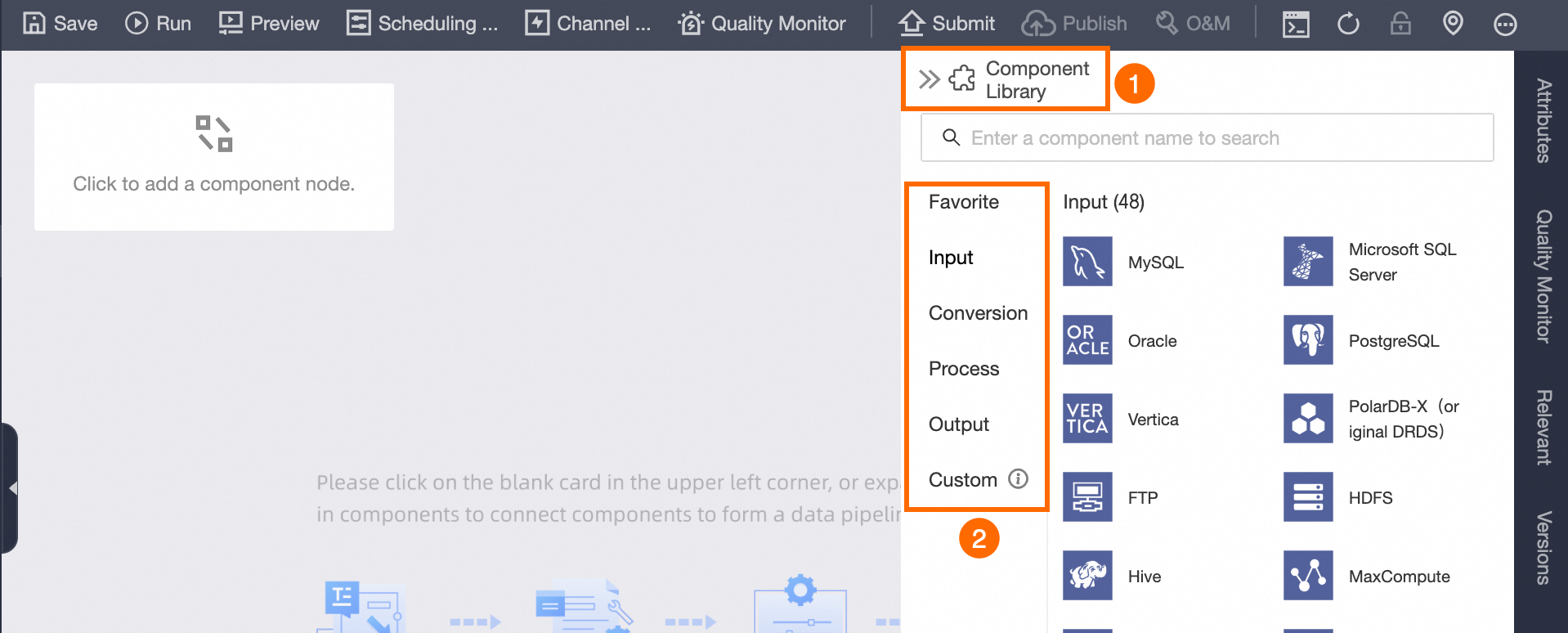

On the development page for a single offline pipeline script, click Component Library in the upper right corner to reveal components such as Favorite, Inputs, Transforms, Flows, Outputs, and Custom components.

Favorite components

Click the Favorite icon to access the components that the currently logged-in account has favorited in the other component libraries. This lets you quickly select and reuse frequently used components.

Input components

Input components are the origin of the data in a pipeline. Depending on the type of your business data, select the appropriate component and drag it onto the pipeline canvas on the left to start reading data. For an explanation of the functions of each input component, see the configuration details for each component.

Input components cannot have ancestor nodes.

The descendant node of an Input can be a Transform, Output, or Flow.

When the Input component is connected to multiple descendant nodes, such as Outputs or Transforms, it is necessary to select a Data Sending Method for the Input component.

Replication: The data from the ancestor node is replicated equally among the descendant nodes, with each descendant node receiving the full data set from the ancestor node.

Round-robin Distribution: The data from the ancestor node is distributed in a round-robin fashion among the descendant nodes, ensuring the combined data of all descendant nodes equals that of the ancestor node.
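The difference between the two sending methods can be illustrated with a small Python sketch. This is not Dataphin code: the function names `replicate` and `round_robin` and the list-based representation of rows are hypothetical, chosen only to show which rows each descendant node would receive.

```python
from typing import Any, List

Row = Any


def replicate(rows: List[Row], n_descendants: int) -> List[List[Row]]:
    """Replication: every descendant receives the full data set."""
    return [list(rows) for _ in range(n_descendants)]


def round_robin(rows: List[Row], n_descendants: int) -> List[List[Row]]:
    """Round-robin: rows are dealt out in turn, one descendant at a time."""
    outputs: List[List[Row]] = [[] for _ in range(n_descendants)]
    for i, row in enumerate(rows):
        outputs[i % n_descendants].append(row)
    return outputs


# Hypothetical example: one ancestor node with five rows and two descendants.
rows = ["r1", "r2", "r3", "r4", "r5"]
replicated = replicate(rows, 2)    # each descendant holds all five rows
distributed = round_robin(rows, 2)  # the five rows are split across descendants
```

With replication, the total number of rows sent downstream is the ancestor's row count multiplied by the number of descendants. With round-robin distribution, the descendants' rows add up to exactly the ancestor's row count.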

Output components

Output components write data to the destination data source. Select the output component that matches your business requirements and drag it onto the pipeline canvas on the left. For the functions of each output component, see the configuration details of each component.

Output components cannot have descendant nodes.

Flow components

Dataphin provides flow control during data integration through two types of components: throttling and conditional distribution. For an in-depth look at the functions of each component, see the configuration details of each component.

Flow components cannot serve as the initial or terminal nodes in an offline pipeline; however, they can be positioned anywhere between the start and end of the pipeline script.
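The placement rules stated so far (an Input has no ancestors, an Output has no descendants, a Flow is neither first nor last) can be expressed as a simple check over a graph of components. The following Python sketch is only an illustration under assumed names: `validate_pipeline`, the `kinds` mapping, and the component names are hypothetical and do not correspond to any Dataphin API.

```python
from typing import Dict, List, Tuple


def validate_pipeline(kinds: Dict[str, str],
                      edges: List[Tuple[str, str]]) -> List[str]:
    """Return rule violations; an empty list means the layout is valid.

    kinds maps a component name to "input", "transform", "flow", or "output".
    edges is a list of (upstream, downstream) connections.
    """
    has_ancestor = {dst for _, dst in edges}
    has_descendant = {src for src, _ in edges}
    errors: List[str] = []
    for name, kind in kinds.items():
        if kind == "input" and name in has_ancestor:
            errors.append(f"{name}: an Input cannot have ancestor nodes")
        if kind == "output" and name in has_descendant:
            errors.append(f"{name}: an Output cannot have descendant nodes")
        if kind == "flow" and (name not in has_ancestor
                               or name not in has_descendant):
            errors.append(f"{name}: a Flow cannot be the first or last node")
    return errors


# Hypothetical pipeline: MySQL input -> throttling -> Hive output.
kinds = {"mysql_in": "input", "throttle": "flow", "hive_out": "output"}
valid = validate_pipeline(
    kinds, [("mysql_in", "throttle"), ("throttle", "hive_out")])
# Same components, but the Flow is left dangling at the end of the pipeline.
invalid = validate_pipeline(kinds, [("mysql_in", "throttle")])
```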

When a Flow component is connected to multiple descendant nodes, such as Transforms, Outputs, or Flows, you must select a Data Sending Method for it, in the same way as for an Input component.

If the Flow component is a Conditional Distribution component, you must specify the distribution condition when connecting the components:

Select Condition Result Is True to send data downstream when the ancestor node's result is true.

Select Condition Result Is False to send data downstream when the ancestor node's result is false.
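Conceptually, a conditional distribution splits the incoming rows into a branch for which the condition is true and a branch for which it is false. The Python sketch below shows that idea only; `conditional_distribution`, the sample `orders` rows, and the `amount >= 100` condition are hypothetical and are not taken from Dataphin.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, int]


def conditional_distribution(
    rows: List[Row], condition: Callable[[Row], bool]
) -> Tuple[List[Row], List[Row]]:
    """Split rows into (condition is true, condition is false) branches."""
    true_branch: List[Row] = []
    false_branch: List[Row] = []
    for row in rows:
        if condition(row):
            true_branch.append(row)
        else:
            false_branch.append(row)
    return true_branch, false_branch


# Hypothetical rows and condition: route large orders to one descendant.
orders = [
    {"id": 1, "amount": 50},
    {"id": 2, "amount": 500},
    {"id": 3, "amount": 120},
]
large, small = conditional_distribution(orders, lambda r: r["amount"] >= 100)
```

A descendant connected with Condition Result Is True would correspond to the `large` branch here, and one connected with Condition Result Is False to the `small` branch.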

Transform components

Transform components process the source data from input components by performing operations such as computation, filtering, and encryption on data fields. For the functions of each transform component, see the configuration details of each component.

Transform components can be connected to multiple downstream components, such as Transforms, Outputs, and Flows. When a Transform component has multiple descendant nodes, you must select a Data Sending Method for it, in the same way as for an Input component.

Directed connections

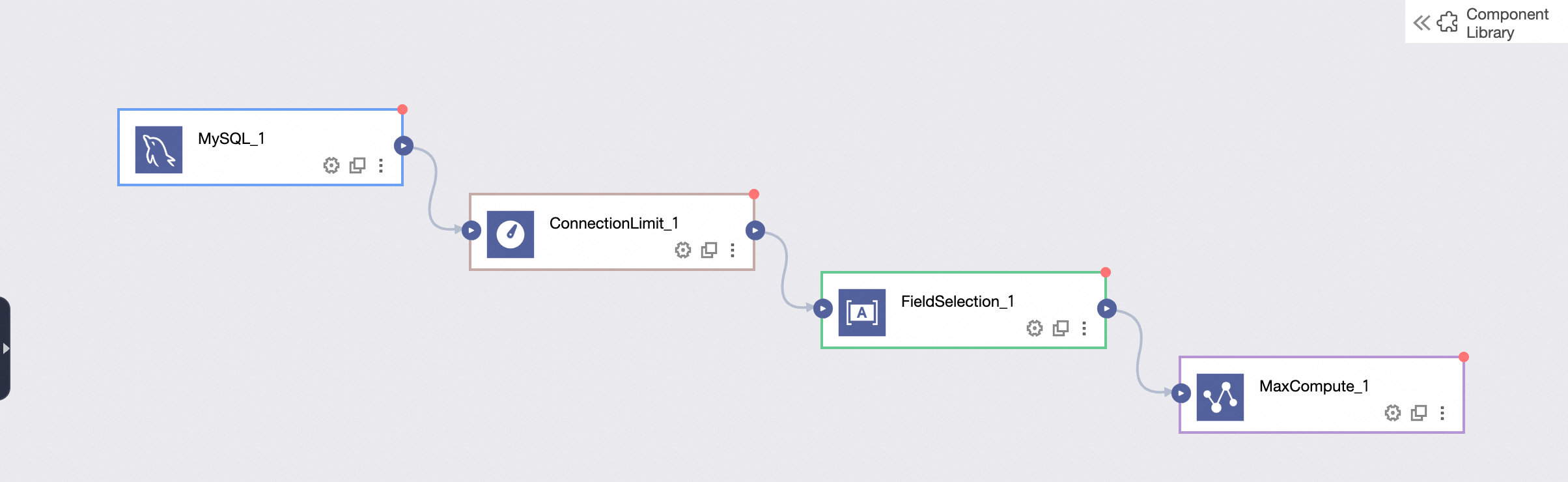

After selecting the required components, use directed connections to link upstream input components to downstream transform, flow, and output components. At run time, the integration task executes the components in the order defined by these directed connections. The figure above shows the upstream and downstream relationships between connected components.
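The ordering implied by directed connections is a topological order of the component graph: a component comes after all of its upstream components. The sketch below derives such an order with Python's standard `graphlib` module (Python 3.9 or later). The component names and the `connections` list are hypothetical, and the sketch only illustrates the ordering; it does not describe how the Dataphin runtime is actually implemented.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical directed connections as (upstream, downstream) pairs.
connections = [
    ("mysql_input", "filter"),
    ("filter", "throttling"),
    ("throttling", "hive_output"),
]

sorter = TopologicalSorter()
for upstream, downstream in connections:
    # add(node, *predecessors): the downstream node depends on the upstream one.
    sorter.add(downstream, upstream)

# Upstream components always appear before their downstream components.
execution_order = list(sorter.static_order())
```

If the connections formed a cycle, `static_order()` would raise `graphlib.CycleError`, which matches the expectation that a pipeline's directed connections never loop back on themselves.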

Canvas operations

The pipeline canvas supports the simultaneous construction of multiple pipeline scripts. Additionally, right-clicking on the pipeline canvas allows you to perform various operations.

| Operation | Description |
| --- | --- |
| Copy | Copy existing components on the pipeline canvas. |
| Paste | Paste the copied pipeline components onto the pipeline canvas. |
| Delete | Delete the selected components from the canvas. |
| Select All | Select all components on the pipeline canvas. |
| Lasso Select | Use the mouse to lasso and select multiple components on the canvas. |

Switch to code editor components

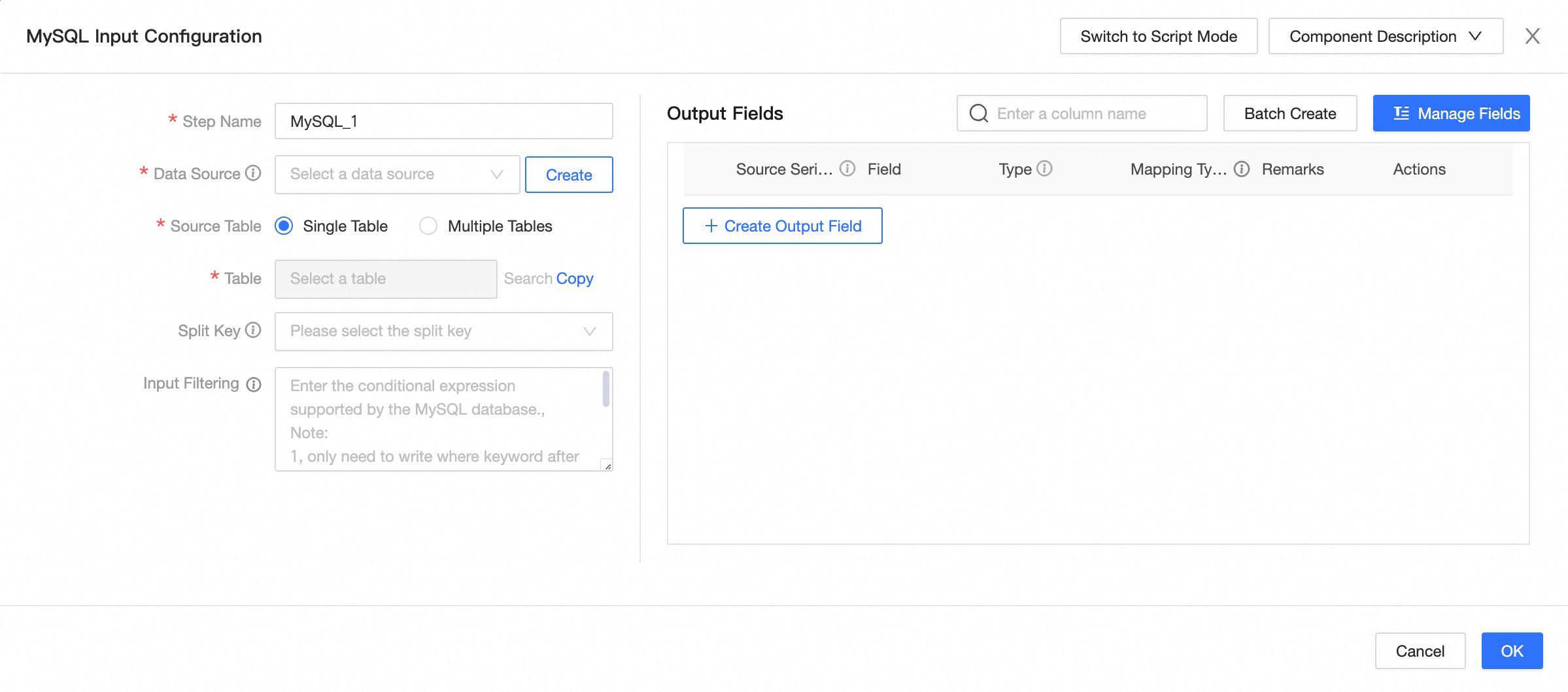

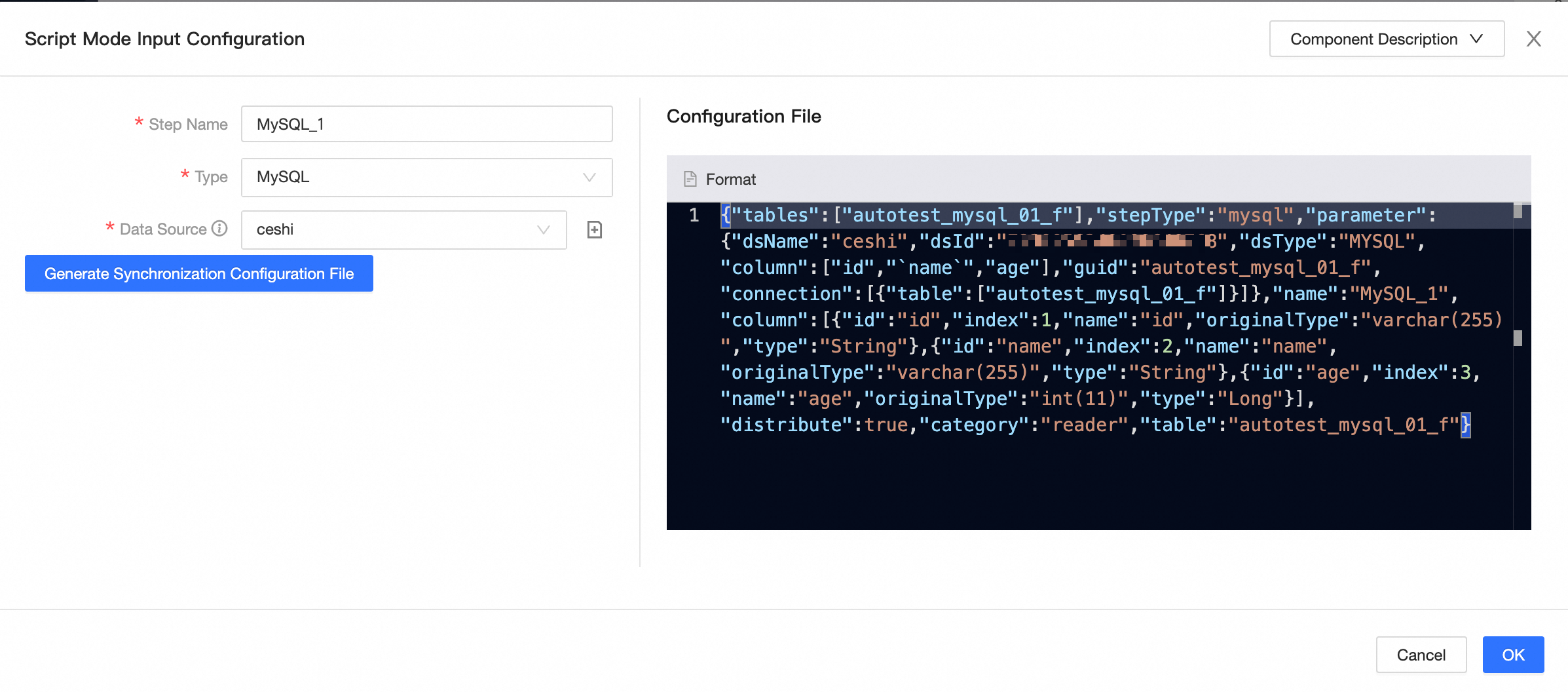

For components other than LogicalTable, code editor, and local file, the input and output components in the configuration dialog box support switching to Code Editor mode. Once switched, it cannot be reverted. The following figure uses the MySQL input component as an example.

Before switching | After switching |

|

|

Component configuration instructions

For instructions on configuring the components supported by Dataphin, see the tables below:

Input components

| Component Name | Component Configuration |
| --- | --- |
| MySQL | |
| Oracle | |
| Vertica | |
| FTP | |
| Hive | |
| HBase | |
| LogicalTable | |
| AnalyticDB for PostgreSQL | |
| PolarDB | |
| Local file | |
| Teradata | |
| OceanBase | |
| Hologres | |
| TDH Inceptor | |
| DataHub | |
| DM | |
| TiDB | |
| GBase 8a | |
| SAP Table | |
| StarRocks | |
| Elasticsearch | |
| ArgoDB | |
| Salesforce | |
| SelectDB | |
| Microsoft SQL Server | |
| PostgreSQL | |
| PolarDB-X (formerly DRDS) | |
| HDFS | |
| MaxCompute | |
| MongoDB | |
| AnalyticDB for MySQL 3.0 | |
| Log Service | |
| OSS | |
| SAP HANA | |
| IBM DB2 | |
| Code editor input | |
| ClickHouse | |
| Kafka | |
| API | |
| KingbaseES | |
| GoldenDB | |
| Impala | |
| OpenGauss | |
| Kudu | |
| Greenplum | |
| Doris | |
| Amazon S3 | |
| Lindorm (compute engine) | |

Output components

| Component Name | Configuration Instructions |
| --- | --- |
| MySQL | |
| Oracle | |
| Vertica | |
| FTP | |
| Hive | |
| HBase | |
| AnalyticDB for MySQL 2.0 | |
| AnalyticDB for MySQL 3.0 | |
| PolarDB | |
| SAP HANA | |
| IBM DB2 | |
| Code editor output | |
| ClickHouse | |
| Kafka | |
| KingbaseES | |
| GoldenDB | |
| Impala | |
| StarRocks | |
| Greenplum | |
| ArgoDB | |
| Amazon S3 | |
| Microsoft SQL Server | |
| PostgreSQL | |
| PolarDB-X (formerly DRDS) | |
| HDFS | |
| MaxCompute | |
| MongoDB | |
| Elasticsearch | |
| AnalyticDB for PostgreSQL | |
| OSS | |
| Teradata | |
| OceanBase | |
| Hologres | |
| TDH Inceptor | |
| DataHub | |
| DM | |
| TiDB | |
| GBase 8a | |
| OpenGauss | |
| API | |
| Redis | |
| Doris | |
| SelectDB | |
| Lindorm (compute engine) | |

Transform components

| Component Name | Component Configuration |
| --- | --- |
| Field Selection | |
| Signature Calculation | |
| Filter | |
| Encryption | |
| Decryption | |

Flow components

| Component Name | Configuration Instructions |
| --- | --- |
| Throttling | |
| Conditional Distribution | |

Custom components

To use custom components in Dataphin, you must first create them in the platform. Once created, they are available for selection in the component library. For detailed instructions, see "Creating an offline custom source type".