The AnalyticDB for MySQL 2.0 output component writes data to an AnalyticDB for MySQL 2.0 data source. When you synchronize data from another data source to AnalyticDB for MySQL 2.0, configure the target data source after you configure the source data source. This topic describes how to configure the AnalyticDB for MySQL 2.0 output component.

Procedure

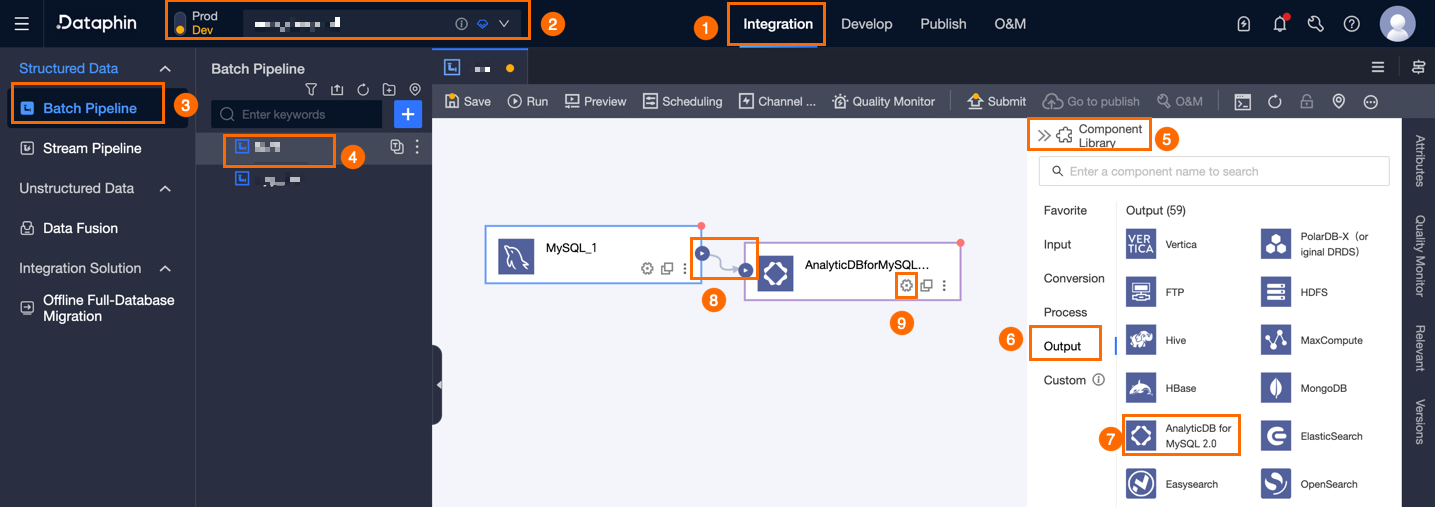

In the top navigation bar of the Dataphin homepage, choose Develop > Data Integration.

In the top navigation bar of the Integration page, select a project. In Dev-Prod mode, you need to select an environment.

In the left navigation bar, click Batch Pipeline. In the Batch Pipeline list, click the offline pipeline that you want to develop to open its configuration page.

Click Component Library in the upper-right corner of the page to open the Component Library panel.

In the left navigation bar of the Component Library panel, select Outputs. In the output component list on the right, find the AnalyticDB for MySQL 2.0 component and drag it to the canvas.

Click and drag the icon of the target input, transform, or flow component to connect it to the current AnalyticDB for MySQL 2.0 output component.

Click the icon on the AnalyticDB for MySQL 2.0 output component card to open the Output Configuration dialog box.

In the AnalyticDB for MySQL 2.0 Output Configuration dialog box, configure the parameters.

Parameter

Description

Basic Settings

Step Name

The name of the AnalyticDB for MySQL 2.0 output component. Dataphin automatically generates a step name, which you can modify based on your business scenario. The name must meet the following requirements:

It can contain only Chinese characters, letters, underscores (_), and digits.

It cannot exceed 64 characters in length.
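The naming rules above can be checked before a step name is entered. The following is a minimal sketch, not Dataphin's own validation: the helper name `is_valid_step_name` is hypothetical, "Chinese characters" is approximated by the basic CJK Unified Ideographs block, and rejecting an empty name is an assumption.

```python
import re

# Assumption: "Chinese characters" is approximated by the basic CJK Unified
# Ideographs block (U+4E00 to U+9FFF); the product's real check may differ.
# Assumption: an empty name is rejected (the topic does not say).
_STEP_NAME = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_]{1,64}")


def is_valid_step_name(name: str) -> bool:
    """Return True if name uses only allowed characters and has 1 to 64 characters."""
    return _STEP_NAME.fullmatch(name) is not None
```

For example, `is_valid_step_name("adb_output_1")` passes, while a name containing a hyphen or a 65-character name does not.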

Datasource

The data source dropdown list displays all AnalyticDB for MySQL 2.0 data sources, including those for which you have write-through permissions and those for which you do not. Click the icon to copy the current data source name.

For data sources for which you do not have write-through permissions, you can click Request next to the data source to request write-through permissions. For more information, see Request, renew, and return data source permissions.

If you do not have an AnalyticDB for MySQL 2.0 data source, click Create Data Source to create one. For more information, see Create an AnalyticDB for MySQL 2.0 data source.

Time Zone

The time zone used to process time format data. The default is the time zone configured in the selected data source and cannot be modified.

Note: For tasks created before V5.1.2, you can select Data Source Default Configuration or Channel Configuration Time Zone. The default is Channel Configuration Time Zone.

Data Source Default Configuration: The default time zone of the selected data source.

Channel Configuration Time Zone: The time zone configured in Properties > Channel Configuration for the current integration task.

Table

Select the target table for output data. You can enter a keyword to search for a table or enter the exact table name and click Exact Match. After you select a table, the system automatically checks the table status. Click the icon to copy the name of the currently selected table.

Mode

Select the data output mode. The following modes are available:

Insert Mode: Insert mode supports writing small amounts of data (less than 10 million records). You need to configure Preparation Statement and Completion Statement.

Preparation Statement: The SQL script to execute before import.

Completion Statement: The SQL script to execute after import.

Load Mode: Load mode supports writing large amounts of data (more than 10 million records). You need to configure Loading Policy, Load Parameters, and Alibaba Cloud Account.

Loading Policy: Select the policy for writing data to the target table.

Overwrite Data means that the historical data in the target table is overwritten based on the current source table.

Append Data means that data is appended to the existing data in the target table without modifying the historical data.

Load Parameters: The connection information used for the MaxCompute transfer, in JSON format. For example:

{"accessid":"XXX","accessKey":"XXX","odpsServer":"XXX","tunnelServer":"XXX","accountType":"aliyun","project":"transfer_project"}

Alibaba Cloud Account: Required in Load mode. The Alibaba Cloud account used to authorize the data load. Enter it in the format ALIYUN$****_data@aliyun.com.
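Because the Load Parameters value is a single-line JSON string, it can be easier to generate it than to type it by hand. The sketch below is illustrative only: the helper `build_load_parameters` is hypothetical, the key names are copied from the example in this topic, and treating every key as required is an assumption.

```python
import json

# Key names are taken from the example in this topic.
# Assumption: all six keys are required; the product may accept fewer.
REQUIRED_KEYS = (
    "accessid",
    "accessKey",
    "odpsServer",
    "tunnelServer",
    "accountType",
    "project",
)


def build_load_parameters(**values: str) -> str:
    """Return a compact JSON string, or raise ValueError if a key is missing or empty."""
    missing = [key for key in REQUIRED_KEYS if not values.get(key)]
    if missing:
        raise ValueError("missing load parameters: " + ", ".join(missing))
    ordered = {key: values[key] for key in REQUIRED_KEYS}
    return json.dumps(ordered, separators=(",", ":"))


# Placeholder values only; replace XXX with real connection details.
params = build_load_parameters(
    accessid="XXX",
    accessKey="XXX",
    odpsServer="XXX",
    tunnelServer="XXX",
    accountType="aliyun",
    project="transfer_project",
)
```

The resulting string can be pasted into the Load Parameters field. Avoid committing real credentials to source control.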

Batch Write Data Volume (optional)

The maximum size of data written in one batch. You can also set Batch Write Count. The system writes a batch as soon as either limit is reached. The default is 32 MB.
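The "whichever limit is reached first" behavior can be pictured with a small buffer. This is a conceptual sketch, not Dataphin's implementation: the class `BatchBuffer` and its `flush` callback are hypothetical, the size default reads the documented 32M as 32 MB, and the count default of 2048 is an invented placeholder.

```python
from typing import Callable, List


class BatchBuffer:
    """Collect records and flush when either the size or the count limit is reached."""

    def __init__(
        self,
        flush: Callable[[List[bytes]], None],
        max_bytes: int = 32 * 1024 * 1024,  # assumption: 32M means 32 MB
        max_count: int = 2048,  # hypothetical default, not from the product
    ) -> None:
        self._flush = flush
        self.max_bytes = max_bytes
        self.max_count = max_count
        self._records: List[bytes] = []
        self._size = 0

    def add(self, record: bytes) -> None:
        """Buffer one record, then flush if either limit has been reached."""
        self._records.append(record)
        self._size += len(record)
        if self._size >= self.max_bytes or len(self._records) >= self.max_count:
            self.flush_now()

    def flush_now(self) -> None:
        """Write out any buffered records and reset the buffer."""
        if self._records:
            self._flush(self._records)
            self._records = []
            self._size = 0
```

Call `flush_now()` once more at the end of a run so that a final, partially filled batch is still written.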

Field Mapping

Input Fields

Displays the input fields based on the upstream input.

Output Fields

Displays the output fields. You can perform the following operations:

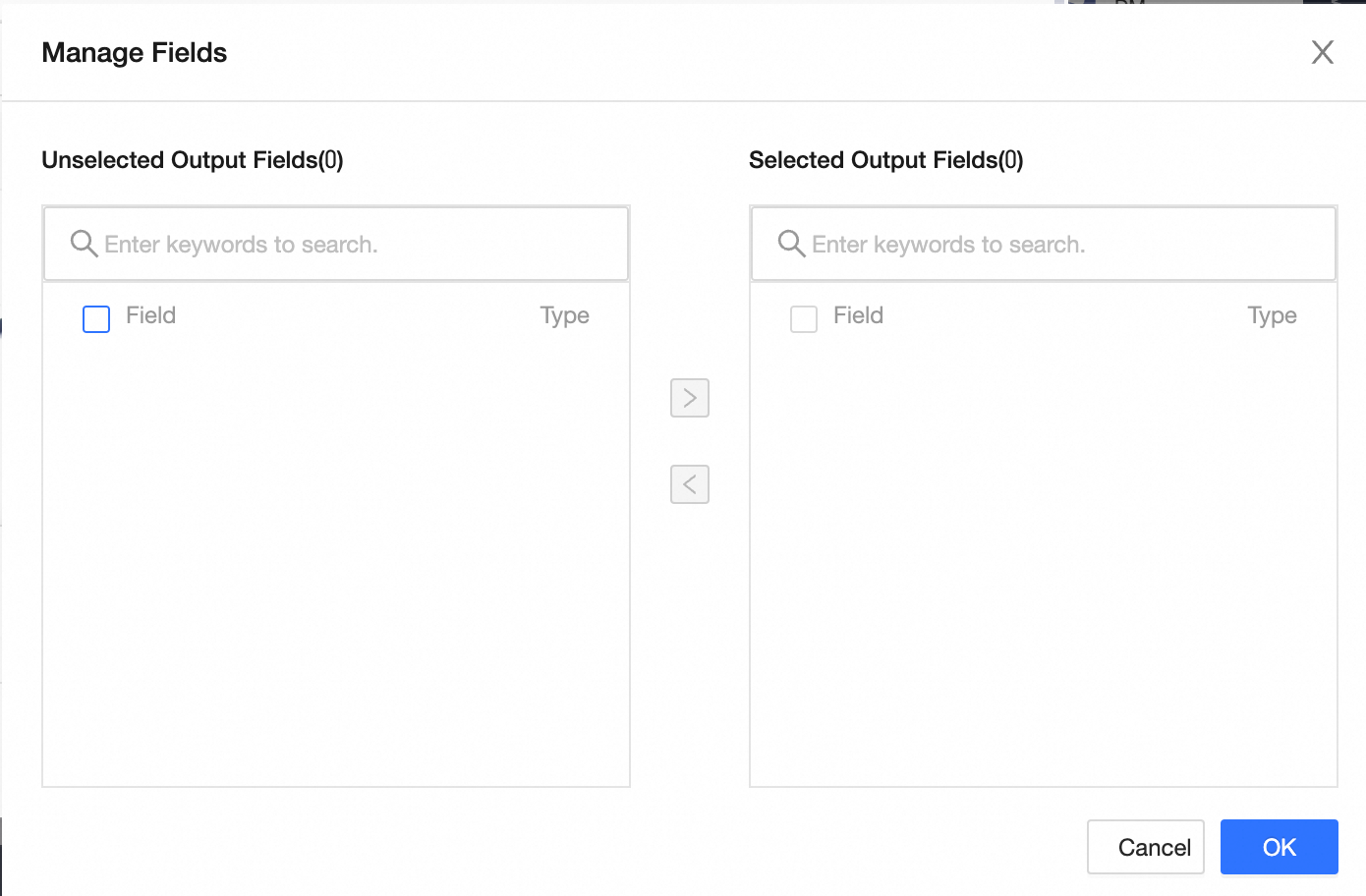

Field Management: Click Field Management to select output fields.

Click the icon to move Selected Input Fields to Unselected Input Fields.

Click the icon to move Unselected Input Fields to Selected Input Fields.

Batch Add: Click Batch Add to configure in JSON, TEXT, or DDL format.

Configure in JSON format, for example:

// Example:
[{ "name": "user_id", "type": "String" }, { "name": "user_name", "type": "String" }]

Note: name specifies the name of the field to import, and type specifies the data type of the field after it is imported. For example, "name":"user_id","type":"String" imports the field named user_id and sets its data type to String.

Configure in TEXT format, for example:

// Example:
user_id,String
user_name,String

The row delimiter is used to separate the information of each field. The default is a line feed (\n). Supported delimiters include line feed (\n), semicolon (;), and period (.).

The column delimiter is used to separate the field name and field type. The default is a comma (,).

Configure in DDL format, for example:

CREATE TABLE tablename ( id INT PRIMARY KEY, name VARCHAR(50), age INT );
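To see how the JSON and TEXT formats describe the same field list, the sketch below parses both into a common structure. It only illustrates the formats described above and is not how Dataphin parses them: the function names are hypothetical, and DDL parsing is left out.

```python
import json
from typing import Dict, List


def parse_json_fields(text: str) -> List[Dict[str, str]]:
    """Parse the JSON format: a list of objects with "name" and "type" keys."""
    return [{"name": f["name"], "type": f["type"]} for f in json.loads(text)]


def parse_text_fields(
    text: str, row_delim: str = "\n", col_delim: str = ","
) -> List[Dict[str, str]]:
    """Parse the TEXT format: one field per row, name and type split by col_delim."""
    fields = []
    for row in text.split(row_delim):
        row = row.strip()
        if not row:
            continue  # skip blank rows, such as a trailing delimiter
        # Split only once so the field name is always the first part.
        # A row without col_delim raises ValueError here.
        name, type_ = (part.strip() for part in row.split(col_delim, 1))
        fields.append({"name": name, "type": type_})
    return fields


json_fields = parse_json_fields(
    '[{ "name": "user_id", "type": "String" }, { "name": "user_name", "type": "String" }]'
)
text_fields = parse_text_fields("user_id,String\nuser_name,String")
```

Both calls describe the same two String fields, `user_id` and `user_name`. Passing `row_delim=";"` handles the semicolon-delimited variant.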

Create a new output field: Click +Create Output Field, fill in the Column and select the Type as prompted. After completing the configuration for the current row, click the icon to save.

Mapping

Based on the upstream input and the fields of the target table, you can manually select field mappings. Mapping includes Same Row Mapping and Same Name Mapping.

Same Name Mapping: Maps fields with the same name.

Same Row Mapping: Maps fields in the same row when the field names in the source and target tables are different but the data in the corresponding rows needs to be mapped.
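The difference between the two mapping options can be sketched in a few lines. This is an illustration under stated assumptions, not the product's logic: the function names are hypothetical, name comparison is assumed to be case-sensitive, and fields without a counterpart are assumed to stay unmapped.

```python
from typing import Dict, List


def same_name_mapping(inputs: List[str], outputs: List[str]) -> Dict[str, str]:
    """Map each input field to the output field with the identical name."""
    targets = set(outputs)
    # Assumption: names are compared case-sensitively.
    return {name: name for name in inputs if name in targets}


def same_row_mapping(inputs: List[str], outputs: List[str]) -> Dict[str, str]:
    """Map fields by position: the first input to the first output, and so on."""
    # Assumption: extra fields on the longer side are left unmapped.
    return dict(zip(inputs, outputs))


source_fields = ["id", "user_name", "age"]
target_fields = ["id", "name", "age"]
by_name = same_name_mapping(source_fields, target_fields)
by_row = same_row_mapping(source_fields, target_fields)
```

Here Same Name Mapping links only `id` and `age`, because `user_name` has no identically named target, while Same Row Mapping also links `user_name` to `name` by position.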

Click OK to complete the property configuration of the AnalyticDB for MySQL 2.0 output component.