Configuring DataHub output widgets enables writing data from an external database to DataHub, along with copying and pushing data from connected storage systems to the big data platform for integration and reprocessing. This topic describes the steps to configure DataHub output widgets.

Prerequisites

A DataHub data source has been created. For more information, see Create a DataHub data source.

To configure the DataHub output widget properties, the account must have write-through permission for the data source. If you lack this permission, request access to the data source. For more information, see Request, renew, and return data source permissions.

Procedure

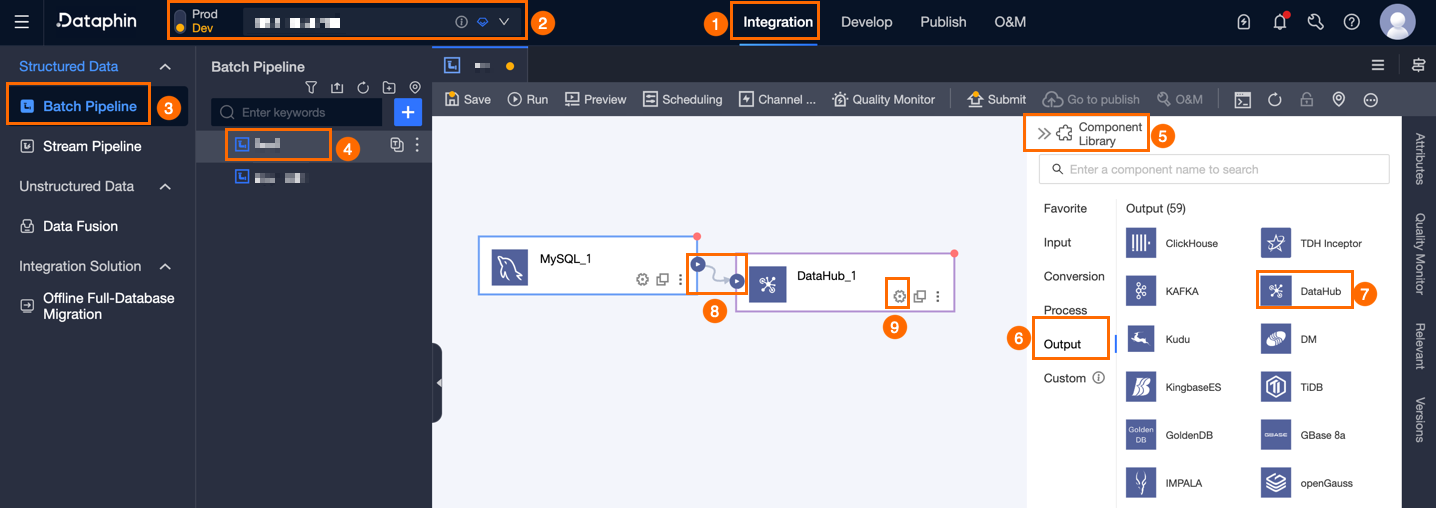

On the Dataphin home page, select Development > Data Integration from the top menu bar.

On the Data Integration page, select a project from the top menu bar. If the project uses the Dev-Prod pattern, also select an environment.

In the navigation pane on the left, click Batch Pipeline. Then, in the Batch Pipeline list, click the batch pipeline you want to develop to open its configuration page.

Click Component Library in the upper right corner of the page to open the Component Library panel.

In the Component Library panel's left-side navigation pane, select Output. Find the DataHub widget in the output widget list on the right and drag it to the canvas.

Click and drag the icon of the target upstream widget to connect it to the DataHub output widget.

On the DataHub output widget, click the icon to open the DataHub Output Configuration dialog box.

In the DataHub Output Configuration dialog box, set the parameters as described in the following table.

Parameter

Description

Basic settings

Step Name

The name of the DataHub output widget. Dataphin automatically generates the step name, and you can also modify it according to the business scenario. The name must meet the following requirements:

Can only contain Chinese characters, letters, underscores (_), and numbers.

Cannot exceed 64 characters.
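The naming rules above can be checked before a name is entered in the dialog box. The following is a minimal sketch, not Dataphin's actual validation: the function name `is_valid_step_name` is hypothetical, "Chinese characters" is approximated by the CJK Unified Ideographs range U+4E00 to U+9FFF, the limit is counted in characters rather than bytes, and an empty name is assumed to be invalid.

```python
import re

# Assumption: "Chinese characters" is approximated by the CJK Unified
# Ideographs block (U+4E00 to U+9FFF); Dataphin's real check may differ.
# Assumption: the 64-character limit counts characters, not bytes, and an
# empty name is rejected.
STEP_NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_]{1,64}")


def is_valid_step_name(name: str) -> bool:
    """Return True if the name uses only allowed characters and has 1 to 64 of them."""
    return STEP_NAME_PATTERN.fullmatch(name) is not None


print(is_valid_step_name("datahub_output_1"))  # True
print(is_valid_step_name("datahub-output"))    # False: hyphen is not allowed
```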

Datasource

The data source drop-down list displays all DataHub-type data sources, including those for which you have write-through permission and those for which you do not. Click the icon to copy the current data source name.

For data sources without write-through permission, you can click Request after the data source to request write-through permission. For more information, see Request, renew, and return data source permissions.

If you do not have a DataHub-type data source, click Create Data Source to create a data source. For more information, see Create a DataHub data source.

Topic

Select the required topic according to the actual scenario.

Data Volume Per Submission

To improve write efficiency, Data Integration buffers data before writing. When the buffered data reaches the size set here (in MB), it is submitted to the destination as a batch. The default is 1, that is, 1 MB.

Advanced Configuration

Configure as needed. The following parameters are supported:

maxRetryCount: The maximum number of retries for a failed node. The retry count cannot exceed 3.

batchSize: To improve write efficiency, Data Integration accumulates buffer data. When the accumulated number of data records reaches the batchSize (in records), it is submitted in batches to the destination.

maxCommitInterval: The maximum time that data can stay in the buffer, in milliseconds. The default is 30,000 (30 seconds). Set this parameter to ensure timely delivery when the source produces no data for a long time: once the interval elapses, the buffered data is delivered even if the other thresholds have not been reached.

Note: Delivery is triggered as soon as any one of the three conditions (data volume per submission, batchSize, or maxCommitInterval) is met. In addition, DataHub limits a single write request to 10,000 records, and exceeding that limit causes the node to fail. Set batchSize to 10,000 or less to avoid node errors.
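The interaction of the three thresholds can be sketched as follows. This is an illustrative model only, not Data Integration's implementation: the class `WriteBuffer` and its method names are hypothetical, 1 MB is assumed to mean 1024 × 1024 bytes, the commit interval is assumed to be measured from the oldest buffered record, and the `batch_size` default of 1,024 is a placeholder because the source does not state one.

```python
from dataclasses import dataclass, field
from typing import List

DATAHUB_MAX_RECORDS_PER_REQUEST = 10_000  # per-request limit stated in the note


@dataclass
class WriteBuffer:
    """Hypothetical model of a buffer that flushes when any threshold is met."""

    max_bytes: int = 1 * 1024 * 1024       # data volume per submission (assumes 1 MB = 1024 * 1024 bytes)
    batch_size: int = 1024                 # placeholder default, not from the source
    max_commit_interval_ms: int = 30_000   # maxCommitInterval default
    records: List[bytes] = field(default_factory=list)
    size_bytes: int = 0
    first_record_ms: int = 0               # arrival time of the oldest buffered record

    def __post_init__(self) -> None:
        # Reject a batch size that DataHub would refuse in a single request.
        if self.batch_size > DATAHUB_MAX_RECORDS_PER_REQUEST:
            raise ValueError("batchSize must not exceed 10,000 records")

    def add(self, record: bytes, now_ms: int) -> None:
        if not self.records:
            self.first_record_ms = now_ms
        self.records.append(record)
        self.size_bytes += len(record)

    def should_flush(self, now_ms: int) -> bool:
        """Return True as soon as any one of the three conditions holds."""
        if not self.records:
            return False
        return (
            self.size_bytes >= self.max_bytes
            or len(self.records) >= self.batch_size
            or now_ms - self.first_record_ms >= self.max_commit_interval_ms
        )

    def flush(self) -> List[bytes]:
        """Hand back the buffered records and reset the buffer."""
        batch, self.records, self.size_bytes = self.records, [], 0
        return batch
```

In this model, a buffer created with `WriteBuffer(batch_size=2)` reports `should_flush` as true once two records have been added, even though neither the size threshold nor the time threshold has been reached.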

Field mapping

Input Fields

The input fields are displayed based on the output of the upstream widget.

Output Fields

The output fields area displays all fields of the selected table. If certain fields do not need to be output to the downstream widget, you can delete the corresponding fields:

To delete a small number of fields, you can click the Actions column's

icon to delete the extra fields.

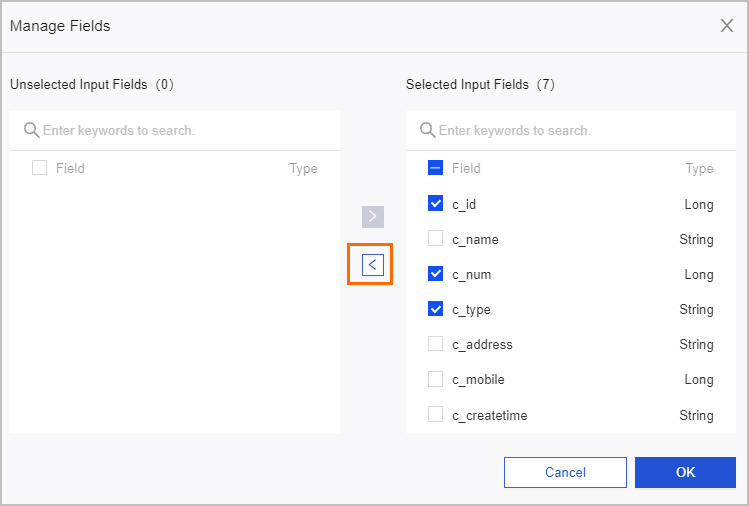

icon to delete the extra fields.To delete many fields, you can click Field Management, select multiple fields on the Field Management page, and click the

icon to move the Selected Input Fields to Unselected Input Fields.

icon to move the Selected Input Fields to Unselected Input Fields.

Mapping

The mapping relationship is used to map the input fields of the source table to the output fields of the target table. The mapping relationship includes same-name mapping and same-row mapping. The scenarios are described as follows:

Same-name mapping: Maps fields with the same field name.

Same-row mapping: Use this when the field names of the source table and target table differ but the fields in corresponding rows should be mapped to each other. Only fields in the same row are mapped.
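The difference between the two mapping modes can be illustrated with a short sketch. The function names and field names below are hypothetical, name matching is assumed to be exact and case-sensitive, and rows without a counterpart are assumed to stay unmapped. Dataphin's actual behavior may differ in these details.

```python
from typing import Dict, List


def same_name_mapping(inputs: List[str], outputs: List[str]) -> Dict[str, str]:
    """Map each input field to the output field with the identical name."""
    output_names = set(outputs)
    return {name: name for name in inputs if name in output_names}


def same_row_mapping(inputs: List[str], outputs: List[str]) -> Dict[str, str]:
    """Map fields by row position; zip stops at the shorter list."""
    return dict(zip(inputs, outputs))


input_fields = ["id", "name", "ts"]
output_fields = ["id", "user_name", "ts", "extra"]

print(same_name_mapping(input_fields, output_fields))
# {'id': 'id', 'ts': 'ts'}
print(same_row_mapping(input_fields, output_fields))
# {'id': 'id', 'name': 'user_name', 'ts': 'ts'}
```

With same-name mapping, `name` is left unmapped because no output field shares its name. With same-row mapping, it is paired with `user_name` because both sit in the second row.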

Click OK.