The AnalyticDB for PostgreSQL output component writes data to an AnalyticDB for PostgreSQL data source. When you synchronize data from other data sources to an AnalyticDB for PostgreSQL data source, configure the target data source for the AnalyticDB for PostgreSQL output component after you finish configuring the source data.

Prerequisites

You have created an AnalyticDB for PostgreSQL data source. For more information, see Create an AnalyticDB for PostgreSQL Data Source.

The account used to configure the AnalyticDB for PostgreSQL output component must have write-through permission on the data source. If the account does not have this permission, request it. For more information, see Request Data Source Permissions.

Procedure

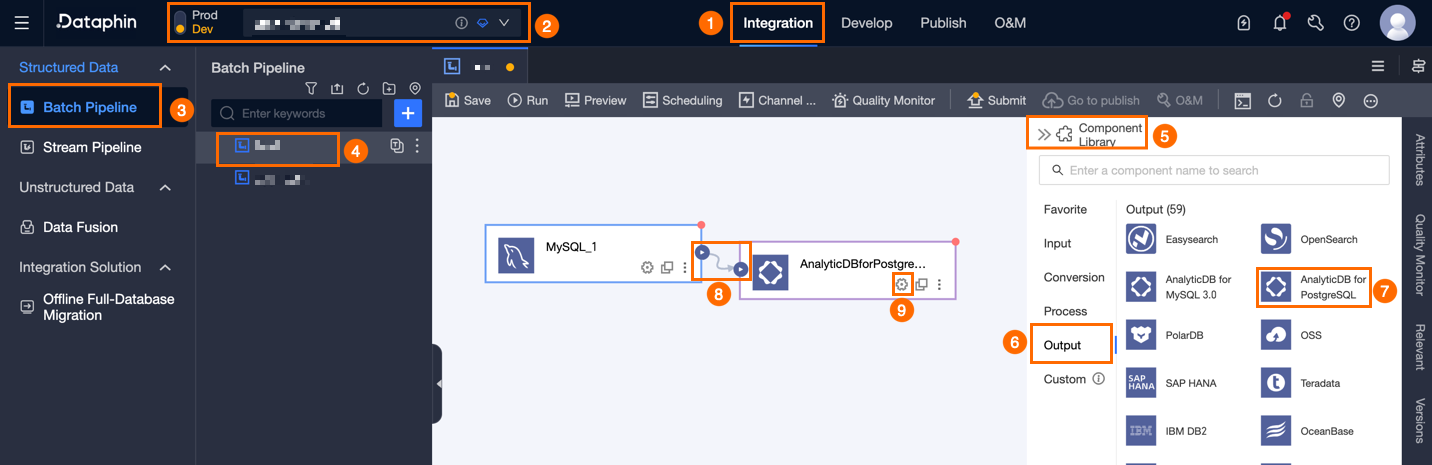

On the Dataphin homepage, in the top menu bar, click Develop, and then click Data Integration.

On the Integration page, in the top menu bar, select a Project. In Dev-Prod mode, also select an environment.

In the left navigation pane, click Batch Pipeline. In the Batch Pipeline list, click the offline pipeline that you want to develop. The configuration page for the offline pipeline opens.

In the upper-right corner of the page, click Component Library to open the Component Library panel.

In the left navigation pane of the Component Library panel, click Output. In the output component list on the right, locate the AnalyticDB for PostgreSQL component and drag it onto the canvas.

Click and drag the icon of a target input, transform, or flow component to connect it to the AnalyticDB for PostgreSQL output component.

In the AnalyticDB for PostgreSQL output component card, click the icon to open the AnalyticDB for PostgreSQL Output Configuration dialog box.

In the AnalyticDB for PostgreSQL Output Configuration dialog box, configure the following parameters.

Parameter

Description

Basic Information

Step Name

The name of the AnalyticDB for PostgreSQL output component. Dataphin generates a step name automatically. You can change it based on your business scenario. The naming convention is as follows:

Use only Chinese characters, letters, underscores (_), and digits.

Keep the name no longer than 64 characters.
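The naming convention above can be checked before you rename a step. The following is a minimal sketch, not Dataphin's own validator: the helper name is_valid_step_name is hypothetical, and "Chinese characters" is assumed to mean the CJK Unified Ideographs block (U+4E00 to U+9FFF), which may be narrower or wider than what Dataphin actually accepts.

```python
import re

# Assumption: "Chinese characters" is approximated by the CJK Unified Ideographs
# block (U+4E00 to U+9FFF); Dataphin's exact character set may differ.
STEP_NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_]+")
MAX_STEP_NAME_LENGTH = 64


def is_valid_step_name(name: str) -> bool:
    """Hypothetical check: allowed characters only, and at most 64 characters."""
    return (
        len(name) <= MAX_STEP_NAME_LENGTH
        and STEP_NAME_PATTERN.fullmatch(name) is not None
    )


print(is_valid_step_name("adb_pg_output_1"))  # True
print(is_valid_step_name("写入订单表"))        # True
print(is_valid_step_name("adb-pg output"))    # False: hyphen and space are not allowed
print(is_valid_step_name("a" * 65))           # False: longer than 64 characters
```

An empty name fails the check because the pattern requires at least one character.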

Datasource

The drop-down list shows all AnalyticDB for PostgreSQL data sources, including those for which you have write-through permission and those for which you do not. Click the icon to copy the current data source name.

If you do not have write-through permission for a data source, click Request next to the data source to request write-through permission. For more information, see Request Data Source Permissions.

If you do not have an AnalyticDB for PostgreSQL data source, click Create Data Source to create one. For more information, see Create an AnalyticDB for PostgreSQL Data Source.

Time Zone

Dataphin processes time-format data based on the current time zone. By default, this matches the time zone configured for the selected data source. You cannot change this setting.

Note: For tasks created before version V5.1.2, you can choose Data Source Default Configuration or Channel Configuration Time Zone. The default is Channel Configuration Time Zone.

Data Source Default Configuration: The default time zone of the selected data source.

Channel Configuration Time Zone: The time zone configured for the current integration task under Properties > Channel Configuration.
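The time zone matters because a time value stored without zone information can map to different instants. The following illustration is not Dataphin's conversion logic; it assumes a hypothetical data source configured for UTC+8 and a hypothetical channel configured for UTC, and uses fixed offsets so that it does not depend on a time zone database. The helper to_utc is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical settings for illustration only.
UTC_PLUS_8 = timezone(timedelta(hours=8))  # e.g. the data source default time zone
UTC = timezone.utc                         # e.g. a channel configuration time zone


def to_utc(wall_clock: str, tz: timezone) -> datetime:
    """Interpret a naive 'YYYY-MM-DD HH:MM:SS' string in tz and convert it to UTC."""
    naive = datetime.strptime(wall_clock, "%Y-%m-%d %H:%M:%S")
    return naive.replace(tzinfo=tz).astimezone(UTC)


value = "2024-01-01 08:00:00"
print(to_utc(value, UTC_PLUS_8))  # 2024-01-01 00:00:00+00:00
print(to_utc(value, UTC))         # 2024-01-01 08:00:00+00:00
```

The same wall-clock value differs by eight hours depending on which time zone is used to interpret it.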

Schema (Optional)

Select the schema where the table resides. Cross-schema table selection is supported. If you do not specify a schema, the default schema configured for the data source is used.

Table

Select the target table for output data. Enter a keyword to search for tables, or enter the exact table name and click Exact Search. After you select a table, the system automatically checks its status. Click the icon to copy the name of the selected table.

If the target table does not exist in the AnalyticDB for PostgreSQL data source, use the one-click table creation feature to generate it. Follow these steps:

Click Create Table. Dataphin automatically generates the SQL script to create the target table. This includes the table name (default: source table name) and field types (converted from Dataphin fields).

Modify the SQL script as needed, then click Create. After the table is created, Dataphin uses it as the target table for output data.

Note: If a table with the same name exists in the development environment, clicking Create returns an error that the table already exists.

Production Table Missing Policy

This policy defines how to handle missing production tables. Choose No Action or Automatic Creation. The default is Automatic Creation. If you choose No Action, the task publishes without creating the production table. If you choose Automatic Creation, the task creates a table with the same name in the target environment during publishing.

No Action: If the target table does not exist, the system warns you during submission but still allows publishing. You must manually create the target table in the production environment before running the task.

Automatic Creation: You must edit the table-creation statement. By default, the system prepopulates the table-creation statement of the selected table, and you can modify it. In the statement, the table name uses the placeholder ${table_name}, which is the only placeholder allowed. At runtime, the placeholder is replaced with the actual table name.

If the target table does not exist, Dataphin first creates it by using the statement. If creation fails, publishing fails; fix the statement based on the error message and republish. If the target table already exists, no action is taken.

Note: This setting is available only for projects in Dev-Prod mode.
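The placeholder rule for Automatic Creation can be illustrated with a small substitution step. This is a minimal sketch under stated assumptions, not how Dataphin renders the statement: render_create_statement is a hypothetical helper, and it treats any ${...} token other than ${table_name} (or a statement with no placeholder at all) as invalid.

```python
import re

# Hypothetical model of the documented rule: only ${table_name} may appear,
# and it is replaced with the actual table name at runtime.
ALLOWED_PLACEHOLDER = "table_name"
PLACEHOLDER_PATTERN = re.compile(r"\$\{(\w+)\}")


def render_create_statement(template_sql: str, table_name: str) -> str:
    """Check the placeholders in template_sql, then substitute the table name."""
    found = set(PLACEHOLDER_PATTERN.findall(template_sql))
    if found != {ALLOWED_PLACEHOLDER}:
        raise ValueError(f"expected only ${{table_name}}, found: {sorted(found)}")
    # A function replacement avoids backslash escapes in table_name being interpreted.
    return PLACEHOLDER_PATTERN.sub(lambda _match: table_name, template_sql)


ddl = "CREATE TABLE ${table_name} (id INT PRIMARY KEY, name VARCHAR(50));"
print(render_create_statement(ddl, "orders"))
# CREATE TABLE orders (id INT PRIMARY KEY, name VARCHAR(50));
```

Re-running the same template in another environment with a different table name produces a matching statement, which is why the statement must not hard-code the name.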

Loading Policy

Supports the insert and copy policies.

insert policy: Uses the AnalyticDB for PostgreSQL INSERT INTO ... VALUES ... statement to write data. If a primary key or unique index conflict occurs, the conflicting row fails to write and becomes dirty data. We recommend that you use the insert policy first.

copy policy: Uses the AnalyticDB for PostgreSQL COPY FROM command to load data into a table and supports conflict resolution strategies. Use this policy only if you encounter performance issues with the insert policy. When you use it, also configure the Conflict Resolution Strategy, such as Error on Conflict or Overwrite on Conflict.

Important: The conflict resolution strategy works only for the copy policy on AnalyticDB for PostgreSQL kernel versions later than 4.3. To prevent task failures, do not use it with kernel versions earlier than 4.3 or with unknown versions.
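To make the difference between the two policies concrete, the following is a simplified in-memory model, not how Dataphin or AnalyticDB for PostgreSQL implement them. It assumes a table keyed by a single primary key; the helpers load_insert and load_copy_overwrite are hypothetical. Error on Conflict is not modeled.

```python
from typing import Dict, List, Tuple

Row = Tuple[int, str]  # (primary key, value): a deliberately simplified row


def load_insert(table: Dict[int, str], rows: List[Row]) -> List[Row]:
    """Insert-policy model: rows whose key already exists are rejected as dirty data."""
    dirty: List[Row] = []
    for key, value in rows:
        if key in table:
            dirty.append((key, value))  # conflict: the row is not written
        else:
            table[key] = value
    return dirty


def load_copy_overwrite(table: Dict[int, str], rows: List[Row]) -> None:
    """Copy-policy model with Overwrite on Conflict: a conflicting row replaces the existing one."""
    for key, value in rows:
        table[key] = value


incoming = [(1, "alice-v2"), (2, "bob")]

insert_table = {1: "alice"}
dirty = load_insert(insert_table, incoming)
print(insert_table, dirty)  # {1: 'alice', 2: 'bob'} [(1, 'alice-v2')]

copy_table = {1: "alice"}
load_copy_overwrite(copy_table, incoming)
print(copy_table)  # {1: 'alice-v2', 2: 'bob'}
```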

Batch Write Size (Optional)

The size of data written in one batch. You can set both Batch Write Count and this parameter. The system writes data when either limit is reached. The default is 32 MB.

Batch Write Count (Optional)

The default is 2,048 rows. Data synchronization uses batch writing. Key parameters are Batch Write Count and Batch Write Size.

When the accumulated data reaches either limit (size or count), the system treats it as a full batch and writes it to the destination immediately.

We recommend setting the batch write size to 32 MB and adjusting the batch write count based on the average record size. Set the count to a large value so that the size limit, rather than the count limit, determines when a batch is written. For example, if each record is about 1 KB and the batch write size is 16 MB, set the batch write count to more than 16,384 (16 MB ÷ 1 KB), such as 20,000 rows. Because 16,384 records already reach the 16 MB size limit before the count limit of 20,000 is reached, the system triggers a batch write each time the accumulated data reaches 16 MB.
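The "whichever limit is reached first" rule can be sketched as follows. This is an illustration of the flushing logic described above, not Dataphin's writer: split_into_batches is a hypothetical helper, it takes only record sizes in bytes, and it assumes a batch is flushed as soon as either limit is met or exceeded.

```python
from typing import Iterable, List


def split_into_batches(record_sizes: Iterable[int], max_bytes: int, max_count: int) -> List[List[int]]:
    """Group records into batches, flushing as soon as the accumulated size
    reaches max_bytes or the record count reaches max_count."""
    batches: List[List[int]] = []
    current: List[int] = []
    current_bytes = 0
    for size in record_sizes:
        current.append(size)
        current_bytes += size
        if current_bytes >= max_bytes or len(current) >= max_count:
            batches.append(current)
            current, current_bytes = [], 0
    if current:  # flush the final partial batch
        batches.append(current)
    return batches


KB, MB = 1024, 1024 * 1024
records = [1 * KB] * 40_000  # 40,000 records of about 1 KB each

# Example from the text: 16 MB size limit, 20,000-row count limit.
batches = split_into_batches(records, max_bytes=16 * MB, max_count=20_000)
print([len(b) for b in batches])  # [16384, 16384, 7232]
```

With the default count of 2,048 rows and a 32 MB size limit, the same 1 KB records would instead be flushed every 2,048 rows, because the count limit is reached long before 32 MB accumulates.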

Preparation Statement (Optional)

An SQL script to run on the database before data import.

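For example, to keep a service table continuously available while you reload it: first, create the target table Target_A. Then write data to Target_A. After writing completes, rename the live service table Service_B to Temp_C, rename Target_A to Service_B, and finally delete Temp_C. In this pattern, only the statement that creates Target_A runs before the import; the rename and delete statements must run after writing completes, for example as the finalization statement.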

Finalization Statement (Optional)

An SQL script to run on the database after data import.
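The table-swap example above can be split between the two statements as sketched below. This is a minimal sketch under stated assumptions: table_swap_statements is a hypothetical helper, the table names are lowercase versions of the names in the example (unquoted identifiers are folded to lowercase in PostgreSQL-compatible databases), the output component is assumed to write to target_a, and whether Dataphin accepts several semicolon-separated statements in one field is not confirmed here.

```python
from typing import List, Tuple


def table_swap_statements(new_table: str, live_table: str, temp_table: str,
                          columns_ddl: str) -> Tuple[List[str], List[str]]:
    """Build (preparation, finalization) SQL for the table-swap pattern."""
    preparation = [
        # Remove leftovers from a previous failed run so CREATE TABLE succeeds.
        f"DROP TABLE IF EXISTS {new_table};",
        f"CREATE TABLE {new_table} ({columns_ddl});",
    ]
    finalization = [
        # Runs only after the task has finished writing to new_table.
        f"ALTER TABLE {live_table} RENAME TO {temp_table};",
        f"ALTER TABLE {new_table} RENAME TO {live_table};",
        f"DROP TABLE {temp_table};",
    ]
    return preparation, finalization


prep, final = table_swap_statements("target_a", "service_b", "temp_c",
                                    "id INT PRIMARY KEY, name VARCHAR(50)")
print("\n".join(prep))
print("\n".join(final))
```

Keeping the renames in the finalization statement means service_b is replaced only after the new data has been fully written.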

Field Mapping

Input Fields

Lists the input fields from upstream components.

Output Fields

Lists the output fields. You can perform the following actions:

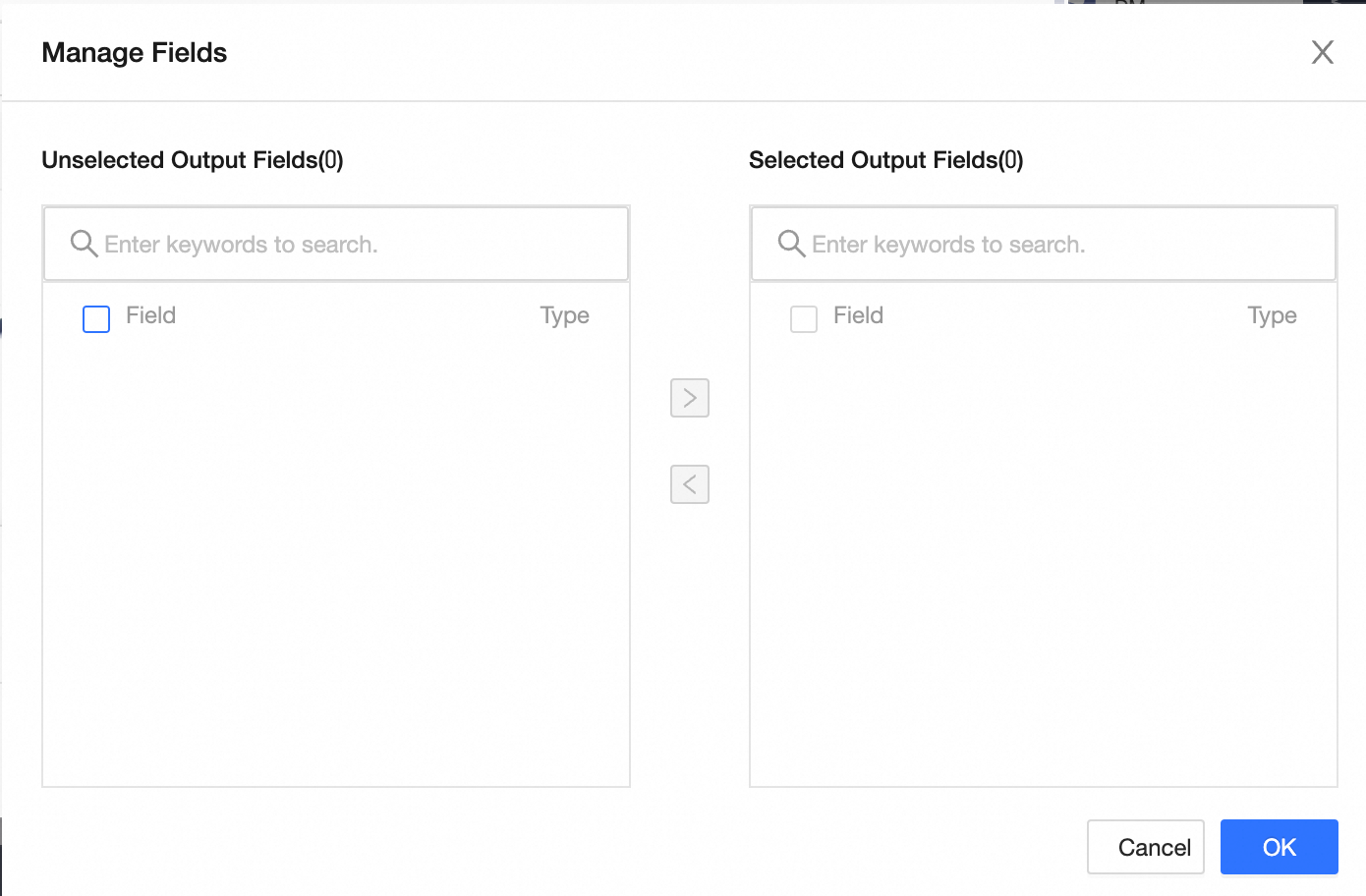

Field Management: Click Field Management to select output fields.

Click the icon to move Selected Input Fields to Unselected Input Fields.

Click the icon to move Unselected Input Fields to Selected Input Fields.

Batch Add: Click Batch Add to configure fields in JSON, TEXT, or DDL format.

JSON format example:

[{"name": "user_id", "type": "String"}, {"name": "user_name", "type": "String"}]

Note: The "name" field is the field name, and the "type" field is the data type. For example, "name":"user_id","type":"String" imports the field named user_id and sets its type to String.

TEXT format example:

user_id,String
user_name,String

The row delimiter separates field entries. The default is a line feed (\n). Other options include semicolons (;) and periods (.).

The column delimiter separates a field name from its field type. The default is a comma (,).

DDL format example:

CREATE TABLE tablename ( id INT PRIMARY KEY, name VARCHAR(50), age INT );

Create Output Field: Click + Create Output Field, enter the Column name, and select the Type. Click the icon to save the row.
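The JSON and TEXT batch formats describe the same name/type pairs. The following sketch parses both into one structure; it is an illustration, not Dataphin's importer. The helpers parse_json_fields and parse_text_fields are hypothetical, blank rows are assumed to be ignored, and the DDL format is not covered.

```python
import json
from typing import Dict, List

Field = Dict[str, str]


def parse_json_fields(text: str) -> List[Field]:
    """JSON format: a list of {"name": ..., "type": ...} objects."""
    return [{"name": item["name"], "type": item["type"]} for item in json.loads(text)]


def parse_text_fields(text: str, row_delimiter: str = "\n", column_delimiter: str = ",") -> List[Field]:
    """TEXT format: one 'name<column delimiter>type' entry per row."""
    fields: List[Field] = []
    for entry in text.split(row_delimiter):
        entry = entry.strip()
        if not entry:
            continue  # skip blank rows, such as a trailing delimiter
        name, field_type = entry.split(column_delimiter, 1)
        fields.append({"name": name.strip(), "type": field_type.strip()})
    return fields


json_input = '[{"name": "user_id", "type": "String"}, {"name": "user_name", "type": "String"}]'
text_input = "user_id,String\nuser_name,String"
print(parse_json_fields(json_input) == parse_text_fields(text_input))  # True
print(parse_text_fields("user_id,String;user_name,String", row_delimiter=";"))
# [{'name': 'user_id', 'type': 'String'}, {'name': 'user_name', 'type': 'String'}]
```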

Mapping

Manually map fields between upstream inputs and target table fields. Mapping includes Row Mapping and Name Mapping.

Name Mapping: Maps fields with identical names.

Row Mapping: Maps fields by position when source and target field names differ.
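The two mapping options differ in how they pair fields. The following sketch contrasts them; it is not Dataphin's implementation. The helpers name_mapping and row_mapping are hypothetical, name matching is assumed to be exact and case-sensitive, and surplus fields in row mapping are assumed to stay unmapped.

```python
from typing import Dict, List


def name_mapping(input_fields: List[str], target_fields: List[str]) -> Dict[str, str]:
    """Map each input field to the target field with exactly the same name."""
    targets = set(target_fields)
    return {field: field for field in input_fields if field in targets}


def row_mapping(input_fields: List[str], target_fields: List[str]) -> Dict[str, str]:
    """Map fields by position: the i-th input field goes to the i-th target field.
    zip() stops at the shorter list, so surplus fields stay unmapped."""
    return dict(zip(input_fields, target_fields))


inputs = ["id", "name", "age"]
targets = ["id", "user_name", "user_age"]
print(name_mapping(inputs, targets))  # {'id': 'id'}
print(row_mapping(inputs, targets))   # {'id': 'id', 'name': 'user_name', 'age': 'user_age'}
```

Name mapping leaves name and age unmapped here because their names differ from the target fields, which is the case where row mapping is useful.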

Click Confirm to complete the configuration of the AnalyticDB for PostgreSQL output component.