The PolarDB output component writes data to a PolarDB data source. To synchronize data from another data source to PolarDB, you can configure the PolarDB output component as the target. This topic describes how to configure the PolarDB output component.

Prerequisites

A PolarDB data source has been created. For more information, see Create a PolarDB data source.

The account that you use to configure the PolarDB output component has write-through permission for the data source. If the account does not have this permission, you must apply for it. For more information, see Apply for, renew, and return data source permissions.

Procedure

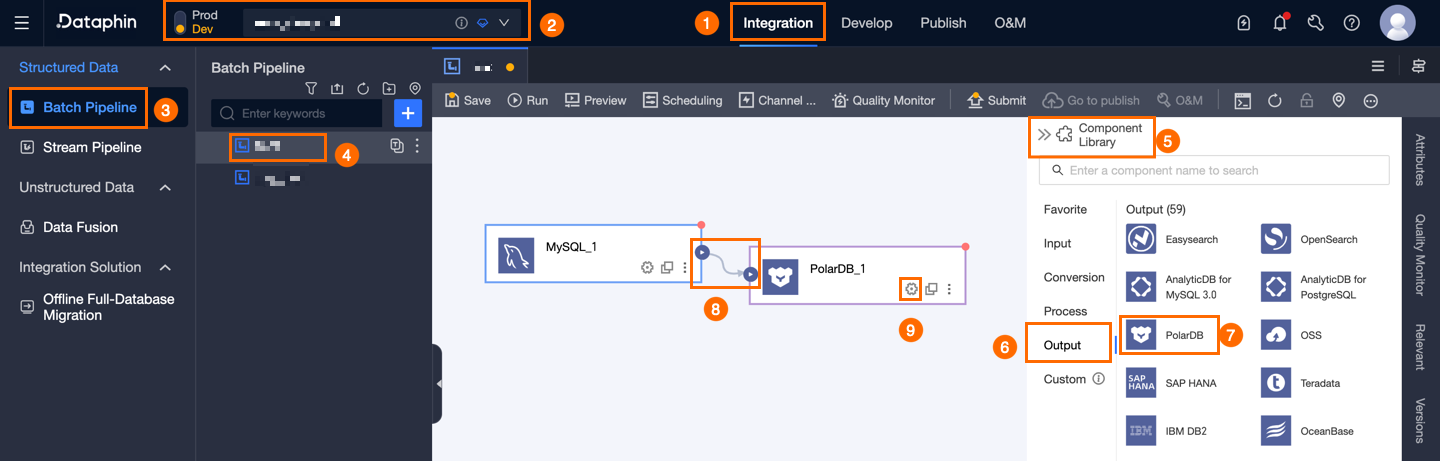

In the top menu bar of the Dataphin home page, choose Develop > Data Integration.

On the Data Integration page, select a project. If you are in Dev-Prod mode, you must also select an environment.

In the navigation pane on the left, click Batch Pipeline. In the Batch Pipeline list, click the batch pipeline that you want to edit to open its configuration page.

In the upper-right corner of the page, click Component Library to open the Component Library panel.

In the navigation pane on the left of the Component Library panel, select Outputs. Find the PolarDB component in the list on the right and drag it to the canvas.

Click and drag from the icon on a source, transform, or flow component to the PolarDB output component to connect them.

Click the icon on the PolarDB output component card to open the PolarDB Output Configuration dialog box.

In the PolarDB Output Configuration dialog box, configure the parameters.

Parameter

Description

Basic Settings

Step Name

The name of the PolarDB output component. Dataphin automatically generates a step name. You can also modify it as needed. The naming convention is as follows:

Can contain only letters, underscores (_), and digits.

Cannot exceed 64 characters.
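The naming convention above can be checked before a name is entered. The following is a minimal sketch, not part of Dataphin: `is_valid_step_name` is a hypothetical helper, and it assumes that "letters" means ASCII letters and that an empty name is not allowed.

```python
import re

# Hypothetical pattern: 1 to 64 characters drawn from ASCII letters,
# digits, and underscores (assumption: "letters" means A-Z and a-z).
STEP_NAME_PATTERN = re.compile(r"[A-Za-z0-9_]{1,64}")


def is_valid_step_name(name: str) -> bool:
    """Return True if the name follows the step naming convention."""
    return STEP_NAME_PATTERN.fullmatch(name) is not None
```

For example, `is_valid_step_name("polardb_output_1")` is accepted, while a name that contains a hyphen or is longer than 64 characters is rejected.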

Datasource

The data source drop-down list displays all PolarDB data sources, including those for which you have write-through permission and those for which you do not. Click the icon to copy the current data source name.

For data sources for which you do not have write-through permission, click Request next to the data source to apply for the permission. For more information, see Apply for, renew, and return data source permissions.

If you do not have a PolarDB data source, click Create Data Source to create one. For more information, see Create a PolarDB data source.

Time Zone

The component processes time-formatted data based on the current time zone. By default, the time zone is inherited from the selected data source and cannot be changed.

Note: For nodes created before V5.1.2, you can select Data Source Default Configurations or Channel Configuration Time Zone. By default, Channel Configuration Time Zone is selected.

Data Source Default Configurations: The default time zone of the selected data source.

Channel Configuration Time Zone: The time zone configured in Properties > Channel Configuration for the current integration node.

Table

Select the target table for the output data. You can enter a keyword to search for a table or enter the exact table name and click Exact Match. After you select a table, the system automatically checks the table status. Click the icon to copy the name of the selected table.

Loading Policy

Select a policy for writing data to the target table. The Loading Policy options are:

Append Data: Appends data to the target table without modifying existing data. A dirty data error is reported if a primary key or constraint violation occurs.

Overwrite Data: If a primary key or constraint violation occurs, the system deletes the old row with the duplicate primary key and then inserts the new row.

Note: The loading policy does not take effect for the PostgreSQL protocol.

Batch Write Size (optional)

The amount of data to write in a single batch. You can also set Batch Write Records. The system writes the data when either limit is reached. The default value is 32 MB.

Batch Write Records (optional)

The default value is 2048 records. Data is written in batches. The batch size is determined by Batch Write Records and Batch Write Size.

A batch is written to the destination when the accumulated data reaches either the record limit or the size limit.

For best performance, set a large batch size. For example, if a single record is about 1 KB, you can set the batch size to 16 MB. Then, set the batch record count to a value greater than 16,384 (16 MB / 1 KB), such as 20,000. With this configuration, the system writes a batch each time the accumulated data reaches 16 MB.
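The interaction between the two limits can be illustrated with a short sketch. This is not Dataphin's implementation. The function `batches` and its parameters are hypothetical names, the defaults mirror the documented 32 MB and 2048 records, and 1 MB is assumed to be 1,048,576 bytes.

```python
from typing import Iterable, Iterator, List


def batches(
    records: Iterable[bytes],
    max_bytes: int = 32 * 1024 * 1024,  # stands in for Batch Write Size
    max_records: int = 2048,            # stands in for Batch Write Records
) -> Iterator[List[bytes]]:
    """Yield a batch as soon as either the size or the record limit is reached."""
    batch: List[bytes] = []
    size = 0
    for record in records:
        batch.append(record)
        size += len(record)
        if size >= max_bytes or len(batch) >= max_records:
            yield batch
            batch, size = [], 0
    if batch:
        # Write whatever remains after the input is exhausted.
        yield batch


# 1 KB records with a 16 MB size limit and a 20,000 record limit:
# the size limit is reached first, after 16,384 records.
record = b"x" * 1024
sizes = [len(b) for b in batches([record] * 40000, 16 * 1024 * 1024, 20000)]
```

With the default limits and 1 KB records, the record limit is reached first, so each batch holds 2048 records (about 2 MB). This is why the example above raises the record count when it raises the batch size.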

Prepare Statement (optional)

An SQL script to execute on the database before the data import.

For example, to ensure continuous service availability, you can create a temporary target table `Target_A` before the write step. The data is written to `Target_A`. After the write step is complete, you can rename the production table `Service_B` to `Temp_C`, rename `Target_A` to `Service_B`, and then delete `Temp_C`.

Post Statement (optional)

An SQL script to execute on the database after the data import.
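The table swap described for the prepare statement can be tried out locally. The sketch below uses an in-memory SQLite database as a stand-in, so the SQL dialect is SQLite's and may differ from what your PolarDB data source accepts. The function `swap_demo` and the table columns are hypothetical. Placing the rename and drop steps in the post statement is an assumption based on the description above.

```python
import sqlite3


def swap_demo() -> list:
    conn = sqlite3.connect(":memory:")
    # Existing production table with old data.
    conn.execute("CREATE TABLE Service_B (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("INSERT INTO Service_B VALUES (1, 'old')")

    # Prepare statement: create the temporary target table.
    conn.execute("CREATE TABLE Target_A (id INTEGER PRIMARY KEY, name TEXT)")

    # Write step: the output component loads data into Target_A.
    conn.executemany(
        "INSERT INTO Target_A VALUES (?, ?)", [(1, "new"), (2, "new")]
    )

    # Post statement (assumed): swap the tables, then drop the old data.
    conn.executescript(
        """
        ALTER TABLE Service_B RENAME TO Temp_C;
        ALTER TABLE Target_A RENAME TO Service_B;
        DROP TABLE Temp_C;
        """
    )
    rows = conn.execute("SELECT id, name FROM Service_B ORDER BY id").fetchall()
    conn.close()
    return rows
```

After the swap, `Service_B` contains only the newly written rows, and readers of `Service_B` never see a partially loaded table.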

Field Mapping

Input Fields

Displays the input fields from the upstream source.

Output Fields

Displays the output fields. The following operations are supported:

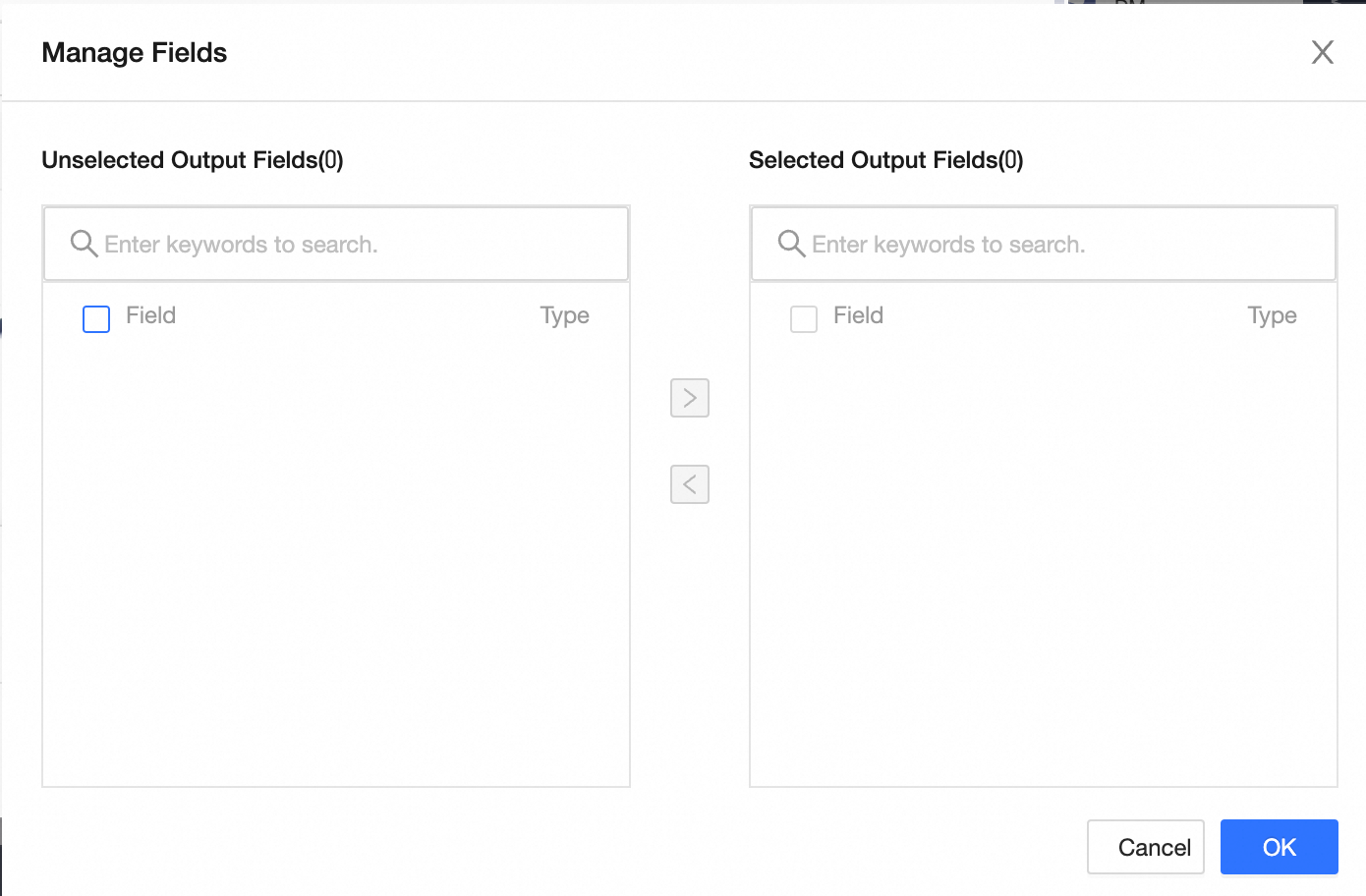

Field Management: Click Field Management to select output fields.

Click the icon to move fields from Selected Input Fields to Unselected Input Fields.

Click the icon to move fields from Unselected Input Fields to Selected Input Fields.

Batch Add: Click Batch Add to configure fields in bulk using JSON, TEXT, or DDL format.

To configure in JSON format, use the following example:

// Example:
[{ "name": "user_id", "type": "String" }, { "name": "user_name", "type": "String" }]

Note: `name` is the name of the field to import. `type` is the data type of the field after import. For example, `"name":"user_id","type":"String"` imports the field named `user_id` and sets its data type to String.

To configure in TEXT format, use the following example:

// Example:
user_id,String
user_name,String

The row delimiter separates the information for each field. The default is a line feed (\n). Semicolons (;) and periods (.) are also supported.

The column delimiter separates the field name from the field type. The default is a comma (,).

To configure in DDL format, use the following example:

CREATE TABLE tablename ( id INT PRIMARY KEY, name VARCHAR(50), age INT );
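The JSON and TEXT formats describe the same list of fields. The following sketch shows how the TEXT form maps onto the JSON form. `parse_text_fields` is a hypothetical helper, not a Dataphin API, and its default delimiters follow the defaults described above.

```python
import json
from typing import Dict, List


def parse_text_fields(
    text: str, row_delimiter: str = "\n", column_delimiter: str = ","
) -> List[Dict[str, str]]:
    """Convert TEXT-format field definitions into JSON-style dictionaries."""
    fields = []
    for row in text.split(row_delimiter):
        row = row.strip()
        if not row:
            continue  # skip blank rows, such as a trailing delimiter
        name, field_type = row.split(column_delimiter, 1)
        fields.append({"name": name.strip(), "type": field_type.strip()})
    return fields


text_form = "user_id,String\nuser_name,String"
json_form = json.loads(
    '[{ "name": "user_id", "type": "String" },'
    ' { "name": "user_name", "type": "String" }]'
)
same = parse_text_fields(text_form) == json_form
```

Passing `row_delimiter=";"` handles input such as `user_id,String;user_name,String` in the same way.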

Create an output field: Click +Create Output Field. Enter a Column name and select a Type as prompted. After you configure the row, click the icon to save.

Mapping

Manually map fields between the upstream source and the target table. The Mapping options are Same Row Mapping and Same Name Mapping.

Same Name Mapping: Maps fields that have the same name.

Same Row Mapping: Maps fields based on their row position. Use this when source and target field names differ, but their order is the same.
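The difference between the two mapping options can be sketched as follows. The function names are hypothetical, and the sketch assumes that name matching is exact and case-sensitive, which the description above does not specify.

```python
from typing import List, Tuple


def same_name_mapping(inputs: List[str], outputs: List[str]) -> List[Tuple[str, str]]:
    """Pair each input field with the output field of the same name."""
    output_names = set(outputs)
    return [(name, name) for name in inputs if name in output_names]


def same_row_mapping(inputs: List[str], outputs: List[str]) -> List[Tuple[str, str]]:
    """Pair fields by position; extra fields on either side stay unmapped."""
    return list(zip(inputs, outputs))


source_fields = ["user_id", "user_name", "created"]
target_fields = ["id", "user_name", "created_at"]

by_name = same_name_mapping(source_fields, target_fields)
by_row = same_row_mapping(source_fields, target_fields)
```

Here `by_name` contains only the `user_name` pair, because it is the only shared name, while `by_row` pairs all three fields by position.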

Click Confirm to save the configuration of the PolarDB output component.