The HDFS output widget writes data to an HDFS data source. When you synchronize data from other data sources to an HDFS data source, configure the target data source in the HDFS output widget after you set up the source data information. This topic describes how to configure the HDFS output widget.

Prerequisites

An HDFS data source has been created. For more information, see Create an HDFS Data Source.

To configure the properties of the HDFS output widget, your account must have write-through permission for the data source. If you do not have this permission, request access to the data source. For more information, see Request Data Source Permission.

Procedure

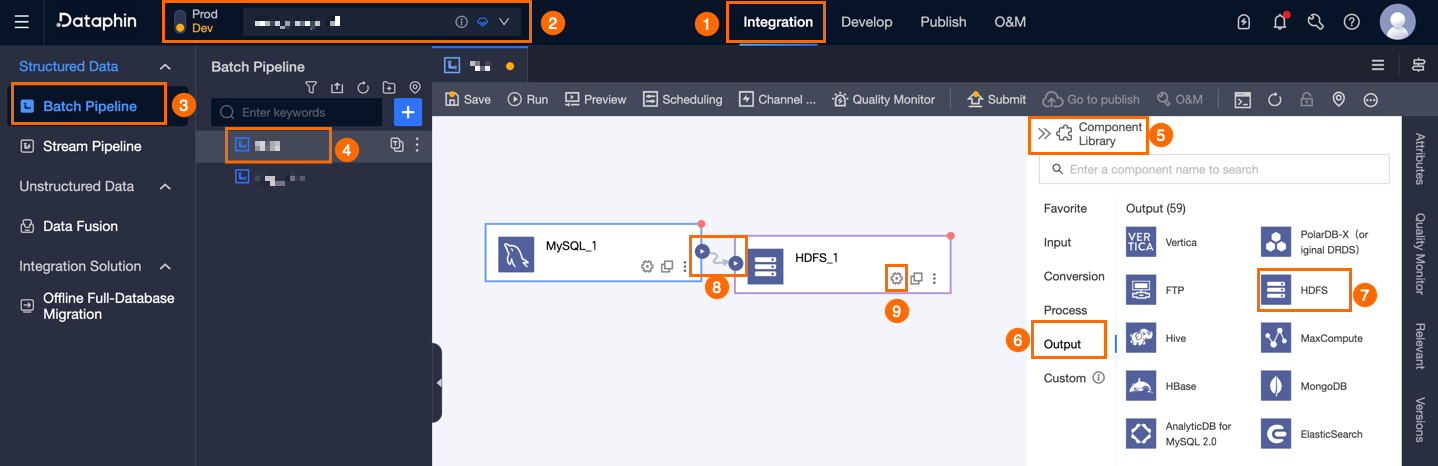

On the Dataphin home page, select Development > Data Integration from the top menu bar.

In the top menu bar of the Data Integration page, select a Project. In Dev-Prod mode, you must also select an Environment.

In the navigation pane on the left, click Batch Pipeline. In the Batch Pipeline list, click the offline pipeline you want to develop to open its configuration page.

Click Component Library in the upper-right corner to open the Component Library panel.

In the Component Library panel's left-side navigation pane, select Output. Find the HDFS component in the list of output widgets on the right and drag it to the canvas.

Click and drag the icon from the target upstream component to connect it to the HDFS output widget.

Click the icon on the HDFS output component to open the HDFS Output Configuration dialog box.

In the HDFS Output Configuration dialog box, set the parameters.

Parameter

Description

Step Name

The name of the HDFS output widget. Dataphin automatically generates the step name, and you can also modify it according to the business scenario. The naming convention is as follows:

Can only contain Chinese characters, letters, underscores (_), and numbers.

Cannot exceed 64 characters.
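The naming convention above can be checked before you enter a step name. The following is a minimal sketch, not Dataphin's own validation: the function name is hypothetical, "Chinese characters" is approximated by the CJK Unified Ideographs range, and treating an empty name as invalid is an assumption.

```python
import re

# Assumption: "Chinese characters" is approximated by the CJK Unified
# Ideographs block (U+4E00 to U+9FFF); Dataphin's real check may differ.
# Assumption: an empty name is rejected (the source only states the upper bound).
STEP_NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_]{1,64}")


def is_valid_step_name(name: str) -> bool:
    """Return True if name uses only allowed characters and is 1 to 64 long."""
    return STEP_NAME_PATTERN.fullmatch(name) is not None
```

For example, `is_valid_step_name("hdfs_output_1")` is accepted, while a name that contains a hyphen or is 65 characters long is rejected.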

Data Source

The data source drop-down list displays all HDFS-type data sources, including those for which you have write-through permission and those for which you do not. Click the icon to copy the current data source name.

For data sources without write-through permission, click Request next to the data source to request write-through permission. For more information, see Request, Renew, and Return Data Source Permission.

If you do not yet have an HDFS-type data source, click Create Data Source to create a data source. For more information, see Create an HDFS Data Source.

File Path

Enter the absolute path of the file. Because the NameNode is already configured for the data source, you do not need to add the hdfs://{namenode}:{port} prefix. For example: /hadoop/input/file.txt. The system accesses the following path: hdfs://{NameNode configured for the data source}:{IPC Port configured for the data source}{entered file path}.

File Type

Select the file type in which the data is stored. Supported file types are Text, ORC, and Parquet.
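To illustrate how the File Path value is combined with the data source settings, the following sketch builds the path that the system accesses. The function name, host name, and port are hypothetical and used only for illustration; the validation rules are assumptions based on the description of File Path.

```python
def resolve_hdfs_uri(namenode: str, ipc_port: int, file_path: str) -> str:
    """Build the full URI from the data source settings and the entered path."""
    if file_path.startswith("hdfs://"):
        # The prefix comes from the data source, so it must not be repeated.
        raise ValueError("enter only the path, without the hdfs:// prefix")
    if not file_path.startswith("/"):
        raise ValueError("the file path must be absolute")
    return f"hdfs://{namenode}:{ipc_port}{file_path}"


# Hypothetical NameNode host and IPC port.
print(resolve_hdfs_uri("nn1.example.com", 8020, "/hadoop/input/file.txt"))
# prints: hdfs://nn1.example.com:8020/hadoop/input/file.txt
```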

File Encoding

Select the file encoding. File Encoding includes UTF-8 and GBK.

Load Policy

The policy for writing data to the target HDFS data source. The following load policies are available:

Overwrite Data: The files in the target directory are deleted first, and then the new data files are added.

Append Data: The new data files are added directly to the target directory, and existing files are kept.
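The difference between the two load policies can be sketched with a local directory standing in for the HDFS target directory. This is an illustration under assumptions, not how Dataphin writes to HDFS: the function name, policy strings, and file names are hypothetical.

```python
import tempfile
from pathlib import Path


def write_files(target_dir: Path, new_files: dict[str, str], policy: str) -> None:
    """Write new_files (name -> content) into target_dir under a load policy."""
    target_dir.mkdir(parents=True, exist_ok=True)
    if policy == "overwrite":
        # Overwrite Data: delete the existing files first.
        for existing in list(target_dir.iterdir()):
            if existing.is_file():
                existing.unlink()
    elif policy != "append":
        raise ValueError(f"unknown load policy: {policy}")
    # Both policies then add the new data files.
    for name, content in new_files.items():
        (target_dir / name).write_text(content, encoding="utf-8")


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp)
    write_files(target, {"part-0": "a\n"}, "append")
    write_files(target, {"part-1": "b\n"}, "append")
    appended = sorted(p.name for p in target.iterdir())
    write_files(target, {"part-2": "c\n"}, "overwrite")
    overwritten = sorted(p.name for p in target.iterdir())
```

After the two append runs the directory holds both earlier files; after the overwrite run it holds only the newly written file.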

Row Delimiter

For the file type Text, you can configure the delimiter between rows. This is optional. If you do not enter a value, the system uses \n as the row delimiter.

Field Delimiter

For the file type Text, you can configure the delimiter between fields. This is optional. If you do not enter a value, the system uses a comma (,) as the field delimiter.
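The effect of the Row Delimiter and Field Delimiter settings on a Text file can be sketched as follows. The function is hypothetical, performs no quoting or escaping, and assumes that every row, including the last, ends with the row delimiter.

```python
def render_text(rows, field_delimiter=None, row_delimiter=None):
    """Join fields and rows with the configured delimiters (no escaping)."""
    # An empty setting falls back to the documented defaults.
    field_delimiter = field_delimiter or ","
    row_delimiter = row_delimiter or "\n"
    lines = [field_delimiter.join(str(value) for value in row) for row in rows]
    # Assumption: the row delimiter terminates every row, including the last.
    return "".join(line + row_delimiter for line in lines)


rows = [[1, "alice"], [2, "bob"]]
default_output = render_text(rows)           # "1,alice\n2,bob\n"
custom_output = render_text(rows, "|", ";")  # "1|alice;2|bob;"
```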

Mark Completion File

Configure the path and format of the completion file. There are two ways to mark completion: task-level and file-level.

Task-level: Example: /ftpuser/test/SUCCESS. After the task is complete, a single empty file named SUCCESS is generated.

File-level: Use an asterisk (*) as a placeholder for the data file name. Example: /ftpuser/test/*.flg. An empty .flg file with the same name is generated for each data file.
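The two ways of marking completion can be sketched as a function that lists the empty marker files implied by a configured path. The function name and data file names are hypothetical, and the sketch assumes that the asterisk is replaced with the full data file name.

```python
from pathlib import PurePosixPath


def completion_markers(marker_path: str, data_files: list[str]) -> list[str]:
    """Return the paths of the empty marker files implied by marker_path."""
    if "*" not in marker_path:
        # Task-level: one marker for the whole task.
        return [marker_path]
    # File-level: one marker per data file, with '*' replaced by the
    # data file name (assumption: the full file name is substituted).
    return [marker_path.replace("*", PurePosixPath(f).name) for f in data_files]


files = ["/ftpuser/test/part-0", "/ftpuser/test/part-1"]
task_level = completion_markers("/ftpuser/test/SUCCESS", files)
file_level = completion_markers("/ftpuser/test/*.flg", files)
```

Here `task_level` contains the single path `/ftpuser/test/SUCCESS`, and `file_level` contains one `.flg` path per data file.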

Merge Policy

Select how the output threads write the data:

Merge: All data is merged into one file and written by a single thread. Output is slower for large files.

Important: Merge does not support appending data.

Do Not Merge: Data is written by multiple threads, which generates multiple files.

Export Compressed File

Choose whether to export the file in a compressed format. The options are Do Not Compress (the file is exported directly in the selected file type), gzip, and zip.

Export Column Header

Choose whether to export the column header:

Export: The field names are written to the first line of each file.

Do Not Export: The first line of the file is data.

Input Fields

Displays the output fields of the upstream component.

Output Fields

Displays the output fields. Dataphin supports configuring output fields through Batch Add and Create New Output Field:

Batch Add: Click Batch Add. JSON and TEXT formats are supported for batch configuration.

Batch configuration in JSON format, for example:

    // Example:
    [{"name": "user_id","type": "String"},
    {"name": "user_name","type": "String"}]

Note: name specifies the field name and type specifies the field data type. For example, "name":"user_id","type":"String" imports the user_id field and sets its data type to String.

Batch configuration in TEXT format, for example:

    // Example:
    user_id,String
    user_name,String

The row delimiter is used to separate the information of each field. The default is a line feed (\n). Line feed (\n), semicolon (;), and period (.) are supported.

The column delimiter is used to separate the field name and field type. The default is a comma (,).

Create New Output Field: Click + Create New Output Field, enter the Column, and select the Type as prompted.

Copy Ancestor Table Field: Click Copy Ancestor Table Field. The system automatically generates output fields based on the field names of the ancestor table.

Manage Output Fields: You can also perform the following operations on the added fields:

In the Actions column, click the icon to edit an existing field.

In the Actions column, click the icon to delete an existing field.
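The two Batch Add formats under Output Fields can be illustrated with a small parser that turns either format into (name, type) pairs. This is a sketch under assumptions, not Dataphin's parser: the function names are hypothetical, and the comment line shown in the examples is not handled.

```python
import json


def parse_json_fields(text: str) -> list[tuple[str, str]]:
    """Parse the JSON batch format into (name, type) pairs."""
    return [(item["name"], item["type"]) for item in json.loads(text)]


def parse_text_fields(text, row_delimiter="\n", column_delimiter=","):
    """Parse the TEXT batch format into (name, type) pairs."""
    fields = []
    for row in text.split(row_delimiter):
        row = row.strip()
        if not row:
            continue  # skip blank rows, for example after a trailing delimiter
        name, field_type = row.split(column_delimiter, 1)
        fields.append((name.strip(), field_type.strip()))
    return fields


json_fields = parse_json_fields(
    '[{"name": "user_id","type": "String"}, {"name": "user_name","type": "String"}]'
)
text_fields = parse_text_fields("user_id,String\nuser_name,String")
semicolon_fields = parse_text_fields("user_id,String;user_name,String", row_delimiter=";")
```

All three calls produce the same two fields, `user_id` and `user_name`, both of type String.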

Mapping

Mapping is used to map the input fields of the source table to the output fields of the destination table, facilitating subsequent data synchronization. Mapping includes same-name mapping and same-row mapping. The applicable scenarios are described as follows:

Same-name Mapping: Maps fields with the same field name.

Same-row Mapping: Use this when the field names of the source table and the destination table differ, but the fields in corresponding rows hold the data to be mapped. Only fields in the same row are mapped.
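The two mapping modes can be sketched as functions over the ordered lists of input and output field names. The function and field names are hypothetical, and the sketch assumes exact, case-sensitive name matching and that fields without a counterpart stay unmapped.

```python
def same_name_mapping(input_fields, output_fields):
    """Map each input field to the output field with the same name."""
    outputs = set(output_fields)
    return [(name, name) for name in input_fields if name in outputs]


def same_row_mapping(input_fields, output_fields):
    """Map fields by row position; extra fields on either side stay unmapped."""
    return list(zip(input_fields, output_fields))


by_name = same_name_mapping(["id", "name", "age"], ["name", "id", "city"])
by_row = same_row_mapping(["id", "name", "age"], ["uid", "uname"])
```

In this example, `by_name` pairs `id` and `name` with their identically named output fields, while `by_row` pairs `id` with `uid` and `name` with `uname` and leaves `age` unmapped.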

Click Confirm to finalize the HDFS output widget configuration.