The MySQL output component writes data to a MySQL data source. When you synchronize data from another data source to MySQL, you must configure the target data source for the MySQL output component after configuring the source data source. This topic describes how to configure the MySQL output component.

Prerequisites

A MySQL data source is created. For more information, see Create a MySQL data source.

The account used to configure the MySQL output component properties requires write-through permission for the data source. If you do not have this permission, request it. For more information, see Request, renew, and return data source permissions.

Procedure

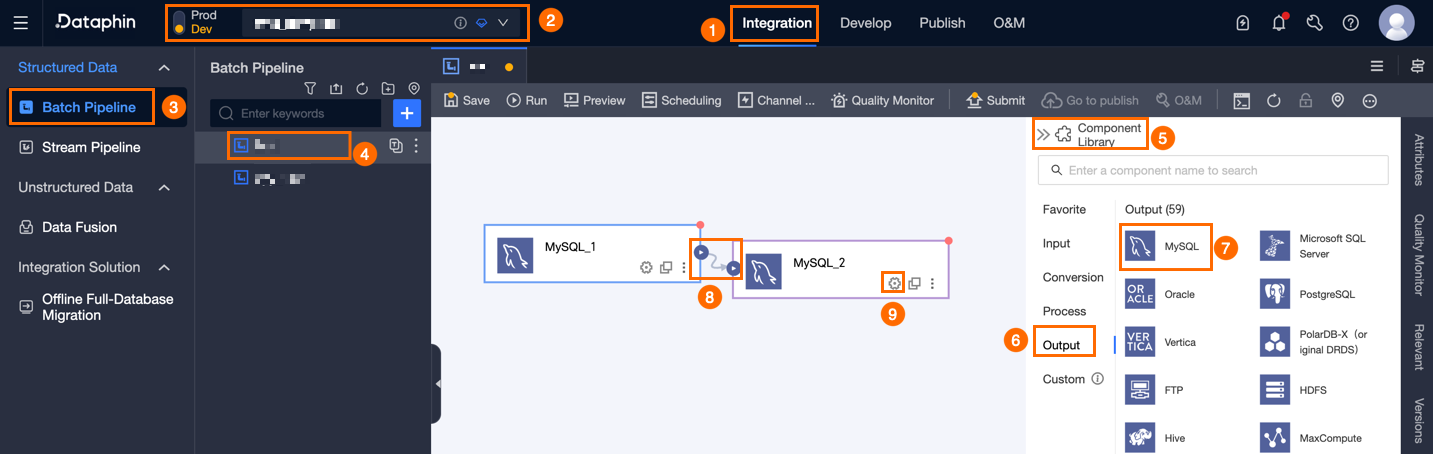

On the Dataphin home page, choose Develop > Data Integration from the menu bar.

In the menu bar of the integration page, select a Project. If you are in Dev-Prod mode, select an Environment.

In the left navigation pane, click Batch Pipeline. In the Batch Pipeline list, click the offline pipeline that you want to develop to open its configuration page.

In the upper-right corner of the page, click Component Library to open the Component Library panel.

In the left navigation pane of the Component Library panel, select Output. In the list of output components on the right, locate the MySQL component and drag it to the canvas.

Click and drag the

icon of the target input, transform, or flow component to connect it to the MySQL output component.

icon of the target input, transform, or flow component to connect it to the MySQL output component.Click the

icon on the MySQL output component card to open the MySQL Output Configuration dialog box.

icon on the MySQL output component card to open the MySQL Output Configuration dialog box.

In the MySQL Output Configuration dialog box, configure the parameters.

Parameter

Description

Basic Settings

Step Name

The name of the MySQL output component. Dataphin automatically generates a step name. You can also change the name as needed. The naming convention is as follows:

The name can contain only Chinese characters, letters, underscores (_), and digits.

The name cannot exceed 64 characters in length.

Datasource

The data source drop-down list displays all MySQL data sources, including those for which you have write-through permission and those for which you do not. Click the

icon to copy the current data source name.

icon to copy the current data source name.For a data source for which you do not have write-through permission, click Request next to the data source to request the permission. For more information, see Request, renew, and return data source permissions.

If you do not have a MySQL data source, click Create Data Source to create one. For more information, see Create a MySQL data source.

Time Zone

Time-formatted data is processed based on the current time zone. By default, this is the time zone configured in the selected data source and cannot be changed.

NoteFor tasks created before V5.1.2, you can select Default Data Source Configuration or Channel Configuration Time Zone. The default selection is Channel Configuration Time Zone.

Default Data Source Configuration: The default time zone of the selected data source.

Channel Configuration Time Zone: The time zone configured for the current integration task in Properties > Channel Configuration.

Database (Optional)

Select the database where the table is located. If you leave this blank, the database specified during data source registration is used.

Table

Select the target table for data output. You can enter a keyword of the table name to search, or enter the exact table name and click Exact Search. After you select a table, the system automatically checks the table status. Click the

icon to copy the name of the currently selected table.

icon to copy the name of the currently selected table.If the target table for data synchronization does not exist in the MySQL data source, you can use the one-click table creation feature to quickly generate a target table. The procedure is as follows:

Click One-click DDL. Dataphin automatically generates the code to create the target table. This includes the target table name, which defaults to the source table name, and field types, which are initially converted based on Dataphin fields.

You can modify the SQL script for creating the target table as needed, and then click Create. After the target table is created, Dataphin automatically uses it as the target table for data output.

NoteIf a table with the same name exists in the development environment, Dataphin reports an error that the table already exists when you click Create.

If there are no matching items, you can also perform integration based on a manually entered table name.

Missing Production Table Policy

The policy for handling cases where the production table does not exist. You can select Do Nothing or Automatic Creation. The default is Automatic Creation. If you select Do Nothing, the production table is not created when the task is published. If you select Automatic Creation, a table with the same name is created in the target environment when the task is published.

Do Nothing: If the target table does not exist, a message is displayed when you submit the task, but the task can still be published. In this case, you must manually create the target table in the production environment before you can execute the task.

Automatic Creation: You must Edit DDL Statement. The DDL statement for the selected table is filled in by default, which you can adjust. The table name in the DDL statement uses the placeholder

${table_name}. Only this placeholder is supported. It is replaced with the actual table name during execution.If the target table does not exist, it is first created according to the DDL statement. If the table creation fails, the check fails during publishing. You can modify the DDL statement based on the error message and then publish again. If the target table already exists, the DDL statement is not executed.

NoteThis parameter is supported only in projects in Dev-Prod mode.

Loading Policy

Select the policy for writing data to the target table. Loading Policy includes:

Append data (insert into): Appends data to the existing data in the target table without modifying historical data. A dirty data error is reported in case of a primary key or constraint violation.

Overwrite on primary key conflict (replace into): In case of a primary key or constraint violation, the entire row of old data with the duplicate primary key is deleted before the new data is inserted.

Update on primary key conflict (on duplicate key update): In case of a primary key or constraint violation, the data of the mapped fields is updated on the existing record.

Batch Write Data Volume (Optional)

The amount of data to write in a single operation. You can also set Batch Write Records. When writing, the system writes data when either of the two limits is reached. The default is 32 MB.

Batch Write Records (Optional)

The default is 2,048 records. When data is synchronized, a batch write policy is used. The parameters for this policy include Batch Write Records and Batch Write Data Volume.

When the accumulated data reaches either of the set limits, which are the data volume or the number of records for a batch write, the system considers a batch to be full and immediately writes the batch to the target.

Set the batch write data volume to 32 MB. You can adjust the upper limit for the number of records for a bulk insert based on the actual size of a single record. Set this to a large value to take full advantage of batch writing. For example, if a single record is about 1 KB, you can set the bulk insert size to 16 MB. Then, set the number of records for a bulk insert to a value greater than 16 MB divided by 1 KB, which is 16,384 records. For this example, set it to 20,000 records. With this configuration, the system triggers a batch write operation based on the bulk insert size. A write operation is performed each time the accumulated data reaches 16 MB.

Preparation Statement (Optional)

The SQL script to execute on the database before data import.

For example, to ensure continuous service availability, you can create a target table Target_A before the current step writes data. The step then writes data to Target_A. After the current step finishes writing, rename the table that provides continuous service, Service_B, to Temp_C. Then, rename Target_A to Service_B, and finally, delete Temp_C.

Completion Statement (Optional)

The SQL script to execute on the database after data import.

Field Mapping

Input Fields

Displays the input fields based on the output of the upstream component.

Output Fields

Displays the output fields. The following operations are supported:

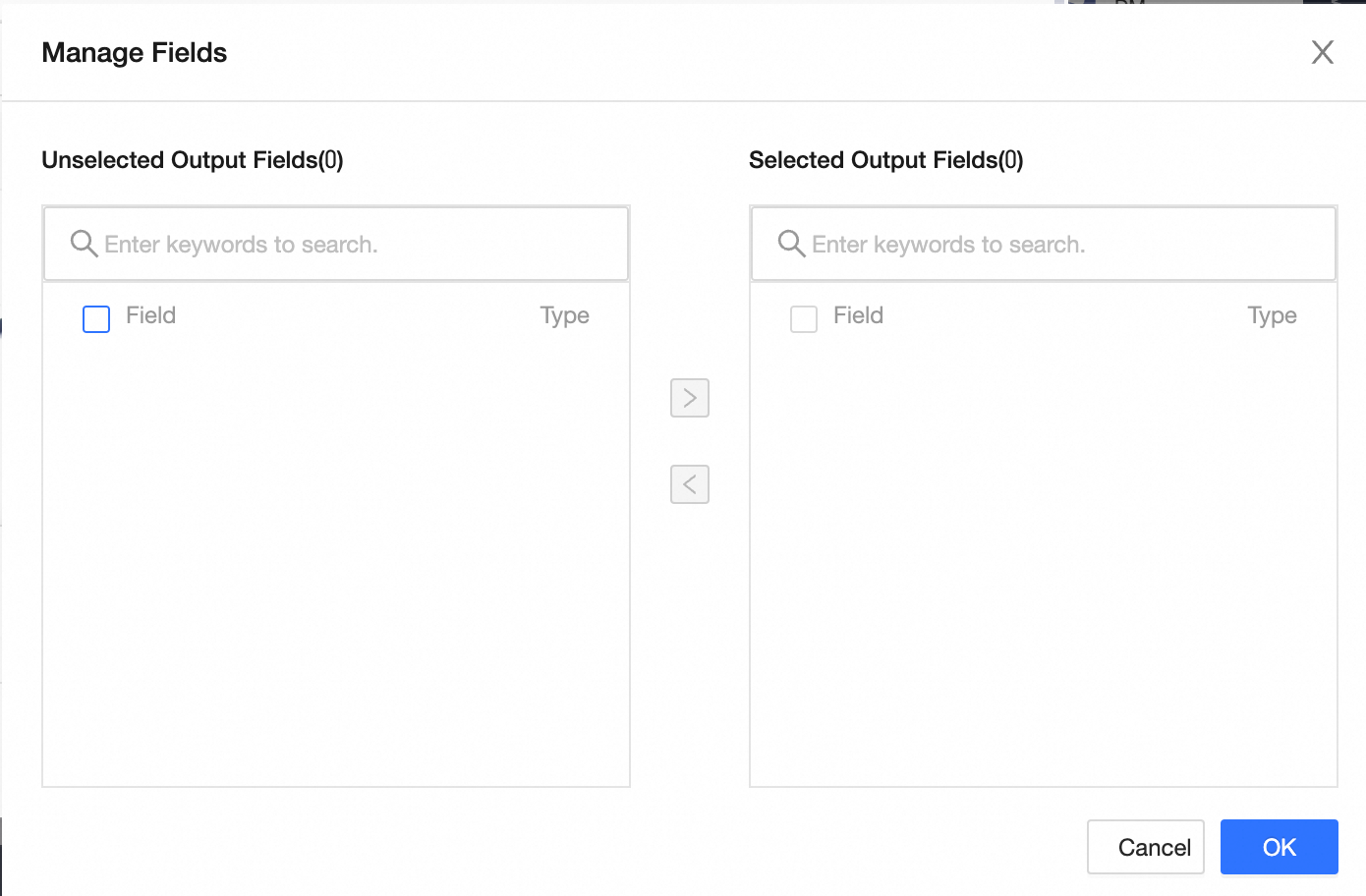

Manage Fields: Click Manage Fields to select output fields.

Click the

icon to move a field from Selected Input Fields to Unselected Input Fields.

icon to move a field from Selected Input Fields to Unselected Input Fields.Click the

icon to move a field from Unselected Input Fields to Selected Input Fields.

icon to move a field from Unselected Input Fields to Selected Input Fields.

Batch Add: Click Batch Add to configure fields in batches using JSON, TEXT, or DDL format.

To configure in batches using JSON format, for example:

// Example: [{ "name": "user_id", "type": "String" }, { "name": "user_name", "type": "String" }]Notename indicates the name of the imported field, and type indicates the field type after import. For example,

"name":"user_id","type":"String"imports the field named user_id and sets its type to String.To configure in batches using TEXT format, for example:

// Example: user_id,String user_name,StringThe row delimiter separates the information for each field. The default is a line feed (\n). Semicolons (;) and periods (.) are also supported.

The column delimiter separates the field name from the field type. The default is a comma (,).

To configure in batches using DDL format, for example:

CREATE TABLE tablename ( id INT PRIMARY KEY, name VARCHAR(50), age INT );

Create Output Field: Click + Create Output Field, enter a Column name, and select a Type as prompted. After you configure the current row, click the

icon to save.

icon to save.

Mapping

You can manually select field mappings based on the upstream input and the target table fields. Mapping includes By Row and By Name.

By Name: Maps fields that have the same name.

By Row: The field names in the source and target tables are different, but the data in the corresponding rows needs to be mapped. Only fields in the same row are mapped.

Click Confirm to complete the property configuration for the MySQL output component.