The OSS output component writes data to OSS. You can use it to write data from an external database to OSS, or to copy data from a storage system connected to the big data platform and push it to OSS for integration and reprocessing. This topic describes how to configure the OSS output component.

Prerequisites

An OSS data source must be created. For more information, see Create OSS Data Source.

The account used to configure the OSS output component properties must have write-through permission for the data source. If it does not, request the data source permission. For more information, see Request Data Source Permission.

Procedure

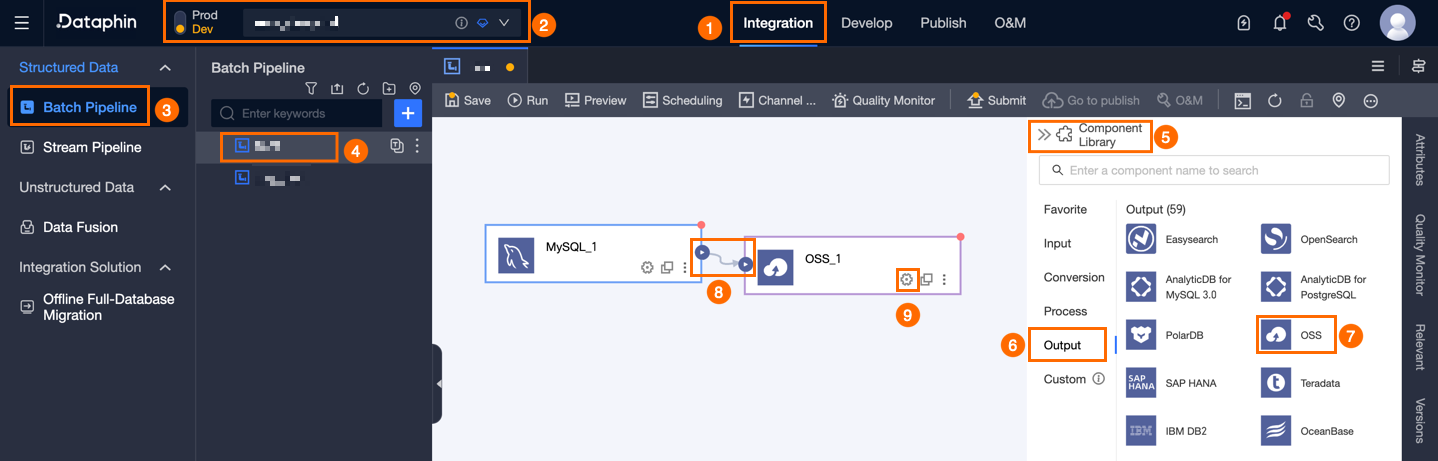

On the Dataphin home page, navigate to the top menu bar and select Development > Data Integration.

In the top menu bar of the Integration page, select a project. In Dev-Prod mode, you must also select an environment.

In the left-side navigation pane, click Batch Pipeline. In the Batch Pipeline list, click the offline pipeline that you want to develop to open its configuration page.

Click Component Library in the upper-right corner to open the Component Library panel.

In the Component Library panel's left-side navigation pane, select Output, locate the OSS component in the list on the right, and drag it onto the canvas.

Click and drag the icon on the target component to connect it to the current OSS output component.

Click the icon on the OSS output component card to open the OSS Output Configuration dialog box.

Configure the following parameters in the OSS Output Configuration dialog box.

Parameter

Description

Basic Settings

Step Name

Enter a name for the OSS output component. The name must meet the following requirements:

Supports Chinese characters, letters, numbers, and underscores (_).

Allows up to 64 characters.

Datasource

Select a pre-configured data source in Dataphin or click Create to navigate to the Management Center to set up a new data source. For more information, see Create OSS Data Source.

Ensure the account used for configuration has write-through permissions for the data source. If not, request data source permission. For more information, see Request Data Source Permission.

File Type

Choose between Text and CSV file types.

File Encoding

Supports UTF-8 and GBK encodings.
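The encoding matters because the same text produces different bytes under UTF-8 and GBK, so whatever reads the output files must decode them with the encoding selected here. A small Python illustration (the sample string is arbitrary):

```python
# Encode the same Chinese text with the two encodings the component supports.
sample = "数据"  # two Chinese characters

utf8_bytes = sample.encode("utf-8")  # 3 bytes per character for these characters
gbk_bytes = sample.encode("gbk")     # 2 bytes per character for these characters
print(len(utf8_bytes), len(gbk_bytes))  # 6 4

# Decoding with the matching encoding restores the original text.
print(utf8_bytes.decode("utf-8") == sample, gbk_bytes.decode("gbk") == sample)  # True True

# Decoding GBK bytes as UTF-8 does not restore the text; bytes that are not
# valid UTF-8 are replaced with U+FFFD instead of raising an error.
garbled = gbk_bytes.decode("utf-8", errors="replace")
print(garbled == sample)  # False
```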

Object Prefix

The prefix of the OSS object. You can specify multiple object prefixes. For example, if an OSS bucket contains a `data` folder with a `phin.txt` file, set the object prefix to `data/phin.txt` to sync that specific file. To sync all files in a folder, use a wildcard character, such as `data/*`.

Prefix Conflict

Specifies the action to take when objects that match the object prefix already exist. Valid values:

Replace Existing Files: Before writing, deletes all objects that match the specified object prefix. For example, if the object prefix is `Dataphin`, all objects whose names start with `Dataphin` are deleted.

Append to Existing Files: Writes directly using the configured prefix and adds a random UUID suffix to avoid file name conflicts.

Report Error on Conflict: If an object that matches the prefix exists in the specified path, an error is reported. For example, if the object prefix is `Dataphin` and an object named `Dataphin` exists, an error is reported.
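The three conflict policies can be modeled as a small decision function. This is a hypothetical Python sketch, not how Dataphin implements the behavior: `existing` stands in for the object names already in the bucket, an object is assumed to "match" when its name starts with the prefix, and the helper name `resolve_prefix_conflict` and the `_` separator before the UUID are assumptions.

```python
import uuid
from enum import Enum


class PrefixConflict(Enum):
    REPLACE = "replace"  # Replace Existing Files
    APPEND = "append"    # Append to Existing Files
    ERROR = "error"      # Report Error on Conflict


def resolve_prefix_conflict(prefix, existing, mode):
    """Return (objects_to_delete, object_name_to_write) for one write.

    Hypothetical model: an existing object "matches" when its name starts
    with prefix. The "_" before the UUID suffix is also an assumption.
    """
    matching = [name for name in existing if name.startswith(prefix)]
    if mode is PrefixConflict.REPLACE:
        # Delete every matching object first, then write under the prefix.
        return matching, prefix
    if mode is PrefixConflict.APPEND:
        # Keep existing objects; a random UUID suffix avoids name collisions.
        return [], f"{prefix}_{uuid.uuid4()}"
    if mode is PrefixConflict.ERROR:
        if matching:
            raise FileExistsError(f"objects already match prefix {prefix!r}: {matching}")
        return [], prefix
    raise ValueError(f"unknown mode: {mode!r}")


existing_objects = ["Dataphin", "Dataphin_2024.csv", "report.txt"]
print(resolve_prefix_conflict("Dataphin", existing_objects, PrefixConflict.REPLACE))
# (['Dataphin', 'Dataphin_2024.csv'], 'Dataphin')
```

Modeling the policies as an enum keeps the three options explicit, and an unknown value fails loudly instead of silently falling through to one of them.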

Number of Files to Write

Specifies whether data is written to a single file or to multiple files in the destination OSS.

Single File: Writes data to a single file in the destination OSS.

Multiple Files: Writes data to multiple files in the destination OSS. You must also configure the suffix format: either a sequential suffix, such as `_0`, `_1`, or `_2`, or a random UUID suffix. The number of files is determined by the task concurrency.

Note: If you choose to write to multiple files, a suffix is generated even if the task concurrency is set to 1. The suffix can be `_1` or a random UUID. If Prefix Conflict is set to Append to Existing Files, only the random UUID suffix is available.
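The naming rules for multiple files can be sketched in Python as follows. This is an illustration under stated assumptions, not Dataphin's code: sequential suffixes are numbered from `_0` as in the example list (the starting index Dataphin actually uses may differ), one file is produced per concurrent task, the UUID suffix is joined with `_`, and the optional File Name Extension from Advanced Configuration is appended last. The function `output_object_names` and its parameters are hypothetical.

```python
import uuid


def output_object_names(prefix, concurrency, suffix_mode="sequence", extension=""):
    """Build one object name per concurrent task when writing multiple files.

    Hypothetical sketch: suffix_mode is "sequence" (_0, _1, ...) or "uuid".
    A suffix is always added, even when concurrency is 1.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    names = []
    for index in range(concurrency):
        if suffix_mode == "sequence":
            suffix = f"_{index}"
        elif suffix_mode == "uuid":
            suffix = f"_{uuid.uuid4()}"  # "_" separator is an assumption
        else:
            raise ValueError(f"unknown suffix_mode: {suffix_mode!r}")
        # The extension, if any, becomes the final suffix of the object name.
        names.append(f"{prefix}{suffix}{extension}")
    return names


print(output_object_names("data/orders", 3, extension=".csv"))
# ['data/orders_0.csv', 'data/orders_1.csv', 'data/orders_2.csv']
```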

Advanced Configuration

Column Delimiter

Specifies the column delimiter used when writing data to the destination file. The default is a comma (,).

Row Delimiter

Specifies the row delimiter used when writing data to the destination file. If not specified, the default is a line feed (\n).

Null Value

Optional. Defines the string representation for null values.

File Name Extension

You can configure an extension, such as `.csv` or `.text`, to use as the final suffix of the object name.

Output Field Names

Select Yes to include the field names from the upstream component as the first line in the output file, or No to exclude them.
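To see how Column Delimiter, Row Delimiter, Null Value, and Output Field Names combine, here is a minimal Python rendering sketch. It assumes values are written as plain strings with no quoting or escaping (a real CSV writer would quote values that contain the delimiter), that the header line uses the same delimiters as the data, and that every line, including the last, ends with the row delimiter. `render_output` is a hypothetical helper, not a Dataphin API.

```python
def render_output(fields, rows, column_delimiter=",", row_delimiter="\n",
                  null_value="", write_header=True):
    """Render rows as delimited text according to the settings above.

    Assumption: no quoting or escaping is applied to values.
    """
    lines = []
    if write_header:
        # Output Field Names = Yes: the first line holds the field names.
        lines.append(column_delimiter.join(fields))
    for row in rows:
        # None stands for a null value and is replaced by null_value.
        cells = [null_value if value is None else str(value) for value in row]
        lines.append(column_delimiter.join(cells))
    # Every line, including the last, ends with the row delimiter (an assumption).
    return "".join(line + row_delimiter for line in lines)


text = render_output(["user_id", "user_name"], [[1, "alice"], [2, None]],
                     column_delimiter="|", null_value="\\N")
print(text, end="")
```

Here the null in the second row is written as the two characters `\N`, so a reader can tell it apart from an empty string.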

Field Mapping

Input Fields

Displays the output fields from the upstream component.

Output Fields

Displays the output fields. You can configure output fields in the following ways:

Batch Add: Click Batch Add to configure multiple fields at once in JSON or TEXT format.

For JSON format, for example:

`[{"name": "user_id","type": "String"}, {"name": "user_name","type": "String"}]`

Note: `name` specifies the name of the imported field, and `type` specifies the data type of the field after it is imported. For example, `"name":"user_id","type":"String"` imports the `user_id` field and sets its data type to String.

For TEXT format, for example:

`user_id,String`
`user_name,String`

Field entries are separated by the row delimiter, which defaults to a line feed (\n). Supported row delimiters are line feed (\n), semicolon (;), and period (.).

The column delimiter, which defaults to a comma (,), separates the field name from the field type.

Create Output Field: Click + Create Output Field, enter a value for Column, and select a Type as prompted.

Copy Upstream Fields: Click Copy Upstream Fields to automatically generate output fields with the same names as the upstream fields.

Manage Output Fields: You can perform the following operations on added fields:

To edit a field, click the edit icon in the Actions column.

To delete a field, click the delete icon in the Actions column.
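Both Batch Add formats carry the same information: a list of field name and type pairs. The Python sketch below parses each format into the same structure so that a field list can be checked before it is pasted into the dialog. It is a hypothetical helper, not part of Dataphin; it assumes each TEXT entry contains exactly one column delimiter and it does not validate type names.

```python
import json


def parse_json_fields(text):
    """Parse the JSON Batch Add format into (name, type) pairs."""
    return [(item["name"], item["type"]) for item in json.loads(text)]


def parse_text_fields(text, row_delimiter="\n", column_delimiter=","):
    """Parse the TEXT Batch Add format into (name, type) pairs."""
    fields = []
    for entry in text.split(row_delimiter):
        entry = entry.strip()
        if not entry:
            continue  # skip blank entries, such as a trailing delimiter
        # Raises ValueError unless the entry has exactly one column delimiter.
        name, field_type = entry.split(column_delimiter)
        fields.append((name.strip(), field_type.strip()))
    return fields


json_input = '[{"name": "user_id","type": "String"}, {"name": "user_name","type": "String"}]'
text_input = "user_id,String\nuser_name,String"
print(parse_json_fields(json_input) == parse_text_fields(text_input))  # True
print(parse_text_fields("user_id,String;user_name,String", row_delimiter=";"))
# [('user_id', 'String'), ('user_name', 'String')]
```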

Mapping

Map the input fields from the source to the output fields of the target so that data is synchronized to the correct columns. The following mapping options are available:

Same-name Mapping: Maps input fields to output fields that have the same name.

Same-row Mapping: Maps input and output fields that are in the same row. Use this option when the source and target field names differ.
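The two mapping options differ only in how field pairs are chosen. A minimal Python sketch, assuming that same-name matching is exact and case-sensitive and that same-row mapping pairs fields by position and stops at the shorter list; Dataphin's actual matching rules may differ. `map_fields` is a hypothetical helper.

```python
def map_fields(input_fields, output_fields, mode):
    """Return (input_field, output_field) pairs for the chosen mapping mode."""
    if mode == "same_name":
        # Pair fields whose names match exactly (case-sensitive assumption).
        targets = set(output_fields)
        return [(name, name) for name in input_fields if name in targets]
    if mode == "same_row":
        # Pair fields by position; extra fields on either side stay unmapped.
        return list(zip(input_fields, output_fields))
    raise ValueError(f"unknown mode: {mode!r}")


source = ["id", "name", "created_at"]
target = ["user_id", "name", "create_time"]
print(map_fields(source, target, "same_name"))  # [('name', 'name')]
print(map_fields(source, target, "same_row"))
# [('id', 'user_id'), ('name', 'name'), ('created_at', 'create_time')]
```

With differently named columns, same-name mapping pairs only `name`, while same-row mapping pairs all three fields by position. This is why same-row mapping suits sources and targets whose field names differ.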

Click Confirm to complete the OSS output component configuration.