Data Transmission Service (DTS) helps you migrate data between data storage types such as relational databases, NoSQL, and OLAP. The service supports homogeneous migrations as well as heterogeneous migrations between different data storage types.

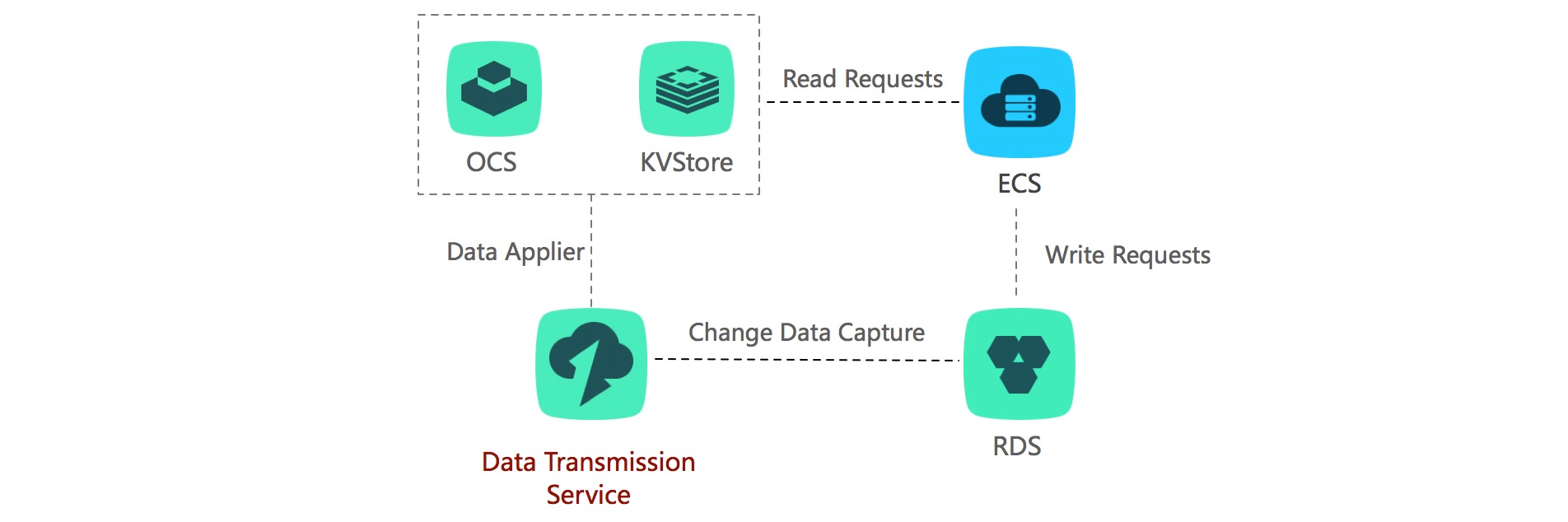

Data Transmission Service is highly available, so it can also be used for continuous data replication. It also lets you subscribe to the change data of ApsaraDB for RDS. With Data Transmission Service, you can easily implement scenarios such as data migration, remote real-time data backup, real-time data integration, and cache refresh.

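Benefits

- High performance

Existing data can be migrated at up to 70 MB/s.

Change data replication can reach 30,000 records per second or more.

- Real-time data replication

DTS supports real-time data synchronization between RDS databases.

You can modify the synchronization objects while synchronization is in progress.

- Ease of use

You can start a database migration with a few clicks in the Data Transmission Service console.

You can monitor and manage tasks in the console anytime, anywhere.

- Reliability

Data Transmission Service continuously monitors the source and target databases and, when a connection changes, dynamically adjusts it to optimize performance.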
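As a rough illustration of the figures quoted under High performance, the sketch below turns them into best-case time estimates. The function names and constants are hypothetical, 1 GB is taken as 1024 MB, and real throughput depends on the network, instance specifications, and data shape, so treat the results as lower bounds rather than predictions.

```python
# Nominal rates taken from the High performance figures above.
FULL_MIGRATION_MB_PER_S = 70
CHANGE_RECORDS_PER_S = 30_000


def min_full_migration_seconds(data_size_gb: float) -> float:
    """Best-case time to copy existing data if the nominal rate is sustained."""
    return data_size_gb * 1024 / FULL_MIGRATION_MB_PER_S


def min_backlog_drain_seconds(backlog_records: int) -> float:
    """Best-case time to replay a backlog of change records."""
    return backlog_records / CHANGE_RECORDS_PER_S


if __name__ == "__main__":
    hours = min_full_migration_seconds(500) / 3600
    print(f"500 GB needs at least {hours:.1f} hours at the nominal rate")
```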

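Features

- No downtime

Data Transmission Service helps you migrate data with virtually no downtime. All data changes that occur in the source database during migration are continuously replicated to the target, so the source database remains fully operational throughout the migration. After the migration completes, the target database can stay synchronized with the source database for as long as you choose, so you can switch databases at a convenient time.

- Support for the most widely used databases

Data Transmission Service can migrate data between most widely used commercial and open-source databases. It supports homogeneous migrations, such as MySQL to MySQL, as well as heterogeneous migrations between different database platforms, such as Oracle to MySQL.

Migrations can run between RDS databases, from on-premises databases to RDS or ECS, from databases running on ECS to RDS, and in the reverse directions.

Data Transmission Service supports multiple transmission modes, including data migration, real-time data replication, and change data subscription.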

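- Data migration

No downtime

All data changes that occur in the source database during migration are continuously replicated to the target, so the source database remains fully operational throughout the migration.

After the migration completes, the target database can stay synchronized with the source database for as long as you choose, so you can switch databases at a convenient time.

Support for the most widely used databases

Data Transmission Service supports homogeneous migrations, such as MySQL to MySQL and SQL Server to SQL Server, as well as heterogeneous migrations between different database platforms, such as Oracle to MySQL.

Migrations can run between RDS databases, from on-premises databases to ApsaraDB for RDS or Alibaba Cloud ECS, from databases running on ECS to RDS, and in the reverse directions.

- Real-time change data subscription

Data Transmission Service supports real-time subscription to RDS change data.

You can modify the subscription objects after the subscription instance is created.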

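- Automatic monitoring

Key instance information such as replication latency, transmission status, and consumption latency is provided in real time, so you can monitor and protect business-critical applications.

- Ease of use

Data Transmission Service manages the complexity of the entire migration process, including automatically replicating the data changes that occur in the source database during migration.

- Reliability

Data Transmission Service continuously monitors the source and target databases and, when a connection changes, dynamically adjusts it to optimize performance.

- High performance

Data Transmission Service supports high-performance parallel replication and network optimizations such as data compression and packet retransmission.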

Scenarios

- Database migration without downtime

- Remote data disaster recovery

- Reduced remote access

- Real-time big data analytics

- Cache refresh

- Message notification

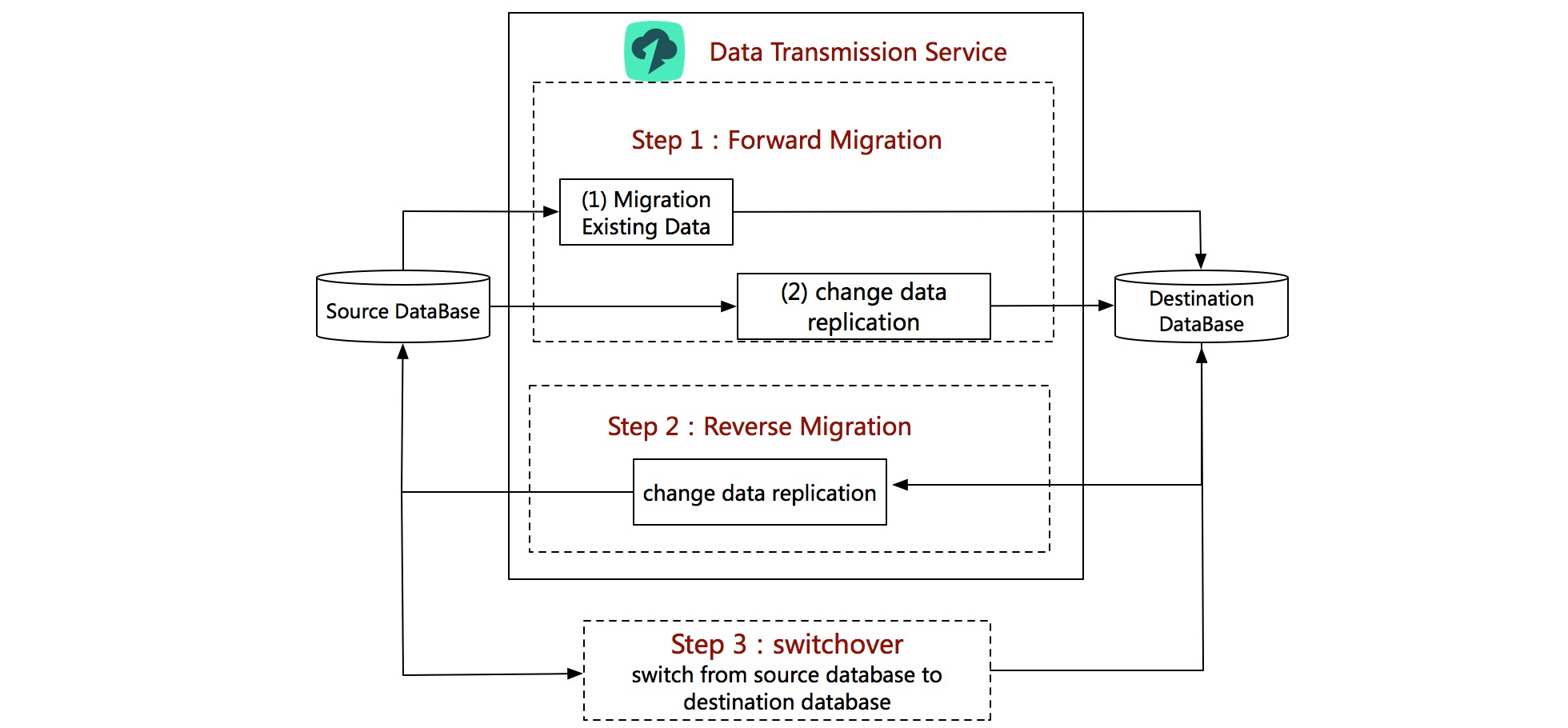

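Database migration without downtime

Data Transmission Service helps you migrate data with virtually no downtime. All data changes that occur in the source database during migration are continuously replicated to the target, so the source database remains fully operational throughout the migration. After the migration completes, the target database stays synchronized with the source database for a period you choose, so you can switch databases at a convenient time.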

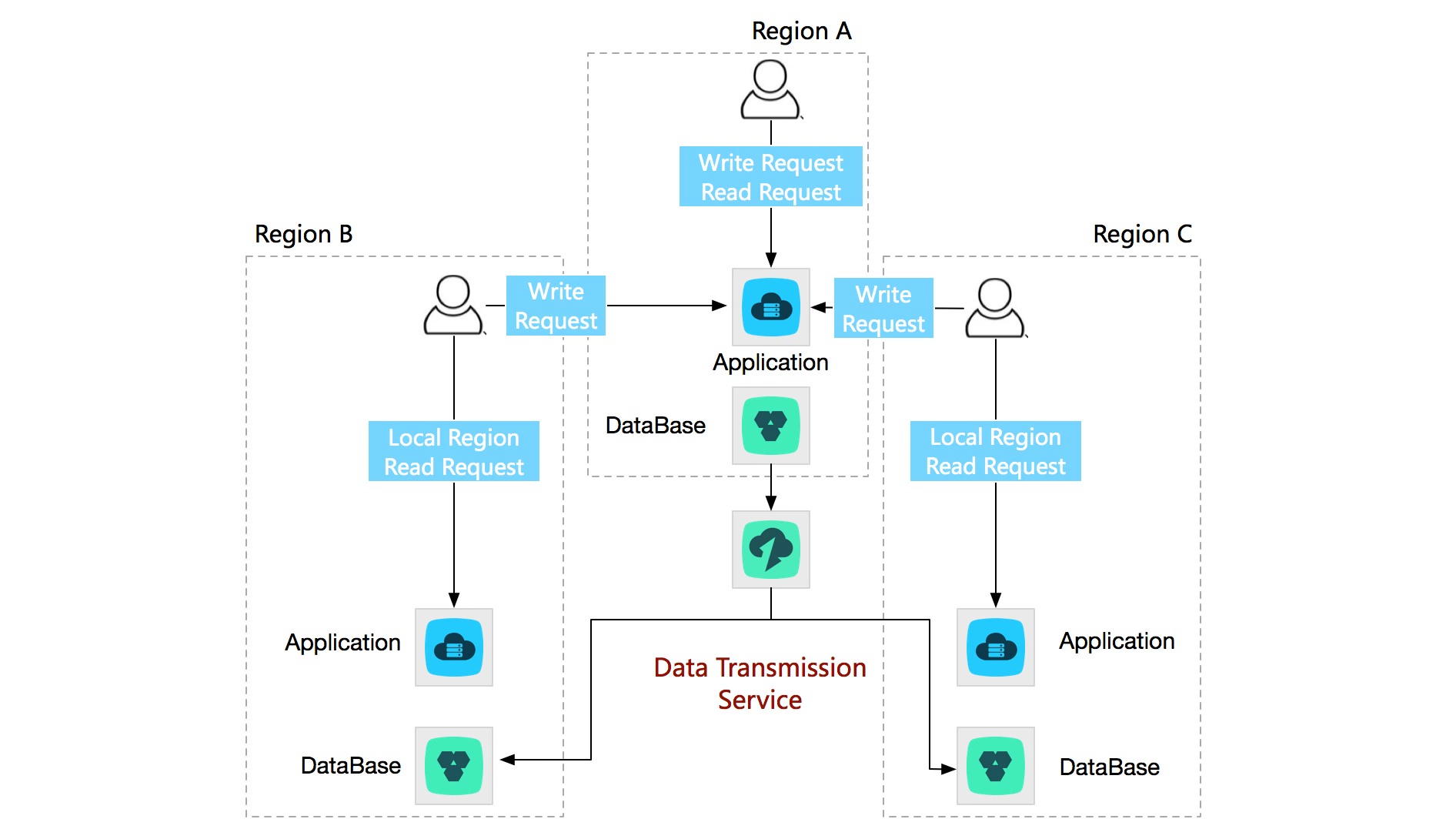

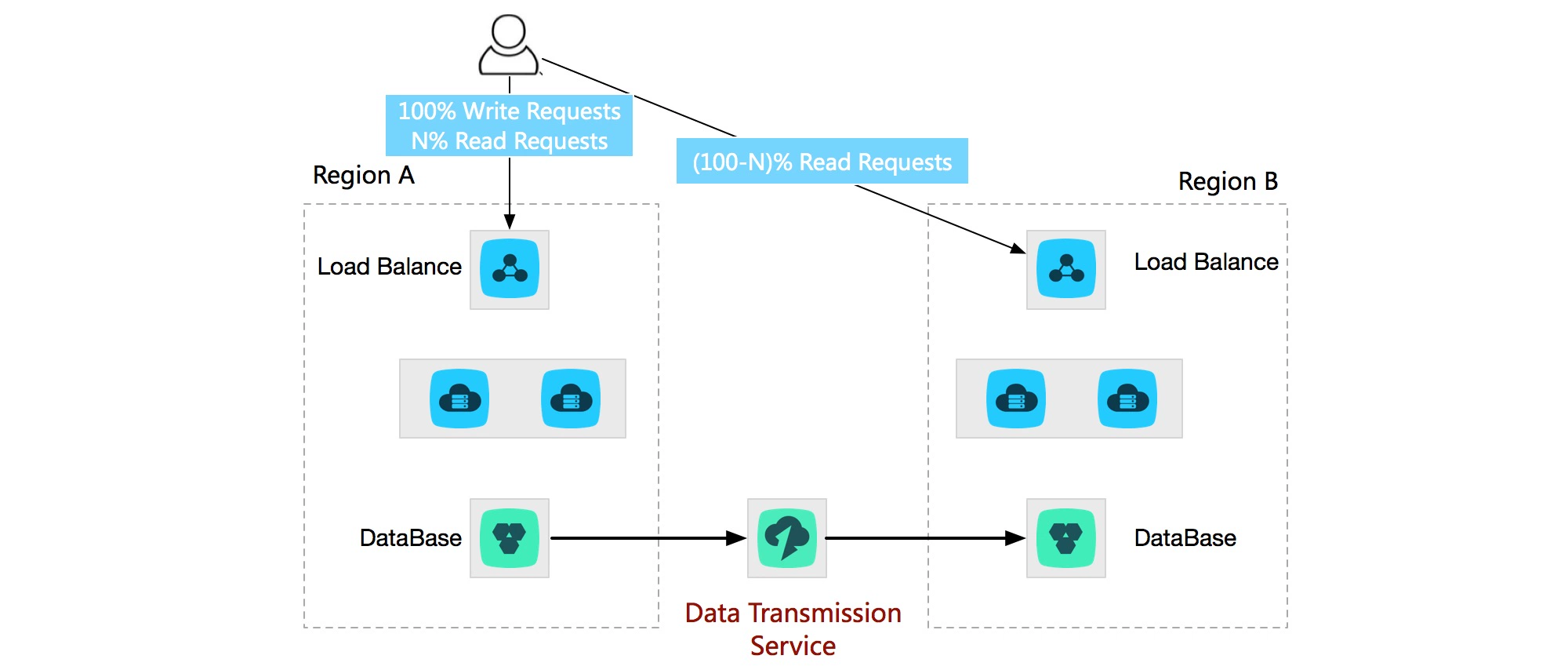

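Remote data disaster recovery

With Data Transmission Service, you can replicate data in real time between two RDS instances in different regions. The remote disaster recovery instance acts as a replica of the primary instance. If a disaster occurs, applications can switch from the primary instance to the remote disaster recovery instance to keep the business available.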

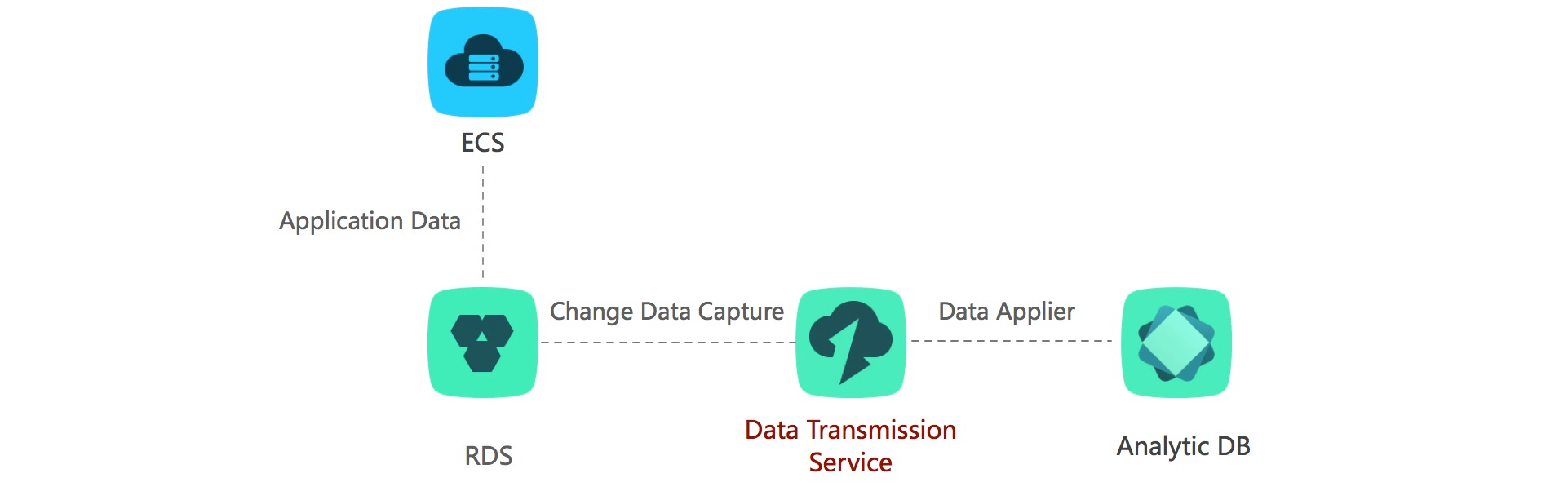

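Real-time big data analytics

Data Transmission Service provides optimized, high-performance transmission from RDS to AnalyticDB to support customers' real-time big data analytics initiatives. With this solution, customers can run ad hoc queries on low-latency data and organize and enrich it before moving to more mature analytics tools.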

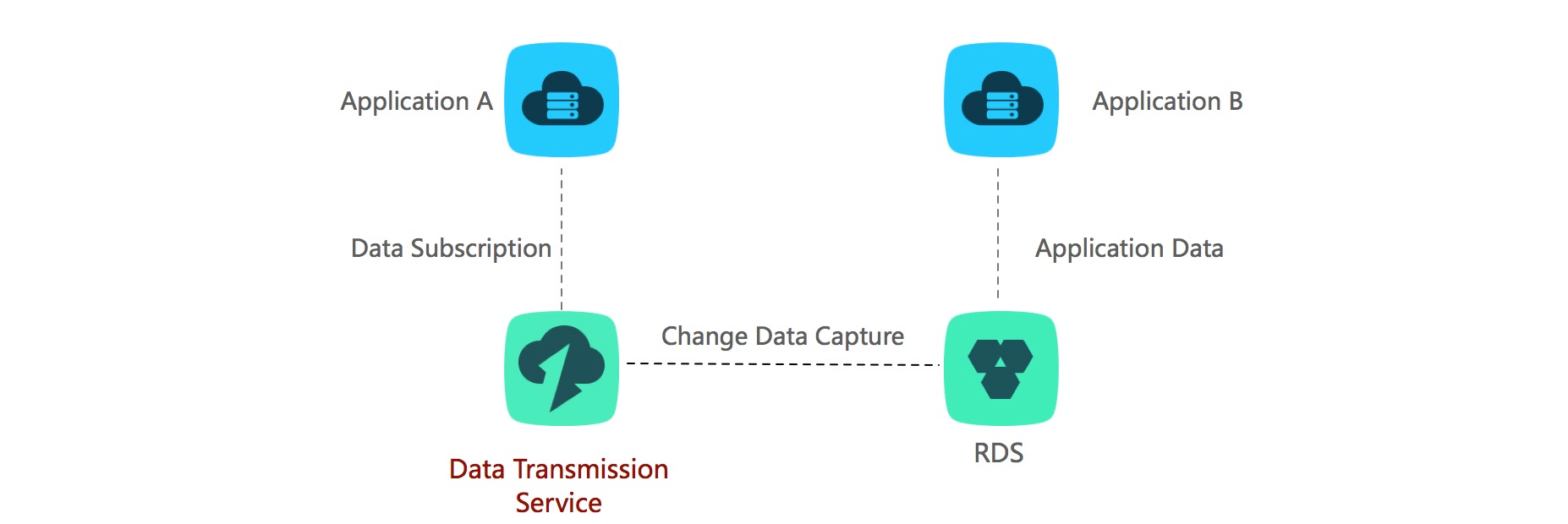

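Message notification

When two applications are asynchronously coupled, the data subscription feature of Data Transmission Service enables low-latency message notification without degrading the performance of the source application. With data subscription, the source application does not need to publish messages itself, which makes the core application more stable and reliable.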
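The idea can be sketched with a small in-memory model. This is not the DTS data subscription SDK: `ChangeRecord`, `dispatch`, and the `orders` table are hypothetical names used only to show how a downstream consumer can turn subscribed change records into notifications while the source application simply writes to its database.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ChangeRecord:
    """Hypothetical shape of one subscribed change."""
    table: str
    operation: str  # "INSERT", "UPDATE" or "DELETE"
    row: dict


Handler = Callable[[ChangeRecord], None]


def dispatch(records: List[ChangeRecord], handlers: Dict[str, List[Handler]]) -> int:
    """Pass each record to the handlers registered for its table.

    Returns the number of handler calls made.
    """
    delivered = 0
    for record in records:
        for handler in handlers.get(record.table, []):
            handler(record)
            delivered += 1
    return delivered


if __name__ == "__main__":
    sent: List[str] = []
    handlers = {"orders": [lambda r: sent.append(f"{r.operation} order {r.row['id']}")]}
    dispatch([ChangeRecord("orders", "INSERT", {"id": 7})], handlers)
    print(sent)
```

Because notifications are derived from the change stream, the source application contains no publishing code and is unaffected if a consumer is slow or offline.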

Use cases

- Multi-active geo-replication

- Cross-region and cross-AZ disaster recovery

- Cross-border data synchronization

- Cloud BI and real-time data warehousing

Example: for real-time data warehousing, DTS integrates OLTP and OLAP databases seamlessly, without downtime and with minimal setup.

Watch the video to see how DTS simplifies data workflows.

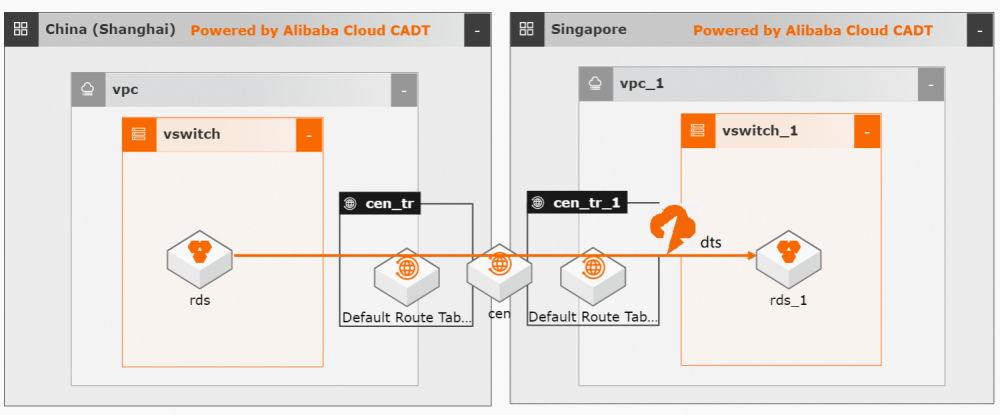

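Through integration with Cloud Enterprise Network (CEN), the Data Transmission Service (DTS) solution enables real-time cross-region and cross-border data replication that meets compliance requirements, and it also provides seamless connectivity between on-premises environments and cloud infrastructure. This improves data accessibility and reliability wherever your data resides.

See how the solution hardens your business against potential failures and enables efficient global database synchronization.

- Connect an on-premises database to DTS by using CEN

- Best practices: cross-border database synchronization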

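Related resources

Hands-on

Set up database performance benchmarking on Alibaba Cloud

This document provides guidance for implementing database performance tests based on commonly used benchmarks, including TPC (Transaction Processing Performance Council) and sysbench.

Hands-on

Deploy a multi-region application on Alibaba Cloud

With this solution, you can deploy an application across multiple regions, connect the regional networks to a central internal network, migrate an Express Connect-based deployment to a CEN-based deployment, and deploy replication between cross-region ApsaraDB for RDS database systems.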

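FAQ

1. Does Data Transmission Service support data migration between RDS instances that belong to two different Alibaba Cloud accounts?

Yes. When you migrate data between RDS instances in different Alibaba Cloud accounts, you must log in to the Data Transmission Service console with the account that owns the target RDS instance.

When you configure the migration task, select a self-managed database with a public IP address as the source instance type, and then configure the connection to the source RDS instance.

2. Does Data Transmission Service migrate changes made to the source instance during data migration?

Yes. All data changes that occur in the source database during migration are continuously replicated to the target. With Data Transmission Service, the source database remains fully operational throughout the migration.

3. How does change data migration work in Data Transmission Service?

Change data migration in Data Transmission Service works as follows.

During data migration, Data Transmission Service first starts a log parsing module that captures and parses the change logs of the source database in real time. It then starts migrating the existing data. After the existing data is loaded, Data Transmission Service replicates the captured change data to the target instance, and the target database stays synchronized with the source database for the period you choose.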
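The ordering described in this answer (capture first, then load, then replay) can be sketched with plain dictionaries standing in for databases. This is a simplified model under stated assumptions, not the DTS implementation: `migrate`, the `(op, key, value)` change tuples, and the in-memory snapshot are all hypothetical.

```python
def migrate(source: dict, changes_during_load: list) -> dict:
    """Model of capture-then-load-then-replay using dicts as databases.

    Each change is a tuple (op, key, value) with op "upsert" or "delete".
    """
    captured = []            # 1. change capture starts before the load
    snapshot = dict(source)  # 2. existing data is copied from a snapshot

    # Writes keep arriving at the source while the load is running,
    # and every one of them is also recorded by the capture step.
    for op, key, value in changes_during_load:
        if op == "delete":
            source.pop(key, None)
        else:
            source[key] = value
        captured.append((op, key, value))

    target = snapshot        # the existing-data load has finished

    # 3. Replay the captured changes so the target catches up.
    for op, key, value in captured:
        if op == "delete":
            target.pop(key, None)
        else:
            target[key] = value
    return target
```

Starting capture before the load is what guarantees that no write made during the load is lost: anything the snapshot missed is present in the captured changes.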

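4. Are tables locked during data migration with Data Transmission Service?

If you select both existing data migration and change data replication, Data Transmission Service checks during the full data migration whether the source database contains non-transactional tables without a primary key (for example, MyISAM tables). If such tables exist, Data Transmission Service places a read-only lock on them to keep the migrated data consistent. In all other cases, Data Transmission Service does not lock the source database.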
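The rule in this answer reduces to a single condition, sketched below. The function name and the set of transactional engines are assumptions for illustration; only MyISAM is named in the text as a non-transactional example.

```python
# Assumption for illustration: engines treated as transactional.
TRANSACTIONAL_ENGINES = {"InnoDB"}


def needs_read_lock(engine: str, has_primary_key: bool,
                    full_and_incremental: bool) -> bool:
    """True if the table would get a read-only lock during the full load."""
    return (
        full_and_incremental
        and engine not in TRANSACTIONAL_ENGINES
        and not has_primary_key
    )
```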

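5. Which network (intranet or Internet) does Data Transmission Service use to access an ECS instance during data migration?

If the network type of the ECS instance is VPC, Data Transmission Service connects to the ECS instance over the Internet.

If the ECS instance is the source instance of the migration task and is in a different region from the target instance, Data Transmission Service connects to the ECS instance over the Internet.

Otherwise, Data Transmission Service connects to the ECS instance over the intranet.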
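The three rules above can be written as a small decision function. This is only a restatement of the answer in code; the function name, the parameters, and the `"classic"` label for a non-VPC network are hypothetical.

```python
def ecs_network(network_type: str, is_source: bool,
                same_region_as_target: bool) -> str:
    """Return "internet" or "intranet" following the rules in the answer."""
    if network_type.upper() == "VPC":
        return "internet"
    if is_source and not same_region_as_target:
        return "internet"
    return "intranet"
```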

6. Which network (intranet or Internet) does Data Transmission Service use to access an RDS instance during data migration?

If the RDS instance is the source instance of the migration task and is in a different region from the target instance, Data Transmission Service connects to the RDS instance over the Internet.

Otherwise, Data Transmission Service connects to the RDS instance over the intranet.

7. Does Data Transmission Service synchronize DDL operations from the source instance?

If the database type of the source instance is MySQL or MongoDB, DDL operations are synchronized.

Otherwise, DDL operations are not synchronized.

8. Does Data Transmission Service support migrating a database on an ECS instance in a VPC to an RDS instance?

Yes, but the ECS instance must be associated with an EIP address. When you configure the migration task, select the ECS instance as the source instance. Data Transmission Service accesses the ECS instance through its EIP address.

9. From which database (active or standby) does Data Transmission Service capture data during data migration?

During data migration, Data Transmission Service captures data from the active database of the RDS instance.

10. Can Data Transmission Service migrate database C on RDS instance A to database D on RDS instance B?

Yes. Data Transmission Service supports database name mapping, which allows data to be migrated between two differently named databases on two RDS instances.

11. Is the data in the source database deleted after migration with Data Transmission Service?

No. Data Transmission Service only copies data from the source database during migration, so the data in the source database is not affected.

12. Why is "Failed to obtain the structure object "[java.sql.SQLException: I/O exception: The Network Adapter co" reported?

This error means that Data Transmission Service failed to connect to the source database. Possible causes are:

(1) The connection address is incorrect.

(2) A firewall is enabled for the local database.

(3) Remote listening is not enabled for the database.
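All three causes show up as a failed TCP connection, so a quick reachability check from another machine can help narrow them down. The sketch below uses only the Python standard library; `can_connect` is a hypothetical helper, and the host and port in the usage example are placeholders rather than values from this page.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution errors.
        return False


if __name__ == "__main__":
    # Placeholder address: replace with your database host and listener port.
    print(can_connect("db.example.com", 1521))
```

A `False` result does not say which of the three causes applies, but a `True` result rules out the firewall and listener as the problem for the machine running the check.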

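13. What is the "increment_trx" table that is created in the target database during data migration?

The "increment_trx" table is created by Data Transmission Service. It is mainly used to record migration checkpoints. If a task is interrupted, Data Transmission Service automatically restarts the process and continues the migration from the recorded checkpoint.

Do not delete this table. If you do, the migration task fails.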
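Resuming from a checkpoint can be sketched as follows. The actual layout of "increment_trx" is not described here, so this model keeps the checkpoint as a plain integer (the number of change-log entries already applied); `resume_replication` and its parameters are hypothetical.

```python
from typing import Callable, List


def resume_replication(change_log: List[str], checkpoint: int,
                       apply: Callable[[str], None]) -> int:
    """Apply the entries that come after `checkpoint`.

    `checkpoint` is the count of entries already applied.
    Returns the updated checkpoint.
    """
    for position, change in enumerate(change_log[checkpoint:], start=checkpoint + 1):
        apply(change)
        checkpoint = position  # record progress after each applied change
    return checkpoint
```

If the checkpoint is lost, the task no longer knows where to continue, which is consistent with the warning above against deleting the table.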

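14. Why is the target RDS instance larger than the source database after migration with Data Transmission Service?

Data Transmission Service migrates data by executing SQL statements, which generates binlogs on the target instance. As a result, the target RDS instance is larger than the source database after migration.

15. Why does the error "java.sql.BatchUpdateException: INSERT, DELETE command denied to user 'user'" occur?

The usual cause is that the target RDS instance is locked (for example, because its storage space is exhausted) and the write permission of the account has been revoked.

To resolve the issue, upgrade the storage space of the target RDS instance and restart the task in the Data Transmission Service console.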

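16. Is data in the tables of the target database overwritten during data migration with Data Transmission Service?

No. The tables to be migrated must be empty in the target instance before the migration. If a table to be migrated already exists in the target database, the precheck fails.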
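Before starting a task, you can compare table names yourself to predict this precheck outcome. The sketch below is an assumption-labeled helper that works on lists of names you have already collected; `precheck_conflicts` is hypothetical and is not part of any DTS tool.

```python
from typing import Iterable, List


def precheck_conflicts(tables_to_migrate: Iterable[str],
                       existing_target_tables: Iterable[str]) -> List[str]:
    """Return the tables that already exist in the target, sorted by name.

    An empty result means no name conflict was found.
    """
    return sorted(set(tables_to_migrate) & set(existing_target_tables))
```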

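17. How do I migrate a database to an RDS instance from a different Alibaba Cloud account?

Log in to the Data Transmission Service console with the Alibaba Cloud account that owns the target RDS instance. Set the source instance type to a self-managed database, and then configure the connection to the source RDS instance.

18. Does releasing a completed migration task affect the use of the migrated database?

No.

19. Does Data Transmission Service support synchronization between self-managed databases and RDS instances?

Yes. You can use Data Transmission Service to synchronize data between cloud instances and self-managed databases.

20. Which network (intranet or Internet) is used during data synchronization with Data Transmission Service?

Data Transmission Service transmits data over the intranet during data synchronization.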

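21. Why can the data subscription SDK not subscribe to any messages, and why is "client partition is empty, wait partition balance" always reported?

The data subscription SDK cannot subscribe to any messages, and the message "client partition is empty, wait partition balance" is always reported.

22. Why does the data subscription SDK report "keep alive error"?

The consumption timestamp is not within the data range of the data subscription instance. Modify the consumption timestamp and restart the SDK.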
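A simple range check can catch this before the SDK is started. The sketch assumes you have read the start and end of the subscription instance's data range yourself; `timestamp_in_range` is a hypothetical helper, not an SDK call.

```python
from datetime import datetime


def timestamp_in_range(consumption_ts: datetime,
                       range_start: datetime,
                       range_end: datetime) -> bool:
    """True if the consumption timestamp lies inside the data range (inclusive)."""
    return range_start <= consumption_ts <= range_end
```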

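23. Why does the system report "failed to get master store addr for topic aliyun_sz_ecs_ApsaraDBr*****y-1-0" when I use the data subscription feature?

First, check whether usePublicIp is set to true in the SDK.

If usePublicIp = true, check whether the consumption timestamp is within the data range of the subscription instance. If it is not, modify the consumption timestamp and restart the SDK.

24. Why does the system report "Specified signature is not matched with our calculation. at com.aliyuncs.DefaultAcsClient.parseAcsResponse(DefaultAcsClient.java:139) at" when I start the data subscription SDK?

The AccessKey ID and AccessKey secret configured in the SDK do not belong to the Alibaba Cloud account that owns the subscription instance. Correct the AccessKey ID and AccessKey secret, and then restart the SDK.

25. Can an SDK client subscribe to multiple channels?

No.

26. Why does the system report "get guid info failed" when I start an SDK subscription?

The subscription instance ID set in the SDK is incorrect. Replace the subscription instance ID in the sample client code with the ID of the subscription instance that you want to subscribe to.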