MaxCompute (previously known as ODPS) is a general-purpose, fully managed, multi-tenant data processing platform for large-scale data warehousing. MaxCompute supports various data import solutions and distributed computing models, enabling users to query massive datasets efficiently, reduce production costs, and keep data secure.

Benefits

Large-scale computing and storage

Supports exabyte (EB)-scale data storage and computing.

Multiple computational models

Supports SQL, MapReduce, and Graph computational models and Message Passing Interface (MPI) iterative algorithms.

Reliable data security measures

Has provided stable offline analysis services for more than seven years, with multi-level sandbox protection and monitoring.

Cost-effective

Provides more efficient computing and storage services than an enterprise private cloud, while reducing purchase costs by 20% to 30%.

Features

Data channel

Supports multiple parallel tunnels for full and historical data, and real-time tunnels for incremental data.

Multiple tunnels for full and historical data

MaxCompute transmits data through tunnels. Tunnels are scalable and import and export petabytes (PB) of data every day. You can import full or historical data through multiple tunnels. The Tunnel service provides a Java SDK, and you can also run commands in the MaxCompute client to exchange files and data with the cloud.
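The idea of importing a large batch through several tunnels at once can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the MaxCompute Tunnel SDK: `FakeUploadSession`, `write_block`, `commit`, `split_into_blocks`, and `parallel_upload` are hypothetical names, and the in-memory session only mimics the general pattern of uploading independent blocks concurrently and making them visible in a single commit.

```python
from concurrent.futures import ThreadPoolExecutor
from threading import Lock


class FakeUploadSession:
    """Hypothetical in-memory stand-in for a tunnel upload session.

    Blocks are staged independently and become visible only on commit.
    This is NOT the MaxCompute Tunnel SDK.
    """

    def __init__(self):
        self._staged = {}   # block_id -> rows uploaded but not yet visible
        self._lock = Lock()
        self.table = []     # rows visible to readers after commit

    def write_block(self, block_id, rows):
        with self._lock:
            self._staged[block_id] = list(rows)

    def commit(self, block_ids):
        # Publish every block at once; refuse if any block never arrived.
        missing = [b for b in block_ids if b not in self._staged]
        if missing:
            raise RuntimeError(f"blocks not uploaded: {missing}")
        for b in sorted(block_ids):
            self.table.extend(self._staged.pop(b))


def split_into_blocks(rows, num_blocks):
    """Cut rows into at most num_blocks contiguous chunks."""
    size = max(1, -(-len(rows) // num_blocks))  # ceiling division
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def parallel_upload(session, rows, num_tunnels=4):
    """Upload chunks concurrently (one hypothetical 'tunnel' each), then commit once."""
    blocks = split_into_blocks(rows, num_tunnels)
    with ThreadPoolExecutor(max_workers=num_tunnels) as pool:
        futures = [pool.submit(session.write_block, block_id, block)
                   for block_id, block in enumerate(blocks)]
        for future in futures:
            future.result()  # re-raise any upload error before committing
    session.commit(list(range(len(blocks))))
    return len(blocks)
```

For example, uploading `list(range(10))` with `num_tunnels=4` produces four blocks and leaves all ten rows in `session.table` in their original order. Committing once at the end means that, in this sketch, readers never see a half-imported batch.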

Real-time incremental data tunnels

MaxCompute provides the DataHub service for uploading real-time data. DataHub offers low latency and is easy to use, which makes it well suited to importing incremental data. It supports multiple data transmission plug-ins, such as Logstash, Flume, Fluentd, and Sqoop. You can also use Log Service to ship logs to MaxCompute and then use big data development kits for log analysis and mining.
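Incremental import means shipping only the records that arrived since the last run. The sketch below illustrates that idea with a timestamp watermark. It is an assumption-laden illustration in plain Python, not DataHub's API; `LogRecord`, `IncrementalShipper`, and its `ship` method are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogRecord:
    ts: int        # event time, e.g. epoch seconds (assumed field)
    message: str


class IncrementalShipper:
    """Hypothetical shipper that forwards only records newer than its watermark."""

    def __init__(self):
        self.watermark = -1   # highest timestamp shipped so far
        self.shipped = []     # stands in for the destination table

    def ship(self, batch):
        """Append records newer than the watermark; return how many were shipped."""
        new = sorted((r for r in batch if r.ts > self.watermark), key=lambda r: r.ts)
        self.shipped.extend(new)
        if new:
            self.watermark = new[-1].ts
        return len(new)
```

Tracking the watermark by timestamp keeps the example short, but it drops a late record whose timestamp equals one already shipped. Streaming services generally track per-shard sequence numbers or offsets instead, which avoids that gap.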

Data storage in two-dimensional tables

MaxCompute stores all data in tables and hides the underlying file system from users. Compressed columnar storage achieves a high compression ratio, typically 5, which greatly reduces storage costs.
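Two ideas in this paragraph can be made concrete. A compression ratio of 5 means stored data occupies one fifth of its raw size, so 10 TB of raw data needs roughly 2 TB of storage. Columnar layouts compress well because values within one column tend to repeat; run-length encoding, shown below, is one simple technique that exploits this. It is used here only as an illustration, since this document does not describe which encodings MaxCompute actually applies. The function names are hypothetical.

```python
from itertools import groupby


def run_length_encode(column):
    """Encode runs of identical adjacent values as (value, count) pairs."""
    return [(value, sum(1 for _ in run)) for value, run in groupby(column)]


def stored_size(raw_size, compression_ratio=5.0):
    """Physical size after compression at the given ratio (raw / ratio)."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return raw_size / compression_ratio


# Row-oriented records; the "region" column alone is highly repetitive.
rows = [("CN", 2023), ("CN", 2023), ("CN", 2024), ("US", 2024), ("US", 2024)]
region_column = [r[0] for r in rows]
print(run_length_encode(region_column))  # [('CN', 3), ('US', 2)]
print(stored_size(10.0))                 # 2.0
```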

Computational models

Supports multiple computational models, such as SQL, MapReduce, and Graph.

SQL

MaxCompute SQL combines standard SQL syntax with Hive syntax and closely resembles Hive Query Language (HiveQL, or HQL), so programmers familiar with SQL or HQL can use MaxCompute SQL easily. To run SQL jobs, MaxCompute uses a computing framework that is more efficient than a conventional MapReduce model. However, MaxCompute SQL does not support transactions, indexes, or UPDATE and DELETE operations.
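Without row-level UPDATE or DELETE, a common workaround is to rewrite a whole table or partition with the desired rows (in MaxCompute SQL this is usually expressed with an INSERT OVERWRITE statement). The Python sketch below models that pattern on a toy in-memory table; `PartitionedTable`, `update_where`, `delete_where`, and the `ds=...` partition name are hypothetical and only illustrate the read-transform-overwrite cycle.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]


class PartitionedTable:
    """Toy table whose partitions can only be overwritten, never edited row by row."""

    def __init__(self):
        self._partitions: Dict[str, List[Row]] = {}

    def read(self, partition: str) -> List[Row]:
        # Return copies so callers cannot mutate stored rows in place.
        return [dict(r) for r in self._partitions.get(partition, [])]

    def overwrite(self, partition: str, rows: List[Row]) -> None:
        self._partitions[partition] = [dict(r) for r in rows]


def update_where(table: PartitionedTable, partition: str,
                 predicate: Callable[[Row], bool],
                 change: Callable[[Row], Row]) -> None:
    """Emulate UPDATE ... WHERE by rewriting the whole partition."""
    new_rows = [change(r) if predicate(r) else r for r in table.read(partition)]
    table.overwrite(partition, new_rows)


def delete_where(table: PartitionedTable, partition: str,
                 predicate: Callable[[Row], bool]) -> None:
    """Emulate DELETE ... WHERE by rewriting the partition without matching rows."""
    table.overwrite(partition, [r for r in table.read(partition) if not predicate(r)])
```

The cost of this approach grows with partition size rather than with the number of changed rows, which is why tables in such systems are commonly partitioned (for example by date) so that each rewrite touches only a small slice.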

MapReduce

MaxCompute provides a Java MapReduce programming model. Because MaxCompute exposes no file API, jobs read input from and write output to tables. As a result, MapReduce in MaxCompute differs from open-source implementations such as Hadoop MapReduce. The modified model is somewhat less flexible (for example, you cannot customize the sorting and hashing algorithms), but it simplifies development. More importantly, MaxCompute provides the Extended MapReduce (MR²) model, in which a Map operation can be followed by multiple Reduce operations.

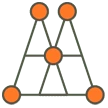
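The MR² data flow can be simulated in memory to show how a second Reduce stage consumes the first one's output directly. This is a hypothetical sketch in plain Python, not MaxCompute's Java MapReduce API: `run_mr2`, `shuffle`, and the example mapper and reducers are illustrative names. The example counts words, then groups the words by how often they occur.

```python
from collections import defaultdict


def shuffle(pairs):
    """Group values by key and sort by key, as the framework does between stages."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return sorted(groups.items())


def run_mr2(records, mapper, reducers):
    """Run one map stage followed by any number of reduce stages."""
    pairs = [kv for record in records for kv in mapper(record)]
    for reducer in reducers:
        pairs = [out for key, values in shuffle(pairs)
                 for out in reducer(key, values)]
    return pairs


def word_mapper(line):
    for word in line.split():
        yield (word.lower(), 1)


def count_reducer(word, ones):
    # Emit (frequency, word) so the next reduce stage groups by frequency.
    yield (sum(ones), word)


def group_by_frequency(frequency, words):
    yield (frequency, sorted(words))


print(run_mr2(["a b a", "b c a"], word_mapper, [count_reducer, group_by_frequency]))
# [(1, ['c']), (2, ['b']), (3, ['a'])]
```

With a classic MapReduce model, the second grouping would need a separate job with an identity Map step that rereads the first job's output; chaining reducers after one Map is the shape that avoids that extra step.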

Graph

For complex iterative computations such as K-Means and PageRank, MapReduce jobs take a long time to finish, because every iteration runs as a separate job that rereads and rewrites its data. MaxCompute therefore provides the Graph model to run these tasks efficiently.
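Vertex-centric graph frameworks typically run iterative algorithms as a sequence of supersteps: in each one, every vertex sends messages along its edges and then updates its own value, so the graph stays in place between iterations. The sketch below applies that pattern to PageRank in plain Python. It is not the MaxCompute Graph API; `pagerank_supersteps` is a hypothetical name, and the code assumes every vertex has at least one outgoing edge and that every edge target is itself a vertex.

```python
def pagerank_supersteps(edges, damping=0.85, supersteps=30):
    """Vertex-centric PageRank.

    edges: dict mapping each vertex to a non-empty list of out-neighbours
    (assumption: no dangling vertices, all targets are keys of edges).
    """
    vertices = list(edges)
    n = len(vertices)
    rank = {v: 1.0 / n for v in vertices}
    for _ in range(supersteps):
        inbox = {v: 0.0 for v in vertices}
        # Send phase: each vertex splits its rank evenly among its out-neighbours.
        for v, targets in edges.items():
            share = rank[v] / len(targets)
            for t in targets:
                inbox[t] += share
        # Compute phase: each vertex updates its value from the messages it received.
        rank = {v: (1 - damping) / n + damping * inbox[v] for v in vertices}
    return rank
```

On a cycle such as `a -> b -> c -> a`, every vertex keeps a rank of 1/3, and without dangling vertices the ranks always sum to 1.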

Security

MaxCompute is a multi-tenant computing platform. By default, tenants are isolated and do not share data. However, a tenant can grant permissions on specific data to other members of the same project group.
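The default-deny behavior described here can be modeled with a small access check. This sketch is an assumption, not MaxCompute's permission system, which is richer: `Project`, `add_member`, `grant_read`, and `can_read` are hypothetical, and the model simply denies access unless the user owns the project or is a project member who was explicitly granted access to a table.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    """Hypothetical tenant project: data is private unless explicitly shared."""
    name: str
    owner: str
    members: set = field(default_factory=set)
    grants: dict = field(default_factory=dict)  # table name -> users granted read access

    def add_member(self, user):
        self.members.add(user)

    def grant_read(self, granter, table, user):
        # Only the owner may share, and only with members of this project.
        if granter != self.owner:
            raise PermissionError("only the project owner can grant access in this sketch")
        if user not in self.members:
            raise PermissionError(f"{user} is not a member of project {self.name}")
        self.grants.setdefault(table, set()).add(user)

    def can_read(self, user, table):
        # Default deny: outsiders and ungranted members are refused.
        if user == self.owner:
            return True
        return user in self.members and user in self.grants.get(table, set())
```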

Customer Scenarios

- Cost-effective Investment and Maintenance
- Data Warehouses
- Big Data Analysis for Logs
- Refined Management
- Big Data-based Precision Marketing
- Analysis of Large Amounts of Marketing Data

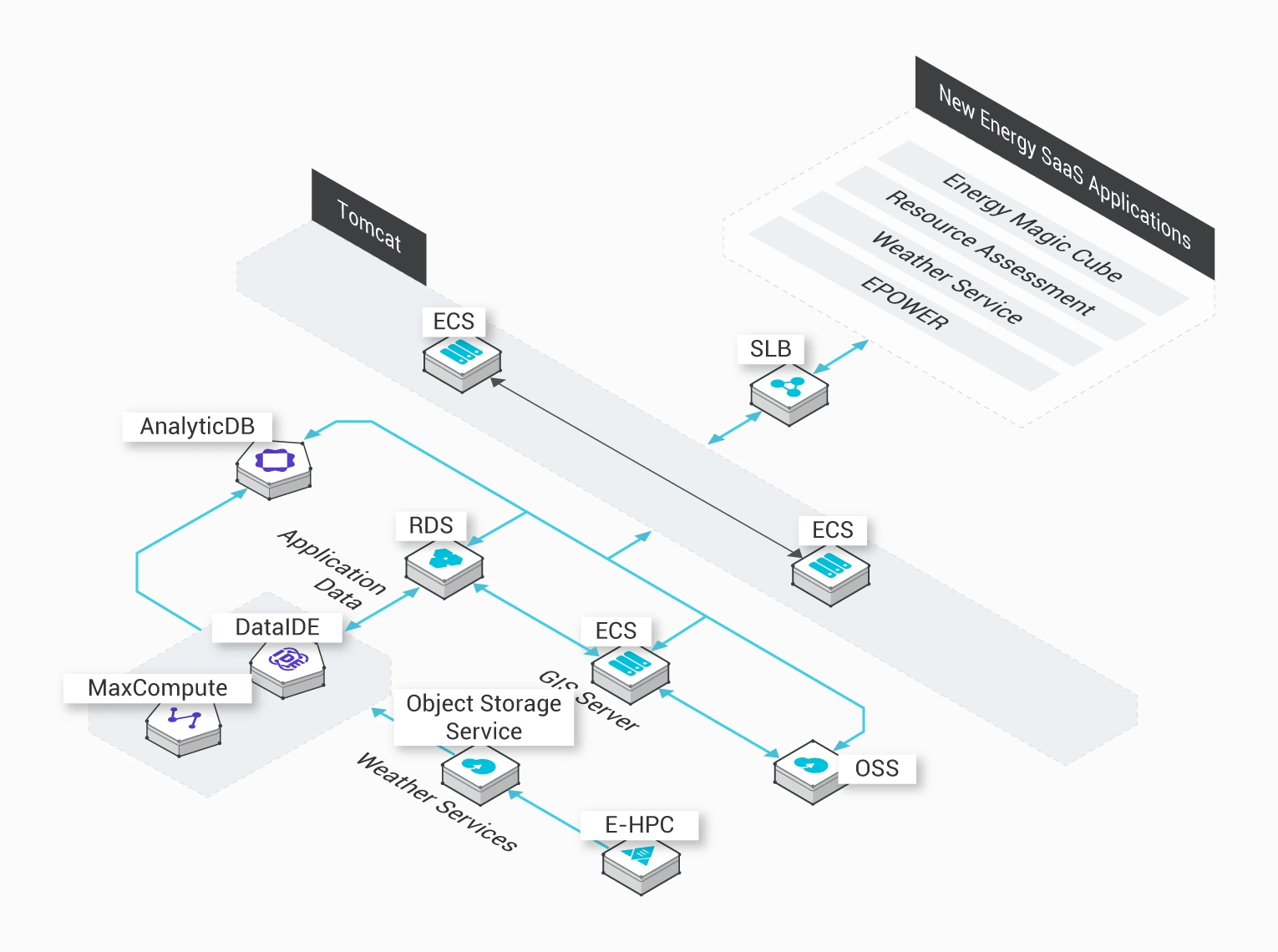

East Environment Energy

Cost-effective and quick data uploads to the cloud

Instead of building its own big data platform, East Environment Energy migrated all related services to Alibaba Cloud MaxCompute within three months. The migration shortened data processing time by more than two thirds, and MaxCompute also keeps the company's green energy usage data secure in the cloud.

Customer benefits

Focus on core business

With all related services migrated to the cloud within three months, East Environment Energy can direct ample cloud resources to its core business.

Cost-effective investment and maintenance

MaxCompute greatly reduces the need to invest in manpower, materials, and research and development.

Secure and reliable

Comprehensive services and stable performance keep data secure in the cloud.

Xiaohongchun

Data warehouses

Data warehouses are vital to cloud computing and big data applications and continue to evolve. Xiaohongchun uses MaxCompute to build its data warehouse.

Solution

Upload data to the cloud

Step 1: Synchronize data to MaxCompute using DataX and tunnels.

Scrub data

Step 2: Synchronize and scrub data in DataWorks, which integrates with other Alibaba Cloud services.

Display data

Step 3: Quick BI generates reports from the data produced by extract, transform, load (ETL) and online analytical processing (OLAP) in DataWorks.

Moji Weather

Efficient development and cost-effective storage and computing

Moji Weather migrated its log analysis business to MaxCompute, which increased development efficiency fivefold and reduced storage and computing costs by 70%. MaxCompute processes and analyzes 2 TB of logs each day to support personalized operations strategies.

Customer benefits

Enhanced business efficiency

MaxCompute analyzes all logs using SQL, increasing business efficiency more than fivefold.

Improved storage efficiency

MaxCompute reduces storage and computing costs by 70% while improving performance and stability.

Easy application of big data

MaxCompute provides multiple open-source plug-ins that make migration to the cloud easy.

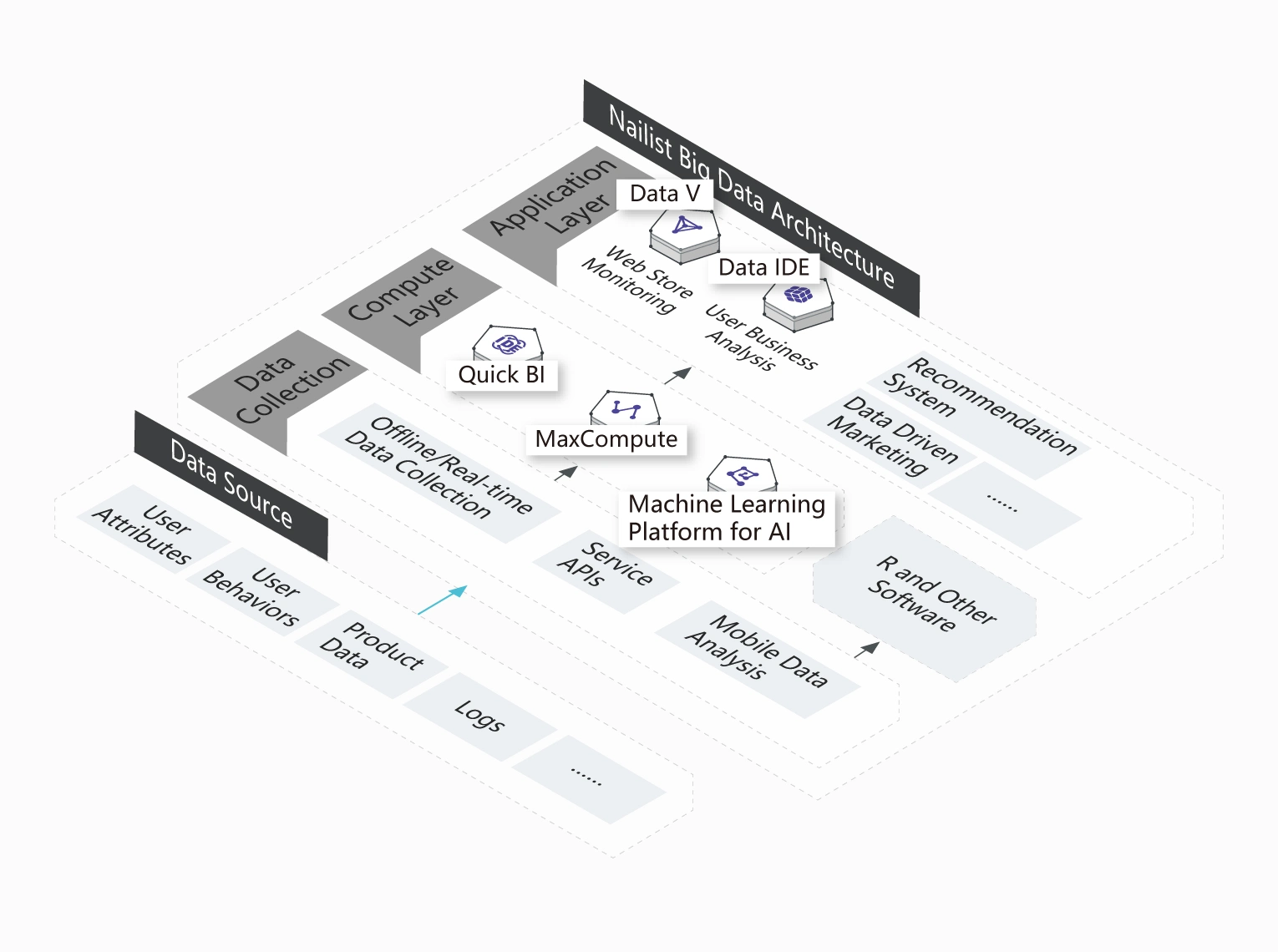

Nailist

Efficient use of large amounts of data for the refined management of millions of users

Nailist focuses mainly on e-commerce and serves millions of users, so it needs to extract maximum value from its large volume of user data to improve the user experience.

Customer benefits

Improved business insight

With MaxCompute, Nailist can manage its millions of users at a fine-grained level.

Data-based business

MaxCompute provides analysis and monitoring of business data to enhance business efficiency.

Quick response to business demands

MaxCompute scales to meet the growing demand for business data analysis.

Huihe Marketing

Big data-based precision marketing

Huihe Marketing used MaxCompute to build its core big data precision marketing platform. On this platform, MaxCompute stores all logs, and DataWorks performs offline scheduling and analysis.

Benefits

Cost-effective analysis of large amounts of data

Performs statistical analysis of large volumes of logs at a low development cost.

Real-time data searching and analysis

The system responds to customer search requests in milliseconds, returning the number of users with a specified tag.

User-friendly machine learning platform

The revenue of a precision marketing provider depends on the quality of its algorithm models, so an easy-to-use machine learning platform is essential.

Ping++

Analysis of large amounts of marketing data

Ping++ processes millions of transactions each day, so it needs a secure, reliable, and stable big data platform that can analyze large volumes of transaction data to improve business efficiency and increase user loyalty.

Customer benefits

Innovative

The comprehensive big data platform provides a variety of features, such as storage, computing, BI, and machine learning.

Quick and cost-effective

As an Internet-based enterprise, Ping++ can obtain these capabilities quickly and cost-effectively.

Secure, stable, and reliable

The system provides strict data privacy and protection. Users can only analyze their own data.
