Data Studio is an intelligent data lakehouse development platform built on Alibaba Cloud's decades of big data experience. It supports a wide range of Alibaba Cloud computing services and delivers capabilities for intelligent ETL development, data catalog management, and cross-engine workflow orchestration. With personal development environments that support Python development, Notebook analysis, and Git integration, along with a rich plugin ecosystem, Data Studio enables integrated real-time and offline processing, data lakehouse unification, and big data and AI workflows across the entire "Data+AI" lifecycle.

Introduction to Data Studio

Data Studio integrates with Alibaba Cloud big data and AI computing services—including MaxCompute, EMR, Hologres, Flink, and PAI—to provide ETL development services for data warehouses, data lakes, and OpenLake data lakehouse architectures.

| Capability | Description |

|---|---|

| Data lakehouse and multi-engine support | Access data in data lakes (such as OSS) and data warehouses (such as MaxCompute) through a unified data catalog. Run multi-engine hybrid development with a wide range of engine nodes. |

| Flexible workflows and scheduling | Orchestrate cross-engine tasks visually on a DAG canvas using flow control nodes. Supports both time-driven recurring scheduling and event-driven triggered scheduling. |

| Open Data+AI development environment | A personal development environment with customizable dependencies and a Notebook for mixed SQL and Python programming. Dataset integration and Git support let you build an open and flexible AI research and development workstation. |

| Intelligent assistance and AI engineering | The built-in Copilot assistant supports code development. PAI algorithm nodes and large language model (LLM) nodes provide native support for end-to-end AI engineering. |

Key concepts

| Concept | Description |

|---|---|

| Workflow | An organizational and orchestration unit for tasks. Manages dependencies and automates scheduling for complex tasks—acts as a container for development and scheduling. |

| Node | The smallest execution unit in a workflow. Where you write code and implement business logic. Supports SQL, Python, Shell, and data integration. |

| Scheduling | A rule for automatically triggering a task. Converts manual tasks into automatically runnable production tasks through recurring or event-triggered scheduling. |

| Data catalog | A unified metadata workbench. Organizes and manages data assets (tables) and computing resources (functions and resources) in a structured way. |

| Dataset | A logical mapping to external storage. Connects to unstructured data such as images and documents stored in OSS/NAS—a key data bridge for AI development. |

| Custom image | A standardized snapshot of an environment. Ensures the development environment is extensible, consistent, and reproducible in production. |

| Notebook | An interactive Data+AI development canvas. Integrates SQL and Python code to accelerate data exploration and algorithm validation. |

Development process guide

Data Studio supports two primary development paths. Choose the path that matches your role and objective.

Which path is right for you?

| Path | Who it's for | What you'll build |

|---|---|---|

| General path | Data engineers, ETL developers | A stable, automatically scheduled enterprise data warehouse for batch processing and report generation |

| Advanced path | AI engineers, data scientists, algorithm engineers | Data exploration pipelines, trained models, or real-time AI applications such as RAG pipelines and inference services |

General path: Data warehouse development for recurring ETL tasks

This path covers building an enterprise data warehouse with stable, automated batch data processing.

- Key technologies: Data catalog, recurring workflow, SQL node, scheduling configuration

| Step | Phase | What to do | Where to go |

|---|---|---|---|

| 1 | Associate a compute engine | Associate one or more compute engines—such as MaxCompute—with the workspace. This serves as the execution environment for all SQL tasks. | Console > Workspace Configuration — See Associate a computing resource |

| 2 | Manage the data catalog | Create or explore the table schemas for each layer of the data warehouse—such as ODS, DWD, and ADS. This defines the input and output for data processing. Use the data modeling module to build your data warehouse system. | Data Studio > Data Catalog — See Data Catalog |

| 3 | Create a scheduled workflow | Create a recurring workflow in the workspace directory to serve as a container for organizing and managing related ETL tasks. | Data Studio > Workspace Directory > Periodic Scheduling — See Orchestrate a recurring workflow |

| 4 | Develop and debug nodes | Create nodes such as ODPS SQL nodes. Write the core ETL logic—data cleaning, transformation, and aggregation—in the node editor, then debug. | Data Studio > Node Development > Node Editor / Debugging Configuration — See Node development |

| 5 | Develop with Copilot | Use DataWorks Copilot to generate, correct, rewrite, and convert SQL and Python code. | Data Studio > Node Development > Copilot or Data Studio > Copilot > Agent — See DataWorks Copilot |

| 6 | Orchestrate and schedule nodes | On the DAG (Directed Acyclic Graph) canvas, drag and connect nodes to define upstream and downstream dependencies. Use flow control nodes for complex orchestration. Configure scheduling properties—cycle, time, and dependencies—for the workflow or individual nodes. Supports large-scale scheduling of tens of millions of tasks per day. | Data Studio > Workflow > Workflow Canvas and Data Studio > Node Development > Scheduling Configuration — See General flow control nodes and Node scheduling configuration |

| 7 | Deploy and O&M | Deploy: Push the debugged node or workflow to the production environment. Operations: In Operation Center, monitor tasks, configure alerts, backfill data, and run recurring validation. Use intelligent baselines to ensure tasks complete on time and alerts to handle abnormal tasks promptly. | Data Studio > Node/Workflow Details > Deploy Node/Workflow and Operation Center > Auto Triggered Node O&M > Auto Triggered Nodes — See Deploy a node or workflow and Basic O&M operations for auto triggered nodes |

For a hands-on example, see Advanced: Analyze best-selling product categories.
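
The upstream and downstream dependencies defined in step 6 determine the order in which nodes can run. The following is a minimal sketch of that idea in plain Python, not the Data Studio scheduler itself. The node names are hypothetical placeholders, and the standard-library `graphlib` module (Python 3.9 or later) stands in for the platform's orchestration logic.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical node names. Each key maps to the set of upstream nodes
# that must finish before that node can start.
dependencies = {
    "ods_orders": set(),
    "dwd_orders_clean": {"ods_orders"},
    "ads_category_sales": {"dwd_orders_clean"},
    "ads_daily_report": {"dwd_orders_clean"},
}


def execution_order(deps):
    """Return one valid run order in which every node follows its upstream nodes."""
    return list(TopologicalSorter(deps).static_order())


def has_cycle(deps):
    """Return True if the dependencies loop back on themselves (not a valid DAG)."""
    try:
        execution_order(deps)
    except CycleError:
        return True
    return False


order = execution_order(dependencies)
print(order)
```

A dependency graph that contains a loop has no valid run order, which is why the canvas is a directed acyclic graph. In this sketch, `has_cycle` reports that case.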

Advanced path: Big data and AI development

This path covers AI model development, data science exploration, and building real-time AI applications. The exact steps may vary based on your use case.

- Key technologies: Personal development environment, Notebook, event-triggered workflow, dataset, custom image

| Step | Phase | What to do | Where to go |

|---|---|---|---|

| 1 | Create a personal development environment | Create an isolated, customizable cloud container instance for installing complex Python dependencies and running professional AI development. | Data Studio > Personal Development Environment — See Personal development environment |

| 2 | Create an event-triggered workflow | Create a workflow driven by external events in the workspace directory. This is the orchestration container for your real-time AI application. | Data Studio > Workspace Directory > Event-triggered Workflow — See Event-triggered workflow |

| 3 | Create and configure a trigger | In Operation Center, configure a trigger to define which external event—such as an OSS event or a Kafka message event—starts the workflow. | Create: Operation Center > Trigger Management. Use: Data Studio > Event-triggered Workflow > Scheduling Configuration — See Manage triggers and Design an event-triggered workflow |

| 4 | Create a Notebook node | Create the core development unit for writing AI and Python code. Start by exploring in a Notebook in your personal folder. | Project Folder > Event-triggered Workflow > Notebook Node — See Create a node |

| 5 | Create and use a dataset | Register unstructured data—such as images and documents—stored in OSS or NAS (Apsara File Storage NAS) as a dataset. Mount it to the development environment or task so your code can access it. | Create: Data Map > Data Catalog > Dataset. Use: Data Studio > Personal Development Environment > Dataset Configuration — See Manage Datasets and Use datasets |

| 6 | Develop and debug | Write algorithm logic, explore data, validate models, and iterate in the interactive personal development environment. | Data Studio > Notebook Editor — See Basic Notebook development |

| 7 | Install custom dependency packages | In the terminal of the personal development environment or in a Notebook cell, use pip to install the third-party Python libraries your model requires. | Data Studio > Personal Development Environment > Terminal — See Appendix: Complete your personal development environment |

| 8 | Create a custom image | Snapshot the personal development environment—with all dependencies installed—into a standardized image. This ensures the production environment matches the development environment. Skip this step if you have not installed custom dependency packages. | Data Studio > Personal Development Environment > Manage Environment and Console > Custom Image — See Create a DataWorks image from a personal development environment |

| 9 | Configure node scheduling | In the production node's scheduling configuration, specify the custom image from the previous step as the runtime environment and mount the required datasets. | Data Studio > Notebook Node > Scheduling — See Node scheduling configuration |

| 10 | Deploy and O&M | Deploy: Push the configured event-triggered workflow to the production environment. O&M: Trigger a real event—such as uploading a file—to verify that the end-to-end flow runs correctly, and perform trigger validation. | Data Studio > Node/Workflow Details > Deploy Node/Workflow and Operation Center > Manually Triggered Node O&M > Manually Triggered Node — See Deploy node and workflow and Run and manage one-time tasks |
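
Before installing dependencies in step 7 or creating a custom image in step 8, it can help to check which libraries the environment still lacks. The following is a minimal sketch that uses only the Python standard library. The module names are placeholders, and the helper functions are illustrative rather than part of Data Studio. The sketch builds the pip command but does not run it.

```python
import importlib.util
import sys


def missing_modules(module_names):
    """Return the top-level modules that cannot be imported in this environment."""
    return [name for name in module_names if importlib.util.find_spec(name) is None]


def pip_install_command(packages):
    """Build the pip command for the current interpreter without executing it."""
    return [sys.executable, "-m", "pip", "install", *packages]


# Placeholder names: "json" ships with Python, the second one is made up.
required = ["json", "hypothetical_model_lib_xyz"]
todo = missing_modules(required)
if todo:
    print("Run in the terminal:", " ".join(pip_install_command(todo)))
```

An import name can differ from the name of the package on the package index, so treat the printed command as a starting point and adjust the package names as needed.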

Core modules

| Module | Capabilities |

|---|---|

| Workflow orchestration | Visual DAG canvas for building and managing task projects with drag-and-drop. Three workflow types: recurring workflows for scheduled batch tasks, event-triggered workflows for real-time pipelines, and manually triggered workflows for on-demand runs. |

| Execution environments and modes | Environments: Default development environment, personal development environment for AI workloads, or custom images for environment standardization. Git integration for version control. Modes: Project folder for team collaboration, personal folder for individual development and testing, or manual folder for temporary tasks. |

| Node development | Compute engines: MaxCompute, EMR, Hologres, Flink, and PAI. Node types: Data integration, SQL, Python, Shell, Notebook, LLM, and AI interactive nodes. See Computing resource management and Node development. |

| Node scheduling | Mechanism: Time-based recurring scheduling (year, month, day, hour, minute, second) plus event-triggered and OpenAPI-triggered scheduling. Dependencies: Same cycle, across cycles, across workflows, and across workspaces. Policies: Effective period, rerun on failure, dry-run, and freeze. Parameters: Workflow, workspace, context, and node parameters. See Node scheduling configuration. |

| Development resource management | Data catalog: Metadata management for data lakehouse assets—create, view, and manage tables. See Data Catalog. Functions and resources: Manage and reference user-defined functions (UDFs) and resource files such as JAR and Python files. See Resource Management. Dataset: Mount and manage datasets from OSS/NAS. See Use datasets. |

| Quality control | Code review: Manual code review before task publication. Flow control: Combine smoke testing, governance item checks, and extensions for automated validation during submission and publication. See Configure check items and Smoke testing. Data Quality: Associate Data Quality monitoring rules to automatically trigger data validation after a task runs. See Configure Data Quality rules. |

| Openness and extensibility | OpenAPI: Comprehensive API interfaces for programmatic management of development tasks. Event messages: Subscribe to data development event messages to integrate with external systems. Custom extensions are also supported. |
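
The "rerun on failure" policy listed under Node scheduling can be pictured as a bounded retry loop. The following is a minimal sketch of that general technique, not the Data Studio implementation. The function name, parameter names, and default values are assumptions chosen for illustration.

```python
import time


def run_with_reruns(task, max_reruns=3, interval_seconds=0.0):
    """Run task(); if it raises, rerun it up to max_reruns more times.

    Returns a (result, attempts) tuple. If every attempt fails,
    the last exception is re-raised.
    """
    attempts = 0
    while True:
        attempts += 1
        try:
            return task(), attempts
        except Exception:
            if attempts > max_reruns:
                raise
            time.sleep(interval_seconds)  # wait before the next rerun


# Hypothetical task that fails twice with a transient error, then succeeds.
state = {"calls": 0}


def flaky_task():
    state["calls"] += 1
    if state["calls"] < 3:
        raise RuntimeError("transient failure")
    return "ok"


print(run_with_reruns(flaky_task))
```

Capping the number of reruns keeps a persistently failing task from looping forever. The final exception still surfaces, so the failure can be reported.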

Billing

DataWorks charges

The following fees appear on your DataWorks bill:

| Fee type | When it applies | Reference |

|---|---|---|

| Resource group fees | Node development and personal development environments require resource groups. Fees depend on the resource group type: Serverless or exclusive. Using a large model service also incurs Serverless resource group fees. | Serverless resource group fees / Exclusive resource group fees |

| Task scheduling fees | Publishing a task to the production environment for scheduled execution. | Task scheduling fees (Serverless) / Exclusive resource group fees (exclusive) |

| Data Quality fees | Configuring quality monitoring for a periodic task. Charged when an instance is successfully triggered. | Data Quality instance fees |

| Smart baseline fees | Configuring a smart baseline for a periodic task. Charged for baselines that are in the enabled state. | Smart baseline instance fees |

| Alert text message and phone call fees | Configuring alert monitoring. Charged when a text message or phone call is successfully triggered. | Alert text message and phone call fees |

These costs are associated with the Data Development, Data Quality, and Operation Center modules.

Other service charges

Running a Data Development node task may incur compute engine and storage fees—such as OSS (Object Storage Service) storage fees. These fees are not charged by DataWorks and do not appear on DataWorks bills.

Get started

Create or enable Data Studio

- New workspace: Select Use Data Studio (New Version) when creating a workspace. See Create a workspace.

- Upgrade from the old version: The old version of DataStudio supports migrating data to the new Data Studio. Click Upgrade to Data Studio at the top of the Data Development page and follow the on-screen instructions. See Data Studio upgrade guide.

Open Data Studio

Go to the Workspaces page in the DataWorks console. In the top navigation bar, select a region. Find the target workspace and choose Shortcuts > Data Studio in the Actions column.

FAQ

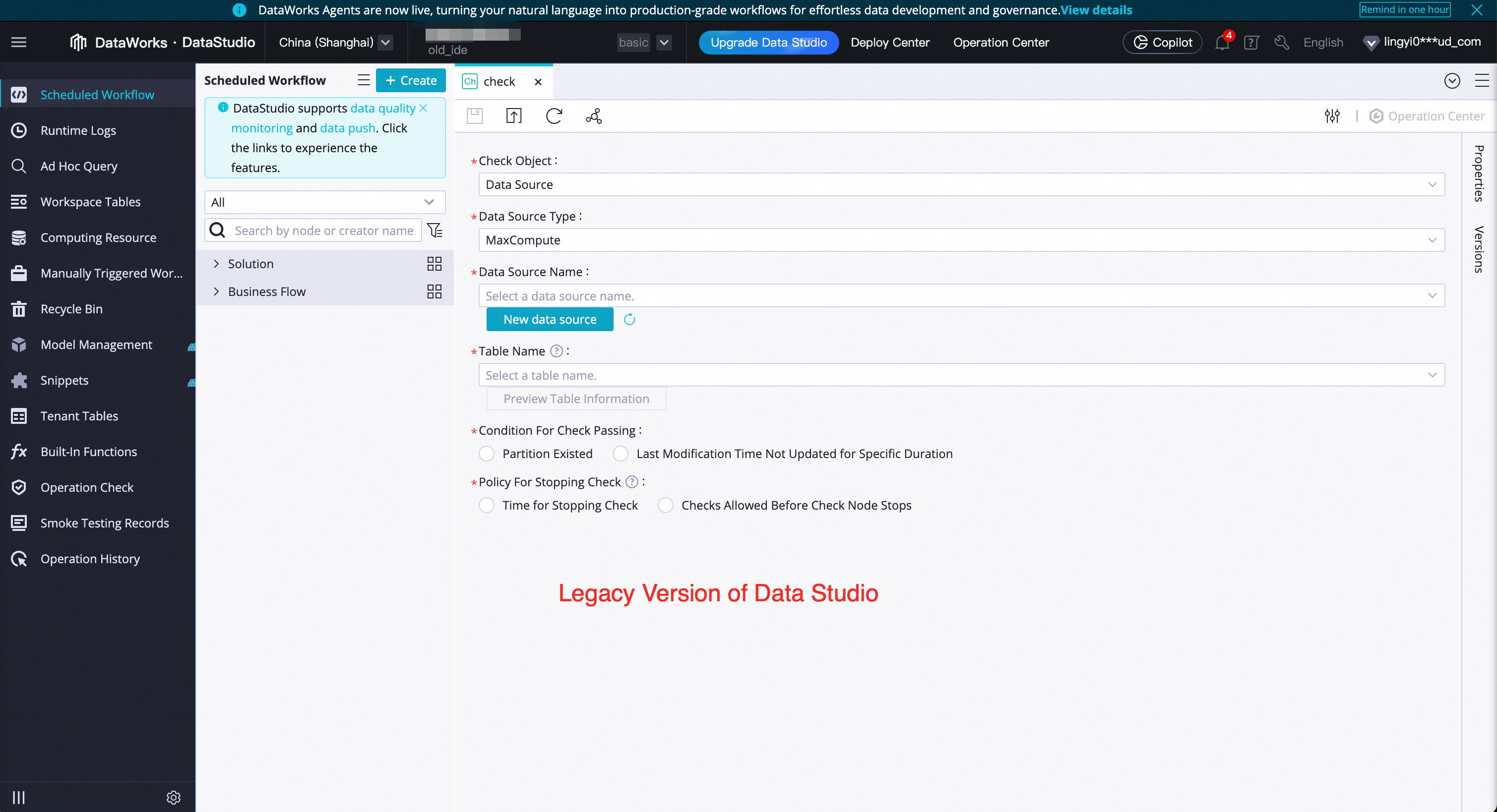

Q: How do I tell whether I'm using the new or old version of Data Studio?

The page styles are completely different. The new version looks like the screenshots in this document. The old version is shown below.

Q: Can I revert to the old version after upgrading?

No. Upgrading from the old version to the new version is irreversible. Before upgrading, create a test workspace with the new Data Studio enabled to verify it meets your needs. Note that data in the new and old versions is independent of each other.

Q: Why don't I see the "Use Data Studio (New Version)" option when creating a workspace?

If the option is not visible, the new Data Studio is already enabled by default for the workspaces you create.

If you encounter issues while using the new Data Studio, join the exclusive DingTalk group for DataWorks Data Studio upgrade support for assistance.