DataWorks Notebook is an interactive, modular environment for data analysis and development. Use Python, SQL, and Markdown cells together to connect to compute engines such as MaxCompute, EMR, and AnalyticDB — covering everything from data processing and exploratory analysis to visualization and model development.

Run your first Notebook

This section walks you through creating a Notebook, passing a variable from Python to SQL, and querying data from a MaxCompute table.

Prerequisites

Before you begin, ensure that you have:

- The new Data Studio enabled in your workspace
- A Serverless resource group, which is required to run Notebooks in the production environment
- A personal development environment instance, which is required to run and debug Notebooks in the development environment. If you haven't created one yet, see Create a personal development environment instance.

Steps

1. Create a Notebook node. In Data Studio, create a Notebook node under Workspace Directories. Enter a name such as `hello_notebook`, then submit it.

2. Select a personal development environment. In the top navigation bar, click Personal Development Environment and select your personal development environment instance from the drop-down list.

3. Define a Python variable. Add a Python cell and enter the following code. It defines the `city` variable that the SQL query in the next step uses.

   ```python
   # Define a variable for the subsequent SQL query
   city = 'Beijing'
   print(f"Defined city variable city = {city}")
   ```

4. Write an SQL cell that references the variable. Below the Python cell, add an SQL cell. In the lower-right corner of the cell, switch the SQL type to MaxCompute SQL, then enter:

   ```sql
   -- Query using the variable defined in Python
   SELECT '${city}' AS city;
   ```

   The `${city}` syntax automatically picks up the value of `city` from the Python cell above.

5. Run all cells and verify the output. Click Run All in the Notebook toolbar. Two things happen:

   - The Python cell prints `Defined city variable city = Beijing`.
   - The SQL cell displays the query result in a table below it.

You've created and run a Notebook that passes data between Python and SQL.

Key concepts

Understanding the following concepts helps you avoid surprises when moving Notebooks from development to production.

Development environment vs. production environment

| | Development environment | Production environment |
|---|---|---|
| Runtime | Personal development environment instance | Resource group and image specified in Scheduling |
| What it's for | Interactive debugging; freely install Python libraries | Recurring scheduled runs and manually triggered workflows from Data Studio |
| Network | A personal development environment instance not attached to a virtual private cloud (VPC) gets a random public IP address with limited bandwidth | Determined by the resource group's VPC; no public network access by default unless a NAT Gateway is configured |
| How to keep them consistent | If you install packages with `pip install`, create a DataWorks image from that environment | Select the custom image in Scheduling so that the same dependencies are available at runtime |

To ensure network consistency between environments, attach the same Serverless resource group to your personal development environment instance.

Compute resources and kernels

- Compute resource: The backend compute engine connected to the Notebook, for example MaxCompute or EMR Serverless Spark. An SQL cell must be bound to a compute resource to run.
- Python kernel: The backend environment that executes Python code, typically your personal development environment instance. Use Magic Commands such as `%odps` in Python cells to connect to compute resources, submit tasks, or manipulate data.

Folder types

Where you create a Notebook determines its collaboration model, permissions, and deployment path.

| Folder type | Best for | Collaboration and deployment |
|---|---|---|
| Workspace Directories | Team collaboration and scheduled tasks | Shared across the workspace; must be deployed to the production environment to run on a schedule |
| Personal Directory | Personal development and debugging | Visible only to you; to schedule a node, first commit it to a Workspace Directory, then deploy it |

Develop and debug a Notebook

Data Studio does not save your code automatically. Save frequently during development, or enable auto-save by navigating to Settings > Files: Auto Save.

If the Notebook becomes unresponsive, click Restart in the toolbar to restart the kernel.

Manage cells

| Action | How |
|---|---|
| Add a cell | Hover over the top or bottom edge of a cell and click a button such as + SQL, or use the toolbar buttons |
| Switch cell type | Click the type label in the lower-right corner of the cell (for example, Python) and select a new type. The existing code is retained; update it manually to fit the new type |
| Reorder cells | Hover over the blue vertical line on the left of a cell, then drag the cell to a new position |

Run cells

Choose the right run option based on what you're doing:

- Run a single cell: Click Run on that cell. Use this when iterating on a specific cell during active development.
- Run all cells: Click Run All in the Notebook toolbar. Use this before sharing or scheduling the Notebook to confirm that all cells run correctly end to end.

Pass parameters between cells

Python to SQL

Variables defined in a Python cell are available in subsequent SQL cells through the `${variable_name}` syntax.
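The examples in this topic suggest that a `${variable_name}` reference behaves like a plain text substitution. A string value is wrapped in quotes in the SQL (`'${city}'`), while a table name is used without quotes. The sketch below mimics that behavior with Python's standard `string.Template` class, which happens to use the same `${name}` placeholder syntax. It is an illustration under the text-substitution assumption, not how DataWorks implements the feature, and `render_sql` is a hypothetical helper.

```python
from string import Template


def render_sql(sql: str, variables: dict) -> str:
    """Replace ${name} placeholders with the string form of each variable.

    Hypothetical helper: models the Python-to-SQL handoff as plain text
    substitution. Raises KeyError if a placeholder has no matching variable.
    """
    return Template(sql).substitute(variables)


# Variables as they might be defined in a preceding Python cell
python_vars = {"city": "Beijing", "table_name": "dwd_user_info_d", "limit_num": 10}

print(render_sql("SELECT '${city}' AS city;", python_vars))
# SELECT 'Beijing' AS city;
print(render_sql("SELECT * FROM ${table_name} LIMIT ${limit_num};", python_vars))
# SELECT * FROM dwd_user_info_d LIMIT 10;
```

Under this model, the quotes around `'${city}'` are what turn the substituted text into a string literal. Substitute only values you control, because the text is inserted into the statement as-is.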

Example:

# Python cell

table_name = "dwd_user_info_d"

limit_num = 10-- SQL cell

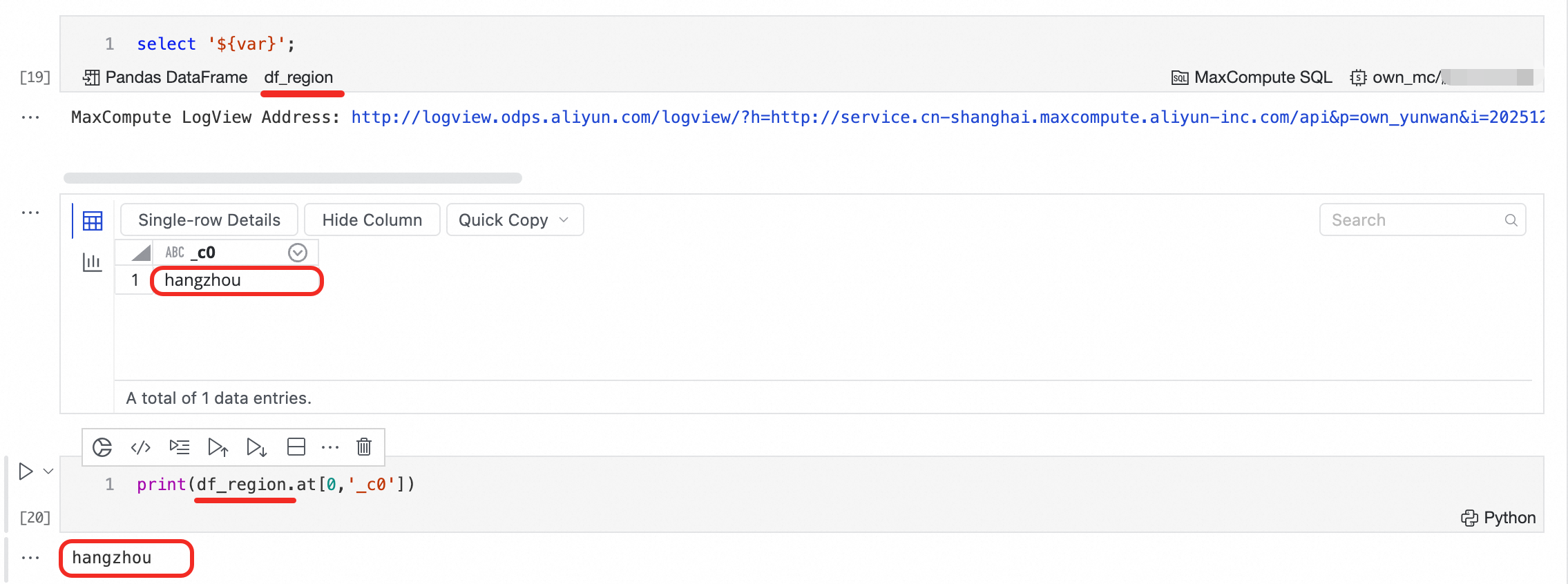

SELECT * FROM ${table_name} LIMIT ${limit_num};SQL to Python

When an SQL cell executes a SELECT query, the result is automatically saved as a DataFrame variable that you can access in any subsequent Python cell.

- Variable name: Starts with `df_` by default. Click the name in the lower-left corner of the SQL cell to rename it.
- Variable type: Depends on the SQL engine. If the engine supports multiple types, click the DataFrame label in the lower-left corner to switch between them.

| SQL engine | Supported types |
|---|---|
| MaxCompute SQL | Pandas DataFrame, MaxCompute MaxFrame |
| AnalyticDB for Spark SQL | Pandas DataFrame, PySpark DataFrame |
| Other SQL types | Pandas DataFrame |

If a cell contains multiple SQL statements, only the result of the last statement is saved to the DataFrame variable.
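The "last statement wins" rule can be illustrated with a local stand-in. The sketch below uses Python's built-in `sqlite3` module in place of a real compute engine and a list of row dictionaries in place of a DataFrame. `run_sql_cell` and the `users` table are hypothetical and only model the documented behavior. The helper splits statements naively on `;`, which is enough here but would break on semicolons inside string literals.

```python
import sqlite3


def run_sql_cell(conn: sqlite3.Connection, cell_text: str):
    """Run every statement in a cell and return only the last statement's result.

    Hypothetical model of the documented rule: rows are returned as a list of
    dicts (standing in for a DataFrame); a last statement that returns no rows
    (such as CREATE or INSERT) yields None.
    """
    statements = [s.strip() for s in cell_text.split(";") if s.strip()]
    result = None
    for statement in statements:
        cursor = conn.execute(statement)
        if cursor.description is None:
            result = None  # statement produced no result set
        else:
            columns = [col[0] for col in cursor.description]
            result = [dict(zip(columns, row)) for row in cursor.fetchall()]
    return result


conn = sqlite3.connect(":memory:")
df_result = run_sql_cell(conn, """
    CREATE TABLE users (id INTEGER, city TEXT);
    INSERT INTO users VALUES (1, 'Beijing'), (2, 'Hangzhou');
    SELECT COUNT(*) AS n FROM users;
    SELECT id, city FROM users WHERE city = 'Beijing';
""")
print(df_result)  # only the last SELECT is kept: [{'id': 1, 'city': 'Beijing'}]
```

In practice, if you need the results of several queries in Python, put each query in its own SQL cell so that each one gets its own DataFrame variable.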


Use DataWorks Copilot

DataWorks Copilot is a built-in AI programming assistant that generates and explains code. To open it:

- Click the Copilot icon in the upper-left corner of the selected cell.
- Right-click inside an SQL cell and select Copilot.
- Press `Cmd+I` (Mac) or `Ctrl+I` (Windows).

Schedule and deploy a Notebook

To run a Notebook on a recurring schedule, configure its scheduling settings and deploy it to the production environment.

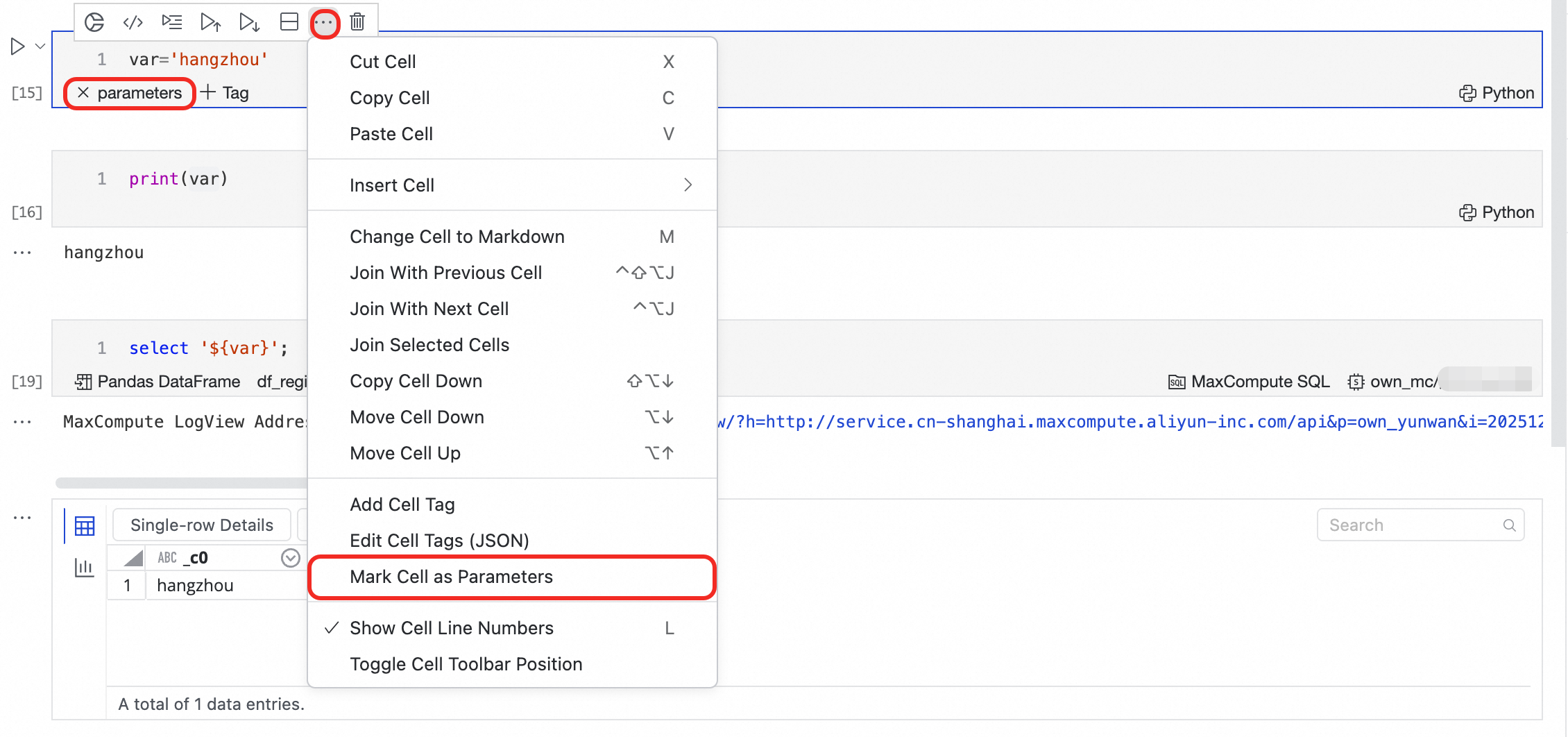

1. Set up parameterized scheduling

Parameterized scheduling lets the Notebook receive different values on each run — useful for processing data from different daily partitions without changing the code.

When to use this: Configure parameterized scheduling when your Notebook needs to operate on different data slices per run, such as reading yesterday's partition each day.

-

In the Python cell that contains your parameter definitions, click ... in the upper-right corner of the cell and select Mark Cell as Parameters. A

parameterstag appears, marking it as the parameter entry point.

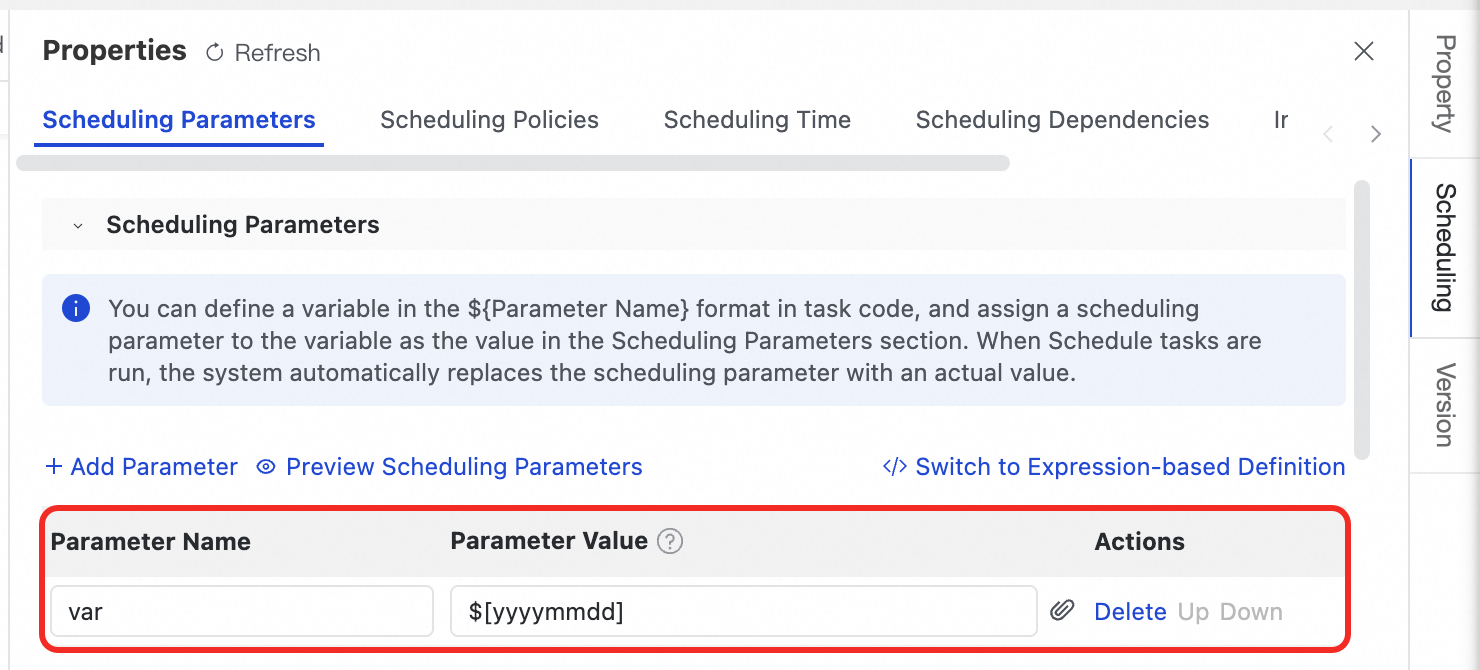

-

In the right pane, click Scheduling, then expand Scheduling Parameters. Assign values to the variables (such as

var) defined in your code.

At runtime, the scheduling system replaces the variable in your code with the configured value.
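One way to lay out a parameters cell for the daily-partition case is sketched below. It assumes a literal default value in the marked cell so that interactive runs still work, with the scheduled value replacing it as described above. The `yyyymmdd` format, the default date, the `ds` partition column, and the `partition_filter` helper are all hypothetical. Validating the parameter before it reaches SQL makes a misconfigured value fail fast.

```python
from datetime import datetime

# --- Parameters cell (marked with "Mark Cell as Parameters") ---
# A literal default keeps interactive runs working; on a scheduled run the
# value configured in Scheduling Parameters is used instead.
var = "20240101"  # hypothetical default in yyyymmdd form


# --- A later cell that consumes the parameter ---
def partition_filter(ds_value: str) -> str:
    """Return a predicate for a hypothetical `ds` partition column.

    strptime raises ValueError if the value cannot be parsed as a yyyymmdd
    date; re-formatting the parsed date means only a normalized date string
    reaches the SQL text.
    """
    parsed = datetime.strptime(ds_value, "%Y%m%d")
    return f"ds = '{parsed:%Y%m%d}'"


print(partition_filter(var))  # ds = '20240101'
```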

2. Configure the runtime environment

- Select an image: In Scheduling, select an image that includes all Python dependencies the Notebook needs. If you installed packages in your personal development environment instance with `pip install`, create a DataWorks image from that environment and select it here.
- Select a resource group: Choose the resource group that executes the task. For a Serverless resource group, configure no more than 16 CU to avoid startup failures. A single task supports a maximum of 64 CU.
- Associate a RAM role (optional): To apply fine-grained permission control, associate a Resource Access Management (RAM) role with the node so that the node runs with that role's identity. For details, see Configure an associated role for a node.

3. Deploy the node

Only nodes in Workspace Directories can be deployed and scheduled periodically.

- Notebook in a Workspace Directory: Click Deploy in the toolbar.
- Notebook in a Personal Directory: Click Save, click Commit to Workspace Directory, and then deploy the node.

After deployment, monitor and manage the Notebook task on the Auto Triggered Nodes page in Operation Center.

FAQ

Why does my code access the public network during development but fail during a scheduled run?

The network policies differ between environments. During development, a personal development environment instance that is not attached to a VPC has limited public network access by default, which is enough for installing packages or calling external APIs. In the production environment, tasks run inside the resource group's VPC, which has no public network access unless a NAT Gateway is configured for it. To make development behave like production, set up the personal development environment instance and the Serverless resource group in the same VPC. If the task needs public network access, configure a NAT Gateway for that VPC.
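To find out early whether your code will have network access, you can probe the endpoint it needs from a Python cell and run the same cell in both environments. The sketch below uses only the standard `socket` module. The helper name `can_reach` is hypothetical, and a failed connection can also mean that the endpoint itself is down, so treat the result as a hint rather than a diagnosis.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts
        # (socket.timeout is a subclass of OSError).
        return False
```

For example, a cell that calls `can_reach("pypi.org", 443)` returning `True` in development but `False` on a scheduled run points to the VPC's lack of public network access. Replace the host and port with the endpoint your Notebook actually calls.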

Why does my scheduled run fail to find third-party packages that work fine in development?

The production environment uses the image you select in Scheduling, not your local development instance. Package all Python dependencies into a custom image and specify it in Scheduling. See Create a DataWorks image from a personal development environment.
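A quick way to surface a missing package before the real work starts is to add a first Python cell that checks each dependency and fails with a clear message. The sketch below uses the standard `importlib.util.find_spec`. The helper names are hypothetical, and the module list in the example call is a placeholder that uses standard-library names so the sketch runs anywhere. If the check passes in development but fails on a scheduled run, the image selected in Scheduling is missing those packages.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def require_modules(names):
    """Raise ModuleNotFoundError listing every module that cannot be found."""
    missing = missing_modules(names)
    if missing:
        # Failing here gives a clearer error than an ImportError deep in the Notebook.
        raise ModuleNotFoundError("Missing in this environment: " + ", ".join(missing))


# Placeholder list: replace with the modules your Notebook imports (for example, pandas).
require_modules(["json", "sqlite3"])
print("All required modules are available.")
```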

How do I change the Python kernel version?

Install the required Python version from the terminal of your personal development environment. Then click the icon on the right side of the Notebook toolbar to switch to that kernel version. Avoid installing multiple additional Python kernels. A new version may lack the dependencies that SQL cells require, which can cause them to stop working.
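After switching kernels, you can confirm which interpreter a cell actually runs on. The sketch below only reads standard `sys` attributes, so it works on any kernel. The minimum version it checks and the `kernel_info` helper name are hypothetical examples.

```python
import sys

# Hypothetical minimum version your code needs; adjust as required.
MIN_VERSION = (3, 8)


def kernel_info() -> str:
    """Describe the interpreter that executes this cell."""
    major, minor, micro = sys.version_info[:3]
    return f"Python {major}.{minor}.{micro} at {sys.executable}"


print(kernel_info())
if sys.version_info[:2] < MIN_VERSION:
    raise RuntimeError(f"This Notebook needs Python {MIN_VERSION[0]}.{MIN_VERSION[1]} or later")
```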