By Zhang Zuowei (Youyi)

In the technical narrative of cloud-native, "elasticity" has always been a core keyword. From microservices to Serverless, the evolution of infrastructure has consistently aimed to make resources more flexible and loosely coupled. However, with the explosion of the AI Large Model era, the form of computing tasks has shown a seemingly "anomalous" regression—distributed jobs have re-exhibited a "rigid" dependence on resources in pursuit of extreme training efficiency.

This "rigid" demand has clashed violently with the Kubernetes native philosophy of "elastic, incremental" scheduling. As a result, Gang Scheduling, a key feature from the early HPC era, has once again become a must-have for connecting cloud-native foundations with AI business.

This article uses the development of Gang Scheduling as a thread to analyze the tension between "rigidity" and "elasticity" in AI computing resources, explain its engineering implementation in Kubernetes, and look ahead to future trends in resource orchestration.

Cloud-native infrastructure grew up serving massive volumes of stateless requests, but modern AI training tasks (especially Transformer-based Large Model training) exhibit extremely strong synergy among Workers. This application-layer "rigid" demand stems from strict constraints in the underlying communication patterns and physical topology, and it imposes much harsher requirements on resource scheduling.

The demand for Gang Scheduling originates from two core characteristics of AI distributed training tasks:

• Tight Communication Topology & Synchronization Barriers

Distributed training relies on frequent parameter synchronization. In the typical All-Reduce collective communication pattern, for example, every Worker taking part in the computation must join gradient aggregation and parameter updating at the end of each iteration.

Logically, this constitutes a strict "synchronization barrier." Under the traditional BSP (Bulk Synchronous Parallel) model, if even a single Worker is missing (even because of a temporary resource shortage), the communication topology is incomplete. All Workers that have already launched are then forced into a blocking wait and cannot begin training, leaving expensive GPU resources idle.
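The barrier behavior described here can be illustrated with a small thread-based simulation. This is only a sketch: `run_iteration` is a hypothetical helper, threads stand in for Workers, and `threading.Barrier` stands in for the All-Reduce step. A timeout is used so the demo terminates, whereas real Workers would simply keep waiting.

```python
import threading

def run_iteration(world_size, launched, timeout=0.5):
    """Simulate one BSP iteration.

    The barrier is sized for the full world; only `launched` Workers show up.
    Returns how many Workers got past the barrier.
    """
    barrier = threading.Barrier(world_size)
    passed = []
    lock = threading.Lock()

    def worker(rank):
        try:
            barrier.wait(timeout=timeout)  # stand-in for the All-Reduce step
            with lock:
                passed.append(rank)
        except threading.BrokenBarrierError:
            pass  # the barrier never filled, so this Worker made no progress

    threads = [threading.Thread(target=worker, args=(r,)) for r in range(launched)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return len(passed)

full = run_iteration(world_size=4, launched=4)   # every Worker is present
short = run_iteration(world_size=4, launched=3)  # one Worker is missing
print(full, short)
```

With all four Workers present, everyone passes the barrier. With one missing, the three launched Workers hold their resources and none of them makes progress.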

• Static and Strict Resource Binding

To pursue extreme communication performance, mainstream AI training frameworks are usually built on communication libraries like NCCL, requiring a strict and static binding relationship between Workers and underlying resource instances.

This is reflected during the startup phase, where training tasks allocate fixed Rank IDs (for example, Rank 0 to Rank N) based on the number of participating nodes (World Size) and build a static communication topology based on specific IP addresses. This implies the task has the following constraints:

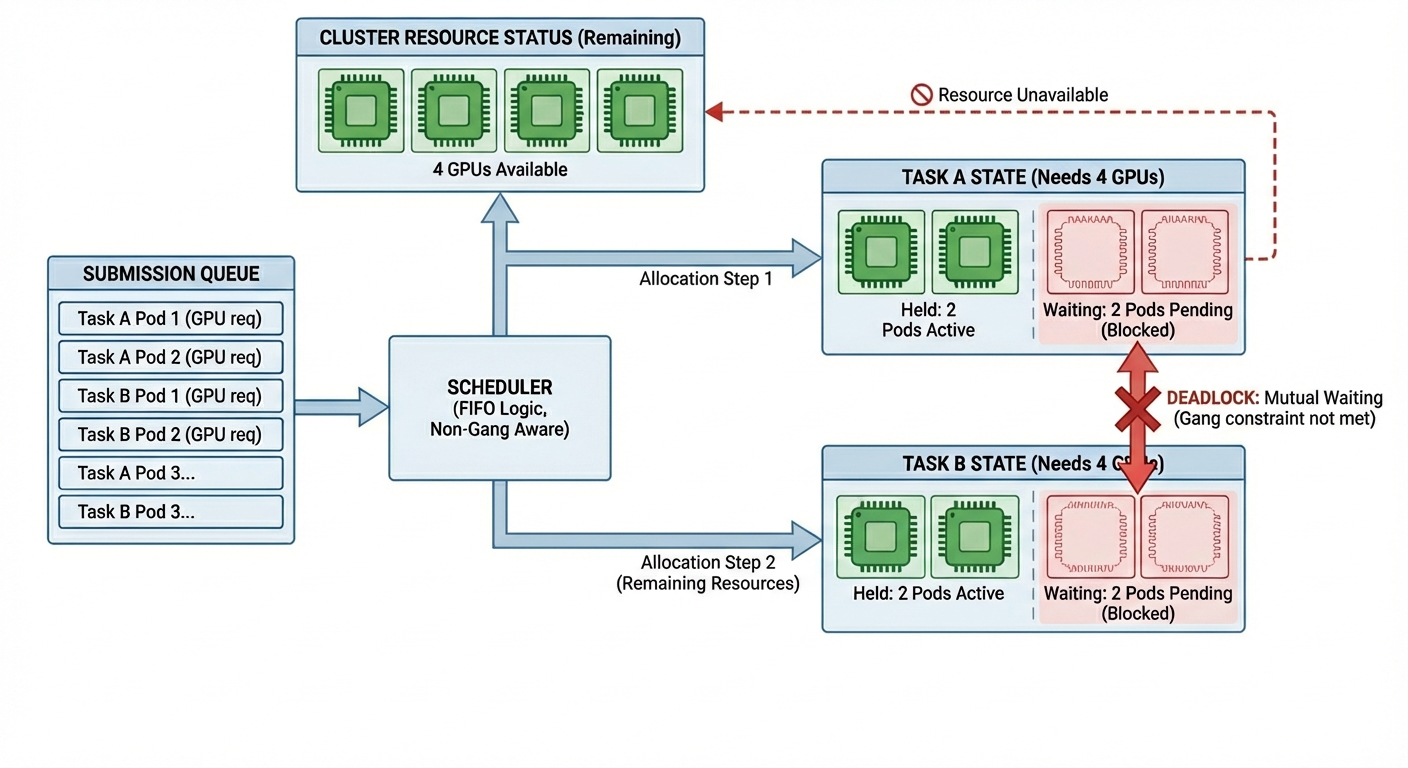

Based on the above characteristics, if the scheduler follows the default Kubernetes strategy of scheduling individual Pods one by one in queue order, resource deadlocks are easily triggered.

A typical deadlock scenario is as follows:

Assume the cluster has only enough free resources to run one 4-GPU task (for example, 4 Pods). Task A and Task B each need 4 GPUs, and they submit their Pods at nearly the same time.

• The scheduler, following queue order, first allocates and starts 2 Pods for Task A (partial allocation);

• Subsequently, the scheduler allocates the remaining resources to Task B, starting its first 2 Pods.

Result: Task A cannot establish a communication ring because it is waiting for the remaining 2 Pods, and the same applies to Task B. Both parties hold partial resources and refuse to yield, preventing both tasks from starting and causing resource idleness.
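The scenario above can be reproduced with a toy per-Pod scheduler. This is a minimal sketch, not the Kubernetes scheduler: `schedule_per_pod` is a hypothetical function, each Pod is assumed to need one GPU, and the interleaved queue order is an assumption chosen to match the example.

```python
from collections import deque

def schedule_per_pod(capacity, queue, job_size):
    """Place Pods one at a time in queue order, with no notion of jobs."""
    running = {}
    pending = deque(queue)
    while pending and capacity > 0:
        job = pending.popleft()
        running[job] = running.get(job, 0) + 1
        capacity -= 1
    # A job can start training only when all of its Pods are running.
    started = [j for j, n in running.items() if n == job_size[j]]
    return running, started

job_size = {"A": 4, "B": 4}
# Assumption: Pods of the two jobs reach the queue interleaved.
queue = ["A", "A", "B", "B", "A", "A", "B", "B"]
running, started = schedule_per_pod(capacity=4, queue=queue, job_size=job_size)
print(running, started)  # {'A': 2, 'B': 2} []
```

All four GPUs are occupied, yet the list of started jobs is empty: each job holds half of what it needs and waits for the other half.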

To address the above issues, Gang Scheduling introduces All-or-Nothing resource allocation semantics. It views all associated tasks (Pods) in a job as an indivisible atomic whole (Gang). The scheduler follows these core principles when making decisions:

• Atomicity Check: Execute the scheduling action only when the current free resources in the cluster are sufficient to meet the needs of the entire Gang (for example, all Workers).

• Holistic Allocation: Once conditions are met, resources are allocated to all Workers atomically.

• Unified Queuing: If resources are insufficient, all Pods in the entire Gang must remain in the queue, avoiding the intermediate state of "partial startup."

Through this mechanism, Gang Scheduling eliminates the risk of deadlock caused by partial allocation, ensuring that computing resources can be delivered effectively.
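The three principles can be sketched as a simple admission loop. This is an illustrative simplification under stated assumptions: `schedule_gangs` is a hypothetical function, resources are a single counter, and one Pod consumes one unit.

```python
def schedule_gangs(capacity, jobs):
    """All-or-nothing admission: a job is placed only if every Pod fits.

    jobs: list of (name, pod_count) in queue order.
    Returns (admitted, waiting, free_capacity).
    """
    admitted, waiting = [], []
    for name, pods in jobs:
        if pods <= capacity:      # atomicity check against free resources
            capacity -= pods      # holistic allocation for the whole gang
            admitted.append(name)
        else:
            waiting.append(name)  # unified queuing: no Pod of this gang starts
    return admitted, waiting, capacity

admitted, waiting, free = schedule_gangs(4, [("A", 4), ("B", 4)])
print(admitted, waiting, free)  # ['A'] ['B'] 0
```

Applied to the earlier deadlock example, Task A receives all four slots and starts, while Task B stays in the queue without holding anything. Note that this loop lets a smaller gang later in the queue jump ahead of a larger one that does not fit; whether that is desirable is a policy choice outside this sketch.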

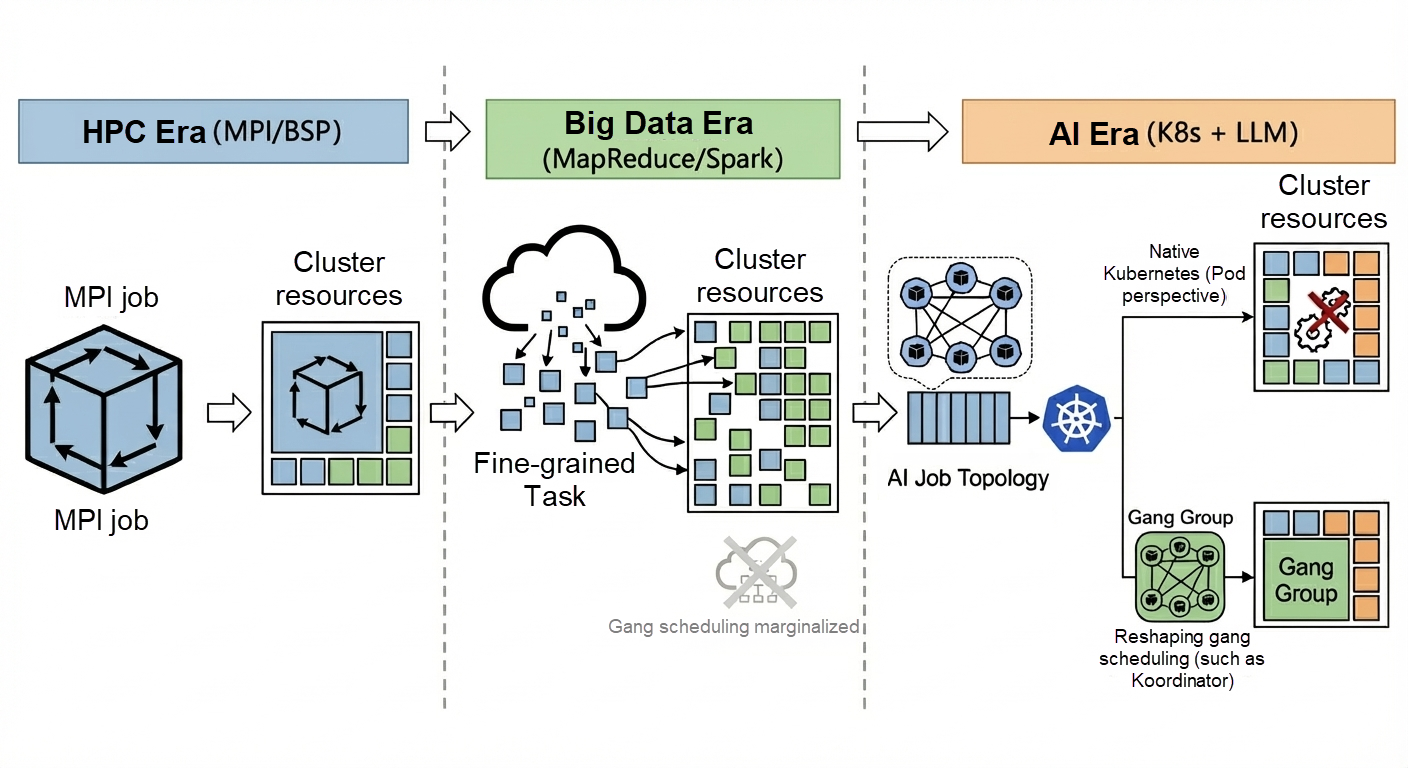

The development of resource scheduling technology is not linear; it presents a characteristic of "spiral ascent" with the evolution of computing paradigms. The concept of Gang Scheduling was first proposed and implemented in the High-Performance Computing (HPC) era, faded into the background during the Big Data era due to the architectural shift toward "loose coupling," and has now regained attention with the arrival of the AI era.

The period from the 1990s to around 2010 was a critical time for the development of HPC. This stage established the basic paradigm of distributed parallel computing and laid the theoretical prototype for later AI training.

• Application Characteristics: MPI Standards and the Definition of "Rigid" Jobs

• Scheduling Implementation: Atomic Delivery Based on Resource Reservation

To adapt to these rigid tasks, HPC schedulers (such as Slurm, LSF, and PBS) built the following core scheduling mechanisms:

These scheduling mechanisms helped the HPC ecosystem build a mature resource management system, effectively balancing the relationship between "job atomicity" and "cluster utilization."

With the publication of Google's GFS and MapReduce papers, the industry entered the Big Data era after 2008. The infrastructure for computing shifted from expensive dedicated supercomputing clusters to general-purpose hardware clusters based on x86, and the computing paradigm underwent a drastic turn.

• Application Characteristics and Resource Needs:

• Evolution of Scheduling Systems:

Advancing to the AI Large Model era, although the computing paradigm has returned to the tightly coupled characteristics of HPC at the algorithmic level (relying on All-Reduce synchronization), the underlying infrastructure it runs on has become Kubernetes. This leads to a misalignment in resource scheduling scenarios.

• Kubernetes Native Design: Orchestration for Stateless Services

The original intention of Kubernetes' architecture was to solve the problem of scaling microservices. Its core workloads (like Deployment) are oriented toward homogeneous and decoupled online service replicas.

• Infrastructure Misalignment:

However, this design logic oriented toward microservices reveals core contradictions when facing AI training tasks, mainly reflected in the following two aspects:

The Kubernetes native scheduler is Pod-centric. It maintains a flat Pod queue, and scheduling decisions are limited to "which node should this current Pod go to."

For AI training, users submit a complete Job. The scheduler lacks a "global job perspective" and cannot perceive that multiple scattered Pods in the queue actually belong to the same logical entity. This leads the scheduler to blindly perform local optimizations on Pods when handling AI tasks.

AI training tasks require atomic delivery of resources. That is, all computing resources required by a job must be ready at the same time. Kubernetes' default incremental allocation mechanism destroys this atomicity. When multiple AI jobs are submitted simultaneously, the scheduler mechanically follows the queue order, alternately allocating resources to different jobs. This "pipeline" style allocation easily leads to multiple jobs holding partial resources but none being able to assemble a complete topology, ultimately resulting in resource fragmentation or even deadlock.

Therefore, the current resource demand of AI tasks in the Kubernetes ecosystem is actually about rediscovering the "rigid" resource capabilities of the HPC era on top of cloud-native foundations. This is also the fundamental reason why scheduling systems like Koordinator are dedicated to introducing Gang Scheduling into the Kubernetes ecosystem.

As Kubernetes gradually becomes the new generation unified computing power foundation, Gang Scheduling, as a core demand of AI/HPC scenarios, has undergone a long evolution in its technical implementation from non-existence to "plugin-based" and finally to "standardization."

For a long time, support for Gang Scheduling in the Kubernetes native scheduler was absent. This was not an oversight in design, but an architectural trade-off based on its initial positioning.

The primary target scenario at the inception of the Kubernetes scheduler was the high-throughput processing of independent, stateless Pods. Introducing Gang Scheduling into the scheduling process would mean managing Pod collections at the scheduler level and forcibly introducing Wait logic (waiting for a group of Pods to complete scheduling). This would not only significantly increase the code complexity of the critical scheduling path but also easily slow down the entire cluster's scheduling rate due to handling dependencies of a single group, or even trigger Head-of-Line Blocking problems.

At that time, the mainstream workloads on Kubernetes were microservices, which had no need for Gang Scheduling. This mismatch between application scenarios and design philosophy led the community to remain extremely cautious and conservative about merging Gang logic into the core code (In-tree).

To solve Gang Scheduling needs in scenarios like AI training, the community has produced various solutions. Although these solutions have different architectures, they usually introduce Custom Resources (CRDs) to describe the concept of a "Pod Group" to achieve coordinated scheduling of a group of Pods.

• Independent Scheduler Mode:

In the independent scheduler mode, a completely separate Batch Scheduler (such as Volcano) runs in place of the Kubernetes native scheduler. Such schedulers usually define their own CRDs to identify jobs or Pod groups and implement Gang Scheduling on top of them.

• Scheduling Framework Plugin Mode:

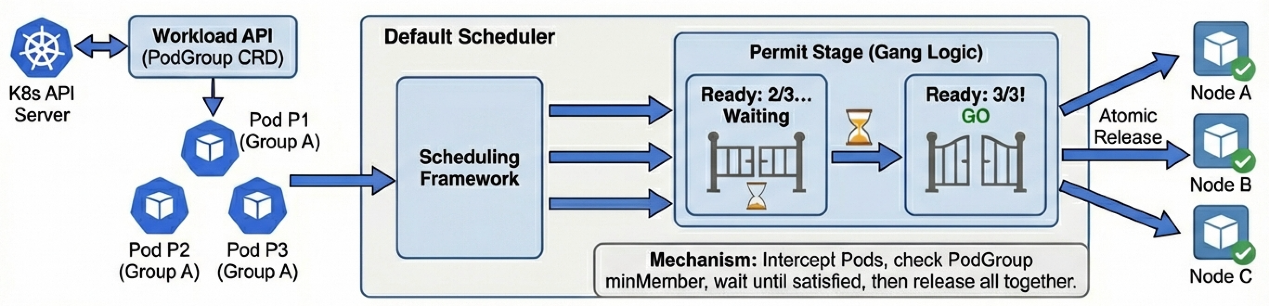

After Kubernetes 1.15 introduced the Scheduling Framework, quite a few projects chose to implement Gang Scheduling as extension plugins of the native scheduler. Both the Coscheduling plugin from Kubernetes SIGs and the Koordinator project fall into this category. They declare job information based on the officially defined PodGroup CRD (or similar) and use the native scheduler's extension points to intervene: Pods are released together only once enough members of the group have been gathered; otherwise, they are kept in a waiting state until the next scheduling cycle.

As more AI training and high-performance computing tasks run on Kubernetes, the community has begun to standardize Gang Scheduling capabilities. In the planning for v1.35 and subsequent versions, Kubernetes plans to support Gang Scheduling at the core scheduler level by introducing a native Workload API to meet the growing demand for AI computing.

• Technical Implementation: PodGroup and Permit Mechanism

From the perspective of technical implementation details, the native Gang Scheduling mechanism planned by the official community has a core logic consistent with Koordinator and current mainstream plugin implementations: It aggregates related Pods by introducing the concept of a "Group" (similar to PodGroup), intercepts Pods belonging to the same group during stages like Permit, checks whether the Pods that have obtained scheduling opportunities satisfy the minimum member count (minMember), then uniformly releases them once gathered. This is currently the industry's general solution to such problems.
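The gather-then-release logic at the Permit stage can be modeled in a few lines. This is a conceptual sketch only: the real extension points are Go interfaces in the Scheduling Framework, and `PermitGate`, `permit`, and `reject` are hypothetical names that do not correspond to any actual plugin API.

```python
class PermitGate:
    """Holds Pods that have found a node until their group reaches min_member."""

    def __init__(self, min_member):
        self.min_member = min_member  # group name -> required Pod count
        self.waiting = {}             # group name -> Pods held at the gate

    def permit(self, group, pod):
        """Called once a Pod has a candidate node. Returns the Pods released."""
        held = self.waiting.setdefault(group, [])
        held.append(pod)
        if len(held) < self.min_member[group]:
            return []                 # keep waiting; nothing is bound yet
        self.waiting[group] = []
        return held                   # the whole gang is released together

    def reject(self, group):
        """Hypothetical give-up path: drop held Pods so they can be retried."""
        return self.waiting.pop(group, [])

gate = PermitGate({"train-job": 3})
r1 = gate.permit("train-job", "worker-0")
r2 = gate.permit("train-job", "worker-1")
r3 = gate.permit("train-job", "worker-2")
print(r1, r2, r3)  # [] [] ['worker-0', 'worker-1', 'worker-2']
```

The first two Pods are held at the gate, and the arrival of the third satisfies `minMember` and releases all three at once.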

• Evolution Planning: From Workload API to Elevated Scheduler Perspective

PodGroup), which can end the current situation where different scheduling plugins (like Volcano, Koordinator, Co-scheduling) maintain independent Gang Scheduling implementations.

The evolution of scheduling systems is not limited to the optimization of scheduling algorithms themselves but is closely related to the core of computing architecture. Finally, we will step out of the pure engineering implementation perspective and analyze the "rigidity" and "elasticity" of future AI computing orchestration from the underlying logic.

To understand the cause of resource "rigidity," we need to examine the technological evolution of current AI infrastructure. To squeeze hardware performance to the extreme on existing physical clusters, the industry has derived a series of representative optimization technologies:

• In-Network Computing: Represented by NVIDIA SHARP, it offloads the All-Reduce calculation process directly to the switch's ASIC chips, eliminating host calculation overhead and significantly reducing global synchronization communication latency.

• 3D Parallelism: Through the hybrid use of Data Parallelism, Tensor Parallelism, and Pipeline Parallelism, super-large models are physically sliced and orchestrated in multiple dimensions, aiming to maximize the masking of computation and communication gaps through precise pipeline collaboration.

• Zero Redundancy Optimizer: By partitioning model states across the GPU memory of all Workers and reconstructing the data on the fly through high-frequency collective communication during computation, it trades additional communication bandwidth for scarce GPU memory, breaking through the capacity limit of a single card.

Although these technologies use different optimization methods, their operational basis is still the BSP pattern. They do not attempt to decouple the dependencies between Workers; instead, based on the premise of "global synergy," they dedicate themselves to improving the pipeline efficiency of computation and communication.
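To see why such schemes keep the job rigid, consider how 3D Parallelism fixes the number of Workers. The sketch below maps a global rank to its coordinates in the three parallel dimensions. The ordering (tensor-parallel index varying fastest) and the sizes are assumptions made for illustration; real frameworks differ in how they lay out ranks.

```python
def rank_to_coords(rank, tp, pp, dp):
    """Map a global rank to (dp, pp, tp) coordinates, tensor-parallel fastest."""
    assert 0 <= rank < tp * pp * dp
    tp_idx = rank % tp
    pp_idx = (rank // tp) % pp
    dp_idx = rank // (tp * pp)
    return dp_idx, pp_idx, tp_idx

tp, pp, dp = 4, 2, 2        # illustrative sizes, not taken from the article
world_size = tp * pp * dp   # every one of these ranks must be present
coords = [rank_to_coords(r, tp, pp, dp) for r in range(world_size)]
print(world_size, coords[0], coords[5], coords[15])
# 16 (0, 0, 0) (0, 1, 1) (1, 1, 3)
```

Each coordinate is occupied by exactly one rank, so the job needs all `tp * pp * dp` Workers at once, which is the Gang size the scheduler has to satisfy.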

This raises the question: why can't we break this rigid constraint and use a more flexible asynchronous training mode? After all, in the early days of deep learning, asynchronous architectures based on Parameter Servers (PS) decoupled the dependencies between Workers.

However, in the era of large models with hundreds of billions of parameters, this attempt hit the "hard wall" of algorithmic convergence. Asynchronous updates inevitably introduce the gradient staleness problem, where some Workers use old versions of parameters to calculate gradients. This mathematical noise is disastrous for LLM training, which is extremely sensitive to precision, causing the model to fail to converge. Therefore, to ensure training results, algorithm scientists must constrain the training mode within a "strongly consistent" synchronous mode. This strict constraint at the mathematical level of algorithms is eventually transmitted layer by layer to the infrastructure layer, translating into an absolute dependence on Gang Scheduling.
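The effect of gradient staleness can be seen even in a one-dimensional toy problem. The sketch below is not a model of LLM training: it runs plain gradient descent on f(x) = x², with a hypothetical `descend` helper whose `delay` parameter controls how old the parameters used for the gradient are. It only shows that a learning rate that converges with fresh gradients can diverge with stale ones.

```python
def descend(lr, delay, steps=60, x0=1.0):
    """Gradient descent on f(x) = x**2 with gradients that are `delay` steps old."""
    history = [x0] * (delay + 1)
    for _ in range(steps):
        stale_x = history[-1 - delay]  # parameters the Worker actually saw
        history.append(history[-1] - lr * 2 * stale_x)
    return history

sync = descend(lr=0.6, delay=0)   # fresh gradients
stale = descend(lr=0.6, delay=1)  # gradients from one-step-old parameters

# Compare the largest magnitude over the last few steps of each run.
sync_tail = max(abs(x) for x in sync[-5:])
stale_tail = max(abs(x) for x in stale[-5:])
print(sync_tail, stale_tail)
```

In the synchronous run the iterate shrinks toward zero, while with a single step of delay the same learning rate produces growing oscillations.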

Because the algorithmic constraint of "global synergy" cannot be broken in the short term, the core proposition of infrastructure evolution becomes how to absorb as much of the impact of this rigidity as possible while preserving global synergy. The goal is to use engineering means to achieve "flexible scheduling" of resources while still meeting "absolute synchronization" requirements. Future evolution will mainly revolve around the following three dimensions:

• Convergence of the "Synchronization Domain": Reducing Scale via Single-Point Density

Since we cannot eliminate the global synchronization constraint brought by the BSP mechanism, the most pragmatic path to make large-scale training tasks run more smoothly is to reduce the physical radius of synchronization. The core logic lies in drastically increasing unit computing density to reduce a single task's dependence on cross-node large-scale synergy, thereby significantly lowering the physical threshold for "gathering resources." This evolution is mainly reflected in the vertical scaling (Scale-up) of computing architecture.

With the explosion of single-card computing power in new-generation GPUs and breakthroughs in intra-node and inter-node interconnects (like NVLink/NVSwitch), a single node or even a single rack is evolving into a giant Super Node. With this extreme increase in single-point density, tasks that originally had to span dozens of racks and synchronize over the network can now be completed within a few high-density nodes or even a single rack.

When the communication path shifts from a relatively slow long-distance network to an extremely fast inter-chip bus, the waiting cost of global synchronization is significantly compressed, and the task's rigid dependence on large-scale cluster topology decreases accordingly. This convergence of the synchronization domain is essentially making physical computing power "thicker," reducing the extremely hard-to-meet ultra-large-scale synchronization demands to relatively easy-to-meet medium- and small-scale synchronization demands, thus giving rigid BSP tasks relative "elastic" space in resource acquisition.

• Rapid Recovery: From Disaster to Feature

In the traditional BSP mode, the failure of any single Worker leads to the collapse of the entire training task, followed by long Checkpoint loading and recalculation. Traditional Checkpoint mechanisms block the main computation path and have long read/write times. Future evolution will focus on asynchronous persistence technology:

When the time cost of "saving the scene" and "restoring the scene" is compressed to an acceptable range, interruption is no longer a nightmare for jobs, and can instead become a routine means to improve overall cluster throughput.
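One common way to take persistence off the main computation path is to snapshot the state in memory and write it out in the background. The sketch below illustrates that idea under simplifying assumptions: `checkpoint_async` is a hypothetical helper, the training state is a small dictionary, and JSON on local disk stands in for the real storage backend.

```python
import copy
import json
import os
import tempfile
import threading

def checkpoint_async(state, path):
    """Snapshot state in memory, then persist it in a background thread."""
    snapshot = copy.deepcopy(state)  # the only blocking step on the training path

    def persist():
        tmp = path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(snapshot, f)
        os.replace(tmp, path)        # atomic rename: no half-written checkpoint

    writer = threading.Thread(target=persist)
    writer.start()
    return writer

state = {"step": 100, "weights": [0.1, 0.2, 0.3]}
path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
writer = checkpoint_async(state, path)
state["step"] = 101                  # training continues while the write runs
writer.join()
with open(path) as f:
    saved = json.load(f)
print(saved["step"], state["step"])  # 100 101
```

The checkpoint reflects the state at the moment of the snapshot, even though training has already moved on by the time the write completes.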

• Dynamic Topology Reconfiguration: Removing the Strong Constraint of "Bind upon Start"

If Checkpoint solves the problem of "how to survive after interruption," then elastic training is dedicated to solving the problem of how a job reshapes itself after an interruption.

In traditional training modes, the GPU quantity (World Size) and Rank topology applied for at job startup are a statically bound "contract." Once a node is removed, it often means the entire job must be terminated, configuration modified, and restarted. The endgame of future evolution is to transform this static constraint into dynamic automated Topology Re-sharding:

In this way, originally "sensitive" AI training jobs truly possess the core characteristics of cloud-native applications—dynamic scaling and self-healing. It allows large-scale training to no longer "collapse" due to single points, and to maintain its lifecycle through degraded operation (fewer nodes) or backfill operation (replacing nodes).
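Degraded operation can be pictured as re-ranking the surviving Workers and redistributing the work among them. The sketch below is a deliberately simple illustration: `reshard` is a hypothetical function, node names are made up, and "shards" stand in for whatever data or model partitions a real framework would move.

```python
def reshard(workers, num_shards):
    """Assign contiguous ranks to the current Workers and split shards among them."""
    ranks = {w: r for r, w in enumerate(sorted(workers))}
    world_size = len(ranks)
    shards = {w: list(range(r, num_shards, world_size)) for w, r in ranks.items()}
    return ranks, shards

workers = ["node-a", "node-b", "node-c", "node-d"]
ranks, shards = reshard(workers, num_shards=8)

# node-c is lost: survivors are re-ranked and take over its shards.
survivors = [w for w in workers if w != "node-c"]
new_ranks, new_shards = reshard(survivors, num_shards=8)
print(new_ranks)   # {'node-a': 0, 'node-b': 1, 'node-d': 2}
print(new_shards)  # {'node-a': [0, 3, 6], 'node-b': [1, 4, 7], 'node-d': [2, 5]}
```

After the node is removed, the World Size shrinks from 4 to 3, ranks stay contiguous, and all eight shards are still covered. Backfill operation would be the same call with a replacement node added to the list.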

Our exploration of the evolutionary path of computing "elasticity" is, in the final analysis, not for the pure pursuit of architectural flexibility, but to respond to the core metric of large-scale infrastructure operations—High Cluster Throughput. With computing costs becoming increasingly high today, the core mission of the system is no longer the extreme acceleration of a single task, but how to produce more effective results (Goodput) per unit of time.

Traditional training tasks are like "giant rocks" with fixed shapes and massive volumes. While this rigid structure guarantees internal algorithmic consistency, it imposes harsh requirements on the operating environment—perfect space (resource topology) and continuous time windows must be provided to fit them in. When we stuff these sharp-edged "giant rocks" into the container of a physical cluster, it inevitably creates massive gaps and wait times due to shape mismatch, directly limiting the breakthrough of the cluster's overall throughput ceiling.

Therefore, the evolution from "rigidity" to "elasticity" is essentially an engineering optimization turning the computing system from being "picky about resources" to "adapting to resources." It ensures that expensive computing clusters can maintain saturated pipeline operations when faced with any fluctuations. In the future evolution of computing power, only systems possessing this elastic capability can truly eliminate the losses caused by rigidity and achieve continuous, stable high-throughput output.
