Kimi has already rolled out several Agent-based products, including "Deep Research," "Agentic PPT," "OK Computer," and "Data Analytics."

At peak times, Kimi's consumer-facing Agent business handles tens of thousands of concurrent requests. Each request requires its own isolated compute resources—assigned instantly—to ensure a smooth user experience. During model training, reinforcement learning (RL) and data synthesis also require massive, isolated compute environments that start and stop frequently. Bringing these agents to users creates entirely new demands on infrastructure. To solve this, Kimi worked closely with Alibaba Cloud to build an end-to-end Agent infra system, powered by Alibaba Cloud Container Service for Kubernetes (ACK) and the Agent Sandbox from Alibaba Cloud Container Compute Service (ACS).

An Agent product isn't just a collection of software features; it represents a new way of interacting with AI. It allows models to understand complex user intent, break down tasks autonomously, call tools, and execute multi-step workflows—essentially acting like a human colleague in creative or analytical roles.

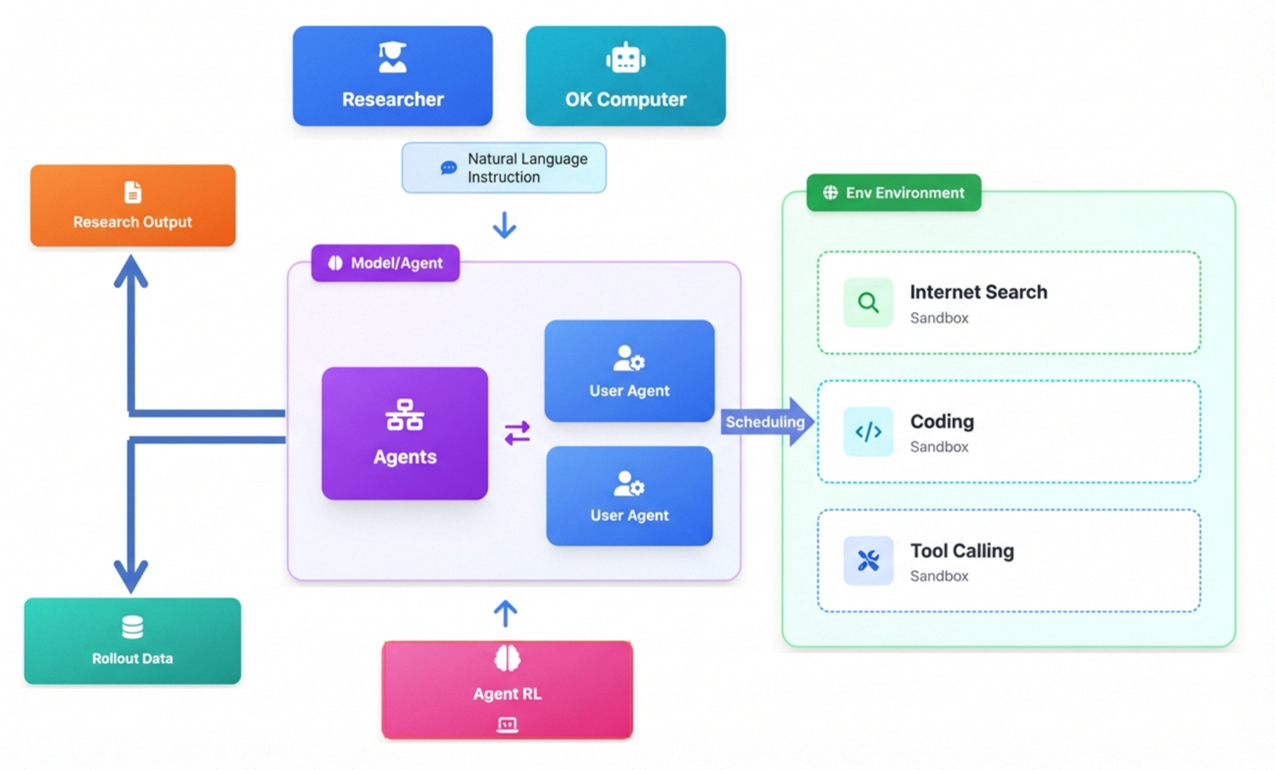

Products like "Deep Research" and "OK Computer" rely on natural language instructions. The model plans and reflects autonomously to drive a virtual computer sandbox, automating complex pipelines that include tool calling, web searches, and code debugging.

During peak usage, the system must handle 100,000+ simultaneous user requests, each potentially triggering multiple rounds of complex inference. To prevent interference or resource contention, the system must rapidly assign dedicated compute to every single request.

Beyond user-facing services, Kimi used large-scale RL and Agentic data synthesis to train its new K2 model. This requires the system to start and stop massive numbers of Agent instances in parallel, simulating complex user behaviors and environments to generate high-quality trajectory data. For this to work, elasticity and scalability are essential.

Kimi Agent Scenarios

● Challenge 1: Instant Response Times

The elasticity and startup speed of the sandbox environment are Kimi's first major hurdle. AI Agent tasks are highly unpredictable; user requests can spike in an instant. Traditional virtual machines or containers that take minutes to deploy are too slow for agents that require an immediate response. Security is also vital: because agents execute LLM-generated code without manual oversight, the sandbox must provide robust isolation to protect other users and the host system.

● Challenge 2: Maintaining State and Handling Concurrency

For long-running tasks, sandboxes need state preservation. If a task is paused, it should resume exactly where it left off. Furthermore, as Kimi's user base grows, the sheer volume of concurrent requests places immense pressure on the cluster's scheduling, resource allocation, and control plane.

● Challenge 3: Scaling Without Breaking the Bank

Cost control is a major practical concern. Agent tasks usually come in short bursts. If you provision enough resources to handle peak demand 24/7, you end up with massive waste. The goal is stable, on-demand scheduling that supports massive concurrency at the lowest possible cost.

Agent Infra Architecture Diagram

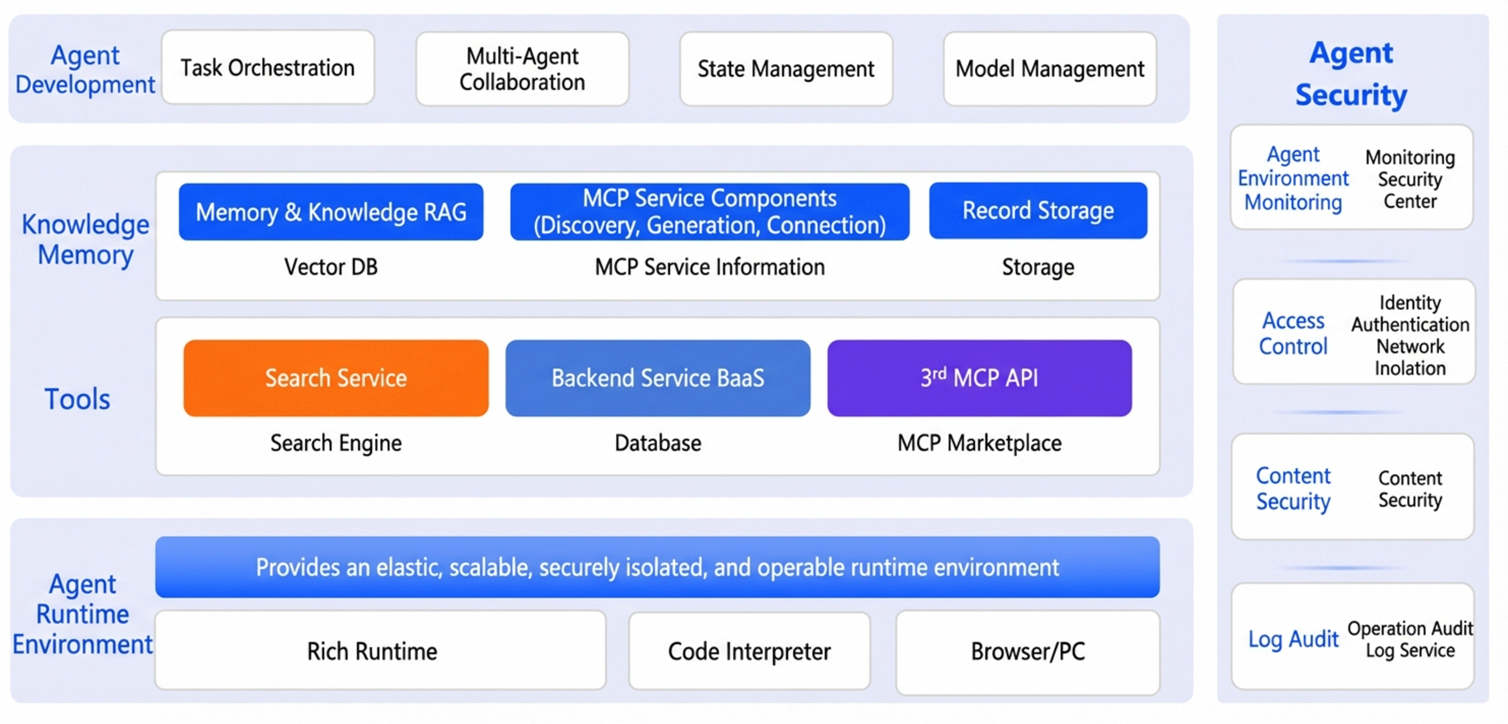

In summary, the core infrastructure requirements for AI Agents include:

● A large-scale elastic sandbox environment that serves as the cornerstone of task execution, enabling rapid launch, termination, and isolation.

● Support for long-running processes and preservation of session state to meet the needs of multi-turn inference.

● Robust knowledge capabilities and flexible memory management to achieve higher levels of intelligence through tool calling.

● Security monitoring and assurance tools to facilitate the safe publication of Agent products.

Through deep technical synergy with Alibaba Cloud, Kimi's Agent infra was successfully implemented, providing stable and efficient support for both consumer-facing services and algorithm research. Throughout this process, both teams tackled a range of complex technical challenges—spanning elasticity, cost, stability, state preservation, and security.

AI Agent traffic fluctuates like the tide. During peak hours, thousands of users might initiate requests concurrently, requiring the system to handle several times the normal volume within seconds.

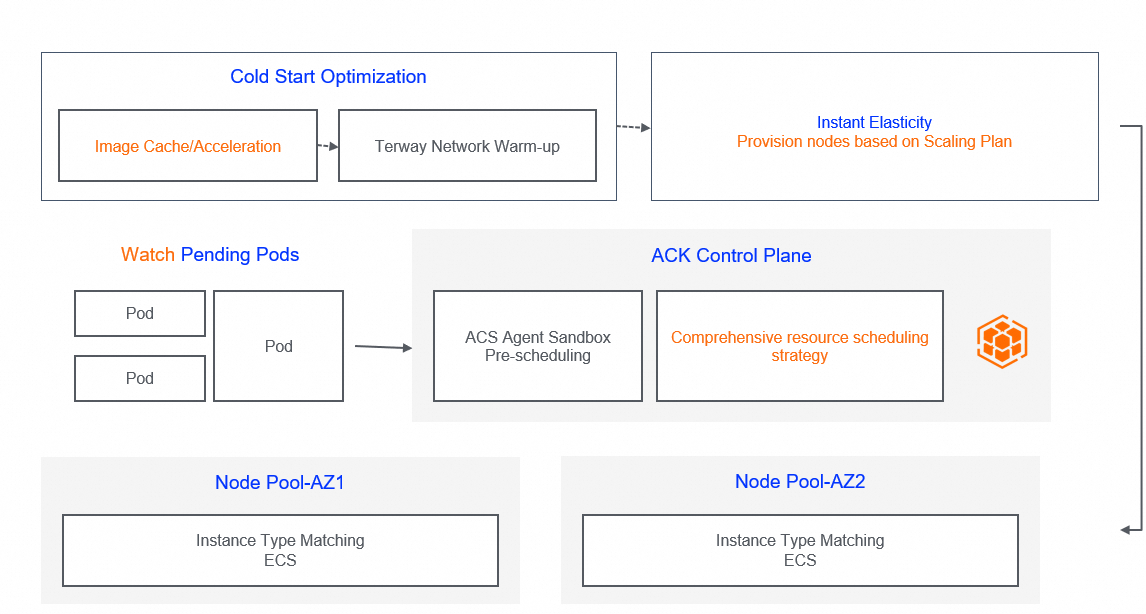

To create a fast, accurate, and stable solution for Kimi, Alibaba Cloud utilized ACK's instant node elasticity and ACS Agent Sandbox's resource pre-scheduling and intelligent resource policies, achieving fine-grained management and efficient scheduling.

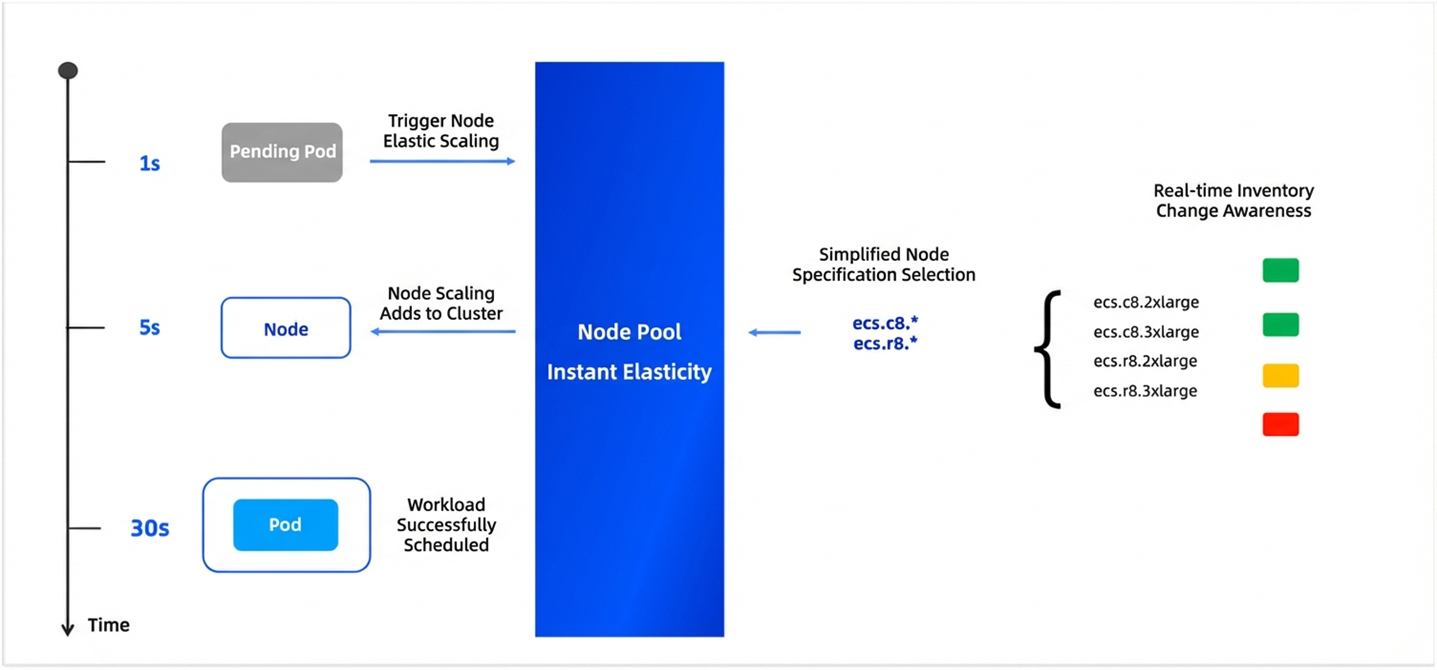

ACK Node Pool Instant Elasticity / ACS Agent Sandbox Collaborative Scheduling

When facing burst traffic, ACK node pools secure scale-out capacity by drawing on multiple zones and multiple instance types (such as general-purpose, compute-optimized, or storage-optimized). This avoids scale-out failures caused by a resource shortage in a single zone. To shorten the time from node initialization to business readiness, ACK supports several acceleration methods:

● Users can pre-package business images and dependencies, which eliminates the need to repeatedly pull images and reduces initialization time by over 60%.

● The system uses data disk snapshots for rapid cloning, which drastically reduces initialization time from minutes to mere seconds.

● By pre-assigning and attaching Elastic Network Interfaces (ENIs) to scale-out nodes, the system avoids unnecessary waiting and significantly accelerates pod network readiness.
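The multi-zone, multi-instance-type fallback described above can be pictured as a search over candidate combinations. The following is only a minimal sketch: the function name `pick_capacity`, the zone and instance-type labels, and the `has_stock` callback are all hypothetical stand-ins and do not reflect ACK's actual node pool API.

```python
from itertools import product
from typing import Callable, Optional, Sequence, Tuple


def pick_capacity(
    zones: Sequence[str],
    instance_types: Sequence[str],
    has_stock: Callable[[str, str], bool],
) -> Optional[Tuple[str, str]]:
    """Return the first (zone, instance_type) pair that has stock, or None.

    Candidates are tried zone by zone, in the order given, so the caller
    expresses preference through list order. `has_stock` is a hypothetical
    callback standing in for a real inventory check.
    """
    for zone, instance_type in product(zones, instance_types):
        if has_stock(zone, instance_type):
            return zone, instance_type
    return None


if __name__ == "__main__":
    # Hypothetical inventory: only one combination is currently available.
    stock = {("zone-b", "compute-optimized")}
    choice = pick_capacity(
        ["zone-a", "zone-b"],
        ["general-purpose", "compute-optimized"],
        lambda zone, itype: (zone, itype) in stock,
    )
    print(choice)
```

Because every combination is a candidate, a shortage of one instance type in one zone only removes a single option instead of failing the whole scale-out.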

Instant Elasticity of Node Pools

To handle Kimi's demand for massive, fragmented computing power, the ACS Agent Sandbox delivers ultra-fast, sub-second launches. By using lightweight virtual machine (MicroVM) technology at the infrastructure layer, it slashes virtualization overhead by 90%. This allows Kimi to start up thousands of sandboxes in seconds to handle even the most intense traffic spikes.

This speed is driven by three key optimizations:

● ACS leverages Alibaba Cloud's massive resource pool to prepare resources in advance based on user patterns, effectively eliminating the "cold start" headache.

● ACS solves the bottleneck of pulling large container images with an image cache feature based on disk snapshot technology, which pre-converts images into caches to bypass the need for downloading image layers.

● Instead of over-provisioning resources for startup bursts, ACS uses "hot update" technology to allow a sandbox to acquire extra CPU and memory for the first few seconds of creation before smoothing back to baseline specs. For Python-based sandboxes, this alone cuts startup times by over 60% while keeping costs under control.

To handle high-frequency fluctuations, Kimi uses Alibaba Cloud's ResourcePolicy to build a tiered scheduling system. This policy defines priority rules via declarative configuration:

● Reserved nodes serve as a baseline capacity pool that handles steady day-to-day workloads.

● When the pending pod queue exceeds a specific threshold (e.g., 500) or a pod has waited longer than a timeout (e.g., 30s), the system automatically overflows the excess requests to the ACS Agent Sandbox Serverless pool.

This mixed mode reduces Kimi Agent's overall costs, increases burst peak capacity, and balances speed with cost optimization.
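To make the overflow rule concrete, here is a minimal sketch of the decision it implies. It is not the ResourcePolicy schema or the scheduler's real logic: the names `PendingPod` and `choose_pool`, the three return values, and the "reserved first" ordering are assumptions, and the two constants simply reuse the example numbers from the text.

```python
from dataclasses import dataclass

# Example values quoted in the text; real deployments would tune these.
QUEUE_THRESHOLD = 500   # pending pods
WAIT_TIMEOUT_S = 30.0   # seconds


@dataclass
class PendingPod:
    name: str
    waited_s: float  # how long the pod has been pending, in seconds


def choose_pool(pod: PendingPod, queue_len: int, reserved_free_slots: int) -> str:
    """Decide where one pending pod should go.

    Returns "reserved", "serverless", or "wait" (hypothetical labels).
    """
    # Tier 1: reserved nodes are the baseline pool, so use them when possible.
    if reserved_free_slots > 0:
        return "reserved"
    # Tier 2: overflow to the serverless pool once the queue is too long
    # or this pod has waited past the timeout.
    if queue_len > QUEUE_THRESHOLD or pod.waited_s >= WAIT_TIMEOUT_S:
        return "serverless"
    # Otherwise keep waiting for reserved capacity to free up.
    return "wait"


if __name__ == "__main__":
    print(choose_pool(PendingPod("sandbox-1", 2.0), queue_len=40, reserved_free_slots=3))
    print(choose_pool(PendingPod("sandbox-2", 2.0), queue_len=800, reserved_free_slots=0))
```

Checking reserved capacity first keeps the cheaper baseline pool full, so serverless capacity is paid for only during genuine bursts.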

Modern Agent tasks are often long-running procedures rather than "single-turn" interactions. For example, a research Agent might organize literature and generate reports in the background for several minutes. If the system destroys the sandbox during this time because resources are tight, all intermediate data and inference paths are lost.

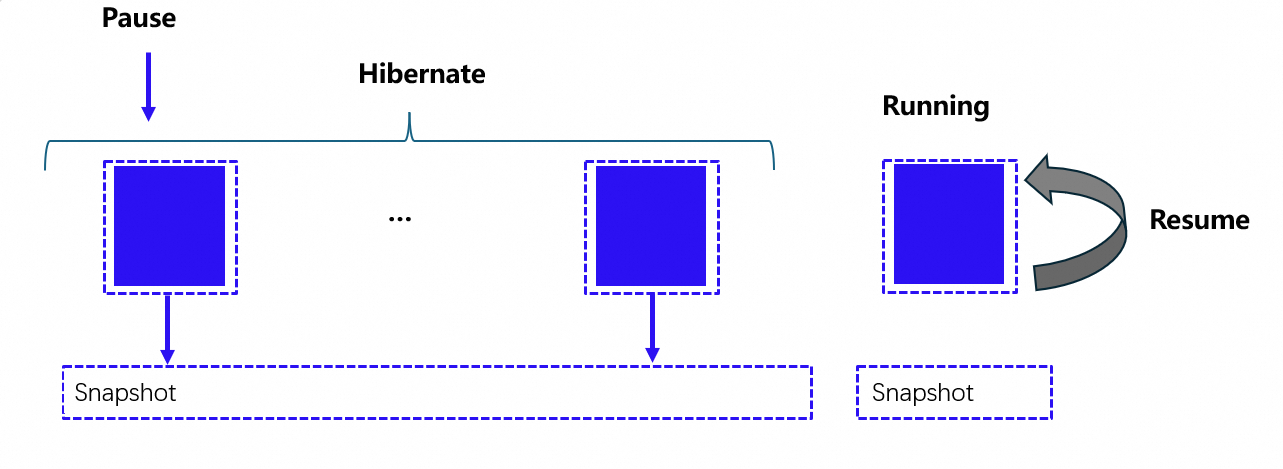

To avoid this, Kimi uses a hibernate, wake, and clone mechanism:

ACS Agent Sandbox supports one-click hibernation and rapid wake-up triggered via native K8s pod protocols, Sandbox CR, or the E2B SDK. During hibernation, CPU and memory resources are released to reduce costs, but memory state, temporary storage, and IPs are preserved. The system can wake these pods in seconds, fully recovering the environment so the user feels the Agent was always on standby.

ACS Hibernation Capability

Building on hibernation, Kimi uses memory-level snapshot Checkpoints to instantly create massive numbers of instances with identical initial states. By using a decoupled storage and compute architecture, cloning eliminates the need to reload dependencies or initialize memory. This is a game-changer for RL scenarios like Monte Carlo Tree Search (MCTS). Instead of running paths sequentially or starting brand-new sandboxes with high overhead, Kimi can instantly generate thousands of replicas to explore different "future paths" in parallel, significantly accelerating algorithm iteration.
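The fan-out pattern can be illustrated with an in-process analogy. The sketch below uses `copy.deepcopy` as a stand-in for a memory-level snapshot; it is not how ACS implements cloning, and `SandboxState`, `checkpoint`, and `fan_out` are hypothetical names chosen for illustration.

```python
import copy
from dataclasses import dataclass, field
from typing import List


@dataclass
class SandboxState:
    """Hypothetical stand-in for the in-memory state of one sandbox."""
    variables: dict = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


def checkpoint(state: SandboxState) -> SandboxState:
    """Take an independent snapshot of the current state."""
    return copy.deepcopy(state)


def fan_out(snapshot: SandboxState, actions: List[str]) -> List[SandboxState]:
    """Create one independent branch per candidate action.

    Each branch starts from the same snapshot, so none of them has to
    replay the setup work that produced it.
    """
    branches = []
    for action in actions:
        branch = copy.deepcopy(snapshot)  # clone instead of re-initializing
        branch.history.append(action)
        branches.append(branch)
    return branches


if __name__ == "__main__":
    base = SandboxState(variables={"deps_loaded": True}, history=["setup"])
    snap = checkpoint(base)
    for branch in fan_out(snap, ["try_fix_a", "try_fix_b", "try_fix_c"]):
        print(branch.history)
```

In a tree search, each branch would then be evaluated in parallel, and the most promising one could itself be checkpointed and expanded again.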

As Kimi's concurrency climbed to hundreds of thousands of pods, the pressure on the Kubernetes scheduler and API Server became immense. To prevent response latency or scheduling backlogs, ACK deeply optimized the core components:

Through parameter tuning, pod affinity caching, and equivalence class scheduling, the ACK scheduler handles hundreds of pods per second at a scale of thousands of nodes. It substantially reduces scheduling overhead by caching intermediate results and processing the different stages of the scheduling pipeline in parallel.

The control plane is deployed on a multi-zone high-availability architecture. Every component, including etcd, the API Server, kube-controller-manager (KCM), Virtual Kubelet (VK), and the ACS management layer, has been optimized for the high-frequency "create-and-destroy" cycles of Agent sandboxes and scales out dynamically to meet second-level elasticity demands.

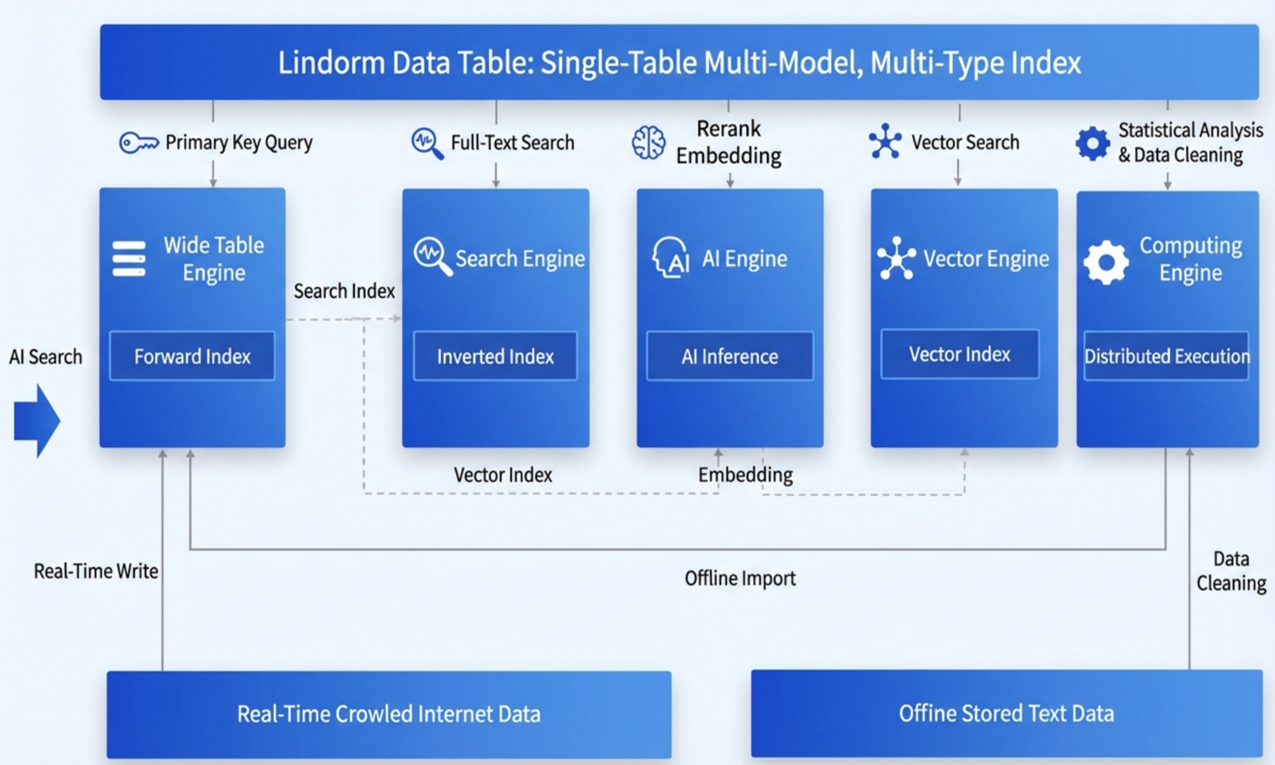

High-quality search and memory are the foundations of any complex agent. Kimi built these using Alibaba Cloud's Lindorm multi-model database, which features a cloud-native architecture with decoupled storage and compute.

Lindorm Multi-engine Capabilities

● Lindorm integrates four core engines—Table, Search, Vector, and AI—allowing data to flow automatically within the system without the need for complex synchronization links.

● The system natively supports retrieval based on Reciprocal Rank Fusion (RRF), combining full-text and vector search results with custom weights for higher accuracy.

● By using built-in compression algorithms and flexible storage options (such as disks and OSS), Lindorm cuts storage costs by 30% to 50% compared to open-source alternatives.
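For readers unfamiliar with rank fusion, the weighted form can be sketched in a few lines. This is a generic illustration of the technique, not Lindorm's implementation or query syntax: the function name `weighted_rrf`, the retriever labels, and the smoothing constant `k=60` (a commonly used default) are assumptions. Each document receives a score of weight / (k + rank) from every result list it appears in, and the scores are summed.

```python
from collections import defaultdict
from typing import Dict, List


def weighted_rrf(
    rankings: Dict[str, List[str]],
    weights: Dict[str, float],
    k: int = 60,
) -> List[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    rankings maps a retriever name to its results, best first.
    weights maps a retriever name to its weight (default 1.0).
    """
    scores: Dict[str, float] = defaultdict(float)
    for retriever, ranked_ids in rankings.items():
        weight = weights.get(retriever, 1.0)
        for rank, doc_id in enumerate(ranked_ids, start=1):
            scores[doc_id] += weight / (k + rank)
    # Highest fused score first; ties are broken by document ID.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    results = {
        "fulltext": ["a", "b", "c"],
        "vector": ["b", "c", "a"],
    }
    print(weighted_rrf(results, {"fulltext": 1.0, "vector": 1.0}))  # ['b', 'a', 'c']
    print(weighted_rrf(results, {"fulltext": 3.0, "vector": 1.0}))  # ['a', 'b', 'c']
```

Because only ranks are used, the full-text and vector scores never need to be normalized against each other; the weights alone control how much each retriever influences the final order.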

In a multi-tenant environment where different users share the same physical cluster, security isolation is non-negotiable. Kimi must ensure that every Agent runs in a strictly independent environment, preventing them from accessing other users' data or performing unauthorized system operations.

The ACS Agent Sandbox uses MicroVM technology to provide hardware-level isolation for every Agent task. Combined with NetworkPolicy and Fluid, this creates an end-to-end secure runtime that protects both the network and storage layers.

For persistent storage, NAS allows independent subdirectories to be assigned dynamically to each Agent. This creates logically isolated spaces with strict read/write permissions enforced via ACLs or POSIX permissions.
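The per-Agent subdirectory idea can be sketched with plain POSIX permissions on a local filesystem. This is an analogy only: how the subdirectories are provisioned and mounted on NAS is not described here, and `create_agent_dir`, the alphanumeric ID rule, and the `0o700` mode are illustrative assumptions.

```python
import os
import stat
import tempfile


def create_agent_dir(root: str, agent_id: str) -> str:
    """Create a private subdirectory for one Agent under `root`.

    The ID is restricted to letters and digits so that it cannot contain
    path separators or ".." and escape the shared root.
    """
    if not agent_id.isalnum():
        raise ValueError(f"invalid agent id: {agent_id!r}")
    path = os.path.join(root, agent_id)
    os.makedirs(path, exist_ok=True)
    # Set the mode explicitly, since the mode passed at creation time is
    # subject to the process umask. 0o700 = owner read/write/execute only.
    os.chmod(path, 0o700)
    return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as shared_root:
        agent_path = create_agent_dir(shared_root, "agent42")
        mode = stat.S_IMODE(os.stat(agent_path).st_mode)
        print(agent_path, oct(mode))
```

In a real deployment, each sandbox would additionally see only its own subdirectory as a mount, so the permission bits act as a second layer of protection.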

On the network side, Alibaba Cloud NetworkPolicy restricts inter-Agent communication. These policies are optimized to handle massive Terway clusters, ensuring that security management doesn't overwhelm the Kubernetes control plane.
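As an illustration of what such a restriction might look like, the sketch below builds a standard Kubernetes `networking.k8s.io/v1` NetworkPolicy that denies all ingress to the selected pods. The policy name, namespace, and the `app: agent-sandbox` label are hypothetical; this is not Kimi's actual configuration.

```python
import json
from typing import Dict


def deny_agent_ingress(namespace: str, agent_labels: Dict[str, str]) -> dict:
    """Build a NetworkPolicy manifest that blocks all ingress to Agent pods.

    Selecting pods and listing "Ingress" in policyTypes with an empty
    ingress rule list means no inbound traffic is allowed to those pods.
    """
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "deny-agent-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": dict(agent_labels)},
            "policyTypes": ["Ingress"],
            "ingress": [],
        },
    }


if __name__ == "__main__":
    manifest = deny_agent_ingress("agents", {"app": "agent-sandbox"})
    # JSON manifests are accepted wherever YAML manifests are.
    print(json.dumps(manifest, indent=2))
```

A production policy would normally add a narrow allow rule, for example for the gateway that drives the sandboxes, and would often restrict egress as well.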

To facilitate the large-scale deployment of enterprise AI, Kimi utilized the ACS Agent Sandbox—a high-performance, out-of-the-box solution. By coordinating with ACK, they established a secure, agile, and sustainable foundation for production.

This infrastructure successfully supported the launch of "Deep Research" and "OK Computer," achieving significant results:

● The system proved capable of handling tens of thousands of sandboxes per minute during peaks while cutting startup times by over 50%.

● Kimi dramatically reduced task latency and boosted overall efficiency during the critical model post-training phase.

● Intelligent scheduling—blending standard and Serverless computing—combined with instance hibernation has significantly reduced the total cost of ownership for Kimi's Agent business.

By integrating Alibaba Cloud's comprehensive PaaS and security capabilities, this system provides the technical bedrock for future autonomous AI. It empowers Kimi to stay at the forefront of innovation in the AI Agent era.
