By Shuangkun Tian

Large Language Model (LLM) Agents are rapidly moving into production environments. However, the challenge of making them "both smart and controllable" remains a major hurdle for engineering teams. At KubeCon North America this year, a compelling approach gained significant traction: leveraging the determinism of Workflows to anchor and constrain the uncertainty of Agents. By integrating Argo Workflows, companies like JFrog, Root.io, and Salesforce have successfully deployed Agents at scale for complex scenarios such as vulnerability remediation and self-healing operations. This synergy finds the elusive balance between intelligence and reliability, providing a blueprint for AI implementation across various industries.

At KubeCon North America, held in Atlanta in November 2025, "Agents" was the standout topic. From keynote stages to booth showcases, the industry was focused on one question: How do we successfully land LLM-driven Agents in production?

A recurring keyword in these discussions was Workflows. A consensus is emerging:

• Agents represent "Uncertainty": They are intelligent, flexible, and excel at navigating ambiguity. However, their outputs and behaviors are "probabilistically optimal" rather than 100% predictable.

• Workflows represent "Determinism": They define clear steps, sequences, conditions, and retry mechanisms. They break tasks down into standardized, observable, and auditable pipelines.

This creates a fascinating architectural question:

Should Workflows orchestrate Agents, or should Agents orchestrate Workflows?

Several practical case studies from KubeCon provided the answer: both patterns work, and each suits a different kind of problem.

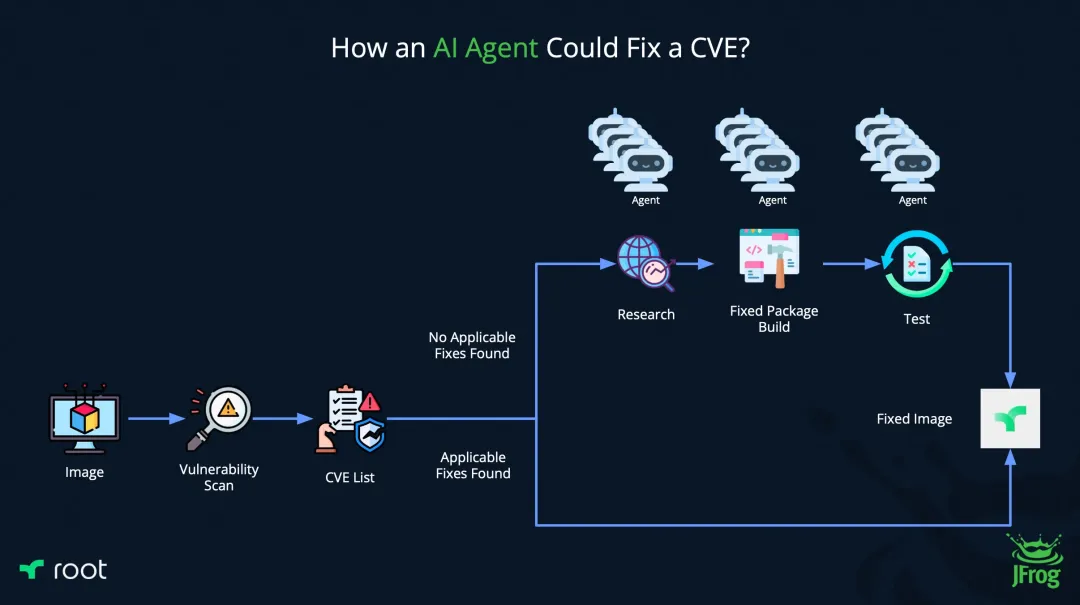

JFrog and Root.io shared their experience using Argo Workflows to orchestrate Agents for large-scale Common Vulnerabilities and Exposures (CVE) remediation.

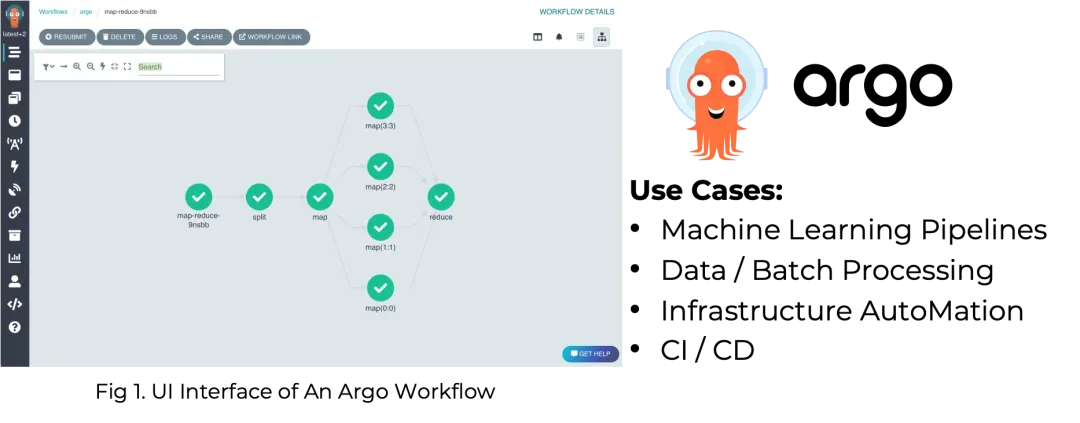

The teams used AI Agents to automate the discovery, patching, and testing of vulnerabilities. As remediation flows grew in complexity, spanning multiple projects and platforms and scaling from single instances to thousands, keeping the system reliable became a major challenge. The solution was to use Argo Workflows as the orchestration backbone.

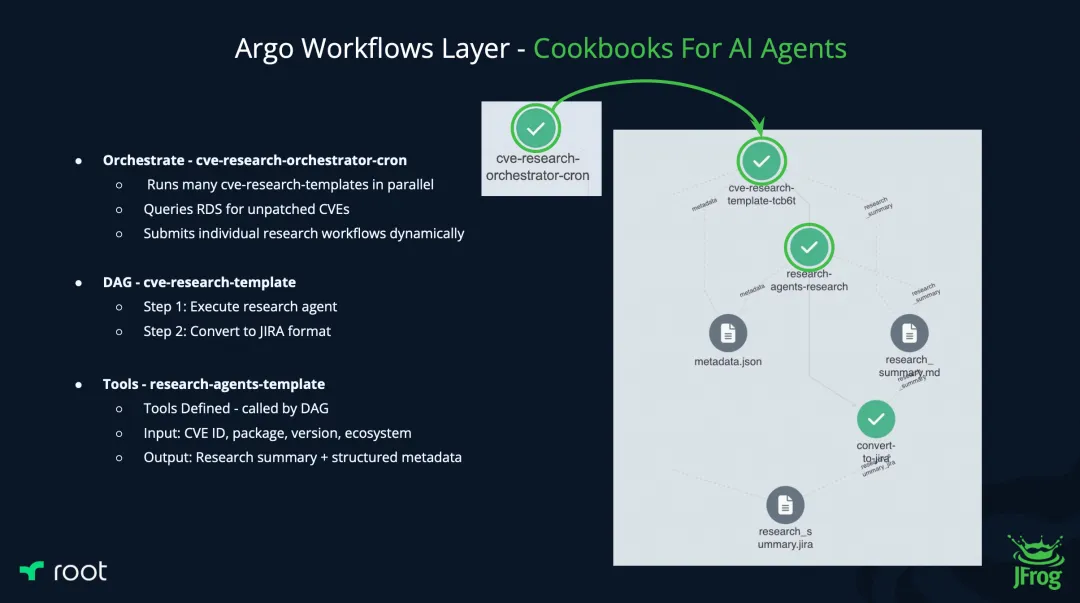

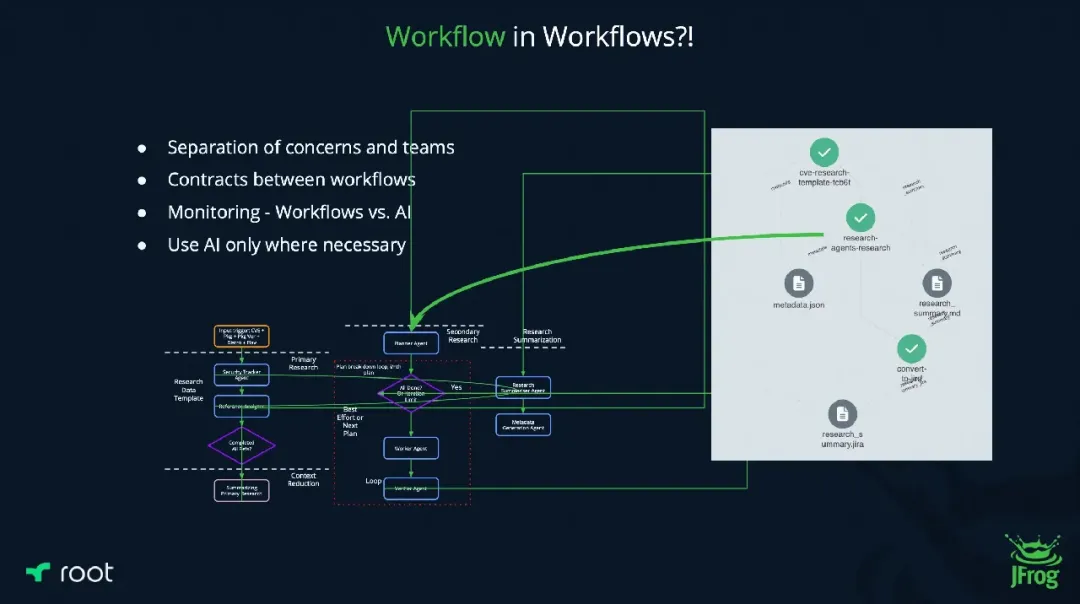

A scheduled Workflow triggers the detection process. Within the pipeline, a "Research Agent" template is called to analyze the CVE and generate a report. Subsequent steps then handle packaging, transformation, and deployment. By using Workflows to call Agents, the remediation process becomes predictable and automated.
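The shape of such a pipeline can be sketched as an Argo `CronWorkflow` manifest built in Python. This is a minimal illustration, not the presenters' actual configuration: the resource name, step names, template names, images, and cron schedule are all hypothetical, and the field names follow the `CronWorkflow` spec as commonly documented, so check them against your Argo version.

```python
import json


def build_cve_pipeline(schedule: str = "0 2 * * *") -> dict:
    """Return a CronWorkflow manifest (as a dict) that runs the steps in order.

    All names, images, and the default schedule are hypothetical placeholders.
    """
    # (step name, template name) pairs, executed sequentially.
    stages = [
        ("detect", "detect"),
        ("research", "research-agent"),  # the step that calls the Agent
        ("package", "package"),
        ("transform", "transform"),
        ("deploy", "deploy"),
    ]

    # In Argo, `steps` is a list of lists: outer entries run one after another,
    # inner entries run in parallel. One inner entry per group means sequential.
    steps = [[{"name": name, "template": template}] for name, template in stages]

    # One placeholder container template per stage.
    containers = [
        {"name": template, "container": {"image": f"example.com/{template}:latest"}}
        for _, template in stages
    ]

    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "CronWorkflow",
        "metadata": {"name": "cve-remediation"},
        "spec": {
            "schedule": schedule,
            "workflowSpec": {
                "entrypoint": "pipeline",
                "templates": [{"name": "pipeline", "steps": steps}] + containers,
            },
        },
    }


if __name__ == "__main__":
    # JSON is valid YAML, so the output can be saved and applied as a manifest.
    print(json.dumps(build_cve_pipeline(), indent=2))
```

The point of the layout is that the Agent is just one named step among several, so its position, inputs, and failure handling are fixed by the pipeline rather than decided by the model.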

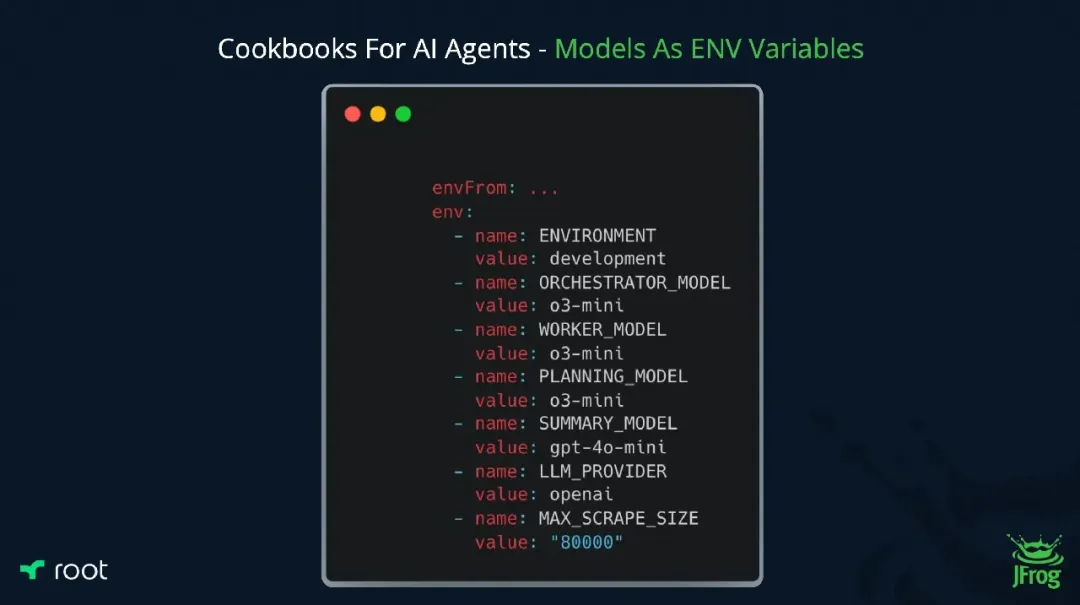

When a Workflow calls an Agent, inputs and outputs are passed through Argo's native parameters, and model versions and prompts are managed through environment variables. Failures, anomalies, and timeouts are handled by the Workflow's built-in retry and rollback policies, so a "hallucinating" or unresponsive model does not bring down the system.
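As a rough sketch of what such an Agent step might look like, the function below builds a single Argo template as a Python dict. The template name, image, parameter names, file paths, and environment variable names are assumptions made for illustration; only the structural fields (`inputs`, `outputs`, `retryStrategy`, `activeDeadlineSeconds`, `container.env`) are taken from the Argo Workflows template spec.

```python
def research_agent_template(
    model_version: str,
    max_retries: int = 3,
    timeout_seconds: int = 600,
) -> dict:
    """Return an Argo template dict for a hypothetical Research Agent step."""
    return {
        "name": "research-agent",
        # Input: the CVE to analyze, supplied by the calling Workflow.
        "inputs": {"parameters": [{"name": "cve-id"}]},
        # Output: the report the Agent writes to a file (path is hypothetical).
        "outputs": {
            "parameters": [
                {"name": "report", "valueFrom": {"path": "/tmp/report.json"}}
            ]
        },
        # Retry failed or errored attempts with exponential backoff.
        "retryStrategy": {
            "limit": max_retries,
            "retryPolicy": "Always",
            "backoff": {"duration": "30s", "factor": 2},
        },
        # Hard time limit so an unresponsive model cannot stall the pipeline.
        "activeDeadlineSeconds": timeout_seconds,
        "container": {
            "image": "example.com/research-agent:latest",  # hypothetical image
            "args": ["--cve", "{{inputs.parameters.cve-id}}"],
            # Model version and prompt location are configuration, not code.
            "env": [
                {"name": "MODEL_VERSION", "value": model_version},
                {"name": "PROMPT_PATH", "value": "/prompts/research.md"},
            ],
        },
    }
```

Swapping the model then becomes a one-line change, for example `research_agent_template("model-v2")`, while the retry and timeout behavior stays under the Workflow's control.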

The result was a highly observable and deterministic platform for large-scale intelligent task orchestration. Interestingly, the presenters argued that even within a Research Agent, AI usage should be disciplined and used only where necessary, favoring Workflows for internal logic due to their superior predictability.

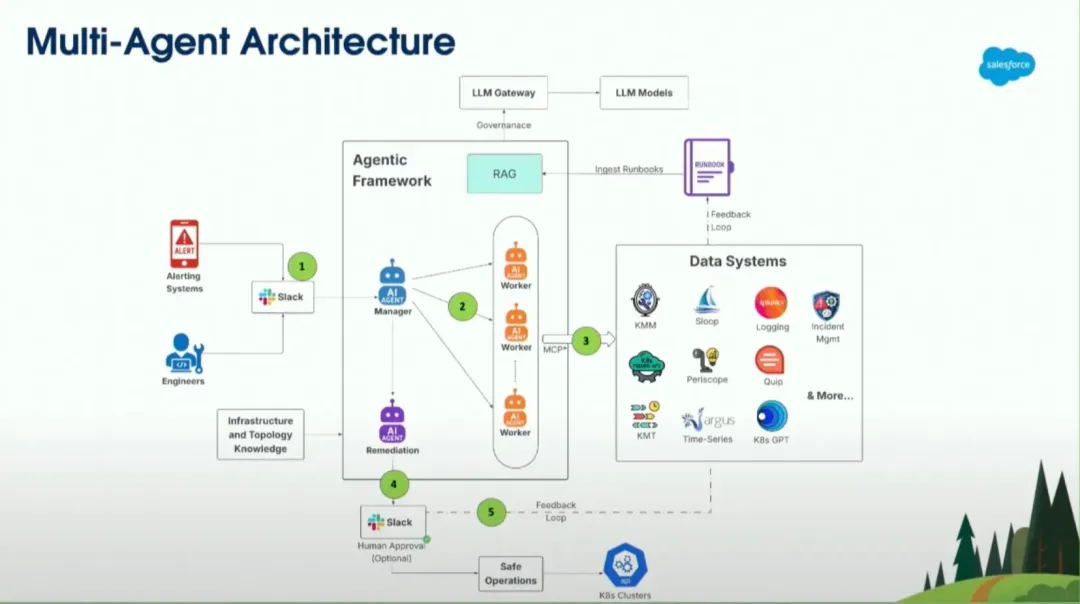

Salesforce presented their experience using a Multi-Agent architecture to trigger Argo Workflows, significantly improving the operational efficiency of massive clusters.

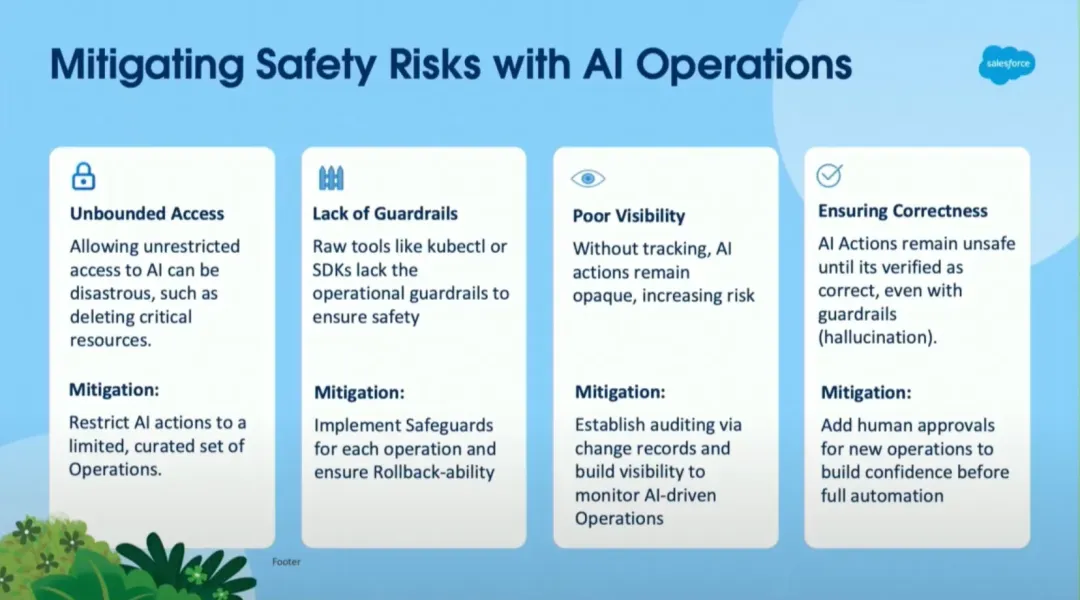

Salesforce faces a monumental Operations and Maintenance (O&M) challenge: managing over 1,400 clusters and millions of Pods across diverse teams. Their goal was to build a "Self-Healing" AIOps system capable of autonomous fault discovery and remediation.

The team adopted a Multi-Agent architecture, enabling intelligent collaboration between specialized agents such as the On-Call Agent, Kubectl Agent, and Analysis Agent. These agents analyze alerts, query historical data, and assess risks to determine the optimal operational action, thereby reducing manual intervention and boosting efficiency.

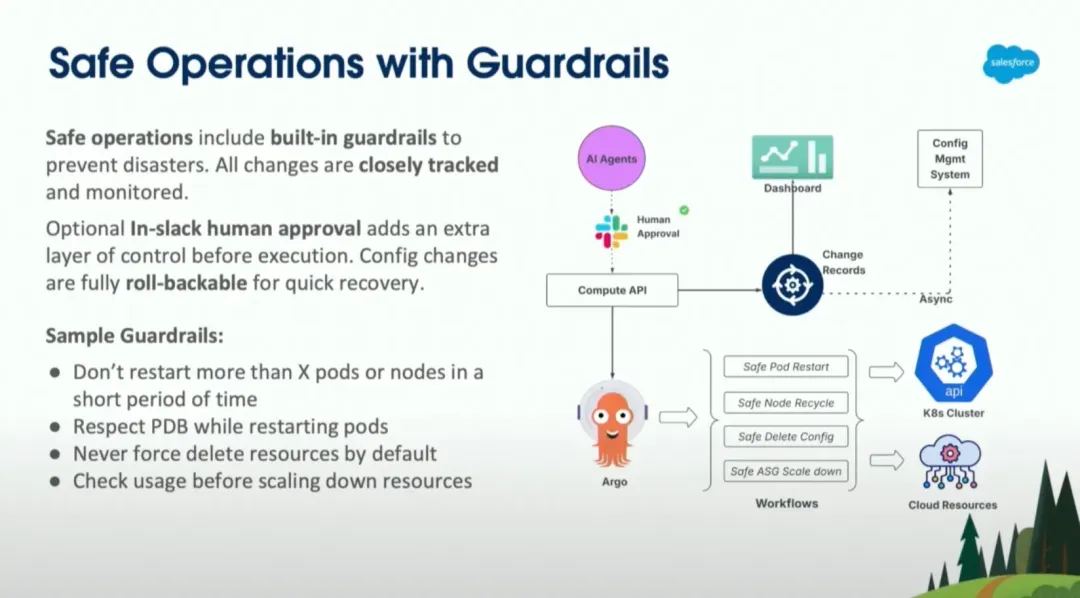

However, when it came to executing actual cluster management, new challenges emerged: How could they strictly enforce access controls and operational boundaries? How could they prevent misconfigurations from triggering cascading failures? And how could they ensure robust auditability and traceability? Salesforce made a pivotal architectural decision: Agents do not "act directly" on the cluster; instead, they orchestrate and trigger Argo Workflows.

By treating Workflows as "operational atoms" that are auditable, testable, and reusable, Salesforce ensured that AI-driven decisions always execute within safe boundaries and under strict audit trails. This approach made large-scale production deployment a reality.
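One way to picture this boundary is a small gate between the Agent and the cluster: the Agent may only name a pre-approved workflow and supply its parameters, and every request is logged. The sketch below is an assumption-laden illustration, not Salesforce's implementation. The action names, parameter sets, and the `requested-by` label are hypothetical; `workflowTemplateRef` and `arguments.parameters` are the standard Argo fields for running a stored `WorkflowTemplate`.

```python
from datetime import datetime, timezone

# Hypothetical allowlist: approved WorkflowTemplate name -> required parameters.
ALLOWED_ACTIONS = {
    "restart-deployment": {"namespace", "deployment"},
    "cordon-node": {"node"},
}


class ActionRejected(Exception):
    """Raised when an Agent requests something outside the allowlist."""


def workflow_for_action(agent: str, action: str, params: dict, audit_log: list) -> dict:
    """Turn an Agent's decision into a Workflow manifest, or reject it."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "params": dict(params),
        "accepted": False,
    }
    audit_log.append(entry)  # every request is recorded, including rejected ones

    expected = ALLOWED_ACTIONS.get(action)
    if expected is None:
        raise ActionRejected(f"action {action!r} is not an approved workflow")
    if set(params) != expected:
        raise ActionRejected(f"action {action!r} expects parameters {sorted(expected)}")

    entry["accepted"] = True
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {
            "generateName": f"{action}-",
            "labels": {"requested-by": agent},  # hypothetical label for tracing
        },
        "spec": {
            # Run the reviewed, stored template; the Agent supplies only values.
            "workflowTemplateRef": {"name": action},
            "arguments": {
                "parameters": [
                    {"name": key, "value": str(value)}
                    for key, value in sorted(params.items())
                ]
            },
        },
    }
```

A call such as `workflow_for_action("kubectl-agent", "restart-deployment", {"namespace": "payments", "deployment": "api"}, log)` yields a manifest that can be submitted to Argo, while a request for an unlisted action raises `ActionRejected` and still leaves an audit entry.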

Argo Workflows is an open-source, container-native workflow engine designed to orchestrate large-scale, highly reliable parallel jobs on Kubernetes.

The practices of JFrog, Root.io, and Salesforce demonstrate that the combination of Argo Workflows and Agents helps teams find the balance between innovation and control:

• Embrace the flexibility and creativity of LLMs.

• Maintain the controllability, observability, and auditability required by enterprise engineering systems.

If your team is currently building Platform Engineering, DevOps, or AI-native infrastructure, integrating Agents with Argo Workflows is an architectural direction you cannot afford to ignore.
