By Yi Li – Alibaba Researcher

Since 2020, the COVID-19 pandemic has changed the functioning of the global economy and people's lives. Digital production and lifestyle have become the new normal in the post-pandemic era. Today, cloud computing has become the infrastructure of the digital economy, and cloud-native technologies are changing the way enterprises migrate to and use the cloud. How to use cloud-native technologies to help enterprises reduce cost and improve efficiency is a topic that concerns many IT leaders.

Alibaba has always been an explorer and practitioner in the cloud-native field. Alibaba Group's cloud-native journey has gone through several major stages:

On this basis, Alibaba Group began a comprehensive cloud-native upgrade. We adhere to the trinity of open-source technologies, Alibaba Cloud products, and Alibaba applications. In 2021, Alibaba migrated 100% of its business to the cloud, and all its applications became cloud-native.

Cloud-native technologies have brought huge value to Alibaba. Currently, Alibaba Group has the largest Kubernetes cluster in the world, with more than 10,000 nodes in a single cluster, which can centrally support diversified applications such as e-commerce, search, big data, and AI. The computing cost of the peak during Double 11 in 2021 was 50% lower than in 2020. Serverless is also implemented in a large number of scenarios, and R&D performance is improved by 40%.

Thanks to its large-scale cloud-native practice, Alibaba Cloud has built an advanced and inclusive cloud-native product family for enterprises, serving both Alibaba Group and its customers in various industries. In the first quarter of 2022, Alibaba Cloud Container Service for Kubernetes (ACK) was named a global leader in the public cloud container platform analyst report released by Forrester. This was also the first time a Chinese technology company was named a leader in the container service field.

Cloud-native technologies represented by containers have developed rapidly over the past few years. According to the latest CNCF developer survey, there were more than seven million cloud-native developers worldwide in the third quarter of 2021. Most developers agree that cloud-native technologies should be used to reduce costs and improve efficiency for enterprises. However, according to the FinOps Kubernetes Report released by CNCF in 2021, 68% of the respondents said their enterprises' computing resource costs in Kubernetes environments had increased over the past year. What is the reason behind this phenomenon?

We talked with enterprises, analyzed their situations, and found five major problems they encounter:

In recent years, more enterprises have adopted the concept of FinOps as they accelerate their migration to the cloud. FinOps is a cloud operation model that combines systems, best practices, and culture to improve an enterprise's ability to understand cloud costs. It brings financial accountability to cloud spending, enabling teams to make sound business decisions. FinOps strengthens collaboration among IT, engineering, finance, procurement, and the business. It enables IT to evolve into a service organization focused on using cloud technology to add value to the business. When cloud-native technology meets the concept of FinOps, the result is Cloud-Native FinOps, an evolution of FinOps for cloud-native scenarios.

Enterprises began to focus on new methods for cost governance to solve the new challenges brought by cloud architecture and cloud-native technologies. Thanks to the collaboration between IT, finance, business, and other teams, Cloud-Native FinOps helps enterprises gain better financial control and predictability while ensuring business development.

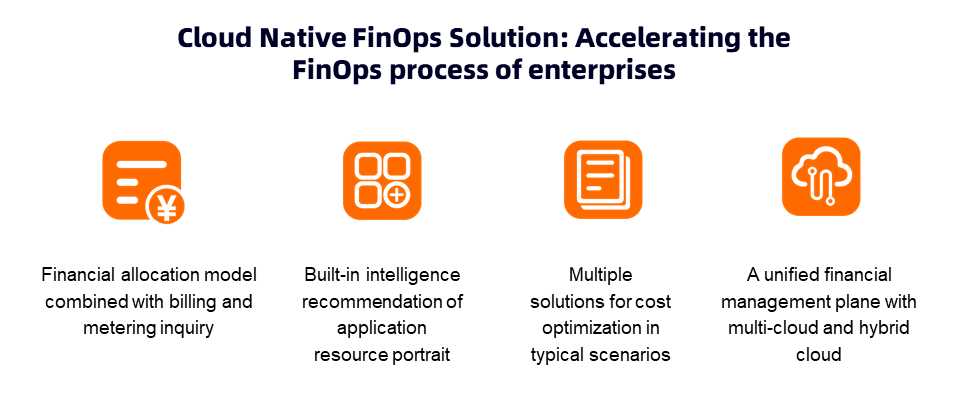

Alibaba Cloud combines its practice of integrating business and finance with the concept of FinOps to offer native product capabilities that safeguard enterprises' financial management on the cloud. ACK has launched a Cloud-Native FinOps solution that provides enterprises with IT cost management, IT cost visualization, and IT cost optimization in cloud-native scenarios.

The Alibaba Cloud Cloud-Native FinOps solution has five core features.

Core Feature 1: A Unique Model of Cost Allocation and Estimation for Cloud-Native Container Scenarios

Container Service proposes a cost estimation model that combines billing and metering to solve the mismatch between the lifecycles of business units and billing units in container scenarios. The model takes cost strategies into account, including payment types, savings plans, vouchers, user discounts, bidding fluctuations, allocation factors (such as CPU, memory, GPU card, and GPU memory), and resource forms (ECS, ECI, and HPC). It implements cost estimation at the pod level and cost allocation by share of the cluster. All resource costs of a cluster in a given period are aggregated through bill analysis. Combined with pod-level cost allocation, this yields a complete model of cost allocation and estimation for cloud-native container scenarios.
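To make the idea of allocating a cluster bill to pods more concrete, here is a minimal sketch in Python. It is not the ACK model: the `PodUsage` type, the `allocate_cluster_cost` function, and the equal CPU and memory weights are all hypothetical, and the sketch ignores discounts, bidding fluctuations, GPUs, and idle capacity. It only shows proportional allocation by weighted resource-hours.

```python
from dataclasses import dataclass


@dataclass
class PodUsage:
    """Hypothetical record of what one pod requested during a billing period."""
    name: str
    cpu_cores: float   # CPU requested by the pod, in cores
    memory_gib: float  # memory requested by the pod, in GiB
    hours: float       # how long the pod ran during the period


def allocate_cluster_cost(total_cost, pods, cpu_weight=0.5, memory_weight=0.5):
    """Split a cluster bill across pods in proportion to weighted resource-hours.

    The weights are assumed allocation factors, chosen here only for illustration.
    """
    total_cpu_hours = sum(p.cpu_cores * p.hours for p in pods)
    total_mem_hours = sum(p.memory_gib * p.hours for p in pods)
    costs = {}
    for p in pods:
        cpu_share = (p.cpu_cores * p.hours) / total_cpu_hours if total_cpu_hours else 0.0
        mem_share = (p.memory_gib * p.hours) / total_mem_hours if total_mem_hours else 0.0
        costs[p.name] = total_cost * (cpu_weight * cpu_share + memory_weight * mem_share)
    return costs


# Two pods share a cluster that cost 100 units in the period.
example_pods = [PodUsage("web", 2, 4, 10), PodUsage("batch", 2, 12, 10)]
example_costs = allocate_cluster_cost(100.0, example_pods)
```

Both pods request the same CPU, but `batch` requests three times the memory, so it receives the larger share of the bill. Changing the weights changes the split, which is why allocation factors are part of the cost strategy.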

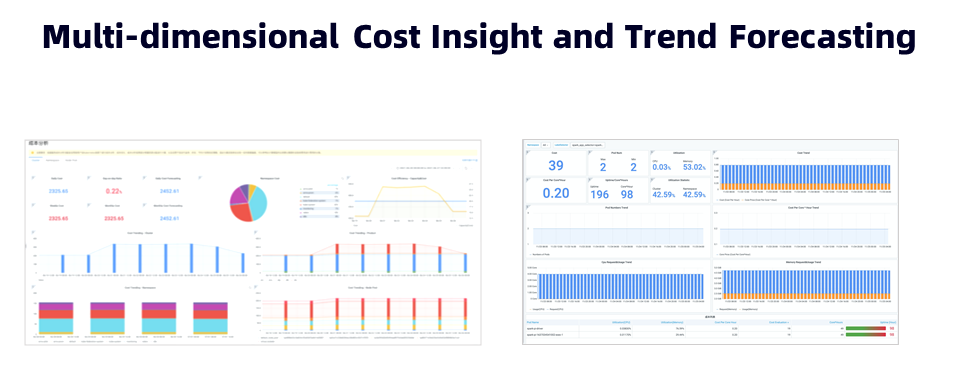

Core Feature 2: Multi-Dimensional Cost Insight, Trend Prediction, and Drill-Down Root Cause Analysis

The solution supports cost insight in four dimensions: cluster, namespace, node pool, and application (label wildcard matching).

In the cluster dimension, it focuses on the distribution of cloud resources, the trend of resource cost, the ratio of cluster level to waste, and the trend and prediction of cluster cost. This helps IT administrators accurately judge consumption trends and avoid exceeding the budget.

In the namespace dimension, it focuses on cost allocation. It supports short-cycle cost estimation, long-cycle cost allocation, and correlation analysis of scheduling level, resource usage, and cost trend. This helps department administrators estimate costs, drill down into cost waste, and improve department resource usage.

In the node pool dimension, it focuses on resource cost planning and governance. It helps IT asset administrators optimize resource group and payment policies by analyzing the correlation between instance type, unit verification time, scheduling level, and usage level.

In the application dimension (label wildcard matching), it focuses on cost optimization in domain scenarios such as big data, AI, offline jobs, online applications, and other upper-layer application scenarios. You can use cost insight in this dimension to estimate real-time costs and calculate costs at the task level.

With cost insight across these four dimensions, the cost optimization features and solutions for every scenario can be backed by data, so cost reduction and efficiency improvement rest on reliable numbers.
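As a rough illustration of viewing the same pod-level cost data along different dimensions, the sketch below aggregates costs by namespace and by a label wildcard. The record layout (`namespace`, `labels`, `cost`) and both function names are assumptions made for this example, not the ACK data model.

```python
from collections import defaultdict
from fnmatch import fnmatchcase


def cost_by_namespace(pod_costs):
    """Sum pod costs per namespace."""
    totals = defaultdict(float)
    for pod in pod_costs:
        totals[pod["namespace"]] += pod["cost"]
    return dict(totals)


def cost_by_label(pod_costs, key, pattern):
    """Sum the cost of pods whose label `key` matches a shell-style wildcard."""
    return sum(
        pod["cost"]
        for pod in pod_costs
        if key in pod["labels"] and fnmatchcase(pod["labels"][key], pattern)
    )


# Hypothetical pod-level cost records for one period.
example_records = [
    {"namespace": "ai", "labels": {"app": "train-job-1"}, "cost": 12.0},
    {"namespace": "ai", "labels": {"app": "train-job-2"}, "cost": 8.0},
    {"namespace": "web", "labels": {"app": "shop"}, "cost": 5.0},
]
namespace_totals = cost_by_namespace(example_records)
training_total = cost_by_label(example_records, "app", "train-*")
```

The wildcard `train-*` groups every training job into one application-level figure, which is the kind of task-level view the application dimension describes.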

Core Feature 3: Cost Optimization Capabilities and Solutions Covering All Scenarios

Alibaba Cloud Container Service provides resource profiling as well as cost optimization features and solutions for the different business scenarios of various enterprises. Most enterprise cost optimization strategies depend on the business scenario, and many scenarios still require customization and secondary development. Therefore, the cost insight capabilities and the upper-layer optimization solutions provided by Cloud-Native FinOps of ACK are completely decoupled. Enterprises can use the four-dimensional cost insight capability to evaluate cost optimization solutions in any scenario.

Core Feature 4: Full-Type Cloud Cost Management Capabilities for Multi-Cluster, Multi-Cloud, and Hybrid Cloud

Multi-cloud is a new trend for enterprises migrating to the cloud. Billing models differ considerably across cloud vendors: subscription billing is popular among cloud service providers in China, credit card pre-payment or post-payment is common among providers outside China, and some providers support savings plans and reserved instances. All of these make cost analysis harder for a multi-cloud management platform. The Cloud-Native FinOps solution of ACK provides a unified interface for billing and querying, with default implementations for cloud service vendors, and supports access to cost data from mainstream cloud service vendors and self-built data centers (IDCs). In addition, cost management is carried out through a consistent model of cost allocation and estimation for cloud-native container scenarios. With the enterprise-level cloud-native distributed cloud container platform ACK One, it not only provides unified cluster management, resource scheduling, data disaster recovery, and application delivery across multiple clusters and environments but also offers unified financial management.

Core Feature 5: Expert Services for Enterprise Cloud-Native FinOps

Cloud-Native FinOps is not only a product capability or solution but also an evolution of enterprise IT management, organizational process, and culture in the cloud-native era. The ACK Team and the Alibaba Cloud Apsara Infrastructure Management Team provide complete products and expert services covering the FinOps concept through Alibaba Cloud Asset Manager.

For example, we can use multi-dimensional cost analysis and insight features to understand the cost and resource usage of applications. These features also offer trend forecasts and provide a decision-making basis for enterprise financial management. We also provide an open data model that can be integrated into the governance process of enterprises by using Prometheus and OpenAPI. With cost insight in place, let's look at the means available for cost optimization.

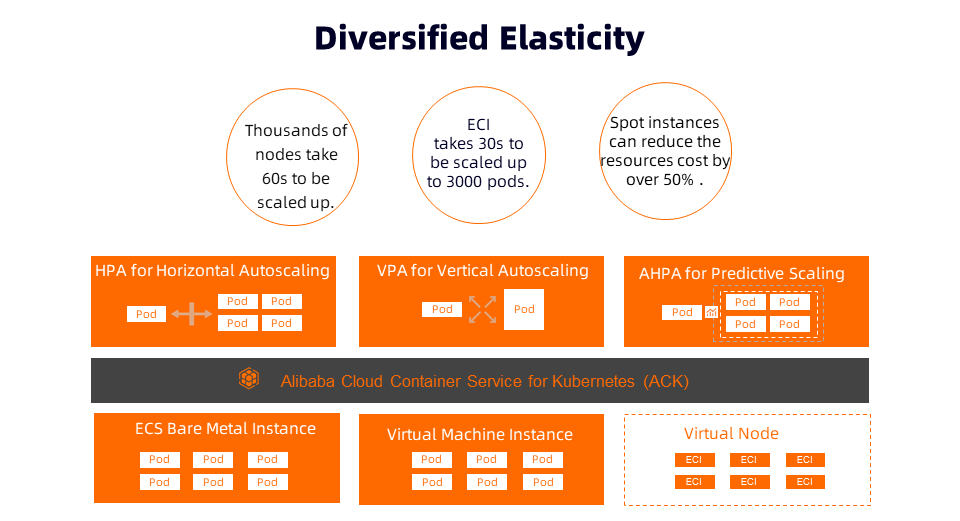

Elasticity is one of the core capabilities of the cloud, which can effectively reduce computing costs. ACK provides rich elasticity policies at the resource layer and the application layer.

At the resource layer, when the cluster resources are insufficient, ACK clusters can use the cluster-autoscaler to create new node instances in the node pool automatically. We can select Elastic Compute Service (ECS) virtual machines and ECS bare metal instances based on the application load to expand the capacity. Based on the powerful elastic computing capability of Alibaba Cloud, we can scale out thousands of nodes in minutes.

A simpler approach in ACK clusters is to use Elastic Container Instance (ECI) for elasticity. ECI provides a serverless container runtime environment based on lightweight virtual machines, featuring strong isolation and high elasticity. It requires no O&M and no capacity planning. ECI can scale out to 3,000 pods within 30 seconds to respond to breaking news events or support batch computing workloads, such as autonomous driving simulation.

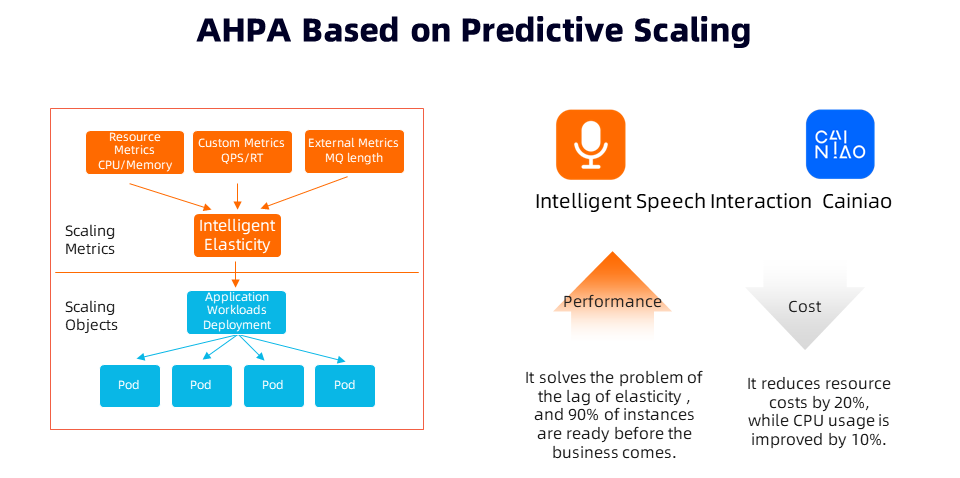

It is worth mentioning that we can use spot instances of ECS or ECI, which draw on the idle computing resources of Alibaba Cloud at discounts of up to 90% off the pay-as-you-go price. Spot instances are ideal for stateless and fault-tolerant applications, such as batch data processing or video rendering.

At the application layer, Kubernetes provides Horizontal Pod Autoscaling (HPA) for horizontal scaling of pods and Vertical Pod Autoscaling (VPA) for vertical scaling of pods. ACK has a built-in Advanced Horizontal Pod Autoscaling (AHPA) solution based on machine learning to help simplify the elastic experience and improve the elastic SLA.

The built-in HPA of Kubernetes has two shortcomings:

The first one is the lag of elasticity. The elasticity strategy is based on the passive response to monitoring metrics. In addition, it takes some time to start and warm up an application, and the business stability may be affected during the scale-out process.

The second one is the complexity of the configuration. The effect of HPA depends on how the elasticity threshold is configured. If the configuration is too aggressive, the stability of the application may be affected. However, if the configuration is too conservative, the cost optimization effect is significantly reduced. It usually takes several attempts to reach a reasonable level. Also, the elasticity strategy needs to be readjusted as the business changes.
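The sensitivity to the threshold is easy to see from the scaling rule documented for the Kubernetes HPA: the desired replica count is the current count multiplied by the ratio of the observed metric to the target, rounded up, with no change when the ratio is within a tolerance (0.1 by default). The function below is a small sketch of that rule only; it leaves out stabilization windows, min/max bounds, and pod readiness handling, and the function name is ours.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric, tolerance=0.1):
    """Replica count suggested by the basic HPA ratio rule.

    current_metric and target_metric use the same unit, for example
    average CPU utilization in percent.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target, do not scale
    return math.ceil(current_replicas * ratio)


# Same load (4 replicas at 90% average CPU), two different thresholds.
conservative = desired_replicas(4, 90, 50)  # → 8
aggressive = desired_replicas(4, 90, 80)    # → 5
```

With a 50% target, the same load asks for 8 replicas; with an 80% target, it asks for only 5. The lower threshold leaves more headroom but costs more, and the higher one saves resources but leaves less room for a spike while new pods start.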

In cooperation with DAMO Academy, Alibaba Cloud has launched AHPA, which predicts elastic periods and usage based on historical resource profiles and scales out in advance to ensure service quality. It has been verified in various scenarios, such as the Cainiao PaaS platform and the Alibaba Cloud intelligent speech service. With AHPA, intelligent semantic interaction products have 90% of their instances ready before the traffic arrives. It improves CPU usage by 10% and reduces resource costs by 20%.
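The idea of scaling ahead of a predictable peak can be sketched with a very simple seasonal forecast. This is not the AHPA algorithm, which the article describes only as machine-learning based. It is a toy stand-in that assumes load repeats with a fixed period, and every name in it (`seasonal_forecast`, `replicas_ahead`, `capacity_per_replica`) is hypothetical.

```python
import math


def seasonal_forecast(history, period, steps_ahead):
    """Predict the load `steps_ahead` slots after the end of `history`.

    Averages the values seen at the same position in each earlier period.
    `history` is ordered oldest to newest, one value per time slot.
    """
    target_index = len(history) - 1 + steps_ahead
    samples = [history[i] for i in range(target_index % period, len(history), period)]
    if not samples:
        raise ValueError("history does not cover the requested slot of the period")
    return sum(samples) / len(samples)


def replicas_ahead(history, period, lead_slots, capacity_per_replica, min_replicas=1):
    """Replicas to have ready `lead_slots` ahead, given the forecast load."""
    predicted = seasonal_forecast(history, period, lead_slots)
    return max(min_replicas, math.ceil(predicted / capacity_per_replica))


# Two past periods of three slots each; the middle slot is the daily peak.
load_history = [100, 400, 100, 120, 440, 110]
ready_for_peak = replicas_ahead(load_history, period=3, lead_slots=2,
                                capacity_per_replica=100)
```

Here the forecast for the next peak slot is the average of the two earlier peaks (420), so 5 replicas are requested two slots in advance instead of after the metric has already crossed the threshold. A reactive HPA would only start scaling once the peak is observed.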

Computing workloads on Kubernetes are becoming more diverse with the widespread application of cloud-native technologies. Through sensible scheduling, we can take full advantage of the fact that different workloads peak at different times, so workloads can use resources in a more stable, efficient, and low-cost manner. This is the concept of hybrid deployment often mentioned in the industry.
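A small arithmetic sketch shows why staggered peaks reduce the capacity that has to be provisioned. The numbers and the function name are made up for illustration; real hybrid deployment also has to handle interference and priority between workloads, which this ignores.

```python
def colocation_savings(online, offline):
    """Compare capacity needed for two workloads run separately vs. together.

    Each argument is a list of resource demand per time slot (for example,
    CPU cores). Returns (separate_capacity, combined_capacity, saved_fraction).
    """
    if len(online) != len(offline):
        raise ValueError("both series must cover the same time slots")
    # Provisioned separately, each workload needs capacity for its own peak.
    separate = max(online) + max(offline)
    # Sharing a cluster, capacity only has to cover the peak of the sum.
    combined = max(a + b for a, b in zip(online, offline))
    return separate, combined, 1 - combined / separate


# An online service that peaks in slot 3 and an offline job that peaks in slot 1.
online_demand = [20, 60, 80, 40]
offline_demand = [70, 30, 10, 50]
separate, combined, saved = colocation_savings(online_demand, offline_demand)
```

In this example, the separate clusters need 150 cores in total, while the shared cluster needs 90, because the two workloads never peak in the same slot. If both peaked together, the saving would drop to zero.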

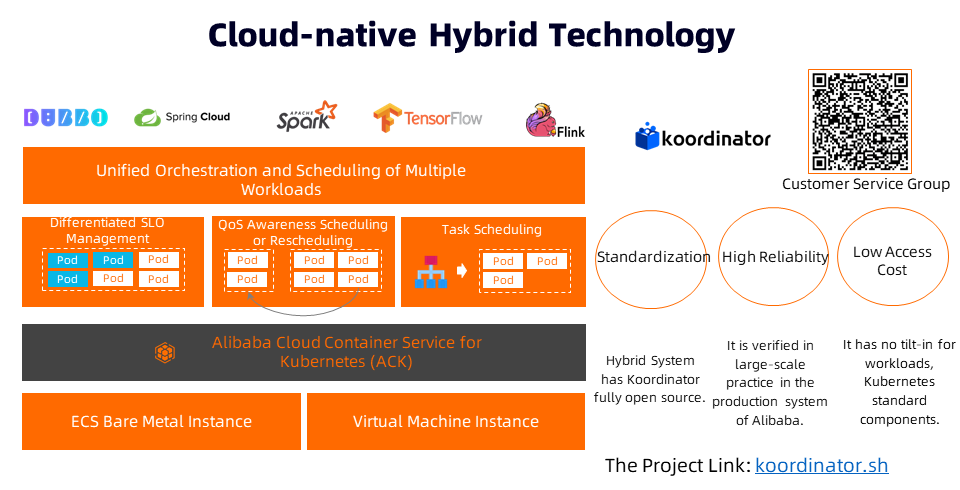

Alibaba began to explore container technology in 2011 and started the research and development of hybrid deployment technology in 2016. After several rounds of technical architecture upgrades, it evolved into the current cloud-native hybrid system architecture, which supports cloud-native hybrid deployment across the full business at a scale of over 10 million cores. The average daily CPU usage rate of the hybrid deployment exceeds 50%, significantly reducing Alibaba's resource costs.

Within Internet enterprises, hybrid deployment is a core cost-control capability built with heavy investment, and it embodies a great deal of thinking and optimization experience in business abstraction and resource management. It therefore usually takes several years of refinement and practice to become stable and deliver production value. However, does every enterprise have to clear such a high threshold or invest so heavily to benefit from hybrid deployment?

Based on the practical experience of ultra-large-scale production within Alibaba Group, Alibaba Cloud has recently open-sourced its cloud-native hybrid deployment project, Koordinator. It aims to create a solution with the lowest access cost and the best hybrid efficiency in cloud-native scenarios and to bring continuous dividends to enterprises after they become cloud-native. It provides enhancements to the capability for orchestration and scheduling on Kubernetes, including three core capabilities:

The Koordinator project is fully compatible with upstream standard Kubernetes without any intrusive modification. ACK provides productized support, so users can apply it to their specific scenarios based on the open-source project. The open-source Koordinator allows more enterprises to see (and use) cloud-native hybrid deployment and helps them accelerate the process of becoming cloud-native. Technically, Koordinator can help enterprises connect more loads to the Kubernetes platform, enrich the types of workloads for container scheduling, and make full use of load shifting and workload balancing, thus realizing efficiency and cost benefits and maintaining healthy, sustainable long-term development. The Koordinator project is still developing rapidly, and we welcome everyone to help build it.
