By Caijing and Mingshan Zhao

Deploying Large Language Models (LLMs), which often exceed tens of gigabytes, is high-stakes work. An uncontrolled release can cause service disruptions and waste large amounts of resources. The multi-cluster canary release capability of ACK One Fleet is designed to prevent this.

In the era of large models, AI inference has become a core business pillar for many enterprises. However, model iterations come with significant risks: Will the new model meet performance benchmarks? Will resource consumption spike? In complex geo-distributed and hybrid cloud environments, ensuring a safe and smooth version transition is a primary challenge for engineers.

By combining the ACK One Fleet management capability with Kruise Rollout, Alibaba Cloud provides a cross-cluster canary release solution that makes every update controllable, observable, and reversible.

For AI inference services, and especially for LLMs, canary releases have shifted from an optional best practice to a technical requirement. The reasons lie both in the models themselves and in the multi-cluster environments they run in:

• AI models suffer from slow loading times and high cold-start costs.

• A faulty deployment may cause a sharp drop in Queries per Second (QPS) and a sharp increase in Response Time (RT).

• Rollbacks are complex because each model version depends on specific, compatible versions of the inference engine.

• Due to resource fragmentation, supply shortages, and data compliance, inference services are typically deployed across geo-distributed or hybrid cloud clusters.

• Manually executing kubectl apply across dozens of clusters is prone to human error.

• Disparate scripts and approval workflows for each cluster make unified observability impossible.

• During an incident, it is difficult to identify which cluster or version is at fault.

Combining ACK One Fleet with Kruise Rollout addresses these challenges by providing a unified management layer.

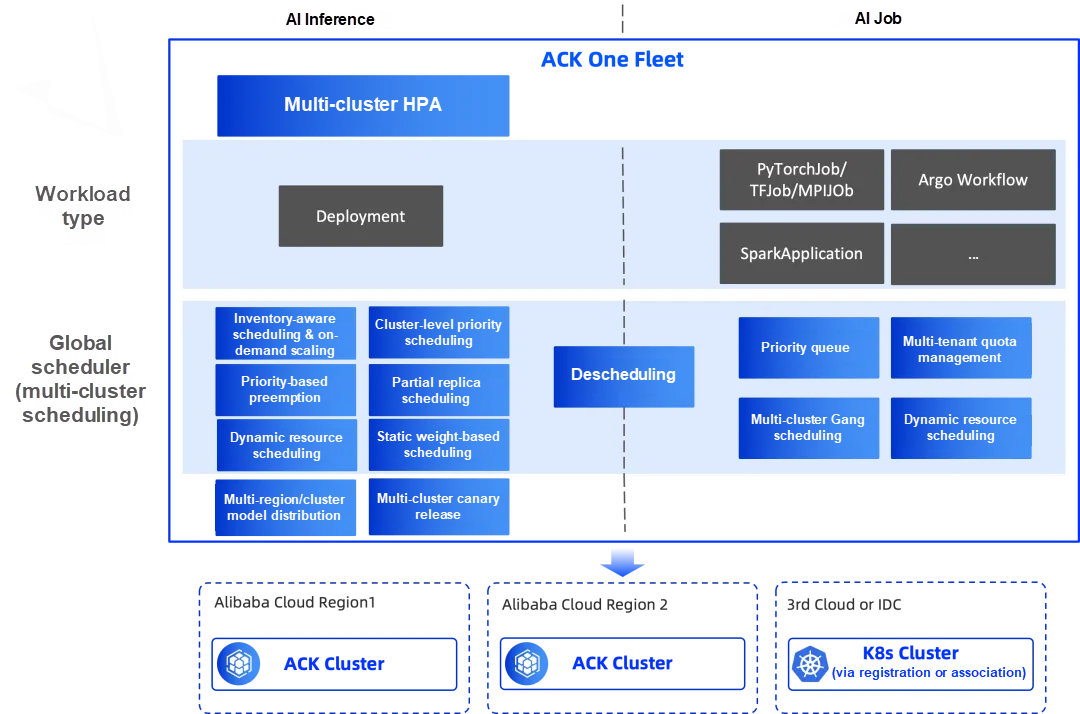

ACK One Fleet[1] is Alibaba Cloud’s enterprise-grade multi-cluster management solution. It provides end-to-end management and intelligent scheduling strategies for AI workloads, aimed at accelerating GPU provisioning and maximizing resource utilization.

1. Geo-distributed model distribution: ModelDistribution

2. Intelligent multi-cluster scheduling and distribution:

3. Multi-cluster HPA (Horizontal Pod Autoscaler): Automatically scales inference services based on global metrics to ensure stability during traffic surges.

4. Multi-cluster canary release: Provides a secure, stable, and convenient path for version updates.

5. More capabilities continue to evolve...
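To make item 3 more concrete, the sketch below applies the standard Kubernetes HPA scaling rule, desired = ceil(current * currentMetric / targetMetric), to a metric averaged over every pod in every cluster instead of per cluster. It is a minimal illustration under stated assumptions and not the ACK One Fleet implementation. The function name `fleet_desired_replicas`, the per-pod QPS inputs, and the cluster names are hypothetical. The sketch also leaves out HPA details such as the tolerance band, stabilization windows, and how the resulting replicas are divided among clusters.

```python
import math


def fleet_desired_replicas(clusters: dict[str, tuple[int, float]], target: float) -> int:
    """Standard HPA ratio applied to a fleet-wide metric (illustrative sketch).

    clusters maps a hypothetical cluster name to (current_replicas, average
    metric per pod), for example QPS per pod; target is the desired value per pod.
    """
    total = sum(replicas for replicas, _ in clusters.values())
    load = sum(replicas * per_pod for replicas, per_pod in clusters.values())
    global_avg = load / total  # average per pod across every cluster in the fleet
    # Kubernetes HPA rule: desired = ceil(current * currentMetric / targetMetric)
    return math.ceil(total * global_avg / target)


print(fleet_desired_replicas({"beijing": (6, 120.0), "shanghai": (4, 80.0)}, 50.0))  # 21
```

Averaging across the fleet means a hot cluster and a quiet cluster are evaluated together. The scaling decision reflects total demand on the service rather than the load in any single region.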

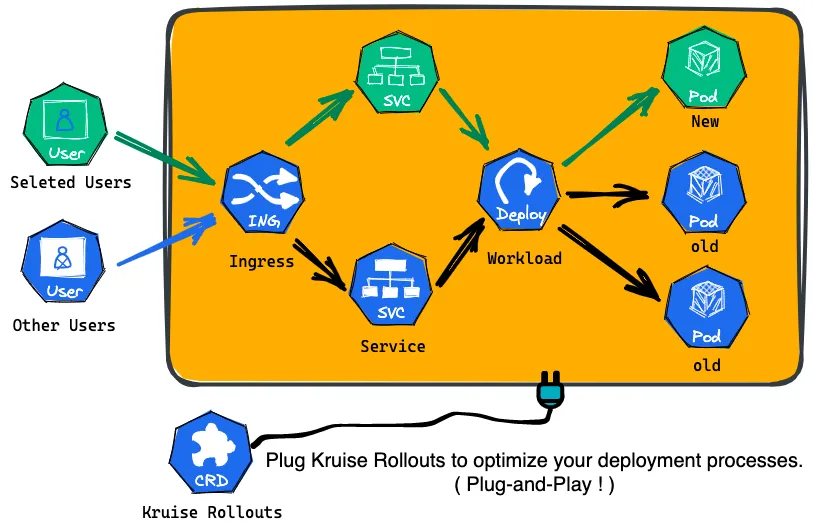

Kruise Rollout[2] is an open-source progressive delivery framework from the OpenKruise community. It is a bypass component: it works alongside existing workloads such as Deployments without requiring you to change the workload type, and adds advanced progressive delivery on top so that releases are smoother and more controlled. It supports canary, blue-green, multi-batch, and A/B testing release modes, and is compatible with the Gateway API and various Ingress implementations, so it integrates with existing infrastructure.

Key features:

• Flexible release strategies

• Comprehensive traffic routing policies

• Broad protocol support

Multi-cluster releases can be organized by cluster or by workload. This solution focuses on the latter.

• By cluster: Suited for services replicated across multiple clusters.

• By workload: Suited for services whose replicas are split across clusters (split-replica scheduling), such as AI inference workloads deployed across multiple clusters.
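The difference between the two modes appears in how many pods run the new version at each stage. The following minimal sketch uses a hypothetical service with 6 replicas in Beijing and 4 in Shanghai. The function names are illustrative. Rounding percentage batches up to whole pods is an assumption about how a controller would convert percentages, not documented behavior.

```python
def scaled(total: int, percent: int) -> int:
    """Pods in a percentage batch, rounded up to a whole pod (assumed rule)."""
    return (total * percent + 99) // 100


def plan_by_cluster(replicas: dict[str, int]) -> list[dict[str, int]]:
    """By cluster: each stage fully upgrades one more cluster."""
    updated = {name: 0 for name in replicas}
    stages = []
    for name, total in replicas.items():
        updated[name] = total
        stages.append(dict(updated))  # snapshot of new-version pods per cluster
    return stages


def plan_by_workload(replicas: dict[str, int], percents: list[int]) -> list[dict[str, int]]:
    """By workload: every cluster moves through the same percentage batches together."""
    return [{name: scaled(total, p) for name, total in replicas.items()} for p in percents]


replicas = {"beijing": 6, "shanghai": 4}  # hypothetical split of one inference service
print(plan_by_cluster(replicas))
# [{'beijing': 6, 'shanghai': 0}, {'beijing': 6, 'shanghai': 4}]
print(plan_by_workload(replicas, [10, 50, 100]))
# [{'beijing': 1, 'shanghai': 1}, {'beijing': 3, 'shanghai': 2}, {'beijing': 6, 'shanghai': 4}]
```

With these numbers, the first by-cluster stage moves 6 of the 10 pods to the new version at once. The first by-workload batch moves only 2. For split-replica services, batching inside every cluster therefore keeps the initial blast radius small.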

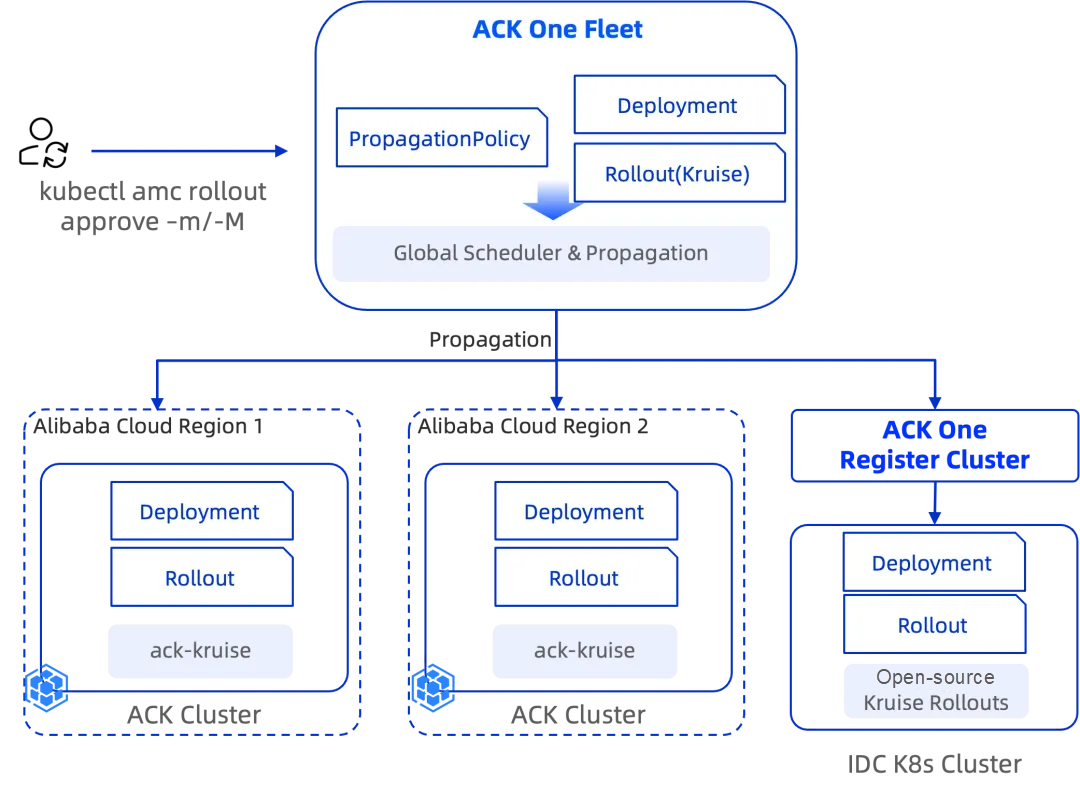

The ACK One Fleet multi-cluster canary release solution integrates with Kruise Rollout and follows the principle of "Centralized Fleet Orchestration, Autonomous Sub-cluster Control":

1. Unified orchestration via ACK One Fleet: You define the Rollout policy once in the Fleet, and ACK One Fleet distributes it to the sub-clusters through a PropagationPolicy.

2. Autonomous control via local Kruise Rollout: The Kruise Rollout controller deployed in each sub-cluster takes over the local release process. Based on the propagated Rollout policy, it automatically manages the update pace of pods within that cluster—for example, updating 10% of replicas first, then pausing for observation.

3. Unified approval with kubectl amc[3]:

When the release processes in multiple sub-clusters pause for manual intervention, you no longer need to log on to each cluster individually. Run the kubectl amc rollout approve command at the fleet level to approve all sub-clusters at once, so that they proceed to the next batch together. You can also approve a single cluster. This avoids the complexity and potential errors of repeatedly switching between cluster kubeconfig contexts.
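The division of labor between the fleet and the sub-clusters can be modeled as a set of per-cluster state machines. Each one pauses after a batch and advances only when approved. This is a hypothetical Python model for illustration, not the Kruise Rollout controller or the kubectl amc code. `SubClusterRollout` and `approve` are invented names, and the `cluster` argument loosely mirrors the `-m` (single cluster) versus `-M` (all clusters) options of `kubectl amc rollout approve`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SubClusterRollout:
    """Hypothetical stand-in for the Rollout status in one sub-cluster."""
    cluster: str
    steps: list[str]  # batches propagated from the Fleet, e.g. ["10%", "50%", "100%"]
    index: int = 0    # batch the local controller has reached and is paused after

    @property
    def current(self) -> str:
        return self.steps[self.index]

    @property
    def done(self) -> bool:
        return self.index == len(self.steps) - 1

    def approve(self) -> None:
        """Manual approval: the local controller moves on to the next batch."""
        if not self.done:
            self.index += 1


def approve(rollouts: list[SubClusterRollout], cluster: Optional[str] = None) -> None:
    """Fleet-level approval, loosely mirroring `kubectl amc rollout approve`.

    cluster=None approves every sub-cluster (like -M); a cluster name
    approves only that sub-cluster (like -m <clusterid>).
    """
    for rollout in rollouts:
        if cluster is None or rollout.cluster == cluster:
            rollout.approve()


fleet = [SubClusterRollout("beijing", ["10%", "50%", "100%"]),
         SubClusterRollout("shanghai", ["10%", "50%", "100%"])]
approve(fleet, cluster="beijing")  # only Beijing advances
print([(r.cluster, r.current) for r in fleet])
# [('beijing', '50%'), ('shanghai', '10%')]
approve(fleet)                     # every sub-cluster advances one batch
print([(r.cluster, r.current) for r in fleet])
# [('beijing', '100%'), ('shanghai', '50%')]
```

Each sub-cluster keeps its own position. Approving a single cluster lets Beijing run ahead of Shanghai, and a later fleet-wide approval advances both from wherever they are.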

Use ack-kruise version 1.8.3 or later for ACK clusters and Kruise Rollout version 0.6.2 or later for on-premises clusters.

Why Choose This Solution?

• Risk isolation and security control: Limits the blast radius of new versions to specific batches and traffic segments to avoid global failures. Manual approval is supported for extra security.

• Architectural clarity: ACK One Fleet handles service scheduling and dispatching, while Kruise Rollout manages fine-grained local rollout processes. This separation of concerns keeps the architecture clear and logical.

• Simplified O&M: Provides a single pane of glass for global release status through the kubectl amc plugin. This eliminates the hassle and potential errors of repeatedly switching between cluster kubeconfig contexts.

• Multi-cluster policy consistency: Ensures that the same Rollout definition governs every cluster, preventing configuration drift.
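Manual approval is only as safe as the check behind it. A faulty model tends to show up as a drop in QPS and a rise in RT, so an operator might compare the new batch against the stable version before approving the next one. The sketch below is a hypothetical threshold check. The `Metrics` type, the `ready_to_approve` function, and the 10% QPS / 20% RT tolerances are illustrative assumptions, not values from ACK One Fleet or Kruise Rollout.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    qps_per_pod: float  # queries per second served by one pod
    rt_ms: float        # average response time in milliseconds


def ready_to_approve(stable: Metrics, canary: Metrics,
                     max_qps_drop: float = 0.10,
                     max_rt_increase: float = 0.20) -> bool:
    """Return True if the new batch is within tolerance of the stable version.

    Per-pod QPS is compared so that the small size of an early batch does not
    look like a throughput drop. Both tolerances are hypothetical defaults.
    """
    qps_ok = canary.qps_per_pod >= stable.qps_per_pod * (1 - max_qps_drop)
    rt_ok = canary.rt_ms <= stable.rt_ms * (1 + max_rt_increase)
    return qps_ok and rt_ok


stable = Metrics(qps_per_pod=50.0, rt_ms=800.0)
print(ready_to_approve(stable, Metrics(qps_per_pod=48.0, rt_ms=850.0)))   # True
print(ready_to_approve(stable, Metrics(qps_per_pod=50.0, rt_ms=1200.0)))  # False
```

If the check fails, the release stays paused and the operator can investigate before the problem reaches more pods.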

Suppose you have a Qwen-based text generation service deployed across two clusters, one in Beijing and one in Shanghai, and you want to upgrade the model to v2 with a batch release strategy. For simplicity, this example omits the YAML of the inference service (the qwen-inference Deployment) and of the PropagationPolicy that distributes that Deployment.

Define a Rollout policy in the Fleet with three batches (10% -> 50% -> 100%) and a manual approval pause between batches.

Then apply a PropagationPolicy that distributes the Rollout to the sub-clusters through ACK One Fleet.

```yaml
apiVersion: rollouts.kruise.io/v1beta1
kind: Rollout
metadata:
  name: qwen-inference-rollout
  namespace: demo  # same namespace as the PropagationPolicy below
spec:
  workloadRef:
    apiVersion: apps/v1
    kind: Deployment
    name: qwen-inference
  strategy:
    canary:
      # false: update the existing Deployment's pods batch by batch
      # instead of creating a separate canary Deployment
      enableExtraWorkloadForCanary: false
      # No pause duration is set, so the release waits for manual approval between batches
      steps:
      - replicas: 10%
      - replicas: 50%
      - replicas: 100%
---
apiVersion: policy.one.alibabacloud.com/v1alpha1
kind: PropagationPolicy
metadata:
  name: qwen-inference-rollout-pp
  namespace: demo
spec:
  preserveResourcesOnDeletion: false
  resourceSelectors:
  - apiVersion: rollouts.kruise.io/v1beta1
    kind: Rollout
    name: qwen-inference-rollout
  placement:
    replicaScheduling:
      replicaSchedulingType: Duplicated
```

After applying both resources, view and approve the release from the Fleet with kubectl amc:

```shell
# The commands assume demo is the current namespace in your kubeconfig context.

# View the Rollout status across all sub-clusters
kubectl amc get rollouts -M

# Approve the release for a specific sub-cluster (${clusterid} is the sub-cluster ID)
kubectl amc rollout approve rollouts/qwen-inference-rollout -m ${clusterid}

# Approve the release for all sub-clusters
kubectl amc rollout approve rollouts/qwen-inference-rollout -M
```

For enterprise AI inference services, stability and continuity are paramount. By combining the fine-grained control of Kruise Rollout with global orchestration, ACK One Fleet offers a robust, enterprise-grade release solution for multi-region and hybrid cloud deployments. This capability is a key building block of the high-performance, platform-level environment that ACK One Fleet provides for multi-cluster AI inference.

Questions or feedback? Join our ACK One customer support group on DingTalk (Group ID: 35688562).

You can also find us in the OpenKruise community (Group ID: 23330762).

[1] ACK One Fleet: https://www.alibabacloud.com/help/en/ack/distributed-cloud-container-platform-for-kubernetes/user-guide/fleet-management-overview

[2] Kruise Rollout: https://openkruise.io/rollouts/introduction

[3] kubectl amc: https://www.alibabacloud.com/help/en/ack/distributed-cloud-container-platform-for-kubernetes/user-guide/use-amc
