By Yinhang

In the world of Large Language Model (LLM) inference, there is a metric that is often underestimated yet absolutely critical: KV-Cache hit rate. This technical indicator directly determines whether your AI application delivers a silky-smooth experience or a sluggish, bottlenecked performance—and whether your operational costs remain under control or spiral out of budget.

Alibaba Cloud ACK Gateway with Inference Extension (ACK GIE) has recently introduced precise-mode prefix cache-aware routing. This capability raises vLLM’s KV-Cache hit rate in multi-replica deployments and delivers tangible business value by cutting latency and wasted GPU work.

Before diving into our solution, let's understand why KV-Cache is so important.

LLMs are built on the Transformer architecture, centered around the self-attention mechanism. During inference, the model must calculate attention weights between every new token and all preceding tokens. As context length grows, this computational complexity increases quadratically. Consequently, the prefill stage (processing the entire input prompt) becomes the most time-consuming part of the inference cycle.

KV-Cache is the definitive solution to this bottleneck. It stores previously computed Key and Value vectors in GPU memory. When generating subsequent tokens, the model retrieves these values directly from the cache rather than recomputing them. This drastically reduces redundant computation and boosts inference efficiency.
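To make the saving concrete, here is a minimal sketch that counts Key/Value projections rather than doing real tensor math. The `ToyDecoder` class and its stand-in "vectors" are hypothetical and only illustrate the bookkeeping; they are not how vLLM implements its cache.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDecoder:
    """Counts K/V projections instead of doing real tensor math."""

    use_cache: bool
    kv_cache: list = field(default_factory=list)  # one (key, value) pair per token
    projections: int = 0  # number of K/V projections computed so far

    def _project(self, token: int) -> tuple:
        self.projections += 1
        return (token * 2, token * 3)  # stand-ins for the real Key and Value vectors

    def step(self, tokens: list) -> list:
        """Return the K/V pairs needed to attend over `tokens`."""
        if not self.use_cache:
            # No cache: every token is projected again on every step.
            return [self._project(t) for t in tokens]
        # With cache: only tokens that are not cached yet are projected.
        for t in tokens[len(self.kv_cache):]:
            self.kv_cache.append(self._project(t))
        return self.kv_cache


def count_projections(prompt_len: int, new_tokens: int, use_cache: bool) -> int:
    decoder = ToyDecoder(use_cache=use_cache)
    tokens = list(range(prompt_len))
    decoder.step(tokens)  # prefill over the whole prompt
    for i in range(new_tokens):
        tokens.append(prompt_len + i)  # pretend the model generated this token
        decoder.step(tokens)  # one decode step
    return decoder.projections


if __name__ == "__main__":
    print("without cache:", count_projections(100, 10, use_cache=False))
    print("with cache:   ", count_projections(100, 10, use_cache=True))
```

With the cache, each token is projected exactly once; without it, the work grows with every generated token.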

As an industry-leading LLM inference framework, vLLM optimizes KV-Cache utilization through its Automatic Prefix Caching (APC) feature. APC identifies shared prefixes across different requests and reuses the KV-Cache blocks that already exist for them.

Consider a customer service bot where every interaction begins with the same system prompt: "You are a professional customer service assistant; please answer user questions in a friendly tone..." Without APC, every request recomputes the KV-Cache for this prompt. With APC, the second request reuses the cached blocks, skips prefill for the shared prefix, and only has to process its own user-specific tokens before moving on to the decode (generation) stage.
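The idea of block-level prefix reuse can be sketched in a few lines. This is an illustration only: the chained SHA-256 over `repr()` of each block, the tiny `BLOCK_SIZE`, and the `PrefixCache` class are assumptions made for the example and do not reproduce vLLM's actual hashing scheme.

```python
import hashlib

BLOCK_SIZE = 4  # tiny value for the demo; the deployment in this article uses 64


def block_hashes(token_ids: list) -> list:
    """Hash every full block, chained with its parent hash.

    Because each hash includes the parent, one hash identifies the whole
    prefix up to and including that block.
    """
    hashes = []
    parent = b""
    full = len(token_ids) - len(token_ids) % BLOCK_SIZE  # ignore the partial tail
    for start in range(0, full, BLOCK_SIZE):
        block = token_ids[start:start + BLOCK_SIZE]
        digest = hashlib.sha256(parent + repr(block).encode()).digest()
        hashes.append(digest)
        parent = digest
    return hashes


class PrefixCache:
    """Toy per-instance cache that remembers which block hashes it holds."""

    def __init__(self) -> None:
        self.blocks = set()

    def lookup_and_insert(self, token_ids: list) -> tuple:
        """Return (tokens served from cache, tokens that still need prefill)."""
        hashes = block_hashes(token_ids)
        hit_blocks = 0
        for h in hashes:
            if h not in self.blocks:
                break  # the reusable prefix ends at the first miss
            hit_blocks += 1
        self.blocks.update(hashes)
        cached = hit_blocks * BLOCK_SIZE
        return cached, len(token_ids) - cached


if __name__ == "__main__":
    cache = PrefixCache()
    system_prompt = list(range(8))  # shared by every user
    print(cache.lookup_and_insert(system_prompt + [100, 101, 102]))
    print(cache.lookup_and_insert(system_prompt + [200, 201, 202, 203, 204]))
```

The second request finds the two system-prompt blocks already cached, so only its own tokens need prefill.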

In single-instance environments, the results are staggering. Our tests show that for a long prompt of 10,000 tokens, the Time to First Token (TTFT) for the second request drops from 4.3 seconds to 0.6 seconds—a performance leap of more than 7 times.

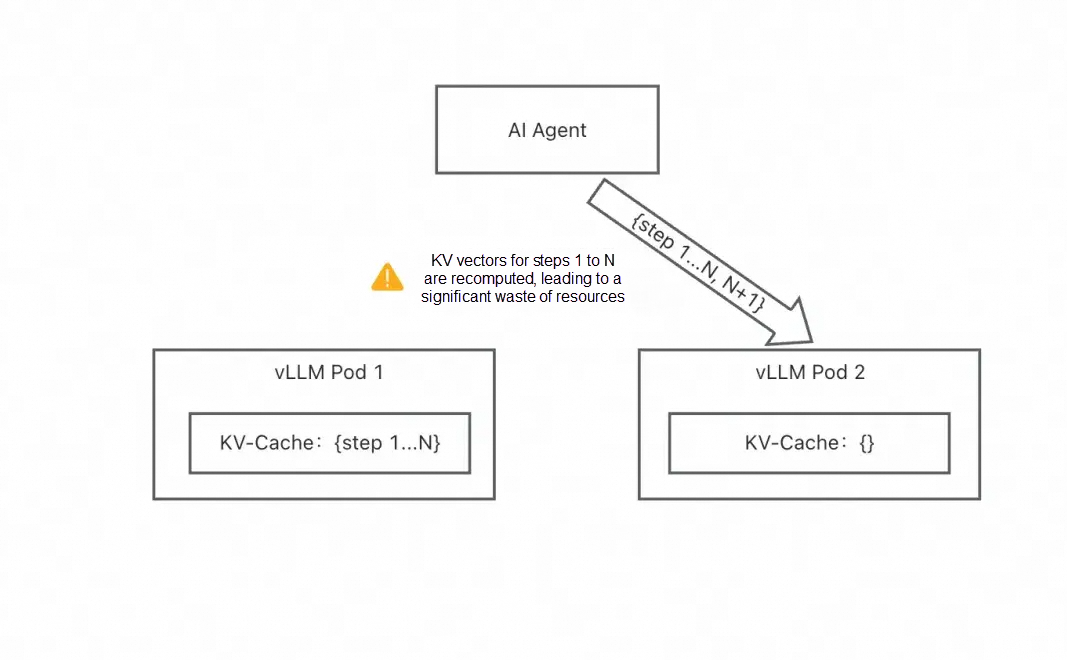

While single-machine optimization is powerful, production environments require multiple replicas to handle high concurrency. This introduces a fatal flaw: KV-Cache fragmentation.

In a distributed environment, each vLLM instance maintains its own independent KV-Cache. Traditional load balancers (e.g., Round Robin, Least Connections) are blind to the state of these caches; they distribute requests mechanically. This leads to:

• Requests with shared prefixes being scattered across different instances.

• Every instance being forced to recompute the same prefill.

• The total loss of KV-Cache reuse advantages.

• A significant drop in overall system performance.

It’s like having an elite commando unit where every soldier acts in isolation without a central command—synergy is impossible.
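A small simulation shows how cache-blind balancing scatters shared prefixes. It is a sketch under simplifying assumptions: caches never evict, a prefix is either fully cached or not, and the CRC32-based `prefix_affinity` picker is a hypothetical stand-in for cache-aware routing, not ACK GIE's algorithm.

```python
import zlib

NUM_PODS = 4


def round_robin(i: int, prefix: str) -> int:
    """Cache-blind: the pod depends only on the request's position."""
    return i % NUM_PODS


def prefix_affinity(i: int, prefix: str) -> int:
    """Cache-friendly stand-in: the same prefix always lands on the same pod."""
    return zlib.crc32(prefix.encode()) % NUM_PODS


def hit_rate(requests: list, pick_pod) -> float:
    """Fraction of requests whose prefix was already cached on the chosen pod."""
    caches = [set() for _ in range(NUM_PODS)]
    hits = 0
    for i, prefix in enumerate(requests):
        pod = pick_pod(i, prefix)
        if prefix in caches[pod]:
            hits += 1
        caches[pod].add(prefix)
    return hits / len(requests)


# Three customers, each with its own shared prefix, sending interleaved requests.
REQUESTS = [f"customer-{i % 3}" for i in range(24)]

if __name__ == "__main__":
    print("round robin:    ", hit_rate(REQUESTS, round_robin))
    print("prefix affinity:", hit_rate(REQUESTS, prefix_affinity))
```

Under round robin every pod has to build each customer's prefix once; with affinity each prefix is built only once in the whole cluster.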

Consider a typical agent workflow:

At this point, the disaster occurred:

• Cache miss: Pod B has nothing in its cache regarding the 6,000-token context.

• Redundant computation: Pod B is forced to re-run the full 6,100-token prefill, wasting expensive GPU cycles.

• Latency spike: User TTFT jumps from milliseconds to seconds or even tens of seconds.

• Resource waste: GPU power that could have processed new requests is consumed by redundant work, lowering total system throughput.

In high-concurrency environments, this "cache thrashing" occurs continuously, forcing the system to spend most of its time on redundant Prefills rather than efficient Decode generation.
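The cost of a single miss is easy to work out from the numbers above. The sketch below assumes that 100 of the 6,100 tokens are new, and that only full blocks of the context can be reused (block size 64, as configured later in this article); `prefill_tokens` is a hypothetical helper.

```python
def prefill_tokens(context_tokens: int, new_tokens: int,
                   cache_hit: bool, block_size: int = 64) -> int:
    """Tokens that must go through prefill for one request."""
    total = context_tokens + new_tokens
    if not cache_hit:
        return total  # nothing reusable: the whole prompt is recomputed
    # Assumption: only full blocks of the shared context are reusable.
    cached = (context_tokens // block_size) * block_size
    return total - cached


if __name__ == "__main__":
    miss = prefill_tokens(6000, 100, cache_hit=False)
    hit = prefill_tokens(6000, 100, cache_hit=True)
    print(f"miss: {miss} tokens, hit: {hit} tokens, ratio: {miss / hit:.1f}x")
```

Under these assumptions a miss pushes 6,100 tokens through prefill, while a hit needs only 148.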

ACK GIE’s Winning Strategy

The precise-mode prefix cache-aware routing of ACK GIE was designed to solve this problem. By establishing a global view of the KV-Cache across the cluster, it enables intelligent, cache-aware request scheduling.

The core of precise mode is real-time perception of the KV-Cache state on every vLLM instance.

1. KV event reporting: Each vLLM instance (v0.10.0+) uses the ZeroMQ protocol to report events—such as the creation, update, or deletion of KV-Cache blocks—to ACK GIE in real-time.

2. Global index construction: ACK GIE receives these events and builds a global KV-Cache index. This index tracks the hash values of every KV block, its instance location, and its storage medium (GPU/CPU).

3. Intelligent routing decisions: When a new request arrives, the ACK GIE:

This mechanism ensures that requests with the same prefix are routed to the same instance, maximizing the KV-Cache hit rate.
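The event-driven index and the routing decision can be sketched as follows. Everything here is illustrative: the `GlobalKVIndex` class, its method names, and the scoring rule (longest cached prefix first, shortest queue as tie-breaker) are assumptions for the example, not ACK GIE's actual data structures or policy.

```python
from collections import defaultdict


class GlobalKVIndex:
    """Cluster-wide view: which pods currently hold which KV block hashes."""

    def __init__(self) -> None:
        self._pods_by_block = defaultdict(set)

    def on_block_stored(self, pod: str, block_hash: str) -> None:
        self._pods_by_block[block_hash].add(pod)

    def on_block_removed(self, pod: str, block_hash: str) -> None:
        self._pods_by_block[block_hash].discard(pod)

    def matched_blocks(self, pod: str, request_hashes: list) -> int:
        """Count the consecutive leading blocks of the request held by `pod`."""
        count = 0
        for h in request_hashes:
            if pod not in self._pods_by_block.get(h, ()):
                break  # a prefix match stops at the first missing block
            count += 1
        return count


def pick_pod(index: GlobalKVIndex, pods: list,
             request_hashes: list, queue_depth: dict) -> str:
    """Prefer the longest cached prefix; break ties by the shortest queue."""
    return max(
        pods,
        key=lambda p: (index.matched_blocks(p, request_hashes), -queue_depth[p]),
    )


if __name__ == "__main__":
    index = GlobalKVIndex()
    index.on_block_stored("pod-a", "h1")
    index.on_block_stored("pod-a", "h2")
    index.on_block_stored("pod-b", "h1")
    queues = {"pod-a": 5, "pod-b": 1}
    print(pick_pod(index, ["pod-a", "pod-b"], ["h1", "h2", "h3"], queues))
```

In this sketch, `pod-a` wins despite its longer queue because it holds two leading blocks of the request; a real scheduler would weigh cache affinity against load more carefully.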

ACK GIE also provides prefix cache-aware routing in estimation mode, but the two differ fundamentally:

• Estimation mode: Estimates KV-Cache distribution based on historical routing records. It requires no inference engine support but has limited accuracy.

• Precise mode: Directly receives KV-Cache events for 100% accuracy. It delivers much higher hit rates but requires specific inference engine versions.
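One way to picture the difference is to look at what each mode knows after an eviction. The two classes below are hypothetical simplifications (one prefix maps to one pod, and the event names are invented for the example); they only illustrate why a view built from routing history can go stale while an event-driven view does not.

```python
class EstimatedView:
    """Remembers where prefixes were routed; never hears about evictions."""

    def __init__(self) -> None:
        self.routed = {}

    def record_route(self, prefix: str, pod: str) -> None:
        self.routed[prefix] = pod

    def believed_pod(self, prefix: str):
        return self.routed.get(prefix)


class PreciseView:
    """Updated by store/remove events reported by the inference engine."""

    def __init__(self) -> None:
        self.stored = {}

    def on_stored(self, prefix: str, pod: str) -> None:
        self.stored[prefix] = pod

    def on_removed(self, prefix: str, pod: str) -> None:
        if self.stored.get(prefix) == pod:
            del self.stored[prefix]

    def believed_pod(self, prefix: str):
        return self.stored.get(prefix)


if __name__ == "__main__":
    estimated, precise = EstimatedView(), PreciseView()

    # A request for tenant-7 is routed to pod-a, which caches its prefix.
    estimated.record_route("tenant-7", "pod-a")
    precise.on_stored("tenant-7", "pod-a")

    # Later, pod-a evicts the prefix under memory pressure.
    precise.on_removed("tenant-7", "pod-a")

    print("estimated view:", estimated.believed_pod("tenant-7"))  # stale
    print("precise view:  ", precise.believed_pod("tenant-7"))    # up to date
```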

In our benchmark (results in the table below), precise mode delivered a roughly 57x improvement in P90 TTFT over estimation mode under high concurrency (0.542 s versus 31.083 s).

• An ACK managed cluster (GPU node pools are recommended)

• Gateway with Inference Extension v1.4.0-apsara.3 or later

• vLLM v0.10.0 or later

Using Qwen3-32B as an example, store the model files in OSS:

# Download the model
git lfs install
GIT_LFS_SKIP_SMUDGE=1 git clone https://www.modelscope.cn/Qwen/Qwen3-32B.git
cd Qwen3-32B/
git lfs pull
# Return to the parent directory so that ./Qwen3-32B resolves for the upload
cd ..

# Upload to OSS
ossutil mkdir oss://<your-bucket-name>/Qwen3-32B
ossutil cp -r ./Qwen3-32B oss://<your-bucket-name>/Qwen3-32B

Create a PV and a PVC to mount the model in OSS:

# llm-model.yaml
apiVersion: v1
kind: Secret
metadata:
  name: oss-secret
stringData:
  akId: <your-oss-ak>
  akSecret: <your-oss-sk>
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: llm-model
  labels:
    alicloud-pvname: llm-model
spec:
  capacity:
    storage: 30Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: llm-model
    nodePublishSecretRef:
      name: oss-secret
      namespace: default
    volumeAttributes:
      bucket: <your-bucket-name>
      url: <your-bucket-endpoint>
      otherOpts: "-o umask=022 -o max_stat_cache_size=0 -o allow_other"
      path: /Qwen3-32B/
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: llm-model
spec:
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 30Gi
  selector:
    matchLabels:
      alicloud-pvname: llm-model

The key is to configure the KV event reporting parameters:

# vllm.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: qwen3
  name: qwen3
spec:
  replicas: 3
  selector:
    matchLabels:
      app: qwen3
  template:
    metadata:
      labels:
        app: qwen3
    spec:
      containers:
        - name: vllm
          image: 'registry-cn-hangzhou.ack.aliyuncs.com/dev/vllm:0.10.0'
          command:
            - sh
            - '-c'
            - >-
              vllm serve /models/Qwen3-32B --served-model-name Qwen3-32B
              --trust-remote-code --port=8000 --max-model-len 8192
              --gpu-memory-utilization 0.95 --enforce-eager --kv-events-config
              "{\"enable_kv_cache_events\":true,\"publisher\":\"zmq\",\"endpoint\":\"tcp://epp-default-qwen-inference-pool.envoy-gateway-system.svc.cluster.local:5557\",\"topic\":\"kv@${POD_IP}@Qwen3-32B\"}"
              --prefix-caching-hash-algo sha256_cbor_64bit --block-size 64
          env:
            - name: POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP
            - name: PYTHONHASHSEED
              value: '42'
          ports:
            - containerPort: 8000
              name: restful
          resources:
            limits:
              nvidia.com/gpu: '1'
            requests:
              nvidia.com/gpu: '1'
          volumeMounts:
            - mountPath: /models/Qwen3-32B
              name: model
            - mountPath: /dev/shm
              name: dshm
      volumes:
        - name: model
          persistentVolumeClaim:
            claimName: llm-model
        - emptyDir:
            medium: Memory
            sizeLimit: 30Gi
          name: dshm
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: qwen3
  name: qwen3
spec:
  ports:
    - name: http-serving
      port: 8000
      targetPort: 8000
  selector:
    app: qwen3
  type: ClusterIP

Key parameter descriptions:

• --kv-events-config: Enables KV event reporting and specifies the ZeroMQ endpoint and topic.

• --prefix-caching-hash-algo: Must be set to sha256_cbor_64bit.

• --block-size: The KV block size. We recommend setting this parameter to 64.

• PYTHONHASHSEED: The hash seed, which must be consistent with the hashSeed value in the routing policy configuration.
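Because these values have to line up between the vLLM flags and the routing policy, a small pre-deployment check can help. The sketch below is hypothetical tooling, not part of ACK GIE or vLLM: `kv_events_config` rebuilds the JSON value passed to `--kv-events-config` from the fields shown above, and `check_consistency` compares plain dictionaries that you would fill in by hand. Treating the block size as something that must match on both sides is an assumption based on both manifests using 64.

```python
import json


def kv_events_config(endpoint: str, pod_ip: str, model_name: str) -> str:
    """Build the JSON value passed to --kv-events-config."""
    return json.dumps(
        {
            "enable_kv_cache_events": True,
            "publisher": "zmq",
            "endpoint": endpoint,
            "topic": f"kv@{pod_ip}@{model_name}",
        },
        separators=(",", ":"),
    )


def check_consistency(vllm: dict, policy: dict) -> list:
    """Return human-readable problems; an empty list means the settings agree."""
    problems = []
    if vllm["block_size"] != policy["blockSize"]:
        problems.append("--block-size differs from blockSize in the policy")
    if str(vllm["PYTHONHASHSEED"]) != str(policy["hashSeed"]):
        problems.append("PYTHONHASHSEED differs from hashSeed in the policy")
    if vllm["prefix_caching_hash_algo"] != "sha256_cbor_64bit":
        problems.append("--prefix-caching-hash-algo must be sha256_cbor_64bit")
    return problems


if __name__ == "__main__":
    endpoint = ("tcp://epp-default-qwen-inference-pool."
                "envoy-gateway-system.svc.cluster.local:5557")
    print(kv_events_config(endpoint, "10.0.0.5", "Qwen3-32B"))

    vllm_settings = {
        "block_size": 64,
        "PYTHONHASHSEED": "42",
        "prefix_caching_hash_algo": "sha256_cbor_64bit",
    }
    policy_settings = {"blockSize": 64, "hashSeed": 42}
    print(check_consistency(vllm_settings, policy_settings) or "settings agree")
```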

# inference-policy.yaml
apiVersion: inference.networking.x-k8s.io/v1alpha2
kind: InferencePool
metadata:
  name: qwen-inference-pool
spec:
  targetPortNumber: 8000
  selector:
    app: qwen3
---
apiVersion: inferenceextension.alibabacloud.com/v1alpha1
kind: InferenceTrafficPolicy
metadata:
  name: inference-policy
spec:
  poolRef:
    name: qwen-inference-pool
  profile:
    single:
      trafficPolicy:
        prefixCache:
          mode: tracking
          trackingConfig:
            indexerConfig:
              tokenProcessorConfig:
                blockSize: 64
                hashSeed: 42
              model: Qwen/Qwen3-32B

# inference-gateway.yaml

apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: inference-gateway
spec:
  gatewayClassName: ack-gateway
  listeners:
    - name: http-llm
      protocol: HTTP
      port: 8080
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: inference-route
spec:
  parentRefs:
    - name: inference-gateway
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /v1
      backendRefs:
        - name: qwen-inference-pool
          kind: InferencePool
          group: inference.networking.x-k8s.io
---
apiVersion: gateway.envoyproxy.io/v1alpha1
kind: BackendTrafficPolicy
metadata:
  name: backend-timeout
spec:
  timeout:
    http:
      requestTimeout: 24h
  targetRef:
    group: gateway.networking.k8s.io
    kind: Gateway
    name: inference-gateway

Create two requests with identical prefixes:

# round1.txt

echo '{"max_tokens":24,"messages":[{"content":"Hi, here'\''s some system prompt: hi hi hi hi hi hi hi hi hi hi.For user 3, here are some other context: hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi.I would like to test your intelligence. for this purpose I would like you to play zork. you can interact with the game by typing in commands. I will forward these commands to the game and type in any response. are you ready?","role":"user"}],"model":"Qwen3-32B","stream":true,"stream_options":{"include_usage":true},"temperature":0}' > round1.txt

# round2.txt

echo '{"max_tokens":3,"messages":[{"content":"Hi, here'\''s some system prompt: hi hi hi hi hi hi hi hi hi hi.For user 3, here are some other context: hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi hi.I would like to test your intelligence. for this purpose I would like you to play zork. you can interact with the game by typing in commands. I will forward these commands to the game and type in any response. are you ready?","role":"user"},{"content":"Hi there! It looks like you'\''re setting up a fun test. I'\''m ready to play Zork! You can","role":"assistant"},{"content":"% zork\nWelcome to Dungeon. This version created 11-MAR-91.\nYou are in an open field west of a big white house with a boarded\nfront door.\nThere is a small mailbox here.\n>","role":"user"},{"content":"Great!","role":"assistant"},{"content":"Opening the mailbox reveals:\n A leaflet.\n>","role":"user"}],"model":"Qwen3-32B","stream":true,"stream_options":{"include_usage":true},"temperature":0}' > round2.txtSend the request and verify the routing:

export GATEWAY_IP=$(kubectl get gateway/inference-gateway -o jsonpath='{.status.addresses[0].value}')

curl -X POST $GATEWAY_IP:8080/v1/chat/completions -H 'Content-Type: application/json' -d @./round1.txt

curl -X POST $GATEWAY_IP:8080/v1/chat/completions -H 'Content-Type: application/json' -d @./round2.txt

# View the logs

kubectl logs deploy/epp-default-qwen-inference-pool -n envoy-gateway-system | grep "handled"

The expected output will show both requests being routed to the same instance:

2025-08-19T10:16:12Z LEVEL(-2) requestcontrol/director.go:278 Request handled {"x-request-id": "00d5c24e-b3c8-461d-9848-7bb233243eb9", "model": "Qwen3-32B", "endpoint": "{NamespacedName:default/qwen3-779c54544f-9c4vz Address:10.0.0.5 ...}"}

2025-08-19T10:16:19Z LEVEL(-2) requestcontrol/director.go:278 Request handled {"x-request-id": "401925f5-fe65-46e3-8494-5afd83921ba5", "model": "Qwen3-32B", "endpoint": "{NamespacedName:default/qwen3-779c54544f-9c4vz Address:10.0.0.5 ...}"}

We conducted a benchmark on a cluster with 8 vLLM instances to verify the real-world impact of precise mode.

Simulated B2B SaaS case:

• 150 enterprise customers

• Each customer has a unique 6,000-token context (system prompt)

• 5 concurrent users per customer

• User query length: 1,200 tokens

• QPS gradually increased from 3 to 60

| Scheduling Strategy | Output Throughput (tokens/s) | P90 TTFT (s) | Avg TTFT (s) | Avg Wait Queue |
|---|---|---|---|---|
| (Precise Mode) Prefix cache-aware routing | 8730.0 | 0.542 | 0.298 | 0.1 |
| (Estimate Mode) Prefix cache-aware routing | 6944.4 | 31.083 | 13.316 | 8.1 |
| Random Scheduling | 4428.7 | 92.551 | 46.987 | 27.3 |

These results show that precise-mode prefix cache-aware routing is more than a theoretical optimization. Compared with random scheduling, it roughly doubled output throughput (8,730.0 versus 4,428.7 tokens/s) and cut P90 TTFT from 92.551 s to 0.542 s.
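The headline ratios can be reproduced directly from the table. The snippet below only restates the published numbers; the `RESULTS` dictionary and the `improvement` helper are names chosen for this example.

```python
# Values copied from the benchmark table above.
RESULTS = {
    "precise": {"throughput": 8730.0, "p90_ttft": 0.542, "avg_ttft": 0.298},
    "estimation": {"throughput": 6944.4, "p90_ttft": 31.083, "avg_ttft": 13.316},
    "random": {"throughput": 4428.7, "p90_ttft": 92.551, "avg_ttft": 46.987},
}


def improvement(metric: str, baseline: str, candidate: str = "precise") -> float:
    """How many times better `candidate` is than `baseline` on `metric`."""
    base = RESULTS[baseline][metric]
    cand = RESULTS[candidate][metric]
    # Throughput: higher is better. TTFT: lower is better.
    return cand / base if metric == "throughput" else base / cand


if __name__ == "__main__":
    for baseline in ("estimation", "random"):
        for metric in ("throughput", "p90_ttft", "avg_ttft"):
            print(f"precise vs {baseline:<10} {metric:<10} "
                  f"{improvement(metric, baseline):.2f}x")
```

The P90 TTFT ratio against estimation mode is the roughly 57x figure quoted earlier.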

In the race for LLM inference efficiency and cost reduction, every percentage point of optimization yields massive business value. ACK GIE co-developed and contributed the precise-mode prefix cache-aware routing technology to the llm-d upstream community. For details, see https://llm-d.ai/blog/kvcache-wins-you-can-see

By establishing a global KV-Cache view, ACK GIE transforms inference from "operating in the dark" to "full-spectrum visibility", allowing distributed vLLM clusters to function as a unified, high-performance engine. Whether you are building enterprise AI apps or operating a large-scale AI service platform, this technology is your key to superior performance and cost optimization.
