By Jing Cai

In the era of large models, the demand for GPU computing power has reached an inflection point. Due to uneven resource distribution, supply shortages, high costs, global deployment, and compliance requirements, enterprises are increasingly adopting cross-region multi-cluster or hybrid cloud multi-cluster architectures. However, without global orchestration, AI tasks often end up queued in "resource silos" within a specific region, while GPUs in other clusters sit idle. Alibaba Cloud ACK One, a distributed cloud container platform, provides a suite of multi-cluster scheduling strategies to intelligently orchestrate your AI inference services. This article explores the applicable scenarios for cluster-level priority scheduling:

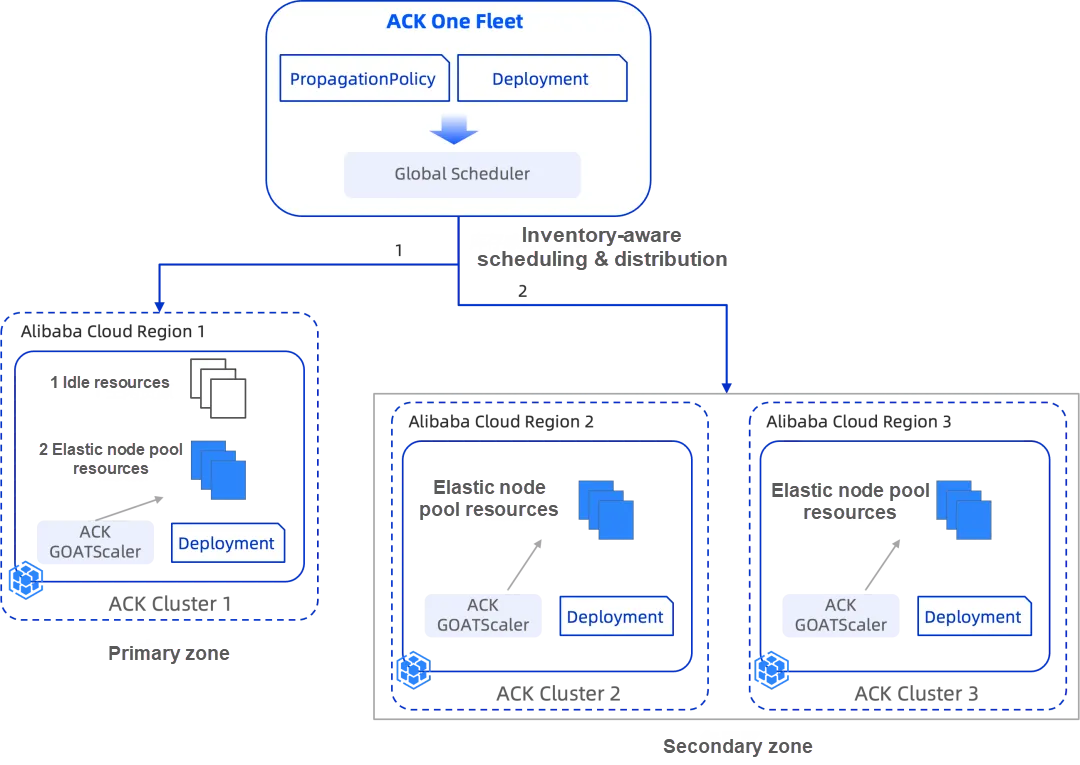

1. Cross-region multi-ACK clusters: Prioritize compute resources in the primary region. When capacity is reached, the system triggers a rapid "burst" to other regions to ensure business continuity. During scale-in, resources in secondary regions are released first.

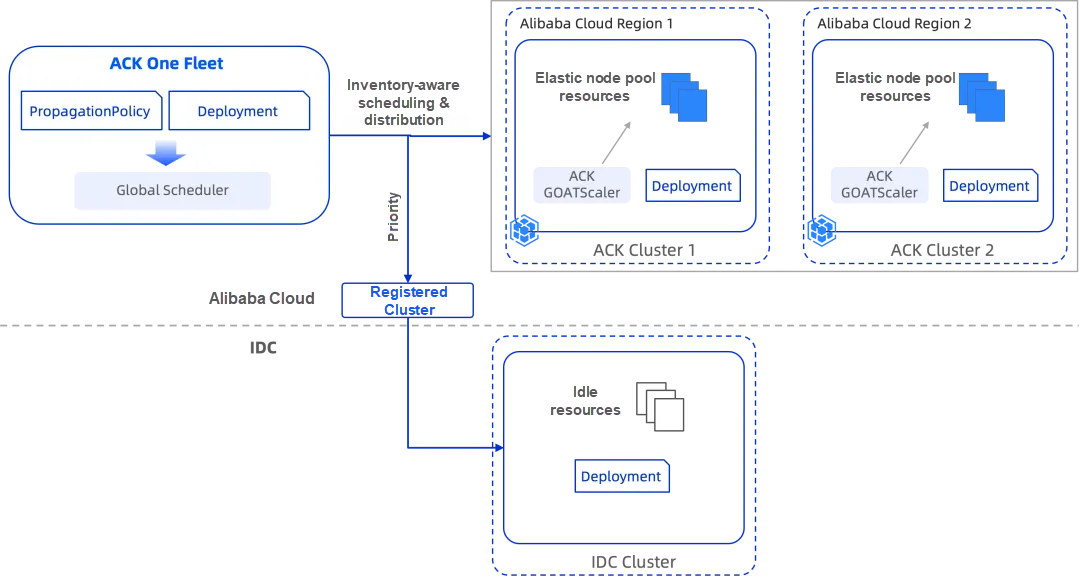

2. Hybrid cloud multi-cluster (On-premises Kubernetes + ACK): This architecture balances cost, compliance, and scalability. The system first saturates on-premises capacity to minimize overhead. Once that capacity is exhausted, it overflows to Alibaba Cloud ACK for rapid scaling. When peak traffic subsides, cloud-based replicas are scaled in first to optimize costs.

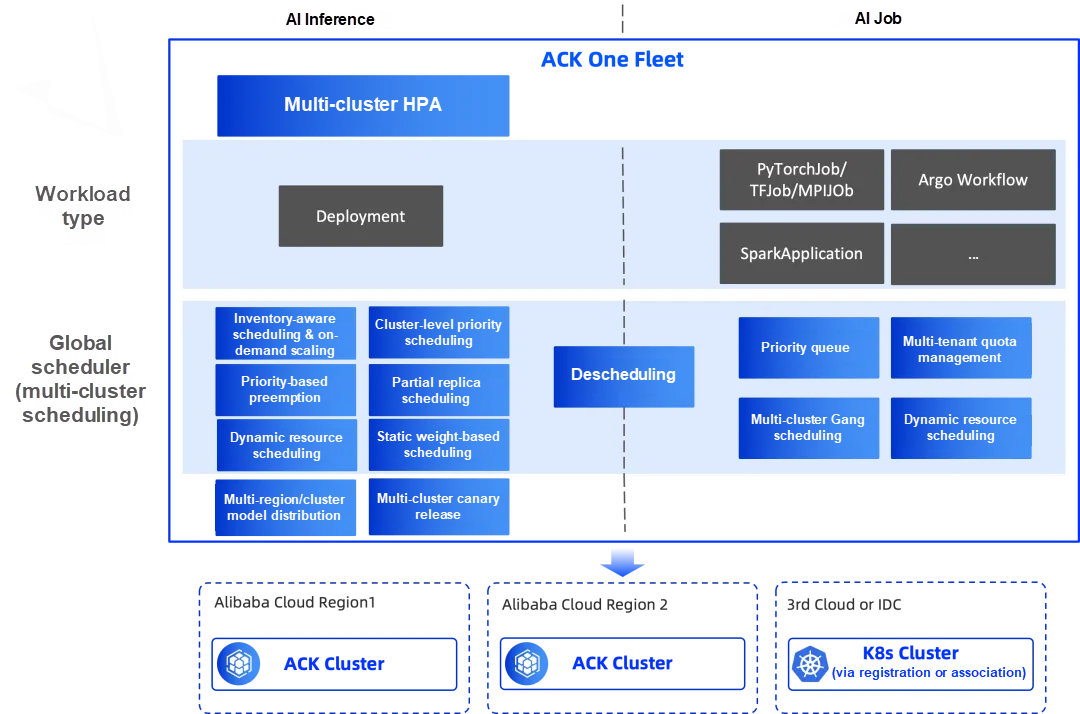

ACK One Fleet [1] is Alibaba Cloud’s enterprise-grade multi-cluster management solution. It enables intelligent AI workload scheduling to accelerate GPU provisioning and maximize resource utilization, preventing both resource waste and performance hotspots. Furthermore, it provides an end-to-end management framework that simplifies multi-cluster AI operations.

For online AI inference, ACK One Fleet offers several intelligent scheduling capabilities:

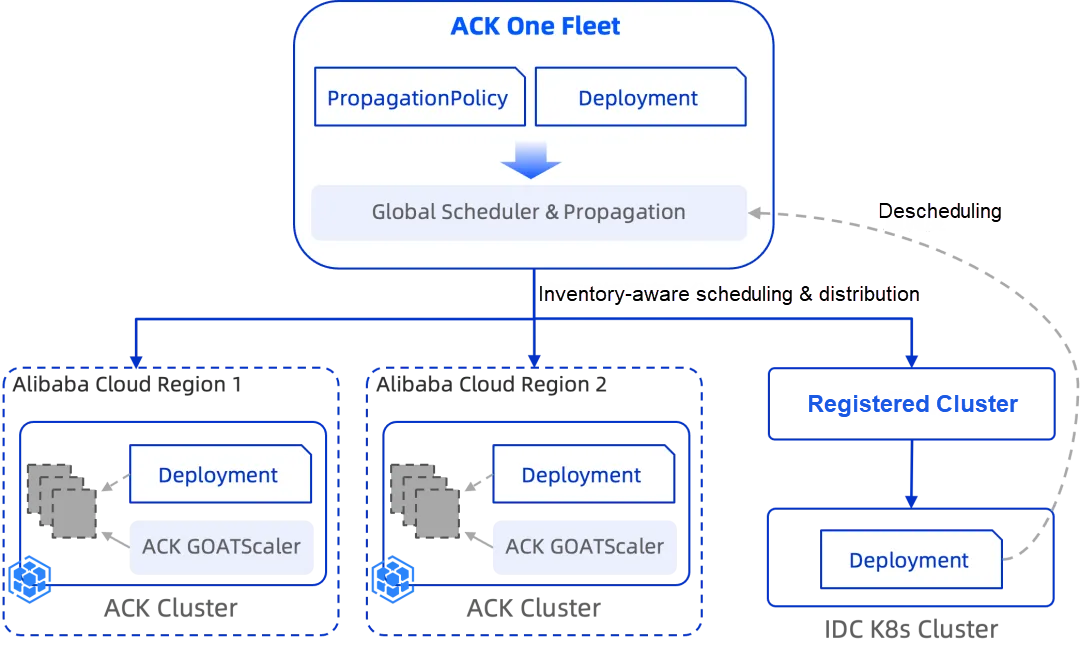

1. Inventory-aware multi-cluster elastic scheduling [2]: Integrates the Fleet Global Scheduler with ACK node pool instant scaling [3] to perceive real-time cloud inventory. This enables rapid GPU provisioning across regions and hybrid environments.

2. Cluster-level priority scheduling [4]: Routes AI services based on predefined cluster priorities. Workloads are placed on high-priority clusters first to maximize their utilization; only when these clusters reach capacity do replicas overflow to lower-priority ones. For services with multiple replicas, the system supports splitting a deployment across clusters of different priorities and releases compute resources in reverse order (from lowest to highest priority) during scale-in. Combined with inventory-aware elastic scheduling, this capability supports production-ready scenarios such as the cross-region and hybrid cloud bursting described above.

3. Workload preemption: Uses PriorityClass to preempt low-priority AI tasks across clusters, ensuring that mission-critical AI services stay online. The resource utilization sequence is: Idle resources -> Preempted resources from low-priority services -> Elastic scaling.

4. Partial replica scheduling: For Deployments, if a cluster can host some but not all of the requested replicas, the scheduler runs as many replicas there as possible so that no idle resource goes to waste.

5. Dynamic resource scheduling: The Global Scheduler monitors real-time idle capacity across all sub-clusters and distributes replicas in proportion to each cluster's available capacity.

6. Static weight scheduling: Administrators can assign weights to specific target clusters, allowing the scheduler to distribute replicas proportionally.

7. Descheduling: The descheduler identifies pods stuck in a pending state due to resource constraints and relocates them to clusters with sufficient capacity.

8. Multi-cluster HPA: Supports Horizontal Pod Autoscaler (HPA) based on global metrics (custom or external) from sub-clusters. Scaling requirements are calculated at the Fleet level and then distributed to sub-clusters according to the scheduling policy.

9. Multi-cluster canary release: Leverages Kruise Rollouts for phased, multi-batch deployments across the entire fleet.

10. Geo-distributed model distribution: Uses OCI images to accelerate model delivery and simplify version management (e.g., rollbacks).
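Items 5, 6, and 8 fit together naturally: the Fleet computes one desired replica count and then divides it across sub-clusters by weight. The Python sketch below illustrates that idea under stated assumptions and is not ACK One's implementation. It applies the standard Kubernetes HPA formula, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), to an assumed fleet-wide average metric, and splits the result with a largest-remainder method. The function names are hypothetical.

```python
import math


def fleet_desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """Standard Kubernetes HPA formula, evaluated once at the Fleet level.

    Assumption: current_metric is a fleet-wide average (for example, per-replica
    GPU utilization) aggregated from all sub-clusters.
    """
    return math.ceil(current_replicas * current_metric / target_metric)


def divide_by_weight(total: int, weights: dict[str, int]) -> dict[str, int]:
    """Split `total` replicas across clusters in proportion to `weights`.

    Uses the largest-remainder method so the parts always sum to `total`.
    Static weights would come from an administrator; dynamic weights could be
    the number of replicas each cluster can currently host.
    """
    weight_sum = sum(weights.values())
    if weight_sum == 0:
        return {name: 0 for name in weights}
    # Integer floor share for each cluster.
    shares = {name: total * w // weight_sum for name, w in weights.items()}
    # Hand the leftover replicas to the clusters with the largest remainders.
    leftover = total - sum(shares.values())
    by_remainder = sorted(weights, key=lambda n: (total * weights[n]) % weight_sum, reverse=True)
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares
```

For example, with 4 replicas at 90% average utilization and a 60% target, the Fleet asks for 6 replicas; with weights {"cluster-a": 2, "cluster-b": 1}, cluster-a receives 4 of them and cluster-b receives 2. Passing each cluster's available replica count as its weight approximates the dynamic mode, where clusters with more idle GPUs receive more replicas.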

For AI jobs (training, data processing, and offline inference), ACK One Fleet provides:

1. Support for multiple frameworks: PyTorchJob, TFJob, SparkApplication, and Argo Workflow.

2. Multi-cluster Gang scheduling: Implements "all-or-nothing" scheduling via reservation or dynamic detection, ensuring jobs only start when all required sub-tasks can be scheduled.

3. Multi-tenancy quota management: Uses ElasticQuotaTree for namespace-based resource isolation, ensuring fairness while allowing dynamic resource sharing between tenants during idle periods.

4. Priority-based scheduling: High-priority tasks are prioritized for resource allocation according to the PriorityClass specified within the user-defined PodTemplate.

5. Job failure rescheduling: If a job fails in a sub-cluster, the Global Scheduler reclaims the task and migrates it to a healthy cluster with sufficient resources.
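The all-or-nothing rule behind multi-cluster Gang scheduling can be sketched as follows. This is a simplified model, not the Global Scheduler's algorithm: it assumes each job must land entirely in one sub-cluster, represents each cluster as a list of free GPUs per node, and uses first-fit-decreasing packing on a scratch copy so that nothing is committed unless every sub-task fits. The function names are hypothetical.

```python
from typing import Optional


def try_gang_place(task_gpus: list[int], node_free_gpus: list[int]) -> Optional[list[int]]:
    """Return a node index for every task, or None if the whole gang cannot fit."""
    free = list(node_free_gpus)  # scratch copy: the caller's view is never modified
    placement = [-1] * len(task_gpus)
    # Place the largest tasks first to reduce fragmentation.
    order = sorted(range(len(task_gpus)), key=lambda i: task_gpus[i], reverse=True)
    for i in order:
        for node, avail in enumerate(free):
            if avail >= task_gpus[i]:
                free[node] -= task_gpus[i]
                placement[i] = node
                break
        else:
            return None  # one task does not fit, so the whole gang is rejected
    return placement


def schedule_job(task_gpus: list[int], clusters: dict[str, list[int]]) -> Optional[tuple[str, list[int]]]:
    """Pick the first cluster (in the given order) that can host the entire gang."""
    for name, nodes in clusters.items():
        placement = try_gang_place(task_gpus, nodes)
        if placement is not None:
            return name, placement
    return None  # the job stays pending instead of starting partially
```

A job with three 4-GPU workers is rejected by a cluster whose nodes have 4 and 2 free GPUs, even though the first worker would fit, and is placed on a cluster with two 8-GPU nodes instead.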

You can define multiple cluster groups in a PropagationPolicy, ranked from high to low priority. The ACK One Global Scheduler then orchestrates the workload accordingly:

1. Saturate high-priority first: Tasks always target high-priority clusters first. Only when capacity is exhausted (or inventory is unavailable) does the workload overflow to lower-priority groups. Clusters in the same cluster group have the same priority.

2. Fractional deployment: Even for large Deployments, the system will fill the idle gaps in the high-priority cluster before sending the remainder to the next cluster.

3. Reverse scale-in: To maintain optimal cost and performance, replicas on lower-priority clusters are terminated first during scale-in.
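These three rules can be modeled in a few lines. The sketch below is illustrative only and assumes each cluster's spare capacity is already known as a replica count: scale_out fills priority groups in order and lets a cluster take only part of the request, while scale_in removes replicas starting from the lowest-priority group. Within a group it simply fills clusters in list order; the real scheduler may divide replicas differently (for example, by available capacity). All names are hypothetical.

```python
def scale_out(desired: int, groups: list[dict[str, int]]) -> tuple[dict[str, int], int]:
    """Place `desired` replicas, saturating higher-priority groups first.

    groups: priority groups, highest first. Each maps a cluster name to the
    number of replicas it can still host. A cluster may take only part of the
    request (fractional deployment); the rest overflows to the next cluster.
    Returns (placement, pending).
    """
    placement: dict[str, int] = {}
    remaining = desired
    for group in groups:
        for cluster, capacity in group.items():
            take = min(capacity, remaining)
            if take > 0:
                placement[cluster] = take
                remaining -= take
    return placement, remaining


def scale_in(placement: dict[str, int], groups: list[dict[str, int]], remove: int) -> dict[str, int]:
    """Remove `remove` replicas, draining the lowest-priority group first."""
    result = dict(placement)
    for group in reversed(groups):
        for cluster in reversed(list(group)):
            take = min(result.get(cluster, 0), remove)
            if take > 0:
                result[cluster] -= take
                remove -= take
    # Drop clusters that no longer run any replica.
    return {cluster: n for cluster, n in result.items() if n > 0}
```

With groups = [{"region1-a": 3, "region1-b": 2}, {"region2-a": 10}], scale_out(8, groups) places 3 and 2 replicas in region 1 and the remaining 3 in region2-a. Scaling in by 4 then removes all 3 region-2 replicas before taking a single replica from region1-b.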

For example, the following PropagationPolicy defines two cluster groups. Clusters 1 and 2 form the high-priority group ack-region1; cluster 3 forms the lower-priority group ack-region2, which receives replicas only after ack-region1 runs out of capacity. Replace the ${Cluster N ID} placeholders with the IDs of your sub-clusters.

```yaml
apiVersion: policy.one.alibabacloud.com/v1alpha1
kind: PropagationPolicy
metadata:
  name: vllm-deploy-pp
  namespace: test
spec:
  autoScaling:
    # Enable inventory-aware scheduling
    ecsProvision: true
  placement:
    # Configure cluster priority groups, listed from highest to lowest priority.
    # Clusters in the same group share the same priority.
    clusterAffinities:
    - affinityName: ack-region1
      clusterNames:
      - ${Cluster 1 ID}
      - ${Cluster 2 ID}
    - affinityName: ack-region2
      clusterNames:
      - ${Cluster 3 ID}
    replicaScheduling:
      # Split replicas across clusters, weighted by how many replicas
      # each cluster can currently host
      replicaSchedulingType: Divided
      replicaDivisionPreference: Weighted
      weightPreference:
        dynamicWeight: AvailableReplicas
  # Delete propagated resources from sub-clusters when the template is deleted
  preserveResourcesOnDeletion: false
  # Propagate Deployments in the test namespace
  resourceSelectors:
  - apiVersion: apps/v1
    kind: Deployment
    namespace: test
  schedulerName: default-scheduler
```

With ACK One Fleet, you can incorporate clusters from multiple regions into a unified multi-cluster control plane. This allows centralized orchestration of compute resources across regions so that workloads can expand smoothly and stably. For instance, latency-sensitive AI services can prioritize compute capacity in the primary region and use elastic resources in standby regions only when primary capacity is exhausted. This also enables strategic use of region-specific hardware, such as prioritizing high-performance RTX 5090 GPUs in Region A and falling back to RTX 4090 units in Region B when needed.

By leveraging cluster-level priority scheduling combined with inventory-aware elastic scheduling, you can implement a sophisticated resource strategy: First, use idle resources in the primary region. Next, burst into elastic resources within the same region. Finally, overflow to other regions only if capacity in the primary region is completely depleted.
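The three-step order above can be modeled as a plan that walks regions in priority order and, within each region, consumes idle capacity before elastic capacity. This is a sketch under assumptions: "elastic" stands for the replicas that newly provisioned nodes could host given current GPU inventory, both numbers are assumed inputs, secondary regions are assumed to follow the same idle-then-elastic order, and RegionCapacity and plan_placement are hypothetical names.

```python
from typing import NamedTuple


class RegionCapacity(NamedTuple):
    """Hypothetical capacity snapshot for one region (one priority group)."""
    name: str
    idle: int     # replicas that fit on existing, idle GPU nodes
    elastic: int  # replicas that new nodes could host, given current GPU inventory


def plan_placement(desired: int, regions: list[RegionCapacity]) -> tuple[list[tuple[str, str, int]], int]:
    """Return (steps, unplaced); each step is (region, source, replicas).

    Regions are given in priority order. Within a region, idle capacity is used
    before elastic capacity, and the next region is used only after both are
    exhausted.
    """
    steps: list[tuple[str, str, int]] = []
    remaining = desired
    for region in regions:
        for source in ("idle", "elastic"):
            take = min(getattr(region, source), remaining)
            if take > 0:
                steps.append((region.name, source, take))
                remaining -= take
    return steps, remaining
```

For a request of 7 replicas, where the primary region has 2 idle and 3 elastic slots and a secondary region has 4 idle slots, the plan uses 2 idle and 3 elastic slots in the primary region and only then places the last 2 replicas in the secondary region.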

Many enterprises maintain on-premises GPU capacity to meet compliance requirements or control baseline costs. However, when AI workloads surge or follow tidal patterns with recurring demand peaks, on-premises resources often become a bottleneck. ACK One Fleet enables a hybrid cloud multi-cluster architecture that provides unified orchestration of both on-premises and cloud-based compute power. This approach ensures you maximize your on-premises investment while maintaining the ability to scale elastically.

Combining priority-based and inventory-aware scheduling lets you saturate on-premises GPU capacity first. When on-premises resources are exhausted, the system triggers a "cloud burst" to Alibaba Cloud ACK for rapid capacity replenishment. After peak demand subsides, the system scales in the cloud-based inference replicas first to minimize cloud expenditure. In hybrid cloud scenarios, ACK One Fleet delivers value in three ways:

• Cost optimization: Make full use of the existing on-premises GPU hardware.

• Elasticity and business continuity: Cloud capacity serves as a safety net, allowing training or inference tasks to spill over to ACK during peak loads.

• Unified scheduling and management: Move away from manual allocation. The system automatically decides where a workload should run based on real-time capacity and policy.

ACK One Fleet provides the "brain" for AI workload orchestration. By unifying scheduling across clusters, it doesn't just provision capacity—it ensures that GPU resources are used where they provide the most value. With integrated HPA, traffic gateways, and model distribution, ACK One Fleet is the essential foundation for building a production-ready, multi-cluster AI inference platform. In the large model era, the winning strategy isn't just about how much compute you have—it's about how intelligently you use it.

[1] ACK One Fleet: https://www.alibabacloud.com/help/en/ack/distributed-cloud-container-platform-for-kubernetes/user-guide/fleet-management-overview

[2] Inventory-aware multi-cluster elastic scheduling: https://www.alibabacloud.com/help/en/ack/distributed-cloud-container-platform-for-kubernetes/user-guide/enable-inventory-aware-elastic-scheduling-for-multi-cluster-fleets

[3] Node instant scaling: https://www.alibabacloud.com/help/en/ack/ack-managed-and-ack-dedicated/user-guide/instant-elasticity

[4] Cluster-level priority scheduling for multi-cluster environments: https://www.alibabacloud.com/help/en/ack/distributed-cloud-container-platform-for-kubernetes/use-cases/multi-cluster-priority-elastic-scheduling-based-on-cluster-level
