By Buchen, Alibaba Cloud Serverless Technical Director

When we build an application, we want it to respond quickly and cost little. In practice, however, systems face challenges such as unpredictable traffic peaks, slow responses from downstream dependencies, and a small number of requests that consume a disproportionate amount of CPU or memory. Any of these can slow the entire system down or leave it unable to respond to requests. To keep application services responsive, extra computing resources often have to be reserved, yet these resources sit idle most of the time. A better way is to separate the time-consuming or resource-intensive processing logic from the main request processing logic and hand it over to a more elastic system for asynchronous execution. The request can then be processed and returned to the user quickly, and costs are reduced as well.

Generally speaking, logic that is time-consuming, resource-intensive, or error-prone is better stripped out of the main request path and executed asynchronously. For example, after a new user registers successfully, the system usually sends a welcome email. Sending that email can be separated from the registration process. Another example is image upload, where thumbnails of different sizes are usually needed. The image processing does not have to be part of the upload itself. The user's request can finish as soon as the image is uploaded successfully, and the processing logic (such as generating thumbnails) can be executed as asynchronous tasks. This way, the application server avoids being overwhelmed by compute-intensive work (such as image processing), and users get a faster response. Common asynchronous execution tasks include:

Slack [1], Pinterest, Facebook [2], and other companies use asynchronous task processing systems to achieve better service availability and lower costs. According to Dropbox statistics [3], there are more than 100 different types of asynchronous tasks in their business scenarios. A fully functional asynchronous task processing system can bring significant benefits:

A task processing system usually consists of three subsystems: task API and observability, task distribution, and task execution. We first introduce the functions of these three subsystems and then discuss the technical challenges and solutions faced by the entire system.

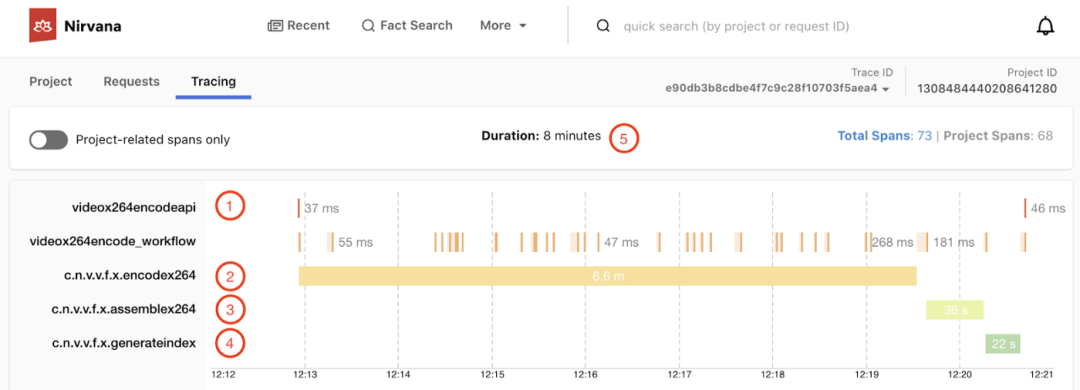

This subsystem provides a set of task-related APIs, including task creation, query, and deletion. Users access these functions through a GUI or command line tools that invoke the APIs directly. Observability, presented in a dashboard or by other means, is equally important. A good task processing system should include the following observability capabilities:

Task distribution is responsible for scheduling and distributing tasks. A task distribution system that can be applied to the production environment usually has the following functions:

1) Task retry should take the processing capability of the downstream task execution system into account. For example, if the downstream task execution system returns a traffic control error, or task execution has become a bottleneck, retries must use exponential backoff. Retries should not add pressure to the downstream system, let alone crush it.

2) The retry strategy should be simple, clear, and easy for users to understand and configure. First, errors must be classified into non-retryable errors, retryable errors, and traffic control errors. A non-retryable error fails deterministically, so retrying is pointless. Parameter errors and permission issues are examples. A retryable error is caused by transient factors, and the task will eventually succeed if retried. Network timeouts and other internal system errors are examples. A traffic control error is a special kind of retryable error. It usually means the downstream is already fully loaded, and retries must back off to limit the number of requests sent downstream.
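The error classification and backoff behavior described above can be sketched in a few lines. This is a minimal illustration, not the implementation of any particular system: the names `ErrorKind` and `next_retry_delay`, the fixed delay for ordinary retryable errors, the use of full jitter, and all default values are assumptions made for the example.

```python
import random
from enum import Enum


class ErrorKind(Enum):
    NON_RETRYABLE = "non_retryable"  # deterministic failure, e.g. bad parameters
    RETRYABLE = "retryable"          # transient failure, e.g. network timeout
    THROTTLED = "throttled"          # traffic control error from downstream


def next_retry_delay(kind, attempt, base=1.0, cap=300.0, max_attempts=5, rng=random):
    """Return the seconds to wait before the next retry, or None to give up.

    `attempt` is the number of attempts already made (1 after the first failure).
    All defaults are illustrative assumptions.
    """
    if kind is ErrorKind.NON_RETRYABLE or attempt >= max_attempts:
        return None
    if kind is ErrorKind.RETRYABLE:
        # Transient error: retry after a short fixed delay.
        return base
    # Traffic control error: exponential backoff with jitter, capped at `cap`,
    # so retries from many tasks do not hit the downstream at the same moment.
    ceiling = min(cap, base * 2 ** attempt)
    return rng.uniform(0, ceiling)
```

A caller would classify the failure, ask for the delay, and either reschedule the task after that delay or mark it as failed when `None` is returned.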

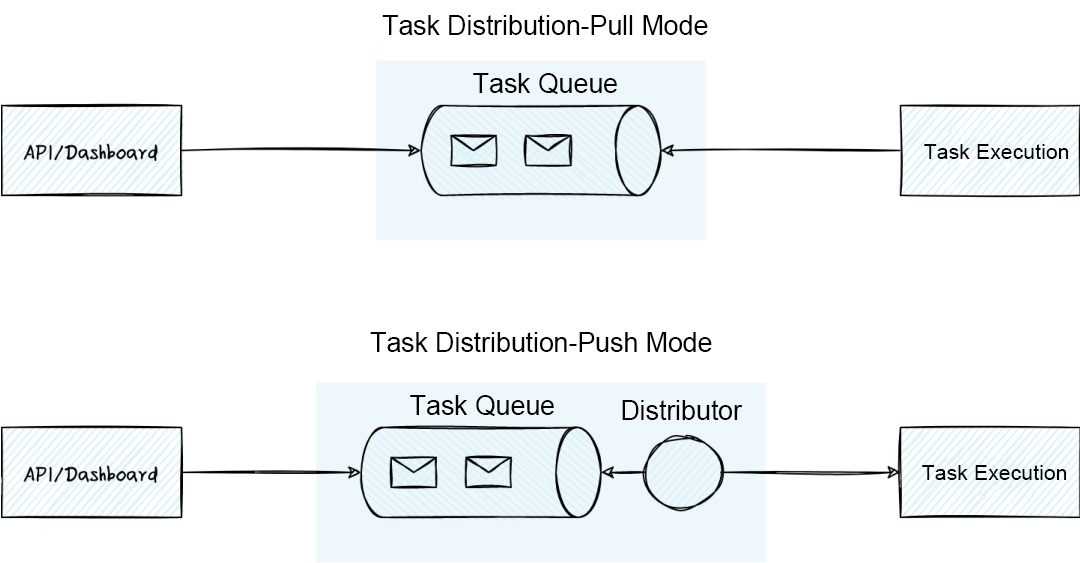

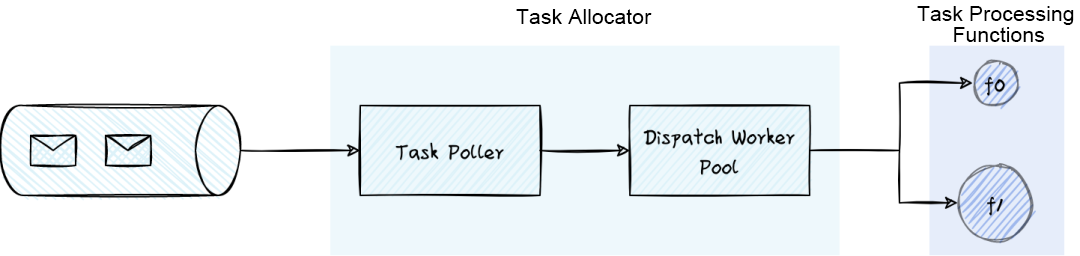

The architecture of task distribution can be divided into pull mode and push mode. Pull mode distributes tasks through the task queue: each instance that executes tasks proactively pulls a task from the queue and pulls the next one after the current task is processed. Compared with pull mode, push mode adds an allocator. The allocator reads tasks from the task queue, schedules them, and pushes each one to an appropriate task execution instance.
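The difference between the two modes can be illustrated with a small in-process sketch. Everything here is hypothetical: `pull_worker`, `Allocator`, and the least-assigned scheduling rule are chosen only for the example, and a real system would use a durable queue and remote execution instances rather than `queue.Queue` and local callables.

```python
import queue


def pull_worker(task_queue, handler):
    """Pull mode: the worker itself drains the queue, one task at a time."""
    results = []
    while True:
        try:
            task = task_queue.get_nowait()
        except queue.Empty:
            return results
        results.append(handler(task))


class Allocator:
    """Push mode: an allocator reads the queue and picks the instance."""

    def __init__(self, instances):
        # instances: mapping of instance name -> handler callable (hypothetical).
        self.instances = instances
        self.assigned = {name: 0 for name in instances}

    def dispatch(self, task_queue):
        assignments = []
        while True:
            try:
                task = task_queue.get_nowait()
            except queue.Empty:
                return assignments
            # Scheduling decision: push to the instance with the fewest tasks so far.
            name = min(self.assigned, key=self.assigned.get)
            self.assigned[name] += 1
            self.instances[name](task)
            assignments.append((task, name))
```

In the pull version the worker needs its own connection to the queue, while in the push version only the allocator touches the queue and the instances just receive tasks.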

The architecture of the pull mode is clear. A task distribution system can be built quickly on popular software such as Redis, and it performs well in simple task scenarios. However, once the pull mode has to support the functions required by complex service scenarios (such as task deduplication, task priority, batch suspension or deletion of tasks, and elastic resource scaling), its implementation complexity increases rapidly. The pull mode faces the following major challenges in practice:

The core idea of push mode is to decouple the task queue from the task execution instances and thereby clarify the boundary between the platform and the user. Users only need to focus on implementing the task processing logic, and the platform manages the task queues and the resource pools of task execution nodes. Because of this decoupling, scaling out task execution nodes is no longer limited by the connection resources of the task queue, which makes the system more elastic. However, the push mode also introduces considerable complexity. Task priority management, load balancing, scheduling and distribution, and traffic control are all performed by the allocator, which has to coordinate with both upstream and downstream systems.

In general, once the task scenario becomes complex, system complexity is high in both the pull mode and the push mode. However, the push mode makes the boundary between the platform and users clearer and reduces the complexity that users have to deal with. Therefore, teams with strong engineering capabilities usually choose the push mode when implementing a platform-level task processing system.

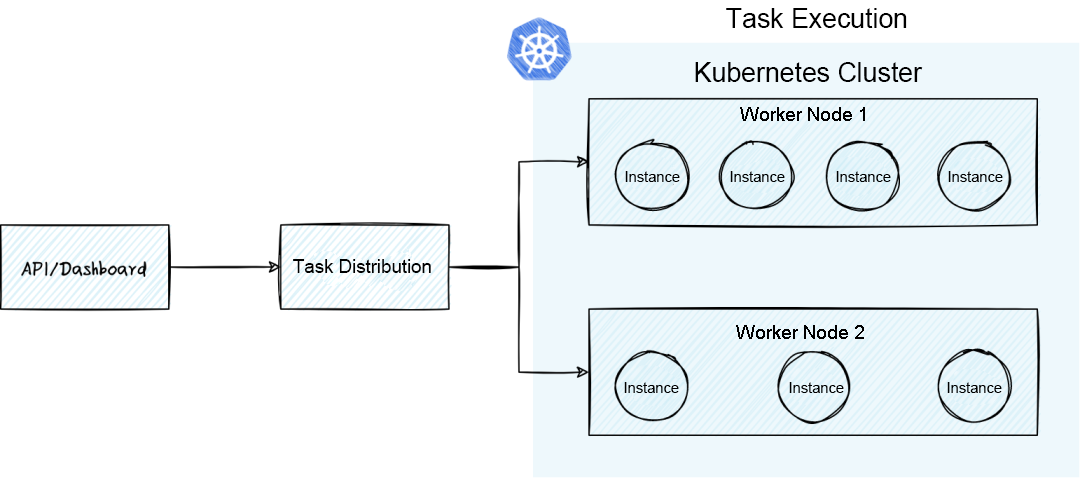

The task execution subsystem manages a batch of worker nodes that execute tasks flexibly and reliably. A typical task execution subsystem must have the following functions:

The task execution subsystem typically uses a container cluster managed by Kubernetes as the resource pool. Kubernetes manages nodes and schedules the container instances that execute tasks onto appropriate nodes. Kubernetes also has built-in Jobs and CronJobs, which make it easier for users to run jobs. Kubernetes helps implement shared resource pool management and resource isolation between tasks. However, the main capabilities of Kubernetes are Pod and instance management. In many cases, more functions need to be developed to meet the requirements of asynchronous task processing. Examples:

Note: There are some differences between jobs in Kubernetes and tasks discussed in this article. The job in Kubernetes usually means processing one or more tasks. The task in this article is the atomic concept. A single task is only executed on one instance. The execution duration ranges from tens of milliseconds to several hours.

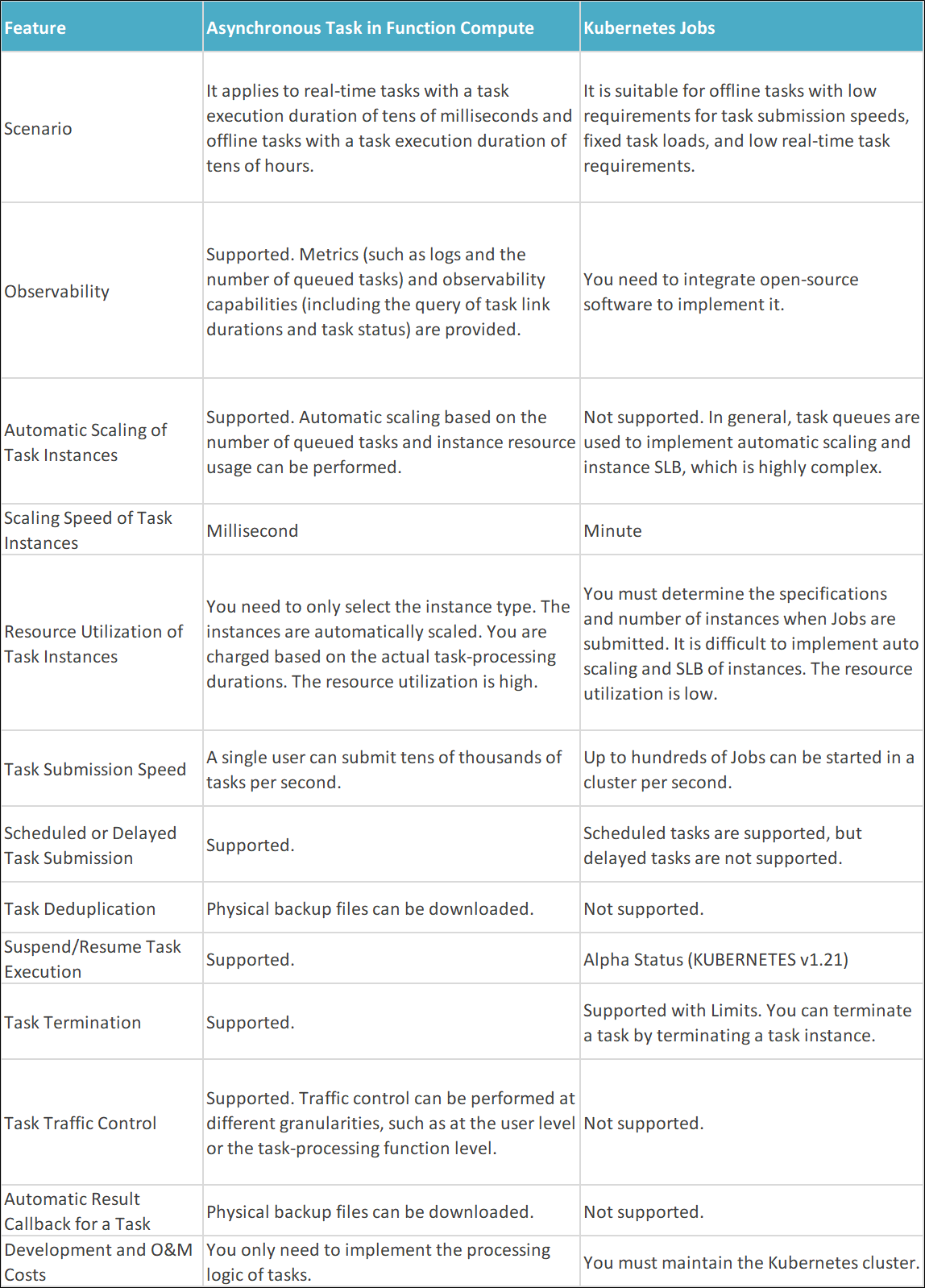

Next, I will use the asynchronous task processing system of Alibaba Cloud Function Compute (FC) as an example and discuss some technical challenges faced by a large-scale multi-tenant asynchronous task [7] processing system, along with the corresponding countermeasures. On the Function Compute platform, users only need to create a task processing function and then submit tasks. The asynchronous task processing is elastic, highly available, and observable. We have adopted a variety of strategies to implement isolation, scaling, load balancing, and traffic control in a multi-tenant scenario so that the highly dynamic load of a large number of users is handled smoothly.

As mentioned earlier, asynchronous task systems usually rely on queues to implement task distribution. When the task processing platform has to serve many business teams, it is no longer feasible to allocate separate queue resources for each application or function (or even each user). Most applications sit in the long tail and are invoked infrequently, so dedicated queues would waste a lot of queue and connection resources, and polling a large number of queues also weakens the scalability of the system.

However, if all users share the same batch of queue resources, they may face the noisy neighbor problem of multi-tenant scenarios. A load burst from application A can crowd out the processing capacity of the queue and affect other applications.

In practice, Function Compute built a dynamic queue resource pool. Some queue resources are preset in the pool, and applications are mapped to queues through hashing. If the traffic of some applications increases rapidly, the system adopts a variety of policies:

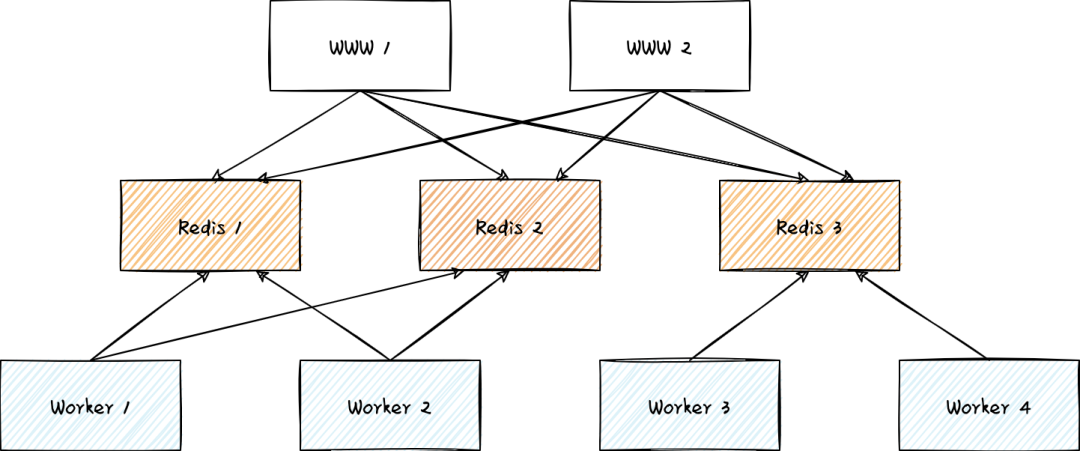

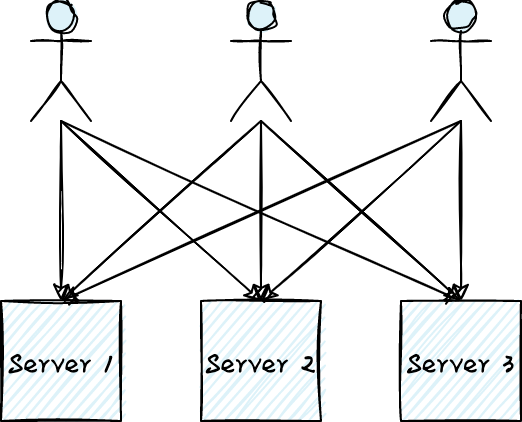

In a multi-tenant scenario, preventing spoilers from causing catastrophic damage to the system is the biggest challenge in system design. A spoiler may be a user under DDoS attack, or a load that triggers a system bug in some corner case. The following figure shows a very popular architecture in which the traffic from all users is distributed evenly across multiple servers in round-robin mode. When the traffic from all users meets expectations, the system works well: the servers are load balanced, and the failure of some servers does not affect the availability of the overall service. However, when a spoiler appears, the availability of the system is at great risk.

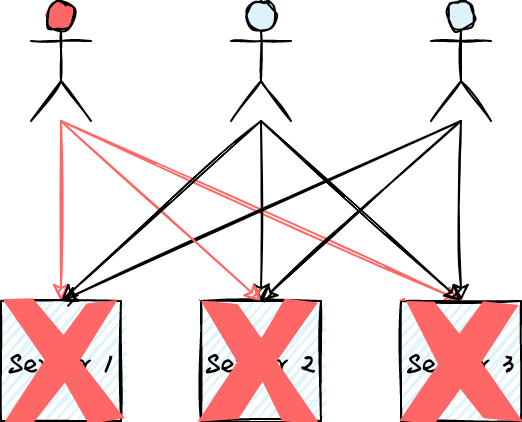

As shown in the following figure, if the red user is under DDoS attack, or some of that user's requests trigger a bug that brings a server down, the load may take down all servers and make the entire system unavailable.

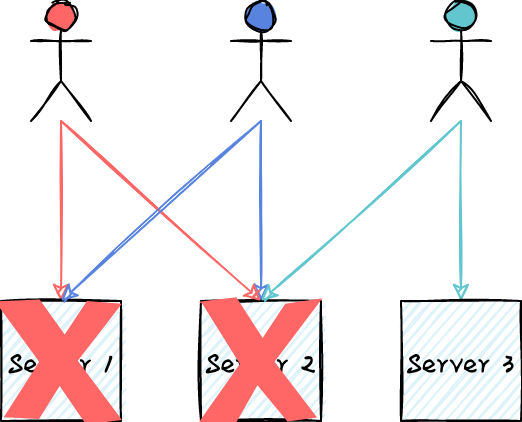

The essence of the problem above is that the traffic from any user can be routed to all servers. Without any load isolation, this mode is quite fragile when faced with spoilers. Could the problem be solved if each user's load were routed to only some of the servers? As shown in the following figure, the traffic of any user is routed to at most two servers. Even if those two servers go down, requests from normal users are still processed. This sharded load mode, which maps a user's load to some servers but not all, implements load isolation and reduces the risk of service unavailability. The cost is that the system needs to prepare more redundant resources.

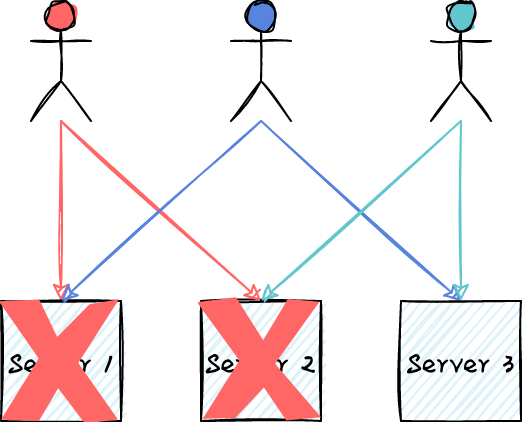

Next, let's adjust how the user load is mapped. As shown in the following figure, the load of each user is evenly mapped to two servers. The load is more balanced, and even if two servers go down, no user's load is affected except for the red user's. If we set the partition size to 2, there are C_{3}^{2}=3 combinations of selecting 2 servers from 3 servers (that is, 3 possible partitions). Using a random algorithm, we map the load evenly across the partitions. Then, if any one partition is unavailable, at most 1/3 of the load is affected. Assuming that we have 100 servers and the partition size is still 2, there are C_{100}^{2}=4950 possible partitions. The unavailability of a single partition only affects 1/4950, or about 0.02%, of the load. As the number of servers increases, the positive effect of random partitioning becomes more obvious. Random load partitioning is a very simple but powerful model, which plays a key role in ensuring the availability of a multi-tenant system.
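The arithmetic above is easy to check, and the mapping itself can be sketched briefly. The following is only an illustration: `shard_count` and `shard_for` are hypothetical names, and hashing the tenant ID is used here as a stand-in for the random algorithm mentioned above so that the same tenant always lands on the same partition.

```python
import hashlib
import math
from itertools import combinations


def shard_count(servers, shard_size):
    """Number of distinct partitions: C(servers, shard_size)."""
    return math.comb(servers, shard_size)


def shard_for(tenant_id, servers, shard_size=2):
    """Deterministically map a tenant to one of the possible partitions."""
    # Enumerating every combination is fine for a small pool; a large pool
    # would pick `shard_size` servers directly instead of listing them all.
    shards = list(combinations(servers, shard_size))
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]


print(shard_count(3, 2))        # 3
print(shard_count(100, 2))      # 4950
print(1 / shard_count(100, 2))  # about 0.0002, i.e. roughly 0.02% of the load
```

With 3 servers and a partition size of 2, `shard_for("tenant-red", ["s1", "s2", "s3"])` returns one of the three possible server pairs, and repeated calls return the same pair.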

Function Compute uses the push mode for task distribution, so users only need to focus on developing the task processing logic, and the boundary between the platform and users is clear. Push mode has a task allocator, which pulls tasks from the task queue and schedules them to downstream task processing instances. The task allocator should adjust the task distribution speed adaptively according to the downstream processing capacity. When a queue backlog builds up, we want the dispatch worker pool's distribution capability to keep growing. When the upper limit of downstream processing capability is reached, the worker pool must perceive it and maintain a relatively stable distribution speed. When the tasks have been processed, the worker pool has to be scaled down to release distribution capacity to other task processing functions.

In practice, we draw on the idea of TCP congestion control and adopt the Additive Increase Multiplicative Decrease (AIMD) algorithm to scale the worker pool. When users submit a large number of tasks in a short time, the allocator does not immediately distribute all of them downstream. Instead, it increases the distribution speed linearly according to the additive increase policy to avoid a sudden impact on downstream services. After receiving traffic control errors from the downstream service, it applies the multiplicative decrease policy and scales the worker pool down by a certain proportion. Scaling down is triggered only when the traffic control errors reach thresholds for both error rate and error count, which avoids frequent resizing of the worker pool.
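The AIMD policy described above can be sketched as follows. This is a simplified model under stated assumptions, not Function Compute's actual allocator: the class name `AimdWorkerPool`, the per-window `update` interface, and every default value (step, factor, thresholds) are hypothetical.

```python
class AimdWorkerPool:
    """Sizes a dispatch worker pool with additive increase, multiplicative decrease."""

    def __init__(self, min_size=1, max_size=100, step=1, factor=0.5,
                 error_rate_threshold=0.1, error_count_threshold=5):
        self.min_size = min_size
        self.max_size = max_size
        self.step = step                    # workers added per window (additive)
        self.factor = factor                # shrink ratio on throttling (multiplicative)
        self.error_rate_threshold = error_rate_threshold
        self.error_count_threshold = error_count_threshold
        self.size = min_size

    def update(self, dispatched, throttled, backlog):
        """Adjust the pool after one observation window and return the new size."""
        rate = throttled / dispatched if dispatched else 0.0
        if (throttled >= self.error_count_threshold
                and rate >= self.error_rate_threshold):
            # Both thresholds met: shrink by a fixed proportion.
            self.size = max(self.min_size, int(self.size * self.factor))
        elif backlog > 0:
            # Tasks are waiting and downstream is healthy: grow linearly.
            self.size = min(self.max_size, self.size + self.step)
        # Otherwise keep the current size.
        return self.size
```

Requiring both the error count and the error rate to cross a threshold means a handful of isolated traffic control errors does not shrink the pool.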

If the task processing capacity always lags behind the production capacity, tasks will keep piling up in the queue, even though multiple queues and traffic routing can reduce the mutual influence between tenants.

When the backlog exceeds a certain threshold, the processing system should actively propagate this pressure back to the upstream task production system, for example by applying traffic control to task submission. In a multi-tenant resource sharing scenario, implementing back pressure is even more challenging. For example, application A and application B share the resources of the task distribution system. If application A has a backlog of tasks, how should back pressure be applied?

It is challenging to identify which objects need traffic control in multi-tenant scenarios. In practice, we borrowed the Sample and Hold algorithm [8] and achieved good results. Interested readers can refer to the relevant paper.
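The article does not describe how Function Compute applies Sample and Hold, so the following is only a rough sketch of the general idea, adapted to counting tasks per tenant: a tenant starts being tracked with a small probability per task, and once tracked, every later task is counted exactly. The class name `SampleAndHold`, the unit weight per task, and the `heavy_hitters` helper are assumptions for illustration.

```python
import random


class SampleAndHold:
    """Tracks likely heavy tenants without keeping a counter for every tenant."""

    def __init__(self, sample_prob, rng=None):
        self.sample_prob = sample_prob
        self.rng = rng or random.Random()
        self.held = {}  # tenant -> tasks counted since the tenant was first sampled

    def observe(self, tenant):
        if tenant in self.held:
            # Hold: once a tenant is tracked, every later task is counted.
            self.held[tenant] += 1
        elif self.rng.random() < self.sample_prob:
            # Sample: start tracking this tenant with a small probability.
            self.held[tenant] = 1

    def heavy_hitters(self, threshold):
        """Tenants whose held count has reached the threshold."""
        return {t for t, n in self.held.items() if n >= threshold}
```

A tenant that submits many tasks is almost certain to be sampled early and then counted accurately, while most low-volume tenants never occupy a table entry. The tenants returned by `heavy_hitters` are candidates for traffic control.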

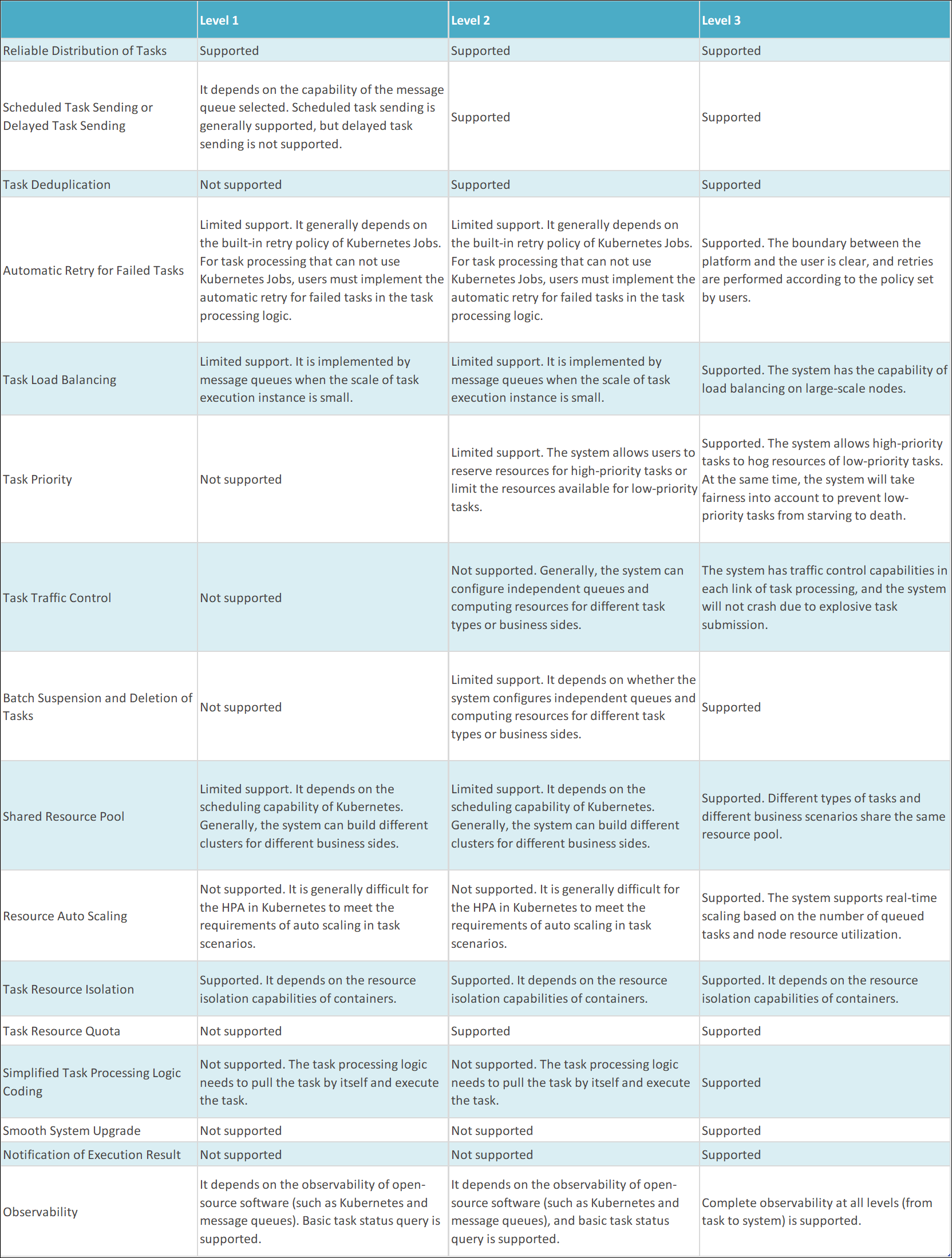

Based on the preceding analysis of the architecture and functions of the asynchronous task processing system, we divide the capabilities of the asynchronous task processing system into the following three levels:

The asynchronous task processing system is an important means of building flexible, highly available, and responsive applications. This article introduces the applicable scenarios and benefits of the asynchronous task processing system and discusses the architecture, functions, and engineering practices of the typical asynchronous task system.

Implementing a flexible and scalable asynchronous task processing platform that can meet the needs of multiple business scenarios is highly complex. Alibaba Cloud Function Compute (FC) provides convenient asynchronous task processing services close to Level 3 capabilities. Users only need to create task processing functions and submit tasks through the console, command line tools, APIs, SDKs, event triggers, or other means to process tasks in an elastic, reliable, and observable manner.

Asynchronous task processing on Function Compute covers scenarios with task durations ranging from milliseconds to 24 hours. Function Compute is widely used by customers inside and outside Alibaba Group, including Alibaba Cloud Database Autonomy Service (DAS), the Alipay applet stress testing platform, Netease CloudMusic, New Oriental, Focus Media, and Milian.

[1] Slack Engineering: https://slack.engineering/scaling-slacks-job-queue/

[2] Facebook: https://engineering.fb.com/2020/08/17/production-engineering/async/

[3] Dropbox Statistics: https://dropbox.tech/infrastructure/asynchronous-task-scheduling-at-dropbox

[4] Netflix Cosmos Platform: https://netflixtechblog.com/the-netflix-cosmos-platform-35c14d9351ad

[5] KEDA: https://keda.sh/

[6] Autoscaling Asynchronous Job Queues: https://d1.awsstatic.com/architecture-diagrams/ArchitectureDiagrams/autoscaling-asynchronous-job-queues.pdf

[7] Asynchronous Tasks: https://www.alibabacloud.com/help/en/function-compute/latest/asynchronous-invocation-tasks

[8] Sample and Hold Algorithm: https://dl.acm.org/doi/10.1145/633025.633056
