By Jianyi (Senior Development Engineer of Alibaba Cloud Serverless)

In the previous article, Detailed Asynchronous Tasks: Task Status and Lifecycle Management, I introduced how the task system manages task status and how users can query task status in real time according to their needs. This article goes further into asynchronous tasks in Function Compute and introduces the scheduling solution for asynchronous tasks and the observability features the system supports.

Task scheduling means that the system assigns different tasks to appropriate computing resources based on the current load. A good scheduling system has to balance isolation between tasks with different characteristics against overall efficiency.

Asynchronous tasks in Function Compute adopt an independent queue model and an automatic load-balancing policy to isolate multiple tenants without affecting processing performance.

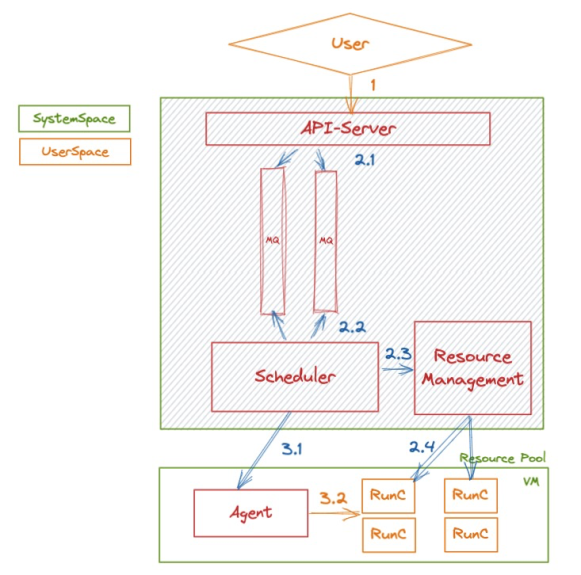

After a user submits a task, the system converts it into a message and sends it asynchronously to the internal queue. The following figure shows the processing of a message:

Figure 1

In task scheduling, multi-tenant isolation and message backlog control depend mainly on how the Scheduler consumes and controls queues. An account-level queue is allocated to each user in advance, and all asynchronous calls (including task calls) of that user share the queue.

This model ensures that each user’s asynchronous execution requests (including task calls) are not affected by the calls of other users.

However, in some large-scale application scenarios (such as when a user has many functions and the number of calls to each function is large), all asynchronous messages sharing one queue inevitably lead to mutual influence between calls. Some long-tail calls may consume too many resources of the queue, which results in starvation in the execution of other functions.

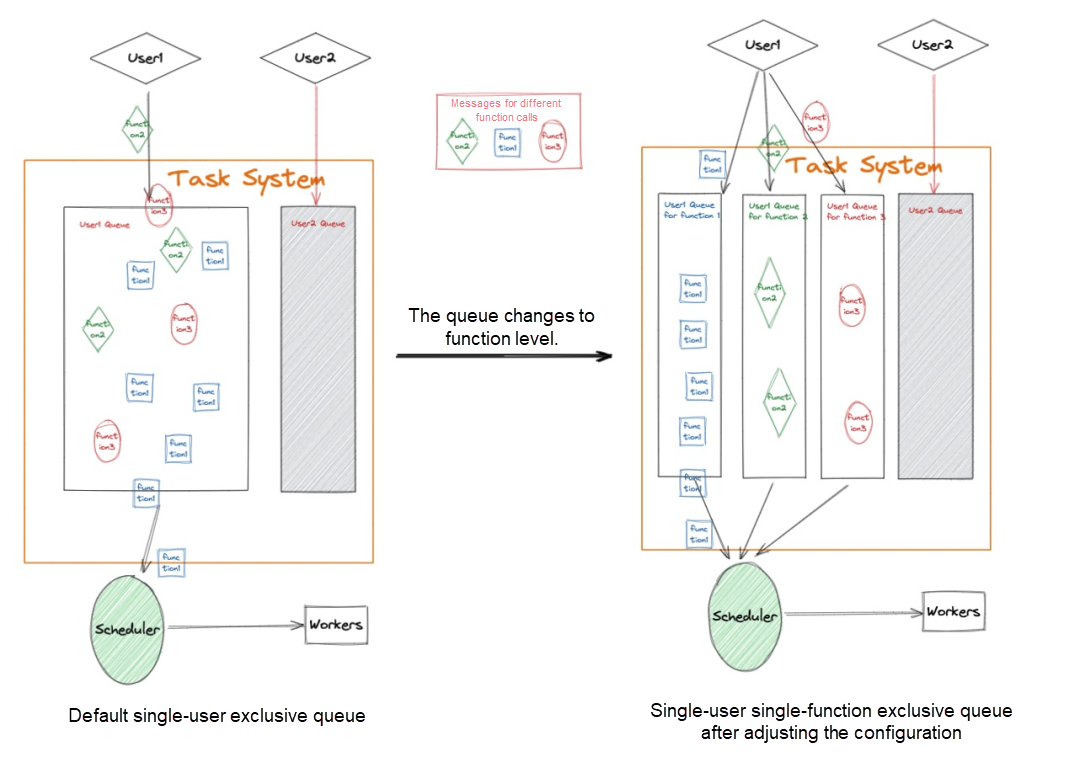

To keep important functions from being affected, Function Compute also provides finer-grained, function-level queues. A separate queue can be set for each function so that the consumption of high-priority functions is not affected by the execution of other functions under the same account. The following figure shows the relationship between queues:

Figure 2

Let’s assume user A has two different task functions. Task A executes messages one by one because of a limitation in its downstream services. Task B is a high-concurrency task that is expected to finish as soon as possible.

In the default mode, Tasks A and B share the same user queue, and the following scenario occurs. Because Task A has a concurrency limit, Function Compute throttles the dequeue rate of the entire queue, which delays Task B. Once the current Task A message finishes, Task B gets the opportunity to dequeue and concurrency rises. Task B’s messages then take over the resource pool, so Task A struggles to dequeue and may not start a single execution for a long time. As a result, A and B seriously disturb each other.

After the queue is adjusted, Tasks A and B occupy exclusive queues, respectively. In this case, the consumption speeds of Tasks A and B are not affected by each other, and both can meet their own demands.
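The routing rule behind the shared and exclusive modes can be sketched as follows. This is a minimal illustration under stated assumptions, not Function Compute's implementation: the class `QueueRouter`, the method `enable_exclusive_queue`, and the queue names it returns are all hypothetical.

```python
from collections import deque


class QueueRouter:
    """Hypothetical router that decides which queue an async message lands in."""

    def __init__(self):
        # One shared queue per account (the default mode).
        self.account_queues = {}
        # Optional exclusive queues, keyed by (account, function).
        self.function_queues = {}

    def enable_exclusive_queue(self, account_id, function_name):
        """Give one function its own queue so other functions cannot block it."""
        self.function_queues.setdefault((account_id, function_name), deque())

    def enqueue(self, account_id, function_name, payload):
        """Append the message to the right queue and return that queue's name."""
        key = (account_id, function_name)
        if key in self.function_queues:
            queue = self.function_queues[key]
            name = f"{account_id}/{function_name}"
        else:
            queue = self.account_queues.setdefault(account_id, deque())
            name = account_id
        queue.append((function_name, payload))
        return name
```

With this rule, messages for Task A keep flowing through the account queue while Task B, once its exclusive queue is enabled, is consumed independently.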

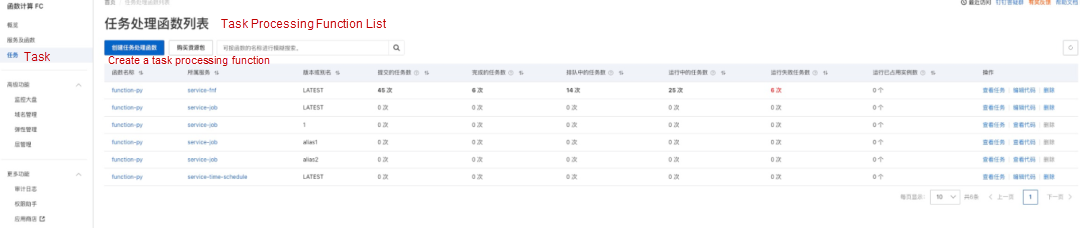

Currently, Serverless Task provides a task backlog dashboard. We can obtain the number of tasks that have been backlogged on the Task page and comprehensively analyze whether we need to enable the exclusive queue of the function.

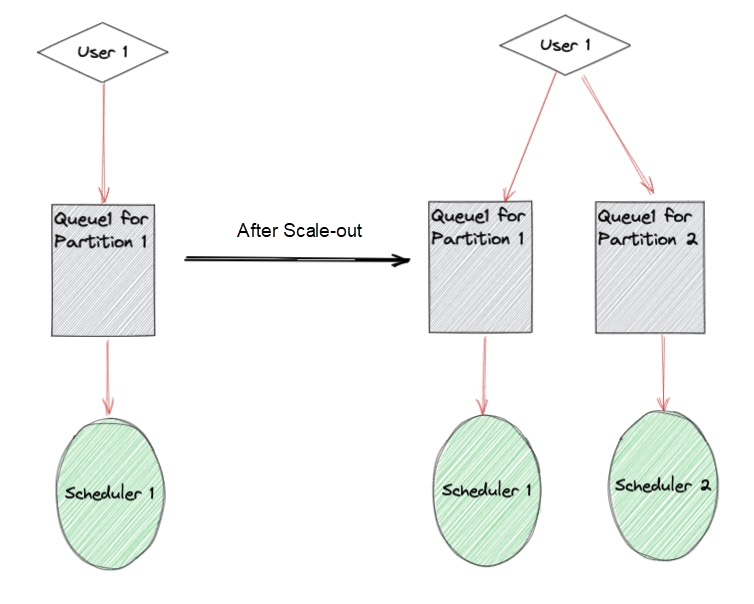

The preceding part describes how function-level queues avoid the Noisy Neighbor problem. However, if the concurrency of a task is too high, a backlog can still build up even when the task has a dedicated queue. The load-balancing policy of Serverless Task addresses this case.

The task processing module of Function Compute includes the concept of a partition. By default, each user belongs to one partition, and the Scheduler responsible for that partition monitors the user’s task queue. When a serious backlog builds up, the system allocates multiple partitions to the user according to the load and assigns different Schedulers to consume tasks, which raises the overall consumption speed.

Figure 3
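The partition scale-out described above can be sketched as a simple sizing rule. This is only an illustration: the article does not state the actual thresholds or assignment strategy, so `backlog_per_partition`, `max_partitions`, and the round-robin assignment are assumptions.

```python
import math


def partitions_needed(backlog, backlog_per_partition=1000, max_partitions=8):
    """Return how many partitions to allocate for a given backlog size.

    Both limits are hypothetical tuning knobs, not documented values.
    """
    if backlog_per_partition <= 0:
        raise ValueError("backlog_per_partition must be positive")
    wanted = math.ceil(backlog / backlog_per_partition)
    # Every user keeps at least one partition, and growth is capped.
    return max(1, min(max_partitions, wanted))


def assign_schedulers(num_partitions, schedulers):
    """Spread partitions over the available schedulers in round-robin order."""
    if not schedulers:
        raise ValueError("at least one scheduler is required")
    return {p: schedulers[p % len(schedulers)] for p in range(num_partitions)}
```

A user with no backlog stays on a single partition, while a growing backlog adds partitions, each consumed by its own scheduler where enough schedulers exist.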

As shown above, Alibaba Cloud Function Compute (FC) achieves multi-tenancy and isolation in task queue management by default, and it can be applied to most scenarios. Function Compute also supports horizontal scale-out to speed up consumption in scenarios with a heavy load, long task execution, and high concurrency. In terms of task isolation, Function Compute supports separate isolation for functions with different priorities to avoid the Noisy Neighbor problem.

The observability of a task is one of the essential capabilities of the task system. Strong observability will help the business side reduce the additional workload required at all stages of the task operation.

The main demand of the business development phase is to quickly debug and locate problems. In this phase, Serverless Task provides abilities for instance login and real-time logging.

After the code is developed and uploaded, the test, debug, modify, and retest cycle can be carried out in the console, which significantly improves development efficiency. If performance debugging or third-party binary debugging (such as FFmpeg debugging in audio and video processing) is required, we can log on to the instance.

The procedure is listed below:

Select the task whose instance you want to log on to and click the instance link.

Enter the Instance Monitoring page and click Log On to Instance in the upper-right corner to log on to the corresponding instance.

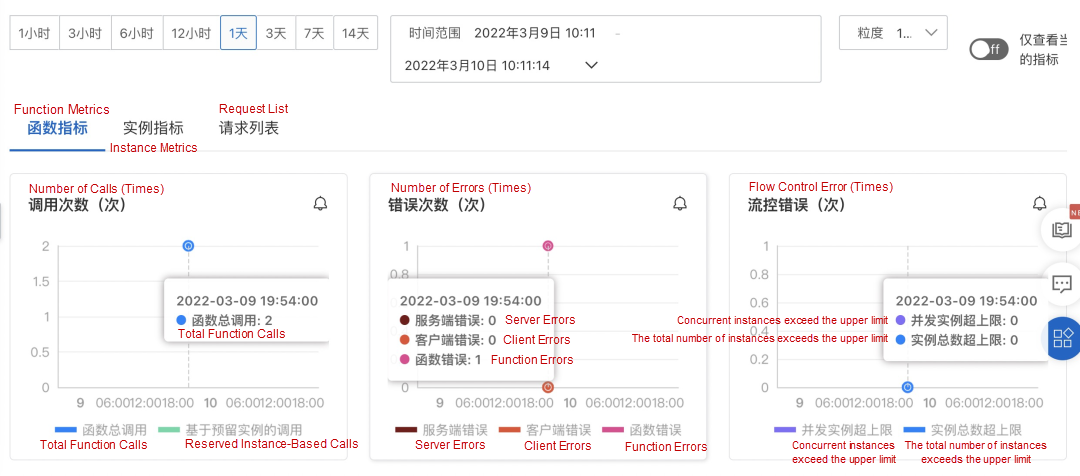

When a service goes live, insufficient capacity estimation often leads to faults in which the downstream system cannot bear the pressure. Therefore, Serverless Task provides runtime metrics (the number of tasks submitted, completed, and executed over a period). Users can quickly learn the current business load from this metric chart. When the downstream consumption of user tasks is slow, tasks may back up. The chart reflects this situation as well, so we can respond accordingly.

The task monitoring dashboard provides the following task-monitoring data:

| Monitoring Metrics | Description |
| --- | --- |
| Number of Submitted Tasks | The total number of tasks submitted in the past minute, including the number of running, completed, and dequeued tasks |
| Number of Completed Tasks | The number of tasks completed in the past minute, including tasks executed successfully or failed |
| Number of Tasks in the Queue | The number of tasks submitted in the past minute that are still in the queue. If the number is not 0, there is a task backlog. |
| Number of Running Tasks | The number of running tasks submitted in the last minute |
| Number of Tasks That Failed to Run | The number of tasks submitted in the last minute that failed to run |
| Number of Instances Occupied by Running | The number of tasks submitted in the last minute that are running successfully |
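The backlog rule from the table (a non-zero number of tasks in the queue means a backlog) can be turned into a simple check. The sketch below assumes the per-minute values have already been fetched from the dashboard or an API. The `MinuteMetrics` type and the three-minute window are hypothetical, chosen only to filter out short spikes.

```python
from dataclasses import dataclass


@dataclass
class MinuteMetrics:
    """One minute of task metrics; field names are illustrative only."""
    submitted: int
    completed: int
    queued: int
    running: int
    failed: int


def has_backlog(sample):
    """A non-zero queue length means tasks are waiting, as the table states."""
    return sample.queued > 0


def sustained_backlog(samples, minutes=3):
    """True when every one of the most recent `minutes` samples shows a backlog."""
    if minutes <= 0 or len(samples) < minutes:
        return False
    return all(has_backlog(s) for s in samples[-minutes:])
```

A sustained backlog on one function is the kind of signal that suggests enabling an exclusive queue for it.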

To locate problems quickly, Function Compute allows users to view function logs and instance metrics in real time. We can go to the Task List page, find the tasks that failed, and open the Log page and Instance page to locate the problem.

When an online task runs for a period, a series of periodic audits are often required, such as the total number of tasks executed in the previous week, the number of failed tasks executed, and the time of failed execution. In addition to the console, Function Compute provides various API capabilities to audit tasks. It mainly includes the following capabilities:

If a task has an upstream and downstream TraceID, we can specify a meaningful task ID when triggering the task. Later, range queries can be performed based on the ID prefix.
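The idea behind an ID-prefix range query can be sketched with a sorted list of task IDs. This is a local illustration of the technique, not the Function Compute API: the function `query_by_prefix` and the ID format in the usage note are hypothetical.

```python
from bisect import bisect_left


def query_by_prefix(sorted_ids, prefix):
    """Return all task IDs that start with `prefix`.

    `sorted_ids` must be sorted in ascending order. All IDs sharing a
    prefix are adjacent in that order, so one binary search finds the
    start of the range and a short scan collects the rest.
    """
    start = bisect_left(sorted_ids, prefix)
    matches = []
    for task_id in sorted_ids[start:]:
        if not task_id.startswith(prefix):
            break
        matches.append(task_id)
    return matches
```

For example, if tasks are triggered with IDs such as `order-1001-resize` and `order-1001-transcode`, querying the prefix `order-1001` returns every task that belongs to that upstream trace.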

The filtering methods above can be combined to achieve more convenient effects. The following figure shows the filtering conditions supported by the console.


There is a very important concept in the field of messages: dead-letter queues. When some messages cannot be consumed, these messages often need to be stored in one place for subsequent human intervention to avoid business losses caused by unprocessed messages.

Serverless Task supports a similar feature. Users can set a target for Serverless Task. When a task fails, Function Compute can automatically push the context information of the failed execution to Message Queue or another message service for subsequent processing. If the user’s processing logic can be automated, Function Compute can also push the context information of failed tasks back to Function Compute and execute custom business logic to implement business compensation.

Users can configure success and failure targets on the Asynchronous Call Configuration page.
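The success and failure targets behave roughly like the dispatch logic below. This is a conceptual sketch only: `run_with_targets` and the shape of the context dictionary are hypothetical, and in the real service the targets are configured in the console rather than passed as callables.

```python
def run_with_targets(handler, payload, on_success=None, on_failure=None):
    """Run a task and push its context to the matching target.

    `on_failure` plays the role of a dead-letter queue or a compensating
    function; `on_success` forwards the result to a downstream consumer.
    """
    context = {"payload": payload}
    try:
        context["result"] = handler(payload)
    except Exception as exc:
        # Keep the failure details so a person or a function can act on them.
        context["error"] = repr(exc)
        if on_failure is not None:
            on_failure(context)
        return context
    if on_success is not None:
        on_success(context)
    return context
```

Passing a list's `append` method as `on_failure` gives a minimal in-memory dead-letter queue, while passing another function models automated compensation.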


In summary, the observability provided by Serverless Task can effectively support the monitoring requirements of the entire lifecycle of the task. All console capabilities can be customized using open APIs to meet more requirements.

The target feature of Serverless Task can compensate for task failures and serve as a data source in Event-Driven mode to deliver processed events to downstream services automatically.
