By Jingxiao

Serverless is widely regarded as a best practice and a future trend in cloud computing. Its fully managed, O&M-free experience and pay-as-you-go pricing have made it highly regarded in the cloud-native era. Serverless application scenarios have expanded from specific ones, such as event-driven workloads and data processing, to general-purpose web applications, microservices, and AI, and Serverless has penetrated e-commerce, interactive entertainment, travel, and traditional industries.

As Serverless became popular, developers and operations personnel recognized its core value of reducing costs and improving efficiency. However, they were also troubled by the side effects of a fully managed service, such as vendor lock-in, black-box optimization, and full shielding of the underlying environment. The resulting problems urgently need to be solved. For example, migration costs are high, and problems and their causes are difficult to troubleshoot and analyze.

Openness means users can migrate from any platform to Serverless without changing their applications, whatever the language. It means users can obtain the core data of the entire lifecycle of Serverless applications out of the box. It also means users can integrate their original architecture with the Serverless architecture to achieve interoperability and colocation across on-cloud and off-cloud environments.

After embracing openness, Serverless will no longer be an experimental technology that is unusable until users adapt to it, nor an isolated data island cut off from the original technical system. Instead, Serverless will carry the ideas of technology developers and become one of the shortest paths to making technology more inclusive, universal, and shared.

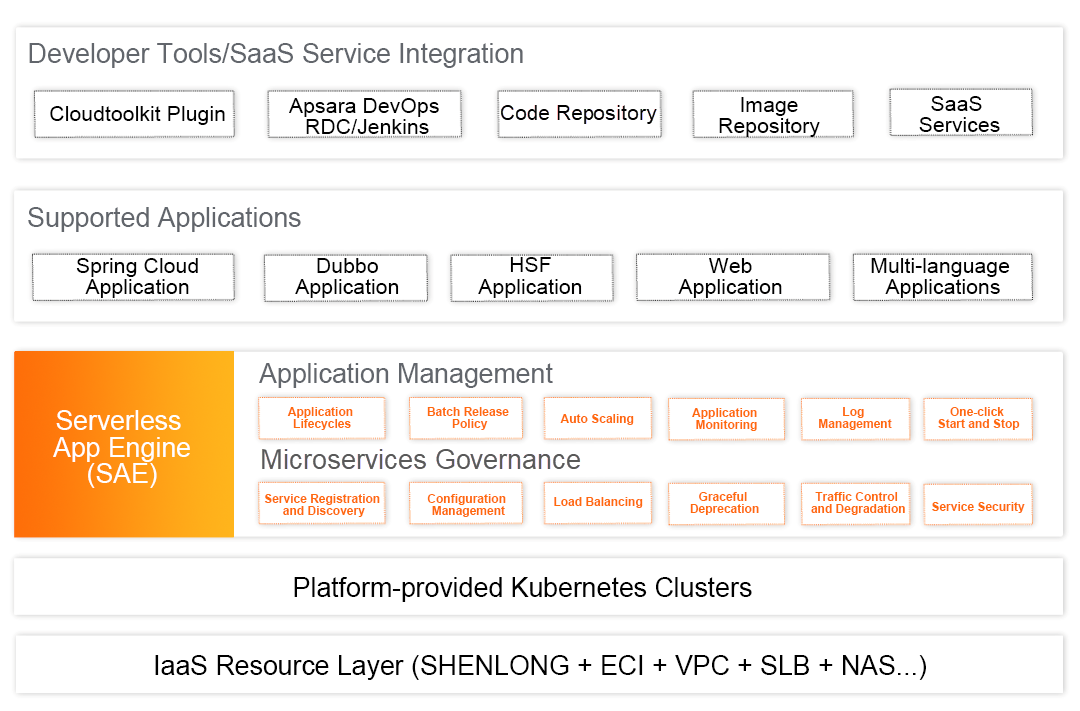

Alibaba Cloud continues to invest in the Serverless field and maintains a leading position in technical competitiveness. Serverless App Engine (SAE) is the industry's first application-oriented Serverless PaaS and provides a comprehensive application hosting solution with lower costs and better efficiency.

Since its internal incubation began in 2018, SAE has pursued the Serverless transformation of applications (and tasks) and put it into practice under the principle of zero threshold and zero transformation. It actively integrates into the product development process and improves the end-to-end DevOps experience, and it has helped more than 1,000 customers move their applications to cloud-native Serverless in three years. SAE's existing and upcoming features all revolve around embracing openness. Let's take a look at the features SAE currently provides and the thinking behind them:

Many users do not build their applications on Serverless from scratch. Instead, they agree with the Serverless concept and, as their architecture is upgraded and evolves, expect to migrate from the original deployment environment (such as physical machines, ECS, or Kubernetes) to Serverless, or to colocate that environment with Serverless. In this scenario, the cost of migrating and transforming applications is particularly important. However, the perceived difficulty of Serverless and the stereotype of vendor lock-in have discouraged many developers.

As mentioned earlier, SAE focuses on zero-threshold, zero-transformation migration. Applications can be deployed directly to SAE without modifying any code logic. For non-containerized applications, SAE also provides built-in image building and makes the entire CI/CD process streamlined, automated, and visualized through release orders. Let's focus on cross-platform scenarios.

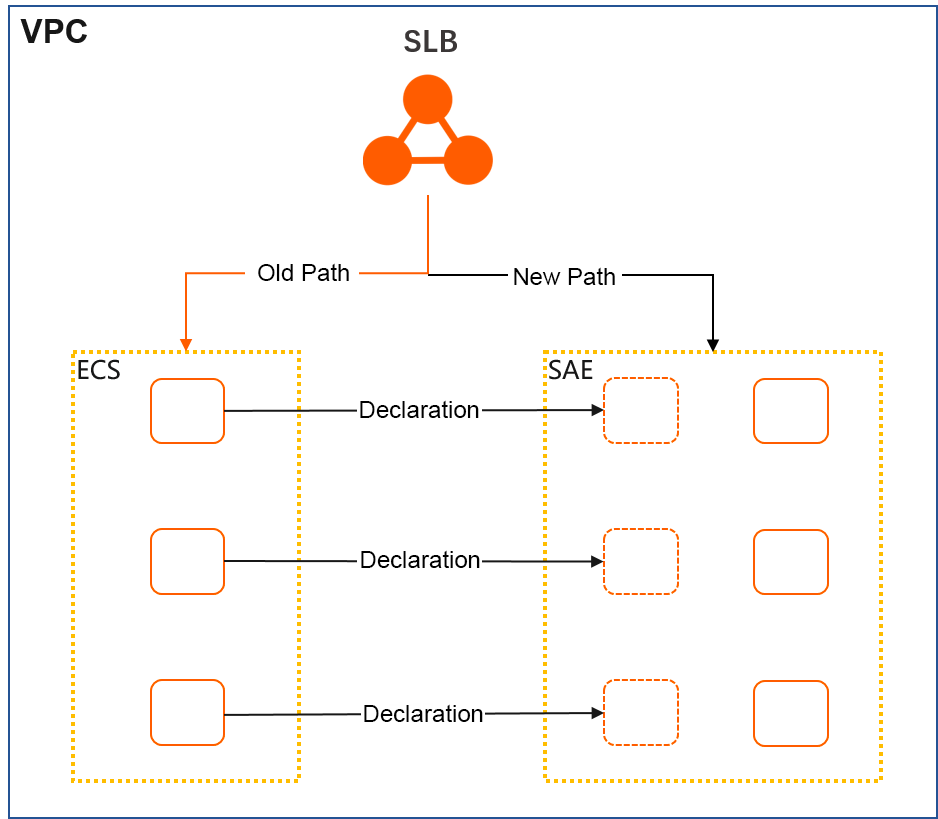

SAE can be colocated with ECS instances. When you migrate existing workloads, this gives you fast elasticity and lets you take advantage of SAE without any development or transformation.

Specifically, you add the existing ECS instances to the SLB backend VServer group that the SAE application is declared to use. The SAE application then automatically maintains its own backend instances in the SLB instance during operations such as deployment, scaling, stopping, starting, restarting, and vertical scaling, and all backends serve external traffic uniformly.
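The colocation idea can be illustrated with a small in-memory model. This is only a sketch under stated assumptions: `Backend`, `VServerGroup`, and `reconcile_sae` are hypothetical names invented for illustration and do not correspond to the SLB or SAE APIs. It shows ECS entries staying fixed while the SAE entries are kept in sync with whatever SAE instances are currently running.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Backend:
    """One entry in a (hypothetical) SLB backend VServer group."""
    instance_id: str
    kind: str       # "ecs" (added by the user) or "sae" (managed by the platform)
    port: int
    weight: int = 100


@dataclass
class VServerGroup:
    """In-memory stand-in for an SLB backend VServer group."""
    backends: dict = field(default_factory=dict)

    def add(self, backend: Backend) -> None:
        self.backends[backend.instance_id] = backend

    def reconcile_sae(self, running_sae_ids: set, port: int) -> None:
        """Make the SAE entries match the set of running SAE instances.

        ECS entries are never touched, so existing servers keep serving
        while SAE instances come and go.
        """
        stale = [
            b.instance_id
            for b in self.backends.values()
            if b.kind == "sae" and b.instance_id not in running_sae_ids
        ]
        for instance_id in stale:
            del self.backends[instance_id]
        for instance_id in running_sae_ids:
            if instance_id not in self.backends:
                self.add(Backend(instance_id, "sae", port))

    def ids(self, kind: str) -> list:
        return sorted(b.instance_id for b in self.backends.values() if b.kind == kind)


group = VServerGroup()
group.add(Backend("ecs-1", "ecs", 8080))
group.add(Backend("ecs-2", "ecs", 8080))

# Scale-out: two SAE instances start.
group.reconcile_sae({"sae-a", "sae-b"}, port=8080)
# Redeploy: sae-a is replaced by sae-c.
group.reconcile_sae({"sae-b", "sae-c"}, port=8080)

print(group.ids("ecs"), group.ids("sae"))
```

In this model the same reconciliation step would run after every lifecycle event, which is one plausible way to read "automatically maintains the backend instances".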

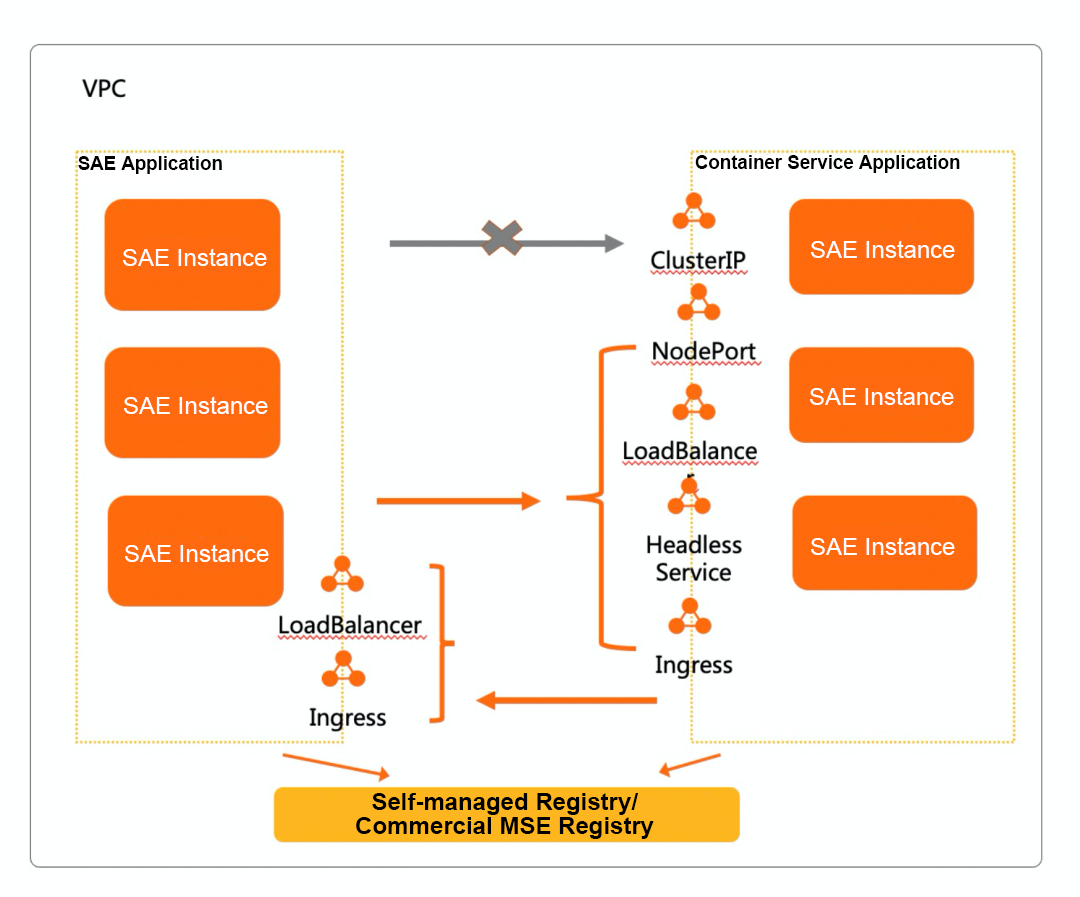

SAE allows you to exchange traffic with Kubernetes (ACK). You can expose the application service address through a public SLB instance or Ingress, or through a private SLB instance in the same VPC combined with PrivateZone to resolve an internal domain name. In microservice scenarios, you can also use the same registry so that Kubernetes pods and SAE instances communicate with each other without changes to the original architecture.
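The shared-registry approach can be sketched with a toy in-memory registry. Everything here is an assumption made for illustration: the `Registry` class, the service name, and the addresses are hypothetical, and a real deployment would use an actual registry product rather than this stand-in. The point is only that a consumer resolves a service by name and does not care which platform hosts the instance.

```python
import itertools
from collections import defaultdict


class Registry:
    """In-memory stand-in for a shared service registry (hypothetical)."""

    def __init__(self):
        self._instances = defaultdict(list)
        self._cursors = {}

    def register(self, service: str, address: str, platform: str) -> None:
        self._instances[service].append((address, platform))
        # Rebuild the round-robin cursor so new instances are picked up.
        self._cursors[service] = itertools.cycle(list(self._instances[service]))

    def pick(self, service: str):
        """Return the next (address, platform) pair in round-robin order."""
        if service not in self._cursors:
            raise LookupError(f"no instances registered for {service!r}")
        return next(self._cursors[service])


registry = Registry()
# A Kubernetes pod and an SAE instance register under the same service name.
registry.register("inventory", "10.0.1.5:8080", platform="k8s")
registry.register("inventory", "10.0.2.7:8080", platform="sae")

# The consumer only asks for "inventory"; calls alternate across platforms.
picked = [registry.pick("inventory") for _ in range(4)]
for address, platform in picked:
    print(address, platform)
```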

Prometheus is an open-source monitoring and alerting system. Its features include a multi-dimensional data model, the flexible PromQL query language, and data visualization, and it has become the de facto standard for cloud-native monitoring.

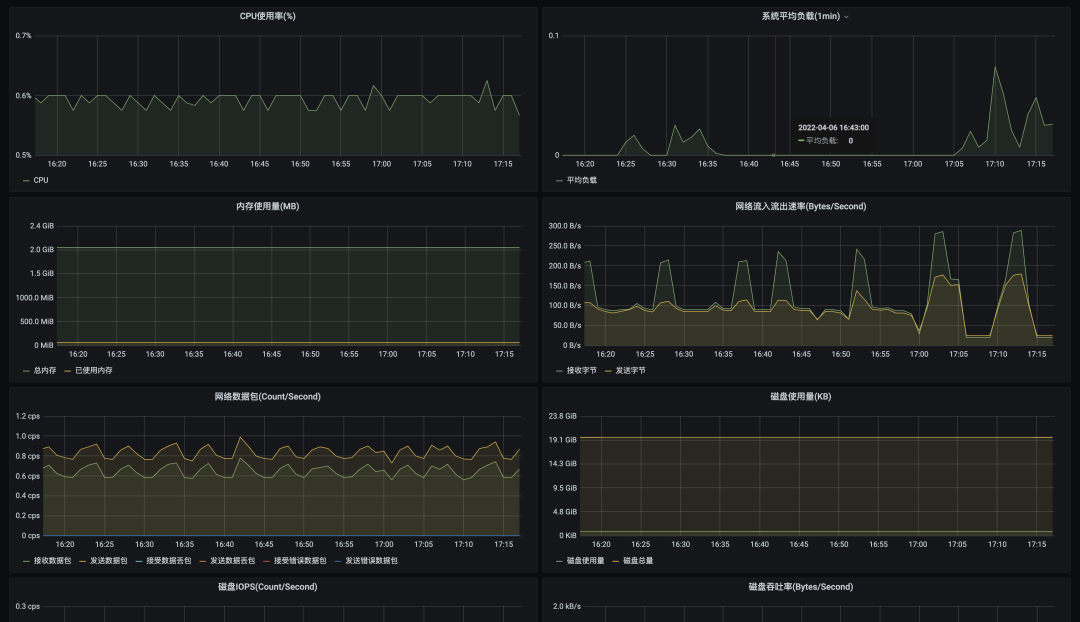

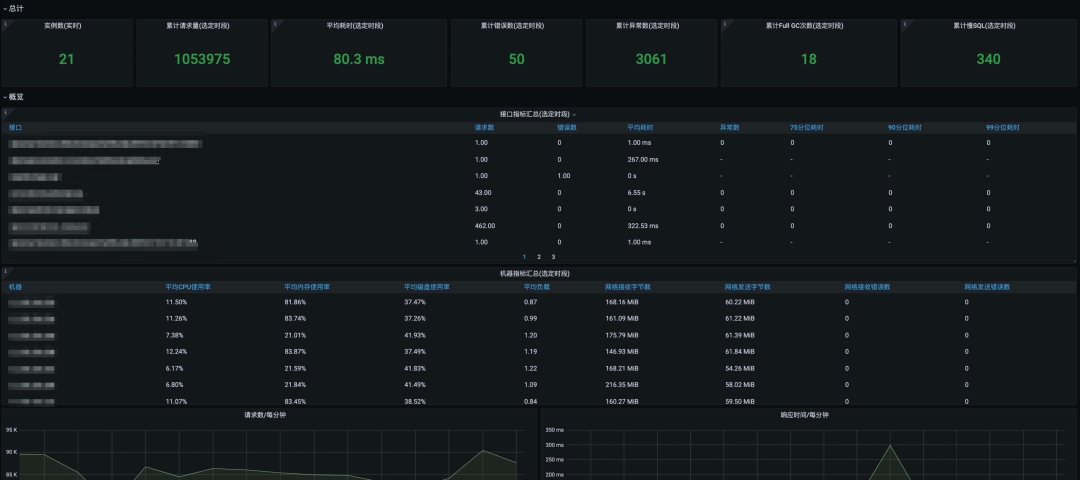

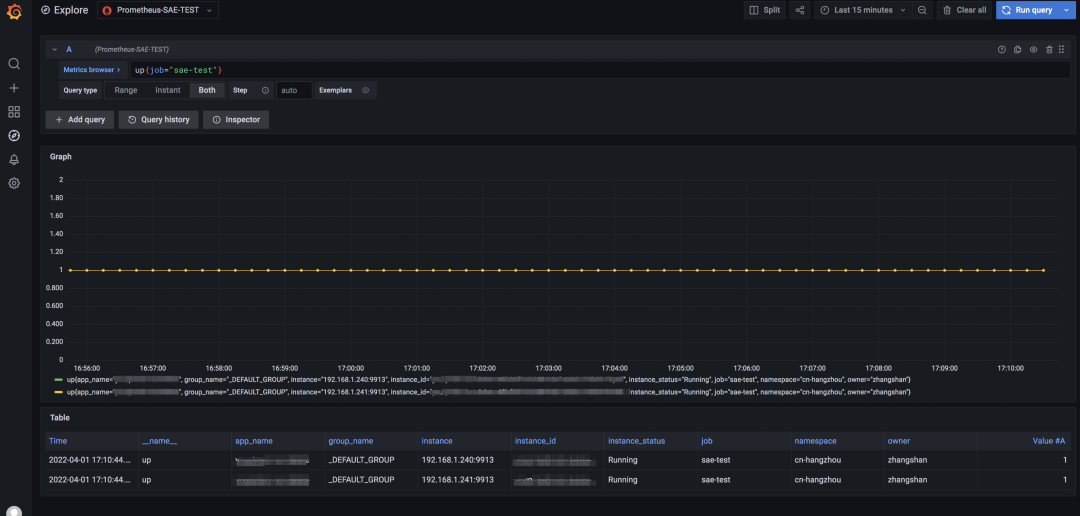

SAE provides convenient observability. It is fully compatible with the Prometheus ecosystem and provides core metric data to meet the requirements of flexible configuration, customization, and scalability in the monitoring field.

SAE collects and analyzes CPU, load, memory, network, and disk data from the instances on which an application runs. It displays the data in dynamic graphs so that you can track the running status of the application in real time. The collected data is pre-integrated into Prometheus, and a visualization dashboard is preconfigured. You can also use Grafana to configure custom dashboards.

For Java and PHP applications, SAE uses agent technology to collect tracing data such as per-interface RED metrics (rate, errors, and duration). This data is also pre-integrated into Prometheus and the visualization dashboard. You can use Grafana to configure custom dashboards.
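RED stands for rate, errors, and duration. As a rough illustration of what those three numbers mean per interface, the sketch below computes them from a handful of made-up request records. The `Request` type, the sample data, and the choice to count only 5xx responses as errors are assumptions for this example, not a description of how the SAE agent works.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Request:
    endpoint: str
    status: int          # HTTP status code
    duration_ms: float


def red_summary(requests, window_seconds):
    """Return {endpoint: (requests_per_second, error_ratio, avg_duration_ms)}."""
    grouped = {}
    for req in requests:
        grouped.setdefault(req.endpoint, []).append(req)

    summary = {}
    for endpoint, items in grouped.items():
        rate = len(items) / window_seconds
        # Assumption: only server-side failures (5xx) count as errors.
        error_ratio = sum(1 for r in items if r.status >= 500) / len(items)
        avg_ms = mean([r.duration_ms for r in items])
        summary[endpoint] = (rate, error_ratio, avg_ms)
    return summary


# Made-up samples observed during a 2-second window.
samples = [
    Request("/orders", 200, 10.0),
    Request("/orders", 200, 20.0),
    Request("/orders", 500, 30.0),
    Request("/orders", 200, 40.0),
    Request("/health", 200, 1.0),
    Request("/health", 200, 3.0),
]
summary = red_summary(samples, window_seconds=2)
for endpoint, (rate, error_ratio, avg_ms) in sorted(summary.items()):
    print(f"{endpoint}: {rate:.1f} req/s, {error_ratio:.0%} errors, {avg_ms:.1f} ms avg")
```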

For applications in other languages, SAE uses eBPF technology to obtain Layer 7 monitoring data non-intrusively, so the whole process is transparent to users. This multi-language monitoring data is also pre-integrated into Prometheus. You can use Grafana to configure custom dashboards.

SAE applications can also instrument their own business logic, expose custom metrics, and use the service discovery capability in the VPC to connect to Prometheus. This keeps the entire pipeline available even though instances are constantly changing.
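Exposing a custom metric can be as small as serving a text page in the Prometheus exposition format. The sketch below uses only the Python standard library; the `orders_total` metric, the `record_order` helper, and port 8000 are hypothetical choices for illustration. How Prometheus discovers and scrapes the endpoint inside the VPC is not shown here.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical business counters; a real application would update these
# from its own request handlers.
ORDERS_TOTAL = {"paid": 0, "cancelled": 0}


def record_order(status: str) -> None:
    ORDERS_TOTAL[status] = ORDERS_TOTAL.get(status, 0) + 1


def render_metrics() -> str:
    """Render the counters in the Prometheus text exposition format."""
    lines = [
        "# HELP orders_total Number of orders processed, by final status.",
        "# TYPE orders_total counter",
    ]
    for status in sorted(ORDERS_TOTAL):
        lines.append(f'orders_total{{status="{status}"}} {ORDERS_TOTAL[status]}')
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics().encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8000) -> None:
    """Block and serve /metrics so that a Prometheus server can scrape it."""
    HTTPServer(("0.0.0.0", port), MetricsHandler).serve_forever()


record_order("paid")
record_order("paid")
record_order("cancelled")
print(render_metrics(), end="")
```

Calling `serve()` from the application's entry point would start the endpoint; it is left uncalled here so the snippet exits after printing the rendered metrics.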

As the product has developed, we have become deeply aware that Serverless does not mean we can optimize the servers as a complete black box as we wish, expecting users to fully trust the product's internal operations while telling them to perform O&M in line with the Serverless model. That approach conflicts with users' existing knowledge and traditional O&M habits, and it hinders timely troubleshooting and root cause isolation.

Based on user demands and pain points, SAE offers several solutions and best practices to optimize and improve Serverless O&M capabilities.

Logging on to an instance to collect information and troubleshoot issues is an essential part of traditional O&M, and many mature diagnostic tools already exist for the Linux environment. SAE recognizes that users should not have to passively hand over their entire application and environment to a third-party vendor.

SAE provides the Webshell feature by exposing application instances. Users can access Serverless instances and perform O&M operations in the same way as on a local host. SAE also provides one-click tool installation, which makes up for commands that have been removed from custom images and adapts to various operating systems, so problems can be troubleshot more efficiently. Users can download and update tools in a private network environment.

Efficient O&M has always been a focus of SAE. During daily development, deployment, and testing, users often say they want to upload local files or configurations to cloud applications for temporary debugging, or download logs, configurations, Java dumps, and core dumps from cloud applications to their local machines.

To meet this common O&M requirement, SAE provides bidirectional file transfer in Serverless scenarios. SAE implements upload and download without software dependencies and without intruding on the application.

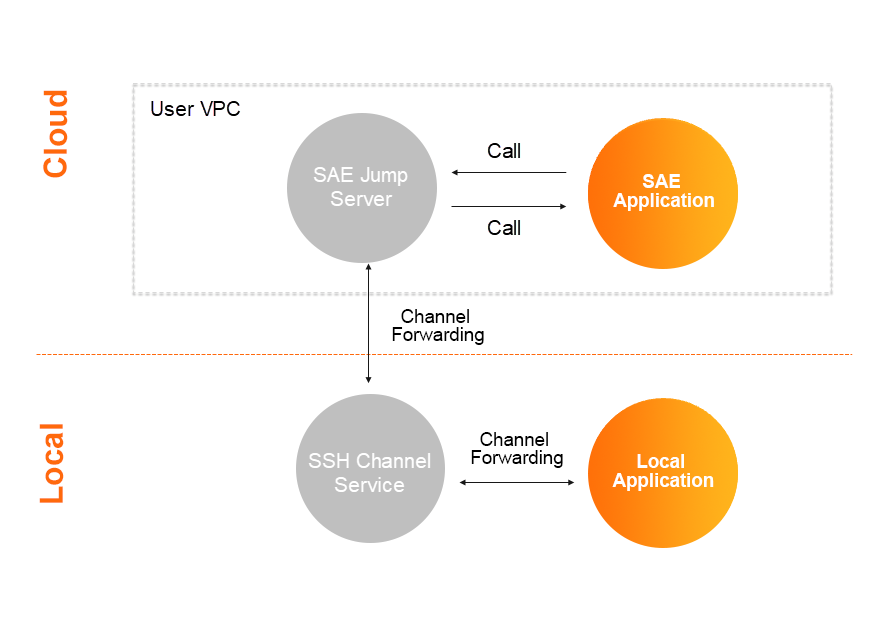

During development and testing, developers are constrained by their dependence on the application environment. Considering the speed and efficiency of startup and deployment, developers are often reluctant to repeatedly redeploy and restart cloud applications and would rather simulate the cloud execution environment for local debugging.

This is also a major challenge in Serverless scenarios. SAE uses a built-in jump server to enable calls in both directions between local services and cloud-based SAE applications. It supports Java/PHP remote debugging and remote access to instances, truly integrating local and cloud environments.

Embracing openness is the next step in the Serverless era. It is both SAE's vision amid the cloud-native wave and the direction SAE will continue to focus on and adhere to. In the future, SAE is committed to providing more powerful technical support and a better experience while minimizing users' transformation and cognitive costs. SAE will continue to embrace openness in deployment architecture, metric data, general-purpose O&M, and other areas to advance the Serverless era.
