The 12th event of the Alibaba Cloud User Group (AUG) was held on September 24, 2022, in Xiamen. At the event site, Shi Mingwei (an Alibaba Cloud Senior Technical Expert) shared "The Future - From Technology Upgrade to Cost Reduction and Efficiency Improvement" with representatives of enterprises. This article is based on the content of the speech.

Hello, everyone. I am glad to share the topic of Serverless with you today.

As the R&D leader for the Serverless event-driven ecosystem, asynchronous system, and Serverless workflow, I hope to help you understand the technical principles behind Serverless and how it helps enterprises reduce costs and improve efficiency. I will also share some best practices: while an enterprise is still transitioning from containerization to Serverless, it can already use Serverless for technical upgrades that reduce costs and improve efficiency. Finally, I will share some Serverless cases from actual production to show how Serverless is used in production and how it solves business pain points.

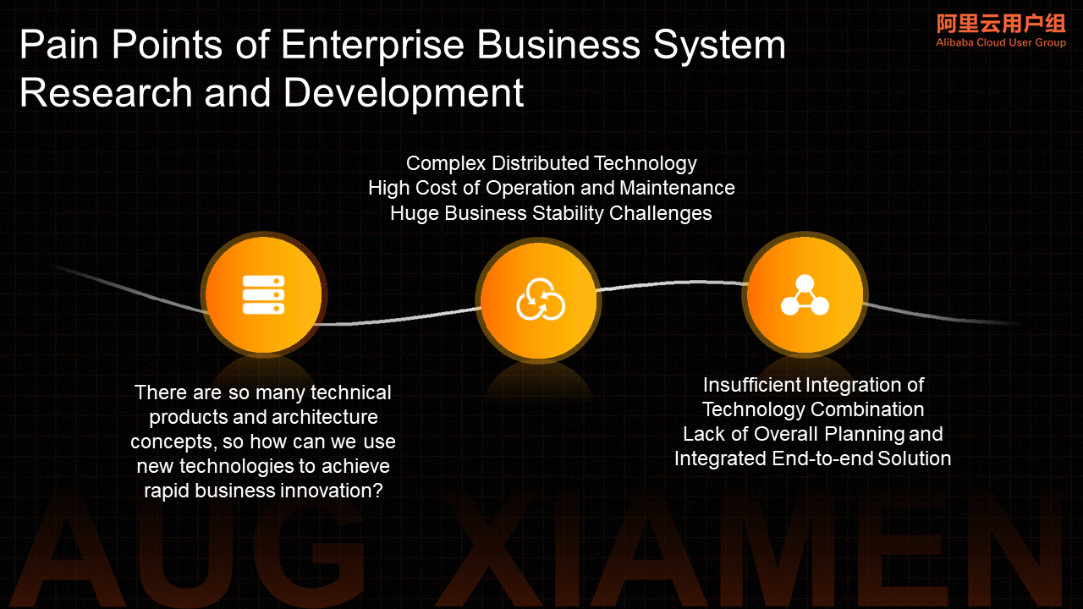

First of all, let's understand the three core driving forces of technological upgrading in the production process of enterprises.

**The first point is rapid business growth.** When an emerging business arrives, its unpredictability makes it difficult to forecast and plan for it or to prepare at the IT level in advance. Enterprises need to be able to provision matching IT capabilities in a short time to support rapid business growth.

**The second point is R&D efficiency improvement.** R&D efficiency can be improved through technical means or personnel optimization.

**The third point is the demand for enterprise IT cost optimization.** Whether a company is at an early stage of development or a stage of stable business growth, it attaches great importance to costs in order to survive or break even, and it looks to technological upgrades to drive cost reduction.

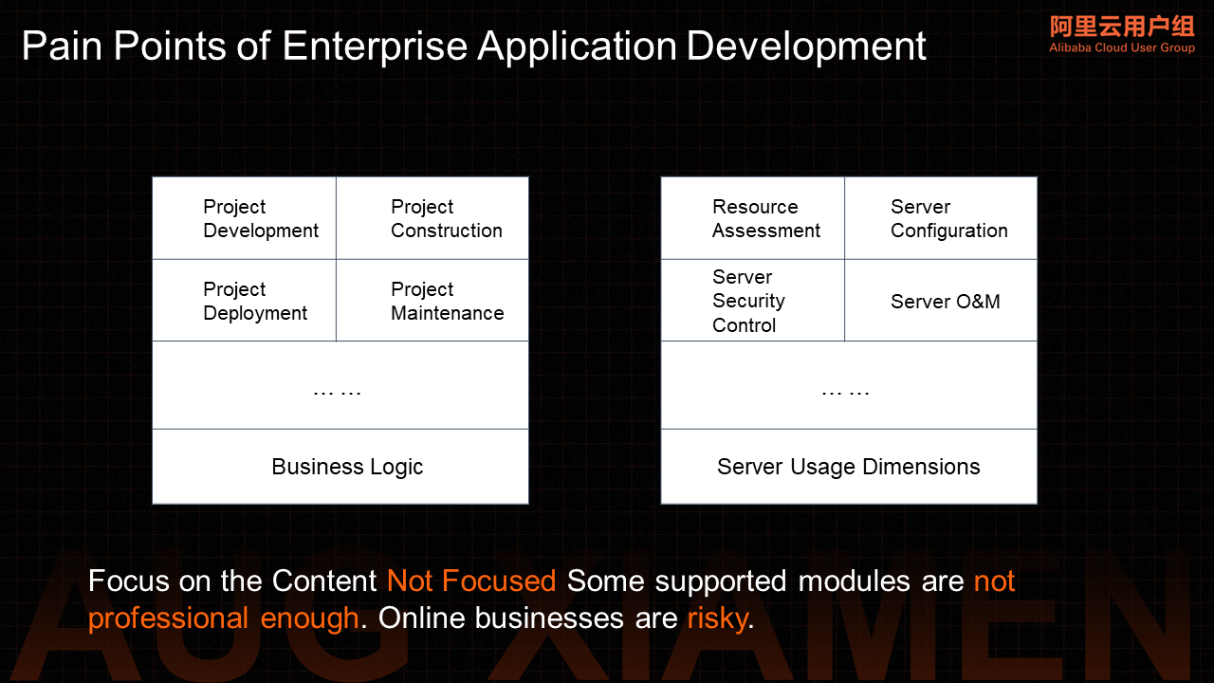

The article The Past and Present of Serverless gives readers a good foundation for understanding why Serverless emerged. What core problem does it want to solve? Enterprise development pursues a few core goals: implement business logic faster, spend less development time on environment setup and system integration, and focus more time on business development.

After development is complete, you need a runtime environment in which to deploy the business code and provide services, along with the maintenance work involved in running it, which is what we call operation and maintenance (O&M). The pain point across this whole process (DevOps) is R&D efficiency, and R&D and O&M engineers feel it most.

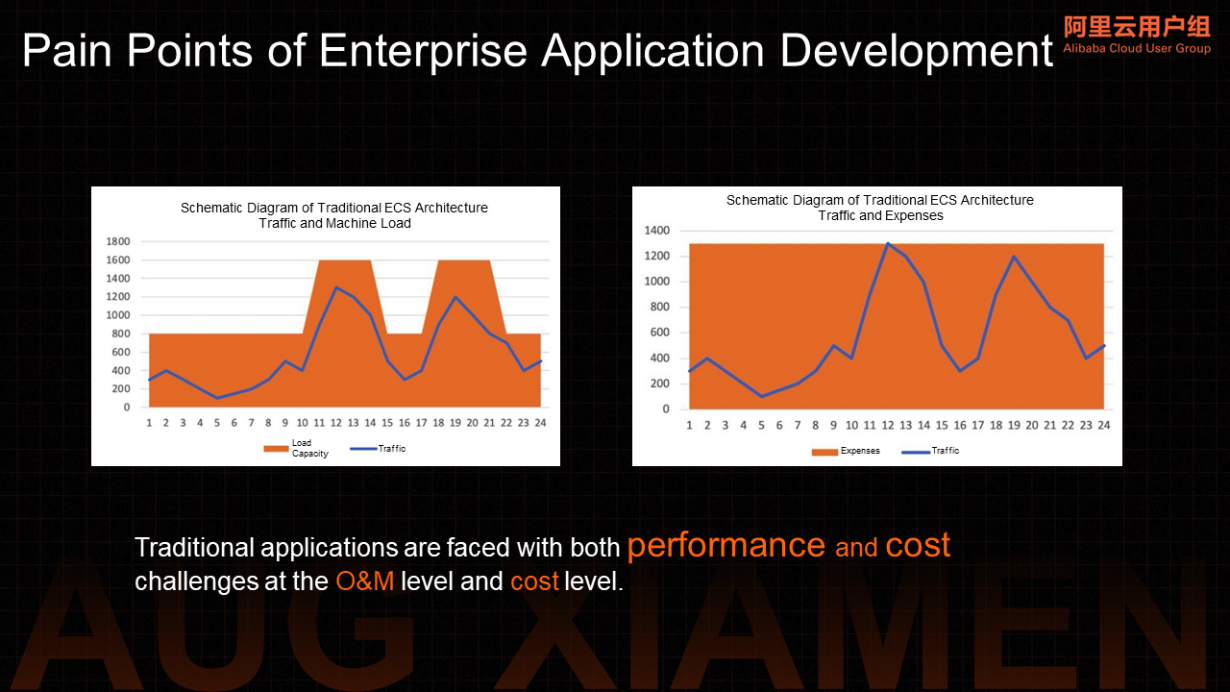

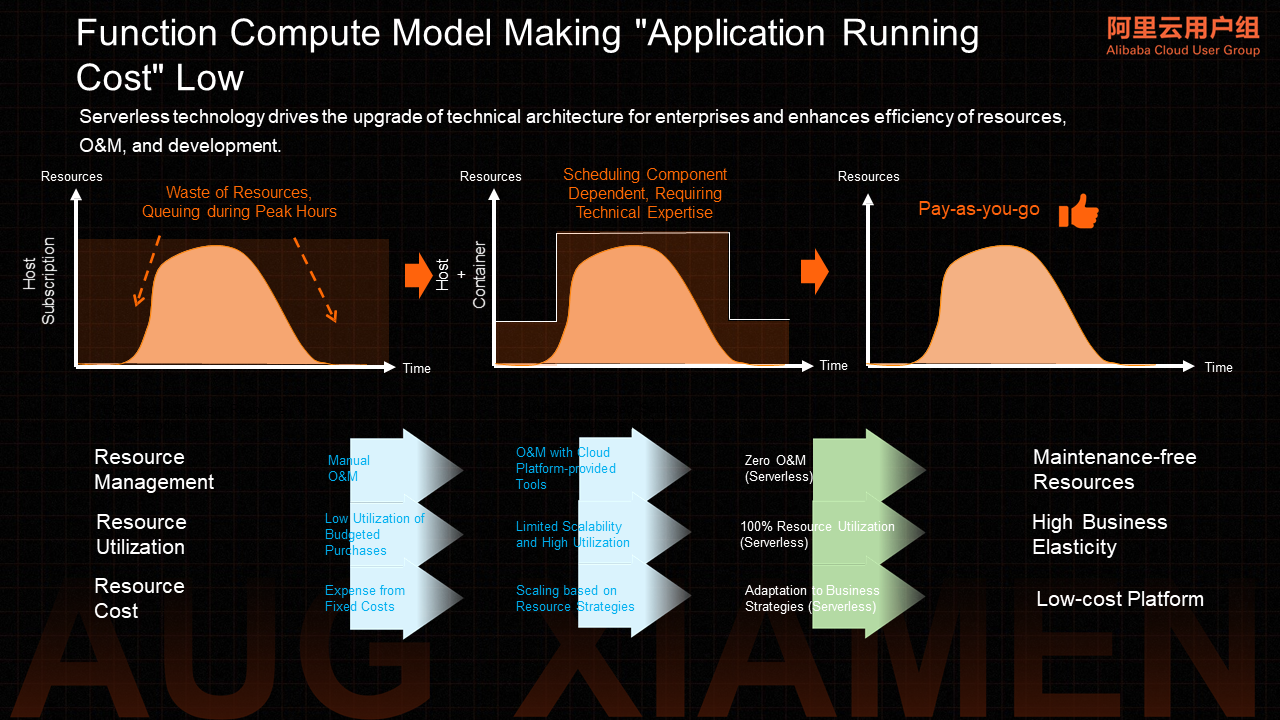

In addition to R&D efficiency, another important thing for enterprises is R&D cost. Here we only discuss the IT cost of enterprise research and development. The ideal model is to pay only for computing that generates business value, that is, computing whose lifecycle matches the lifecycle of business requests. In practice, before real business requests arrive or during the gaps between requests, we still pay for the computing resources we hold, even though they are idle from the business's point of view. Serverless aims to bill per request to reduce customer costs.

Kubernetes or ECS billing is a useful contrast for understanding pay-per-request. Once you purchase a Kubernetes cluster, you start paying. After you create a Pod, the cluster allocates resources to it, and you pay for those resources even if no request traffic arrives. With Serverless, you provide the code and deploy it on the platform. Your code package or container image has been deployed on the Serverless platform and may already be warmed up or running (or in a standby state), but you incur no computing cost before a request arrives or in the interval between two requests.
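
The difference between the two billing modes can be illustrated with a little arithmetic. The following is a minimal sketch, not a pricing calculator: the workload size and both unit prices are hypothetical numbers chosen only for illustration, and real billing includes more dimensions (memory size, request counts, free quotas, and so on).

```python
def reserved_cost(hours_held: float, price_per_hour: float) -> float:
    """Cost when every hour a Pod or VM is held is billed, busy or idle."""
    return hours_held * price_per_hour


def per_request_cost(requests: int, seconds_per_request: float,
                     price_per_second: float) -> float:
    """Cost when only the time spent serving requests is billed."""
    return requests * seconds_per_request * price_per_second


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests per day, 0.2 s of compute each.
    # Hypothetical prices: 0.05 per instance-hour, 0.00002 per compute-second.
    always_on = reserved_cost(hours_held=24, price_per_hour=0.05)
    on_demand = per_request_cost(10_000, 0.2, 0.00002)
    busy_fraction = (10_000 * 0.2) / 86_400
    print(f"busy time: {busy_fraction:.1%} of the day")
    print(f"always-on: {always_on:.2f}  per-request: {on_demand:.2f}")
```

In this made-up workload the instance is busy for only a small fraction of the day, so per-request billing comes out cheaper even though the assumed per-second price is higher than the hourly price divided by 3,600. The gap narrows as utilization rises.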

For enterprises, what we call an application is not just a program but an information system that carries the entire enterprise's business capabilities.

Building a business system involves several stages of architecture selection. The first is the selection of the technical architecture. Many enterprise systems are not built from scratch but evolve iteratively as the business matures. However, when you face a new business system, or one that has to be rebuilt, you must decide what kind of architecture and which open source framework to use. You need to consider the scalability of the architecture, the long-term maintenance of the framework, the maturity of the community, the learning threshold of the technology, the ease of recruiting talent for follow-up business development, and other factors.

In self-built systems, especially in the Internet environment, the O&M burden and stability challenges brought by distributed systems will overwhelm the R&D team. Technological integration is difficult. It brings huge challenges to enterprise self-built distributed business systems.

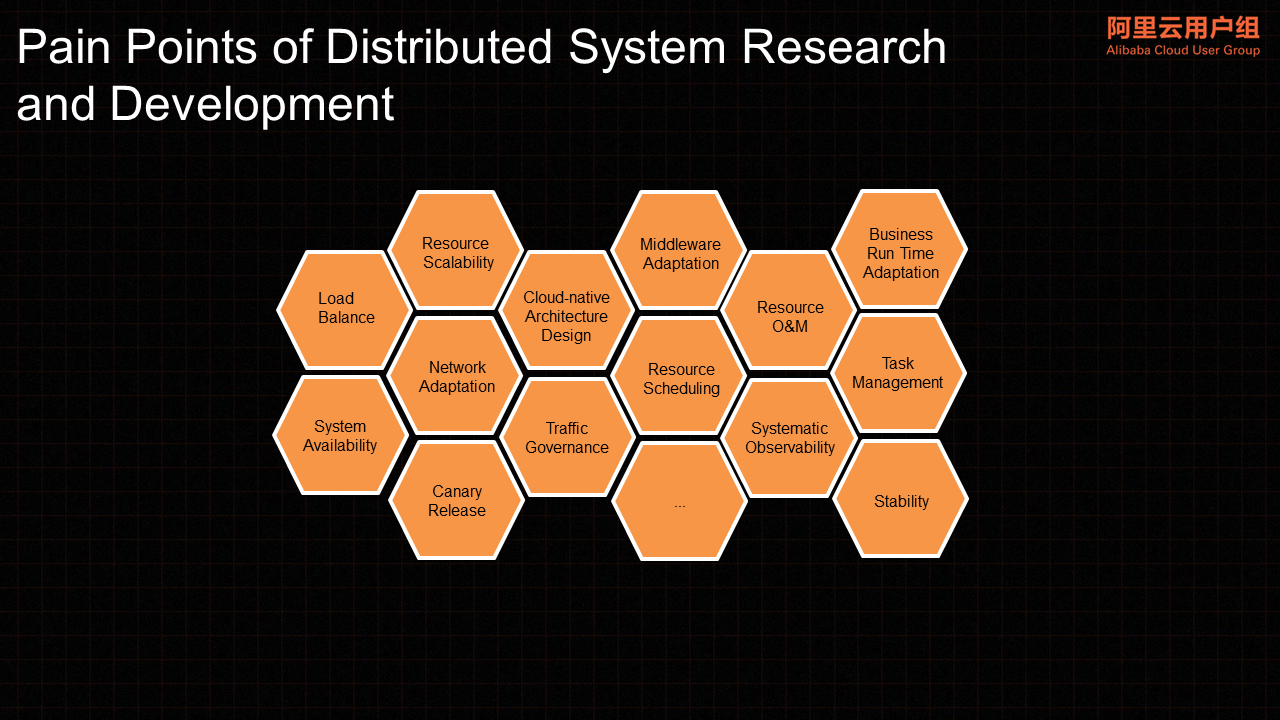

A typical distributed system has to address a series of issues across its main components: load balancing, traffic control, resource scheduling, observability, stability, high availability, and service governance. The continuous R&D investment and O&M burden have become the pain points of in-house development.

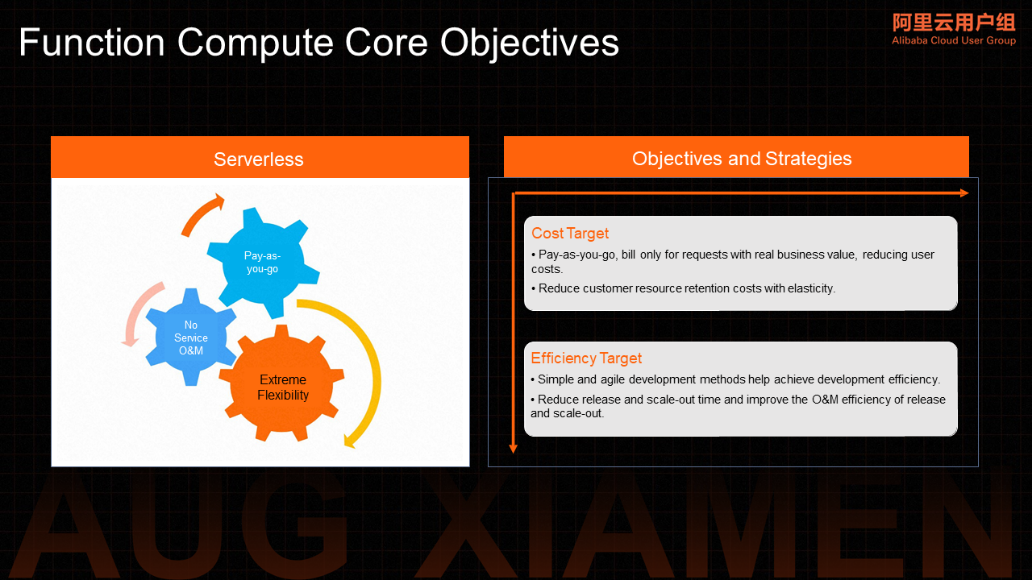

Facing all these demands and challenges, let's review Serverless's original intention before discussing how Serverless technology can meet and solve these demands and problems at the product level. Serverless is a cutting-edge technology of cloud computing. Extreme Flexibility, Serverless O&M, and pay-as-you-go were the goals from the beginning. To achieve these goals, Serverless needs to solve the cost and efficiency problems we face from the perspective of technological upgrading. As the saying goes, "If you go the right way, you are not afraid of going far."

Pay-as-you-go, Serverless O&M, and Extreme Flexibility are three concepts that have found a good balance between the customer's perspective and technical terms. Both the R&D personnel responsible for Serverless technology and the decision-makers responsible for enterprise technology upgrades can understand the value to be realized by Serverless. Around these three concepts, we need to achieve efficiency improvement and cost optimization.

Pay-as-you-go is the business perspective: you pay on demand, per request. Serverless O&M is the operation and maintenance or R&D perspective: you do not want to spend time purchasing servers or on routine O&M work (such as resource auto scaling and health checks). Basic product capabilities must carry both of these, and the most fundamental one is extreme flexibility: resources are provided when they are needed and reclaimed when they are not. Only in this way can we support the logic of pay-as-you-go.

For billing by requests that carry business value, elasticity helps reduce customers' resource costs. The finer the granularity of scaling and the shorter the time idle resources are retained, the closer billed time is to real computing time, and the lower the cost. As for efficiency, the development method must first be simple. If it is complicated, R&D personnel will be reluctant to accept it, and it will not deliver the intended gains. On top of simple development, rapid deployment reduces the time R&D personnel spend on releases and scaling. Together, these are the cost and efficiency goals.

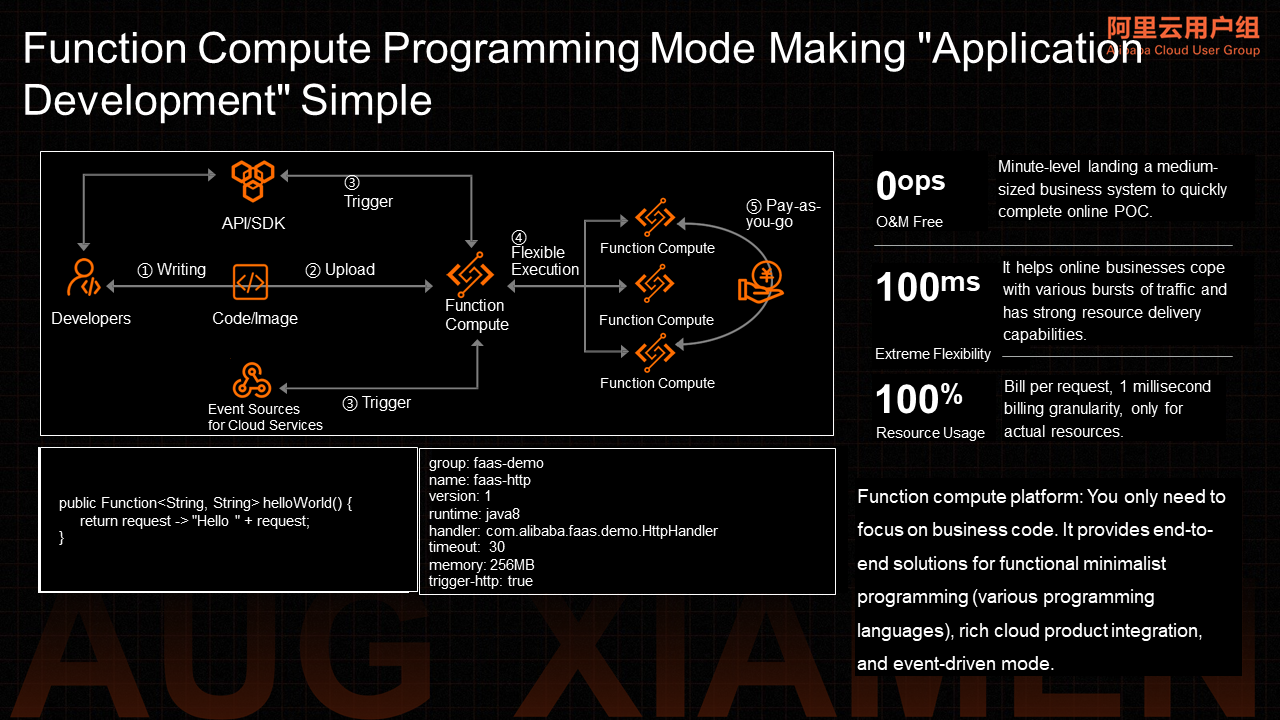

After understanding the basic goals of Serverless, we need to discuss how Function Compute can achieve these two goals. First, we must evaluate what Function Compute can achieve from Function Compute programming patterns. Function Compute is an event-driven and fully managed computing service.

When you use Function Compute, you only need to write and upload code without the need to purchase and manage infrastructure resources (such as servers). Function Compute allocates computing resources, runs tasks elastically and reliably, and provides features (such as log query, performance monitoring, and alerting).
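
To make "you only need to write and upload code" concrete, here is a minimal sketch of what such a function might look like. It assumes a Python runtime that calls an entry point named `handler` with a raw event payload and a context object; the payload shape (a JSON object with a `name` field) is a hypothetical example, not something defined in this talk.

```python
import json


def handler(event, context):
    """Entry point: receives the raw event payload and a runtime context."""
    # Assumption: the payload arrives as JSON text or bytes.
    if isinstance(event, (bytes, bytearray)):
        event = event.decode("utf-8")
    payload = json.loads(event) if event else {}
    name = payload.get("name", "world")  # "name" is a hypothetical field
    return json.dumps({"message": f"Hello, {name}!"})


if __name__ == "__main__":
    # Local smoke test; on the platform, the runtime would call handler().
    print(handler(b'{"name": "Serverless"}', None))
```

Everything outside this function (provisioning, scaling, logging, monitoring) is the part the platform is described as taking over.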

Development at function granularity means each independent functional unit can be developed, debugged, deployed, and launched quickly, which saves a great deal of resource purchasing and environment setup. At the same time, Function Compute is an event-driven model, which means users do not need to handle data transfer between cloud services themselves, eliminating much of the code that would otherwise deal with service endpoints. Together, features such as event-driven execution, function-granularity development, and server-free O&M let Function Compute users focus on business logic development, achieve real technology upgrades, and improve R&D efficiency.

In addition to the improvement in R&D efficiency brought about by the development model, let's look at how Function Compute implements the underlying logic that helps customers reduce costs. Paying according to user requests, that is, according to actual user traffic, is the ideal state. However, per-request billing poses huge technical challenges: Function Compute instances must start in less time than the user's response time (RT) requirement allows, so cold start performance is critical. Extreme flexibility thus becomes the underlying technical support for Serverless pay-as-you-go and business cost reduction. The combination of extreme flexibility and pay-as-you-go is the real underlying logic by which Function Compute reduces costs.

For cloud developers and for enterprise customers trying to upgrade their business alike, the three concepts of pay-as-you-go, Serverless O&M, and Extreme Flexibility have taken root. However, what Serverless can do and how to do it are still the questions we hear most often.

In the initial stage of Serverless research and development, the technical team usually focuses on elasticity and on accelerating cold starts. They hope to highlight the technical competitiveness of their products through elasticity capabilities and establish a leading position in the market. They rely on these capabilities to fulfill the technical goal of Serverless extreme flexibility and to attract developers and enterprise customers to use Serverless. At this stage, we mostly depend on the influence of technology to guide everyone to explore Serverless.

As our understanding of Serverless deepens and the improvement of elasticity enters the deep-water zone, where gains are incremental rather than fundamental, and as customers need to run Serverless in production, we think more about the value of Serverless beyond elasticity. By this point, elasticity covers not only computing resources but also related resources (such as networks and storage), and it permeates every aspect of the product as a basic system capability. We need to consider, from a more systematic perspective, what Serverless can do, what it can bring to customers, and how to let the business focus on the parts that have to be customized.

Before answering what Serverless can do and how to do it, let's first understand what Serverless has done and what out-of-the-box capabilities it can provide.

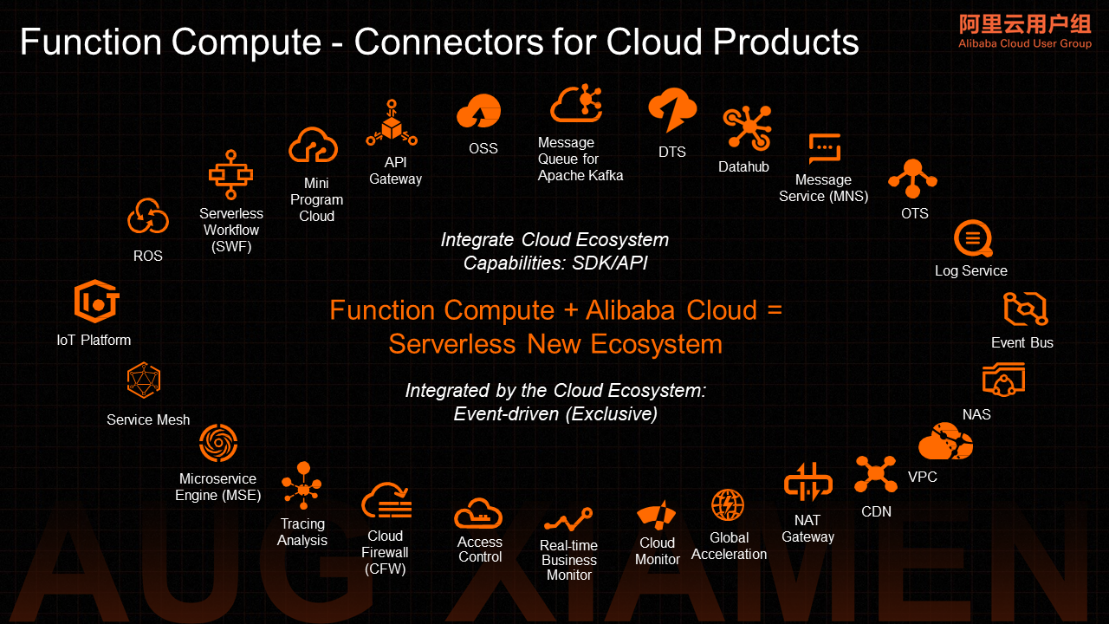

Function Compute, as a computing platform, is not an isolated island. Only by linking with other products of the entire cloud computing ecosystem to form a distributed cloud development environment can it maximize the value of Function Compute and meet the needs of enterprise customers to build business systems based on it.

The greatest value of this linkage is that it solves the problem of connecting the services behind cloud products. It is the foundation of the Function Compute event-driven architecture. Event-driven architecture lets users grasp this otherwise hidden call logic intuitively, and the calls themselves do not need to appear in the user's business logic. Connection means dependency between systems, and this dependency shows up as coupling. The degree of coupling does not indicate how strong the functional dependency is; it reflects how the software architecture was implemented. This is why the software architecture field emphasizes high cohesion and low coupling as implementation requirements.

Event-driven architecture meets this implementation requirement. With it, the software's internal implementation no longer resembles a typical monolithic application or traditional microservice, which must integrate the clients of every dependent service into its own business system.

In the cloud-based development environment, the services carried by cloud products are cohesive, and each plays an important role in the distributed system of a cloud-native architecture. The event notification mechanism between cloud products helps customers build their cloud-native business systems on multiple cloud products. Without it, watching for events between cloud products is complicated and expensive to develop. Beyond the development efficiency gained from product connection, when a user subscribes to an event and provides processing logic, the user has implicitly filtered out the event requests that do not need to be processed. Event-driven means that every event request is a valid driver.
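
The idea that a subscription already filters out irrelevant events can be sketched in a few lines. This is a toy, in-process model for illustration only: `EventRouter`, its method names, and the event fields `type` and `key` are all hypothetical and do not correspond to any real cloud product API.

```python
from typing import Callable, Dict, List

Event = Dict[str, str]


class EventRouter:
    """Toy stand-in for a platform-side event source with subscriptions."""

    def __init__(self) -> None:
        self._subs: List[tuple] = []

    def subscribe(self, event_type: str, key_prefix: str,
                  handler: Callable[[Event], None]) -> None:
        """Register a handler for one event type and one key prefix."""
        self._subs.append((event_type, key_prefix, handler))

    def publish(self, event: Event) -> int:
        """Deliver the event to matching handlers; return invocation count."""
        invoked = 0
        for event_type, key_prefix, handler in self._subs:
            if (event["type"] == event_type
                    and event["key"].startswith(key_prefix)):
                handler(event)
                invoked += 1
        return invoked


if __name__ == "__main__":
    router = EventRouter()
    router.subscribe("object.created", "uploads/", print)
    router.publish({"type": "object.created", "key": "uploads/a.png"})  # handled
    router.publish({"type": "object.deleted", "key": "uploads/a.png"})  # filtered
    router.publish({"type": "object.created", "key": "logs/x.txt"})     # filtered
```

Only the first event reaches the handler, so the business code never runs (and, under per-request billing, is never charged) for events it does not care about.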

Currently, Function Compute integrates with multiple cloud products to build a complete event ecosystem (including API Gateway, message middleware, Object Storage Service, Tablestore, SLS, CDN, big data Datahub, and cloud calls). At the same time, EventBridge is used to access the O&M events (log audit, CloudMonitor, and O&M) of Alibaba Cloud products. This helps customers leverage Function Compute and many cloud products to form a cloud-native business system based on an event-driven architecture.

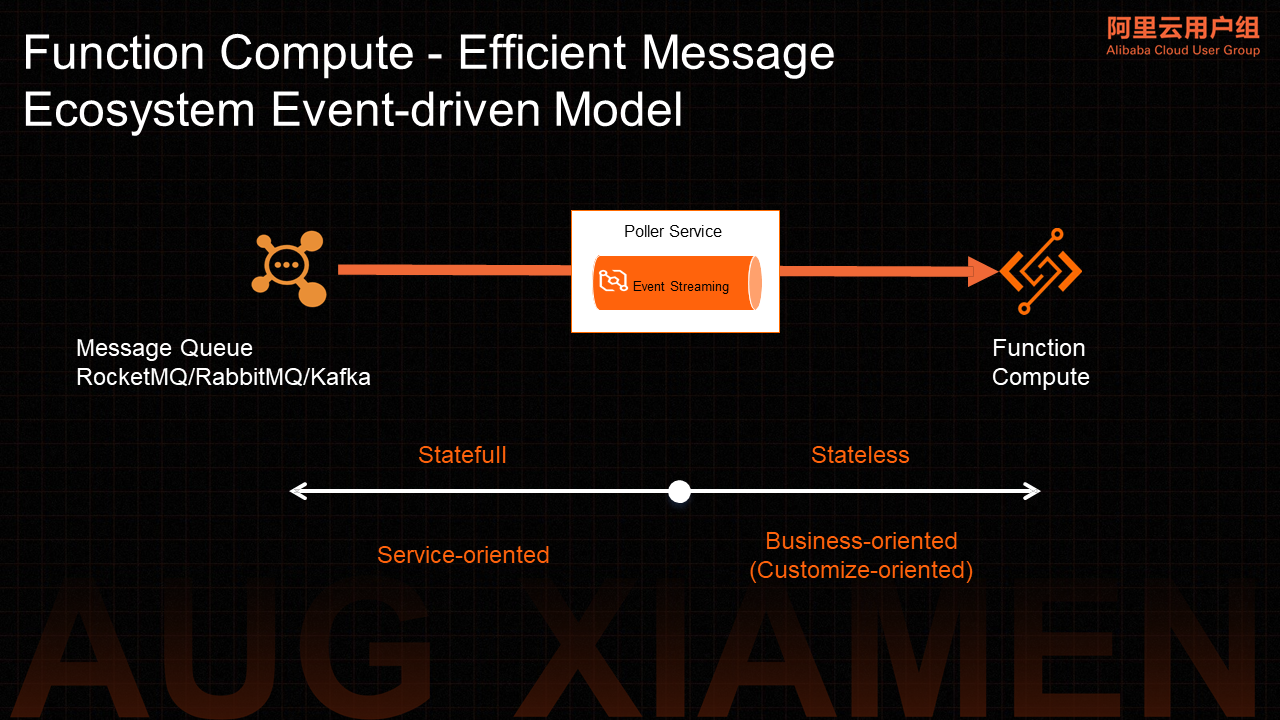

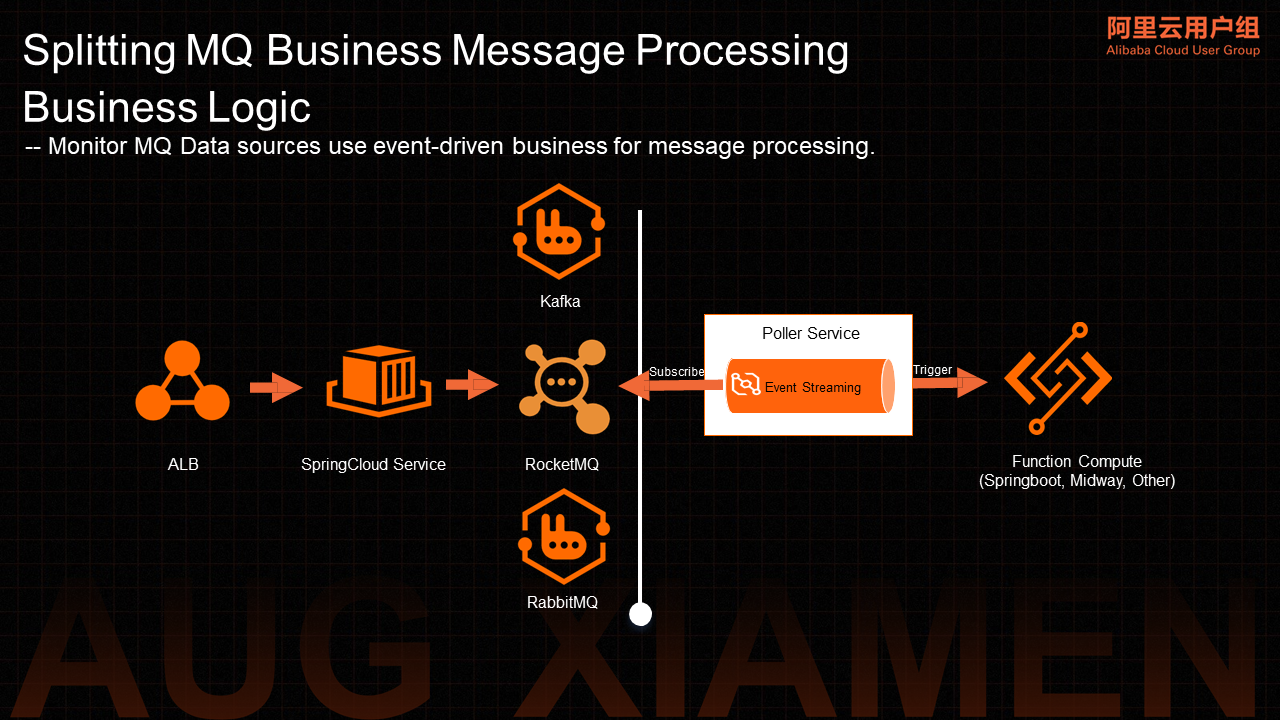

With their asynchronous decoupling and peak-shaving characteristics, message products have become a necessary part of distributed Internet architectures, and Serverless Function Compute targets the same application scenarios. Function Compute has therefore invested at the architecture level in integrating with the message product ecosystem. A general-purpose message consumption service, Poller Service, was built on the EventStreaming channel capability provided by EventBridge. On this architecture, users can trigger functions from multiple message types (such as MNS, RocketMQ, Kafka, and RabbitMQ).

The consumption logic is separated from the business logic and provided by the platform. The message pull model of the traditional architecture is transformed into a Serverless event-driven push model, in which Function Compute carries the computing logic of message processing, realizing Serverless message processing. This architecture relieves customers of integrating and connecting message clients and simplifies the implementation of message processing logic. At the same time, for workloads with peaks and troughs, resources scale dynamically, which reduces user costs.
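
The shift from pull to push can be sketched as a split of responsibilities. In the sketch below, `poller_service` is a hypothetical stand-in for the platform-side consumer (it is not the real Poller Service), an in-memory `queue.Queue` stands in for the message product, and `process_message` is the only part the user would write.

```python
import queue
from typing import Callable, List


def process_message(body: str) -> str:
    """User-owned business logic: no message client, no polling loop."""
    return body.upper()


def poller_service(source: "queue.Queue[str]",
                   invoke: Callable[[str], str],
                   batch_size: int = 2) -> List[str]:
    """Platform-owned stand-in: pulls messages in batches, pushes to invoke."""
    results: List[str] = []
    while True:
        batch: List[str] = []
        while len(batch) < batch_size:
            try:
                batch.append(source.get_nowait())
            except queue.Empty:
                break
        if not batch:
            return results  # queue drained; a real consumer would keep waiting
        results.extend(invoke(body) for body in batch)


if __name__ == "__main__":
    q: "queue.Queue[str]" = queue.Queue()
    for body in ["order created", "order paid", "order shipped"]:
        q.put(body)
    print(poller_service(q, process_message))
```

The design point is that the polling loop, batching, and client connection live entirely in `poller_service`; swapping the message product would change that function but not the business logic.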

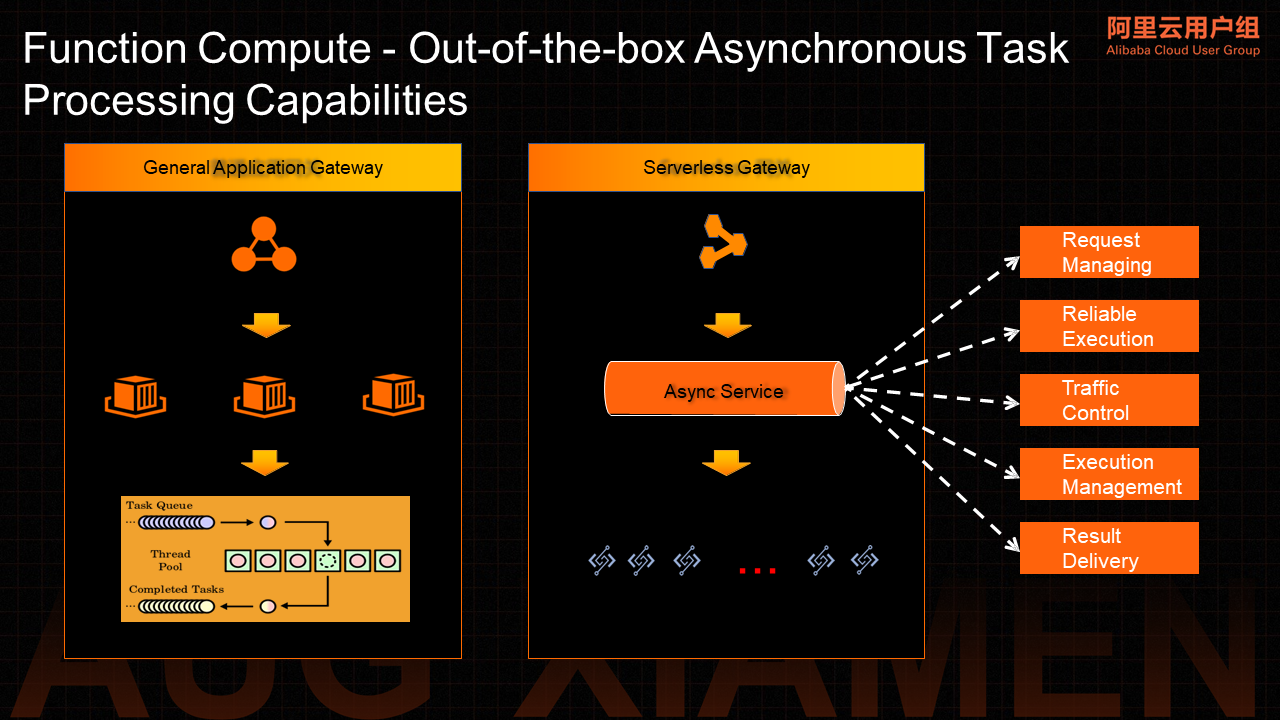

The following figure shows the basic model of a typical asynchronous task processing system, which uses APIs to submit tasks, schedule tasks, execute tasks, and deliver execution results.

In a traditional task processing framework, task scheduling, load balancing, and traffic control strategies are usually built on service gateways. This is the most basic part but also the most central and complex one, and it is the most important part of building a distributed system. The backend is usually implemented with process-level in-memory queues and runtime-level thread pools that handle task distribution and execution. Thread pools are closely tied to the runtime of the chosen programming language, so the system architecture depends heavily on the programming language.

In Serverless asynchronous task processing, users submit tasks through API operations. After the requests arrive at the Serverless service gateway, they are stored in the asynchronous request queue, and Async Service takes over. Request scheduling then obtains backend resources and assigns the requests to specific backend resources for execution.

Async Service takes on the request dispatcher, load balancing, traffic control strategy, and resource scheduling roles of the traditional architecture. The function cluster then acts as an abstract distributed thread pool. Under the Function Compute model, instances are isolated from each other, and resources can scale horizontally. With the overall resource pool of Function Compute, the thread pool capacity problems and resource scheduling bottlenecks caused by single-machine resource limits in the traditional application architecture can be avoided. The execution environment of tasks is not limited by the runtime of the overall business system. This is also the advantage of Serverless asynchronous task systems over traditional task systems.

Seen from its architecture, the Serverless task processing system has simple processing logic. Most of the capabilities that distributed systems rely on are implemented transparently by the Async Service. Users provide the task processing logic by writing functions. The overall architecture avoids dependence on language-specific runtime thread pools, and the entire Function Compute cluster acts as a thread pool with effectively unlimited capacity. In this service-oriented mode, users only need to submit requests; the Serverless platform is responsible for concurrent processing, traffic control, and backlog handling. In practice, you need to configure the concurrency, error retry policy, and result delivery of asynchronous task processing based on business characteristics.
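
The three knobs mentioned here (concurrency, error retry policy, and result delivery) can be illustrated with a small local simulation. This is only a sketch of the idea: `run_async_tasks` and its parameters are hypothetical names, a local thread pool stands in for the function cluster, and the callbacks stand in for success and failure destinations.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def run_async_tasks(tasks: List[int],
                    fn: Callable[[int], int],
                    max_concurrency: int,
                    max_retries: int,
                    on_success: Callable[[int, int], None],
                    on_failure: Callable[[int, Exception], None]) -> None:
    """Run every task with bounded concurrency, retrying failed attempts."""

    def attempt(task: int) -> None:
        last_error: Exception = RuntimeError("not attempted")
        for _ in range(max_retries + 1):
            try:
                result = fn(task)
            except Exception as exc:  # this sketch retries on any error
                last_error = exc
                continue
            on_success(task, result)  # deliver the result
            return
        on_failure(task, last_error)  # retries exhausted: deliver the failure

    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        list(pool.map(attempt, tasks))  # wait for all tasks to finish


if __name__ == "__main__":
    attempts: Dict[int, int] = {}

    def flaky_square(n: int) -> int:
        attempts[n] = attempts.get(n, 0) + 1
        if n % 2 == 1 and attempts[n] == 1:
            raise ValueError("transient error on first attempt")
        return n * n

    run_async_tasks([1, 2, 3, 4], flaky_square, max_concurrency=2,
                    max_retries=2,
                    on_success=lambda t, r: print(f"task {t} -> {r}"),
                    on_failure=lambda t, e: print(f"task {t} failed: {e}"))
```

The caller only submits tasks and declares policy; the scheduling loop, retries, and delivery are handled by the runner, which mirrors the division of labor between the user and the Async Service described above.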

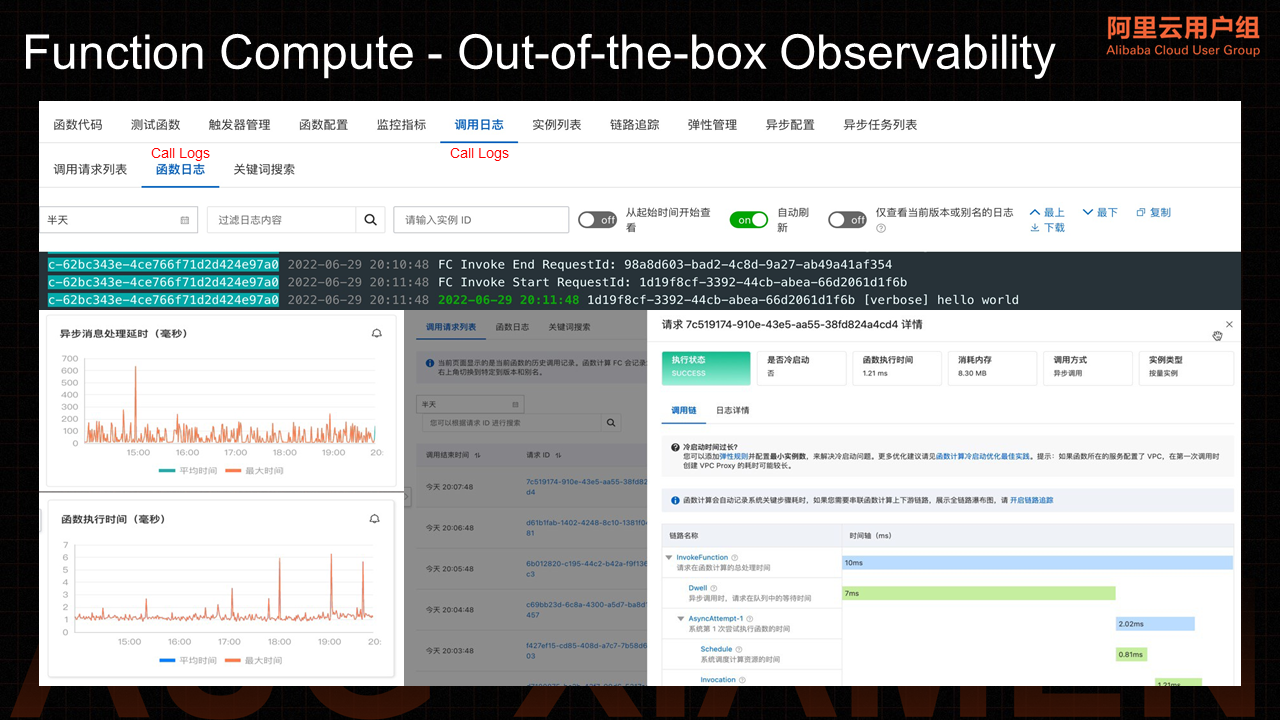

Once Function Compute provides so many out-of-the-box capabilities, customers' most urgent need is observability into them. How can we expose the metrics and operating status that users care about for development and debugging, business logic optimization, system stability, metering and billing, and other needs?

We need to provide customers with out-of-the-box observability support, especially in the initial transition stage when customers use Serverless. It is necessary to be able to display the information of the black box part of the system to customers to improve the transparency of product billing. Based on the current out-of-the-box observability, you can see the entire task-processing process and the computing resources consumed at a glance.

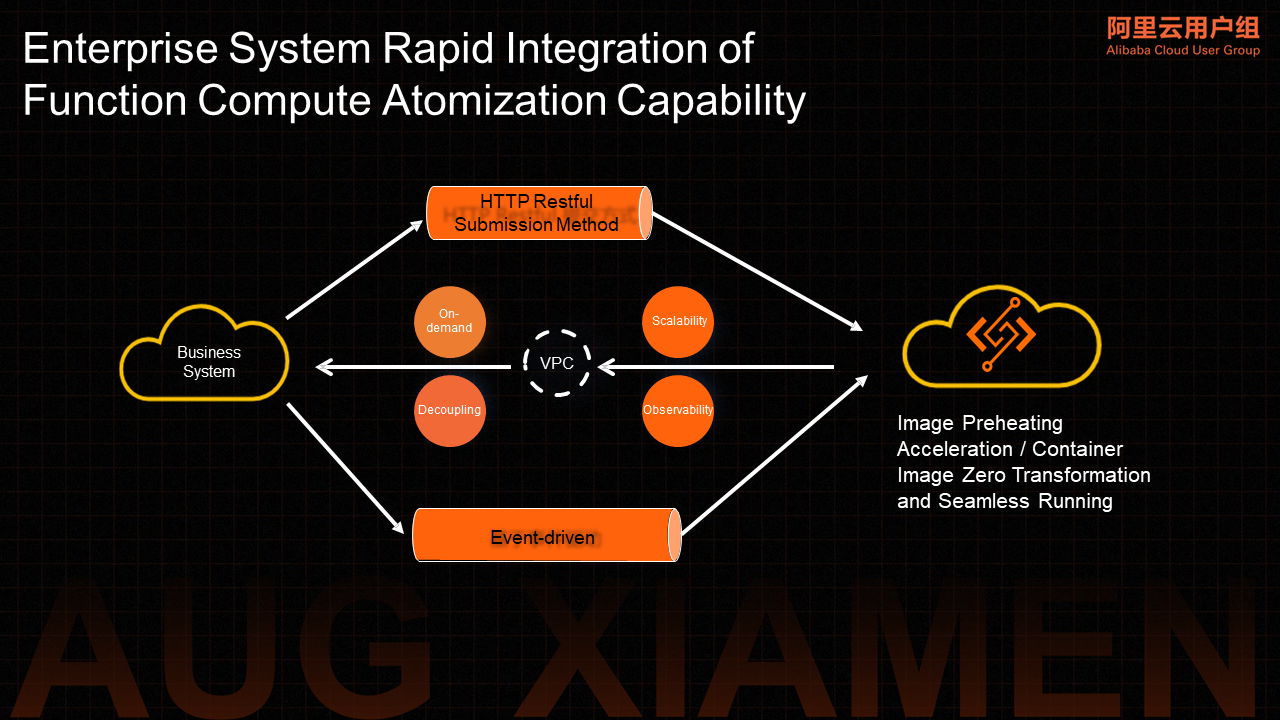

Next, I will focus on how enterprises can use the atomic capabilities provided by Function Compute to quickly expand their business systems. Serverless can become a reliable dependency for enterprises to achieve business system extension and architecture upgrades.

We hope to promote All-on-Serverless so that an enterprise's entire business system can run on Serverless. However, that goal is still challenging at this stage, and we are in an early period of transition. We therefore offer some best practice suggestions for building on your existing system: use the atomic capabilities provided by Serverless to reduce costs and increase efficiency, and introduce Serverless technologies into your business systems step by step. Continued use will show the business value that Serverless can bring.

How can such capabilities be integrated quickly with existing systems? Function Compute provides SDKs, HTTP URLs, and a variety of event-driven access methods, and its VPC support connects the network of Function Compute with that of customers' existing systems. In terms of business support, Function Compute offers a variety of runtime options, including the official standard runtimes, Custom Runtime, and Custom Container image deployment, which integrates with the container ecosystem. This minimizes the threshold for running customer business systems on Serverless platforms. Compared with the container ecosystem, Function Compute also provides significant image warm-up acceleration to help customers' business systems start quickly and serve external traffic.

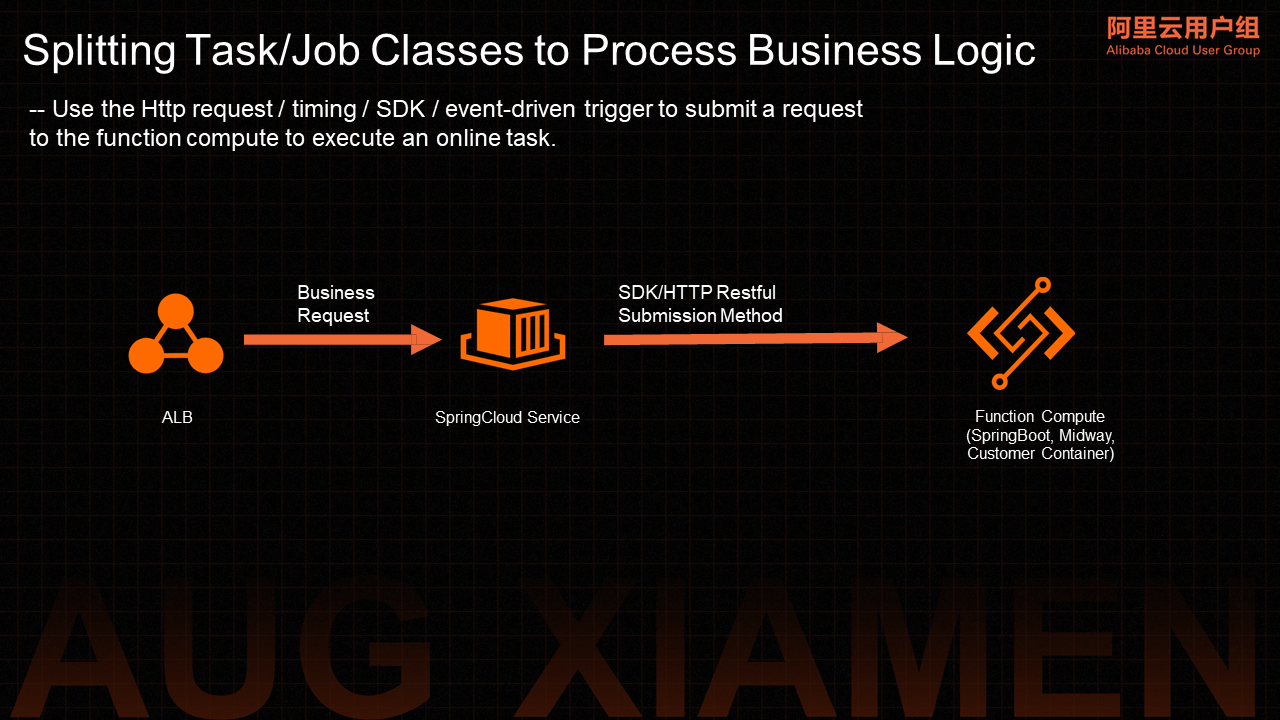

In some microservice-based business systems, the asynchronous invocation and asynchronous task capabilities of Serverless are used to meet task processing requirements. Function Compute provides a variety of integration methods (such as HTTP, SDK, timers, and event triggers) to help users submit requests and execute related tasks.

An enterprise's business system usually contains many subsystems linked by message middleware. The original logic, in which consumers listen on a message queue and actively pull messages, is replaced by Serverless triggers through the event-triggering capability that Function Compute provides for message cloud products. Function Compute takes on the message processing logic, and the event-driven model decouples message consumption from message processing. Serverless message processing relies on the reliable consumption capabilities provided by the event-driven platform.

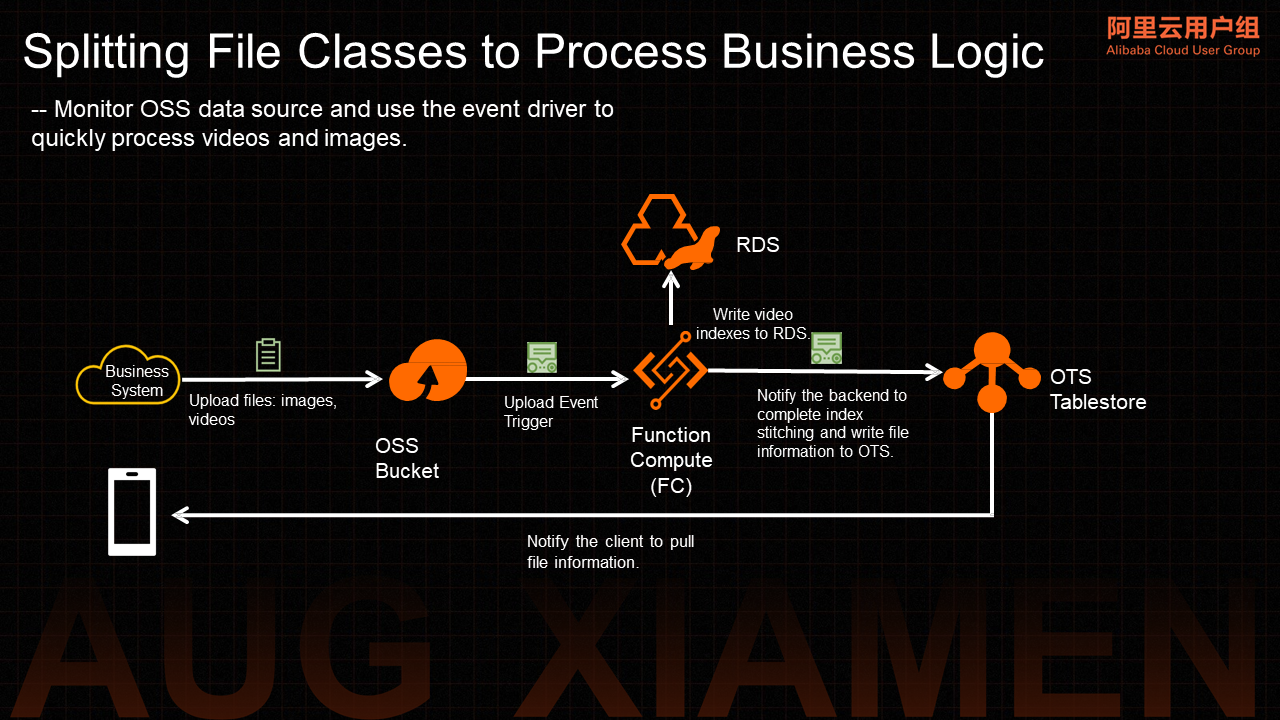

For some file and video processing businesses, data is forwarded between the file system and DB. OSS triggers and DB triggers (OTS) provided by Function Compute are used to quickly complete relevant data processing logic in an event-driven manner.
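
As an illustration of the event-driven style for file processing, the sketch below shows a function that reacts to object-creation events. The event layout used here (an `events` list whose entries carry `eventName` and an `oss` object with `bucket.name` and `object.key`) is an assumption made for this example and should be checked against the trigger documentation; the bucket and key values are made up.

```python
import json
from typing import List, Tuple


def created_objects(event) -> List[Tuple[str, str]]:
    """Extract (bucket, key) pairs for newly created objects from an event."""
    if isinstance(event, (bytes, bytearray)):
        event = event.decode("utf-8")
    records = json.loads(event).get("events", [])  # assumed top-level field
    return [
        (r["oss"]["bucket"]["name"], r["oss"]["object"]["key"])
        for r in records
        if r.get("eventName", "").startswith("ObjectCreated")
    ]


def handler(event, context):
    """Entry point: process each new object; the processing is a placeholder."""
    for bucket, key in created_objects(event):
        print(f"processing oss://{bucket}/{key}")
    return "ok"


if __name__ == "__main__":
    sample = json.dumps({"events": [{
        "eventName": "ObjectCreated:PutObject",
        "oss": {"bucket": {"name": "demo-bucket"},
                "object": {"key": "videos/clip.mp4"}},
    }]})
    handler(sample, None)
```

The function contains no polling or listing code: it runs only when the storage service reports a change, which is what makes the data processing logic short.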

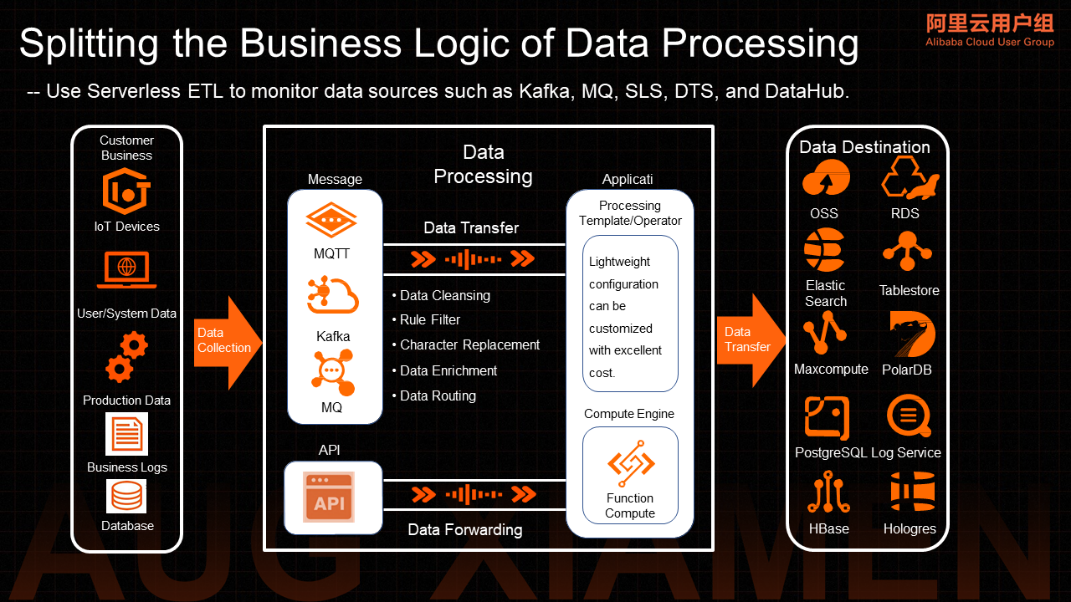

Some data processing logic can be handled using the Serverless ETL capability provided by message products. Sources and targets can be quickly extended with Function Compute according to business needs.

Next, I will share some user Serverless scenario cases.

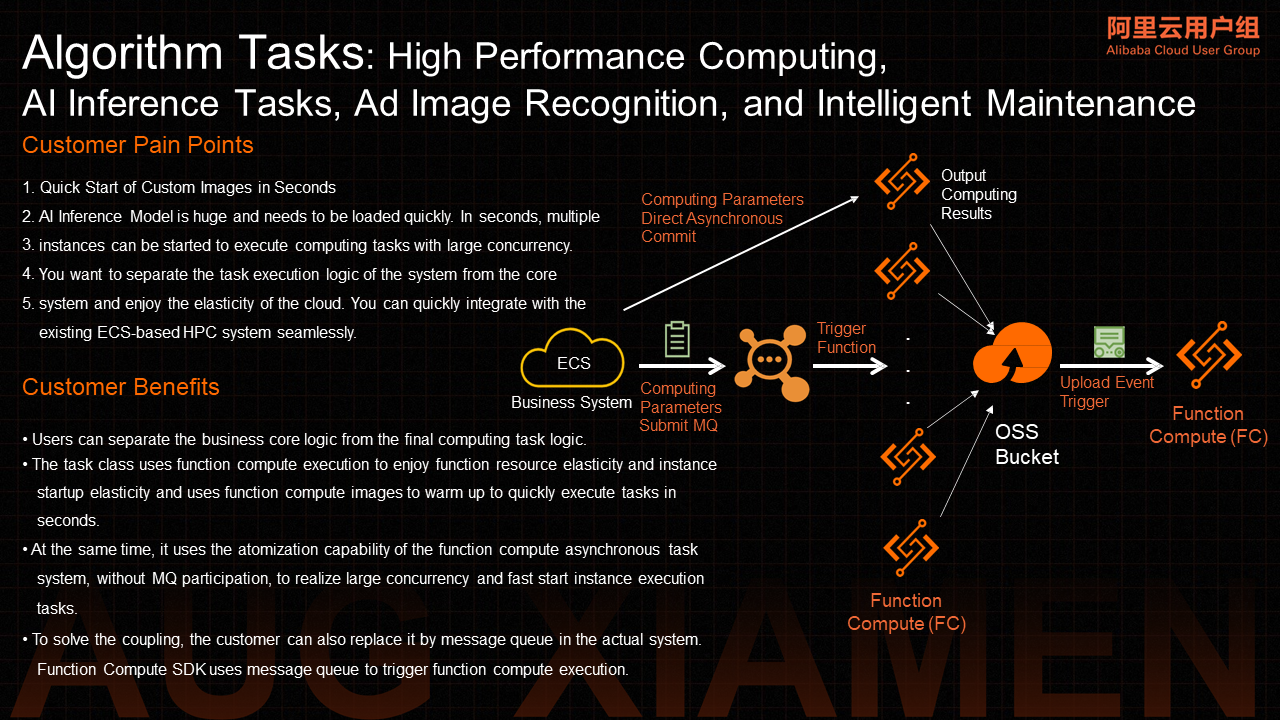

The following is a typical architecture for business scenarios in the algorithm field, such as algorithm tasks, high-performance computing, AI-related inference tasks, advertisement image recognition, and intelligent O&M.

Generally, these parts are independent of the business system. With Function Compute, an algorithm model for inference can be deployed quickly, and the image or set of parameters required for inference is passed to the function in the request. If you want a layer of decoupling in the middle, you can put the requests into MQ and let MQ events trigger task execution. The final result is written to OSS, and you can use event-driven processing for the generated files.

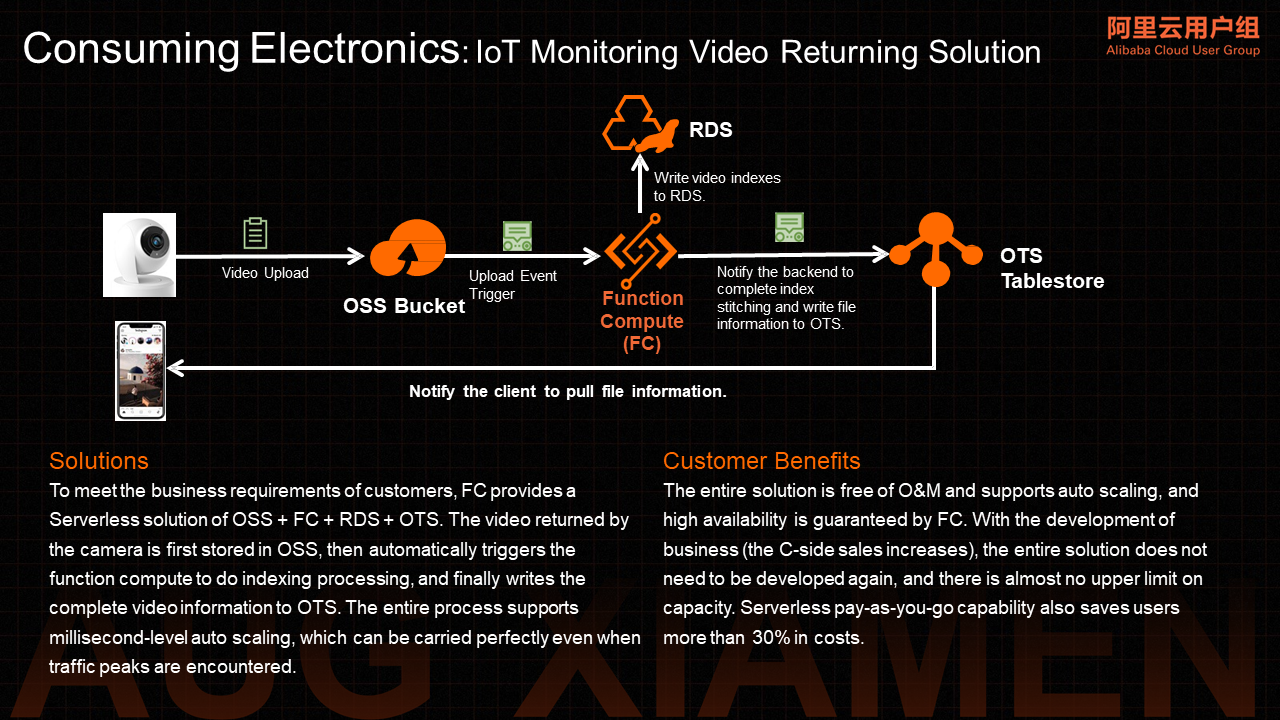

This case provides a Serverless solution for customers in the consumer electronics field: it handles IoT-related video transmission, analyzes data from IoT devices, and supports clients that consume the video data.

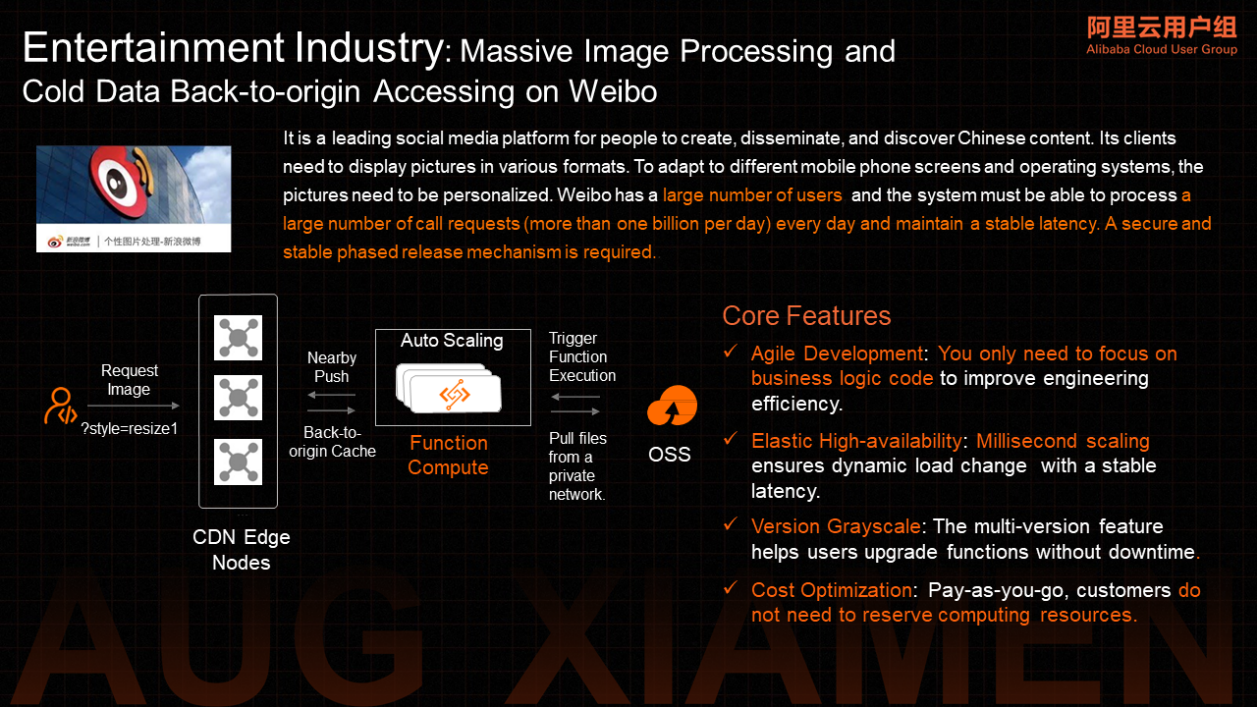

The following is a Serverless scenario case of Weibo for image access and processing. Function Compute is used to realize cold data access and personalized image processing.

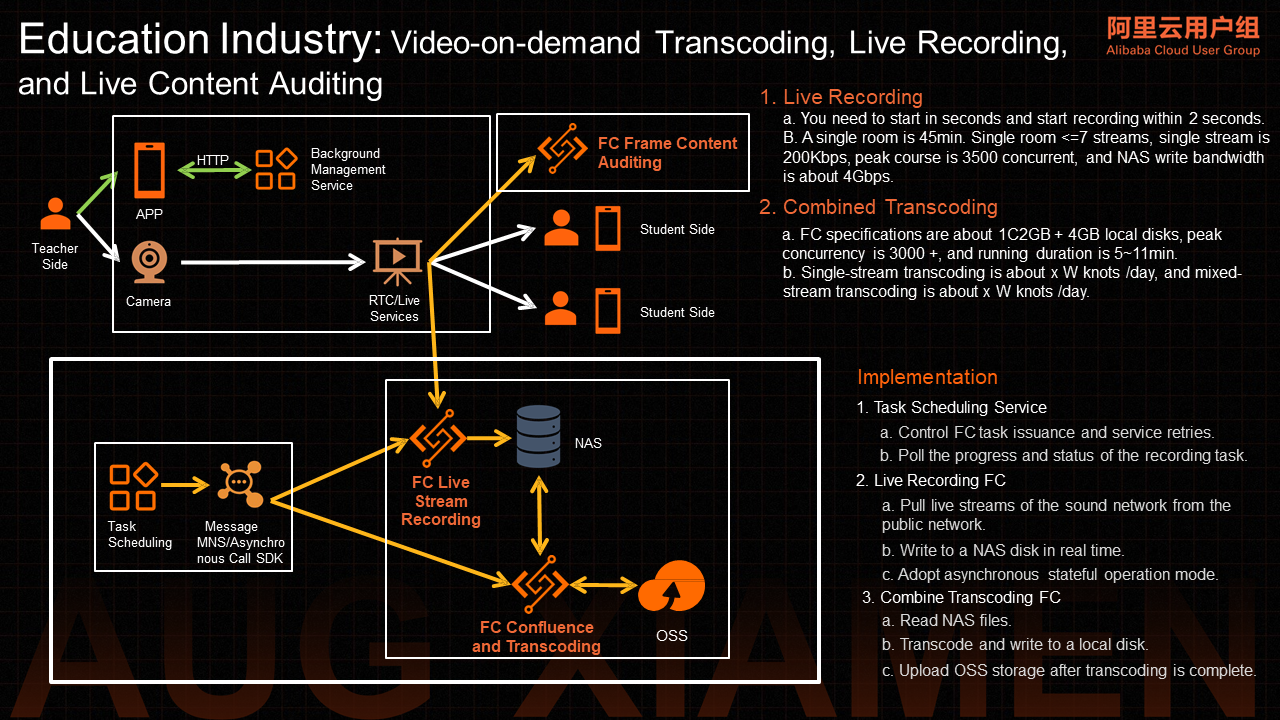

The following is a Serverless solution for the education industry, which has common requirements for encoding and broadcasting, live recording, and live content auditing. During a live broadcast, the compliance of the content needs to be audited by capturing frames.

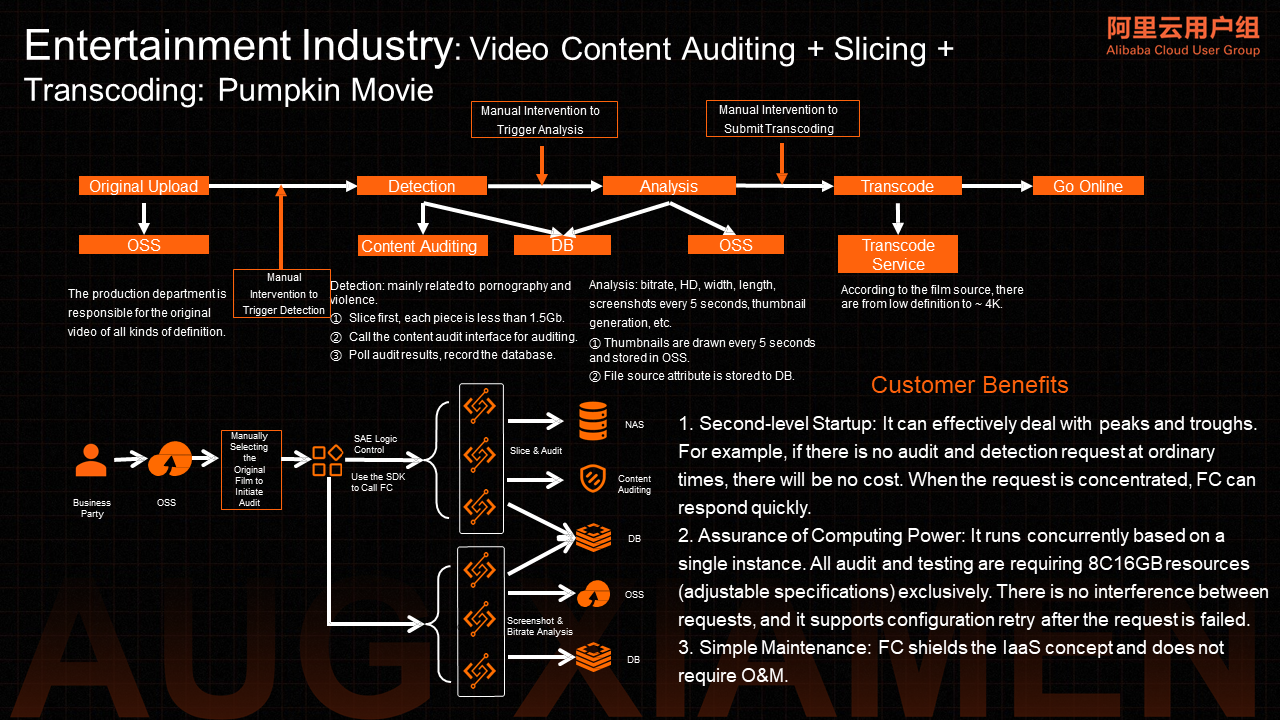

The following is a Serverless solution for dynamic frame auditing and slice transcoding in the entertainment industry. The following figure shows a technical scheme from Pumpkin Movie, using Function Compute to realize such a capability.

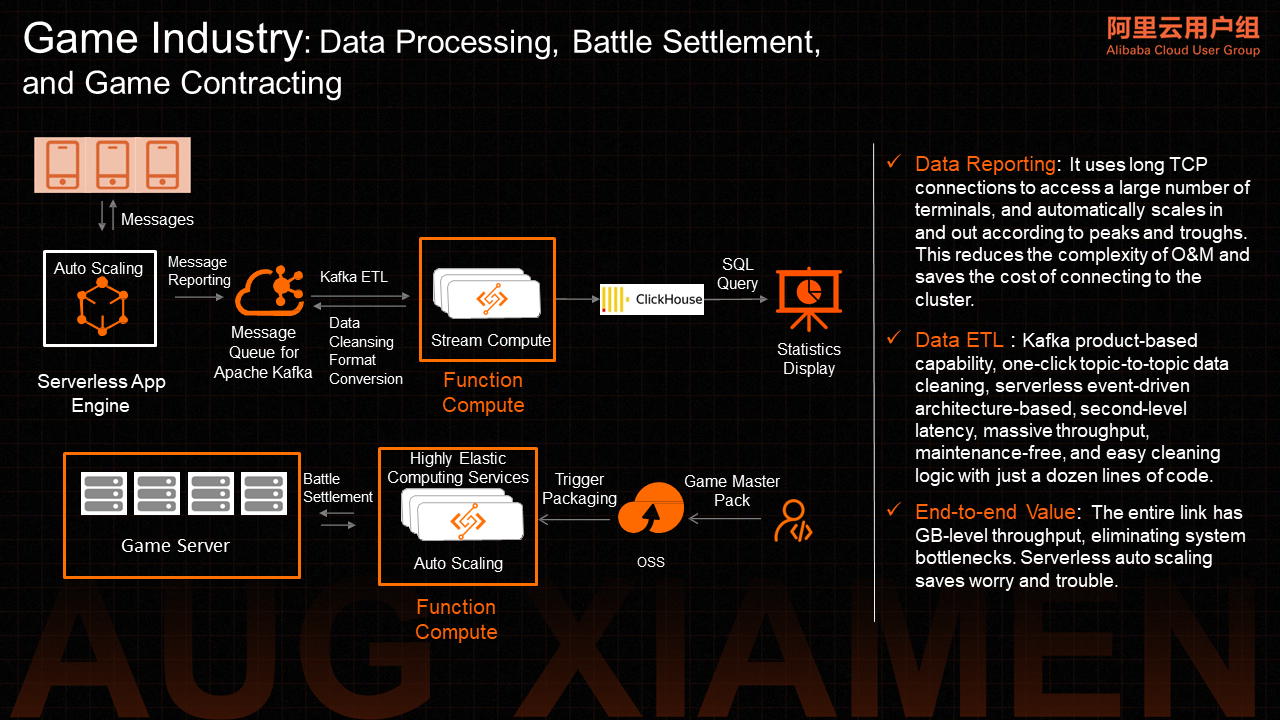

The following are typical application cases of Function Compute in the gaming industry, covering scenarios (such as data processing, battle settlement, and game contracting). Several leading customers in the gaming industry are using such Serverless solution combinations to implement their business systems. These scenarios share some characteristics. Take battle settlement as an example. It is unnecessary to perform real-time computing continuously while a game instance is running. Usually, computing is only required when an instance is about to end or when a battle takes place in it. This is a typical Serverless application scenario.
