By Bin Wang (Alibaba Cloud Solution Architect), Lantian Yao (Rokid Technical Expert), and Dapeng Nie (Alibaba Cloud Senior Technical Expert)

Rokid was founded in 2014 as a product platform company focusing on human-computer interaction technology. Through research in speech recognition, natural language processing, computer vision, optical display, hardware design, and other fields, Rokid combines cutting-edge AI and AR technologies with industry applications to provide full-stack solutions for customers in different verticals, improving user experience, helping enterprises increase efficiency, and supporting public safety.

Rokid's early years focused on AR technology research. Google Glass was created in 2012, and the possibilities it suggested made a deep impression on Rokid's founding team. The popularity of Google Glass did not last because of limited usage scenarios and a high price. However, as infrastructure and the application ecosystem mature and people expect more from their entertainment and office experiences, AR technology can be expected to see wider use in the near future.

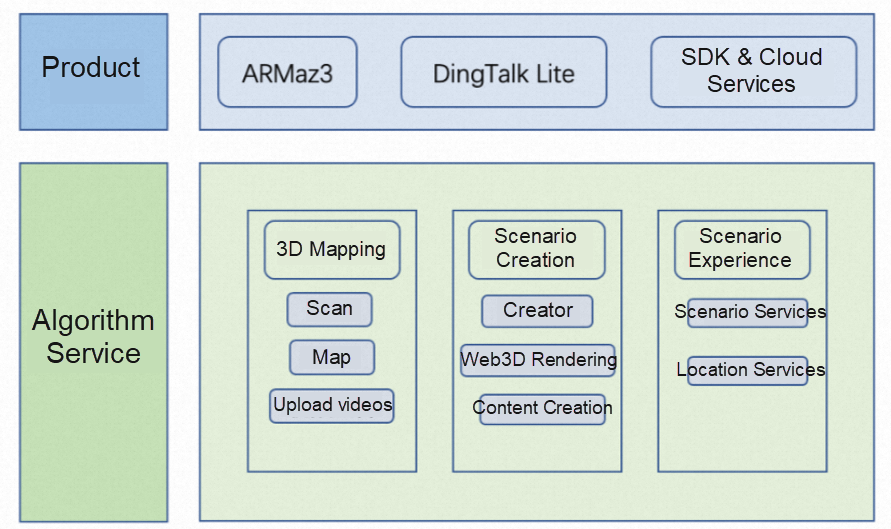

In the digital culture field, with a focus on display guide solutions, Rokid has formed three business modules: three-dimensional mapping (3D mapping), scene creation, and scene experience. Each one is supported by different background platforms.

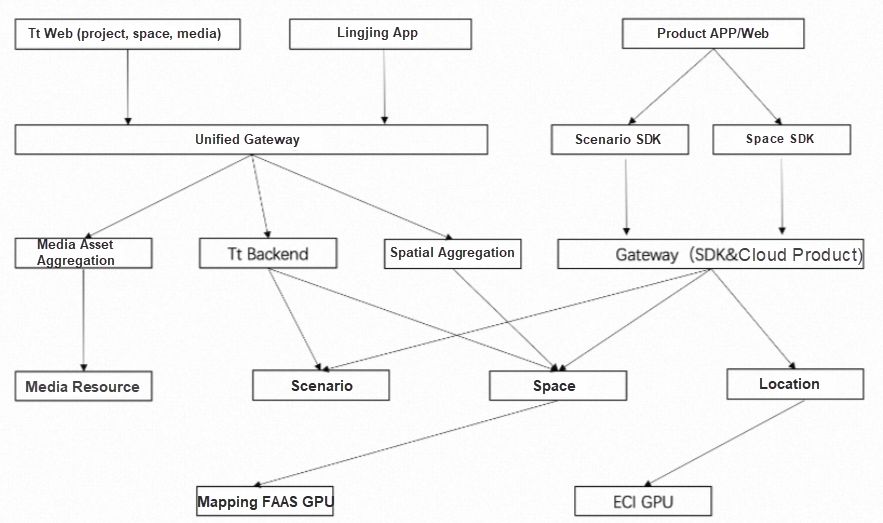

The following figure shows the overall product architecture:

Three-dimensional mapping, scene creation, and scene experience all involve image processing, which requires a large amount of GPU resources. Three-dimensional mapping is an offline task. When a display model is built, the video content of the entire display site needs to be preprocessed, which makes it the most compute-intensive of the three modules. Scene creation relies on authoring software, so its GPU resources are mainly consumed by development machines. Scene experience provides real-time services while the device is running. Its main function is a location service, which has strict real-time requirements.

To meet the demand for GPU computing power, Rokid relied on cloud resources as much as possible in the early stage of development to take full advantage of cloud computing. Initially, GPU-equipped ECS instances were purchased for business development and testing. The main problem is that 3D mapping usually collects all the scene data of the display environment in one pass. The volume of video is huge, so serial processing on ECS takes a long time. For example, it takes about three hours to process one hour of video data on a single ECS GPU machine. Rokid's first step was parallelization. By splitting the CPU and GPU processing logic and optimizing the task scheduling mode, the parts that can be processed concurrently are given as many resources as possible to increase concurrency. After a series of optimizations, the video processing time was reduced significantly.
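The effect of parallelization can be estimated with a small back-of-the-envelope model. The sketch below is only an illustration: the 3:1 processing ratio comes from the example above, while the segment length, the worker count, and the assumption of ideal scaling (independent segments, no split or merge overhead) are hypothetical and not taken from Rokid's system.

```python
import math

# From the article: roughly 3 hours of processing per hour of video on one ECS GPU machine.
PROCESSING_RATIO = 3.0


def serial_hours(video_hours: float) -> float:
    """Wall-clock hours when one machine processes the whole video serially."""
    return video_hours * PROCESSING_RATIO


def parallel_hours(video_hours: float, segment_minutes: float, workers: int) -> float:
    """Upper-bound wall-clock hours when the video is split into equal segments.

    Assumes (hypothetically) that segments are independent and that each
    worker processes one segment at a time at the same 3:1 ratio.
    """
    segments = math.ceil(video_hours * 60 / segment_minutes)
    waves = math.ceil(segments / workers)  # rounds of concurrent work
    per_segment_hours = segment_minutes / 60 * PROCESSING_RATIO
    return waves * per_segment_hours


if __name__ == "__main__":
    print(serial_hours(1.0))                 # one machine, one hour of video
    print(parallel_hours(1.0, 10, 6))        # six workers, 10-minute segments
```

Under these assumptions, the wall-clock time is bounded by the number of "waves" of segments rather than by the total video length, which is why adding concurrent instances shortens the overall job.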

For concurrent resources, Rokid selected Elastic Container Instance (ECI) as the GPU computing resource. Compared with ECS, ECI offers more options for resource granularity. For small videos, Rokid runs small ECI instances in parallel, which shortens the overall video processing time significantly. To this end, Rokid's internal platform includes a set of scheduling modules for Alibaba Cloud ECI resources, making it easier for internal staff to apply for and return ECI resources.

Using ECI resources flexibly ensures task processing concurrency during peak hours while keeping the cost of computing power under control. This was an initial optimization of the workflow. However, after a period of use, many shortcomings were still found. The main problems are summarized below:

To solve the problems above, Rokid's internal architecture team set out to optimize the existing architecture. For problem 2, Rokid maintains a general scheduling system to arrange tasks. For problem 3, Rokid introduced ARMS Tracing, Prometheus, Grafana, and other Alibaba Cloud components; combined with ECI hardware metrics, the operational metrics are collected in Log Service to form a unified monitoring report, and alerts on monitoring metrics were added. This way, Rokid's needs are mostly met. However, problem 1 and problem 4 are difficult to solve by adding cloud products or internal applications. The Rokid architecture team began to look for new cloud products that could completely resolve these pain points. At that time, Function Compute came to the attention of Rokid's architects. After a period of research and trial use, the team concluded that the capabilities of Function Compute could meet Rokid's usage scenarios.

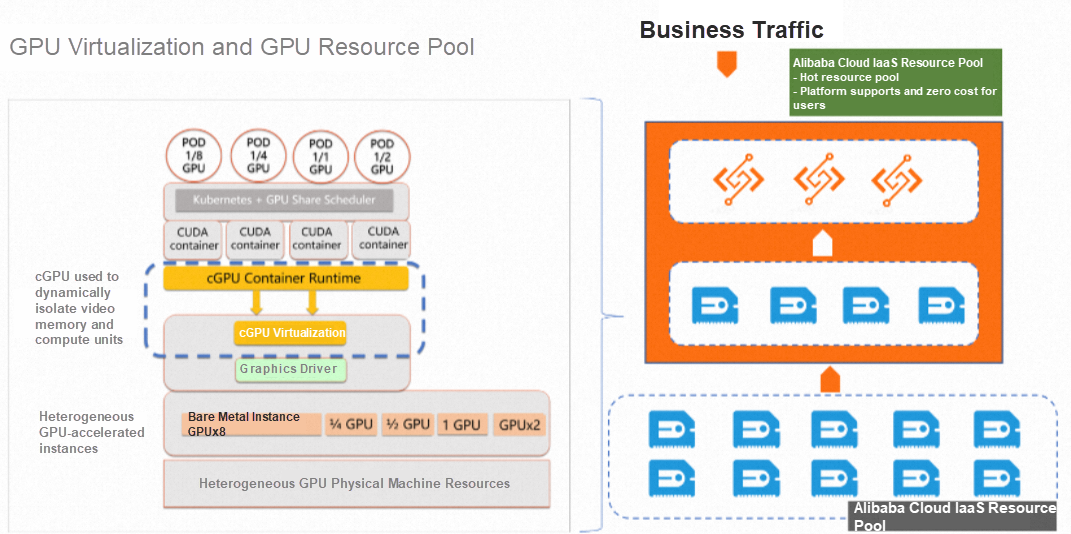

Function Compute is a fully managed, event-driven computing service. Function Compute users only need to focus on writing and uploading code or images without procuring and managing infrastructure resources (such as servers). Function Compute prepares computing resources to run tasks flexibly and reliably and provides log query, performance monitoring, and alerting functions. It offers both CPU and GPU computing power with per-second billing, so customers only pay for the resources they actually use. Resources scale automatically based on metrics (such as schedules and request volume), and users do not need to maintain components such as scheduling, load balancing, retry, and asynchronous callback. It provides an out-of-the-box, pay-as-you-go Serverless capability. The Function Compute GPU architecture is shown below:

The underlying layer relies on the large computing pool of Alibaba Cloud to provide nearly unlimited computing resources. With the cGPU technology of Alibaba Cloud, the resources of a single GPU card can be divided into a variety of finer-grained resource specifications. The customer creates a function by selecting a GPU specification in Function Compute. When a request arrives, Function Compute obtains resources from the pre-warmed resource pool in real time and starts the customer's function with the corresponding image. After the function is started, it serves external requests.

Function Compute resources are started when an instance runs for the first time. The elastic delivery time ranges from 1s (warm start) to 20s (cold start). Rokid's 3D mapping is an offline task, and the processing time of a single video is measured in minutes, so a startup delay of seconds is acceptable.

After the 3D mapping task was moved to Function Compute, Rokid no longer needs to manually apply for ECI resources or manually release them after use. Function Compute dynamically starts backend GPU computing resources that match the request traffic and automatically releases them when no new request arrives for a period after request processing is completed. After the task is split, the 3D modeling as a whole involves several steps. Each step is executed asynchronously, and the next task can be triggered automatically through the Function Compute asynchronous system after the previous task is completed. The Function Compute console has built-in metrics monitoring, exception alerts, tracing analysis, logs, and asynchronous configuration, which meet Rokid's requirements across the full function lifecycle, from development and operation monitoring to O&M. The underlying layer of Function Compute relies on the large computing pool of Alibaba Cloud and on backend algorithms for pre-warming and resource evaluation to ensure resource supply to the greatest extent. These capabilities address the same pain points Rokid had encountered before. Function Compute solves nearly all of Rokid's major problems.
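The step-by-step chaining described above can be pictured with a small in-memory simulation. This is a sketch only: it does not call any Function Compute API, the step names are hypothetical (the article does not list the actual steps), and a plain queue stands in for the asynchronous system that triggers the next task when the previous one succeeds.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Optional, Tuple

# Hypothetical step names; the article does not name the real 3D modeling steps.
PIPELINE: List[str] = ["extract_frames", "match_features", "build_model"]


def next_step(current: str) -> Optional[str]:
    """Return the step that follows `current`, or None if it is the last one."""
    index = PIPELINE.index(current)
    return PIPELINE[index + 1] if index + 1 < len(PIPELINE) else None


def run_pipeline(video_id: str, handlers: Dict[str, Callable[[str], str]]) -> List[str]:
    """Simulate success-triggered chaining with an in-memory queue.

    Each handler receives the previous step's result. When it returns,
    the following step (if any) is enqueued, mimicking an asynchronous
    "on success, invoke the next function" rule.
    """
    queue: Deque[Tuple[str, str]] = deque([(PIPELINE[0], video_id)])
    log: List[str] = []
    while queue:
        step, payload = queue.popleft()
        result = handlers[step](payload)
        log.append(f"{step}:{result}")
        following = next_step(step)
        if following is not None:
            queue.append((following, result))
    return log


if __name__ == "__main__":
    # Trivial handlers that pass the payload through unchanged.
    passthrough = {name: (lambda payload: payload) for name in PIPELINE}
    print(run_pipeline("video-001", passthrough))
```

The point of the design is that no step needs to know about scheduling: each one only finishes its own work, and the platform decides what runs next.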

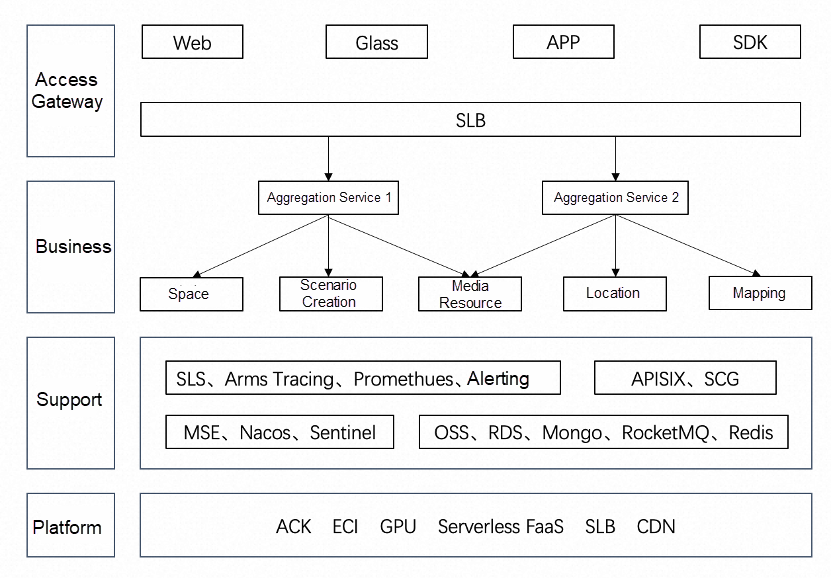

The technical architecture of Rokid's cloud product is shown below:

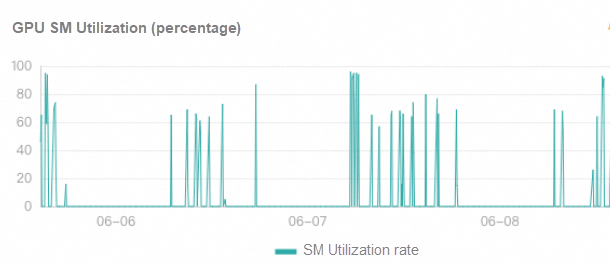

The monitoring chart of Function Compute resource utilization is shown below. When a task enters, the GPU computing utilization can reach 60% (or close to 100%).

Function Compute offers Rokid a lot of convenience. In terms of resource extensibility and cost savings during peak hours, Rokid regards it as the best cloud product available. However, Function Compute is not a universal solution. For Rokid's scene experience function, the module that needs to provide location services in real time, Function Compute still has some problems.

When Function Compute starts instance resources for the first time, there is a startup delay that ranges from 1s (warm start) to 20s (cold start), which is unacceptable for the real-time location service module. When a user is at the display site, the AR device uses real-time location to obtain the AR extension information for that spatial position. The response time of the interface is very important to the customer experience, and a location request needs to be returned within 1s.

Between cost and quality of service, Rokid gives priority to quality of service. The scene experience module is deployed on ECI. Through daily scheduled tasks, more ECI instances are started in advance of peak hours, and a small number of ECI instances are kept during off-peak hours to achieve a balance between experience and cost.
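A scheduled scaling rule of this kind can be sketched in a few lines. All of the numbers below (the pre-warm time, the peak window, and the instance counts) are hypothetical placeholders, since the article does not give Rokid's actual schedule; the function only shows the shape of the decision a daily scheduled task might make.

```python
from datetime import time

# Hypothetical schedule; the article gives no peak window or instance counts.
PREWARM_START = time(8, 30)   # start extra instances before the peak begins
PEAK_END = time(18, 0)        # scale back down after the peak
PEAK_INSTANCES = 8
OFF_PEAK_INSTANCES = 1


def desired_instances(now: time) -> int:
    """Return how many instances should be running at the given time of day."""
    if PREWARM_START <= now < PEAK_END:
        return PEAK_INSTANCES
    return OFF_PEAK_INSTANCES


if __name__ == "__main__":
    for moment in (time(3, 0), time(8, 45), time(12, 0), time(18, 0)):
        print(moment, desired_instances(moment))
```

Starting the extra instances slightly before the peak is what keeps startup delay out of the request path, at the price of paying for some idle capacity.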

On the other hand, Function Compute does have a solution for real-time scenarios. GPU models are large, and images are at the GB level, so for the time being the first start of a GPU instance cannot match the sub-100 ms startup of CPU resources. For real-time scenarios, Function Compute plans to offer reserved instances for GPU functions. When an instance is idle, this feature releases its computing resources while keeping the program's running image in memory. When a new request arrives, the function can serve it as soon as computing resources are attached, which eliminates the time spent provisioning hardware and pulling and starting the function image. This way, real-time services can be provided. Reserved instances have already been launched for CPU instances. When a reserved CPU instance is idle, its price is one-tenth of the price in the running state. This significantly reduces resource costs while ensuring real-time capability. Reserved capacity for the GPU version is expected to be launched by the end of the year.
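The pricing effect of idle billing can be illustrated with a short calculation. The one-tenth idle factor is the figure the article gives for reserved CPU instances; the hourly price and the split between active and idle hours are hypothetical, and the sketch makes no claim about GPU pricing.

```python
# From the article: an idle reserved CPU instance costs one-tenth of the running price.
IDLE_PRICE_FACTOR = 0.1


def reserved_cost(active_hours: float, idle_hours: float, active_price_per_hour: float) -> float:
    """Cost of a reserved instance billed at full price when active and 1/10 when idle."""
    idle_price = active_price_per_hour * IDLE_PRICE_FACTOR
    return active_hours * active_price_per_hour + idle_hours * idle_price


def always_on_cost(total_hours: float, active_price_per_hour: float) -> float:
    """Cost of keeping an instance running at full price the whole time."""
    return total_hours * active_price_per_hour


if __name__ == "__main__":
    # Hypothetical day: 6 active hours, 18 idle hours, unit price of 1.0 per hour.
    print(reserved_cost(6, 18, 1.0))
    print(always_on_cost(24, 1.0))
```

The fewer hours an instance is actually busy, the closer its cost gets to one-tenth of the always-on figure, while the instance still responds without a cold start.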

The following figure shows the business architecture of Rokid after ECI is used in the scene experience:

After a series of cloud architecture transformations, Rokid's three-dimensional mapping module now runs on Function Compute GPU resources, and the scene experience module runs on ECI resources. Both cost and performance have been taken into account, which gives the entire system strong extensibility and meets the architecture target set when the system was designed. Good results have been achieved since the service was launched in February 2023. Compared with the original ECS architecture, the computing power cost of the three-dimensional mapping module has been reduced by 40%. More importantly, through real-time concurrent processing, the queuing time of subtasks is significantly reduced, and the entire task completes faster.

Rokid is looking forward to the GPU reserved instances of Function Compute and hopes they will be launched as soon as possible, so that Rokid can migrate all of its GPU computing power to Function Compute and achieve a unified architecture.

After the display project, Rokid believes that Serverless, represented by Function Compute, is the future of cloud computing. With Serverless, cloud computing users no longer need to pay attention to O&M and scheduling at the underlying IaaS layer and can maximize extensibility while keeping costs optimal. In addition, they can use the native capabilities provided by cloud products throughout the service lifecycle to locate and solve problems easily and quickly. Rokid is trying to apply Function Compute to 3D model processing and to audio and video post-processing on a large scale. Rokid believes that the Serverless architecture represented by Function Compute will also be applied to more cloud products.
