By Wu Mengqi (Han Shu)

Generative AI is profoundly transforming every industry, and the field of data analysis is no exception—AI is empowering applications with higher intelligence and greater automation. The way data is generated is also changing. In the past, data was mainly produced by human-triggered actions (such as shopping orders, financial transactions, page visits, and so on). Now it is gradually shifting toward AI-driven behaviors (such as intelligent recommendations, automated trading, and agent-based access). This naturally leads to a data explosion, along with a dramatic increase in the frequency of data analysis and mining, often jumping by several orders of magnitude.

Put simply:

• More data is being generated

• Data is being accessed more frequently

• Both have grown by multiple orders of magnitude

As data volume grows by orders of magnitude, the paradigms for data access and processing are also evolving. Under this new reality, traditional full recomputation becomes increasingly problematic: both the amount of data processed (the input size) and the frequency of queries (the number of executions) have changed qualitatively, so the inefficiencies of full recomputation are amplified. In contrast, incremental computation is gaining significant advantages, because its computational cost does not grow linearly with the total data volume or the number of queries; instead, it depends only on the size of the data changes. Following this trend, the AnalyticDB team presented its latest work on incremental computation at VLDB 2025, "Streaming View: An Efficient Data Processing Engine for Modern Real-time Data Warehouse of Alibaba Cloud." This article provides a brief introduction to that work.

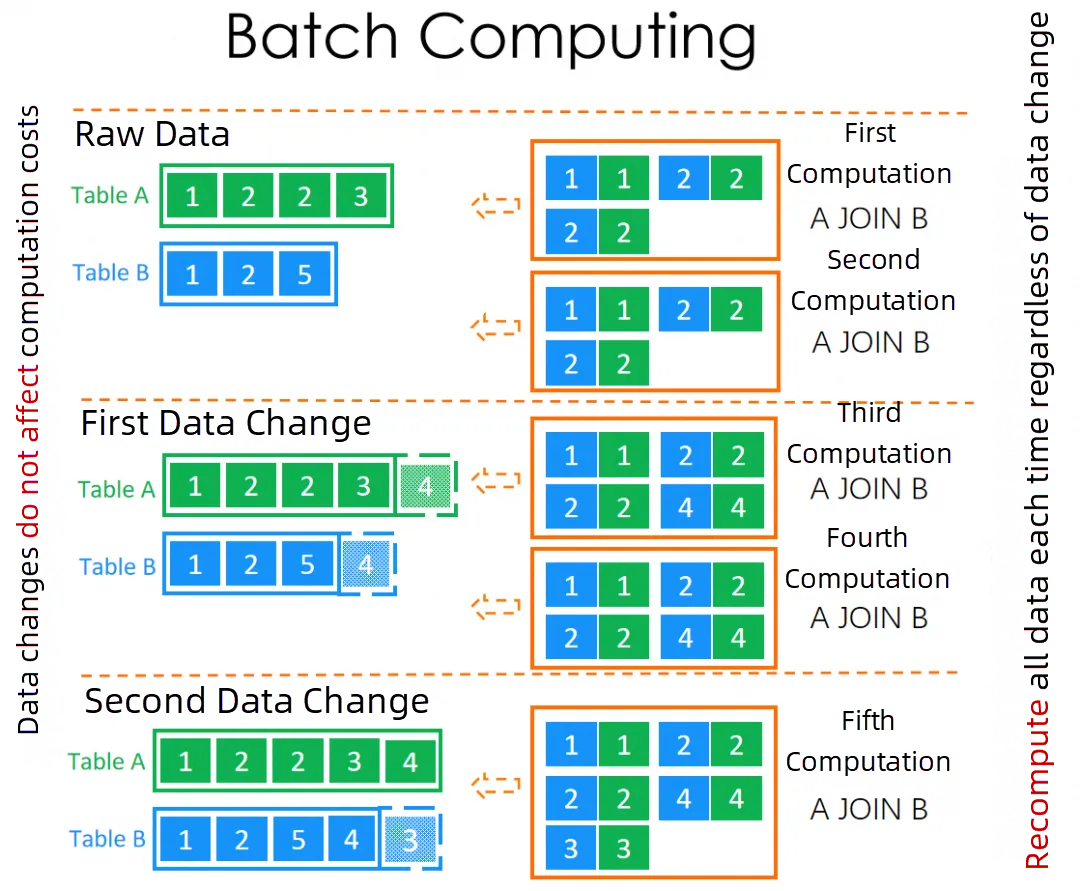

As illustrated in the original paper, full recomputation is a relatively simple and intuitive computation paradigm. Whenever a query is issued, the system recomputes over the entire dataset. Its advantages are: 1. The logic is straightforward and easy to understand. 2. It can support almost all SQL syntax.

However, its downside is that the computational cost grows linearly with both the data size and the number of query executions, and these two dimensions multiply together. Roughly:

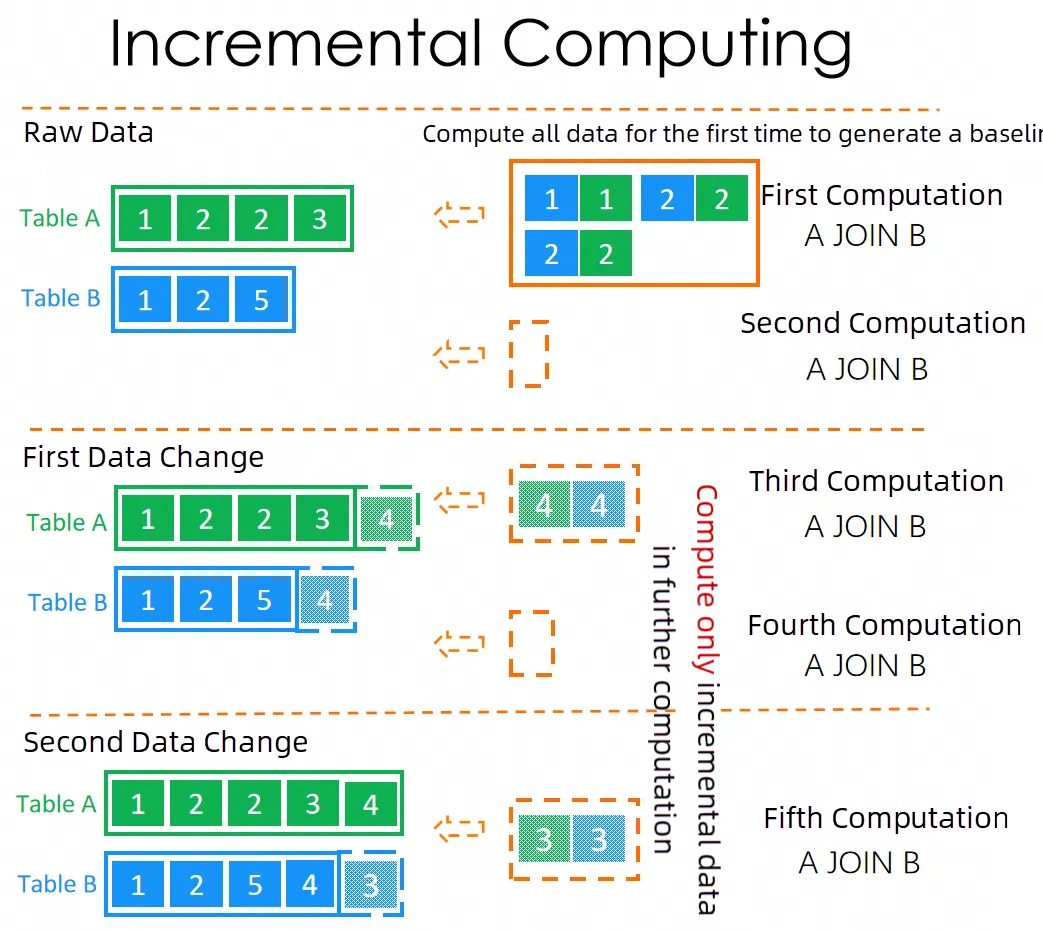

In the AI era, as data generation becomes increasingly automated and queries are more often triggered by intelligent agents rather than humans, both data volume and query frequency grow by orders of magnitude. Against this background, the performance pressure and resource consumption caused by full recomputation become increasingly severe, and its limitations are more and more apparent. By comparison, the advantages of incremental computation stand out, because its cost is independent of the total data size and the number of queries, and instead depends only on the size of the delta (the data changes).
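A rough cost model may make the comparison concrete. The notation is ours, not the paper's, and it ignores operator-specific factors (for example, a join's per-row cost also depends on the size of the state it probes). Let $|D_i|$ be the data size at the $i$-th query execution or refresh (roughly $|D|$), $N$ the number of executions, and $|\Delta D_i|$ the volume of changes that arrived since the previous refresh:

$$
C_{\text{full}} \approx \sum_{i=1}^{N} c\,|D_i| \approx c\,N\,|D|,
\qquad
C_{\text{incr}} \approx c\,|D_0| + \sum_{i=1}^{N} c'\,|\Delta D_i|
$$

The term $c\,|D_0|$ is the one-time cost of building the baseline; after that, the incremental cost grows with the total volume of changes rather than with the product $N\,|D|$.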

Conceptually, incremental computation performs a one-time full recomputation the first time it runs, generating a baseline. Subsequent computations are progressive updates based on this baseline plus the data changes.
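The baseline-plus-delta idea can be illustrated with a minimal Python sketch for a single grouped aggregate, roughly `SELECT key, SUM(amount) FROM t GROUP BY key`. The class and function names are hypothetical and this is not StreamingView's implementation; it assumes changes arrive as explicit lists of inserted and deleted rows. A per-group row count is kept alongside the sum so that a group can be removed once all of its rows are deleted, a standard trick in incremental view maintenance.

```python
from collections import defaultdict


def full_recompute(rows):
    """Full recomputation: scan every (key, amount) row and rebuild SUM(amount) per key."""
    totals = defaultdict(int)
    for key, amount in rows:
        totals[key] += amount
    return dict(totals)


class IncrementalSum:
    """Hypothetical incrementally maintained view for SUM(amount) GROUP BY key."""

    def __init__(self, base_rows):
        # First run: a one-time full computation produces the baseline.
        self.totals = defaultdict(int)
        self.counts = defaultdict(int)
        for key, amount in base_rows:
            self.totals[key] += amount
            self.counts[key] += 1

    def apply_delta(self, inserted, deleted):
        # Later runs touch only the changed rows, never the whole table.
        for key, amount in inserted:
            self.totals[key] += amount
            self.counts[key] += 1
        for key, amount in deleted:
            self.totals[key] -= amount
            self.counts[key] -= 1
            if self.counts[key] == 0:  # the group has no rows left
                del self.totals[key]
                del self.counts[key]

    def snapshot(self):
        return dict(self.totals)


table = [("a", 10), ("b", 5), ("a", 7)]
view = IncrementalSum(table)           # baseline

inserted, deleted = [("b", 3), ("c", 4)], [("a", 10)]
for row in deleted:
    table.remove(row)
table.extend(inserted)
view.apply_delta(inserted, deleted)    # progressive update

assert view.snapshot() == full_recompute(table)
print(view.snapshot())  # {'a': 7, 'b': 8, 'c': 4}
```

Each call to `apply_delta` costs time proportional to the number of changed rows, which is the property that makes incremental computation cheap when changes are small relative to the table.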

However, the challenges of incremental computation are obvious: both the implementation complexity and the usage barrier are significantly higher than those of full recomputation. From an implementation perspective, every SQL construct and operator needs explicit support for incremental semantics, which is far more complex than full recomputation. Meanwhile, because incremental computation is a stepwise process, maintaining data consistency becomes more difficult. From a usage perspective, the bar is also higher. As the computation paradigm changes, data modeling and business logic design must be rebuilt around progressive computation, which raises requirements for both development and operations.
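As one example of what explicit incremental support means for a single operator, consider an inner equi-join. For insert-only changes under bag semantics, the textbook delta rule is Δ(R ⋈ S) = ΔR ⋈ S + R ⋈ ΔS + ΔR ⋈ ΔS, where R and S are the old contents; the last term covers pairs in which both sides are new. The Python sketch below checks the rule against full recomputation on hypothetical `orders` and `users` tables. It illustrates the general IVM technique, not StreamingView's code, and it omits deletes, which would require signed multiplicities.

```python
from collections import Counter


def join(r, s):
    """Bag-semantics equi-join on the first field of (key, value) tuples.

    Returns a Counter mapping (key, r_value, s_value) to its multiplicity.
    """
    index = {}
    for key, s_val in s:
        index.setdefault(key, []).append(s_val)
    out = Counter()
    for key, r_val in r:
        for s_val in index.get(key, []):
            out[(key, r_val, s_val)] += 1
    return out


def join_delta(r, s, dr, ds):
    """Insert-only delta rule, using the OLD r and s: dR⋈S + R⋈dS + dR⋈dS."""
    return join(dr, s) + join(r, ds) + join(dr, ds)


orders = [(1, "o1"), (2, "o2")]   # hypothetical (user_id, order_id) rows
users = [(1, "alice")]            # hypothetical (user_id, name) rows
d_orders = [(2, "o3")]            # newly inserted orders
d_users = [(2, "bob")]            # newly inserted users

old_view = join(orders, users)
new_view = old_view + join_delta(orders, users, d_orders, d_users)

assert new_view == join(orders + d_orders, users + d_users)
print(sorted(new_view))
```

Every other operator (aggregation, outer joins, DISTINCT, window functions, and so on) needs its own rule of this kind, which is where much of the implementation complexity comes from.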

In practice, the relationship between incremental computation and full recomputation is quite similar to that between LNG shipping and pipeline natural gas, a comparison that has often appeared in recent news: LNG shipping is flexible and convenient, requiring no large upfront infrastructure. It can quickly mobilize shipping capacity and deliver gas on demand. This is similar to full recomputation: easy to start, no preprocessing, and each query is executed independently. Pipeline natural gas, on the other hand, requires high upfront construction costs—just as incremental computation requires building the baseline data and refactoring business models and data architectures to fit the incremental paradigm. But once the pipeline is built and stable, the marginal cost of transporting gas is extremely low, and supply can be continuous and efficient.

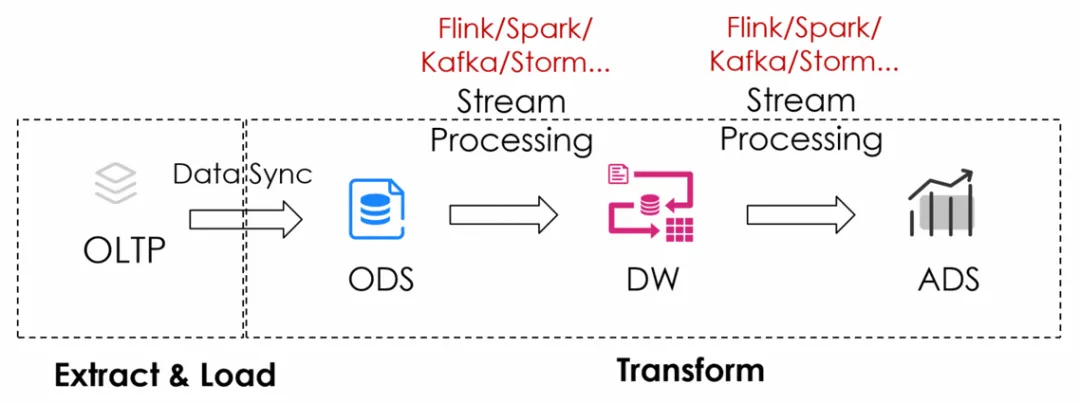

Traditionally, standalone real-time computing engines—such as Flink, Spark Streaming, and Storm—have been the de facto standard in incremental computation. They are widely deployed in production and have been used reliably for years. These systems implement a dedicated stream processing paradigm, which can be seen as a variant of incremental computation. By introducing core concepts such as watermarks and windows, they effectively reduce the complexity of progressive computation and state management over unbounded data streams.

However, this simplification comes at a cost: it essentially trades away semantic precision and control over latency. In real-world applications, businesses often need to adapt significantly by redefining time semantics, tolerating out-of-order data, and accepting results that are "approximately correct" rather than strictly consistent.
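The trade-off can be seen in a minimal Python sketch of tumbling-window counting driven by a watermark. The window length, the allowed delay, and the function name are hypothetical, and real engines such as Flink offer richer options (for example, allowed lateness and side outputs for late data). Once the watermark passes the end of a window, the window is emitted and closed, so an event that arrives afterwards is dropped and the emitted count is only approximately correct.

```python
from collections import defaultdict

WINDOW = 10     # hypothetical tumbling-window length, in seconds
MAX_DELAY = 5   # hypothetical bound on out-of-orderness used for the watermark


def windowed_counts(events):
    """Count events per tumbling window; events are (event_time, payload) in arrival order.

    Returns (emitted, dropped): per-window counts keyed by window start,
    and the late events that arrived after their window had closed.
    """
    open_windows = defaultdict(int)
    emitted, dropped = {}, []
    watermark = float("-inf")
    for ts, payload in events:
        start = ts - ts % WINDOW
        if start + WINDOW <= watermark:
            dropped.append((ts, payload))   # window already closed: late event is lost
            continue
        open_windows[start] += 1
        watermark = max(watermark, ts - MAX_DELAY)
        for w in sorted(open_windows):      # close every window the watermark has passed
            if w + WINDOW <= watermark:
                emitted[w] = open_windows.pop(w)
    emitted.update(open_windows)            # end of input: flush remaining windows
    return emitted, dropped


events = [(1, "a"), (4, "b"), (12, "c"), (17, "d"), (3, "e"), (21, "f")]
emitted, dropped = windowed_counts(events)
print(emitted, dropped)  # {0: 2, 10: 2, 20: 1} [(3, 'e')]
```

The event at time 3 belongs to the first window, whose exact count is 3, but it arrives after the watermark has reached 12, so the result emitted for that window is 2. Choosing a larger delay bound reduces such losses at the price of higher latency.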

In addition, standalone stream processing engines suffer from a significant pain point: redundant data storage and data movement. This is especially true in layered data warehouse architectures, where data flows repeatedly between OLAP data warehouses (such as ClickHouse, Doris, Greenplum, and others) and stream processing engines. This leads to massive duplicate storage and transmission, significant compute and network overhead, fragmented development pipelines and high operational complexity. As a result, both the resource cost and the human maintenance cost are high, and "stream processing is expensive" has become a common perception in the industry.

In contrast to the broad industrial adoption of standalone stream processing engines, traditional database systems offer Incremental View Maintenance (IVM), that is, the incremental maintenance of materialized views. Although IVM has been studied extensively in academia for many years, its industrial-grade implementations and production deployments have long lagged behind those of stream processing engines.

There are several reasons behind this:

StreamingView is the incremental computation engine designed and developed inside AnalyticDB for PostgreSQL at Alibaba Cloud. Its core implementation draws on the ideas of traditional IVM, but its target positioning and engineering realization go significantly beyond prior work.

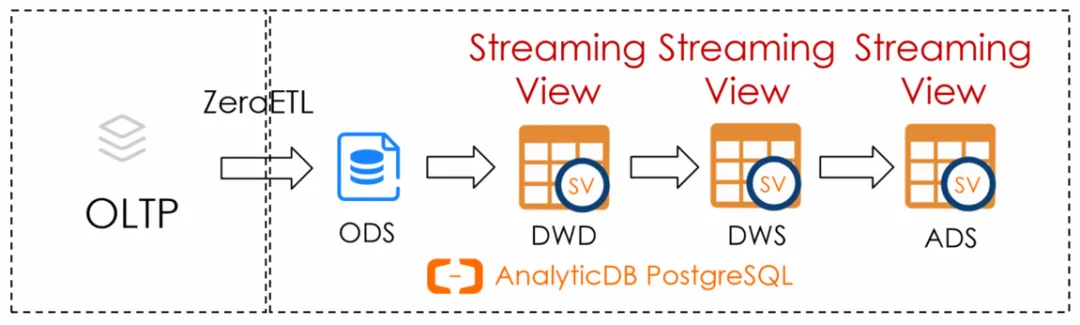

Its primary goal is to address a major pain point of standalone stream processing engines by eliminating the repeated "out-of-warehouse / into-warehouse" movement of data. By avoiding frequent data shuttling between the OLAP data warehouse and external stream engines, StreamingView eliminates storage redundancy, reduces data transmission overhead, simplifies pipeline complexity, and significantly lowers overall resource and maintenance cost.

On this basis, StreamingView deeply integrates IVM's incremental computation theory and builds a complete, native incremental computation paradigm within the data warehouse. Users no longer need to deal with the complex concepts of traditional stream processing—such as watermarks, windows, and state management—yet can still achieve continuously updated real-time computation, greatly reducing the usage barrier.

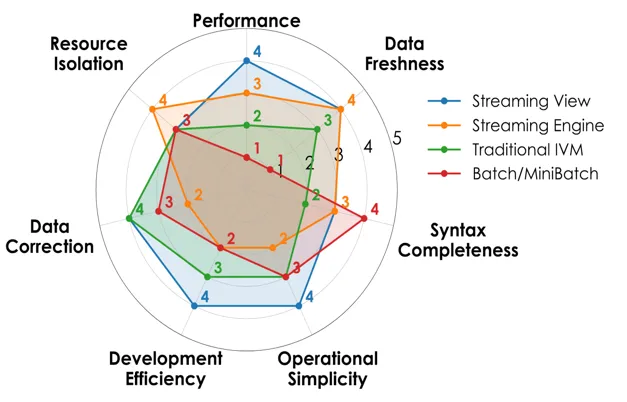

At the same time, StreamingView is not limited to the classic "light-write, heavy-read" query acceleration scenarios of traditional IVM. Instead, it is designed for high-throughput streaming data, adopting an eventual consistency model, and incorporating multiple deep optimizations for incremental workloads. As a result, in terms of data ingestion capability, processing performance, and completeness of SQL support, StreamingView can even surpass some traditional stream processing engines.
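Eventual consistency in this setting can be pictured with a small Python sketch: writers only append changes to a log, a separate maintenance step applies them to the view in batches, and reads between refreshes may see a stale but converging result. The names are hypothetical and this is a generic illustration, not StreamingView's actual mechanism, which this article does not describe; netting out changes per key before applying them is shown only as a common incremental-processing technique.

```python
from collections import Counter, deque


class EventuallyConsistentCountView:
    """Hypothetical view equivalent to SELECT key, COUNT(*) FROM t GROUP BY key."""

    def __init__(self):
        self.change_log = deque()  # pending (key, +1 or -1) changes from writers
        self.view = Counter()      # materialized result; may lag behind the log

    def write(self, key, sign=+1):
        # Writers append and return immediately; they never wait for maintenance.
        self.change_log.append((key, sign))

    def refresh(self, max_batch=1000):
        # Take up to max_batch changes and net them out per key before applying.
        batch = Counter()
        for _ in range(min(max_batch, len(self.change_log))):
            key, sign = self.change_log.popleft()
            batch[key] += sign
        for key, net in batch.items():
            self.view[key] += net
            if self.view[key] == 0:
                del self.view[key]

    def read(self):
        return dict(self.view)


v = EventuallyConsistentCountView()
for k in ["x", "y", "x", "x"]:
    v.write(k)
v.write("y", -1)

v.refresh(max_batch=2)
stale = v.read()        # only the first two changes are visible
v.refresh()             # drain the rest of the log
print(stale, v.read())  # {'x': 1, 'y': 1} {'x': 3}
```

Once the log is drained, the view matches what a full recomputation over all writes would return; in between, readers trade freshness for write throughput.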

In addition, StreamingView includes a large number of practical engineering optimizations tailored to incremental computation in production, substantially improving both performance and stability at scale. For more details, readers are encouraged to refer to the original paper.

In summary, StreamingView enables efficient, low-cost, and robust large-scale incremental computation entirely inside the data warehouse. It has already been widely deployed in multiple large-scale production environments at Alibaba Cloud, supporting typical scenarios such as real-time data warehouses, near real-time analytics, and continuous aggregations, and it has become a core component of the real-time data processing architecture of AnalyticDB for PostgreSQL.

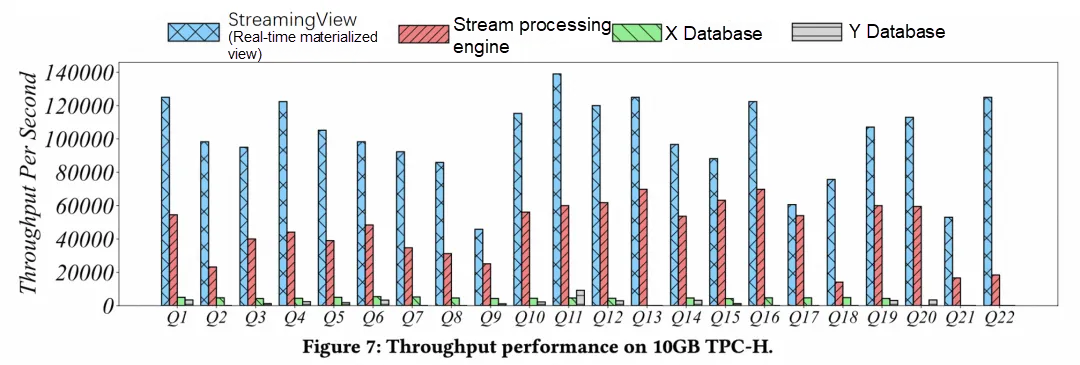

In the experimental section, the paper compares StreamingView with leading stream processing engines and mainstream commercial databases. StreamingView consistently demonstrates significant advantages across multiple dimensions, including incremental computation throughput (on the same hardware resources, the paper reports a throughput advantage of 2–7x or more), performance characteristics, and the breadth of SQL syntax supported for incremental computation.

More importantly, StreamingView has already been integrated into AnalyticDB for PostgreSQL as a cloud service and is available to customers. To date, many customers have adopted StreamingView at scale in production to build complex incremental computation pipelines. For example, the paper presents a real-world online use case: an e-commerce customer runs an AnalyticDB for PostgreSQL cluster with dozens of nodes that manages hundreds of terabytes of data. On top of this data, the customer has built dozens of StreamingViews that form a complex incremental computation workflow, and a significant portion of these computations involve complex SQL over more than 10 tables. On the write side, StreamingView processes tens of thousands to hundreds of thousands of rows of incremental updates per second while maintaining second-level incremental computation latency. During peak events such as major promotions, latency rises only slightly and briefly before quickly returning to normal. This demonstrates StreamingView's ability to handle complex scenarios and its strong adaptability to high-throughput, low-latency, and highly volatile workloads.

The rapid development of generative AI is driving explosive growth in both data volume and data access frequency, pushing the field of data analysis to evolve from the era of full recomputation to the era of incremental computation. In response to this trend, Alibaba Cloud published the VLDB 2025 paper "Streaming View: An Efficient Data Processing Engine for Modern Real-time Data Warehouse of Alibaba Cloud", which systematically introduces the StreamingView (real-time materialized views) feature built into AnalyticDB for PostgreSQL. StreamingView provides a high-performance, low-cost evolution path that helps users smoothly transition from full recomputation to incremental computation. With the convenience of cloud computing, users can easily obtain this capability in the cloud. We welcome more businesses with such needs to try and adopt the StreamingView capability of AnalyticDB for PostgreSQL through Alibaba Cloud.

Learn more in the documentation: https://www.alibabacloud.com/help/en/analyticdb/analyticdb-for-postgresql/user-guide/real-time-materialized-views
