By Baotiao

It is 2026. Can AI modify database kernel code? I used Claude Code to replace PostgreSQL's Full Page Write (FPW) with MySQL's Doublewrite Buffer (DWB), and the resulting performance differed by 3×.

I have pondered for a long time which is more reasonable: FPW or DWB. I previously posted a thread on the pgsql-hackers mailing list to discuss this matter, but no conclusion was reached.

In 2026, I wanted to test whether Claude Code could modify kernel code and take the opportunity to compare the two schemes through actual code. Show me the code — thus, this experiment came to be.

Databases manage data in units of pages. The default size is 8 KB for PostgreSQL and 16 KB for MySQL. However, the atomic write unit of the operating system and disk is generally 4 KB.

This means that writing a complete database page to disk requires multiple physical I/Os. If a power outage occurs or the system crashes when the writing is halfway done, the page is only partially written — a Torn Page. The new and old data are mixed, and the page becomes corrupted.

PostgreSQL and MySQL use completely different methods to solve this problem.

After each Checkpoint, when a data page is modified for the first time, PostgreSQL writes the content of the entire page to the Write Ahead Log (WAL). During crash recovery, the complete page is retrieved from the WAL to overwrite the corrupted one, and then the subsequent WAL records are replayed.

However, FPW has a critical problem: It turns the Checkpoint frequency into a dilemma.

FPW favors fewer Checkpoints because after each Checkpoint, the first modification of a large number of pages requires writing the full page. Consequently, the WAL volume surges, and the write performance plummets. The checkpoint_timeout of PostgreSQL must be at least 30 seconds. Of course, a checkpoint may also be triggered when the size exceeds max_wal_size.

However, database theory favors more Checkpoints. The more frequent the Checkpoint, the less WAL data needs to be replayed during crash recovery, and the faster the recovery.

These two requirements are conflicting: FPW requires fewer Checkpoints, while rapid recovery requires more Checkpoints.

The approach of InnoDB is completely different. Before dirty page flushing, multiple dirty pages are sequentially written to a dedicated Doublewrite Buffer area on the disk. When the buffer is full, an fsync() is performed, and then these pages are written to their respective data file locations in a scattered manner.

What happens in the event of a crash? During a restart, the system checks whether a complete page replica exists in the Doublewrite Buffer. If so, the replica is used to recover the corrupted data page. The Torn Page problem ceases to exist.

If Data Merge is not considered:

• FPW = 1 WAL write + 1 data page write

• DWB = 2 data page writes

Both involve 2 I/Os. However, the WAL write is on the foreground path, which directly impacts the SQL latency of the User. The DWB write is on the background dirty page flushing path, which the User is basically unaware of.

DWB does not need to perform fsync() for every write. It syncs only when a Buffer is full. Although WAL write can also perform batching, it is on the foreground path. You cannot let the User wait for too long, so the batch space is limited.

DWB does not rely on Checkpoint to prevent Torn Page. Therefore, you can increase the Checkpoint frequency at will to accelerate crash recovery without the side effect of write amplification.

Main configuration:

shared_buffers=4GB wal_buffers=64MB synchronous_commit=on maintenance_work_mem=2GB checkpoint_timeout=30s For each scenario, the database is rebuilt. Before the scenario is run, a VACUUM FULL and 60 seconds of prefetch are performed. Each payload is run for 300 seconds.

Scenario: io-bound, —tables=10 —table_size=10000000

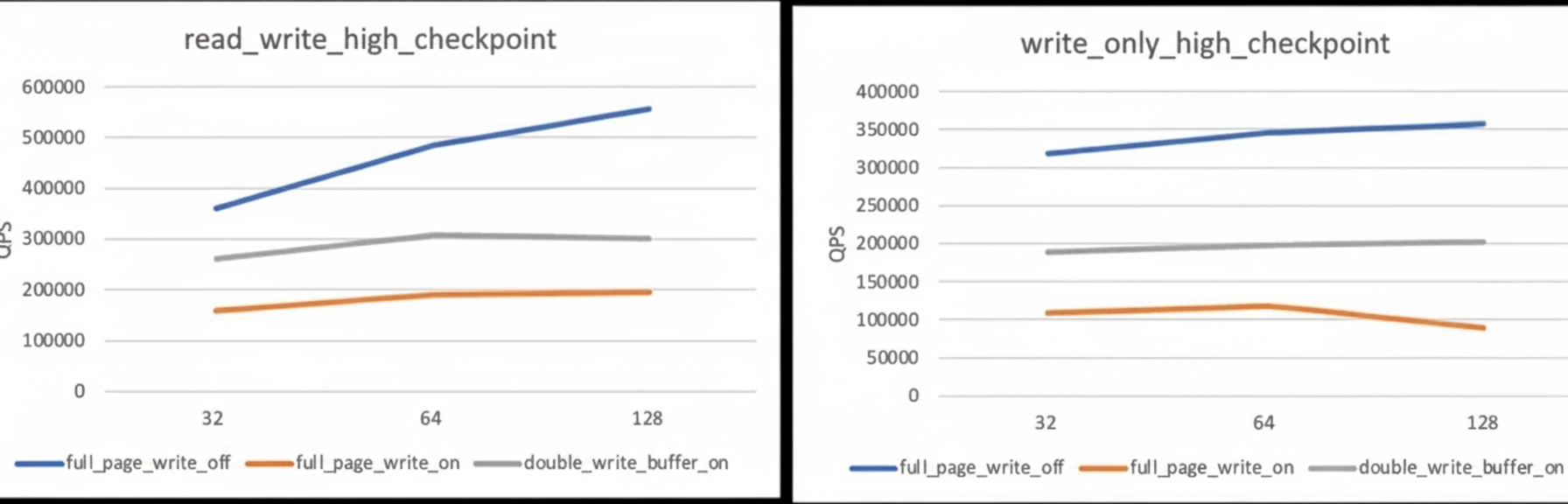

The results are as follows:

| Scenario | Concurrency | FPW OFF (QPS) | FPW ON (QPS) | DWB ON (QPS) | FPW OFF (TPS) | FPW ON (TPS) | DWB ON (TPS) | FPW OFF (ms) | FPW ON (ms) | DWB ON (ms) |

|---|---|---|---|---|---|---|---|---|---|---|

| read_write | 32 | 360,764 | 158,865 | 260,171 | 18,038 | 7,943 | 13,009 | 1.77 | 4.03 | 2.46 |

| read_write | 64 | 484,988 | 190,654 | 307,735 | 24,249 | 9,533 | 15,387 | 2.64 | 6.71 | 4.16 |

| read_write | 128 | 556,021 | 194,301 | 301,791 | 27,801 | 9,715 | 15,387 | 4.60 | 13.17 | 9.81 |

| write_only | 32 | 318,879 | 108,696 | 188,760 | 53,146 | 18,116 | 31,460 | 0.60 | 1.77 | 1.02 |

| write_only | 64 | 345,766 | 117,533 | 197,251 | 57,628 | 19,589 | 32,875 | 1.11 | 3.27 | 1.95 |

| write_only | 128 | 356,725 | 89,144 | 202,884 | 59,454 | 14,857 | 33,814 | 2.15 | 8.61 | 3.78 |

The result is obvious. Disabling FPW yields the best performance and serves as the baseline. After FPW is enabled, performance drops to ~25% of the baseline. However, DWB can maintain ~57%. In the write_only scenario with 128 concurrent connections, the throughput of DWB is 2.3 times that of FPW. The latency is also comprehensively better than that of FPW.

The modified code is here: https://github.com/baotiao/postgres

The entire kernel modification was completed using Claude Code. This verifies that AI is indeed capable of modifying database kernel code.

How the PolarDB-X JDBC Driver Implements High Availability Detection

Alibaba Cloud Community - January 30, 2026

Alibaba Cloud Native Community - March 25, 2026

Alibaba Cloud Native Community - March 30, 2026

Alibaba Cloud Big Data and AI - January 21, 2026

Alibaba Cloud Community - December 4, 2025

CloudSecurity - January 30, 2026

PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More Container Compute Service (ACS)

Container Compute Service (ACS)

A cloud computing service that provides container compute resources that comply with the container specifications of Kubernetes

Learn More Container Service for Kubernetes

Container Service for Kubernetes

Alibaba Cloud Container Service for Kubernetes is a fully managed cloud container management service that supports native Kubernetes and integrates with other Alibaba Cloud products.

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn MoreMore Posts by ApsaraDB