By Wang Chen

📌 "Harness" comes from the gear used to control a horse. The horse is the powerful AI model, which is hard to control because of its black-box nature; the harness is the reins, saddle, and protective gear, that is, the engineering management around the model; the rider is the human engineer who clarifies intent, designs the environment, and builds feedback loops.

In February 2026, OpenAI published a technical blog titled "Harness Engineering: Leveraging Codex in an Agent-First World"[1]. The article revealed an astonishing experiment: a team consisting of only 3 engineers (later expanded to 7 people) generated over 1 million lines of production-level code with Codex Agent in just 5 months, merging approximately 1500 Pull Requests, without a single line of code being handwritten by humans. However, what truly sparked industry discussion was not the figure of "AI wrote 1 million lines of code" itself, but rather the new engineering paradigm it proposed: Harness Engineering.

As the widely circulated Medium article metaphorically explained: a dragon has entered our living room. It is smart, powerful, and seems relatively tame for now. But the dragon will grow, and we don’t need thicker chains; we need a complete harnessing system, including reins, saddles, and protective gear, as well as a rider who knows how to coexist with the dragon.

To understand Harness Engineering more profoundly, let’s broaden our perspective to the larger scale of technological history:

The steam engine unleashed physical power far beyond human muscles. But the steam engine itself didn’t know what to drive, how fast to turn, or when to stop. Thus, humans invented flywheel governors, safety valves, and drive systems; these were the "Harnesses" of the industrial revolution. Without them, the steam engine would have been just a dangerous kettle.

Computers released computational power far beyond the human brain. But a bare machine doesn’t know what to compute. So humans invented operating systems, programming languages, and software engineering methodologies. From the waterfall model to agile development, from assembly to high-level languages, every step built a better "Harness" for controlling computation.

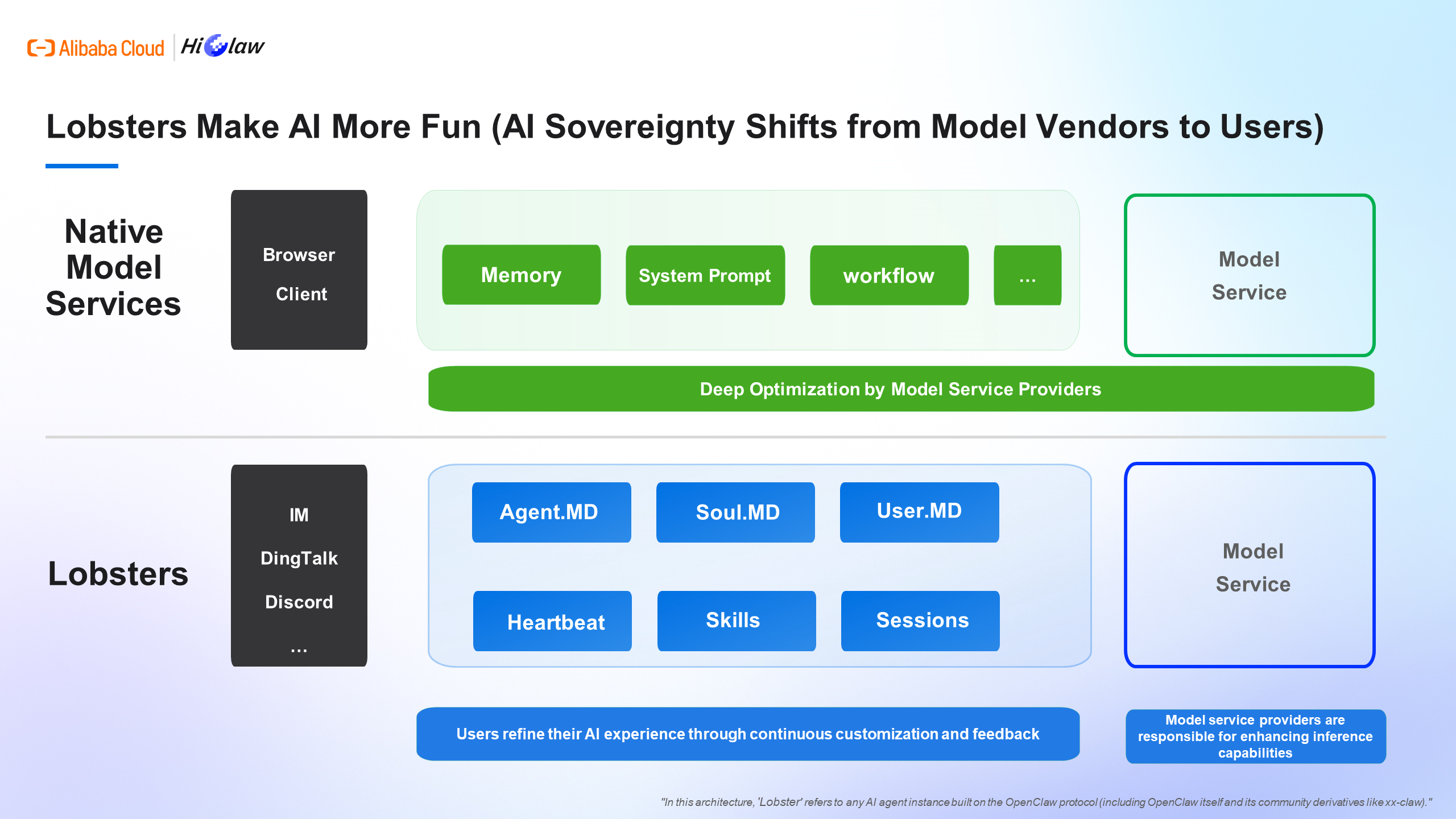

Large language models unleashed cognitive power far beyond that of any individual human; they can plan, reason, and generate independently. Yet the models themselves don’t know what problems to solve, what constraints to follow, or how to operate reliably in the real world. Harness Engineering combines the roles of operating system and software engineering methodology for the age of AI. It encompasses memory, system prompts, knowledge bases, orchestration, and the text files used under the Agent paradigm, such as Agent.md, Soul.md, and User.md, all aimed at communicating better with the models.

The emergence of Harness Engineering signals that AI harness systems are starting to take shape. Before discussing Harness Engineering, however, we should review the two stages that preceded it: prompt engineering and context engineering.

● Core Question (prompt engineering): How do we communicate with the model?

● Human Role: Users meticulously craft the wording, formatting, and examples of each instruction, trying to coax the correct answer out of the black box. Few-shot, Chain-of-Thought, role-playing, and similar techniques all work within a fixed dialogue window.

● Limitations: Single-turn, stateless, and highly dependent on individual experience; more an artisan skill than engineering.

● Core Question (context engineering): What should the model see?

● Human Role: The role shifts from user to Agent Builder. Builders systematically design, construct, and maintain a dynamic system that gives the Agent the appropriate context at every step of a task, including knowledge bases, tool invocations, and memory management. The focus shifts from what the user should say to what Builders let the model see, so that the model understands the user better.

● In June 2025, Andrej Karpathy clearly stated: Context engineering is far more important than prompt engineering.

● Core Question (Harness Engineering): How should the entire environment operate?

● Human Role: The role returns from Agent Builder to the user, who designs a complete operating environment, including constraints, feedback loops, automated validation, entropy management, and lifecycle governance.

● Personally, I believe Harness Engineering resonates at this stage largely because of the emergence of OpenClaw, which shifts AI sovereignty from model vendors to users. Rights come with responsibilities: having the power to direct Agents also means learning to harness them and to coexist with them.

Image Source: ApsaraDB's Xiaogao Database Hangzhou Station (In this architecture, 'Lobster' refers to any AI agent instance built on the OpenClaw protocol (including OpenClaw itself and its community derivatives like xx-claw).)

By this point, you might have a reasonable doubt: Is Harness Engineering just a repackaging of good software engineering practices? Writing good documentation, establishing solid feedback loops, running CI... haven’t we always been doing these things? This doubt deserves serious attention. Let’s look at 4 real cases.

Source: Can Duruk, "I Improved 15 LLMs at Coding in One Afternoon", 2026.02[2]

Independent developer Can Duruk maintains an open-source coding Agent framework. He discovered a widely overlooked issue: the editing tools that Agents use to modify code files are a significant source of failure.

The industry currently has three mainstream editing methods: OpenAI's apply_patch (the model must generate a diff in a specific format), Claude Code's str_replace (the model must reproduce every character of the old text exactly), and a dedicated 70B merge model trained by Cursor. Each has serious defects; for example, Grok 4 fails on as many as 50.7% of edits when using the patch format.

He designed a new solution called Hashline: when the model reads a file, each line is accompanied by a 2-3 character content hash tag. When editing, the model only needs to reference these tags instead of reproducing the original text.

    // File content seen by the model:
    1:a3| function hello() {
    2:f1|   return "world";
    3:0e| }

    // Model's edit instruction:
    "replace line 2:f1 with: return 'universe';"

Result: 16 models, 3 editing tools, 180 tasks, each task run 3 times. Hashline matched or surpassed traditional solutions on almost all models. In the most extreme case, Grok Code Fast 1's success rate soared from 6.7% to 68.3%, a tenfold increase! The output tokens of Grok 4 Fast also decreased by 61%.

In traditional software engineering, whether humans use VS Code or Vim doesn’t affect code quality. But in the Agent world, the interface design through which models express intentions directly determines their ability to transform correct ideas into correct code. Can Duruk put it this way: "It's not the pilot you should blame; the problem lies with the landing gear."
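The Hashline idea described above can be sketched in a few lines of Python. This is a minimal illustration, not Can Duruk's implementation: the function names, the choice of SHA-1, and the two-character tag length are assumptions made for the example.

```python
import hashlib


def line_hash(line: str) -> str:
    # Two hex characters of a SHA-1 digest. The real tool's hash function
    # is not described in the article; this choice is an assumption.
    return hashlib.sha1(line.encode("utf-8")).hexdigest()[:2]


def tag_lines(text: str) -> list[str]:
    """Render each line as '<number>:<hash>| <content>' for the model to read."""
    return [
        f"{n}:{line_hash(line)}| {line}"
        for n, line in enumerate(text.splitlines(), start=1)
    ]


def replace_line(text: str, ref: str, new_line: str) -> str:
    """Replace the line cited as '<number>:<hash>', rejecting stale references."""
    number_str, expected = ref.split(":")
    lines = text.splitlines()
    index = int(number_str) - 1
    if not 0 <= index < len(lines):
        raise ValueError(f"line {number_str} does not exist")
    if line_hash(lines[index]) != expected:
        # The file changed since the model read it, so refuse the edit.
        raise ValueError(f"hash mismatch on line {number_str}")
    lines[index] = new_line
    return "\n".join(lines)


source = 'function hello() {\n  return "world";\n}'
tagged = tag_lines(source)
ref = tagged[1].split("|")[0]  # the tag the model would cite for line 2
edited = replace_line(source, ref, "  return 'universe';")
```

The model never has to reproduce the old text; it only cites a short tag, and the hash check catches edits aimed at a line that has since changed.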

Source: AgentsMesh Developer, "52 Days, 350K Lines Solo", Reddit r/ClaudeAI, 2026.03

An independent developer built 350,000 lines of production code on their own using an AI Agent in just 52 days. They discovered a phenomenon not present in traditional development: technical debt can be exponentially amplified by Agents.

When you make a temporary compromise, such as bypassing the Service layer to query the database directly or hardcoding a magic number, the Agent treats that pattern as a "precedent." The next time it generates similar functionality, the reuse is not occasional but systematic. A human engineer who runs into bad code usually recognizes it as a landmine and steers clear. Agents do not; they see a pattern present in the codebase and regard it as a legitimate solution.

When good practices dominate, Agents amplify those good practices; when shortcuts dominate, Agents amplify those shortcuts.

In traditional software engineering, technical debt accumulates linearly; a bad pattern may be imitated by a few people, but its propagation speed is limited by team size and code review. In Agent collaborative development, technical debt becomes a self-replicating virus: one bad pattern can be copied by Agents to every corner of the codebase within hours.

This calls for a completely new "codebase hygiene" strategy, as described in the OpenAI article mentioned at the beginning:

Run cleanup Agents regularly, like garbage collectors. The OpenAI team used to spend 20% of its time, every Friday, cleaning up "AI garbage," but found that this did not scale and could not keep up with decay. Instead, they encoded "taste" into automated rules.

Here, taste includes:

● Prefer using shared utility packages rather than manually written auxiliary tools, to manage invariants in a centralized manner;

● Do not probe data "YOLO-style"; validate at boundaries or rely on typed SDKs, so that Agents cannot inadvertently build on guessed structures.

● Regularly run a suite of background Codex tasks that scan for deviations, update quality grades, and open targeted refactoring Pull Requests. Most of these can be reviewed in under a minute and merged automatically, functioning much like garbage collection.
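To make "taste encoded as automated rules" concrete, here is a minimal sketch of a rule checker that a background task could run over the codebase. It is not OpenAI's tooling; the rule names, directory layout (`services/`, `shared/`), and the `db.query` identifier are hypothetical and exist only for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern[str]
    allowed_prefixes: tuple[str, ...]  # paths where the pattern is legitimate
    advice: str                        # remediation hint handed back to the Agent


# Hypothetical rules; paths and identifiers are illustrative assumptions.
RULES = [
    Rule(
        name="no-direct-db-access",
        pattern=re.compile(r"\bdb\.query\("),
        allowed_prefixes=("services/",),
        advice="Call the Service layer instead of querying the database directly.",
    ),
    Rule(
        name="no-local-retry-helper",
        pattern=re.compile(r"^def retry\w*\(", re.MULTILINE),
        allowed_prefixes=("shared/",),
        advice="Use the shared utility package rather than a hand-written helper.",
    ),
]


def check(files: dict[str, str]) -> list[str]:
    """Return one 'path:line: [rule] advice' string per violation."""
    findings = []
    for path, text in sorted(files.items()):
        for rule in RULES:
            if path.startswith(rule.allowed_prefixes):
                continue
            for match in rule.pattern.finditer(text):
                line_no = text.count("\n", 0, match.start()) + 1
                findings.append(f"{path}:{line_no}: [{rule.name}] {rule.advice}")
    return findings


files = {
    "services/orders.py": "rows = db.query('SELECT 1')\n",
    "handlers/orders.py": "import x\nrows = db.query('SELECT 1')\n",
}
findings = check(files)
```

Because each finding carries its own remediation hint, the same output can be fed to an Agent as an instruction, so a bad pattern is flagged the first time it appears rather than after it has been copied across the codebase.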

Technical debt is like a high-interest loan: continuously repaying the debt in small amounts is much better than letting it accumulate and then suffering through a painful one-off settlement. Once human taste is captured, it continues to apply to every line of code. This also encourages us to discover and resolve bad patterns daily, rather than allowing them to spread in the codebase for days or weeks.

Source: HumanLayer, "Skill Issue: Harness Engineering for Coding Agents", 2026.03 [3]

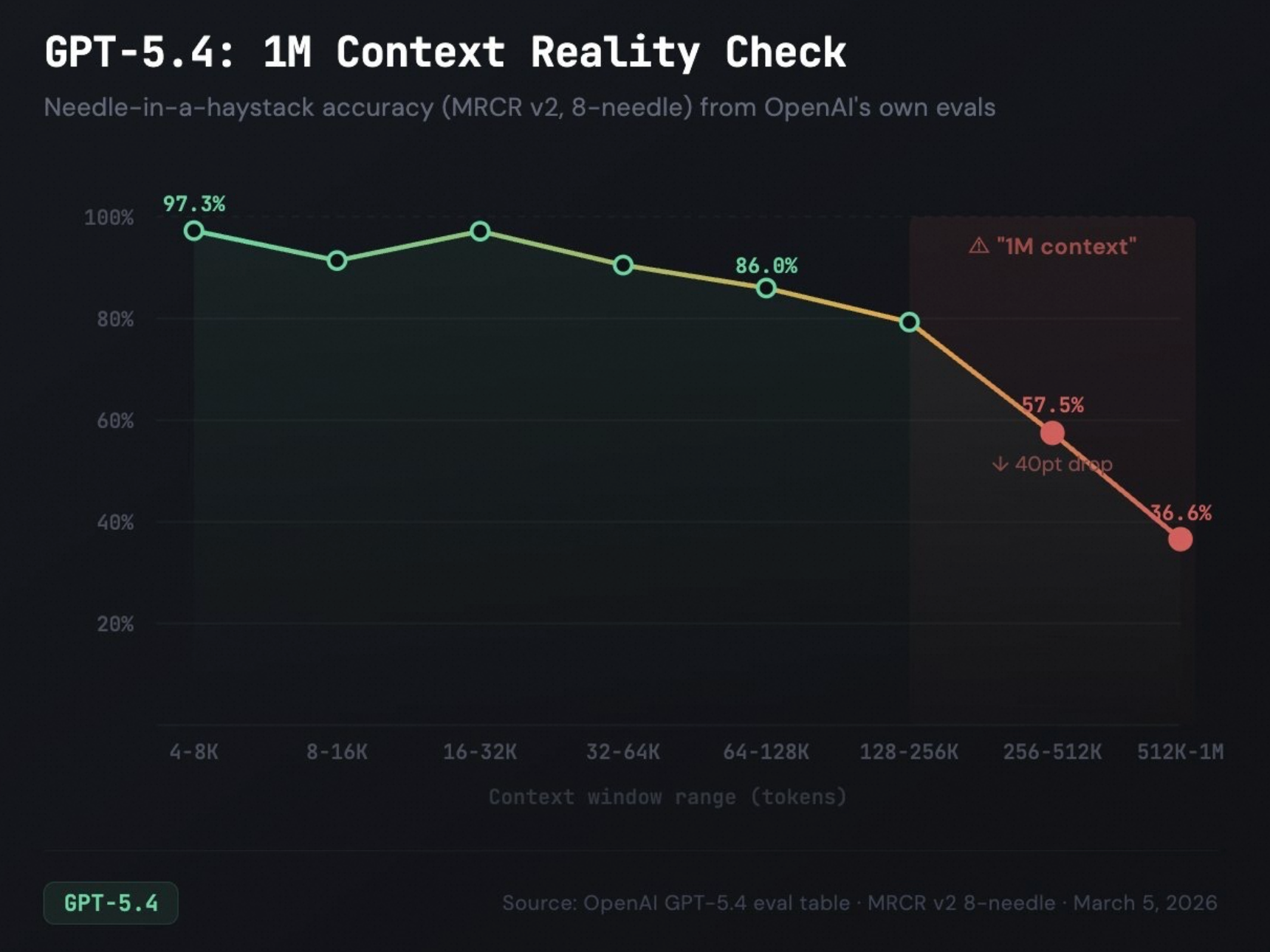

The HumanLayer team discovered a core issue in numerous enterprise-level brownfield projects: the context windows of Agents deteriorate as work progresses. Each tool invocation, each file read, and each grep result leaves residues in the context. When the context expands to a certain extent, the Agent enters what they call the "idiot zone," where even simple tasks start to go awry.

The study provides empirical support: 18 models showed a significant decline in performance on the Terminal Bench 2.0 test cases as context length increased, and deterioration was more severe when unrelated low-semantics information was present in the context.

HumanLayer’s solution is not to "expand the context window" but to introduce sub-Agents as "context firewalls":

● The parent Agent is responsible for planning and orchestration, using an expensive model with strong reasoning ability (like Opus).

● The sub-Agent executes specific tasks in isolated context windows, using inexpensive, fast models (like Sonnet).

● The sub-Agent only returns highly compressed results + source references, avoiding contamination of the parent Agent's context in the process.

● The parent Agent always remains in the "smart zone," maintaining coherence across dozens of sub-tasks.
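The division of labor in the list above can be sketched as follows. This is a toy model under stated assumptions, not HumanLayer's code: `ParentAgent`, `SubAgentResult`, and `grep_worker` are hypothetical names, and a plain function stands in for the inexpensive sub-Agent model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SubAgentResult:
    summary: str        # highly compressed answer
    sources: list[str]  # references the parent can follow up on


Worker = Callable[[str, list[str]], SubAgentResult]


@dataclass
class ParentAgent:
    context: list[str] = field(default_factory=list)

    def delegate(self, task: str, worker: Worker) -> SubAgentResult:
        scratch: list[str] = []  # isolated context, discarded after the call
        result = worker(task, scratch)
        # Only the compressed result and its sources cross the firewall.
        sources = ", ".join(result.sources)
        self.context.append(f"{task} -> {result.summary} (sources: {sources})")
        return result


def grep_worker(task: str, scratch: list[str]) -> SubAgentResult:
    # Stand-in for a cheap model making many noisy tool calls.
    for i in range(500):
        scratch.append(f"grep output line {i}")
    # The summary and source below are made-up placeholder data.
    return SubAgentResult(
        summary="retry logic lives in the HTTP client module",
        sources=["http_client.py"],
    )


parent = ParentAgent()
parent.delegate("find retry logic", grep_worker)
```

After the call, the parent's context holds a single line, while the 500 lines of tool output stay in the sub-Agent's scratch space and are thrown away.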

Alibaba's recently open-sourced HiClaw project, which employs a Manager-Workers architecture, can also be seen as a form of "context firewall": the Manager assigns tasks, and each Worker has its own responsibilities, which prevents memory overflow or contamination and keeps the Agent out of the "idiot zone."

In traditional software engineering, context management is performed automatically by the human brain; we do not need to worry about forgetting project architecture after reading too many code files. However, the context window of an LLM is a limited and degrading resource. The context firewall model provided by sub-Agents or multiple Agents is a completely new architectural mode that is neither a microservice, a message queue, nor a replica of any traditional distributed system concept. It solves a problem that only arises when a non-human cognitive entity is executing tasks: how to complete work that requires infinite attention within a limited attention budget.

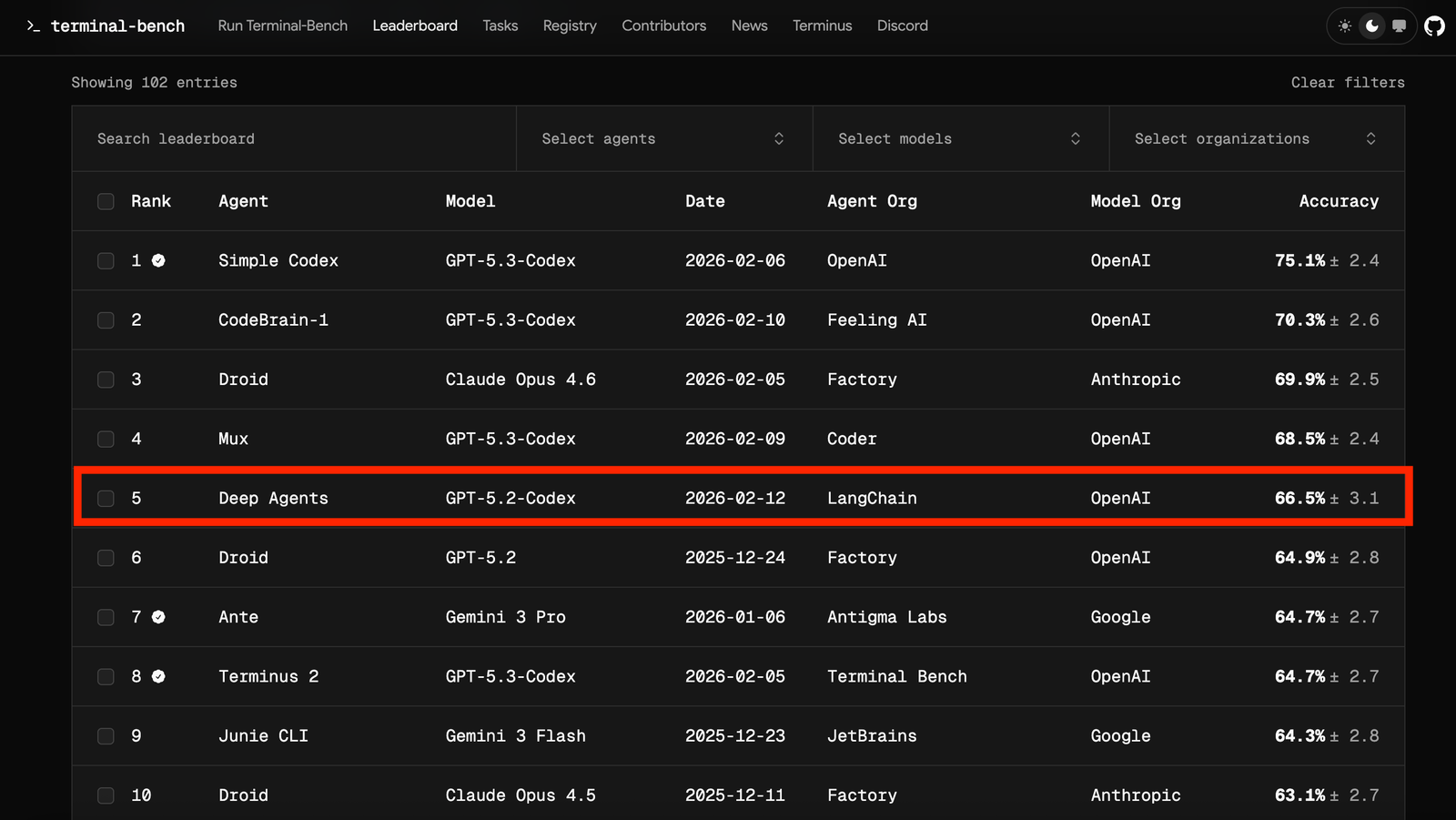

Source: HumanLayer's practice + LangChain "Improving Deep Agents" [4]

The HumanLayer team initially made a seemingly reasonable mistake: running the full test suite every time the Agent modified code. As a result, the output of 4000 passing tests flooded the context window, and the Agent began to hallucinate about the test files it had just read, losing track of the actual task.

They concluded one counterintuitive principle: "Success should be silent; only failure should make noise."

They wrote a Hook script for Claude Code: when the Agent stops working, it automatically runs formatting and TypeScript type checks. If everything passes, it remains completely silent, injecting nothing into the context. If a check fails, it outputs only the error information and uses an exit code to tell the Harness to reactivate the Agent to resolve the issue.
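A minimal sketch of the "silent success" pattern follows. It is not HumanLayer's script: `run_checks` and `main` are hypothetical names, and the exit code 2 is an assumption about how the harness signals "re-engage the Agent"; check your harness's hook documentation for the actual convention.

```python
import subprocess
import sys

# Example checks for a TypeScript project; adjust to the project's own tooling.
DEFAULT_COMMANDS = [
    ["npx", "prettier", "--check", "."],
    ["npx", "tsc", "--noEmit"],
]


def run_checks(commands: list[list[str]]) -> tuple[int, str]:
    """Run each check; return (0, '') if all pass, else (2, failure output only)."""
    errors = []
    for cmd in commands:
        proc = subprocess.run(cmd, capture_output=True, text=True)
        if proc.returncode != 0:
            # Keep only the failing command's output; passing checks add nothing.
            errors.append(f"$ {' '.join(cmd)}\n{proc.stdout}{proc.stderr}".strip())
    if not errors:
        return 0, ""
    # Assumed convention: a non-zero code tells the harness to wake the Agent.
    return 2, "\n\n".join(errors)


def main(commands: list[list[str]]) -> int:
    code, report = run_checks(commands)
    if report:
        print(report, file=sys.stderr)  # failures are the only thing ever printed
    return code
```

A hook entry point would call `sys.exit(main(DEFAULT_COMMANDS))`. When every check passes, the script prints nothing at all, so no tokens are spent on success.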

LangChain's practice goes a step further: they designed a PreCompletionChecklistMiddleware that intercepts the Agent when it attempts to submit, forcing it to validate against task specifications. Meanwhile, a LoopDetectionMiddleware tracks the number of repeated edits to the same file, injecting a prompt saying "Maybe you should consider a different approach" after N instances, helping the Agent break out of loops.
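The loop-detection idea can be illustrated with a small counter. This sketch does not use LangChain's middleware API; the `LoopDetector` class and its `max_edits` threshold are hypothetical stand-ins for the behavior described above.

```python
from collections import Counter
from typing import Optional


class LoopDetector:
    """Counts edits per file and returns a nudge once a threshold is reached."""

    def __init__(self, max_edits: int = 3) -> None:
        self.max_edits = max_edits
        self.edits: Counter = Counter()

    def record_edit(self, path: str) -> Optional[str]:
        """Call after every file edit; returns text to inject, or None."""
        self.edits[path] += 1
        if self.edits[path] >= self.max_edits:
            return (
                f"You have edited {path} {self.edits[path]} times. "
                "Maybe you should consider a different approach."
            )
        return None


detector = LoopDetector(max_edits=3)
nudges = [detector.record_edit("src/app.ts") for _ in range(3)]
```

The first two edits return `None`, so nothing is added to the context; only the third produces a message for the harness to inject.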

The result is that LangChain's coding agents rose from the top 30 to the top 5 in the Terminal Bench 2.0 tests.

Traditional CI/CD feedback loops are designed for humans: the more detailed the test report, the better, because humans need to understand the reasons for failures. Feedback loops for Agents, however, need to be context-window friendly, with information tightly controlled; success signals must be compressed to zero, while failure signals must be distilled to the minimal actionable unit. The "loop detection" and "forced validation" mechanisms are also unique to Agents: human engineers do not need reminders like "You’ve edited the same file 10 times" or forced checks against requirement documents before submission. These are compensatory mechanisms specifically designed for the behavioral flaws of non-human cognitive entities.

The same model, different Harness, radically different results. These 4 cases illustrate that an Agent's competitive advantage lies not only in which model you use but also in how you construct the Harness. Harness becomes the moat, not just for Agent Builders but also for Agent Users.

Stories of efficiency improvements are no longer sexy enough; business innovation is the strongest motivation for enterprises to pay for Tokens.

Harness Engineering aims not only to enable individual Agents to work more reliably but also to optimize cooperation between multiple Agents, accelerating business innovation through collective intelligence. Collective intelligence enhances business innovation by overcoming knowledge silos between roles and creative decay due to cross-role collaboration.

This topic is being pushed to the forefront of practice by a range of open-source projects.

Source: Data Intelligence Lab at the University of Hong Kong (HKUDS), github.com/HKUDS/CLI-Anything

AI Agents can reason, write code, and search, but can they open GIMP to remove the background from an image, or use Blender to render a 3D scene? They cannot. GUIs are designed for humans, not for Agents.

CLI-Anything is a Claude Code plugin that analyzes the source code of any software and automatically generates a production-grade command line interface (CLI), able to invoke real application backends, including LibreOffice generating true PDFs, Blender rendering real 3D scenes, Audacity processing real audio through sox, and more.

One command does all the work: /cli-anything <path-or-repo>. It runs a fully automated 7-stage pipeline spanning analysis → design → implementation → testing → documentation → release, and outputs a pip-installable Python package.

Each generated CLI comes with a SKILL.md, a machine-readable capability description file. This means Agents can automatically discover what other Agents can do at runtime, dynamically forming collaborative relationships. This is the infrastructure for collective intelligence.

Source: Alibaba Cloud, github.com/alibaba/hiclaw/tree/main

However, CLI-Anything only solves part of the problem.

Imagine a company with more than 10 key departments: architects, product managers, front-end developers, back-end developers, marketing, PR, supply chain... each department has unique skills and knowledge bases. You may find that building collective intelligence based on a monolithic architecture like OpenClaw faces:

● Poor scalability: Users can't freely combine or introduce new Agents as needed; redeployment must be done by the operations team or AI platform.

● Lack of model freedom: All Agents can only use default models, incapable of freely replacing or comparing effects.

● The more you talk, the more expensive and ineffective it becomes: When multiple Agents collaborate in one room, the longer the memory and the more Skills available, the more likely the context is to become polluted.

● FinOps is difficult to implement: Token consumption is uncontrollable; you cannot implement FinOps through flexible model use or file sharing, and ROI is hard to demonstrate.

These are the issues that HiClaw intends to resolve.

● Designed a Manager-Workers architecture: Users can flexibly create Workers representing various roles and introduce custom Agents as Workers; all Workers' Skills and memories are independently stored to prevent pollution.

● Each Agent supports customization: OpenClaw, Copaw, NanoClaw, ZeroClaw, and custom Agents developed by enterprises can each be freely configured with a backend model, for example the Bailian Coding Plan for code generation and local Qwen open-source models for text writing, which aids FinOps.

● Introduced MinIO shared file system: Used for information sharing between Agents, significantly reducing Token consumption brought about by multi-Agent collaboration.

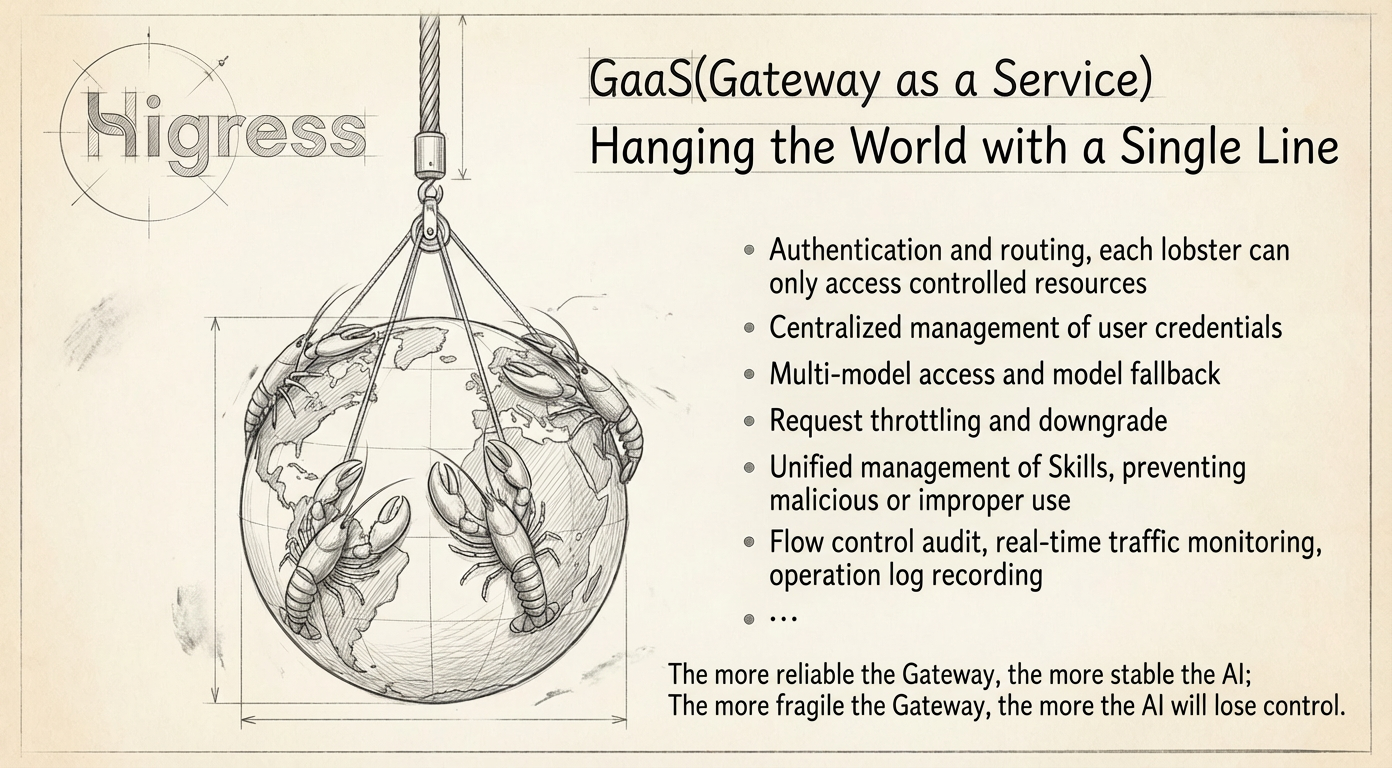

● Introduced Higress AI Gateway: Implements authentication and routing (each lobster can only access controlled resources), credential and access security (centralized management of user credentials and safety barriers), backend stability (multi-model access, model fallback, rate limiting, and degradation), unified management of Skills to prevent malicious or improper use, entry monitoring (QPS, authorization failure rates, Token quota consumption, MCP service latency), and security auditing (request records, operation logs, etc.). The importance of the AI Gateway is illustrated in the accompanying figure.
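Of the gateway capabilities listed above, model fallback is the easiest to show in code. The sketch below is a generic illustration of the pattern, not Higress's API or configuration: `call_with_fallback` and the two stand-in model functions are hypothetical.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def call_with_fallback(prompt: str, models: list[tuple[str, ModelFn]]) -> tuple[str, str]:
    """Try each (name, model) in order; return (name, reply) from the first success."""
    failures = []
    for name, model in models:
        try:
            return name, model(prompt)
        except Exception as exc:  # a real gateway would match specific error classes
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(failures))


def flaky_primary(prompt: str) -> str:
    # Stand-in for a hosted model that is currently unavailable.
    raise TimeoutError("upstream timed out")


def local_backup(prompt: str) -> str:
    # Stand-in for a locally deployed open-source model.
    return f"[backup] {prompt}"


used, reply = call_with_fallback(
    "Summarize the meeting notes",
    [("primary", flaky_primary), ("backup", local_backup)],
)
```

Placing this logic in a gateway rather than in each Agent means every Worker gets the same fallback behavior, and the ordered model list becomes a single place to control cost.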

Let’s look at a practical case of collective intelligence built on HiClaw. An automotive manufacturer plans to produce a 70 million luxury car and sets up N roles to hold 100 rounds of discussion and deliver a result.

In this case, we selected three target users with different identities to engage in free discussion. During these 100 rounds of dialogue, they passionately discussed aspects such as brand perception, comfort needs, safety/privacy, brand socializing, and soft values.

As the transcript is long, interested readers can view it at the following address.

https://github.com/alibaba/hiclaw/issues/405

Harness Engineering enables enterprises to have a digital intelligent team that can be orchestrated, governed, and sustainably evolved. Individual efficiency improvements are linear, while the emergence of collective intelligence is exponential. Open-source projects like CLI-Anything and HiClaw are explorations and practices of Harness Engineering under collective intelligence.

[1] https://openai.com/index/harness-engineering/

[2] https://blog.can.ac/2026/02/12/the-harness-problem/

[3] https://www.humanlayer.dev/blog/skill-issue-harness-engineering-for-coding-agents

[4] https://blog.langchain.com/improving-deep-agents-with-harness-engineering/
