Large Language Models (LLMs) have achieved great success in understanding and generating contextually coherent conversations. However, their inherent memory limitation, specifically the limited context window, critically compromises their ability to maintain consistency in long-term interactions across sessions and applications. Once a conversation exceeds the context length, the LLM behaves like an amnesiac partner: it forgets user preferences, repeats questions, and contradicts previously established facts. Imagine this scenario: you tell an AI assistant that you are a vegetarian and do not eat dairy. A few days later, you ask it for dinner recommendations, and it suggests roast chicken. An experience like this weakens user trust in, and reliance on, AI.

To address this, ApsaraDB PolarDB for PostgreSQL introduces a new one-stop memory management AI application. It helps agents achieve continuous retention of user preferences, factual backgrounds, and historical interaction information across sessions and applications. This solves the core pain points of limited context windows and cross-session memory loss in LLMs.

Low development and O&M efficiency: Current mainstream memory frameworks are retrieval-based memory systems whose backend must connect to multiple stores, such as relational databases, vector databases, and graph databases, which makes data consistency hard to guarantee. As AI drives rapid business evolution, enterprise customers find it difficult to manage all the underlying facilities themselves, including memory engines, storage, databases, and model services.

Poor memory generation and retrieval: Many enterprise customers want to build their own memory systems. However, incomplete extraction of memory facts and preferences omits key information. The long end-to-end pipeline of the memory system causes high retrieval latency, which makes interactive Q&A feel sluggish. In memory inference scenarios, retrieval results have weak relevance because the system provides only vectorized memory. In addition, the flexibility of model algorithms and prompt configuration directly determines how fast the solution can iterate.

High system cost pressure: As the user base grows, the system lacks elasticity and scaling capabilities in concurrency and storage size. Fees stack up across separately licensed systems, such as databases and memory engines. For rapidly growing memory libraries, the system lacks an effective mechanism to support memory lifecycle management.

To address these challenges, PolarDB for PostgreSQL has officially released a new AI application: a one-stop long-term memory management system. It integrates one-stop graph and vector memory libraries, open memory engines, and model operator capabilities. It provides comprehensive GUI-based parameter settings, prompt policy management, and model algorithm mixed-pool acceleration. This supports a complete closed loop of "memory read and write → context injection → model inference → result feedback". The first phase integrates the Mem0 (pronounced "mem-zero") memory engine and is compatible with the open source Mem0 community ecosystem. This helps agents retain user preferences, factual backgrounds, and historical interaction information across sessions and applications, enabling true personalization and a continual learning experience.
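
The closed loop of memory read and write, context injection, model inference, and result feedback can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the PolarDB or Mem0 API: `MemoryStore`, `fake_llm`, and `chat_turn` are hypothetical names, retrieval simply returns the most recent memories instead of running vector or graph search, and the model is an offline stub.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Toy per-user memory store (hypothetical stand-in for the memory library)."""
    facts: dict[str, list[str]] = field(default_factory=dict)

    def write(self, user_id: str, fact: str) -> None:
        self.facts.setdefault(user_id, []).append(fact)

    def read(self, user_id: str, limit: int = 5) -> list[str]:
        # Returns the most recent memories; a real system would rank by relevance.
        return self.facts.get(user_id, [])[-limit:]


def fake_llm(prompt: str) -> str:
    """Stub model so the loop can run without any model service."""
    return f"(model reply based on {len(prompt)} prompt characters)"


def chat_turn(store: MemoryStore, user_id: str, message: str, llm=fake_llm):
    memories = store.read(user_id)                    # 1. memory read
    context = "\n".join(f"- {m}" for m in memories)   # 2. context injection
    prompt = f"Known about the user:\n{context}\n\nUser: {message}"
    reply = llm(prompt)                               # 3. model inference
    # 4. result feedback: stored verbatim here; a real engine would first
    #    extract durable facts and preferences from the exchange.
    store.write(user_id, message)
    return prompt, reply


store = MemoryStore()
chat_turn(store, "u1", "I am a vegetarian and do not eat dairy.")
prompt, reply = chat_turn(store, "u1", "What should I cook for dinner?")
print(prompt)
```

The second turn's prompt carries the dietary preference from the first turn, which is the behavior the amnesiac-assistant scenario lacks.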

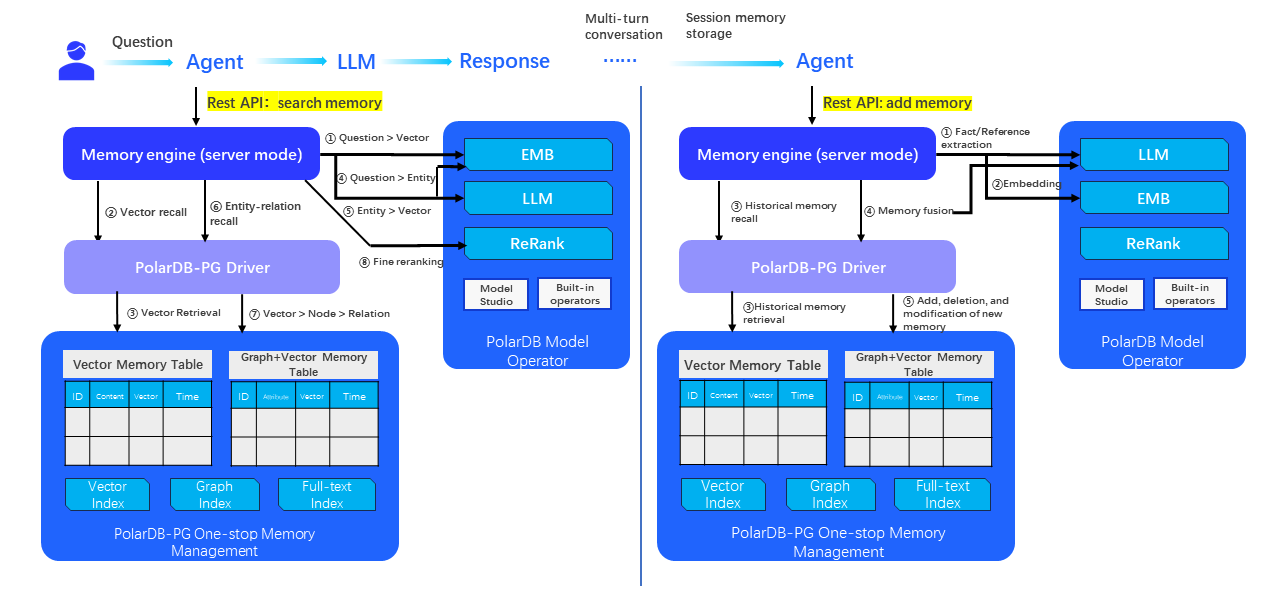

PolarDB for PostgreSQL one-stop memory management system architecture

Currently, PolarDB for PostgreSQL supports the Mem0 framework and is fully compatible with the open source Mem0 community ecosystem. It supports both Mem0 (the basic vector edition) and Mem0g (the graph-enhanced edition), and it implements a series of enhancements to the open source Mem0 system: access to Chinese and English models, multi-graph management based on user ID, vector partition management based on user ID, synchronous and asynchronous memory writes, an sslmode connection parameter to support SSL connections, custom optimization of prompt templates, and feature alignment with the Mem0 enterprise edition. In the future, PolarDB for PostgreSQL will work with MemOS to build a dedicated "memory operating system" for AI, with fine-grained management and dynamic scheduling across the entire memory lifecycle.
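
To illustrate the sslmode connection parameter, the sketch below builds a libpq-style keyword/value connection string for a PostgreSQL-compatible endpoint. The host, database, and user values are placeholders, and `build_dsn` is a hypothetical helper that is not part of Mem0 or PolarDB. The `sslmode` keyword and its six allowed values are standard libpq connection options.

```python
# Values accepted by libpq for the sslmode connection parameter.
VALID_SSLMODES = {"disable", "allow", "prefer", "require", "verify-ca", "verify-full"}


def build_dsn(host: str, port: int, dbname: str, user: str,
              sslmode: str = "require") -> str:
    """Build a libpq keyword/value connection string (hypothetical helper).

    The password is deliberately left out; libpq can read it from the
    PGPASSWORD environment variable or a .pgpass file. Values containing
    spaces would need quoting, which this sketch does not handle.
    """
    if sslmode not in VALID_SSLMODES:
        raise ValueError(f"unsupported sslmode: {sslmode!r}")
    parts = {"host": host, "port": port, "dbname": dbname,
             "user": user, "sslmode": sslmode}
    return " ".join(f"{key}={value}" for key, value in parts.items())


# Placeholder endpoint, for illustration only.
dsn = build_dsn("pg.example.internal", 5432, "memdb", "mem_user")
print(dsn)
```

With `require`, the connection is encrypted but the server certificate is not verified; `verify-ca` and `verify-full` add certificate checks.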

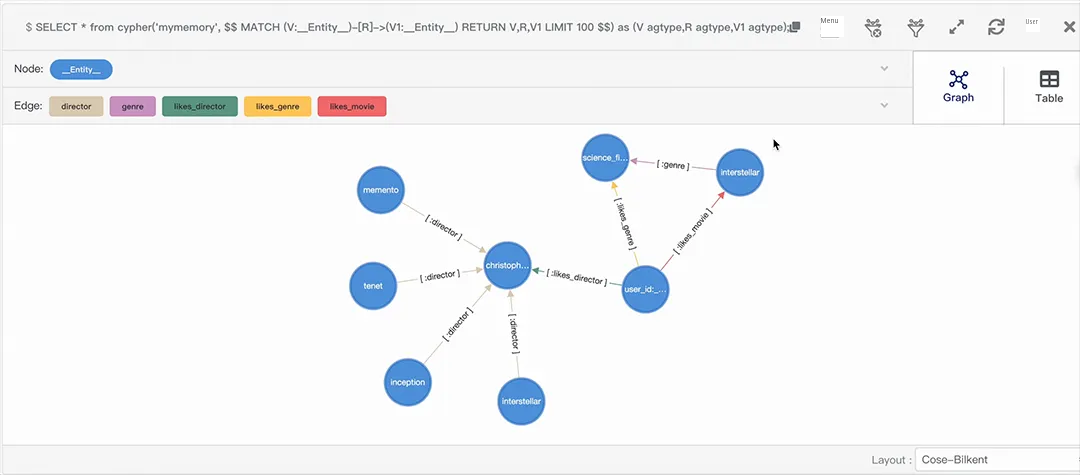

The memory database combines the PolarDB for PostgreSQL vector database engine and graph database engine. The vector engine uses an optimized build of the pgvector extension, which is widely used in the PostgreSQL community and has excellent AI ecosystem support. The graph engine is compatible with the open source Apache AGE (A Graph Extension), a top-level project of the Apache Software Foundation. We integrated AGE with PolarDB for PostgreSQL and its cloud-native capabilities, and refined it through years of application improvements and performance optimization for many graph customers. It is mature and stable, and it performs well in graph scenarios with tens of billions of data points, sustaining more than 10,000 queries per second (QPS) at a query latency under 100 ms. The memory database is available in cloud-native centralized and distributed versions, so you do not need to worry about scalability risks.
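
For orientation, the sketch below shows what queries against the two engines typically look like in the open source pgvector and Apache AGE extensions, held as SQL strings in Python. The table name `memories`, the graph name `user_graph`, the column names, and the 3-dimensional vector are assumptions for illustration, and the PolarDB builds of these extensions may differ in detail. Nothing here connects to a database.

```python
def to_vector_literal(values) -> str:
    """Format numbers in pgvector's text form, for example [0.1,0.2,0.3]."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


# pgvector: a table with a vector column (3 dimensions only to keep it short).
CREATE_TABLE_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS memories (
    id        bigserial PRIMARY KEY,
    user_id   text NOT NULL,
    content   text NOT NULL,
    embedding vector(3)
);
"""

# pgvector: nearest memories by cosine distance (the <=> operator).
# The %s placeholders follow the psycopg parameter style.
VECTOR_SEARCH_SQL = """
SELECT id, content
FROM memories
WHERE user_id = %s
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""

# Apache AGE: a Cypher query wrapped in the cypher() set-returning function.
GRAPH_QUERY_SQL = """
LOAD 'age';
SET search_path = ag_catalog, "$user", public;
SELECT * FROM cypher('user_graph', $$
    MATCH (u:User {id: 'u1'})-[r]->(e)
    RETURN type(r), e.name
$$) AS (relation agtype, entity agtype);
"""

# Parameters that would accompany VECTOR_SEARCH_SQL.
search_params = ("u1", to_vector_literal([0.1, 0.2, 0.3]), 5)
print(search_params)
```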

The system uses PolarDB model operators to provide model deployment, model inference, and scheduling capabilities. Models play a core role in memory management. The large language model (LLM) automatically extracts key facts and preferences with long-term value from conversations between users and agents, merges new and existing memories through additions, deletions, and modifications, and extracts entity triples for the graph. The embedding model converts key information into high-dimensional vectors for efficient semantic retrieval. The rerank model performs fine-grained reordering after memory retrieval. Because the efficiency of model invocation and inference is critical to user experience, this solution supports multiple model connection methods: (a) built-in database model operators and (b) Model Studio model services. Highly optimized links significantly improve memory-related inference efficiency.
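
The merge of new and existing memories through additions, deletions, and modifications can be pictured as applying a list of operations to the current memory set. The sketch below is illustrative only: in the real pipeline an LLM decides the operations, whereas here they are written by hand, and the `MemoryOp` type and `apply_ops` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MemoryOp:
    action: str                 # "ADD", "UPDATE", or "DELETE"
    memory_id: str
    text: Optional[str] = None  # new content for ADD and UPDATE


def apply_ops(memories: dict[str, str], ops: list[MemoryOp]) -> dict[str, str]:
    """Return a new memory set with the operations applied in order."""
    result = dict(memories)  # copy so the caller's dict is left untouched
    for op in ops:
        if op.action == "ADD":
            if op.memory_id in result:
                raise KeyError(f"memory {op.memory_id!r} already exists")
            result[op.memory_id] = op.text or ""
        elif op.action == "UPDATE":
            if op.memory_id not in result:
                raise KeyError(f"memory {op.memory_id!r} not found")
            result[op.memory_id] = op.text or ""
        elif op.action == "DELETE":
            result.pop(op.memory_id, None)
        else:
            raise ValueError(f"unknown action: {op.action!r}")
    return result


existing = {"m1": "Likes roast chicken", "m2": "Lives in Hangzhou"}
ops = [
    MemoryOp("DELETE", "m1"),                                 # contradicted by new fact
    MemoryOp("ADD", "m3", "Is vegetarian and avoids dairy"),  # new fact
    MemoryOp("UPDATE", "m2", "Lives in Shanghai"),            # changed fact
]
merged = apply_ops(existing, ops)
print(merged)
```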

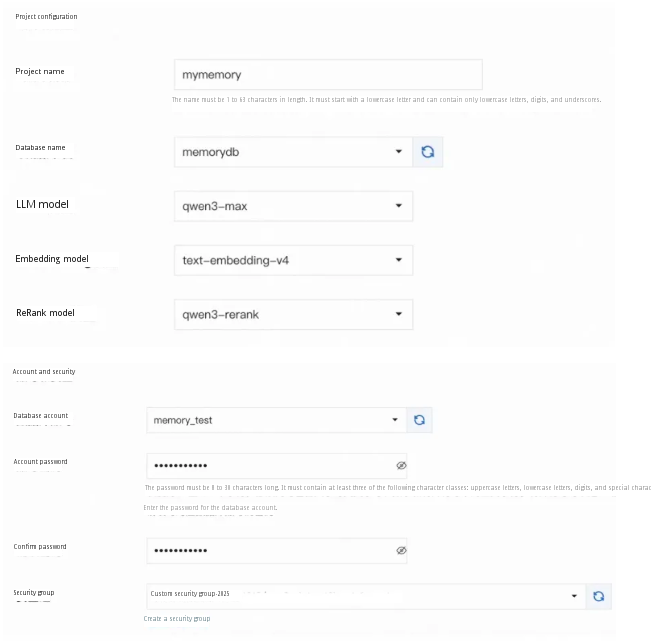

PolarDB for PostgreSQL memory management is an AI application in the PolarDB system. It provides a comprehensive graphical management interface:

Support for flexible model algorithms and database configurations

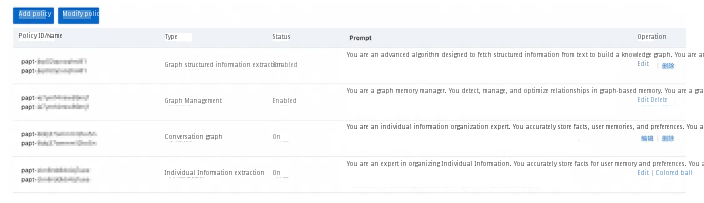

Support for various memory extraction policy configurations

Support for memory graph query visualization in the console

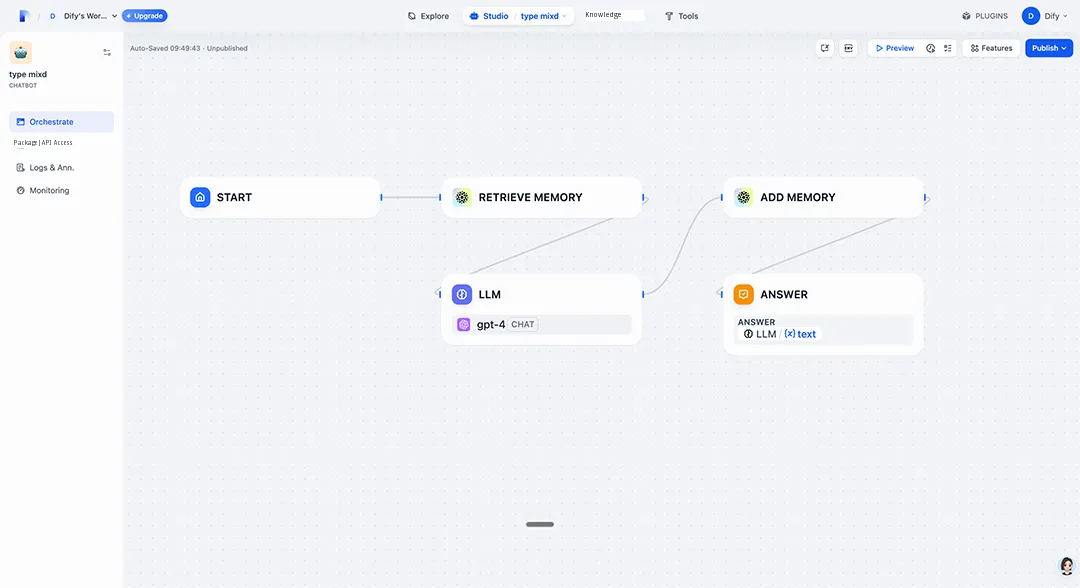

It supports the existing Mem0 ecosystem, including frameworks and platforms such as LangChain, LangGraph, AgentOps, and LlamaIndex. The PolarDB memory engine can be added as a plugin to the Dify framework to customize workflows. It also supports integration with Alibaba Cloud AgentRun, a one-stop enterprise-level AI agent infrastructure platform, and with the open source AgentScope agent development framework.

PolarDB memory management supports Dify plugin applications.

It works out of the box. It integrates the memory engine, memory database, model operator service, and KVCache acceleration capabilities, which eliminates the joint debugging and maintenance costs of multiple systems.

It supports memory management across multiple projects. Memory projects are configured through a fully graphical interface, with options such as the memory engine, model service, and memory extraction policy (prompt). It provides simple REST APIs and client SDKs that automatically handle memory fact extraction, memory merging, and memory search.

It supports a pure vector memory database mode and an advanced mode that combines graphs and vectors. It uses the relational inference of graph structures to increase the memory recall rate by 40%. It provides a one-stop solution for graph databases, vector databases, and relational databases, which significantly reduces total cost of ownership (TCO).

It supports fine-grained configuration of model operators such as LLM, Embedding, and Rerank. It uses a flexible model service routing policy that prioritizes Model Studio model services. During high concurrency, it automatically scales out backup channels to ensure high availability. Built-in model operators are deployed in VPCs, which reduces model inference latency by over 30%.
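
A routing policy that prefers one service and falls back to backup channels can be sketched as follows. This is a simplification under stated assumptions: it fails over on errors rather than scaling out under load, the channel names and the `call_with_fallback` helper are hypothetical, and the "services" are plain Python callables rather than real model endpoints.

```python
from typing import Callable, Sequence

# A channel is a (name, callable) pair; the callable maps a prompt to a reply.
Channel = tuple[str, Callable[[str], str]]


def call_with_fallback(channels: Sequence[Channel], prompt: str) -> tuple[str, str]:
    """Try channels in priority order and return (channel_name, reply)."""
    errors = []
    for name, invoke in channels:
        try:
            return name, invoke(prompt)
        except Exception as exc:  # any failure moves on to the next channel
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all model channels failed: " + "; ".join(errors))


def primary_service(prompt: str) -> str:
    # Simulates an overloaded primary service.
    raise TimeoutError("overloaded")


def backup_operator(prompt: str) -> str:
    # Simulates a healthy backup channel.
    return "ok:" + prompt


used, answer = call_with_fallback(
    [("primary", primary_service), ("backup", backup_operator)], "hi"
)
print(used, answer)
```

Keeping the priority order in data rather than in control flow makes it easy to reorder or add channels per model operator.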

It supports dividing memory into independent spaces by dimensions such as project and line of business. This ensures resource isolation, data security, and scalability. It supports automatic subgraph management based on user IDs. The memory size is unlimited. With the same memory size, retrieval efficiency increases by over 50%.
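
One way to picture per-user isolation is to derive a dedicated graph name and a vector partition index from each user ID. The source does not describe the actual naming or partitioning scheme, so both functions below, the `mem_graph` prefix, and the choice of 16 hash partitions are assumptions for illustration.

```python
import hashlib
import re


def graph_name_for(user_id: str, prefix: str = "mem_graph") -> str:
    """Map a user ID to an identifier-safe graph name (hypothetical scheme).

    Characters outside [a-z0-9_] become underscores, so distinct IDs such
    as "a-b" and "a_b" would collide; a real scheme must handle that.
    """
    safe = re.sub(r"[^a-z0-9_]", "_", user_id.lower())
    return f"{prefix}_{safe}"


def partition_for(user_id: str, num_partitions: int = 16) -> int:
    """Pick a stable hash partition for a user's vectors."""
    # sha256 is stable across processes, unlike Python's built-in hash().
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


print(graph_name_for("Alice-42"), partition_for("Alice-42"))
```

Because each user's data lands in one subgraph and one partition, a lookup for that user touches only a fraction of the total memory.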

It incorporates best practices from customers with tens of billions of vector and graph data points. It meets the high standards of online services, with more than 10,000 QPS and latency under 50 ms. It combines cross-session long-term memory with in-session KVCache token acceleration, which reduces request latency by 88.3% (context length of 200,000, 30 concurrent requests).

PolarDB memory management supports two long-term memory solutions: the pure vector memory database solution and the combined vector and graph database solution. They are suitable for the following scenarios:

Common scenarios (pure vector solution): Conversation scenarios that require fast semantic search, such as live support and real-time chatbots.

Technical features:

• Use large language models (LLMs) to extract key facts from conversations and store them as vectors.

• Use dynamic thresholds to control the retrieval scope. This balances the recall rate and accuracy.
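
One possible reading of a dynamic threshold is a cutoff that adapts to the best score in each result set instead of a fixed global value. The source does not specify the rule, so the policy below (keep hits that score at least `ratio` times the top score, with an absolute `floor`) and its default values are assumptions.

```python
def filter_by_dynamic_threshold(hits, ratio=0.8, floor=0.3):
    """Keep (text, score) hits above a cutoff derived from the top score.

    A higher ratio narrows the result set (favoring precision); a lower
    ratio widens it (favoring recall). The floor drops everything when
    even the best hit is weak.
    """
    if not hits:
        return []
    top_score = max(score for _, score in hits)
    cutoff = max(floor, ratio * top_score)
    kept = [(text, score) for text, score in hits if score >= cutoff]
    return sorted(kept, key=lambda hit: hit[1], reverse=True)


hits = [("likes tofu", 0.75), ("is vegetarian", 0.9),
        ("owns a bike", 0.5), ("works remotely", 0.2)]
print(filter_by_dynamic_threshold(hits))
```

With a top score of 0.9 the cutoff is about 0.72, so only the two strongest hits survive; a query whose best hit scores 0.25 returns nothing because of the floor.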

Common scenarios (combined vector and graph solution): Complex relationship inference scenarios, such as medical diagnosis (tracking patient medical history and drug interactions) and travel planning (integrating relationships among flights, hotels, and attractions), as well as long-term knowledge management scenarios.

Technical features:

• Vector databases handle semantic search. Graph databases store associations among entities.

• Support dynamic knowledge graph updates based on time awareness or causal inference.

• This improves on the Mem0g solution. It uses a two-stage pipeline to implement structured memory.
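
The division of labor between the two stores can be sketched as a retrieval in two steps: semantic search picks seed memories, then the graph is expanded one hop from the entities those memories mention. Everything here is a toy assumption and not the Mem0g pipeline itself: the 2-dimensional embeddings, the `MEMORIES` and `TRIPLES` data, and the `hybrid_search` function are hypothetical.

```python
import math


def cosine(a, b) -> float:
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Vector side: (text, toy embedding, entities mentioned in the text).
MEMORIES = [
    ("Patient takes warfarin daily", [1.0, 0.0], ["warfarin"]),
    ("Patient enjoys hiking", [0.0, 1.0], ["hiking"]),
]

# Graph side: (subject, relation, object) triples.
TRIPLES = [
    ("warfarin", "interacts_with", "aspirin"),
    ("hiking", "requires", "boots"),
]


def hybrid_search(query_vec, top_k=1):
    """Return (top memory texts, triples touching their entities)."""
    ranked = sorted(MEMORIES, key=lambda m: cosine(query_vec, m[1]),
                    reverse=True)[:top_k]
    seeds = {entity for _, _, entities in ranked for entity in entities}
    related = [t for t in TRIPLES if t[0] in seeds or t[2] in seeds]
    return [text for text, _, _ in ranked], related


texts, related = hybrid_search([0.9, 0.1])
print(texts, related)
```

The vector step alone would return only the warfarin memory; the graph step adds the interaction with aspirin, which is the kind of relationship a pure vector store cannot surface.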

The two solutions complement each other. The combined vector and graph solution handles complex relationships but puts pressure on retrieval efficiency. The pure vector solution is more efficient in simple scenarios but cannot model deep relationships. During deployment, design a hybrid architecture based on business complexity and real-time requirements.

Currently, PolarDB memory management is used in developer assistants for new energy vehicle companies and in education companions. It is fully adapted to scenarios such as text memory and multimodal memory, which significantly improves the immersion of personalized interactions. Beyond these scenarios, PolarDB memory management delivers customer value in several key domains, including enterprise knowledge bases, game virtual humans, travel planning, and e-commerce shopping guides. It serves as key infrastructure for evolving AI applications from chatbots into intelligent companions. The deep integration of PolarDB with Mem0 and MemOS lets every developer easily build a memory system that remembers, understands, scales, and responds quickly.
