By Yuchen

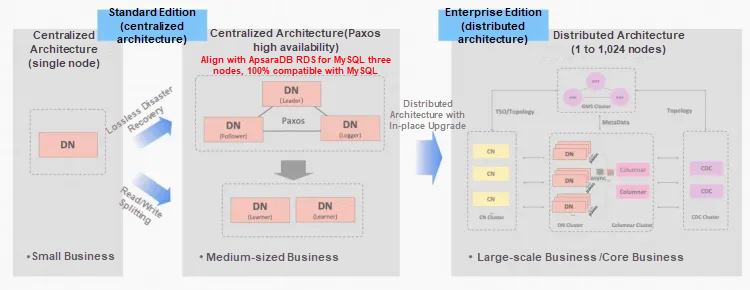

Alibaba Cloud PolarDB for Xscale (PolarDB-X) uses a shared-nothing architecture. It competes by being compatible with the open source MySQL ecosystem. It supports both centralized and distributed deployment modes. It features financial-grade high availability, reliability, and distributed scalability. As a database fully compatible with the MySQL ecosystem, the Data Node (DN) optimizes the InnoDB storage structure. This greatly improves data access efficiency. This topic outlines the technical details of executor optimization in the DN engine.

Before analyzing specific executor optimization policies, review the basic data positioning mechanism in the InnoDB storage engine. This is the foundation of all performance optimizations.

In the InnoDB storage engine, user-defined tables rely entirely on B+ tree indexes. There are two types of B+ tree indexes:

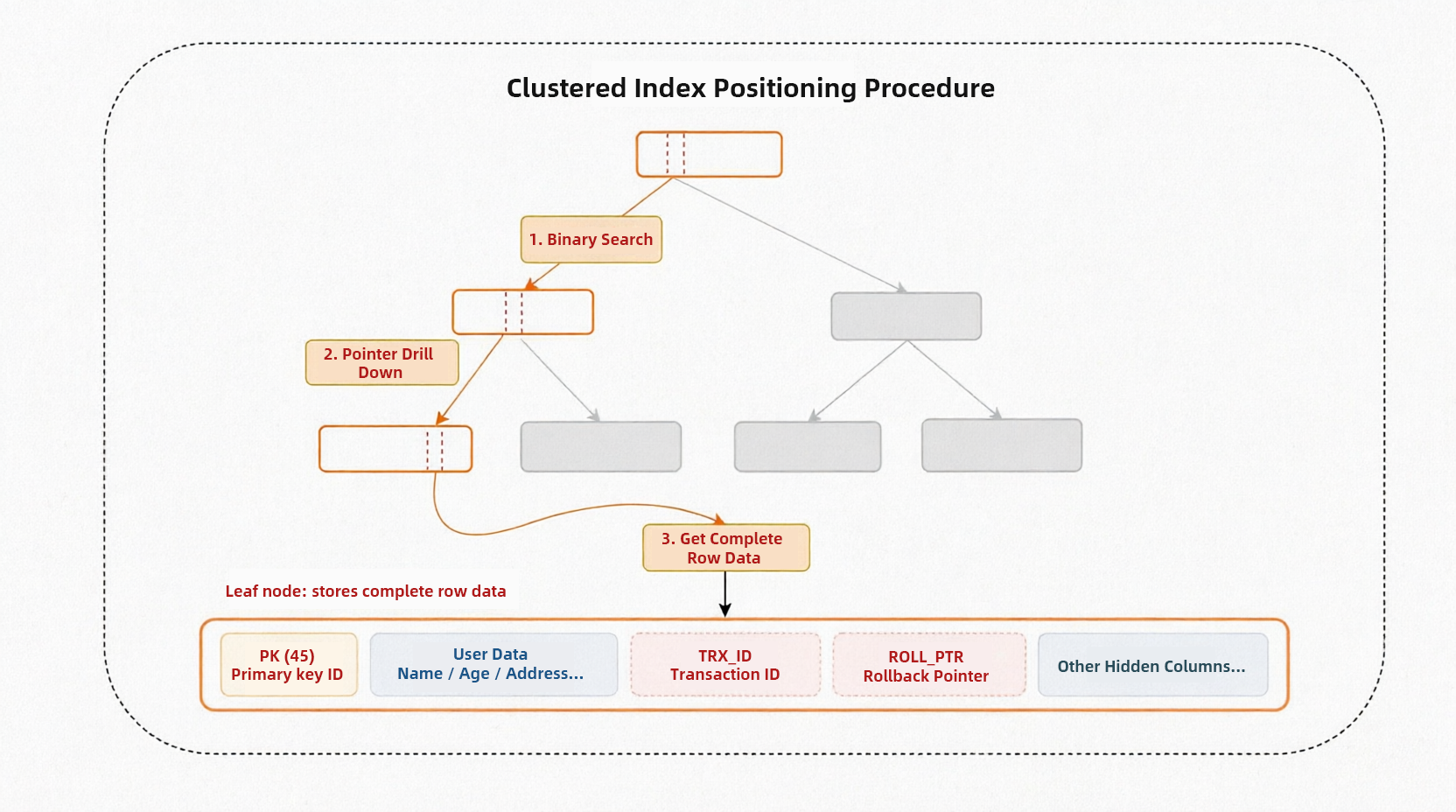

• Clustered index: This corresponds to the primary key B+ tree. Leaf nodes store complete row records.

• Secondary index: This corresponds to the B+ tree of normal columns. Leaf nodes store only the secondary index key values and primary key values.

These two indexes play different roles in data retrieval. They determine different query paths and performance features.

To understand performance differences, examine the behavior of these indexes in query paths. Start with the clustered index positioning flow. This path is the shortest and most efficient.

Clustered index retrieval is a top-down process:

This process requires only one B+ tree traversal to get the full data row. Therefore, clustered index query efficiency is high.

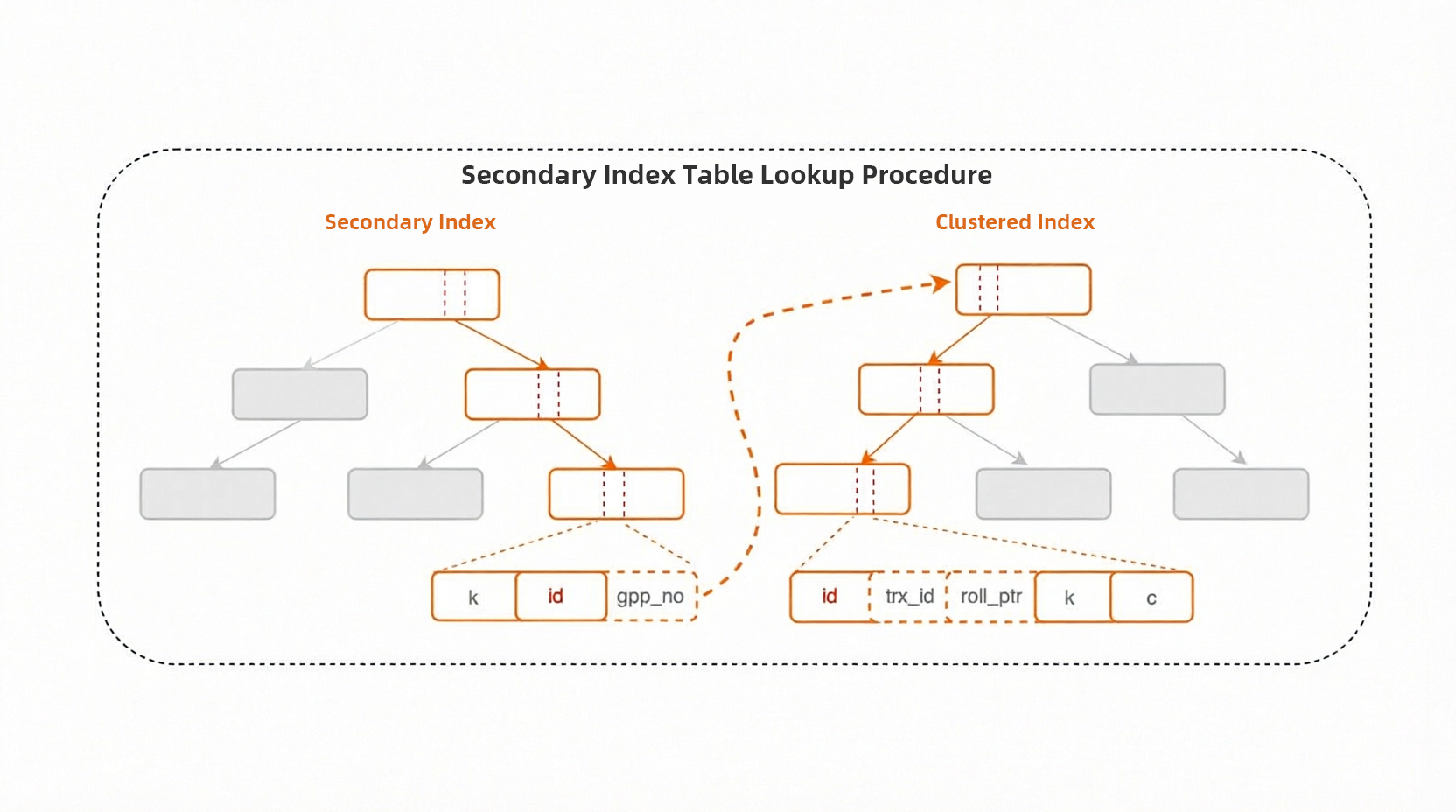

However, querying with a secondary index is more complex. Because leaf nodes of secondary indexes do not contain complete data rows and store only index key values and primary key values, using a secondary index usually requires a table lookup.

Take the query statement select * from t1 where k > 1 as an example. The complete query process is as follows:

This is a typical scenario of "secondary index table lookup". Because the secondary index stores incomplete information, the system must first scan the secondary index B+ tree to locate records. Then, it scans the clustered index B+ tree using the primary key value to obtain complete data.

Compared to the single traversal of a clustered index, a secondary index query requires two B+ tree traversals. This increases query overhead. The performance impact is significant when many records require table lookups.

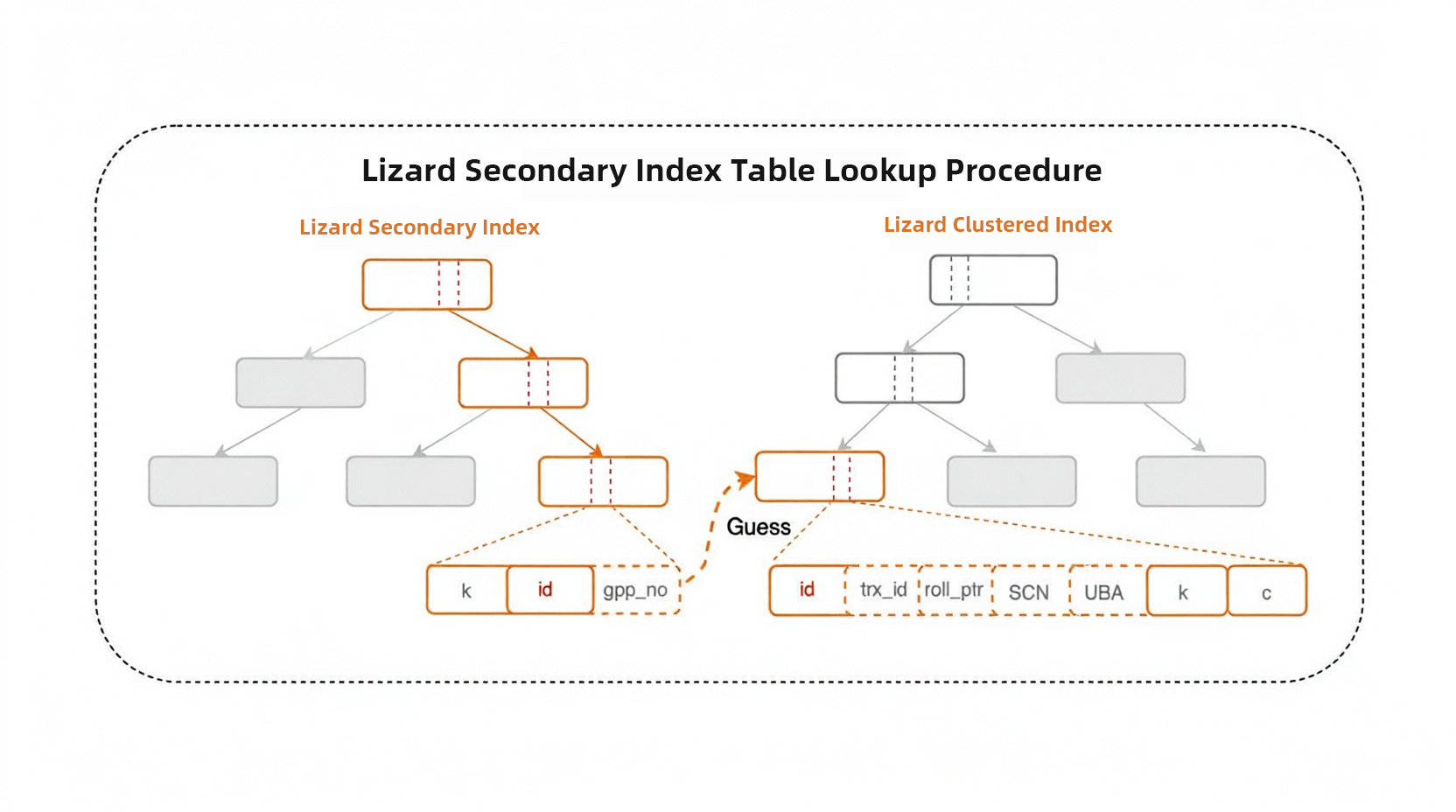

To address these issues, the Lizard transaction system introduces system columns. It maintains the page number (page_no) of the corresponding clustered index record within the secondary index record. This implements the Guess Primary PageNo (GPP) scheme and significantly reduces the overhead of secondary index table lookups.

The process is as follows:

Using the GPP scheme, secondary index table lookups are optimized from "secondary index B+ tree traversal + clustered index B+ tree traversal" to "secondary index B+ tree traversal + direct page access". This optimization reduces the complexity of table lookup operations from O(log N) based on tree height to O(1) for direct access. It significantly reduces disk I/O counts and CPU overhead. Performance improves notably in batch table lookup scenarios. This effectively resolves the performance bottlenecks of traditional secondary index table lookups.

The GPP scheme of the Lizard transaction system enables direct access to secondary index physical pages. However, the upper-layer SQL executor must use this physical location information efficiently. This requires optimizing the interaction between the SQL layer and the InnoDB storage tier. The following section compares "logical addressing" and "physical addressing." It explains how the Lizard execution engine eliminates redundant overhead during interactions.

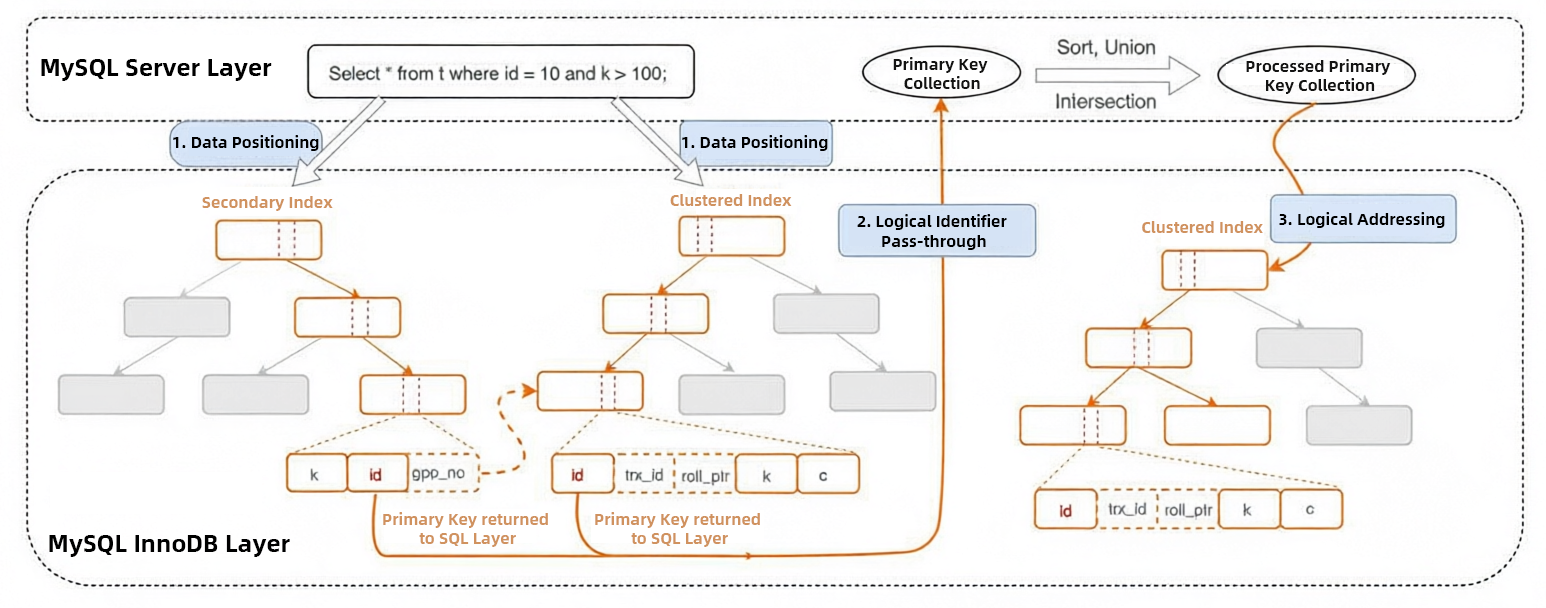

In the traditional MySQL executor architecture, the SQL layer and the InnoDB layer interact using "logical identifiers." InnoDB implements these identifiers as follows:

• Clustered index primary key: If a table has a primary key, the system uses the primary key as the logical identifier.

• Implicit RowID: If a table lacks an explicit primary key, the system uses an internal 6-byte globally incrementing ID generated by InnoDB as the implicit primary key.

Typical index query interaction logic works as follows:

index_read to query the index. InnoDB navigates the index B+ tree to locate the leaf node. It then extracts the logical identifier (Primary key / RowID) for the record.

The figure shows that traditional logical addressing is unaware of the physical distribution of data. Therefore, two complete B+ tree scans are required.

MySQL was designed with a pluggable storage engine architecture. The SQL layer and the storage engine interact using the abstract handler API. In this architecture, the executor is unaware of underlying physical storage details. It uses logical identifiers for addressing. This decouples the SQL layer from the storage engine layer and provides flexibility. A single database instance can use different storage engines as needed. Different storage engines can also evolve and optimize independently without requiring changes to the SQL layer code.

However, this decoupling comes with performance costs:

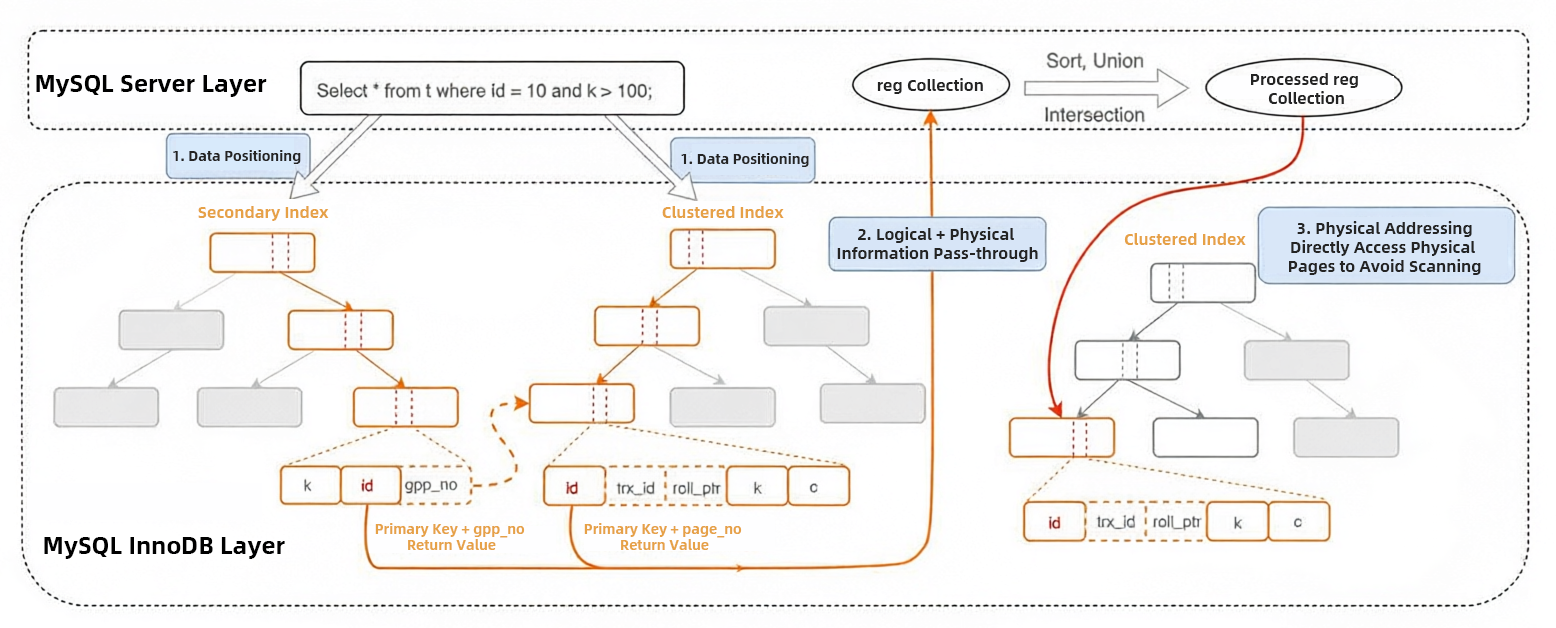

To address performance bottlenecks caused by "logical addressing" in the traditional mode, the PolarDB-X storage engine team delved into the kernel. They fully refactored and optimized everything from underlying interaction mechanisms to physical storage access paths:

1. Data positioning: In the data positioning interaction logic, InnoDB still needs to perform a complete B+ tree traversal. It selects a secondary index or a clustered index for the query based on conditions. However, when locating data at the leaf node, we pass through more than just the logical identifier to the SQL layer. We pass logical information (Primary Key / RowID) plus physical information (page_no).

• For clustered indexes, we return the Primary Key / RowID of the record plus the page_no of the physical page where the record is located.

• For secondary indexes, we use our existing GPP (Guess Primary Pageno) capability. We return the page_no of the physical page containing the clustered index record that corresponds to the secondary index record.

2. Logical + physical information pass-through: To let the SQL layer perceive the physical engine, we defined a reg struct. This struct encapsulates the logical and physical information passed through from the InnoDB layer. During SQL layer processing, we still use logical information for comparison. Physical information is not involved in comparison.

3. Physical addressing: After processing the SQL layer logic, the reg struct carries logical and physical information back to the InnoDB layer. InnoDB uses the physical information for physical addressing to access the physical page directly. This avoids the second complete B+ tree scan process.

Throughout the optimization process, we did not change the original interaction logic between the MySQL SQL layer and the InnoDB layer. Instead, we passed through physical information during the interaction. This lets the SQL layer perceive the underlying physical distribution. Carrying this physical information during the second interaction reduces one B+ tree scan. This significantly improves query performance.

Based on the discussion above, we selected index merge and multi range read as typical scenarios for testing. This verifies the optimization effect of physical addressing:

• In a single query, index merge uses multiple independent indexes on one table. It then merges the results from each index.

• Multi range read targets scenarios where secondary index queries require table lookups. It converts the random I/O of secondary indexes into an ordered arrangement of primary keys. This transforms random I/O into ordered I/O.

| Query Type | Metric | Base | Index Merge GPP | Improvement |

|---|---|---|---|---|

| Intersection Merge | QPS | 413968.34 | 481810.13 | 16.38% |

| 95% Latency | 1.91 | 1.73 | / | |

| Union Merge | QPS | 147093.55 | 218326.52 | 48.43% |

| 95% Latency | 5.09 | 3.68 | / | |

| Sort-Union Merge | QPS | 1799.21 | 3520.24 | 95.64% |

| 95% Latency | 390.30 | 227.40 | / | |

| MRR | QPS | 183932.70 | 263934.09 | 43.49% |

| 95% Latency | 4.49 | 3.02 | / |

Testing results show that Lizard's optimization on the executor provides physical addressing as a new interaction model. This yields significant performance improvements.

Reveal How PolarDB Materialized Views Boost Query Performance by 100x

When MySQL Meets the Columnar Storage Engine DuckDB in the AI Era

ApsaraDB - May 16, 2025

ApsaraDB - December 2, 2025

ApsaraDB - November 26, 2025

ApsaraDB - November 7, 2024

ApsaraDB - March 19, 2025

ApsaraDB - October 17, 2024

Database for FinTech Solution

Database for FinTech Solution

Leverage cloud-native database solutions dedicated for FinTech.

Learn More Database Migration Solution

Database Migration Solution

Migrating to fully managed cloud databases brings a host of benefits including scalability, reliability, and cost efficiency.

Learn More Oracle Database Migration Solution

Oracle Database Migration Solution

Migrate your legacy Oracle databases to Alibaba Cloud to save on long-term costs and take advantage of improved scalability, reliability, robust security, high performance, and cloud-native features.

Learn More Database Gateway

Database Gateway

A tool product specially designed for remote access to private network databases

Learn MoreMore Posts by ApsaraDB