By Qiquan (Chen Shiyang)

In OLAP or hybrid transactional and analytical processing (HTAP) scenarios, periodically executed complex queries (such as multi-table JOINs, aggregations, and subqueries) often become system bottlenecks. These types of queries usually have characteristics of high computing overhead, low concurrency frequency, and reusable results. If the queries are calculated in real time every time, resources are wasted, and the stability of online transactions may be affected.

To solve this problem, materialized views (MVs) are widely adopted as a classic pre-computation optimization method. The cloud-native database PolarDB for MySQL officially supports materialized views in the latest version. Combined with the PolarDB architecture, it performs deep kernel optimizations to provide an innovative solution that balances performance, cost, and real-time capabilities for enterprise-level HTAP scenarios. While ensuring transaction processing capabilities, it boosts complex query performance to a new level.

This topic systematically introduces the capabilities and applicable boundaries of PolarDB materialized views from the aspects of implementation principles, key optimizations, and test results.

Based on the refresh algorithm, materialized views can be classified into three types: full refresh, incremental refresh, and real-time refresh. Different refresh methods represent three trade-offs between "cost and timeliness":

• Full refresh: Each refresh recalculates the entire view result and overwrites the old data. It features the strongest versatility (applicable to almost all complex queries), but has high computing and I/O overhead and relatively higher refresh latency. It is suitable for scenarios where data changes are infrequent or timeliness requirements are not high. For example: refreshing the sales ranking by category for the previous day at 00:00 every day.

• Incremental refresh: Each refresh calculates only the changes since the last time. It saves more resources and is faster, but it depends on change records (such as logs and CDC) and has a complex implementation with constraints on query types. It is suitable for scenarios where base tables are updated frequently and the data volume is large. For example: The order table is appended by day, and the materialized view processes only the new data of the day.

• Real-time refresh: A refresh is triggered immediately when a base table transaction is committed, ensuring strong consistency with the base table. Essentially, it is "performing an incremental refresh for every commit." It offers the best consistency but requires extra costs on the write path. When updates are frequent, it may affect online services, and it limits the number of materialized views on the same base table. It is suitable for scenarios involving consistency-sensitive metrics and second-level synchronization.

In the current version of PolarDB, we already support materialized views with automatic full refresh, which have the following advantages:

100% compatibility: Based on the native kernel implementation, materialized views can be created for any query supported by standard views. Except for slight differences in creation, deletion, and refresh syntax, the user experience is consistent with standard views.

Efficient refresh: During a refresh, "query computing" can be offloaded to a column store node (IMCI) for execution. By leveraging the vectorized execution capability of the column store, this not only reduces the pressure on the primary node but also accelerates refresh efficiency.

Row-column hybrid storage: An In-Memory Column Index is automatically generated after a refresh. When the materialized view is queried, the optimizer selects a better engine for execution based on the cost.

Nesting and cascade refresh: Supports defining nested materialized views to chain complex extract, transform, and load (ETL) pipelines. For example, you can first aggregate Order Details, and then perform secondary statistics based on the intermediate materialized view.

In subsequent versions, we will gradually support capabilities such as incremental refresh, rich query rewrite, and external table data sources to further cover real-time and Near Real-Time scenarios.

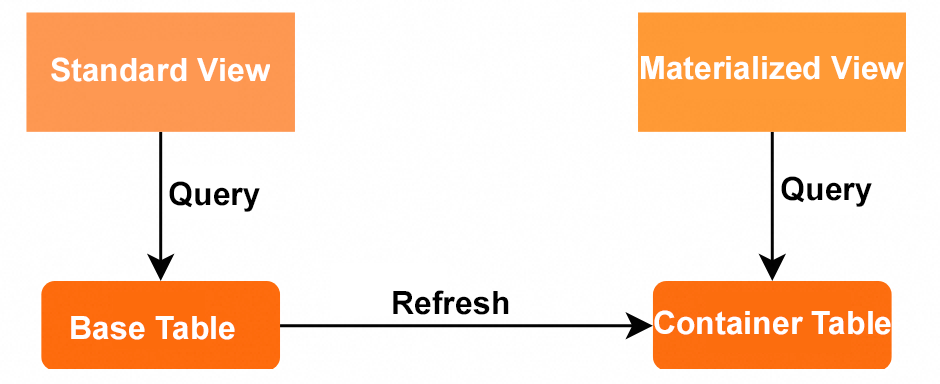

Materialized views achieve acceleration through "pre-computation and result persistence." They are stored on the disk in the form of a hidden physical table. You can understand it as: a "definition table" (view definition) + a "container table" (a hidden table that actually stores data). The query execution path of a materialized view directly references the underlying storage structure (the container table) of the materialized view to read the calculation result, instead of scanning and calculating the base table in real time.

As shown in the preceding figure, a container table is attached after a materialized view is created. Each refresh retrieves data from the base table, performs pre-computation, and writes the new result to the container table. External queries directly access the container table, bypassing the expensive on-the-fly calculation path.

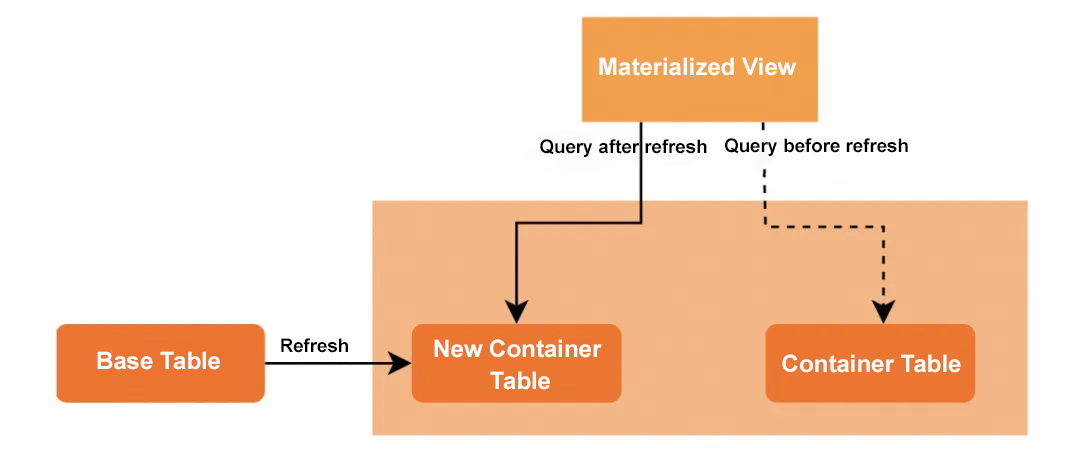

To ensure "freshness", the materialized view needs to be refreshed regularly. A full refresh does not directly delete the old container and rewrite it. Instead, it adopts a "shadow table switching" method: First, create a brand-new container table, write the new results into it, and after the writing is completed, atomically switch the old container table with the new container. The benefits are:

• It avoids "delete then write" operations in a single large transaction, reduces lock holding time, and alleviates system pressure.

• It does not block sessions that are querying the materialized view. New data can be read immediately at the moment of the switch.

We support two methods: auto-refresh and manual refresh. You can specify the refresh policy and refresh time when you create the materialized view. At the same time, the system table allows you to observe the task status of the refresh, making it "searchable, controllable, and traceable."

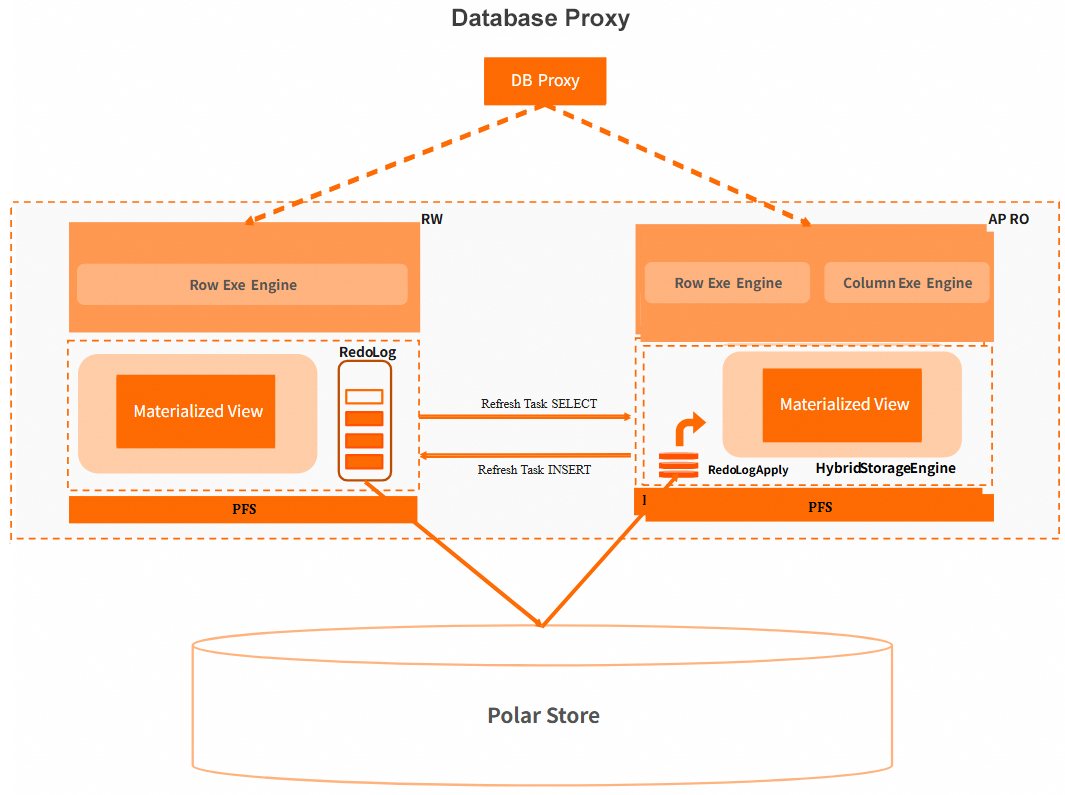

When the view definition is complex (multi-table JOIN, nested subquery, re-aggregation), the refresh bottleneck is almost always in the pre-computation. PolarDB supports offloading "query computation" to the column store node for execution. The overall architecture is as follows:

If an IMCI is created on the base table, the pre-computation job is offloaded to the column store node for execution during the refresh. The result is sent to the primary node and written into the container table. This can reduce the resource consumption of the refresh on the primary node and improve refresh efficiency. Therefore, we recommend that you create IMCIs on the base tables of the materialized view to utilize this optimization. For example, for queries with large JOIN and aggregation such as TPCH Q9, offloading to IMCI can significantly shorten the pre-computation time while not affecting the stationarity of online writing.

In some scenarios, the materialized view result set itself is large (such as the detail cache after dimensionality reduction). At this time, even if the query part is offloaded to IMCI, the bottleneck may still be in the "batch writing to the container table". We support parallelizing the insertion stage: divide the results by shard or stream, and perform multi-threaded parallel writing. The refresh duration decreases approximately linearly with the degree of parallelism. This is suitable for materialized jobs with "medium complexity and large result sets."

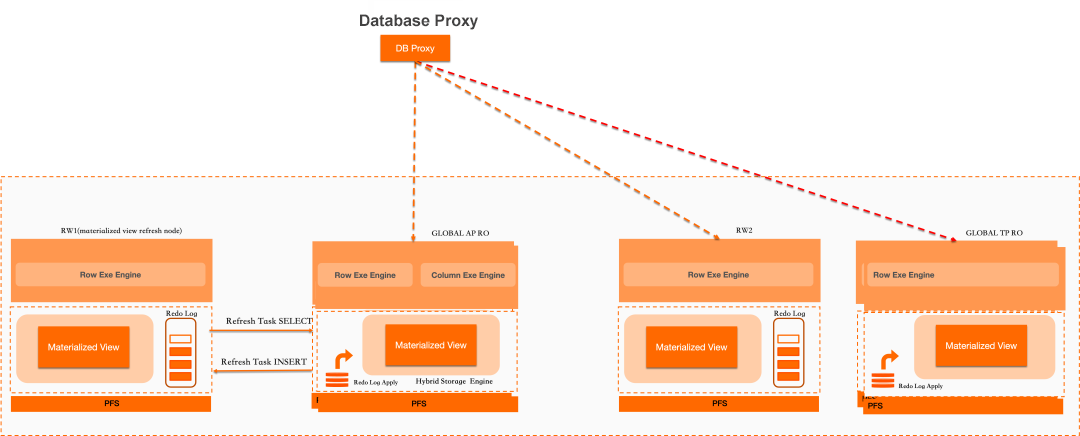

With the help of optimization methods such as compute offloading and parallel writing, the materialized view can be refreshed efficiently. However, the refresh occupies certain resources, which may have a certain Impact on latency-sensitive online services. To solve this problem, we support specifying a sub-cluster to refresh the materialized view in a multi-master cluster to achieve physical resource isolation and avoid affecting online services.

As shown in the figure above, in a multi-master cluster, you can dedicate a RW sub-cluster (such as RW1) to materialized view refresh. Business read and write operations are routed through the agent to another RW (such as RW2) and read-only nodes to achieve resource isolation at the physical layer. After the refresh is completed, the latest results are written to the shared storage and are visible to all nodes. For latency-sensitive businesses, this is a very practical "disturbance reduction" method.

To verify the performance of the materialized view, we used the TPCH-100G test dataset to test the query acceleration effect of using the IMCI to "query the materialized view" compared to "directly querying the base table", as well as the materialized view refresh duration.

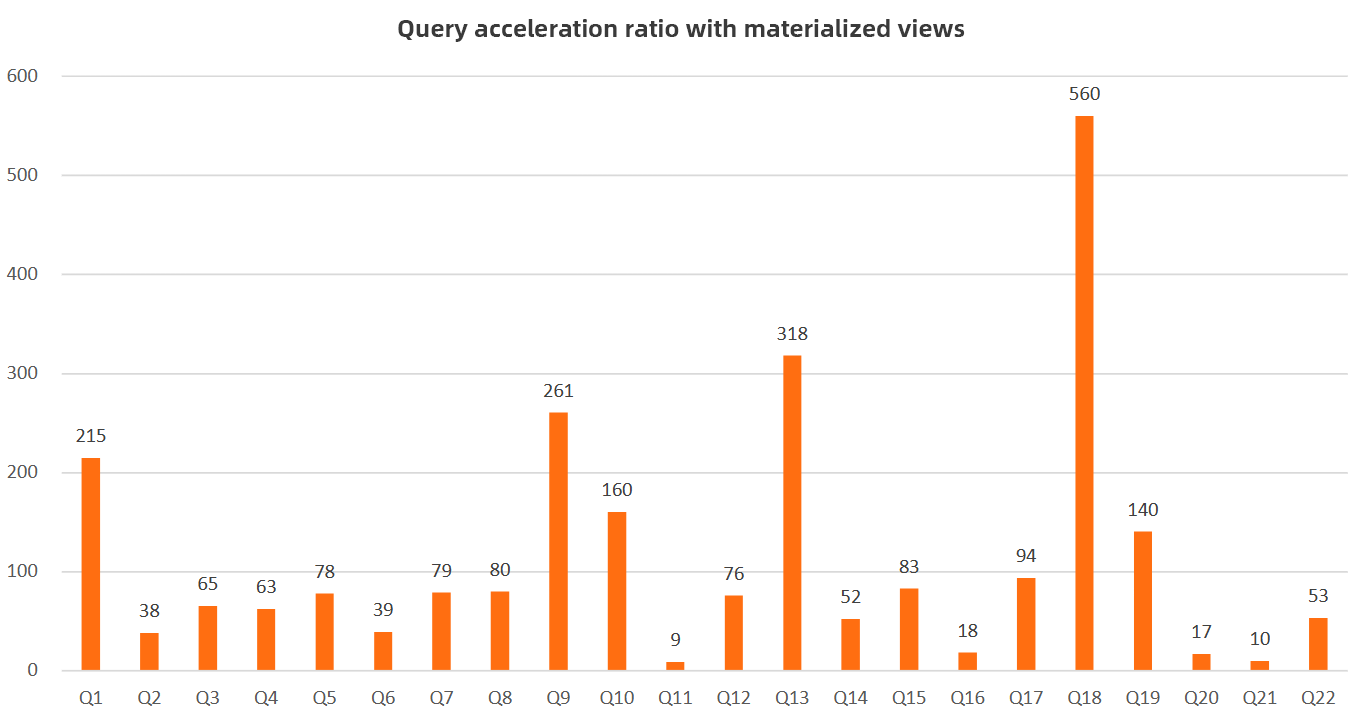

The query acceleration ratio of using the materialized view is shown in the following figure:

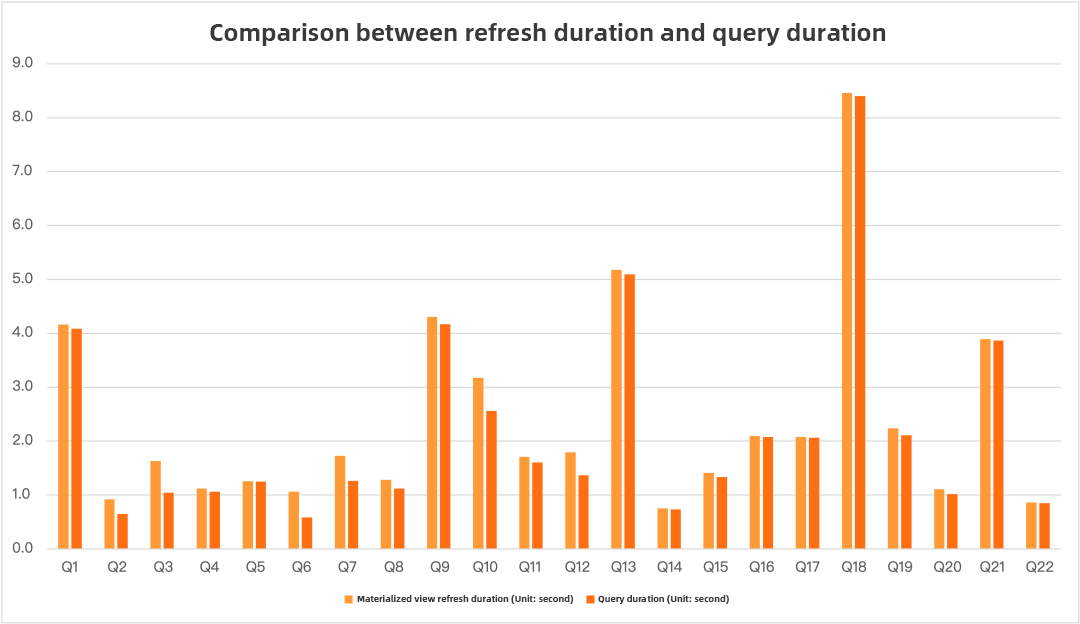

The comparison chart of refresh duration and query duration is as follows:

From the above results, we can see that:

• For most complex queries (such as Q1, Q3, Q9, Q13, and Q18), querying the materialized view can achieve orders of magnitude in performance improvement. For example, the Q18 query dropped from 8.4 seconds to 0.015 seconds.

• For SQL where "the query itself is not complex but the result set is large", the acceleration of the materialized view is relatively limited. This type of scenario is more like "moving data", and the advantage of pre-computation is not obvious.

• The refresh duration is close to or slightly higher than the direct query duration for most queries, which meets the expectation of "turning queries into background computation". In other words, it is "extremely fast in the foreground, and pay-as-planned in the background".

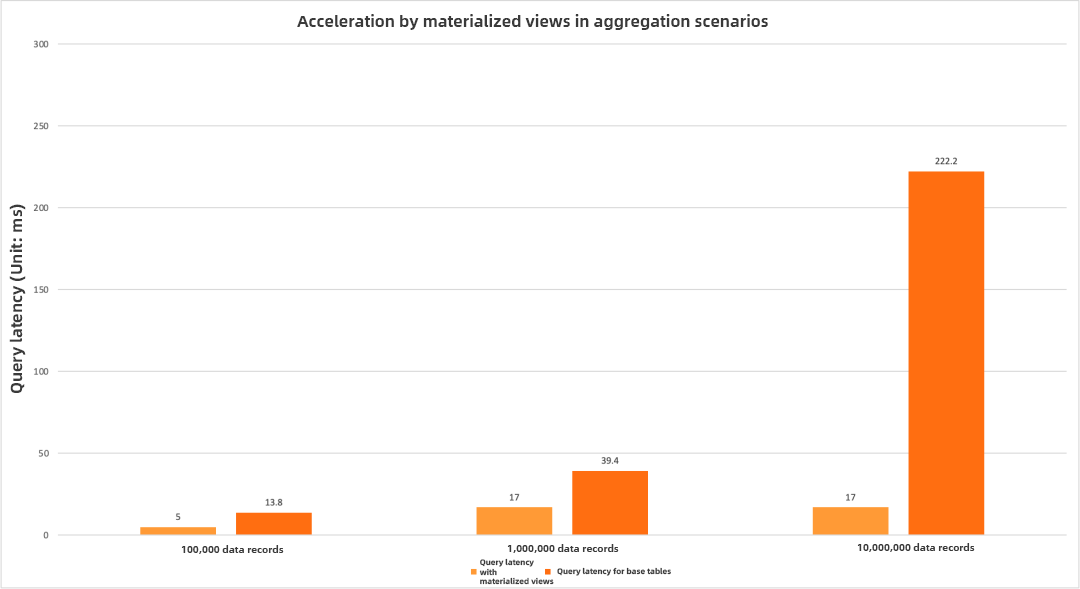

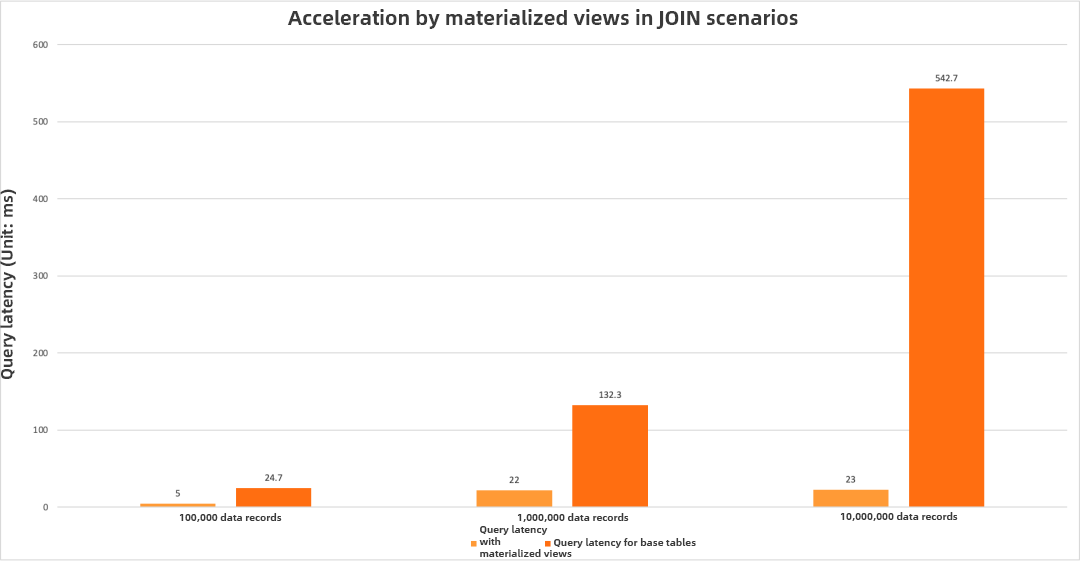

In analysis scenarios, grouping and aggregation and complex association are the most common operations. Therefore, we selected TPCH Q1 and TPCH Q9 to test the query acceleration effects of the materialized view under different data volumes for these two typical scenarios.

Performance comparison in aggregation scenarios:

Performance comparison in JOIN scenarios:

From the above results, we can see that for the two typical scenarios of aggregation and complex JOIN, the performance advantage brought by materialized view pre-computation becomes more obvious as the data volume increases. This further verifies the improvement effect of the "exchanging space for time" optimization method on system performance in massive data scenarios, and fully reflects the query optimization potential of the materialized view in dealing with association analysis of high-cardinality datasets.

Based on the above testing results, we have the following selection suggestions:

• For analysis queries that can accept a certain delay, have high complexity, and have small result sets, prioritize the materialized view.

• For scenarios with large result sets, we recommend that you evaluate them in combination with parallel writing and resource isolation.

• For scenarios that are sensitive to both strong consistency and low latency, you can wait for the incremental/real-time refresh capabilities to be published before making a selection.

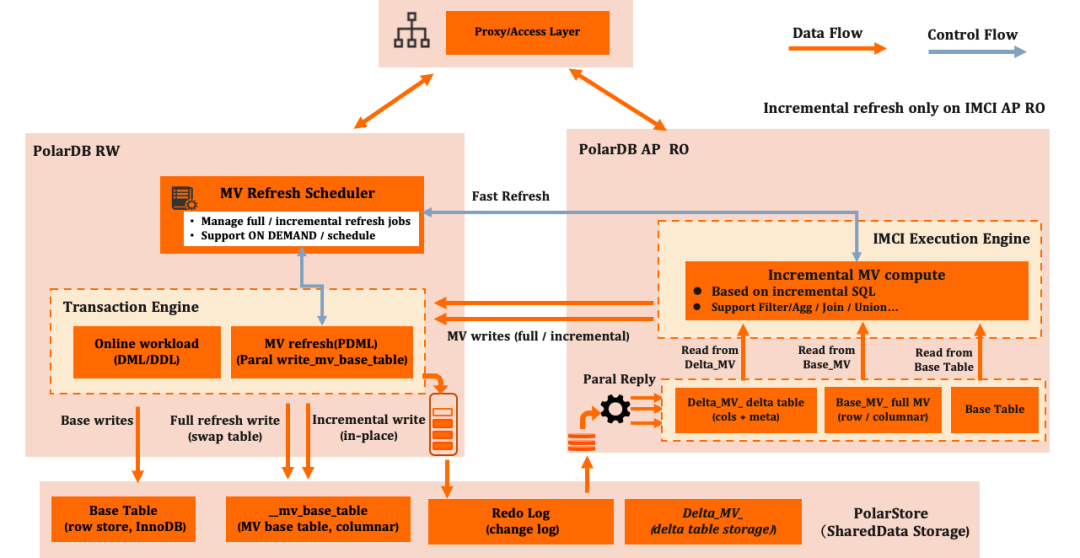

Full refresh materialized views have been officially released in PolarDB for MySQL. Incremental refresh materialized views are currently in the invitational testing phase. This section briefly introduces the architecture and advantages of incremental refresh materialized views.

Incremental refresh materialized views inherit the compute offloading design concept of full refresh materialized views. Their core mechanism is based on change capture from Redo Logs to achieve efficient data synchronization and computation. Specifically, during the Redo Log replay process, data change events in transaction processing are captured in real-time on column store nodes, and these change records are persisted to the incremental table for transaction-consistent maintenance. When incremental refresh is performed, a differential dataset is generated by combining the incremental table and base table data using an incremental algorithm, and this dataset is merged into the materialized view container table through atomic writes, ensuring data freshness while significantly reducing repetitive computation overhead.

In addition to inheriting advantages such as compute offloading, parallel writing, and scalability from full refresh, incremental refresh materialized views provide the following core advantages:

1. Implicit incremental table management and transparent O&M

The columnar incremental log table is automatically created and maintained through an intelligent inference algorithm, without the need to manually define explicit log tables (such as the MLOG mechanism in Oracle). This mechanism supports Metadata Synchronization updates when the base table schema is dynamically extended, realizing the automated management of the full lifecycle of the log table, and significantly reducing O&M complexity.

2. Low-intrusive synchronization based on Redo Logs

Redo Log streaming parsing technology is adopted to achieve near real-time capture of changed data. Transactional consistency of data synchronization is ensured through the WAL mechanism. Compared with traditional trigger solutions, this solution avoids additional overhead on business tables, controls synchronization latency at the millisecond level, and significantly reduces resource consumption.

3. Intelligent data reclamation and storage optimization

A global transaction offset trail mechanism is introduced to perform batch cleanup of expired data through background asynchronous processes. This procedure calculates the Data Retention border based on the safe watermark algorithm to achieve efficient reclamation of storage space. The entire procedure is completely decoupled from online businesses, avoiding lock competition and resource contention.

Materialized views are not a silver bullet, but for periodic complex queries, they are the most cost-effective acceleration solution. Empowered by the PolarDB row-column hybrid storage architecture, you can enjoy the ecosystem compatibility of MySQL and obtain AP-level analysis performance. In subsequent versions, we will continue to improve capabilities such as incremental refresh, query rewrite, service monitoring, and disparate data source support. These capabilities will further extend the application border of materialized views in hybrid transactional and analytical processing (HTAP) and real-time data warehousing scenarios. You are welcome to continue to follow subsequent version updates.

Alibaba Cloud Native Community - January 27, 2026

ApsaraDB - November 18, 2025

ApsaraDB - July 9, 2025

Alibaba Cloud Native Community - November 4, 2025

digoal - October 16, 2023

ApsaraDB - October 29, 2024

PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More Database for FinTech Solution

Database for FinTech Solution

Leverage cloud-native database solutions dedicated for FinTech.

Learn MoreMore Posts by ApsaraDB