By Tian Jingqi

In database query optimizers, join reordering is a critical optimization technique. For complex queries involving multiple joins, different join orders can result in execution performance that differs by several orders of magnitude. However, the number of possible join orders grows factorially with the number of tables, making the problem of finding the optimal join order NP-hard in the general case. The core objective of join reordering is to efficiently explore this enormous search space and select a near-optimal (or otherwise acceptable) join order, thereby significantly improving query performance.

In DuckDB, two optimizations are closely related to join reordering: JOIN_ORDER and BUILD_SIDE_PROBE_SIDE. Let's first explain the role of each. The JOIN_ORDER optimization is responsible for optimizing the join order of multiple tables. For example, given three tables A, B, and C, this optimization evaluates join orders such as (A ⋈ B) ⋈ C, (A ⋈ C) ⋈ B, and (B ⋈ C) ⋈ A and selects the most efficient one. You may notice that not all theoretically possible join orders are enumerated. This is because, in this optimization, the cost model only considers the cardinalities of the participating tables and the cardinality of the resulting join outputs. As a result, the estimated cost of A ⋈ B is identical to that of B ⋈ A. In practice, however, for hash joins, it is generally more efficient to build the hash table on the smaller table and use the larger table as the probe side. This is precisely the responsibility of the BUILD_SIDE_PROBE_SIDE optimization. It determines which input should be used as the build side and which as the probe side by comparing the estimated hash table sizes, and swaps the two sides if necessary. For example, if the JOIN_ORDER optimization determines that (A ⋈ B) ⋈ C is the optimal join order, the BUILD_SIDE_PROBE_SIDE optimization will then attempt to swap A and B, as well as (A ⋈ B) and C.

Let's first look at the performance of DuckDB's join reordering algorithm through a concrete example. Suppose we have the following table schema:

CREATE TABLE t1 (

id int,

col1 int,

col2 int,

col3 int,

col4 int,

col5 int,

col6 int

);

CREATE TABLE t2 AS FROM t1;

CREATE TABLE t3 AS FROM t1;

CREATE TABLE t4 AS FROM t1;

CREATE TABLE t5 AS FROM t1;

CREATE TABLE t6 AS FROM t1;Now consider the following SQL query. How should its join order be enumerated?

SELECT t1.id

FROM t1, t2, t3, t4, t5, t6

WHERE t1.col1 = t2.col1

AND t2.col2 = t3.col2

AND t4.col4 = t5.col4

AND t5.col5 = t6.col5

AND t1.id + t2.id + t3.id = t4.id + t5.id + t6.id;In MySQL, since the optimizer only enumerates left-deep join trees, the resulting execution plan (assuming that all tables are empty) is as follows:

-> Filter: (((t1.id + t2.id) + t3.id) = ((t4.id + t5.id) + t6.id)) (cost=2.1 rows=1)

-> Inner hash join (t6.col5 = t5.col5) (cost=2.1 rows=1)

-> Table scan on t6 (cost=0.35 rows=1)

-> Hash

-> Inner hash join (t5.col4 = t4.col4) (cost=1.75 rows=1)

-> Table scan on t5 (cost=0.35 rows=1)

-> Hash

-> Inner hash join (no condition) (cost=1.4 rows=1)

-> Table scan on t4 (cost=0.35 rows=1)

-> Hash

-> Inner hash join (t3.col2 = t2.col2) (cost=1.05 rows=1)

-> Table scan on t3 (cost=0.35 rows=1)

-> Hash

-> Inner hash join (t2.col1 = t1.col1) (cost=0.7 rows=1)

-> Table scan on t2 (cost=0.35 rows=1)

-> Hash

-> Table scan on t1 (cost=0.35 rows=1)In this execution plan, tables t1 through t6 are joined in sequence. When table t4 is joined, because there is no join condition, a Cartesian product is generated. The query engine then applies a filter at the end to retain only those rows that satisfy the condition t1.id + t2.id + t3.id = t4.id + t5.id + t6.id.

This join order is clearly not optimal. A more efficient approach is to first join tables t1 through t3, then join tables t4 through t6, and finally use the condition t1.id + t2.id + t3.id = t4.id + t5.id + t6.id to perform a hash join between the two intermediate result sets.

Let's now examine the execution plan generated by DuckDB:

┌─────────────────────────────┐

│┌───────────────────────────┐│

││ Physical Plan ││

│└───────────────────────────┘│

└─────────────────────────────┘

┌───────────────────────────┐

│ PROJECTION │

│ ──────────────────── │

│ #0 │

│ │

│ ~1 row │

└─────────────┬─────────────┘

┌─────────────┴─────────────┐

│ HASH_JOIN │

│ ──────────────────── │

│ Join Type: INNER │

│ │

│ Conditions: ├────────────────────────────────────────────────────────────────────────┐

│ ((id + id) + id) = ((id + │ │

│ id) + id) │ │

│ │ │

│ ~1 row │ │

└─────────────┬─────────────┘ │

┌─────────────┴─────────────┐ ┌─────────────┴─────────────┐

│ HASH_JOIN │ │ HASH_JOIN │

│ ──────────────────── │ │ ──────────────────── │

│ Join Type: INNER │ │ Join Type: INNER │

│ │ │ │

│ Conditions: ├───────────────────────────────────────────┐ │ Conditions: ├───────────────────────────────────────────┐

│ col1 = col1 │ │ │ col4 = col4 │ │

│ │ │ │ │ │

│ ~1 row │ │ │ ~1 row │ │

└─────────────┬─────────────┘ │ └─────────────┬─────────────┘ │

┌─────────────┴─────────────┐ ┌─────────────┴─────────────┐┌─────────────┴─────────────┐ ┌─────────────┴─────────────┐

│ HASH_JOIN │ │ SEQ_SCAN ││ HASH_JOIN │ │ SEQ_SCAN │

│ ──────────────────── │ │ ──────────────────── ││ ──────────────────── │ │ ──────────────────── │

│ Join Type: INNER │ │ Table: t1 ││ Join Type: INNER │ │ Table: t4 │

│ │ │ Type: Sequential Scan ││ │ │ Type: Sequential Scan │

│ Conditions: │ │ ││ Conditions: │ │ │

│ col2 = col2 ├──────────────┐ │ Projections: ││ col5 = col5 ├──────────────┐ │ Projections: │

│ │ │ │ col1 ││ │ │ │ col4 │

│ │ │ │ id ││ │ │ │ id │

│ │ │ │ ││ │ │ │ │

│ ~1 row │ │ │ ~0 rows ││ ~1 row │ │ │ ~0 rows │

└─────────────┬─────────────┘ │ └───────────────────────────┘└─────────────┬─────────────┘ │ └───────────────────────────┘

┌─────────────┴─────────────┐┌─────────────┴─────────────┐ ┌─────────────┴─────────────┐┌─────────────┴─────────────┐

│ SEQ_SCAN ││ SEQ_SCAN │ │ SEQ_SCAN ││ SEQ_SCAN │

│ ──────────────────── ││ ──────────────────── │ │ ──────────────────── ││ ──────────────────── │

│ Table: t2 ││ Table: t3 │ │ Table: t5 ││ Table: t6 │

│ Type: Sequential Scan ││ Type: Sequential Scan │ │ Type: Sequential Scan ││ Type: Sequential Scan │

│ ││ │ │ ││ │

│ Projections: ││ Projections: │ │ Projections: ││ Projections: │

│ col1 ││ col2 │ │ col4 ││ col5 │

│ col2 ││ id │ │ col5 ││ id │

│ id ││ │ │ id ││ │

│ ││ │ │ ││ │

│ ~0 rows ││ ~0 rows │ │ ~0 rows ││ ~0 rows │

└───────────────────────────┘└───────────────────────────┘ └───────────────────────────┘└───────────────────────────┘As we can see, the join order generated by DuckDB is consistent with our expectations.

This naturally raises the question: can MySQL produce such an execution plan? By default, the join reordering algorithm in MySQL can only enumerate left-deep join trees. However, the join order shown above is a bushy tree. Therefore, regardless of the data contained in the tables, MySQL cannot generate this execution plan for the query. However, MySQL provides a configuration option called hypergraph_optimizer. When enabled, MySQL can enumerate bushy join trees and produce an execution plan of this kind.

In fact, both DuckDB and the hypergraph optimizer in MySQL rely on the same join order enumeration algorithm: Dphyp. Dphyp is also the core algorithm used by DuckDB's JOIN_ORDER optimization. Since Dphyp is a complex algorithm, this article will first introduce it and then explain how the JOIN_ORDER and BUILD_SIDE_PROBE_SIDE optimizations are implemented by examining DuckDB's source code.

The Dphyp algorithm comes from the paper "Dynamic Programming Strikes Back." As the name suggests, it involves dynamic programming and hypergraphs. To understand Dphyp, it is first necessary to clarify how dynamic programming is applied to the join order problem.

The principle behind using dynamic programming for join order optimization is quite simple. When determining the join order for n tables, the problem can be transformed into joining one table with the result of joining the remaining n-1 tables. This naturally introduces subproblems, making the problem well-suited to a dynamic programming approach.

DPsize and DPsub algorithms

DPsize and DPsub are classic dynamic programming algorithms for solving the join order optimization problem. The only difference between them is the enumeration order. DPsize gradually increases the number of tables participating in the join, while DPsub uses a bitmap-based approach. For example, when joining four tables (t1, t2, t3, and t4), DPsize first enumerates join orders involving two tables, then three, and finally all four. In contrast, DPsub encodes the tables as a four-bit binary number. For example, the binary value 1010 represents a join between tables t4 and t2. Enumeration then proceeds in the order 0001, 0010, 0011, 0100, 0101, and so on.

However, when using these straightforward dynamic programming algorithms, many meaningless join orders may be generated. Consider the following SQL statement: SELECT * FROM t1 JOIN t2 ON t1.col1 = t2.col1 JOIN t3 ON t2.col2 = t3.col2;. Using the DPsize algorithm, the join orders are enumerated in the following sequence: ({t1}, {t2}), ({t2}, {t1}), ({t1},{t3}), ({t3}, {t1}), ({t2}, {t3}), ({t3},{t2}), ({t1}, {t2,t3}), ({t2}, {t1,t3}), ({t3}, {t1,t2}), ({t1,t2}, {t3}), ({t1,t3}, {t2}), ({t2,t3}, {t1}). In reality, join orders such as ({t1},{t3}), ({t3}, {t1}), ({t2}, {t1,t3}) do not need to be considered because there are no join predicates between t1 and t3. This occurs because the join order enumeration is performed without taking join relationships into account.

The Dphyp algorithm solves this problem by converting the join relationships in an SQL query into a hypergraph, allowing for more efficient enumeration of join orders. In this article, we explain the Dphyp algorithm and illustrate it using DuckDB's source code. First, let's revisit the SQL example and examine the basic definitions used in Dphyp.

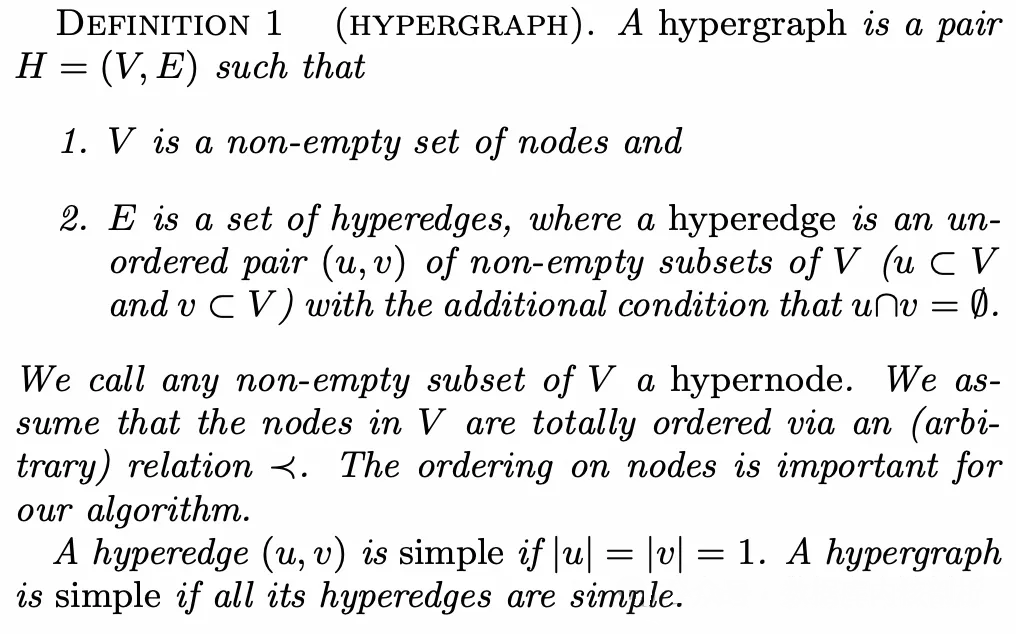

Definition of HYPERGRAPH

A hypergraph H = (V, E) consists of a set of nodes V and a set of hyperedges E. Each hyperedge connects two hypernodes, u and v, where both u and v are disjoint subsets of the node set V.

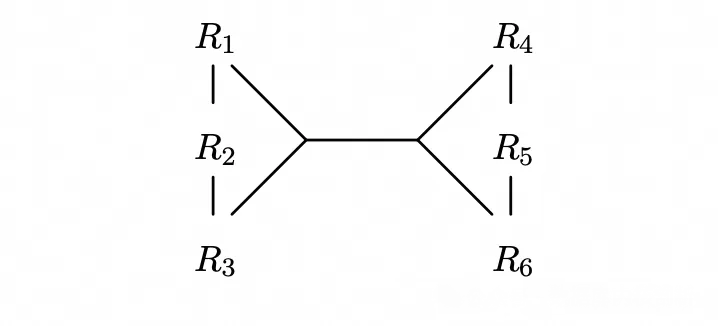

With this definition, for a given SQL query, each table corresponds to a node, and each join relationship corresponds to a hyperedge. Taking the SQL example above as an illustration, we obtain the corresponding hypergraph.

HyperGraph

In addition, an ordering must be imposed on the nodes. This ordering can be chosen arbitrarily, but it must be defined because it affects the order in which the algorithm enumerates join orders. In this SQL example, the tables can simply be ordered by their names.

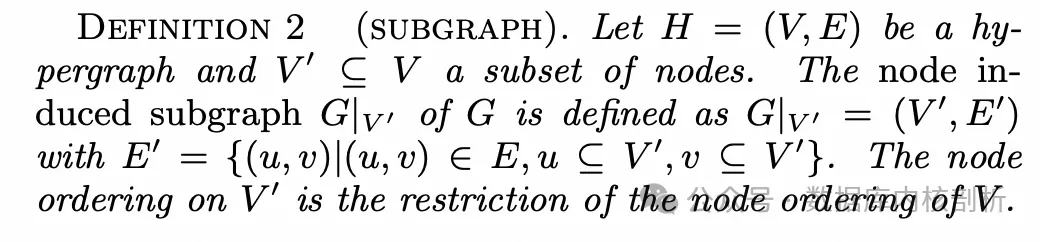

Definition of SUBGRAPH

Given a hypergraph H = (V, E), a subset V' ⊆ V induces a subgraph, denoted as G|V' = (V', E'), where E' ⊆ E, and every hyperedge in E' has both of its endpoints contained within V'.

Using the same SQL example, if V' = {t1, t2, t3, t4}, then the induced subgraph has the hyperedge set E' = {({t1},{t2}),({t2},{t3})}.

Definition of CONNECTED

If a hypergraph contains only a single node, then the hypergraph is connected.

If a hypergraph contains more than one node, it is considered connected if there is a partition of the node set into two subsets, V' and V'', and a hyperedge in E such that the two endpoints of the hyperedge belong to V' and V'' respectively, and the subgraphs  induced by V' and V'' are also connected.

induced by V' and V'' are also connected.

The hypergraph constructed from the SQL example above is connected. This is because it can be partitioned into V' = {t1, t2, t3} and V'' = {t4, t5, t6}. These two node sets are connected by the hyperedge ({t1, t2, t3}, {t4, t5, t6}), and the subgraphs induced by each subset are also connected.

It is important to note that not every SQL query corresponds to a connected hypergraph. However, for a disconnected hypergraph, one can always add a hyperedge representing the Cartesian product of its disconnected components to make the entire hypergraph connected, and this does not affect the result of the SQL query.

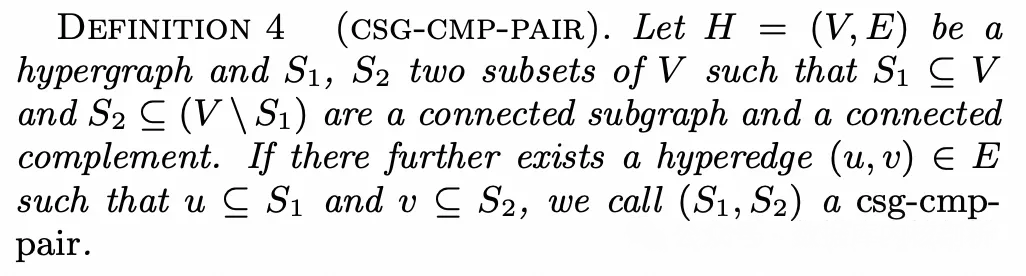

Definition of CSG-CMP-PAIR

Given a hypergraph H = (V, E), if a subset S1 of nodes V induces a connected subgraph, we call it a connected subgraph (strictly speaking, S1 is a set of nodes rather than a graph; the term is used here for convenience), abbreviated as csg. If another node set S2 is a subset of the complement of S1 in V, and the subgraph it induces is also connected, then S2 is called the connected complement of S1, abbreviated as cmp. If there exists a hyperedge that connects S1 and S2, then the pair (S1, S2) is called a connected subgraph-connected complement pair, abbreviated as a csg-cmp-pair.

Using the same SQL example, S1 = {t1, t2, t3} and S2 = {t4, t5, t6} form a csg-cmp-pair, whereas S1 = {t1, t2, t3} and S2 = {t4} do not.

Clearly, enumerating join orders using a dynamic programming approach is essentially equivalent to enumerating all csg-cmp-pairs. For example, identifying the csg-cmp-pair S1 = {t1, t2, t3} and S2 = {t4, t5, t6} corresponds to first joining tables t1, t2, and t3, then joining tables t4, t5, and t6, and finally joining the two intermediate result sets. To satisfy the requirements of dynamic programming, csg-cmp-pairs must be enumerated in a specific order, and the core of the Dphyp algorithm lies precisely in determining this enumeration order.

In a simple graph, the concept of a neighbor is straightforward. However, in a hypergraph, the presence of hyperedges changes the definition of a neighbor.

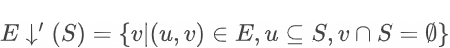

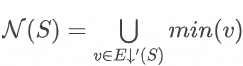

In a hypergraph, "neighborhood" is defined with respect to sets of nodes rather than individual nodes. Therefore, we need to consider, for example, the neighbors of a node set such as S1 = {t1, t2, t3} within the hypergraph. A natural definition is as follows: if there exists a hyperedge  where one hypernode is a subset of S1 and the other hypernode

where one hypernode is a subset of S1 and the other hypernode  is disjoint from S1, then the corresponding node set S2 can be considered a neighbor of S1. Formally, this can be expressed as:

is disjoint from S1, then the corresponding node set S2 can be considered a neighbor of S1. Formally, this can be expressed as:

However, for the purpose of enumerating csg-cmp-pairs, this definition can be optimized: Not all nodes in v need to be listed as neighbors; since they are all equivalent from the perspective of S₁, it is sufficient to select just one.

Therefore, we optimize the definition of a neighborhood:

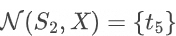

Here,  denotes the node with the smallest order in a hypernode. For example, for

denotes the node with the smallest order in a hypernode. For example, for  , we have

, we have  .

.

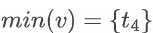

This definition can be further optimized. Suppose there are two hyperedges where the hypernodes  satisfy the ordering relationship

satisfy the ordering relationship  . In this case, it is sufficient to consider only the nodes in

. In this case, it is sufficient to consider only the nodes in  . For example, if there is also a hyperedge ({t1, t2, t3}, {t5, t6}) in the graph, then the neighbors of S1 = {t1, t2, t3} do not need to be defined as {t4, t5}; it is enough to define them as {t5}. In the paper, the set of hypernodes that has undergone this optimization is denoted as

. For example, if there is also a hyperedge ({t1, t2, t3}, {t5, t6}) in the graph, then the neighbors of S1 = {t1, t2, t3} do not need to be defined as {t4, t5}; it is enough to define them as {t5}. In the paper, the set of hypernodes that has undergone this optimization is denoted as  . With this optimization, the definition of a neighborhood becomes:

. With this optimization, the definition of a neighborhood becomes:

Finally, we add another parameter, X, to the neighborhood definition, requiring that the hypernode satisfies  . This refinement ensures the correct enumeration order and forms our final definition of a neighborhood.

. This refinement ensures the correct enumeration order and forms our final definition of a neighborhood.

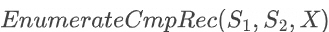

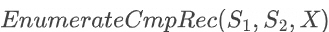

The Dphyp algorithm is composed of five functions: Solve, EnumerateCsgRec, EmitCsg, EnumerateCmpRec, and EmitCsgCmp. For ease of understanding, this article presents these five functions in an order that differs from the one in the original paper.

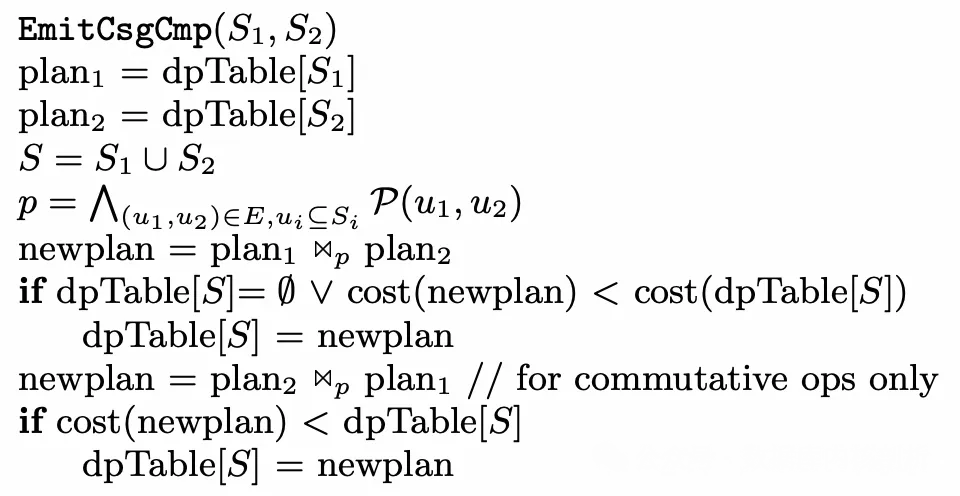

Algorithmic implementation of EmitCsgCmp

Once a csg-cmp-pair has been identified, this function is invoked to record the cost of the corresponding join order in the dpTable. Here, S1 and S2 denote the discovered csg-cmp-pair, and p is the join predicate used to connect S1 and S2. The function computes the cost of joining S1 with S2, and, if the join operator is commutative, also the cost of joining S2 with S1, and then updates dpTable[S] accordingly.

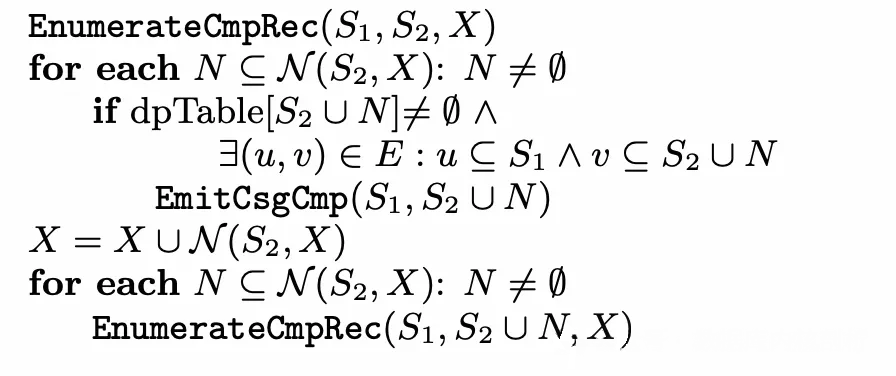

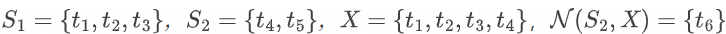

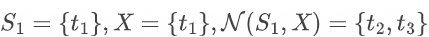

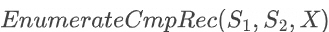

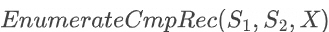

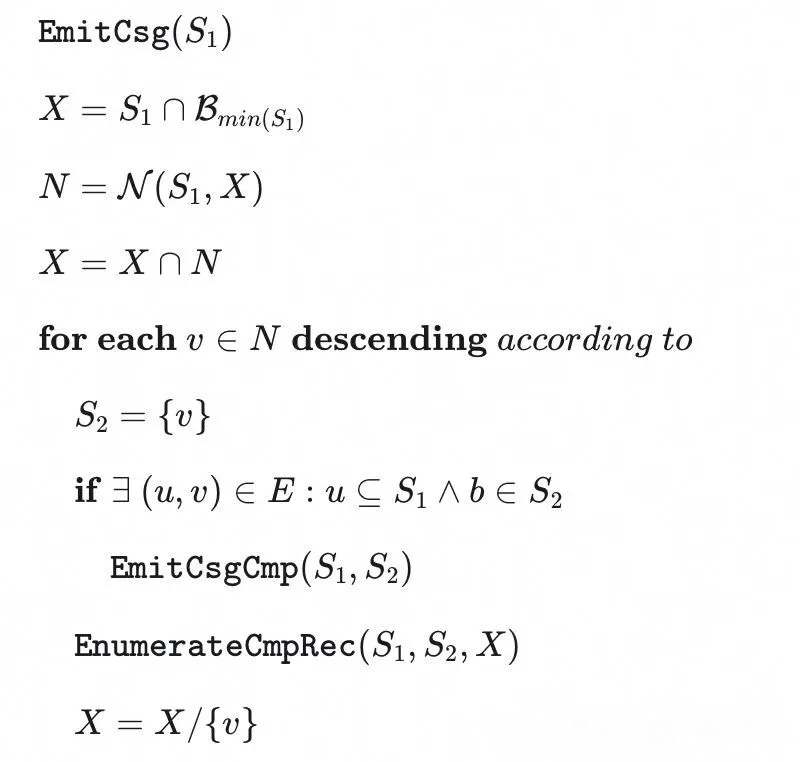

Algorithmic implementation of EnumerateCmpRec

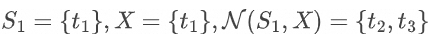

For two node sets S1 and S2, they may not initially form a csg-cmp-pair if no hyperedge directly connects them. In such cases, S2 can be expanded based on connectivity until a valid csg-cmp-pair is obtained. X denotes the exclusion set, which restricts the expansion of S2 such that newly added nodes must not belong to X. The exclusion set ensures that, during cmp enumeration, the same node is not selected repeatedly.

For example, when  ,

,  , no hyperedge exists between {t1, t2, t3} and {t4, t5}. The algorithm, therefore, recursively invokes EnumerateCmpRec. When

, no hyperedge exists between {t1, t2, t3} and {t4, t5}. The algorithm, therefore, recursively invokes EnumerateCmpRec. When  , a hyperedge is found connecting {t1, t2, t3} and {t4, t5, t6}, at which point they form a valid csg-cmp-pair. The algorithm can then invoke EmitCsgCmp to compute the join cost and update the dpTable.

, a hyperedge is found connecting {t1, t2, t3} and {t4, t5, t6}, at which point they form a valid csg-cmp-pair. The algorithm can then invoke EmitCsgCmp to compute the join cost and update the dpTable.

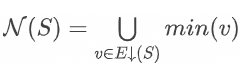

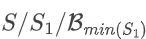

Algorithmic implementation of EmitCsg

For a given csg, we need to find its corresponding cmp. The enumeration of a csg starts by expanding from the neighbors of S1.

First, we compute the exclusion set X, which is defined as  , where

, where  indicates the set of all nodes whose order is less than or equal to a given node, for example,

indicates the set of all nodes whose order is less than or equal to a given node, for example,  . The exclusion set is introduced to enforce an ordered enumeration; the underlying rationale will be explained later when discussing the Solve function.

. The exclusion set is introduced to enforce an ordered enumeration; the underlying rationale will be explained later when discussing the Solve function.

We then iterate over the neighbors of S1 in descending order of the node ordering and initialize S2 with each such neighbor. If S1 and S2 can directly form a valid csg-cmp-pair, we invoke EmitCsgCmp to compute the join cost. Finally, we call EnumerateCmpRec to further expand S2 and explore additional possible csg-cmp-pairs.

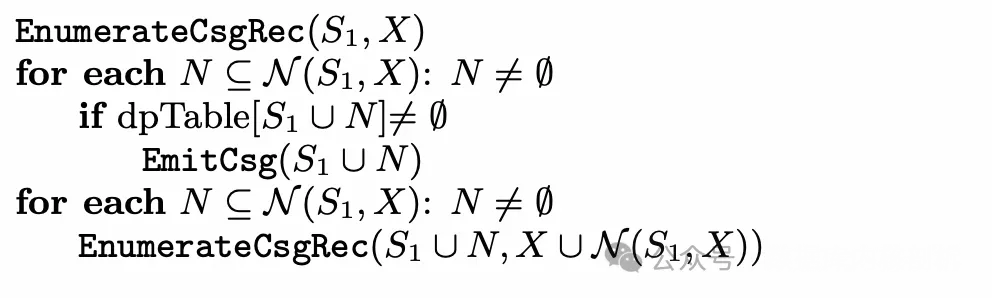

Algorithmic implementation of EnumerateCsgRec

For a given set of nodes, we attempt to expand it as much as possible to enumerate all valid csgs. Specifically, we first iterate over its neighbors and, by checking the dpTable, determine one-by-one if each neighbor can be combined with the current node set to form a csg. If a csg can be formed, we invoke EmitCsg to search for its corresponding cmp. After completing this step, we recursively invoke EnumerateCsgRec to continuously test whether the newly formed node sets can be further expanded through their neighbors to form larger csgs.

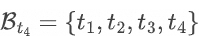

Algorithmic implementation of Solve

This function serves as the entry point for the entire algorithm. It first initializes the dpTable for individual nodes. Then, for each node in the node set, in descending order, it first calls EmitCsg to determine whether the node can form a csg-cmp-pair with its neighbors. Next, starting from this node, it expands the node set along its neighbors and checks whether the resulting subgraph is connected.

It is important to note that the nodes in V are enumerated in descending order. Therefore, when we reach  , we have already identified all csg and cmp pairs among

, we have already identified all csg and cmp pairs among  , but the connectivity involving

, but the connectivity involving  is still unknown. Consequently, in the EmitCsg function, we define

is still unknown. Consequently, in the EmitCsg function, we define  . This is because, while a cmp for S1 must be found within S/S1, the connectivity of nodes in

. This is because, while a cmp for S1 must be found within S/S1, the connectivity of nodes in  has not yet been established, so the search is limited to

has not yet been established, so the search is limited to  .

.

The overall algorithm is relatively straightforward: starting from a single node, the current subgraph S1 is continuously expanded based on its neighborhood relationships. If S1 is connected, it constitutes a valid csg. Based on the neighbors of this csg, the algorithm then searches for its corresponding cmp, S2. The process of finding the cmp can itself be recursively expanded via the neighbors of S2, ultimately enumerating all valid csg-cmp-pairs while ensuring correctness. The exclusion set ensures that the enumeration follows the proper order.

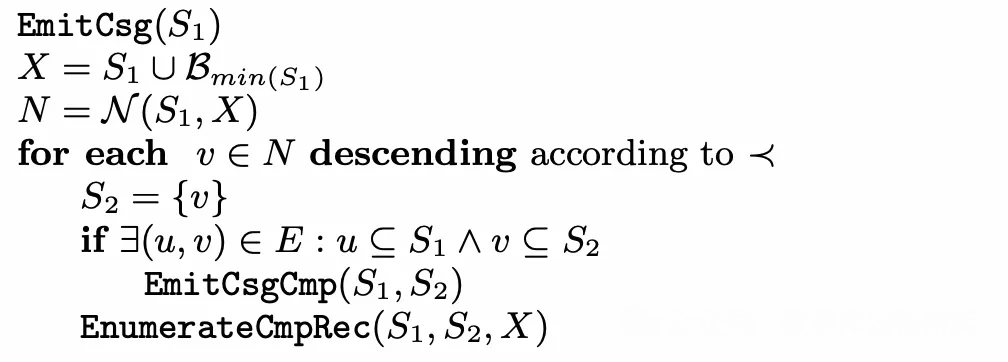

However, the algorithm as presented in the paper has certain flaws and can still lead to redundant enumeration. Consider the following simple SQL example:

SELECT *

FROM t1, t2, t3

WHERE t1.col1 = t2.col1

AND t2.col2 = t3.col2

AND t3.col3 = t1.col3;The SQL in this example is very simple: the three tables represent nodes that are all neighbors of each other. When t1 is being enumerated in the Solve function,  . Initially, S2 = {t3}. When

. Initially, S2 = {t3}. When  is invoked, S2 is expanded via connectivity to S2 = {t3, t2}, at which point the join order t1 JOIN (t2 JOIN t3) has been enumerated. When S2 = {t2}, invoking

is invoked, S2 is expanded via connectivity to S2 = {t3, t2}, at which point the join order t1 JOIN (t2 JOIN t3) has been enumerated. When S2 = {t2}, invoking  again expands S2 via connectivity to S2 = {t2, t3}, causing the same join order t1 JOIN (t2 JOIN t3) to be enumerated a second time. To prevent this redundant enumeration, we can modify the algorithm's pseudocode. The revised EmitCsg function is as follows:

again expands S2 via connectivity to S2 = {t2, t3}, causing the same join order t1 JOIN (t2 JOIN t3) to be enumerated a second time. To prevent this redundant enumeration, we can modify the algorithm's pseudocode. The revised EmitCsg function is as follows:

Algorithmic implementation of the revised EmitCsg function

This modification is quite simple: its purpose is to ensure that if the neighbors of S1 are also neighbors of each other, S2 is still expanded based on the sequenced relationships. Applied to the example above:

, X is initially expanded to X = {t1, t2, t3}, and S2 = {t3}. When

, X is initially expanded to X = {t1, t2, t3}, and S2 = {t3}. When  is invoked, S2 will not be further expanded via connectivity to S2 = {t3, t2}, so the join order t1 JOIN (t2 JOIN t3) is not enumerated at this step. Later, X is updated to its difference set, X = {t1, t2}. When

is invoked, S2 will not be further expanded via connectivity to S2 = {t3, t2}, so the join order t1 JOIN (t2 JOIN t3) is not enumerated at this step. Later, X is updated to its difference set, X = {t1, t2}. When  is invoked, S2 is expanded via connectivity to S2 = {t2, t3}, at which point the join order t1 JOIN (t2 JOIN t3) is enumerated. This ensures that the enumeration is free from redundancy.

is invoked, S2 is expanded via connectivity to S2 = {t2, t3}, at which point the join order t1 JOIN (t2 JOIN t3) is enumerated. This ensures that the enumeration is free from redundancy.

Earlier, we explained the Dphyp algorithm. However, two additional steps are necessary to fully implement JOIN_ORDER optimization. The first step is Extract Relation, which identifies the tables to be considered for join reordering from the logical operator tree. The second step is Cost Model, which evaluates the cost of each join order after it has been enumerated using the Dphyp algorithm. Next, we will explain the JOIN_ORDER implementation by covering three components: Extract Relation, the Dphyp algorithm implementation, and the Cost Model.

The first step in applying the Dphyp algorithm is to construct the hypergraph. In DuckDB, execution plans are represented as logical operator trees. The initial challenge is how to extract the tables that will be considered for join reordering from these trees.

Although we have been discussing join reordering in terms of table join order, it is important to note that these "tables" are not base tables but rather intermediate results produced by operators. To avoid ambiguity, we refer to these operator results as "relations." Therefore, the first step in JOIN_ORDER optimization is ExtractJoinRelations. ExtractJoinRelations recursively constructs the set of relations to be reordered. It takes a logical operator input_op as input and returns whether the sub-tree rooted at input_op can be reordered.

ExtractJoinRelations traverses a given logical operator tree until it encounters an operator that cannot be directly skipped. Depending on the type of operator, it can be classified as follows:

ExtractJoinRelations returns true.ExtractJoinRelations is recursively applied to both children. In this case, ExtractJoinRelations returns true.ExtractJoinRelations returns false.We use the operator tree above to illustrate the workflow of the ExtractJoinRelations function:

First, consider  . Its two children can be swapped, so

. Its two children can be swapped, so ExtractJoinRelations is applied to its left child. The left child is another ⟗, whose two children in DuckDB cannot be swapped. Therefore, JOIN_ORDER optimization is applied separately to its left and right children. After optimization, this operator is treated as a single unit and added to the relations of  . The right child is R5, which is also added as a single unit to the relations of

. The right child is R5, which is also added as a single unit to the relations of  .

.

During  optimization on the left child of ⟗, its two children can be swapped. Therefore, ExtractJoinRelations is applied to the left child of

optimization on the left child of ⟗, its two children can be swapped. Therefore, ExtractJoinRelations is applied to the left child of  , which is ⋈. ⋈ is also swappable, so

, which is ⋈. ⋈ is also swappable, so ExtractJoinRelations is applied to its left child. The left child of ⋈ is a Π operator, which cannot be skipped. Therefore, it is added as a single unit, Π(R1), to the relations of  . The right child of ⋈ is R2, which is also added as a single unit to the relations.

. The right child of ⋈ is R2, which is also added as a single unit to the relations. ExtractJoinRelations is also applied to the right child of  . The right child of

. The right child of  is σ(R3), which is also added as a single unit to the relations of

is σ(R3), which is also added as a single unit to the relations of  .

.

Therefore, this logical operator tree undergoes join reordering twice in total. The first reordering is applied to the three operators Π(R1), R2, and σ(R3), while the second reordering is applied to ⟗ and R5.

The logic for this process is implemented in RelationManager::ExtractJoinRelations. Interested readers can refer to it for more details.

In DuckDB, when the number of tables involved in join reordering is less than 12, the Dphyp algorithm is used for join order enumeration. The entry function of the Dphyp algorithm is PlanEnumerator::SolveJoinOrderExactly. PlanEnumerator::SolveJoinOrderExactly corresponds to the Solve function in the algorithm workflow. Its implementation closely aligns with the pseudocode presented in the algorithm description.

bool PlanEnumerator::SolveJoinOrderExactly() {

// now we perform the actual dynamic programming to compute the final result

// we enumerate over all the possible pairs in the neighborhood

// Iterate over all relations in descending order.

for (idx_t i = query_graph_manager.relation_manager.NumRelations(); i > 0; i--) {

// for every node in the set, we consider it as the start node once

// Find the i-th relation.

auto &start_node = query_graph_manager.set_manager.GetJoinRelation(i - 1);

// emit the start node

// A single node is also a csg; call EmitCsg to search for its cmp

if (!EmitCSG(start_node)) {

return false;

}

// initialize the set of exclusion_set as all the nodes with a number below this

// Initialize the exclusion set.

unordered_set<idx_t> exclusion_set;

for (idx_t j = 0; j < i; j++) {

exclusion_set.insert(j);

}

// then we recursively search for neighbors that do not belong to the banned entries

// Recursively call EnumerateCSGRecursive, starting from the start node to expand the csg.

if (!EnumerateCSGRecursive(start_node, exclusion_set)) {

return false;

}

}

return true;

}Next, let's examine the PlanEnumerator::EmitCSG function. DuckDB's Dphyp implementation addresses the shortcomings of the original paper that we discussed earlier:

bool PlanEnumerator::EmitCSG(JoinRelationSet &node) {

if (node.count == query_graph_manager.relation_manager.NumRelations()) {

return true;

}

// create the exclusion set as everything inside the subgraph AND anything with members BELOW it

// Initialize the exclusion set.

unordered_set<idx_t> exclusion_set;

for (idx_t i = 0; i < node.relations[0]; i++) {

exclusion_set.insert(i);

}

// Intersect S1 with the exclusion set.

UpdateExclusionSet(&node, exclusion_set);

// find the neighbors given this exclusion set

// Find the neighbor nodes of S1.

auto neighbors = query_graph.GetNeighbors(node, exclusion_set);

if (neighbors.empty()) {

return true;

}

//! Neighbors should be reversed when iterating over them.

// We iterate over the neighbor nodes in descending order, so we sort them here first.

std::sort(neighbors.begin(), neighbors.end(), std::greater<idx_t>());

for (idx_t i = 0; i < neighbors.size() - 1; i++) {

D_ASSERT(neighbors[i] > neighbors[i + 1]);

}

// Dphyp paper missing this.

// Because we are traversing in reverse order, we need to add neighbors whose number is smaller than the current

// node to exclusion_set

// This avoids duplicated enumeration

// As mentioned earlier, to address the problem in the Dphyp paper, we also need to add the neighbors of S1 to the exclusion set.

unordered_set<idx_t> new_exclusion_set = exclusion_set;

for (idx_t i = 0; i < neighbors.size(); ++i) {

D_ASSERT(new_exclusion_set.find(neighbors[i]) == new_exclusion_set.end());

new_exclusion_set.insert(neighbors[i]);

}

// Since the neighbors have already been sorted in descending order, we can now iterate over them directly.

for (auto neighbor : neighbors) {

// since the GetNeighbors only returns the smallest element in a list, the entry might not be connected to

// (only!) this neighbor, hence we have to do a connectedness check before we can emit it

// Initialize S2 with the neighbor node.

auto &neighbor_relation = query_graph_manager.set_manager.GetJoinRelation(neighbor);

auto connections = query_graph.GetConnections(node, neighbor_relation);

// If a hyperedge exists connecting S1 and S2, emit the cmp and update the dpTable.

if (!connections.empty()) {

if (!TryEmitPair(node, neighbor_relation, connections)) {

return false;

}

}

// Expand S2 to enumerate cmp.

if (!EnumerateCmpRecursive(node, neighbor_relation, new_exclusion_set)) {

return false;

}

// Remove S2 from the exclusion set.

new_exclusion_set.erase(neighbor);

}

return true;

}The logic of PlanEnumerator::EnumerateCSGRecursive largely follows the EnumerateCsgRec pseudocode:

bool PlanEnumerator::EnumerateCSGRecursive(JoinRelationSet &node, unordered_set<idx_t> &exclusion_set) {

// find neighbors of S under the exclusion set

// Get all neighbors of S1.

auto neighbors = query_graph.GetNeighbors(node, exclusion_set);

if (neighbors.empty()) {

return true;

}

// Get all subsets of the neighbor set.

auto all_subset = GetAllNeighborSets(neighbors);

vector<reference<JoinRelationSet>> union_sets;

union_sets.reserve(all_subset.size());

// For each subset

for (const auto &rel_set : all_subset) {

auto &neighbor = query_graph_manager.set_manager.GetJoinRelation(rel_set);

// emit the combinations of this node and its neighbors

// Compute the union of the subset and S1.

auto &new_set = query_graph_manager.set_manager.Union(node, neighbor);

D_ASSERT(new_set.count > node.count);

// Check whether it is connected; if so, a new csg is found. Call EmitCSG to search for its cmp.

if (plans.find(new_set) != plans.end()) {

if (!EmitCSG(new_set)) {

return false;

}

}

union_sets.push_back(new_set);

}

// All neighbor nodes no longer need to be enumerated again; update the exclusion set.

unordered_set<idx_t> new_exclusion_set = exclusion_set;

for (const auto &neighbor : neighbors) {

new_exclusion_set.insert(neighbor);

}

// recursively enumerate the sets

// Recursively call EnumerateCSGRecursive to expand the csg.

for (idx_t i = 0; i < union_sets.size(); i++) {

// updated the set of excluded entries with this neighbor

if (!EnumerateCSGRecursive(union_sets[i], new_exclusion_set)) {

return false;

}

}

return true;

}PlanEnumerator::EnumerateCmpRecursive is also largely equivalent to the EnumerateCmp procedure:

bool PlanEnumerator::EnumerateCmpRecursive(JoinRelationSet &left, JoinRelationSet &right,

unordered_set<idx_t> &exclusion_set) {

// get the neighbors of the second relation under the exclusion set

// Get neighbors of S2.

auto neighbors = query_graph.GetNeighbors(right, exclusion_set);

if (neighbors.empty()) {

return true;

}

// Get all subsets.

auto all_subset = GetAllNeighborSets(neighbors);

vector<reference<JoinRelationSet>> union_sets;

union_sets.reserve(all_subset.size());

for (const auto &rel_set : all_subset) {

auto &neighbor = query_graph_manager.set_manager.GetJoinRelation(rel_set);

// emit the combinations of this node and its neighbors

// Compute the union of S2 and the neighbor set.

auto &combined_set = query_graph_manager.set_manager.Union(right, neighbor);

// If combined_set.count == right.count, This means we found a neighbor that has been present before

// This means we didn't set exclusion_set correctly.

D_ASSERT(combined_set.count > right.count);

// Check whether the union of S2 and the neighbor set is connected.

if (plans.find(combined_set) != plans.end()) {

// Check whether S1 and the union of S2 and the neighbor set are connected.

auto connections = query_graph.GetConnections(left, combined_set);

if (!connections.empty()) {

// If connected, find the csg-cmp-pair and update the dpTable

if (!TryEmitPair(left, combined_set, connections)) {

return false;

}

}

}

union_sets.push_back(combined_set);

}

// Update the exclusion set.

unordered_set<idx_t> new_exclusion_set = exclusion_set;

for (const auto &neighbor : neighbors) {

new_exclusion_set.insert(neighbor);

}

// recursively enumerate the sets

// recursively enumerate the union of S2 and the neighbor sets.

for (idx_t i = 0; i < union_sets.size(); i++) {

// updated the set of excluded entries with this neighbor

if (!EnumerateCmpRecursive(left, union_sets[i], new_exclusion_set)) {

return false;

}

}

return true;

}Finally, PlanEnumerator::EmitPair is invoked to generate the plan for the discovered csg-cmp-pair and update the join table.

DPJoinNode &PlanEnumerator::EmitPair(JoinRelationSet &left, JoinRelationSet &right,

const vector<reference<NeighborInfo>> &info) {

// get the left and right join plans

// Get the plans for S1 and S2.

auto left_plan = plans.find(left);

auto right_plan = plans.find(right);

if (left_plan == plans.end() || right_plan == plans.end()) {

throw InternalException("No left or right plan: internal error in join order optimizer");

}

// Get the union of S1 and S2.

auto &new_set = query_graph_manager.set_manager.Union(left, right);

// create the join tree based on combining the two plans

// Construct the join tree for S1 JOIN S2.

auto new_plan = CreateJoinTree(new_set, info, *left_plan->second, *right_plan->second);

// check if this plan is the optimal plan we found for this set of relations

auto entry = plans.find(new_set);

auto new_cost = new_plan->cost;

double old_cost = NumericLimits<double>::Maximum();

if (entry != plans.end()) {

old_cost = entry->second->cost;

}

// If the computed cost is smaller, update the dpTable.

if (entry == plans.end() || new_cost < old_cost) {

// the new plan costs less than the old plan. Update our DP table.

plans[new_set] = std::move(new_plan);

return *plans[new_set];

}

// Create join node from the plan currently in the DP table.

return *entry->second;

}When calculating the cost of a join order, DuckDB does not consider the physical execution plan. Rather, it bases its cost estimation solely on cardinality, that is, the number of rows produced by each intermediate result.

For table cardinality, if the table is a DuckDB-managed storage table, DuckDB calls TableScanCardinality, which returns a unique_ptr<NodeStatistics>. The returned value represents the number of rows in the table.

unique_ptr<NodeStatistics> TableScanCardinality(ClientContext &context, const FunctionData *bind_data_p) {

auto &bind_data = bind_data_p->Cast<TableScanBindData>();

auto &duck_table = bind_data.table.Cast<DuckTableEntry>();

auto &local_storage = LocalStorage::Get(context, duck_table.catalog);

auto &storage = duck_table.GetStorage();

// Get the number of rows in the table

idx_t table_rows = storage.GetTotalRows();

// local_storage may contain uncommitted inserts, which must also be included.

idx_t estimated_cardinality = table_rows + local_storage.AddedRows(duck_table.GetStorage());

return make_uniq<NodeStatistics>(table_rows, estimated_cardinality);

}The cardinality computed here is the cardinality before any filters are applied. If there are filters that can be pushed down to a single table, their impact on cardinality must be considered. The computation works as follows: if there is a constant equality predicate such as col1 = constant, and the cardinality of column col1 is column_count, then the post-filter cardinality is computed as cardinality_after_filters = (cardinality + column_count - 1) / column_count. If constant equality predicates exist on multiple columns, the cardinality_after_filters computation is performed for each predicate, and the minimum value is chosen. This logic is implemented in RelationStatisticsHelper::ExtractGetStats. Interested readers can refer to it for more details. For non-equality predicates, DuckDB uniformly assumes a selectivity of 0.2.

For column cardinality, which DuckDB refers to as the "domain," it is obtained by calling TableScanStatistics, which returns a unique_ptr<BaseStatistics>. The domain is estimated using the HyperLogLog algorithm. Each time data is written to disk, HyperLogLog is executed to estimate the domain, and the estimated value is persisted in the table's metadata.

static unique_ptr<BaseStatistics> TableScanStatistics(ClientContext &context, const FunctionData *bind_data_p,

column_t column_id) {

auto &bind_data = bind_data_p->Cast<TableScanBindData>();

auto &duck_table = bind_data.table.Cast<DuckTableEntry>();

auto &local_storage = LocalStorage::Get(context, duck_table.catalog);

// Don't emit statistics for tables with outstanding transaction-local data.

if (local_storage.Find(duck_table.GetStorage())) {

return nullptr;

}

return duck_table.GetStatistics(context, column_id);

}In DuckDB, the RelationsToTDom object describes comparison relationships formed by multiple columns. It is constructed in the CardinalityEstimator::InitEquivalentRelations function. It is defined as follows:

struct RelationsToTDom {

//! column binding sets that are equivalent in a join plan.

//! if you have A.x = B.y and B.y = C.z, then one set is {A.x, B.y, C.z}.

// A set of column_bindings, representing that comparison relationships exist among these column_bindings.

column_binding_set_t equivalent_relations;

//! the estimated total domains of the equivalent relations determined using HLL

// Domain estimation derived from the HyperLogLog algorithm.

idx_t tdom_hll;

//! the estimated total domains of each relation without using HLL

// Domain estimation derived without using the HyperLogLog algorithm

idx_t tdom_no_hll;

bool has_tdom_hll;

// FilterInfo objects corresponding to the comparison relationships.

vector<optional_ptr<FilterInfo>> filters;

vector<string> column_names;

explicit RelationsToTDom(const column_binding_set_t &column_binding_set)

: equivalent_relations(column_binding_set), tdom_hll(0), tdom_no_hll(NumericLimits<idx_t>::Maximum()),

has_tdom_hll(false) {};

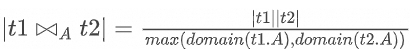

};For example, given a join condition t1.col1 = t2.col1, the equivalent_relations set will contain the two column_bindings t1.col1 and t2.col1. In this case, tdom_hll = max(domain(t1.col1), domain(t2.col1)). This value is computed because it is required by the cost formula used for multi-table join cardinality estimation.

The process of building RelationsToTDom consists of iterating over all filters, grouping columns that participate in comparison relationships into the same RelationsToTDom object, and updating tdom_hll accordingly.

In DuckDB, cost computation is very straightforward: it consists of adding the cardinality of the result set produced by each join. For example, to compute the cost of t1 JOIN t2, we only need to compute the cardinality of the result set of t1 JOIN t2, and then add the cardinalities of t1 and t2.

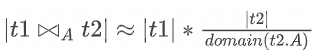

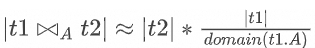

double CostModel::ComputeCost(DPJoinNode &left, DPJoinNode &right) {

auto &combination = query_graph_manager.set_manager.Union(left.set, right.set);

auto join_card = cardinality_estimator.EstimateCardinalityWithSet<double>(combination);

auto join_cost = join_card;

return join_cost + left.cost + right.cost;

}Therefore, the key focus lies in how to estimate the cardinality of a join between two tables. To make the cardinality of a two-table join result estimable, DuckDB makes a key assumption: all joins follow the most common join pattern, a foreign key-primary key (FK-PK) join. In an FK-PK join, the foreign key (FK) of table t1 is derived from the primary key (PK) of another table t2, and the FK and PK are joined using an equality predicate. Under this assumption, every row in table t1 can find a corresponding matching row in table t2. Based on this assumption, we have:

But since the FK-PK relationship between the two tables is unknown, if we swap the FK and PK roles, we have:

Therefore, we can approximate the estimation as follows:

We use |t₁| to represent the cardinality of table t1, and domain(t1.A) to represent the cardinality of column A in table t1.

CardinalityEstimator::EstimateCardinalityWithSet uses the previously constructed RelationsToTDom structure to estimate cardinality.

template <>

double CardinalityEstimator::EstimateCardinalityWithSet(JoinRelationSet &new_set) {

if (relation_set_2_cardinality.find(new_set.ToString()) != relation_set_2_cardinality.end()) {

return relation_set_2_cardinality[new_set.ToString()].cardinality_before_filters;

}

// can happen if a table has cardinality 0, or a tdom is set to 0

// Obtain the denominator; denom.denominator is essentially max(domain(), domain()).

// denom.numerator_relations contains all tables included in the denominator.

auto denom = GetDenominator(new_set);

// Multiply the cardinalities of the tables in the denominator to obtain the numerator.

auto numerator = GetNumerator(denom.numerator_relations);

// Calculate the cardinality of the resulting set.

double result = numerator / denom.denominator;

auto new_entry = CardinalityHelper(result);

relation_set_2_cardinality[new_set.ToString()] = new_entry;

return result;

}The calculation of the numerator is straightforward. Let's focus on the calculation of the denominator. To calculate the denominator, relations_to_tdoms is first converted into edges. The conversion condition is that the tables involved in a filter from relations_to_tdoms must be a subset of the current set. For example, suppose we are computing the cardinality of t1 Join t2, and there exists a predicate t1.col1 = t2.col1 AND t2.col1 = t3.col1. In this case, equivalent_relations in relations_to_tdoms is {t1.col1, t2.col1, t3.col1}, and there are two filters: the first involves tables t1 and t2, and the second involves t2 and t3. Here, an edge can be generated.

Next, we iterate over all the edges while maintaining a subgraph array. This array tracks the subgraphs that can be formed using the edges enumerated so far. By continuously adding edges, each subgraph can be incrementally expanded until the entire graph is complete.

DenomInfo CardinalityEstimator::GetDenominator(JoinRelationSet &set) {

vector<Subgraph2Denominator> subgraphs;

// Finding the denominator is tricky. You need to go through the tdoms in decreasing order

// Then loop through all filters in the equivalence set of the tdom to see if both the

// left and right relations are in the new set, if so you can use that filter.

// You must also make sure that the filters all relations in the given set, so we use subgraphs

// that should eventually merge into one connected graph that joins all the relations

// TODO: Implement a method to cache subgraphs so you don't have to build them up every

// time the cardinality of a new set is requested

// relations_to_tdoms has already been sorted by largest to smallest total domain

// then we look through the filters for the relations_to_tdoms,

// and we start to choose the filters that join relations in the set.

// edges are guaranteed to be in order of largest tdom to smallest tdom.

unordered_set<idx_t> unused_edge_tdoms;

auto edges = GetEdges(relations_to_tdoms, set);

for (auto &edge : edges) {

// If at this point the graph is already complete, any remaining edges are redundant and can be added to unused_edge_tdoms.

if (subgraphs.size() == 1 && subgraphs.at(0).relations->ToString() == set.ToString()) {

// the first subgraph has connected all the desired relations, just skip the rest of the edges

if (edge.has_tdom_hll) {

unused_edge_tdoms.insert(edge.tdom_hll);

}

continue;

}

// Check whether this edge can connect two subgraphs. If it can, merge the two subgraphs using this edge.

// If it connects to only one subgraph, use this edge to expand that subgraph.

// If it connects to no subgraph, create a new subgraph for this edge.

auto subgraph_connections = SubgraphsConnectedByEdge(edge, subgraphs);

if (subgraph_connections.empty()) {

// create a subgraph out of left and right, then merge right into left and add left to subgraphs.

// this helps cover a case where there are no subgraphs yet, and the only join filter is a SEMI JOIN

auto left_subgraph = Subgraph2Denominator();

auto right_subgraph = Subgraph2Denominator();

left_subgraph.relations = edge.filter_info->left_set;

left_subgraph.numerator_relations = edge.filter_info->left_set;

right_subgraph.relations = edge.filter_info->right_set;

right_subgraph.numerator_relations = edge.filter_info->right_set;

left_subgraph.numerator_relations = &UpdateNumeratorRelations(left_subgraph, right_subgraph, edge);

left_subgraph.relations = edge.filter_info->set.get();

left_subgraph.denom = CalculateUpdatedDenom(left_subgraph, right_subgraph, edge);

subgraphs.push_back(left_subgraph);

} else if (subgraph_connections.size() == 1) {

auto left_subgraph = &subgraphs.at(subgraph_connections.at(0));

auto right_subgraph = Subgraph2Denominator();

right_subgraph.relations = edge.filter_info->right_set;

right_subgraph.numerator_relations = edge.filter_info->right_set;

if (JoinRelationSet::IsSubset(*left_subgraph->relations, *right_subgraph.relations)) {

right_subgraph.relations = edge.filter_info->left_set;

right_subgraph.numerator_relations = edge.filter_info->left_set;

}

if (JoinRelationSet::IsSubset(*left_subgraph->relations, *edge.filter_info->left_set) &&

JoinRelationSet::IsSubset(*left_subgraph->relations, *edge.filter_info->right_set)) {

// here we have an edge that connects the same subgraph to the same subgraph. Just continue. no need to

// update the denom

continue;

}

left_subgraph->numerator_relations = &UpdateNumeratorRelations(*left_subgraph, right_subgraph, edge);

left_subgraph->relations = &set_manager.Union(*left_subgraph->relations, *right_subgraph.relations);

left_subgraph->denom = CalculateUpdatedDenom(*left_subgraph, right_subgraph, edge);

} else if (subgraph_connections.size() == 2) {

// The two subgraphs in the subgraph_connections can be merged by this edge.

D_ASSERT(subgraph_connections.at(0) < subgraph_connections.at(1));

auto subgraph_to_merge_into = &subgraphs.at(subgraph_connections.at(0));

auto subgraph_to_delete = &subgraphs.at(subgraph_connections.at(1));

subgraph_to_merge_into->relations =

&set_manager.Union(*subgraph_to_merge_into->relations, *subgraph_to_delete->relations);

subgraph_to_merge_into->numerator_relations =

&UpdateNumeratorRelations(*subgraph_to_merge_into, *subgraph_to_delete, edge);

subgraph_to_merge_into->denom = CalculateUpdatedDenom(*subgraph_to_merge_into, *subgraph_to_delete, edge);

subgraph_to_delete->relations = nullptr;

auto remove_start = std::remove_if(subgraphs.begin(), subgraphs.end(),

[](Subgraph2Denominator &s) { return !s.relations; });

subgraphs.erase(remove_start, subgraphs.end());

}

}

// Slight penalty to cardinality for unused edges

// For unused edges, they might reduce the result set's cardinality, so a penalty is applied to the denominator.

auto denom_multiplier = 1.0 + static_cast<double>(unused_edge_tdoms.size());

// It's possible cross-products were added and are not present in the filters in the relation_2_tdom

// structures. When that's the case, merge all remaining subgraphs.

if (subgraphs.size() > 1) {

auto final_subgraph = subgraphs.at(0);

for (auto merge_with = subgraphs.begin() + 1; merge_with != subgraphs.end(); merge_with++) {

D_ASSERT(final_subgraph.relations && merge_with->relations);

final_subgraph.relations = &set_manager.Union(*final_subgraph.relations, *merge_with->relations);

D_ASSERT(final_subgraph.numerator_relations && merge_with->numerator_relations);

final_subgraph.numerator_relations =

&set_manager.Union(*final_subgraph.numerator_relations, *merge_with->numerator_relations);

final_subgraph.denom *= merge_with->denom;

}

}

// can happen if a table has cardinality 0, a tdom is set to 0, or if a cross product is used.

if (subgraphs.empty() || subgraphs.at(0).denom == 0) {

// denominator is 1 and numerators are a cross product of cardinalities.

return DenomInfo(set, 1, 1);

}

return DenomInfo(*subgraphs.at(0).numerator_relations, 1, subgraphs.at(0).denom * denom_multiplier);

}Finally, let's take a closer look at the CardinalityEstimator::CalculateUpdatedDenom function. This function distinguishes between the type of comparison and the type of join. When the comparison is an equality comparison and the join is an INNER JOIN, the calculation is performed according to the formula we presented earlier. If the conditions differ, additional adjustments are applied. For non-equality comparisons, the denominator is raised to the power of 2/3 to increase the estimated cardinality of the result set. If the join is a SEMI JOIN, the result is further multiplied by a default selectivity factor.

double CardinalityEstimator::CalculateUpdatedDenom(Subgraph2Denominator left, Subgraph2Denominator right,

FilterInfoWithTotalDomains &filter) {

double new_denom = left.denom * right.denom;

switch (filter.filter_info->join_type) {

case JoinType::INNER: {

// Collect comparison types

ExpressionType comparison_type = ExpressionType::INVALID;

ExpressionIterator::EnumerateExpression(filter.filter_info->filter, [&](Expression &expr) {

if (expr.GetExpressionClass() == ExpressionClass::BOUND_COMPARISON) {

comparison_type = expr.GetExpressionType();

}

});

if (comparison_type == ExpressionType::INVALID) {

new_denom *=

filter.has_tdom_hll ? static_cast<double>(filter.tdom_hll) : static_cast<double>(filter.tdom_no_hll);

// no comparison is taking place, so the denominator is just the product of the left and right

return new_denom;

}

// extra_ratio helps represents how many tuples will be filtered out if the comparison evaluates to

// false. set to 1 to assume cross product.

double extra_ratio = 1;

switch (comparison_type) {

case ExpressionType::COMPARE_EQUAL:

case ExpressionType::COMPARE_NOT_DISTINCT_FROM:

// extra ratio stays 1

extra_ratio =

filter.has_tdom_hll ? static_cast<double>(filter.tdom_hll) : static_cast<double>(filter.tdom_no_hll);

break;

case ExpressionType::COMPARE_LESSTHANOREQUALTO:

case ExpressionType::COMPARE_LESSTHAN:

case ExpressionType::COMPARE_GREATERTHANOREQUALTO:

case ExpressionType::COMPARE_GREATERTHAN:

case ExpressionType::COMPARE_NOTEQUAL:

case ExpressionType::COMPARE_DISTINCT_FROM:

// Assume this blows up, but use the tdom to bound it a bit

extra_ratio =

filter.has_tdom_hll ? static_cast<double>(filter.tdom_hll) : static_cast<double>(filter.tdom_no_hll);

extra_ratio = pow(extra_ratio, 2.0 / 3.0);

break;

default:

break;

}

new_denom *= extra_ratio;

return new_denom;

}

case JoinType::SEMI:

case JoinType::ANTI: {

if (JoinRelationSet::IsSubset(*left.relations, *filter.filter_info->left_set) &&

JoinRelationSet::IsSubset(*right.relations, *filter.filter_info->right_set)) {

new_denom = left.denom * CardinalityEstimator::DEFAULT_SEMI_ANTI_SELECTIVITY;

return new_denom;

}

new_denom = right.denom * CardinalityEstimator::DEFAULT_SEMI_ANTI_SELECTIVITY;

return new_denom;

}

default:

// cross product

return new_denom;

}

}In the JOIN_ORDER optimization's Cost Model, since the cost of a join is calculated solely based on the cardinality of the join result, the Cost Model treats A ⋈ B as having the same cost as B ⋈ A. However, in a Hash Join, better performance is achieved when the smaller table is used to build the hash table (the BUILD side) and the larger table is used for hash probing (the PROBE side). This is the rationale behind the BUILD_SIDE_PROBE_SIDE optimization.

This optimization is straightforward. Because the cardinalities of both relations in the join have already been estimated, we can roughly approximate the size of the hash table that each relation would generate. We then use this estimated hash table size as the cost metric, assigning the relation with the smaller estimated cost to the BUILD side and the relation with the larger estimated cost to the PROBE side.

There are also some special considerations. First, if the left relation is itself a join and the right relation is not, using the left relation as the BUILD side may be preferable. This is because the tuples from the left join have just been processed in the pipeline, so building a hash table on them can be faster. In such cases, the cost of building the hash table on the right relation is multiplied by 1.15, reflecting a bias toward using the left relation as the BUILD side. Second, if the estimated cardinalities of the left and right relations are identical, the side containing a rowid column is preferred as the PROBE side. This part of the implementation is relatively straightforward, and interested readers can refer to BuildProbeSideOptimizer for the detailed implementation.

This article primarily explains the Join Reorder optimization algorithm used by DuckDB. DuckDB uses the Dphyp algorithm to enumerate join orders, which allows for the efficient exploration of join sequences for complex SQL queries. Compared with other analytical databases such as ClickHouse, this approach offers a clear performance advantage. However, DuckDB's Join Reorder optimization has certain limitations. For example, its relatively simple cost model can lead to significant errors in cardinality estimation and does not account for the varying efficiency of different physical execution plans. It is expected that the community will address these issues over time.

PolarDB-X Clustered Columnar Index | How to Create a Columnar Index

AliSQL Vector Technology Analysis (1): Storage Format and Algorithm Implementation

ApsaraDB - November 26, 2025

ApsaraDB - November 18, 2025

ApsaraDB - November 13, 2025

ApsaraDB - February 5, 2026

ApsaraDB - February 5, 2026

ApsaraDB - September 3, 2025

PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More AnalyticDB for MySQL

AnalyticDB for MySQL

AnalyticDB for MySQL is a real-time data warehousing service that can process petabytes of data with high concurrency and low latency.

Learn More Managed Service for Prometheus

Managed Service for Prometheus

Multi-source metrics are aggregated to monitor the status of your business and services in real time.

Learn More ApsaraDB RDS for MySQL

ApsaraDB RDS for MySQL

An on-demand database hosting service for MySQL with automated monitoring, backup and disaster recovery capabilities

Learn MoreMore Posts by ApsaraDB