By Lijiu

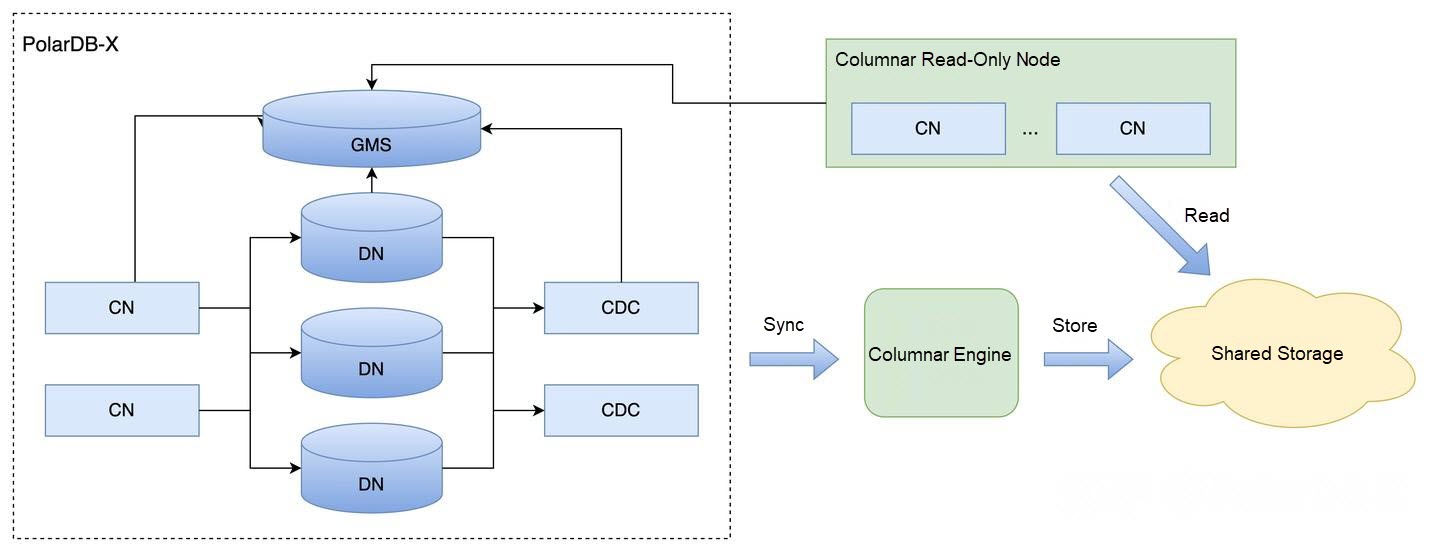

PolarDB-X supports a hybrid row-column storage architecture. For details on the specific architecture, refer to the previous article PolarDB-X Clustered Columnar Index | Birth of the Columnar Engine. Columnar data writes are synchronized through the columnar storage engine. When a DDL statement for creating a columnar index is executed, it notifies the columnar engine via binlog events, triggering the DDL application. For details on how to implement multi-version schema changes, see PolarDB-X Clustered Columnar Index | Full Support for Multi-Version Schema. This article primarily discusses how to build columnar index data and ensure data consistency between row storage and columnar storage.

Imagine the current scenario: The row storage table already contains some data, referred to as full data (or historical data). Now, a DDL statement is issued to create a columnar index. Meanwhile, the business continues to write data to the table—this newly written data after the DDL is called incremental data. The goal is to build a complete columnar data replica based on the DDL, ensuring consistency between row storage and columnar storage.

Solution 1: Ideal Scenario: The most ideal solution would be to create the table with the columnar index DDL from the start, so there's no full data—only incremental data needs to be synchronized. This works for pure AP (Analytical Processing) databases: create the table first, then import data. However, HTAP (Hybrid Transactional/Analytical Processing) databases can't operate this way. Typically, the table already contains data and the business is running. The columnar index is created later to accelerate performance.

Solution 2: Simple Snapshot-Based Approach: A simple solution would be to use the database's snapshot read functionality when receiving the create columnar index DDL. Read the data at a specific timestamp (TSO), complete the full data build, and then synchronize incremental data. While MySQL supports snapshot reads through MVCC and ensures transaction visibility via Read View, what if the full data is extremely large? Long-running transactions can cause Undo Log accumulation. MySQL's background Purge process may clean up logs, potentially resulting in a "Snapshot too old" error, which could make the database unavailable. Additionally, there's no guarantee of maintaining long-lived connections—any network issue could cause connection interruptions.

Solution 3: Common Industry Approach: A common solution is to pull full data from the source using SELECT SQL statements. Meanwhile, incremental data modifications for the table are retained but not applied. Once the full data pull is complete, record the ending position. Then, on top of the full data, consume incremental data. This incremental data requires special processing because the full data pull may include some incremental data, so incremental data must overwrite full data. Common database data transfer and synchronization tools use this approach, such as DTS and Flink.

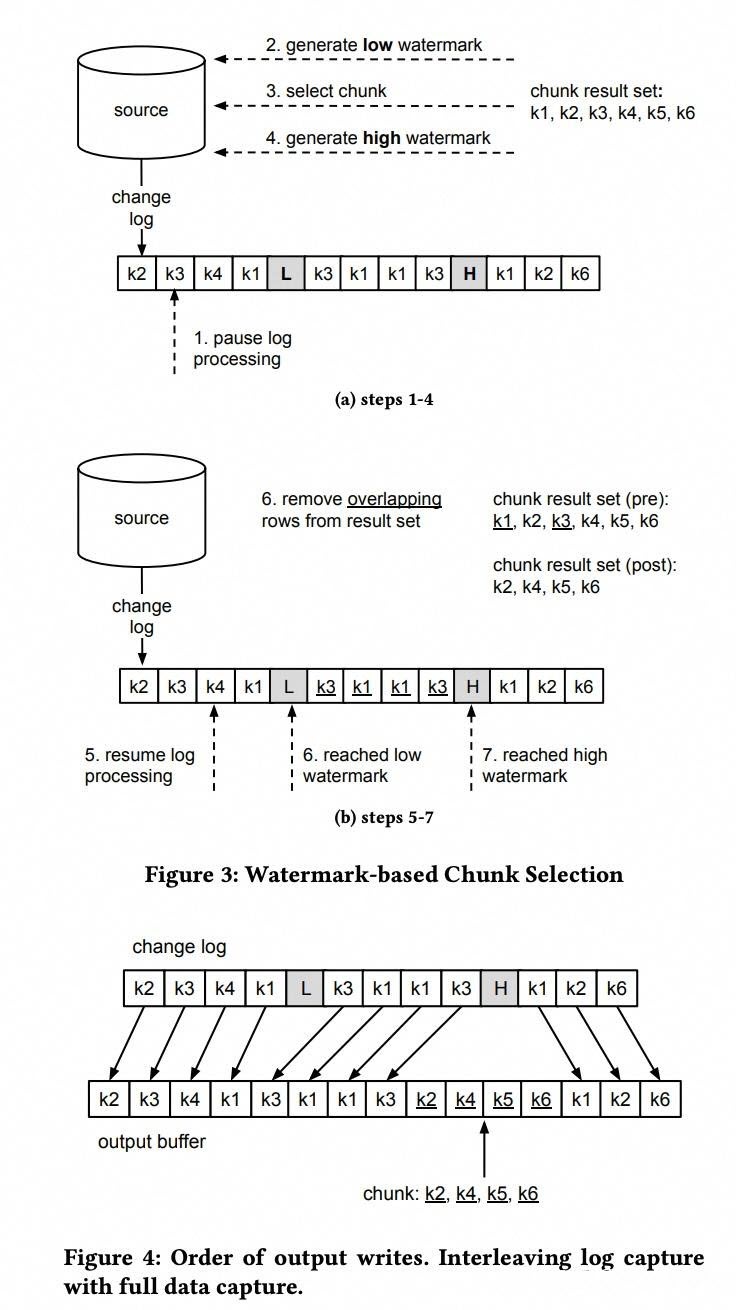

Solution 4: Fine-Grained Watermark-Based Approach: A more refined solution is referenced in the paper "DBLog: A Watermark Based Change-Data-Capture Framework." As shown in the figure below, full data is divided into small chunks based on primary key ranges. Each chunk is processed individually by inserting low and high watermark events into the binlog stream. The time between these markers is when the full data chunk is being pulled. The high watermark signifies that the full data chunk must have been generated before that binlog position. At the high watermark, duplicate keys from incremental consumption are removed from the full data chunk, and the remaining chunk data is then applied to the stream. This ensures the full data chunk is properly integrated into the stream.

This approach can even be completed by the CDC node (which generates globally consistent binlogs), directly integrating full data into the binlog, allowing downstream systems to consume it directly. However, this method requires intrusive modifications to the original binlog, and performance is limited by the single-stream nature.

PolarDB-X's columnar engine faces the following challenges when creating columnar indexes:

PolarDB-X's columnar engine adopts the common industry approach: Full data and incremental data are collected separately, then merged through a full-incremental merge process. Once the complete columnar data replica is assembled, the columnar index creation is complete.

Creating a columnar index involves two data sources:

Full Data: Pulled from PolarDB-X by continuously accessing data in primary key order using: select * from table where xxx order by pk limit n;

Incremental Data: Obtained by consuming the binlog stream.

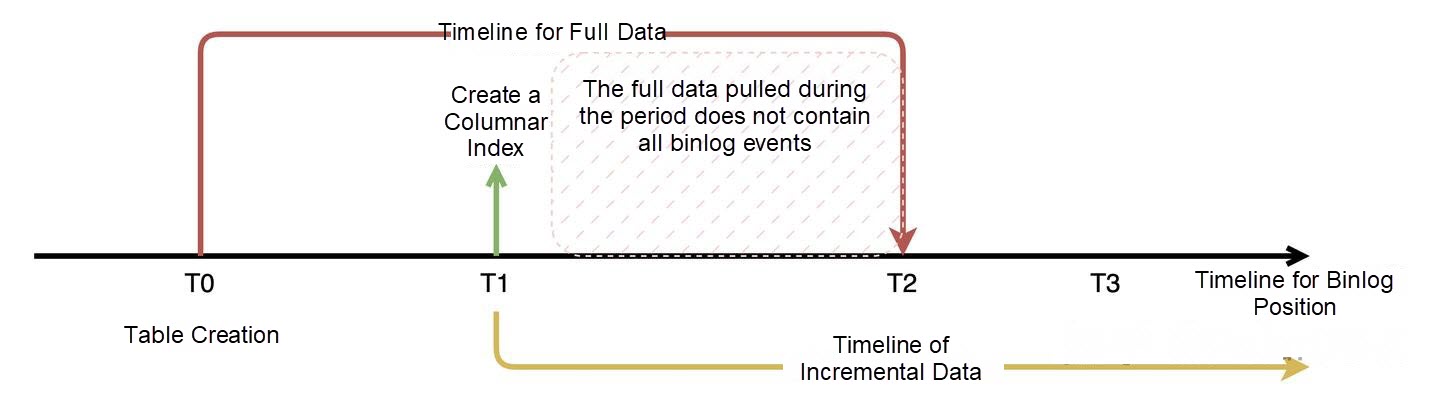

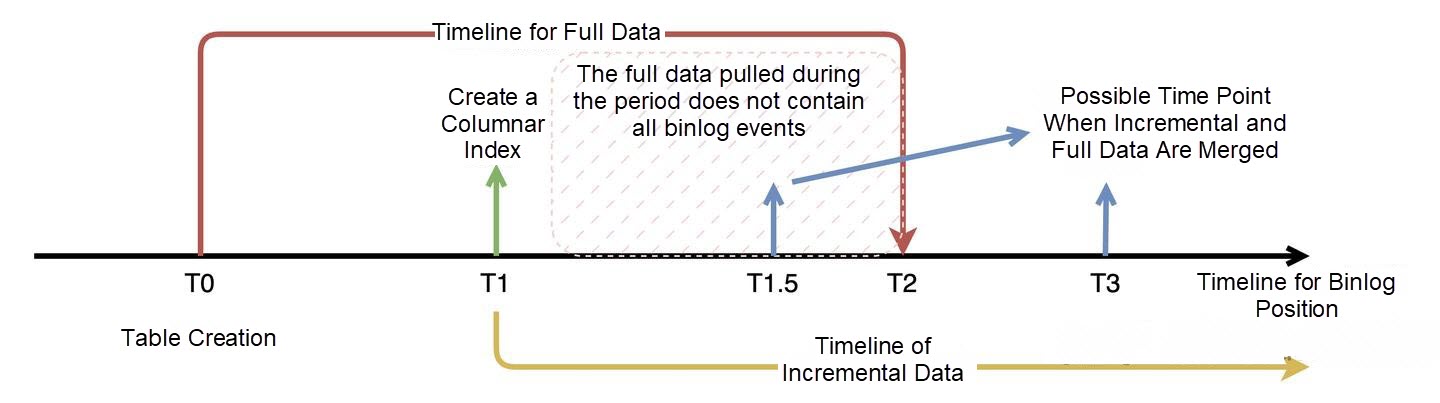

Since SELECT statements cannot guarantee reading from the same snapshot, or connections may be interrupted with large data volumes, full data and incremental data cannot be cleanly separated into two distinct parts. Redundancy occurs, as illustrated in the figure below:

According to the binlog position timeline, full data pulling actually spans a long time period. This is because the process is not based on the binlog stream and consists of already-existing snapshot data. Meanwhile, the business may be writing new data during the pull. Therefore, the full data may include some data written after the columnar index creation. Once the full data pull completes, the current binlog position is recorded as the high watermark T2. T2 represents that the full data must consist of data before this binlog position—no data after T2 can appear.

During the time period from T1 to T2, DML operations may or may not be captured by full data, but incremental data will definitely capture them. This redundancy manifests in the following scenarios:

Insert: Incremental data will definitely capture it; full data may or may not contain it (if the insert happened after pulling that range, it won't exist in full data).

Update: Incremental data may not have the old record; full data may contain the new or old record.

Delete: Incremental data may not capture it; full data may contain the old record or not.

To address these three scenarios, the incremental data stream requires special processing. First, update operations can be converted into delete + insert.

For delete operations: Incremental data may not have the record, but the delete operation must be recorded because full data may contain it, and it needs to be deleted from full data.

For insert operations: Incremental data always has the latest record. Full data may or may not contain it. If it's an insert from an update, full data may contain an old record. Therefore, incremental data must overwrite full data, removing redundant keys.

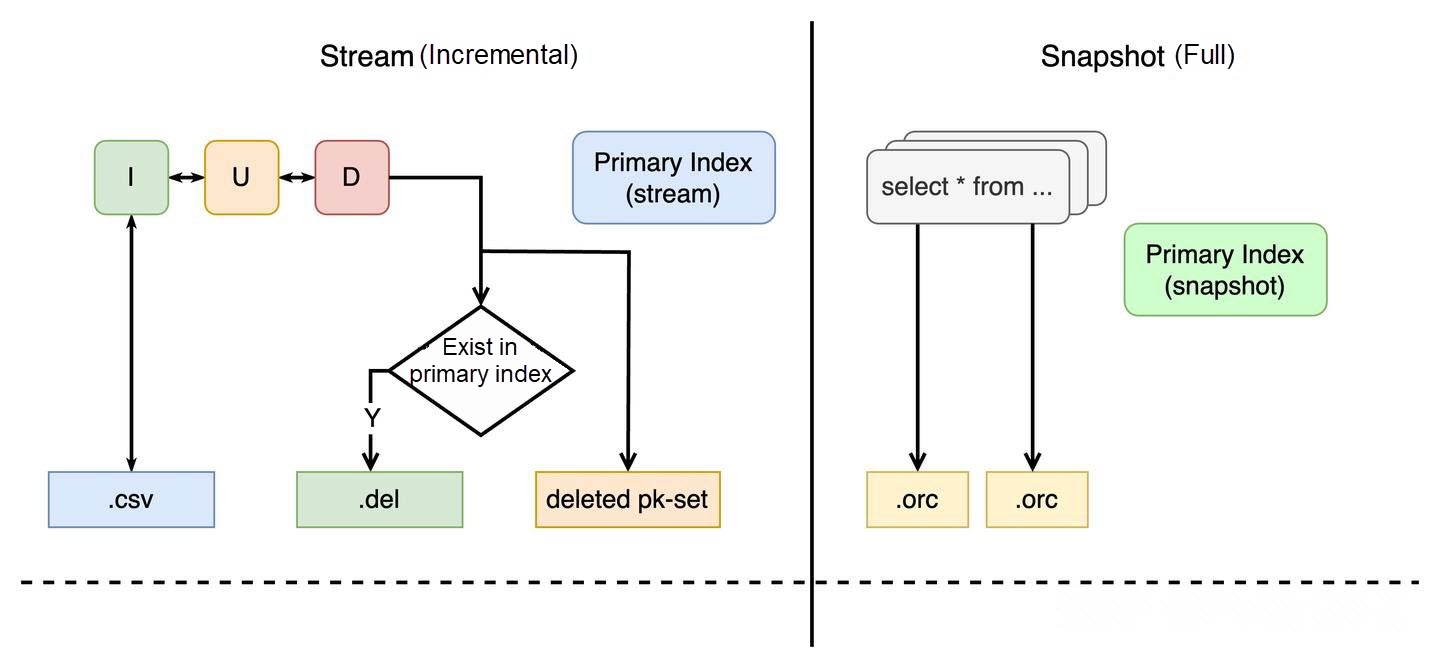

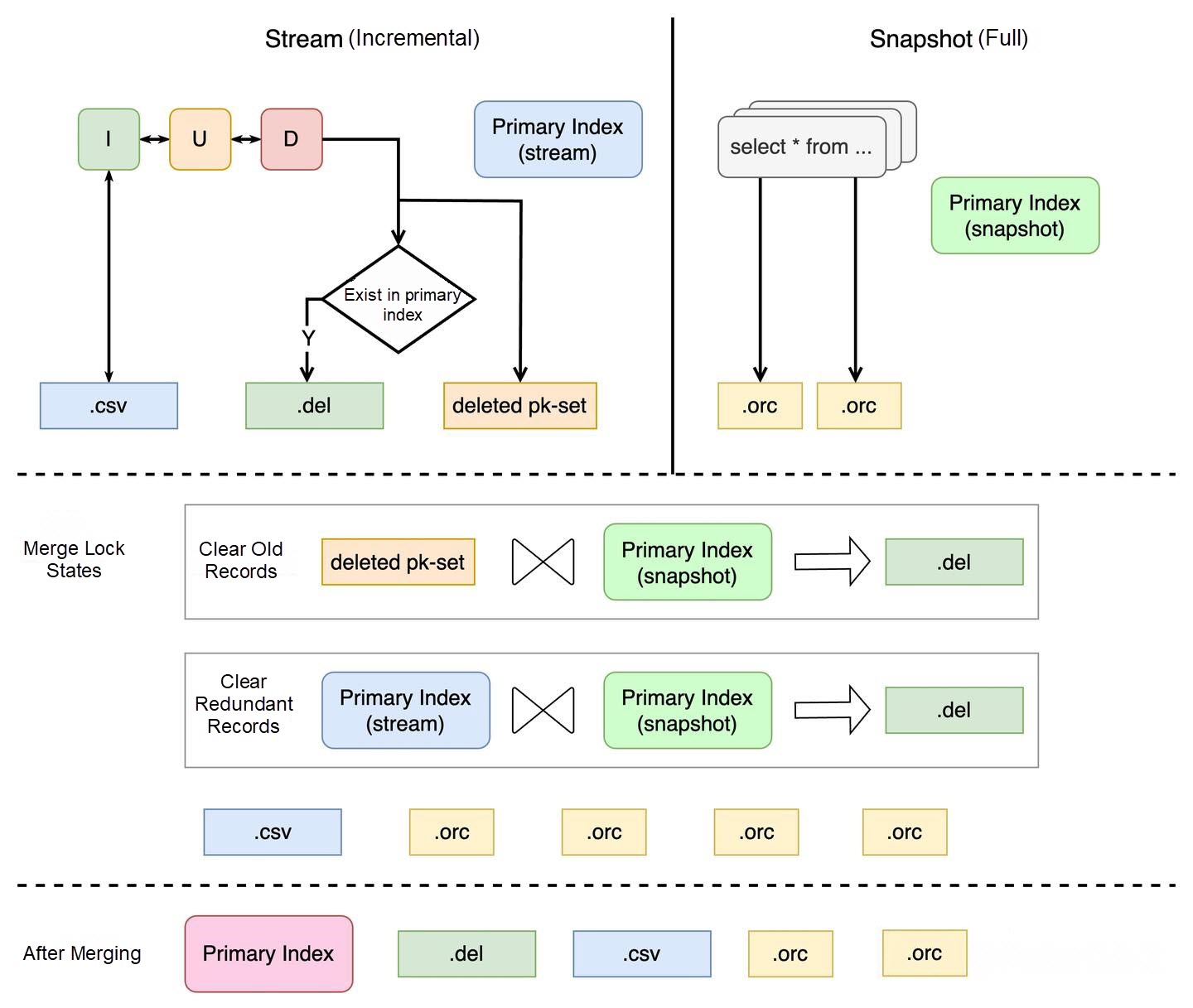

The data processing for both parts is shown in the figure below:

Both incremental and full data have independent primary key indexes (key-value storage structures) to record primary keys and their locations. Compared to normal processing, incremental data additionally records a deleted pk set, representing keys to be deleted from full data. Full data is directly pulled and converted into columnar files. Finally, the two data parts are merged to form a complete dataset.

Since the columnar engine has primary key indexes, the merge operation doesn't require modifying file data—only merging primary key indexes, greatly improving efficiency. The merge process is shown in the figure below:

The full-incremental merge process includes two main steps:

The columnar index creation is complete when:

Why must the incremental stream position surpass the full data pull position? Consider the special scenario shown in the figure below:

If incremental synchronization is very slow—reaching T1.5 while full data has already pulled data up to T2—the incremental data from T1.5 to T2 hasn't been synchronized yet. If the merge happens when the incremental stream reaches T1.5, it will discover "future data" that hasn't been synced incrementally yet, causing redundancy. There are two solutions:

Method 1: Wait until the incremental stream surpasses T2 before merging.

Method 2: Merge first, entering a state where there may be redundant future data. When the incremental stream encounters an insert, check whether redundant future data exists. If so, add a deletion mark. Once the binlog position surpasses T2, there will be no redundancy, and no checking is needed.

PolarDB-X adopts Method 2 because this special scenario is rare. Completing the full-incremental merge earlier allows faster columnar index creation.

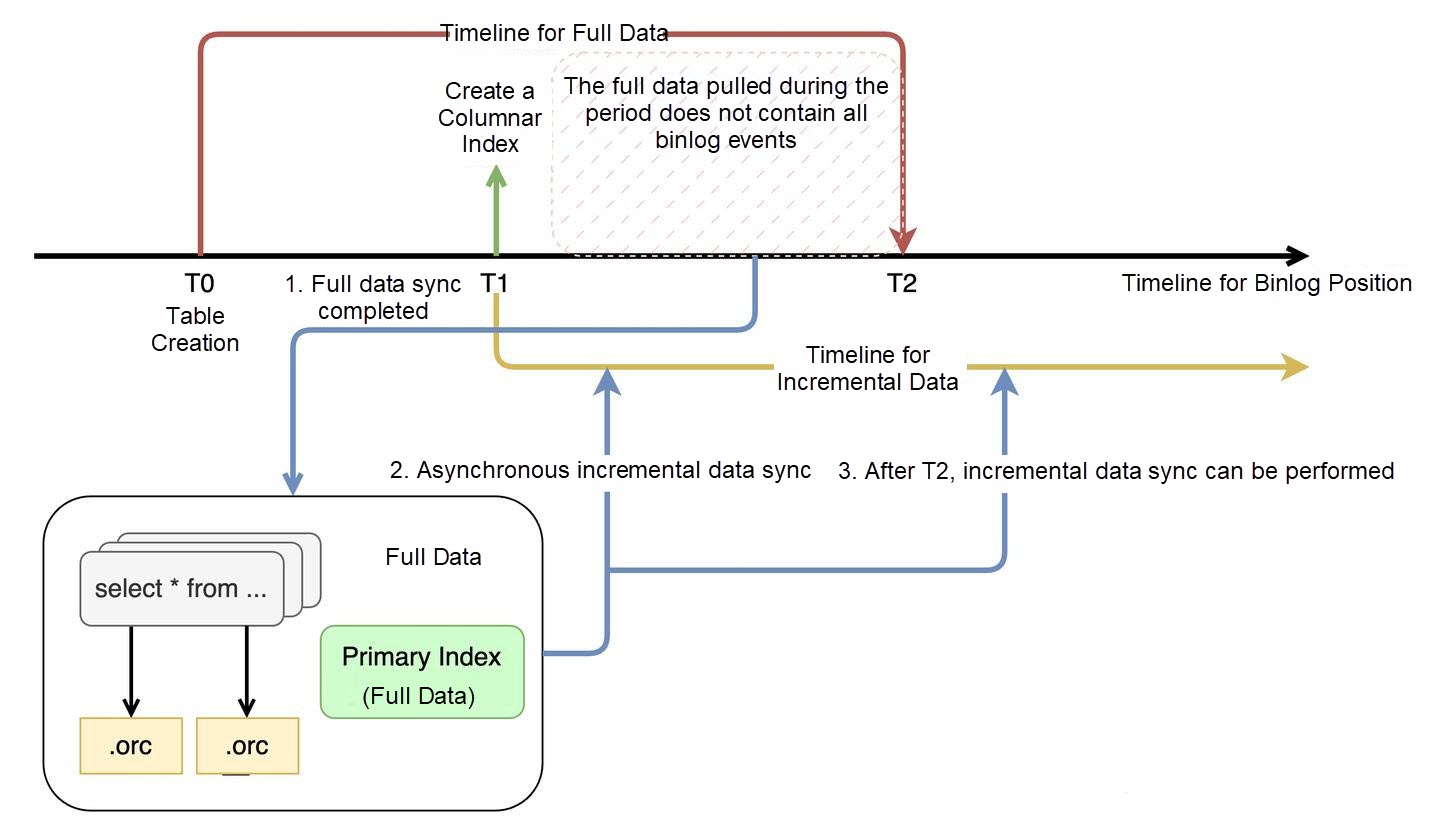

While the above merge approach only requires primary key index operations and is efficient, primary key index operations are performed under locks, preventing incremental synchronization during the full-incremental merge. Since PolarDB-X consumes a single binlog stream to synchronize multiple columnar indexes, blocking one columnar index would block columnar synchronization, causing latency. In real-world scenarios, columnar index synchronization latency is critical. Therefore, newer versions of PolarDB-X support an asynchronous full-incremental merge approach, as shown in the figure below:

Full data is still pulled independently in the background. During the creation phase, the columnar index only records DML events related to the table in the incremental stream. Once full data is complete, incremental events for the table are asynchronously synchronized on top of the full data. This way, incremental synchronization is not blocked—it only filters valid data. When the incremental stream surpasses the full data completion position T2, and the asynchronous incremental synchronization catches up, it transitions to normal incremental synchronization. While this approach may not be optimal in terms of efficiency, it doesn't affect normal synchronization.

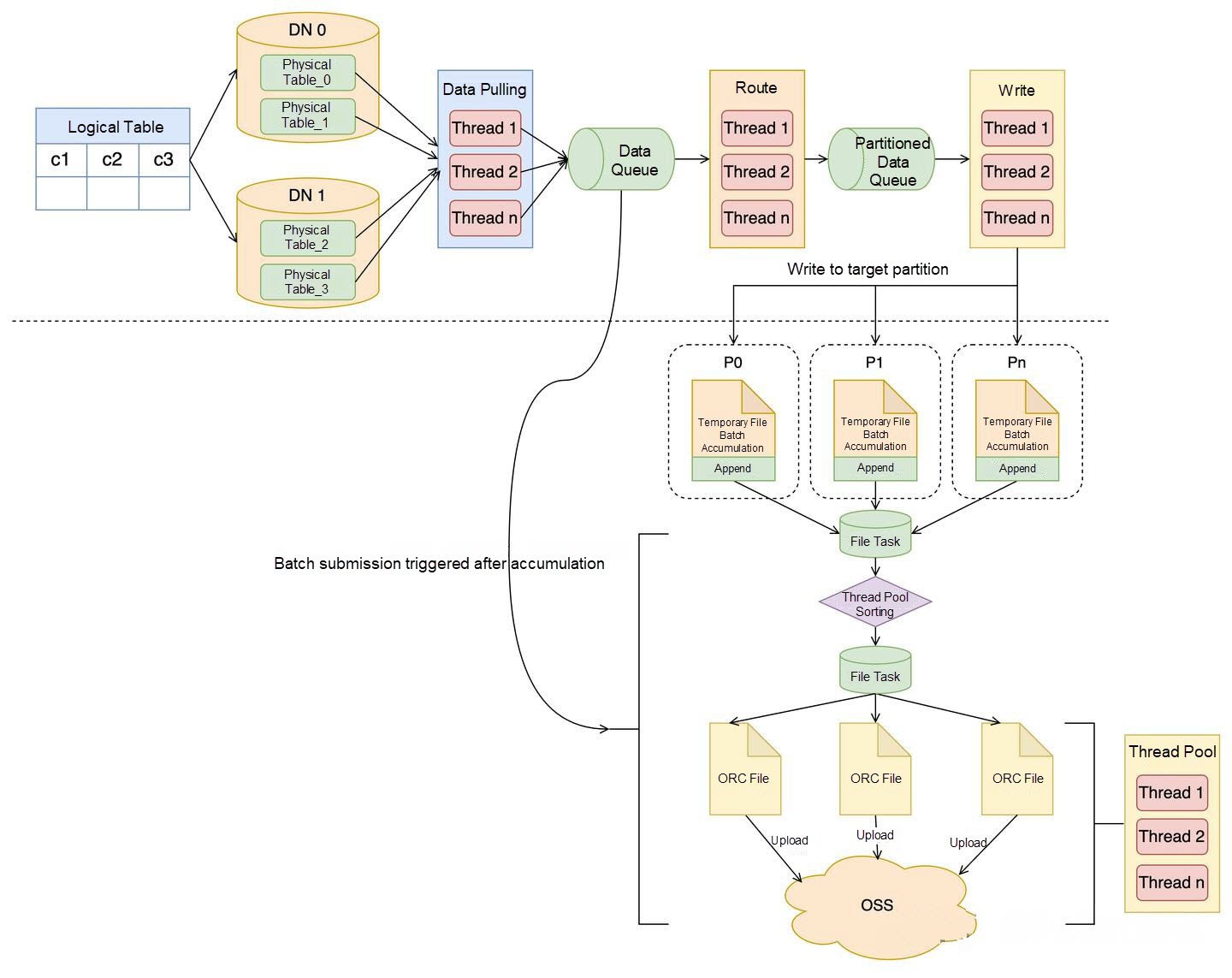

The full data process is essentially an independent task responsible for pulling table data from the primary instance to the columnar engine node and converting it into ORC columnar storage format. The process is shown in the figure below:

It consists of six steps: data pulling, routing calculation, appending writes to target partitions, file data sorting, generating ORC file format, and batch commit. Each step is handled by a separate thread pool, similar to a pipeline, maximizing concurrency.

The optimal way to pull data from PolarDB-X is to directly access physical tables using node hints, which not only reduces PolarDB-X resource consumption but also maximizes execution efficiency. A straightforward approach is to divide the logical table into subtasks based on the number of physical tables, execute SELECT statements concurrently, and ensure resumable transfers by recording the position of each pull. Data is pulled in primary key order, recording the maximum primary key value and continuously pulling in blocks from minimum to maximum:

/*+TDDL:node='xxx'*/ SELECT xxx from xxx WHERE (pk > last_select_id ) AND NOT (pk > max_id) ORDER BY id LIMIT 1024;This approach can also be extended for multi-column primary keys:

/*+TDDL:node='xxx'*/ SELECT xxx from xxx WHERE (pk1 > last_select_id1 ) OR ( pk1 = last_select_id1 and pk2 > last_select_id2 ) AND NOT (pk1 > max_id1) OR (pk1 = max_id1 and pk2 > max_id2) ORDER BY id LIMIT 1024;Currently, concurrency is limited by the number of physical tables. PolarDB-X distributed databases typically use partitioned tables. If data skew exists and a single physical table contains a large volume of data, one physical table can be further divided into multiple continuous primary key range tasks based on statistics, aiming to distribute data evenly. This increases the number of subtasks and enhances concurrency.

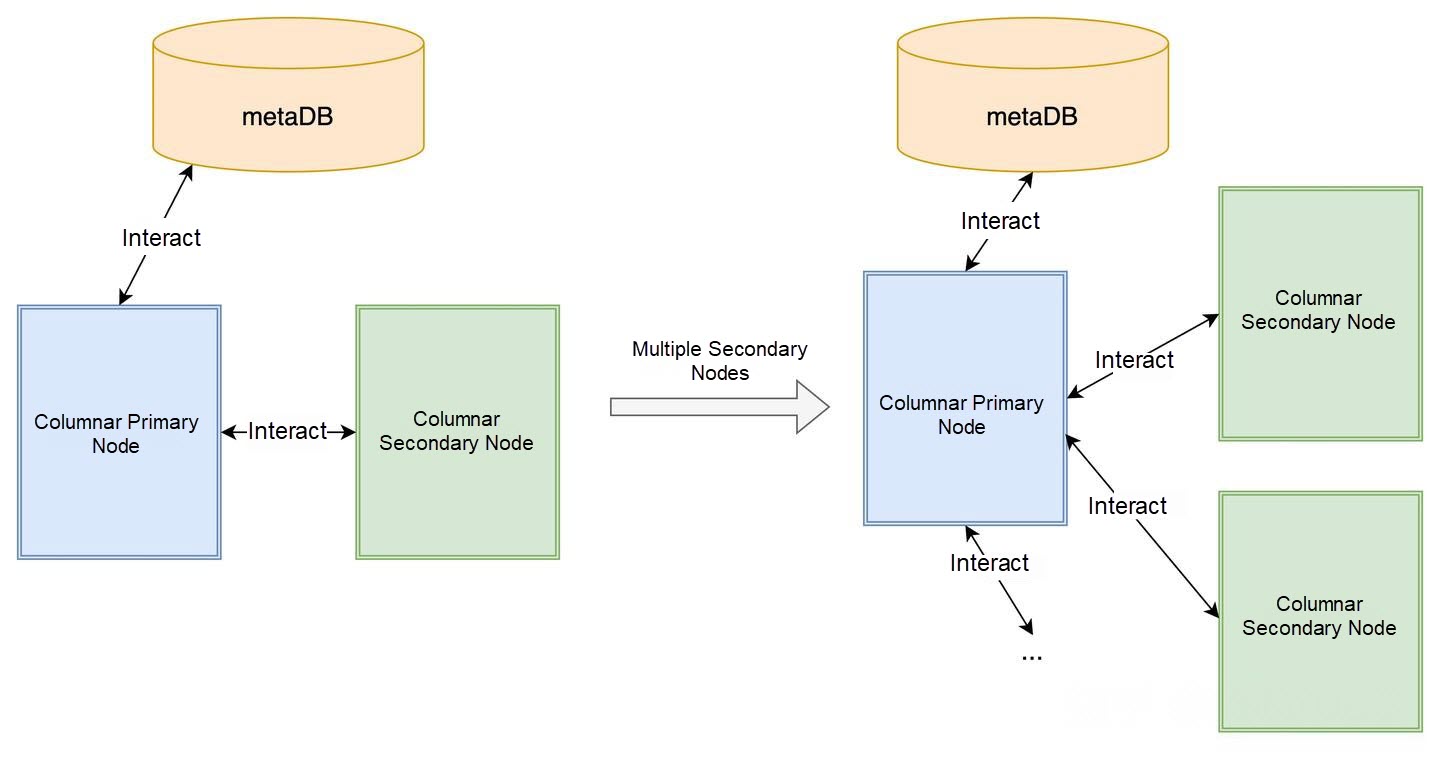

PolarDB-X's columnar engine uses a primary-backup architecture. Since full data pulling is relatively independent, the columnar engine also supports MPP (Massively Parallel Processing), where backup nodes can also be assigned tasks to pull data, further improving efficiency, as shown in the figure below:

The columnar primary node can distribute subtasks to multiple backup nodes for data pulling and ORC file generation and upload. Finally, only metadata commits are completed by the primary node, further enhancing full data pulling performance.

Columnar index creation performance is closely related to data volume. Since incremental data size depends on business traffic and is difficult to evaluate and compare, this section primarily tests full data pulling performance—i.e., creating a columnar index when the table already contains substantial data. Performance mainly depends on full data pulling.

Random data was generated using the following method:

CREATE TABLE `full256B` (

`id` bigint NOT NULL AUTO_INCREMENT,

`k` bigint not null default 100,

`c` binary(240) NOT NULL DEFAULT '',

PRIMARY KEY (`id`)

) PARTITION BY KEY(`id`) PARTITIONS 128;

## Use MPP hint to accelerate insert select

/*+TDDL:CMD_EXTRA(INSERT_SELECT_MPP=true)*/ insert into full256B(k,c) select FLOOR(RAND() * 9223372036854775807), RPAD(uuid(), 240, uuid()) from full256B;

## Create columnar index

create clustered columnar index `cci_full` on full256B(`id`) partition by hash(`id`) partitions 64 async=true;Columnar engine version: Post-September 2025 optimized version.

The primary instance has 12 nodes each with 16 vCPUs and 128 GB memory. The specifications of the columnar nodes are different. All nodes are deployed in the same zone to minimize cross-zone impact.

Each row is 256 bytes in length and 1,235,596,172 rows are stored in the instance.

| Columnar node specification | 4C32GB | 8C64GB | 16C128GB | 32C256GB | 96C768GB |

|---|---|---|---|---|---|

| Duration (seconds) | 2,489 | 1,177 | 613 | 364 | 211 |

| Rows/sec | 496,422 | 1,049,784 | 2,015,654 | 3,394,494 | 5,855,906 |

| MB/sec | 121.1 | 256.2 | 492.1 | 828.7 | 1429.6 |

Conclusion: When the primary node specifications are sufficient, increasing columnar node specifications achieves good linear performance growth, especially in low-specification scenarios where CPU resources are fully utilized, nearly doubling performance.

The primary instance has 12 nodes each with 16 vCPUs and 128 GB memory. The columnar nodes each have 96 vCPUs and 768 GB memory

| Row length | 8B | 256B | 1KB | 4KB | 64 columns (2.3 KB) |

|---|---|---|---|---|---|

| Data volume (rows) | 2,008,472,530 | 1,235,596,172 | 616,247,616 | 179,714,374 | 226,951,104 |

| Rows/sec | 7,466,440 | 5,855,906 | 2,455,169 | 784,778 | 825,276 |

| MB/sec | 56.9 | 1429.6 | 2397.6 | 3065 | 1853.6 |

Conclusion: As row length increases, bandwidth improvement is significant. The 64-column scenario represents multi-column cases with highly random data and almost no compression, limited by file system performance.

The primary instance has 12 nodes each with 16 vCPUs and 128 GB memory. The columnar nodes each have 8 vCPUs and 64 GB memory

| Node count | 1 node | 2 node |

|---|---|---|

| Data volume (rows) | 1,235,596,172 | 1,235,596,172 |

| Duration (seconds) | 1,177 | 757 |

| Rows/sec | 1,049,784 | 1,632,227 |

| MB/sec | 256.2 | 398.5 |

| Speedup | 1.55 | |

Conclusion: When columnar resources are CPU-limited, columnar MPP with multiple nodes can effectively improve performance.

Test Method

CREATE TABLE `sbtest1` (

`id` bigint NOT NULL AUTO_INCREMENT,

`k` int(11) NOT NULL DEFAULT '0',

`c` char(120) NOT NULL DEFAULT '',

`pad` char(60) NOT NULL DEFAULT '',

PRIMARY KEY (`id`),

INDEX `k_1` (`k`)

) PARTITION BY KEY(`id`) PARTITIONS 128;

## Step 1: Use sysbench to insert 100 million rows as full data

sysbench --mysql-host=xxx --mysql-port=xxx --mysql-user=xxx --mysql-password=xxx --mysql-db=xxx --threads=64 --time=0 --events=100000 --histogram=on --percentile=95 --db-driver=mysql --rand-type=uniform --report_interval=1 --tables=1 --batch_size=1000 --auto_inc=true oltp_batch_insert run

## Step 2: Inject a position to pause CCI creation at full data pk merge phase

curl 'http://127.0.0.1:3070/columnarServer/debug?key=full_data_pk_compaction_begin&value=sleep_once3000000'

## Step 3: Create CCI

create clustered columnar index `cci_full` on sbtest1(`id`) partition by hash(`id`) partitions 64 async=true;

## Step 4: Use sysbench oltp_write_only workload to modify 100 million rows

sysbench --mysql-host=xxx --mysql-port=xxx --mysql-user=xxx --mysql-password=xxx --mysql-db=xxx --threads=512 --time=0 --events=25000000 --histogram=on --percentile=95 --db-driver=mysql --rand-type=uniform --report_interval=1 --tables=1 --table_size=100000000 --skip_trx=true --mysql-ignore-errors=all oltp_write_only run

## Step 5: Restart columnar process, ensure only one columnar node survives to guarantee leader election on the same node with log cache

## Step 6: Check columnar logs to observe cost of catching up with 100 million rowsThe primary instance has 12 nodes each with 16 vCPUs and 128 GB memory. The specifications of the columnar nodes are different.

Each row is 256 bytes in length. The workload ratio of insert, delete, and update operations is 1:1:2. There are 320 log files (149,893,151 rows, 19.75 GB) stored in columnar nodes.

| Columnar node specification | 4C32GB | 8C64GB | 16C128GB | 32C256GB | 96C768GB | 96C768GB |

|---|---|---|---|---|---|---|

| Async Incremental Concurrency | 4 | 4 | 8 | 8 | 8 (default) | 16 |

| Duration (second) | 382 | 249 | 190 | 175 | 177 | 171 |

| Columnar rows/sec | 392,390 | 601,980 | 788,911 | 856,532 | 846,853 | 876,568 |

| MB/sec (by file) | 52.9 | 81.2 | 106.4 | 115.5 | 114.2 | 118.2 |

Conclusion: Synchronization by partition concurrency is limited by single-table primary key index operations, resulting in a performance bottleneck. Performance reaches approximately 800,000 rows per second.

This article discusses the process of creating columnar indexes in PolarDB-X's columnar engine—how to use full data and incremental data to build a columnar replica, optimize creation efficiency and synchronization latency. Test performance also meets expectations.

VLDB Paper Deep Dive: As AI Advances, Data Analysis Enters the Era of Incremental Computation

ApsaraDB - April 10, 2024

ApsaraDB - March 26, 2025

ApsaraDB - June 19, 2024

ApsaraDB - March 26, 2025

ApsaraDB - March 26, 2025

ApsaraDB - October 16, 2024

PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More LedgerDB

LedgerDB

A ledger database that provides powerful data audit capabilities.

Learn More ApsaraDB for OceanBase

ApsaraDB for OceanBase

A financial-grade distributed relational database that features high stability, high scalability, and high performance.

Learn More Database for FinTech Solution

Database for FinTech Solution

Leverage cloud-native database solutions dedicated for FinTech.

Learn MoreMore Posts by ApsaraDB