By Shi Mengchu and Wang Zihao

As AI and large language model (LLM) applications gain traction, so does the demand for efficient storage, search, and retrieval of high-dimensional vectors, which are key representations for complex data (such as text, images, and speech), in sectors including recommendation systems, image retrieval, and natural language processing (NLP). This has posed new challenges for database technology. Vector application scenarios have spawned dedicated vector databases, and also promoted the trend of adding vector capabilities to traditional databases.

In this context, PostgreSQL uses the pgvector extension for efficient vector search, whereas the MySQL ecosystem has long lacked native support. Although MySQL 9.0 supports the VECTOR data type, it lacks general vector index capabilities, and its built-in vector distance function is only supported within HeatWave. This technological gap results in enterprises' dependence on self-deployed vector databases or data migration for hyperdimensional computing.

Built on MySQL 8.0, AliSQL adds native support for enterprise-class vector data processing, providing an out-of-the-box vectorization solution. By seamlessly integrating high-precision vector matching and complex business logic using standard SQL interfaces, AliSQL helps enterprises quickly implement innovative AI applications with a cost-efficient and highly compatible architecture.

Based on AliSQL 8.0 20251031, this article focuses on the key implementation of vector indexes, from the storage format to algorithm implementation, to help readers better understand and use vector capabilities.

AliSQL natively supports the storage and computing of vector data with up to 16,383 dimensions. AliSQL integrates mainstream vector functions such as cosine similarity (COSINE) and Euclidean distance (EUCLIDEAN), and supports Hierarchical Navigable Small World (HNSW)-based creation of vector indexes on columns containing full-dimensional vectors.

Create a table with a vector index, insert data, and perform a vector search:

# Create a table with a vector index.

CREATE TABLE `t1` (

`id` INT NOT NULL AUTO_INCREMENT PRIMARY KEY,

`animal` VARCHAR(10),

`vec` VECTOR(2) NOT NULL, # Add a column of the VECTOR data type

VECTOR INDEX `vi`(`vec`) m=6 distance=cosine # Explicitly specify m and distance

);

# Insert data.

INSERT INTO `t1`(`animal`, `vec`) VALUES

("Frog", VEC_FROMTEXT("[0.1, 0.2]")),

("Dog", VEC_FROMTEXT("[0.6, 0.7]")),

("Cat", VEC_FROMTEXT("[0.6, 0.6]"));

# Perform a vector search.

SELECT `animal`, VEC_DISTANCE(`vec`, VEC_FROMTEXT("[0.1, 0.1]")) AS `distance` FROM t1 ORDER BY `distance`;Sample response:

|--------|---------------------------|

| animal | distance |

|--------|---------------------------|

| Cat | 0.00000001552204198507212 |

| Dog | 0.0029455257170004634 |

| Frog | 0.05131670194948623 |

|--------|---------------------------|You can also create a vector index on a table that already contains vectors.

# Convert a column to the VECTOR type.

ALTER TABLE `t1` MODIFY COLUMN `vec` VECTOR(2) NOT NULL;

# Create a vector index.

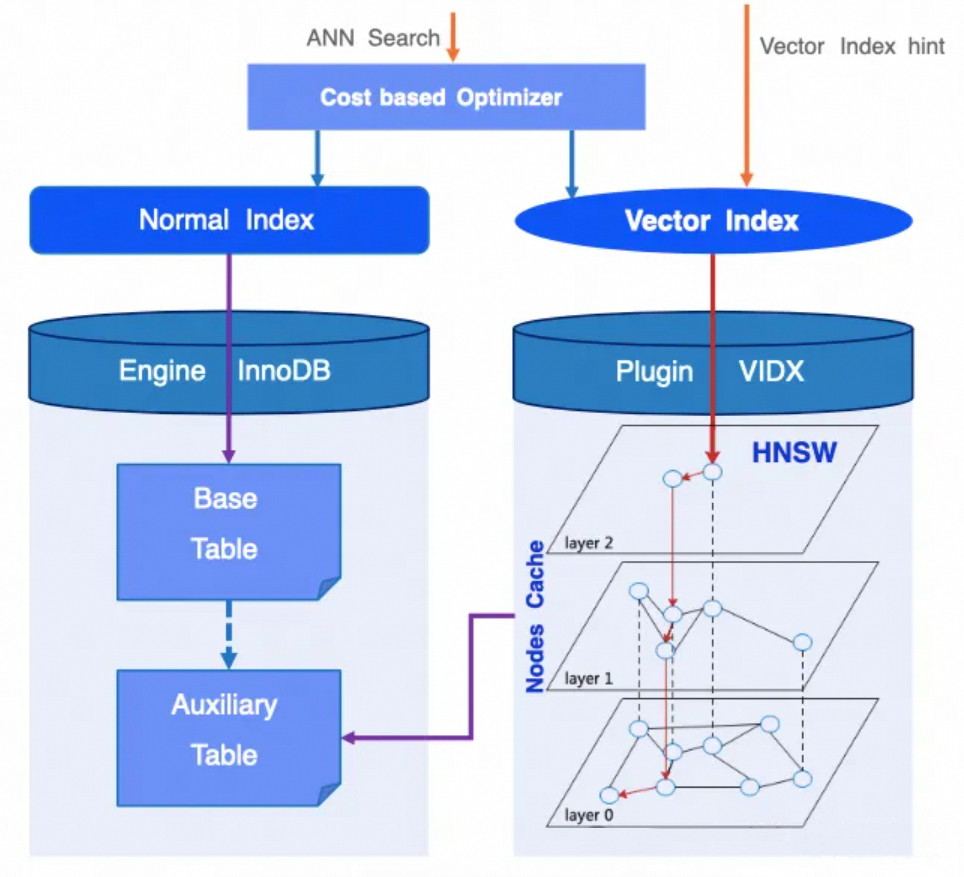

CREATE VECTOR INDEX vi ON t1(v); # If m and distance are not explicitly specified, the default values are used.As one of the most popular Approximate Nearest Neighbor (ANN) algorithms, HNSW has been widely recognized and verified in institutional evaluations and engineering implementations. AliSQL prioritizes support for HNSW-based vector indexes. The following figure shows the overall architecture of vector search.

• Following the estimation of the ANN query cost, an appropriate index is selected for search. You can also use a hint, such as FORCE INDEX, to specify a vector index for search.

• Logically, a complete HNSW graph exists. The information in this graph is organized into an auxiliary table that is stored persistently on the disk. Each row of data in the table represents a node in the HNSW graph. The HNSW algorithm operates on this graph representation to support vector insertion and retrieval.

• Unlike common indexes that directly access the storage engine, an HNSW-based index uses a vector index extension to maintain a Nodes Cache for the HNSW graph in memory to improve query efficiency.

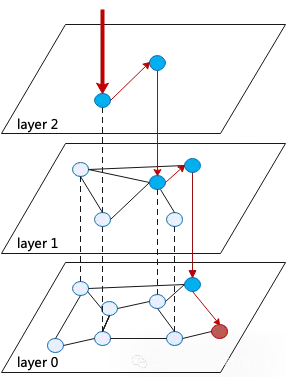

HNSW is an efficient ANN search algorithm that uses a multi-layer graph structure, proposed in the paper "Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs" ("the Paper"). Here is a breakdown of key elements:

• [Layered skip lists] The graph at layer 0 has information on all nodes. From bottom to top, the graph at the higher layer is like a "snapshot" that contains only some nodes of the graph at the lower layer. The top layer is used for fast search, and the bottom layer is used for accurate search.

• [Connections to neighbors] Each layer represents a proximity graph where each node is connected to its neighbors (nearest nodes) based on vector distance.

First, here is a step-by-step guide on how to perform Nearest Neighbor Search (NNS) in a Navigable Small World (NSW, a single-layer graph), which corresponds to Algorithm 2 in the Paper.

The insert algorithm corresponds to Algorithm 1 in the Paper. Parameter M indicates the maximum number of neighbors of a node in each layer, and L indicates the maximum number of layers of the current graph.

The k-nearest neighbor (kNN) search algorithm corresponds to Algorithm 5 in the Paper.

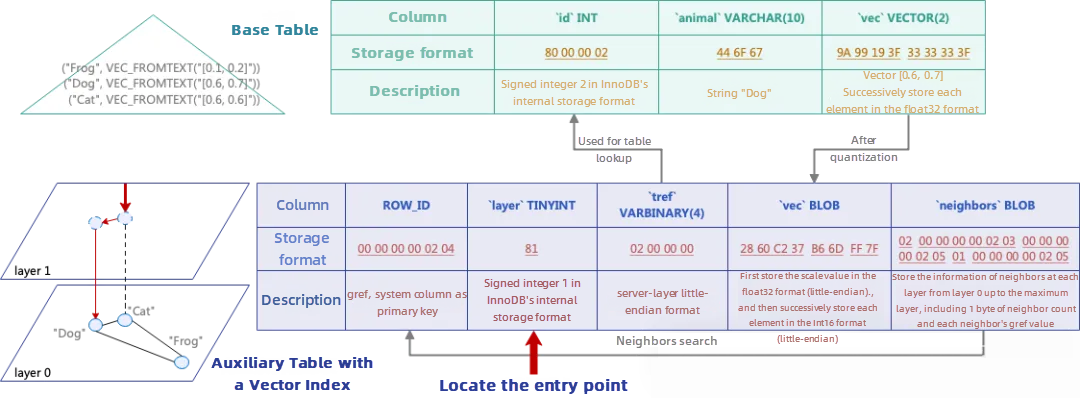

The HNSW graph structure must be stored in the database to implement the HNSW algorithm. The structure of the auxiliary table is shown below. Each row of data corresponds to all information of a node in the graph. A node contains all its representations from layer 0 up to the maximum layer at which this node exists.

|

Index |

Column name |

Type |

Description |

Use |

|

PRIMARY KEY |

ROW_ID |

System columns |

Primary key, named gref |

Neighbor search |

|

TRX_ID ROLL_PTR |

Transaction information |

Ensuring indexes are MVCC-aware |

||

|

KEY |

layer |

TINYINT NOT NULL |

Maximum layer |

Algorithm traversal |

|

Unique KEY |

tref |

VARBINARY(?) |

Primary key column of the base table, stored in the server-layer binary format |

Table lookup |

|

vec |

BLOB NOT NULL |

Quantized vector, with each element using the Int16 format and occupying 2 bytes |

Vector distance calculation |

|

|

neighbors |

BLOB NOT NULL |

Neighbors of the node at each layer |

Algorithm traversal |

The following example shows some key points.

[Quantization] When a float32 vector element is quantized to Int16, the linear mapping formula Int16 = round (float32/scale) is used: WHERE, scale is the ratio of the largest absolute value of a vector's elements to 32767 (maximum Int16 value). For example, the vector [0.6, 0.7] provided in the above figure is quantized in the following way:

[9A 99193F | 3333333F]

Primitive vector [0.6, 0.7] big-endian

| 1. Find the largest absolute value: 0.7

| 2. Calculate scale: 0.7/32767

v

Scale = 2.13629555e-05

| 3. Quantization: Each element is divided by the scale value and rounded off.

v

Quantized vector: [28086, 32767]

| 4. Stored as: scale (float32) + dims (Int16 array)

v

Structure of stored vector: [scale: 2.13629555e-05] [dims: 28086] [dims: 32767] little-endian

[2860 C2 37 | B6 6D | FF 7F]Overall, the auxiliary table with a vector index employs a well-designed format and quantization for efficient storage of HNSW graph node information. The proper indexing and a carefully designed neighbors column structure fully support the HNSW algorithm, which operates by successively loading node data from this auxiliary table.

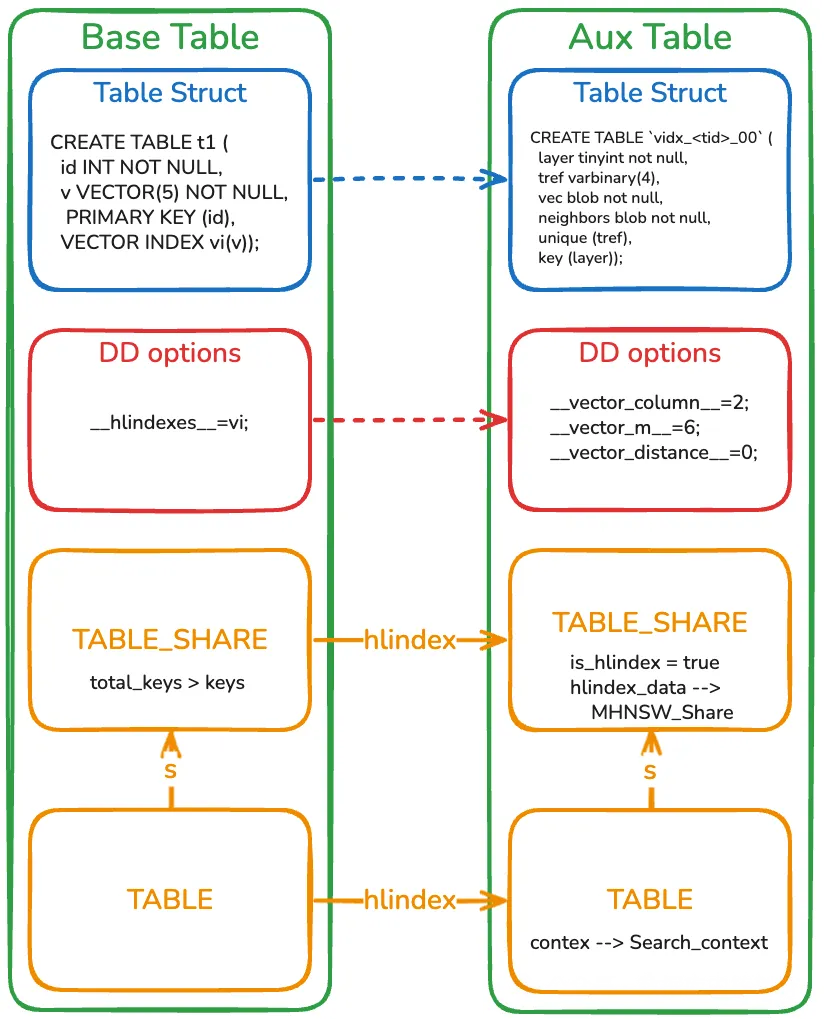

Different from ordinary tables, auxiliary tables with a vector index are invisible to users and cannot be directly accessed. The following figure shows the relationship between the base table and the auxiliary table.

• An auxiliary table is named vidx_<table_id>_00 using the base table ID. The base table can use the auxiliary table's name to find the auxiliary table's metadata from the data dictionary (DD).

• To perform a vector search or update, you can open an auxiliary table through the base table.

• The auxiliary table's TABLE_SHARE is not stored in the table cache. It is closed together with the base table.

During DDL execution, structural changes to both the base and auxiliary tables are encapsulated within the same transaction. Through MySQL's transaction system and DDL log, atomic and Crash Safe DDL operations are guaranteed.

By designing a structured auxiliary table with a vector index, AliSQL completely realizes the efficient HNSW graph storage and traversal. Combined with the integration of MySQL 8.0 data dictionary (DD), it ensures the consistency of metadata and the atomicity of DDL operations. This addresses MySQL ecosystem's long-term weakness in vector processing and provides out-of-the-box high-dimensional search capabilities for existing AliSQL instances.

This article focuses on the vector index storage and algorithm implementation. However, transactional guarantees and concurrency control are still required to realize database integrity and reliability. In AliSQL Vector Technology Analysis (2): Nodes Cache and Concurrency Control, we will discuss how Nodes Cache optimizes queries and how vector operations meet production-grade requirements through concurrency control and transaction isolation.

[Infographic] Highlights | Database New Features in January 2026

ApsaraDB - February 27, 2026

ApsaraDB - May 13, 2026

ApsaraDB - March 19, 2020

ApsaraDB - May 13, 2026

ApsaraDB - April 28, 2020

ApsaraDB - March 20, 2024

Alibaba Cloud Model Studio

Alibaba Cloud Model Studio

A one-stop generative AI platform to build intelligent applications that understand your business, based on Qwen model series such as Qwen-Max and other popular models

Learn More Qwen

Qwen

Full-range, open-source, multimodal, and multi-functional

Learn More AI Acceleration Solution

AI Acceleration Solution

Accelerate AI-driven business and AI model training and inference with Alibaba Cloud GPU technology

Learn More Network Intelligence Service

Network Intelligence Service

Self-service network O&M service that features network status visualization and intelligent diagnostics capabilities

Learn MoreMore Posts by ApsaraDB