Have you ever considered that the logic Alibaba Cloud operations and maintenance (O&M) engineers use today to locate server faults is essentially the same as the thinking of the ancient Greek philosophers who asked "what is the world made of?" more than two thousand years ago? From the first framework for analyzing existence, which Aristotle built in "Metaphysics", to the observability modeling of enterprise information technology (IT) systems today, ontology has gradually evolved from a core branch of metaphysics into an underlying methodology for digital transformation across industries. It has never been obscure speculation confined to the study. It has always revolved around the simplest of propositions: how can we understand the world clearly, and how can we turn scattered, personal experience into a consensus that is transferable, reusable, and verifiable?

In this article, we follow that path from philosophy to practice. We examine what ontology really is, see how it turned from an abstract philosophical theory into an engineering tool, and look at how Alibaba Cloud UModel puts it into practice in observability and artificial intelligence for IT operations (AIOps).

When many people hear the word ontology, their first reaction is that it is a "profound philosophical concept". Put plainly, however, ontology means drawing a unified and unambiguous map of the "world" you want to study. The word comes from the Greek ontos (being) and logos (study or doctrine), and literally means "the doctrine of being". In philosophy, ontology is the core of metaphysics, and the ultimate questions it tries to answer are: What is the world made of? What is the essence of things? What does it mean for something to exist? Whether in philosophy or in computer science, ontology must answer three questions:

● What truly exists in this world? (What exists?)

● How should we categorize and define these things? (How to classify?)

● What are the relationships between these things, and how do they interact? (How to relate?)

Here we must distinguish three concepts that are easily confused. This distinction is also the foundation for understanding the value of ontology:

● Ontology: It defines "what the world itself is" and is the starting point of all cognition. For example, you must first define clearly what a host, a pod, and a service are, and how they relate to one another, before you can discuss any O&M operation.

● Epistemology: It answers "how we come to know this world" and is the method of cognition. For example, we need to decide whether to observe the state of a host through metrics, logs, or traces.

● Methodology: It answers "what means we should use to change this world" and is the path to implementation. For example, after a fault occurs, we need to determine which steps to take to locate the root cause and resolve the fault.
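The ontology layer in the host, pod, and service example can be made concrete with a minimal Python sketch. It is only an illustration under stated assumptions: the class names (`EntityType`, `RelationType`, `Ontology`), the categories, and the relation names `runs_on` and `is_backed_by` are hypothetical and come from no particular product. The sketch answers the three questions in order: what exists, how it is classified, and how it relates.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass(frozen=True)
class EntityType:
    """What exists: a named kind of thing, such as a host or a pod."""
    name: str
    category: str  # How to classify: a coarse grouping (hypothetical values)


@dataclass(frozen=True)
class RelationType:
    """How to relate: a directed, named relationship between two entity types."""
    source: str
    name: str
    target: str


@dataclass
class Ontology:
    entity_types: Dict[str, EntityType] = field(default_factory=dict)
    relation_types: Set[RelationType] = field(default_factory=set)

    def add_entity_type(self, name: str, category: str) -> None:
        self.entity_types[name] = EntityType(name, category)

    def add_relation_type(self, source: str, name: str, target: str) -> None:
        # A relation may only connect entity types that were defined first.
        for endpoint in (source, target):
            if endpoint not in self.entity_types:
                raise KeyError(f"unknown entity type: {endpoint}")
        self.relation_types.add(RelationType(source, name, target))

    def allows(self, source: str, name: str, target: str) -> bool:
        """True if this exact directed relation is part of the ontology."""
        return RelationType(source, name, target) in self.relation_types


# Illustrative definitions only; names and categories are assumptions.
ontology = Ontology()
ontology.add_entity_type("host", "infrastructure")
ontology.add_entity_type("pod", "workload")
ontology.add_entity_type("service", "application")
ontology.add_relation_type("pod", "runs_on", "host")
ontology.add_relation_type("service", "is_backed_by", "pod")
```

With these definitions, `ontology.allows("pod", "runs_on", "host")` holds while the reversed direction does not, and a relation that mentions an undefined entity type is rejected. That ordering mirrors the point above: the "map" has to exist before observation and operation can be discussed.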

Without the underlying "map" that ontology provides, epistemology and methodology are like water without a source. If you have not clearly stated what you are studying, subsequent observations and operations will inevitably fall into chaos.

The biggest misunderstanding about ontology is that it merely defines things and attaches labels to them. Its true soul is not static entity definitions but dynamic relationships and behaviors. Take the simplest example. To understand "water", we must first establish that its molecular formula is H₂O. This is the essential definition of water. But what truly lets us understand water is how its state changes with temperature, how it reacts chemically with other substances, and how it cycles through the ecosystem. Detached from these dynamic behaviors and relationships, "H₂O" is just a cold symbol with no practical significance.

This is the essential difference between the static and the dynamic perspective in ontology. The static perspective focuses only on the properties of things themselves, whereas the dynamic perspective holds that the essence of a thing truly shows itself only in its relationships with other things and in its own movement and change. This insight is also the fundamental reason ontology could step out of the philosopher's study and take root in engineering. The most painful problem in enterprise digitalization is never "we do not have data", but rather "we have a pile of data, but we do not know how the data is related, let alone what business logic lies behind it".

The development of ontology has never been a random accumulation of scattered viewpoints. Along the main line of "standardizing human cognition", it has completed three key leaps.

Ontology began in ancient Greece in the 6th century BC. Before then, people explained the world through myth. The Greek philosophers were the first to use reason to ask "what is the origin of the world?" Thales said that "water is the origin of all things", attributing the essence of the world to concrete matter for the first time and opening the way to rational inquiry. Heraclitus said that "everything flows, and no one can step into the same river twice", shifting the perspective to change and holding that the essence of the world is a process of motion. Parmenides argued that "true being is eternal and unchanging". The dispute between these two positions planted the core question of "static versus dynamic" in ontology. The person who turned ontology into a complete system was Aristotle. In "Metaphysics", he treated "the study of being as such" as an independent discipline for the first time. He used the theory of four causes (material, formal, efficient, and final) to break down the underlying logic of why things exist, and he used a system of ten categories to classify every way in which things exist. Aristotle thus drew the first universal "ontology map" of the world, turning scattered inquiries into a reusable analytical framework.

In the Middle Ages that followed, European philosophy was absorbed into a theological framework, and the dispute between realism and nominalism took center stage. Realism held that universal concepts truly exist. Nominalism held that only concrete individuals are real and that concepts are merely names. The dispute appears to be bound up with theology, but it actually clarified the "relationship between concepts and entities", which is exactly the core premise of "knowledge representation" in later computer science. From the 17th to the 19th century, the scientific revolution and the rational spirit of the Enlightenment pulled ontology completely out of theology. Descartes' mind-body dualism separated the knowing subject from the objective world and set the "subject-object separation" paradigm for modern science. Kant's system of twelve categories turned the questions of traditional ontology into epistemological ones: it no longer inquired into the unknowable "thing-in-itself", but studied the a priori logical framework through which humans perceive the world. Hegel's dialectics injected the idea of dynamic evolution into ontology and completed the crucial upgrade from "describing static existence" to "describing the laws by which existence moves".

At this point, the philosophical core of ontology was mature. What remained was to wait for an era in which it could be put into practice.

Since the 20th century, successive breakthroughs in mathematical logic, computer science, and IT have opened the door to engineering for ontology. Following these technological waves, ontology has completed four crucial steps:

The first step is from text to symbols. The idea of a "universal language", proposed by Leibniz in the 17th century, was finally realized between the late 19th and the 20th century. The mathematical logic founded by Frege and Russell gave ontology a rigorous and unambiguous tool for formal expression. "Existence", which previously could only be described in words, could now be expressed and computed with symbols and formulas. Ontology turned from philosophical speculation into a scientific system that can be verified and computed.

The second step is from science to a core tool of artificial intelligence (AI). When AI emerged as a discipline in the mid-20th century, the first problem it had to solve was "how to make machines understand human knowledge", which is exactly what ontology is best at. In 1993, Gruber proposed the classic definition: _An ontology is an explicit specification of a conceptualization_. Later, scholars such as Studer extended and refined this definition into the consensus definition still in use today: an ontology is a formal, explicit specification of a shared conceptualization of a domain. This completed the paradigm shift. Ontology was no longer a toy for philosophers but the core foundation of knowledge representation in AI.

The third step is from a single system to the infrastructure of the Internet. Around the year 2000, the Internet spread rapidly. However, the information on it could only be read by humans. Machines could not understand it, and the data on different websites formed completely isolated islands. The Semantic Web, proposed by Tim Berners-Lee, the father of the World Wide Web, set out to use ontology to annotate information on the Internet with unified semantics. The publication of standards such as Resource Description Framework (RDF) and Web Ontology Language (OWL) made ontology the underlying infrastructure for connecting and exchanging knowledge on the Internet.
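The core idea behind RDF is that facts are stated as subject-predicate-object triples that machines can match and query. The sketch below imitates that idea in plain Python to show why triples make isolated data queryable. It is not an RDF library and does not follow any RDF serialization: the `ex:` prefix, the resource names, and the `match` helper are assumptions made for illustration (real RDF identifies resources with IRIs).

```python
from typing import Iterator, Optional, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)

# Hypothetical facts about an IT system, written as triples.
triples: Set[Triple] = {
    ("ex:order-service", "rdf:type", "ex:Service"),
    ("ex:pod-7f9c", "rdf:type", "ex:Pod"),
    ("ex:node-01", "rdf:type", "ex:Host"),
    ("ex:order-service", "ex:isBackedBy", "ex:pod-7f9c"),
    ("ex:pod-7f9c", "ex:runsOn", "ex:node-01"),
}


def match(
    s: Optional[str] = None,
    p: Optional[str] = None,
    o: Optional[str] = None,
) -> Iterator[Triple]:
    """Yield triples matching the pattern; None acts as a wildcard."""
    for triple in sorted(triples):  # sorted for a deterministic order
        if all(want is None or want == got for want, got in zip((s, p, o), triple)):
            yield triple
```

For example, `list(match(p="ex:runsOn"))` returns the single fact about where the pod runs, and `match(p="rdf:type")` lists every typed resource. Because every fact has the same three-part shape, data from different sources can be merged into one set and queried with the same patterns.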

The fourth step is from the Internet to the era of big data and large language models (LLMs). In 2012, Google introduced the Knowledge Graph and used ontology as its "schema layer". Combined with graph databases, ontology achieved large-scale engineering implementation in the big data era. After LLMs took off in 2022, ontology found a new role. LLMs hold massive amounts of knowledge, but they are prone to "talking nonsense", and their inference process is hard to control. The structured, precise nature of ontology is exactly what is needed to rein in LLMs, which makes the two a golden combination for bringing LLMs into industry.

At this point, many people will ask: does ontology have applications in industries such as healthcare, finance, manufacturing, and government affairs? In essence, ontology addresses three common difficulties that no enterprise can avoid in its digital transformation:

● Data silos. Different systems and departments use different data standards, and their semantics are disconnected. The data exists, but it cannot be used together. For example, in healthcare, hospitals do not share a unified disease terminology, so their data cannot interoperate at all. In government, each department manages its data independently, so citizens must visit several departments to complete a single task. Ontology builds a unified "translation language" for this heterogeneous data, which allows data from different systems to communicate.

● Loss of experience. Most of an enterprise's core capabilities are hidden in the minds of senior employees. For example, a veteran factory worker knows what sound a device makes just before it fails, and a senior risk control expert at a bank knows which features indicate fraud. Newcomers need years to learn this tacit experience, and when the employees leave, the experience is lost. Ontology can break this fragmented experience down into standardized rules and turn it into reusable, inheritable knowledge inside the system, where personnel turnover cannot erase it.

● Disconnection between systems and the business. The IT systems of many enterprises merely move offline processes online without capturing business logic. Data piles up in the system, but it cannot support business decisions, and problems cannot be located quickly when they occur. Ontology models business entities, relationships, and rules into the system, so that the system truly understands the business rather than just storing data.

In the modern engineering practice of ontology, Palantir is an unavoidable benchmark. The company has gained a firm foothold in intelligence, finance, and industry worldwide, not because its big data technology is especially powerful, but because it was the first to bring the core value of ontology into enterprise-level scenarios. Palantir pinpointed the fatal flaw of traditional data systems: enterprise data sits in databases, but the business relationships between the data are invisible, and the business experience and judgment rules in the minds of senior employees cannot be captured in the system. Everyone looks at the data, but no one can clearly explain how the business behind it actually runs. Palantir uses ontology to answer this problem:

● Palantir moves beyond the bare primary-key and foreign-key associations of traditional databases and adds business semantics to the relationships between entities. A relationship is no longer a simple identifier (ID) match but an association with real meaning, such as "Company A holds shares in Company B" or "Account C transfers money to Account D".

● Palantir breaks down the tacit experience in the minds of business experts into configurable, executable rules and builds these rules into the system, so that the system can replicate the experts' judgment logic.

● Palantir records not only the final state of data but also the end-to-end flow through which the data is generated and circulated, which makes the complete business process observable and traceable.

With this set of strategies, Palantir proved the enormous value of ontology in highly complex scenarios such as counterterrorism, financial risk control, and industrial manufacturing. However, its limitations are equally obvious. Palantir is positioned to serve only top-tier customers and relies on heavy customization and heavy delivery, with long implementation cycles and extremely high costs that ordinary small and medium-sized enterprises cannot afford. Moreover, the threshold for ontology modeling is very high. It requires the cooperation of professional teams and cannot be popularized at scale. Palantir paved the way for the engineering of ontology, but it also left a new question: how can this approach become a reusable, low-threshold capability for the entire industry, so that ordinary enterprises can use it too? This has become a new challenge for the engineering implementation of ontology.

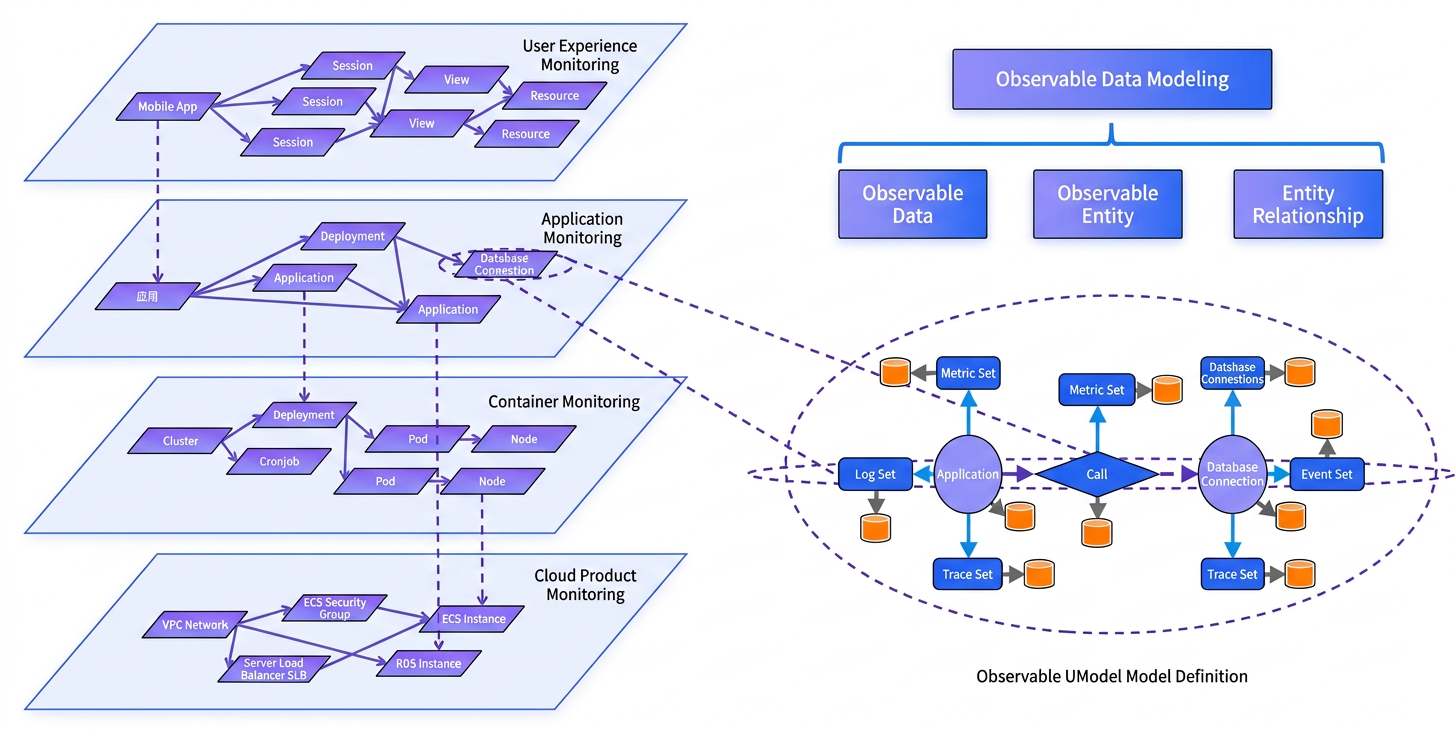

If we focus on the observability domain, we find a meaningful point of convergence. As the digital transformation of enterprises deepens and IT architectures evolve toward microservices, cloud-native technologies, and containers, the core dilemma of observability shares its origin with the philosophical problem that ontology set out to solve more than 2,000 years ago. Both aim to solve the fundamental problem of how to clearly define the objects of cognition, sort out their relationships, and form a unified consensus. Today's enterprise observability systems generally face three major pain points:

● Data silos and semantic fragmentation. The four core types of observability data (metrics, logs, traces, and changes) are scattered across systems from different vendors with different functions. The data formats are not unified, and the business semantics are not interoperable. When a fault occurs, O&M engineers have to switch back and forth among multiple platforms to troubleshoot, and cannot perform end-to-end correlation analysis or root cause localization.

● Tacit experience that cannot be passed on. Senior O&M engineers can locate faults quickly based on long-accumulated experience. However, their core judgment logic and handling methods exist only in their heads as tacit knowledge. Newcomers take a long time to train and find the experience hard to master, and the enterprise cannot accumulate its core O&M capabilities in a standardized way or reuse them at scale. Fault handling efficiency remains highly dependent on individual ability.

● LLMs lack a reliable foundation for implementation. The industry widely attempts to apply LLMs to AIOps scenarios. However, LLMs lack a standardized knowledge framework for the O&M vertical and misunderstand professional terms and business logic. They are highly prone to hallucination, and their inference processes and results are hard to control. As a result, they struggle to reach real production use.

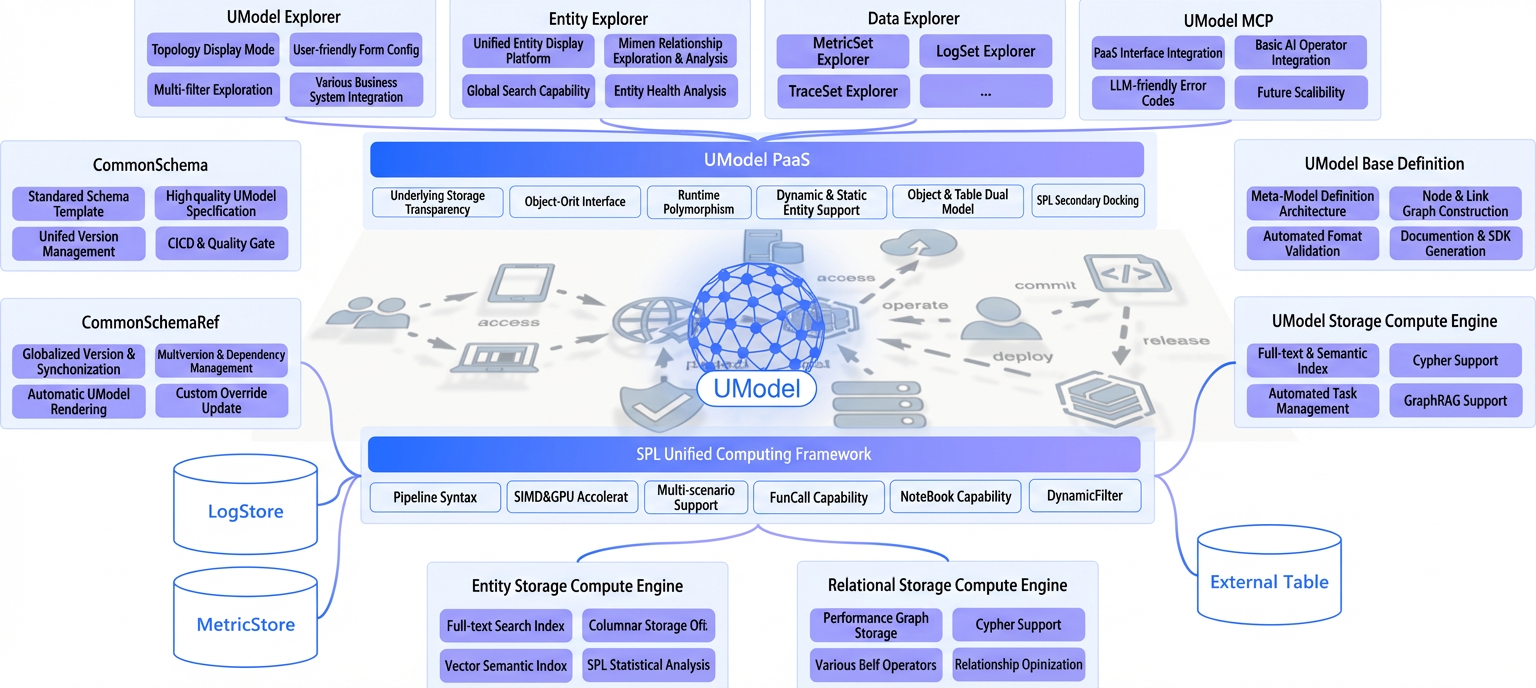

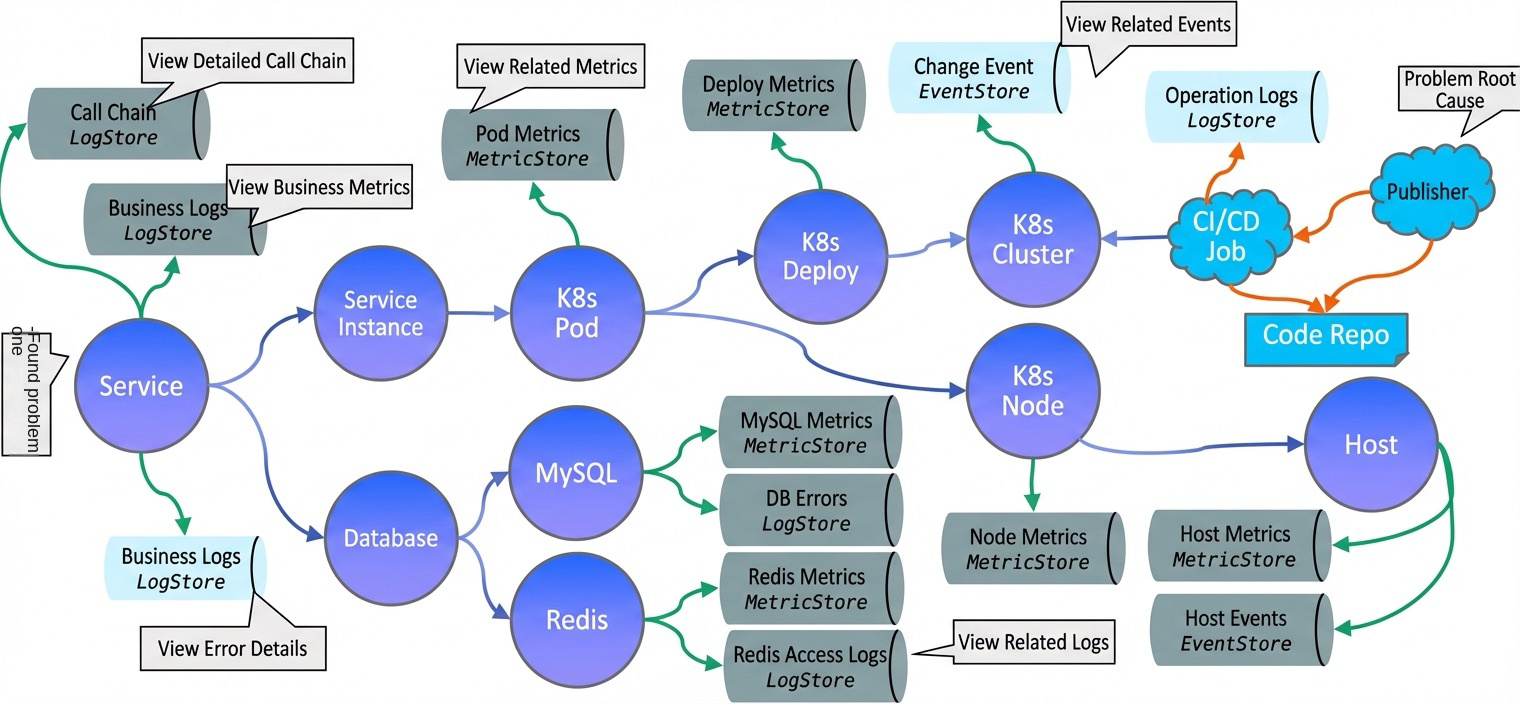

Alibaba Cloud UModel emerged precisely to solve these industry pain points systematically. Based on the underlying logic of ontology, which prioritizes behavior and puts relationships at the core, UModel creates a universal, unified modeling framework for the observability domain. In essence, it draws a complete and unambiguous cognitive map of the digital world for complex, heterogeneous IT systems, and turns ontology from an abstract theory into a practical tool that O&M engineers have access to, know how to use, and can afford. By design, UModel is not only an abstraction of data but a complete system that integrates data, knowledge, and actions.

UModel builds a complete product system around four core dimensions. Each dimension both aligns with the native ideas of ontology and gives UModel a differentiated advantage against the pain points of the observability domain. This sets UModel apart from general-purpose ontology platforms such as Palantir and from traditional observability and monitoring tools:

● Standardized semantic definitions to solve the core pain points of data silos and semantic fragmentation

With the native ideas of ontology at its core, UModel provides unified, unambiguous, standardized definitions for all entities, relationships, and business rules in the O&M world. This allows O&M engineers, applications, and LLMs to form a consistent understanding of observability data and solves semantic inconsistency at the root. Unlike general-purpose platforms, which set a high threshold by requiring users to build domain models from scratch, UModel is deeply optimized for IT O&M and cloud resource management scenarios. It has built-in, mature domain ontology libraries and standardized modeling templates that cover scenarios such as infrastructure, middleware, application performance, and Alibaba Cloud services. Enterprises do not need to build from scratch and can adapt their core scenarios out of the box.

● An end-to-end closed loop to achieve complete implementation from data to actions

Based on the graph model, UModel closes the complete "data-knowledge-action" loop. It deeply connects the underlying multi-source observability data, expert knowledge in the O&M domain, and automated remediation actions, and achieves end-to-end integration from data observation and root cause analysis to decision-making and remediation, rather than the static data storage and display of traditional tools. At the same time, as the core foundation of Cloud Monitor 2.0, UModel connects natively to full-stack observability products such as Alibaba Cloud Simple Log Service (SLS) and Application Real-Time Monitoring Service (ARMS). It provides one-stop integration of all observability data, including metrics, logs, traces, and changes. Enterprises do not need to perform complex system integration or custom development, which significantly reduces implementation costs.

● Making implicit experience explicit to achieve standardized inheritance of enterprise O&M capabilities

Closely following the core definition of "rules and constraints" in ontology, UModel breaks down the implicit experience that O&M engineers accumulate in fault judgment, root cause analysis, and emergency response into a standardized, configurable, and reusable rule system. These rules are captured in the system, which converts personal experience into digital knowledge assets that the enterprise can pass on. Unlike the Palantir approach, which relies heavily on professional teams to customize rules, UModel relies on visual modeling tools and standardized modeling processes to remove the technical barriers to capturing rules. O&M engineers do not need to master complex ontology theory to break down their experience and configure models on their own, which makes core capabilities broadly reusable.

● LLM-native integration to achieve accessible AIOps with bidirectional empowerment

UModel uses a unified ontology model to provide LLMs with reliable domain knowledge constraints and a logical framework, which addresses at the root the problem of LLMs hallucinating in vertical O&M scenarios. At the same time, by leveraging the natural language understanding and generation capabilities of LLMs, UModel significantly lowers the technical threshold for ontology modeling and O&M operations, so that ontology models and LLMs empower each other. This is also the core advantage that distinguishes UModel from traditional tools. Traditional tools can only connect to LLMs in a simple way, whereas UModel was designed from the beginning with native integration between the ontology model and the Qwen LLM. Users can complete fault localization, root cause analysis, and model configuration through everyday conversation, without memorizing complex query syntax or operation instructions. This makes "conversational O&M" broadly accessible.
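The third dimension above, turning implicit experience into configurable rules, can be illustrated with a small sketch. It is not UModel's rule format or API. The `Rule` class, the metric names, and every threshold below are hypothetical and serve only to show the general pattern of expressing an engineer's judgment as data that a system can evaluate.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    """One piece of expert judgment, expressed as data plus a condition."""
    name: str
    entity_type: str
    condition: Callable[[Dict[str, float]], bool]
    advice: str


# Hypothetical rules; metric names and thresholds are assumptions.
RULES: List[Rule] = [
    Rule(
        name="pod_memory_pressure",
        entity_type="pod",
        condition=lambda m: m.get("memory_usage_ratio", 0.0) > 0.9
        and m.get("restart_count", 0) >= 3,
        advice="Likely out-of-memory restarts; check memory limits first.",
    ),
    Rule(
        name="host_disk_nearly_full",
        entity_type="host",
        condition=lambda m: m.get("disk_usage_ratio", 0.0) > 0.95,
        advice="Disk almost full; clean up logs or extend the volume.",
    ),
]


def evaluate(entity_type: str, metrics: Dict[str, float]) -> List[str]:
    """Return the names of all rules for this entity type whose condition holds."""
    return [
        rule.name
        for rule in RULES
        if rule.entity_type == entity_type and rule.condition(metrics)
    ]
```

Calling `evaluate("pod", {"memory_usage_ratio": 0.95, "restart_count": 4})` reports the memory pressure rule, while the same metrics with only one restart report nothing. Because the rules are plain data, a newcomer can read, review, and extend them without having lived through the incidents that produced them.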

In terms of architecture, UModel adopts a directed graph structure of "nodes + edges" to describe the entire IT world. Each architecture component maps one-to-one to a core concept of ontology.
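A directed graph of "nodes + edges" can be sketched in a few lines of Python. This is an illustrative toy, not UModel's actual data model or API: the entity names, the relation names, and the `dependencies` helper are all assumptions made for the example. Nodes stand for entities, edges stand for relationships, and a traversal shows how the structure supports questions such as "what does this service depend on?".

```python
from collections import deque
from typing import Dict, List, Tuple

# Nodes: entity id -> entity type (hypothetical entities).
nodes: Dict[str, str] = {
    "checkout": "service",
    "payment": "service",
    "pod-a": "pod",
    "pod-b": "pod",
    "node-01": "host",
}

# Edges: (source, relation, target), read as "source <relation> target".
edges: List[Tuple[str, str, str]] = [
    ("checkout", "calls", "payment"),
    ("checkout", "is_backed_by", "pod-a"),
    ("payment", "is_backed_by", "pod-b"),
    ("pod-a", "runs_on", "node-01"),
    ("pod-b", "runs_on", "node-01"),
]


def dependencies(start: str) -> List[str]:
    """Breadth-first walk along outgoing edges: everything `start` depends on."""
    if start not in nodes:
        raise KeyError(f"unknown entity: {start}")
    seen = {start}
    order: List[str] = []
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for source, _relation, target in edges:
            if source == current and target not in seen:
                seen.add(target)
                order.append(target)
                queue.append(target)
    return order
```

Here `dependencies("checkout")` walks from the service through its downstream service and pods to the shared host, which is the kind of path an engineer follows by hand when narrowing down a fault. A real system would index edges by source rather than scanning the full list, but the linear scan keeps the sketch short.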

At the same time, implementing UModel essentially means breaking down the philosophical ideas of ontology into standardized processes that can be executed and replicated in O&M scenarios. Through five core actions, it helps enterprises convert scattered, tacit O&M experience into standardized ontology models. Each step of the end-to-end process aligns closely with the core logic of ontology.

Based on this standardized methodology, we are also actively exploring further implementations of UModel in industries such as the Internet, finance, industrial manufacturing, and government affairs. The goal is to form replicable solutions adapted to the characteristics of each industry and to validate the broad value of ontology in the observability domain.

The Internet industry generally adopts distributed microservices architectures. A core business trace often spans tens to hundreds of microservices, with tens of thousands to hundreds of thousands of container instances running online. The industry generally faces three core challenges. First, observability data is scattered across multiple monitoring tools. Metric, trace, log, and change data lack unified semantic definitions, which creates severe data silos. When online faults occur, O&M engineers have to troubleshoot repeatedly across multiple platforms, so fault localization is extremely inefficient. Second, core fault handling and root cause analysis experience is concentrated in the hands of senior O&M engineers. New team members take a long time to train, and experience is difficult to standardize, accumulate, and reuse. Third, alert storms triggered by massive numbers of alerts are a prominent problem. Useful alerts are drowned out by noise, and fault response efficiency drops significantly.

The finance industry is at a critical stage of its IT application innovation transformation. IT architectures are moving from traditional centralized architectures to distributed hybrid cloud architectures. Hundreds of business systems, such as core transaction processing, credit, and wealth management, run simultaneously in IT application innovation environments and traditional environments. The core pain points of the industry are as follows. First, observability data is scattered across monitoring tools from multiple vendors and of multiple types. Data semantics are disconnected across environments, which makes troubleshooting extremely difficult. Second, O&M teams are small and senior O&M engineers are scarce. Fault handling depends heavily on experts, and their core experience cannot cover all business systems. Third, the industry faces strict financial regulatory compliance requirements. It needs end-to-end traceability and auditability of O&M operations and transaction traces, and traditional O&M practices struggle to meet these rigid requirements.

The discrete manufacturing and process manufacturing industries are accelerating their transformation toward the Industrial Internet. A single production line is often equipped with thousands of industrial devices, and the automation rate of production lines continues to rise. The core pain points of the industry are as follows. First, the operational data of production line devices, manufacturing execution system (MES) data, and IT O&M data are isolated from one another and lack unified semantic definitions, so operational technology (OT) data and IT data cannot be analyzed together. Second, device fault handling depends heavily on the personal experience of on-site maintenance personnel. Fault handling takes a long time, which easily causes unplanned production line downtime. Third, core experience in device maintenance and process optimization is scattered across production bases. When new bases are built or new employees are trained, the experience cannot be reused quickly. Fourth, a standardized predictive maintenance system is lacking. Sudden device faults occur frequently, and production continuity is hard to guarantee.

In addition to the standardized O&M scenarios in these core industries, UModel explores and implements various innovative scenarios based on the underlying philosophy of ontology, which prioritizes behavior and centers on relationships. This further extends the reach of ontology in the observability domain.

One such scenario is conversational O&M with native LLM integration. Based on the unified domain ontology model built by UModel, the LLM can accurately understand the professional terms, entity relationships, and business rules of O&M scenarios, which fundamentally reduces LLM hallucination in the O&M vertical. Users can query the running state of core systems, locate the root cause of faults, and configure O&M policies in natural language. They do not need to master professional query syntax or technical knowledge, which lowers the technical threshold for O&M operations.

Another scenario is hybrid cloud and multicloud deployment, which is now common among enterprises. UModel overcomes the limited cross-environment adaptability of traditional monitoring tools and achieves unified ontology modeling across cloud vendors, deployment environments, and technology stacks. A single ontology model is compatible with the observability data of public clouds, private clouds, and traditional self-managed data centers. Enterprises do not need to build separate monitoring or O&M systems for different environments, which reduces O&M complexity and management costs under hybrid cloud architectures.
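The idea of letting an ontology constrain an LLM in conversational O&M can be sketched as a validation step. The example below calls no model and does not describe how UModel or Qwen are integrated. The claims are hand-written stand-ins for statements parsed from model output, and the entity types, relation names, and `validate` helper are hypothetical.

```python
from typing import Optional, Set, Tuple

Claim = Tuple[str, str, str]  # (source_type, relation, target_type)

# A tiny hypothetical ontology: known entity types and allowed relations.
ENTITY_TYPES: Set[str] = {"service", "pod", "host"}
ALLOWED_RELATIONS: Set[Claim] = {
    ("service", "calls", "service"),
    ("service", "is_backed_by", "pod"),
    ("pod", "runs_on", "host"),
}


def validate(claim: Claim) -> Optional[str]:
    """Return None if the claim fits the ontology, else a reason for rejection."""
    source, relation, target = claim
    for entity_type in (source, target):
        if entity_type not in ENTITY_TYPES:
            return f"unknown entity type: {entity_type}"
    if claim not in ALLOWED_RELATIONS:
        return f"relation not allowed: {source} {relation} {target}"
    return None
```

A claim such as `("pod", "runs_on", "host")` passes, while `("host", "runs_on", "pod")` or a claim that mentions an undefined `database` type is rejected with a reason. In a conversational flow, a rejected claim could be sent back to the model for correction or withheld from the user, so that answers stay within what the ontology defines.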

More than two thousand years ago, Aristotle wrote "Metaphysics" to find a unified and unambiguous explanation for a chaotic world. Today, we use UModel to build ontology models for IT systems, aiming to draw a map of the complex digital world that can be understood and used. Now that LLMs are spreading rapidly, we do not lack AI that can generate content. What we lack is a knowledge framework that can rein in AI, make it truly understand the business, and keep it from talking nonsense. Ontology is the core of this framework. The combination of LLMs and UModel essentially equips AI with a "business brain", transforming it from merely "eloquent" into something that can do the work and do it accurately. This is perhaps the most appealing aspect of ontology. From questioning the origin of the world to locating server faults, ontology has spanned more than two thousand years. Only the object of study has changed. What remains unchanged is humanity's persistence in "making knowledge clear and passing it on". To this day, ontology still provides one of the most fundamental driving forces of our digital age.

