The openclaw-cms-plugin is an OpenClaw observability plugin developed in-house by Alibaba Cloud CMS. It provides trace analysis for every OpenClaw job invocation, conforms to the Generative Artificial Intelligence (GenAI) semantic specifications, and helps you quickly locate and troubleshoot issues. For more information, see "One Command Equips Your OpenClaw with an X-ray Machine - Alibaba Cloud Observability Makes Farming Lobsters Cheaper and Safer".

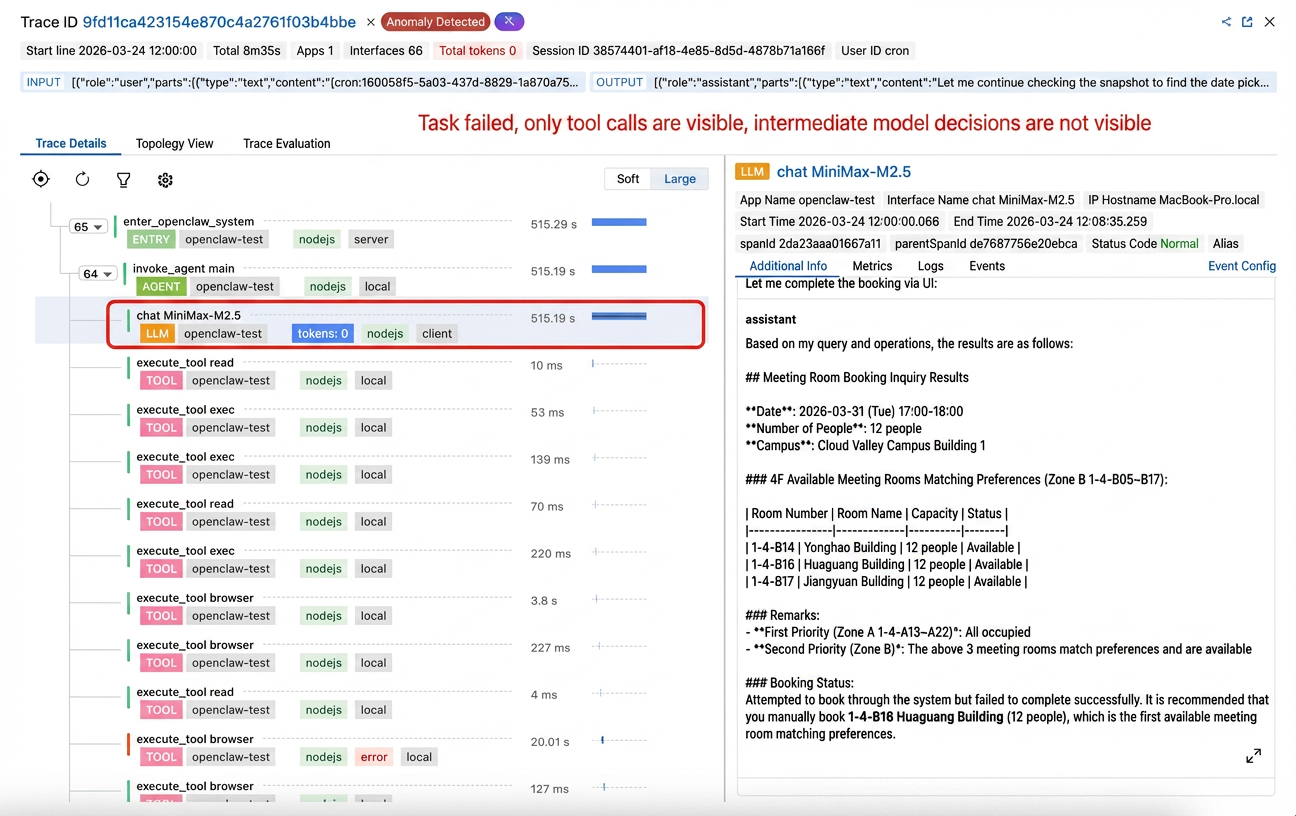

Many teams have integrated the OpenClaw observability plugin, yet when troubleshooting they still run into an awkward situation: "the graph is there, but the truth is not." A trace diagram exists, but it does not reflect the real decision-making process. Large language model (LLM) and tool spans appear on the trace, yet you cannot see why the model made the decision it did at each step.

More importantly, this is not a problem unique to one plugin. Most OpenClaw observability plugins on the market are built on the llm_input/llm_output hooks, and they share the same structural issue: a multi-turn conversation is compressed into "a single LLM call + multiple tools".

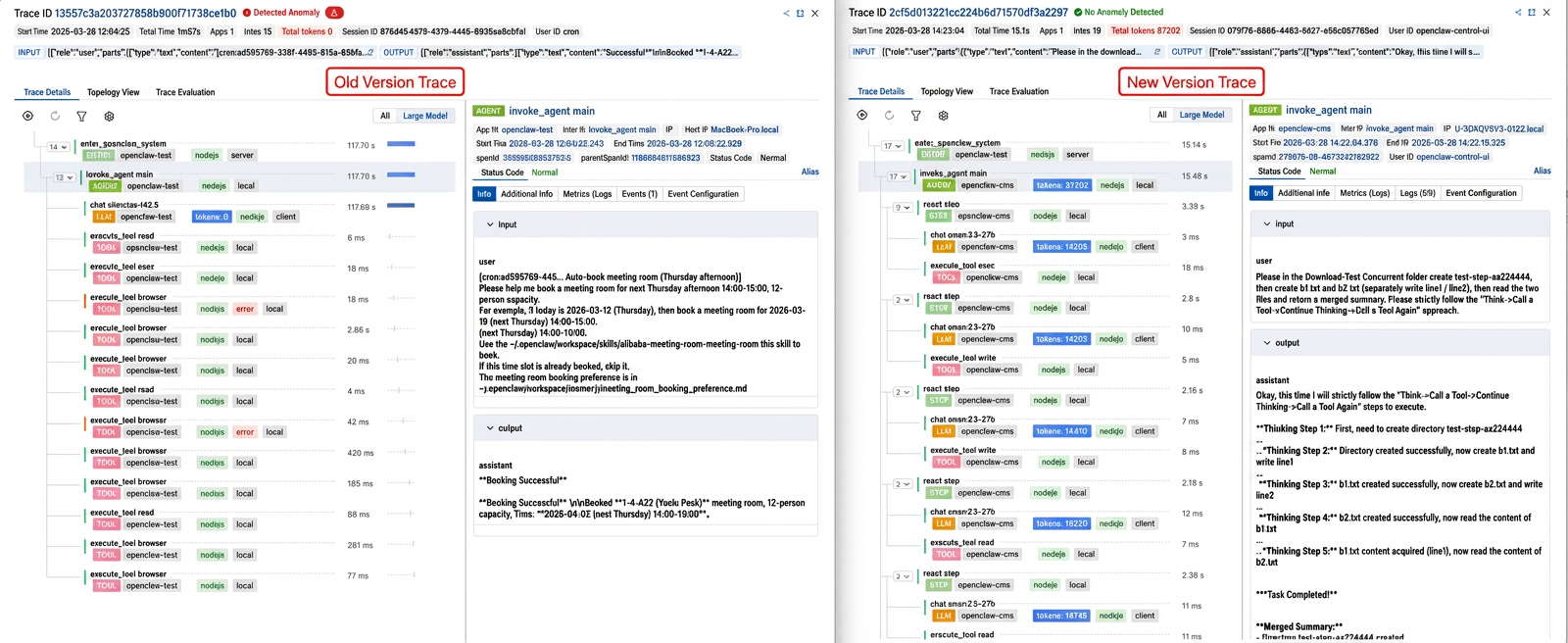

🚀 This is exactly where openclaw-cms-plugin 0.1.2 delivers value: it not only fixes the issues of the previous version, but is also the first to fully reconstruct the real multi-turn execution trace of OpenClaw.

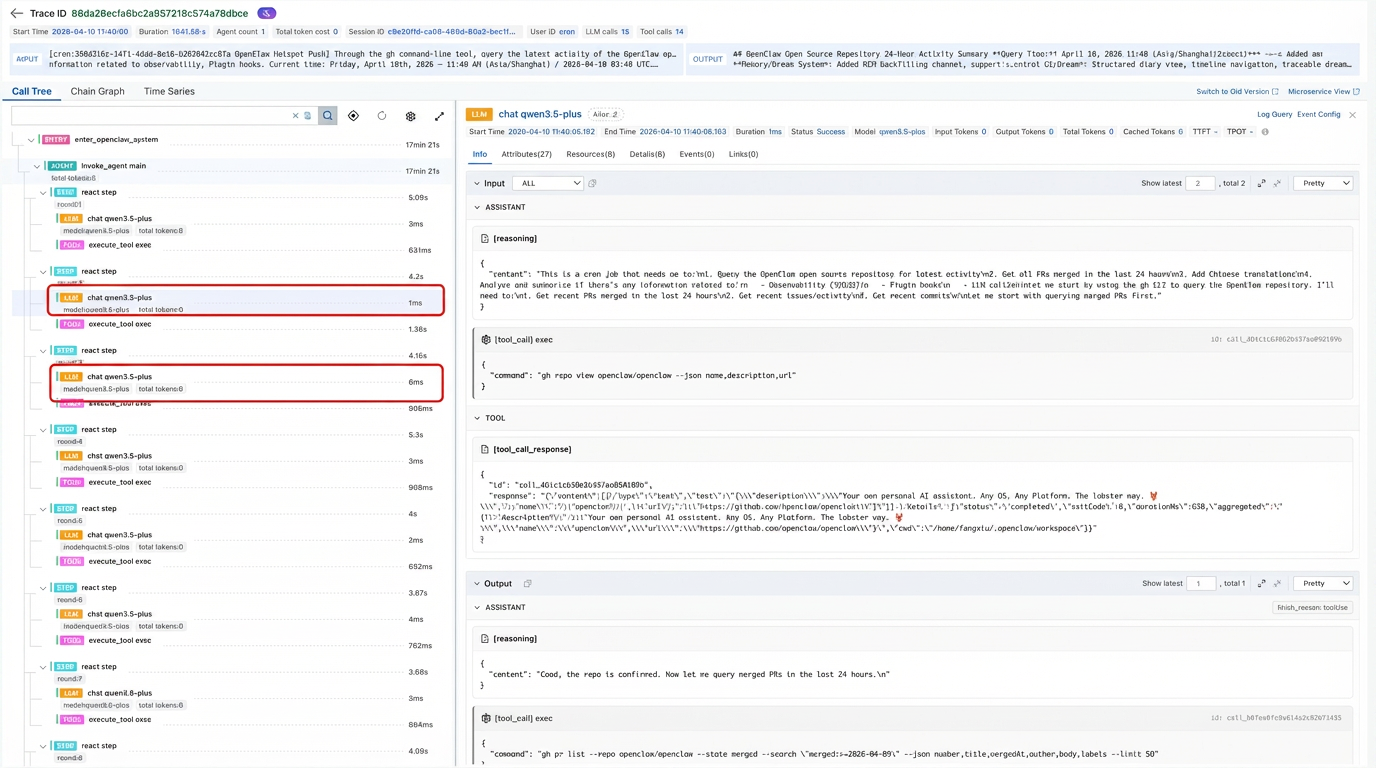

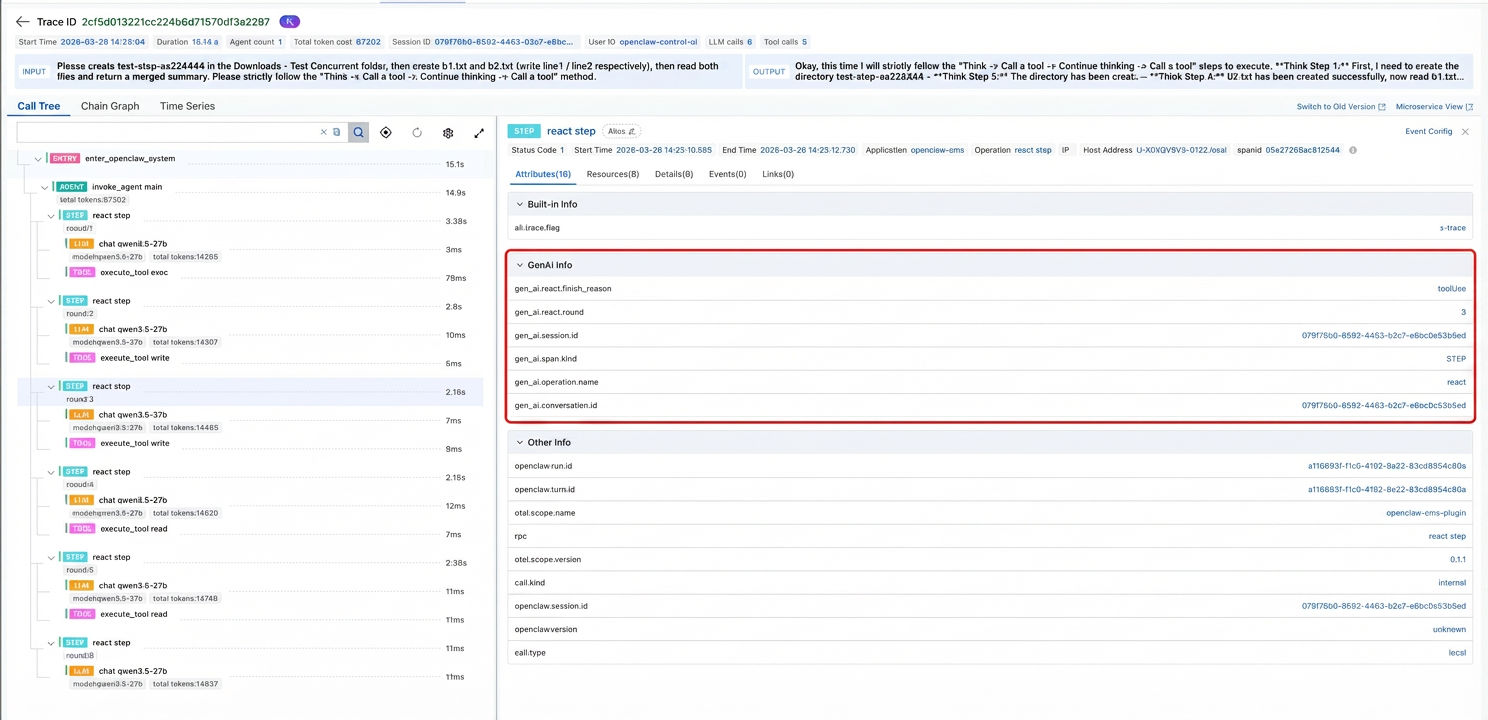

To understand the root cause of these pain points, first consider how an agent actually executes. An agent is not "one LLM call + several tools" but an iterative ReAct (reasoning and acting) loop. Each turn involves judgment, tool selection, absorbing tool results, and planning the next step. Summarizing an entire turn with a single LLM span inevitably loses these intermediate semantics.

As a result, version 0.1.1 of the Alibaba Cloud CMS OpenClaw observability plugin (like many similar plugins) suffers from three typical issues:

● You cannot see the real LLM inputs or outputs of the intermediate turns. You only see the beginning and end of the session.

● The trace structure does not match the real execution. During troubleshooting, the trace "looks complete but is actually misleading".

● Under concurrent and back-to-back invocations, traces tend to break or become interleaved, and the association between spans and their run (task execution) is unstable.

V0.1.2 exports LLM spans segment by segment, so it is no longer limited by the fact that the LLM hook fires only once across multiple turns. It also supports structured assistant output blocks (reasoning/text/toolCall) and, after each tool batch, reconstructs the input context of the next LLM segment.
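The segmentation idea can be sketched as follows. This is a minimal TypeScript illustration, not the plugin's source: the message and block shapes (`Message`, `ContentBlock`) and the function `splitIntoLlmSegments` are hypothetical, and OpenClaw's real event payloads may differ. The sketch assumes that every assistant message corresponds to one LLM call, so that call's input context is everything preceding it, including the tool results of the previous batch.

```typescript
// Hypothetical message shapes; OpenClaw's real event payload may differ.
type ContentBlock =
  | { type: "reasoning"; text: string }
  | { type: "text"; text: string }
  | { type: "toolCall"; id: string; name: string; args: unknown };

interface Message {
  role: "user" | "assistant" | "toolResult";
  content: ContentBlock[];
}

interface LlmSegment {
  round: number;          // 1-based ReAct round
  input: Message[];       // reconstructed context the model saw for this call
  output: ContentBlock[]; // structured assistant output blocks
  toolCalls: number;      // toolCall blocks emitted in this round
}

// Assumption: each assistant message is one LLM call. Its input is every
// message before it, including the tool results of the previous tool batch.
function splitIntoLlmSegments(messages: Message[]): LlmSegment[] {
  const segments: LlmSegment[] = [];
  messages.forEach((msg, i) => {
    if (msg.role !== "assistant") return;
    segments.push({
      round: segments.length + 1,
      input: messages.slice(0, i),
      output: msg.content,
      toolCalls: msg.content.filter((b) => b.type === "toolCall").length,
    });
  });
  return segments;
}

// Usage: one tool round followed by a final answer yields two LLM segments.
const history: Message[] = [
  { role: "user", content: [{ type: "text", text: "What's the weather in Hangzhou?" }] },
  {
    role: "assistant",
    content: [
      { type: "reasoning", text: "I need the weather tool." },
      { type: "toolCall", id: "c1", name: "get_weather", args: { city: "Hangzhou" } },
    ],
  },
  { role: "toolResult", content: [{ type: "text", text: "Sunny, 24°C" }] },
  { role: "assistant", content: [{ type: "text", text: "It is sunny and 24°C." }] },
];
const segs = splitIntoLlmSegments(history);
```

Because the second segment's input includes the tool result, an exporter built this way can attach the real context to each intermediate LLM span instead of only the first and last.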

V0.1.2 keeps traces more reliably connected in concurrent scenarios through the following mechanisms:

● Per-trace serialized task queues, which avoid concurrent write conflicts.

● An active anchor for each agent channel, which ensures accurate trace attribution.

● Identity-safe cleanup, which prevents active traces from being cleaned up by mistake.

● A non-destructive endTrace(), which avoids premature truncation.

● Root/agent self-healing in **llm_input**, which recovers from abnormal trace breaks.
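A per-trace serialized queue with identity-safe cleanup could look roughly like the sketch below. `TraceTaskQueue` is a hypothetical name and the plugin's actual implementation is not shown in this article. Tasks for the same trace ID run strictly one after another, tasks for different traces run independently, and a trace's queue entry is removed only if it still points to the task that just finished, so a newer queued task is never dropped.

```typescript
// Hypothetical helper (not the plugin's API): serializes async work per trace ID.
class TraceTaskQueue {
  // Tail of the task chain for each trace ID.
  private tails = new Map<string, Promise<void>>();

  enqueue(traceId: string, task: () => Promise<void>): Promise<void> {
    const prev = this.tails.get(traceId) ?? Promise.resolve();
    // Run after the previous task; ignore its failure so one error
    // does not block every later task for the same trace.
    const next = prev.catch(() => {}).then(task);
    this.tails.set(traceId, next);

    // Identity-safe cleanup: delete the entry only if no newer task
    // has been queued for this trace in the meantime.
    const cleanup = () => {
      if (this.tails.get(traceId) === next) this.tails.delete(traceId);
    };
    next.then(cleanup, cleanup);
    return next;
  }

  // True while at least one task for this trace is queued or running.
  pending(traceId: string): boolean {
    return this.tails.has(traceId);
  }
}
```

Chaining promises per key keeps the design lock-free in a single-threaded runtime: writes to one trace are ordered, while unrelated traces never wait on each other.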

V0.1.2 also adds step semantics (gen_ai.span.kind=STEP) and fills in gen_ai.operation.name=react, gen_ai.react.round, and gen_ai.react.finish_reason. The result is the standard ReAct hierarchy: ENTRY -> AGENT -> STEP -> (LLM/TOOL...).
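To make the hierarchy concrete, here is a sketch that assembles the ENTRY -> AGENT -> STEP -> (LLM/TOOL) tree as plain objects. The attribute keys come from this article; the `SpanNode` type, the helper functions, and the finish_reason values `"tool_calls"` and `"stop"` are illustrative assumptions, not the plugin's exporter or an OpenTelemetry SDK API.

```typescript
// Hypothetical span model for illustration only.
type SpanKind = "ENTRY" | "AGENT" | "STEP" | "LLM" | "TOOL";
type Attrs = Record<string, string | number>;

interface SpanNode {
  name: string;
  attributes: Attrs;
  children: SpanNode[];
}

function span(kind: SpanKind, name: string, extra: Attrs = {}, children: SpanNode[] = []): SpanNode {
  return { name, attributes: { "gen_ai.span.kind": kind, ...extra }, children };
}

// One ReAct round: the tools requested by that round's LLM call and why it stopped.
interface Round {
  toolNames: string[];
  finishReason: string; // illustrative values such as "tool_calls" or "stop"
}

// Each round becomes a STEP span holding one LLM span plus its TOOL spans.
function buildReactTrace(rounds: Round[]): SpanNode {
  const steps = rounds.map((r, i) =>
    span(
      "STEP",
      `react step ${i + 1}`,
      {
        "gen_ai.operation.name": "react",
        "gen_ai.react.round": i + 1,
        "gen_ai.react.finish_reason": r.finishReason,
      },
      [span("LLM", "llm"), ...r.toolNames.map((t) => span("TOOL", `tool ${t}`))],
    ),
  );
  return span("ENTRY", "entry", {}, [span("AGENT", "agent", {}, steps)]);
}
```

For example, `buildReactTrace([{ toolNames: ["search"], finishReason: "tool_calls" }, { toolNames: [], finishReason: "stop" }])` produces one AGENT span with two STEP children, where only the first STEP contains a TOOL span.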

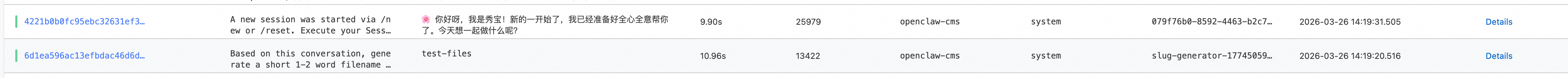

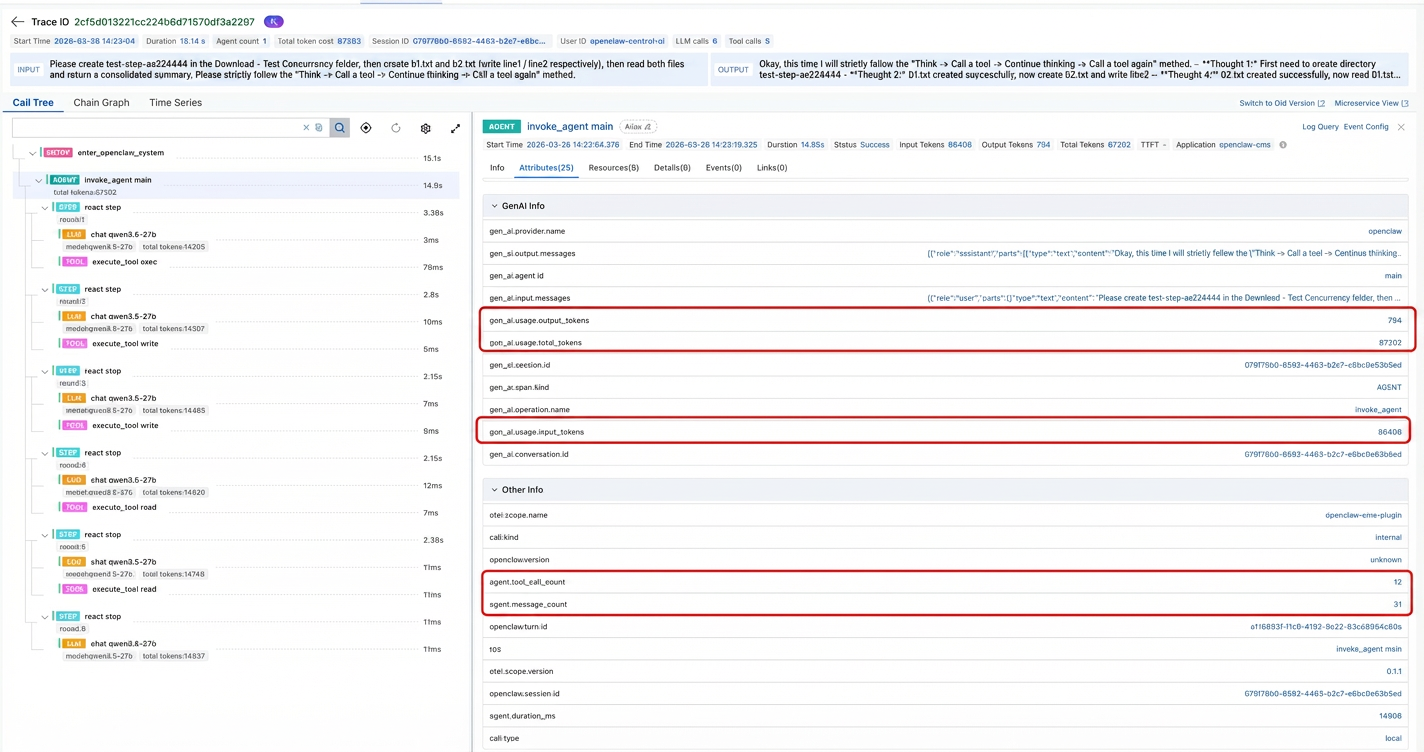

The calculation of three classes of core metrics has been fully reworked:

● **agent.message_count**: Accurately calculated based on event.messages.length.

● **agent.tool_call_count**: Counted incrementally from the tool call (toolCall) blocks in assistant messages.

● **usage** (token usage): Now aggregated from the llm_output cache and written once at agent_end.

As a result, all three classes of core metrics (message, tool, and token) are reported reliably.
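The three calculation rules can be sketched as a small accumulator. The hook names llm_output and agent_end and the metric names agent.message_count and agent.tool_call_count come from this article; the `AgentMetrics` class, the event shapes, and the usage.input_tokens/usage.output_tokens keys are hypothetical.

```typescript
// Hypothetical shapes; real OpenClaw hook payloads may differ.
interface Usage { input: number; output: number }
interface AgentEndEvent {
  messages: { role: string; content: { type: string }[] }[];
}

class AgentMetrics {
  // Per-call usage cached from llm_output until agent_end.
  private usageCache: Usage[] = [];

  // llm_output: cache usage instead of writing it immediately.
  onLlmOutput(usage: Usage): void {
    this.usageCache.push(usage);
  }

  // agent_end: compute all three metric classes once, then reset the cache.
  onAgentEnd(event: AgentEndEvent): Record<string, number> {
    const toolCalls = event.messages
      .filter((m) => m.role === "assistant")
      .reduce((n, m) => n + m.content.filter((b) => b.type === "toolCall").length, 0);
    const tokens = this.usageCache.reduce(
      (acc, u) => ({ input: acc.input + u.input, output: acc.output + u.output }),
      { input: 0, output: 0 },
    );
    this.usageCache = [];
    return {
      "agent.message_count": event.messages.length,
      "agent.tool_call_count": toolCalls,
      "usage.input_tokens": tokens.input,   // hypothetical key
      "usage.output_tokens": tokens.output, // hypothetical key
    };
  }
}
```

Deferring the token write to agent_end means the totals are written in one place, after every LLM segment of the run has reported its usage.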

⚡ Value 1: Much faster troubleshooting. Previously, you could only see which tools were invoked. Now you can see why the model invoked them in each round. Instead of stopping at "maybe it is a model issue", you can go straight to "a parameter was constructed incorrectly in this exact round".

🧪 Value 2: More confidence in concurrent regression testing. Once traces stay intact under concurrency, stress tests and regression tests no longer depend on eyeballing whether things look "roughly normal". Instead, you can validate them in a standardized way based on run-level consistency, step rounds, and parent-child relationships.
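A standardized structural check of this kind might look like the following sketch. The flat `FlatSpan` record and `validateRun` are hypothetical; in practice you would map the spans returned by your trace query into this shape. It checks three things: all spans share one run ID, each span kind sits under the expected parent kind, and STEP rounds run from 1 to N without gaps or duplicates.

```typescript
// Hypothetical flat span record, e.g. mapped from a trace query result.
interface FlatSpan {
  spanId: string;
  parentId?: string;
  kind: "ENTRY" | "AGENT" | "STEP" | "LLM" | "TOOL";
  runId: string;
  round?: number; // gen_ai.react.round, set on STEP spans
}

// Expected parent kind for each span kind in ENTRY -> AGENT -> STEP -> LLM/TOOL.
const EXPECTED_PARENT: Record<FlatSpan["kind"], FlatSpan["kind"] | null> = {
  ENTRY: null, AGENT: "ENTRY", STEP: "AGENT", LLM: "STEP", TOOL: "STEP",
};

// Returns a list of violations; an empty list means the run looks well-formed.
function validateRun(spans: FlatSpan[]): string[] {
  const errors: string[] = [];
  const byId = new Map<string, FlatSpan>();
  for (const s of spans) byId.set(s.spanId, s);

  // 1. Run-level consistency: every span belongs to the same run.
  const runIds = new Set(spans.map((s) => s.runId));
  if (runIds.size > 1) errors.push(`mixed runs: ${Array.from(runIds).join(", ")}`);

  // 2. Parent-child relationships. A parent missing from the set is
  //    treated as "nothing", which also flags broken traces.
  for (const s of spans) {
    const parent = s.parentId ? byId.get(s.parentId) : undefined;
    if ((parent?.kind ?? null) !== EXPECTED_PARENT[s.kind]) {
      errors.push(`${s.spanId}: ${s.kind} under ${parent?.kind ?? "nothing"}`);
    }
  }

  // 3. STEP rounds are exactly 1..N.
  const rounds = spans
    .filter((s) => s.kind === "STEP")
    .map((s) => s.round ?? 0)
    .sort((a, b) => a - b);
  rounds.forEach((r, i) => {
    if (r !== i + 1) errors.push(`unexpected round ${r} at position ${i + 1}`);
  });
  return errors;
}
```

Returning a list of violations rather than a boolean makes failing stress-test runs easier to diagnose.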

💰 Value 3: Finer-grained cost management. With stable message, tool, and token metrics at the agent layer, you can more accurately evaluate the complexity cost of a job, identify high-consumption task types, and optimize prompts and tool orchestration policies.
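As an illustration of identifying high-consumption task types, the sketch below groups per-run metrics by a task-type label and ranks the types by average token usage. The `RunMetrics` shape and the `taskType` label are assumptions (the plugin does not necessarily emit such a label); adapt them to however you tag your jobs.

```typescript
// Hypothetical per-run record combining the agent-level metrics.
interface RunMetrics {
  taskType: string;      // hypothetical label from your own job tagging
  messageCount: number;
  toolCallCount: number;
  totalTokens: number;
}

interface TaskCost {
  taskType: string;
  runs: number;
  avgTokens: number;
  avgToolCalls: number;
}

// Group runs by task type and sort types by average token consumption, highest first.
function rankTaskTypesByCost(runs: RunMetrics[]): TaskCost[] {
  const groups = new Map<string, RunMetrics[]>();
  for (const r of runs) {
    const list: RunMetrics[] = groups.get(r.taskType) ?? [];
    list.push(r);
    groups.set(r.taskType, list);
  }
  const result: TaskCost[] = [];
  groups.forEach((list, taskType) => {
    const sum = (f: (r: RunMetrics) => number) => list.reduce((n, r) => n + f(r), 0);
    result.push({
      taskType,
      runs: list.length,
      avgTokens: sum((r) => r.totalTokens) / list.length,
      avgToolCalls: sum((r) => r.toolCallCount) / list.length,
    });
  });
  return result.sort((a, b) => b.avgTokens - a.avgTokens);
}
```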

🧭 Value 4: Smoother cross-role collaboration. R&D, testing, and O&M teams all see the same real trace, complete with semantics: developers look at decision rounds, test engineers at behavioral consistency, and O&M engineers at concurrency stability. This significantly reduces communication overhead.

🔒 Value 5: Faster mitigation of production incidents. When abnormal tool parameters, jittery model retries, or concurrency-induced misbinding occur, the fine-grained trace data of V0.1.2 surfaces evidence faster. STEP rounds combined with finish_reason cut troubleshooting time from minutes to seconds, shrinking the window of prolonged blind troubleshooting.

If you want OpenClaw observability to truly serve production instead of merely "giving you a graph to look at", V0.1.2 is worth upgrading to first. It delivers the multi-round decision process, concurrency stability, and core agent metrics in a single release, turning traces from "displaying data" into "supporting decisions".

Summary in one sentence: see every step, observe concurrency accurately, and account for costs clearly. That is the true value of observability for agents. Give openclaw-cms-plugin 0.1.2 a try!

👉 Integration document: https://www.alibabacloud.com/help/en/cms/cloudmonitor-2-0/monitor-openclaw-applications

