By Kenmeng

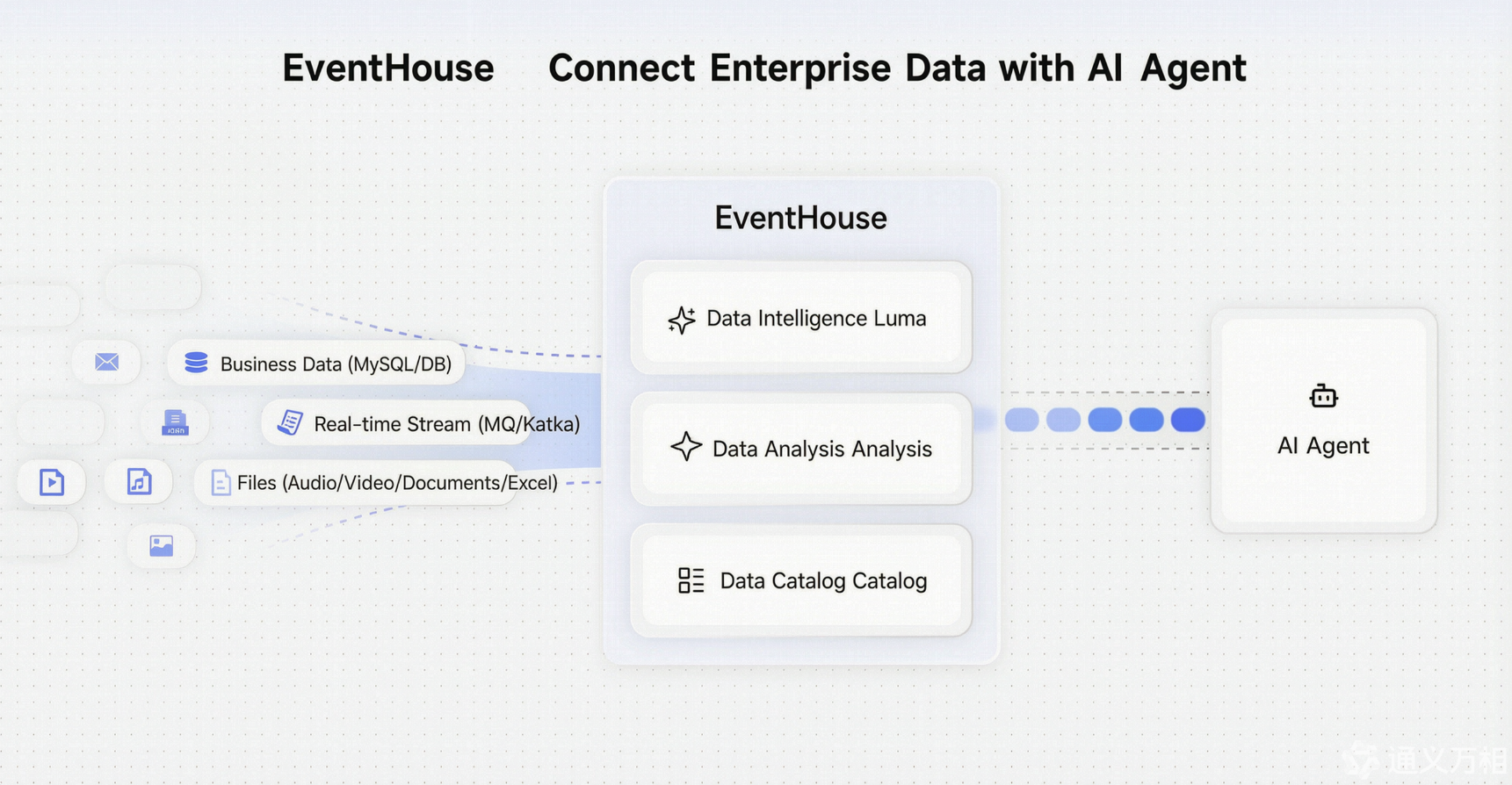

EventHouse is an AI-native data platform launched by Alibaba Cloud EventBridge. This article analyzes its core capabilities and its positioning in the AI era, and explains how EventHouse serves as an AI data foundation that helps enterprises extract value from their data faster and drive business growth in a rapidly changing market.

2025 is widely regarded as the first year of commercial AI agents. In its FutureScape 2026 report, IDC pointed out that enterprise data platforms are moving from "human-centric query-style access" to "agent-centric programmatic access." In other words, data consumers are no longer just analysts who write SQL, but also AI agents that can retrieve data and perform analysis on their own.

However, most enterprise data infrastructures are not ready for this.

Event data, such as user behavior, transaction flows, system status, and IoT telemetry, is an enterprise's most valuable real-time information stream, but also its most easily wasted data asset. EventBus and EventStreaming solve event routing and distribution, but their job usually ends once an event is consumed. Subsequent storage, governance, and in-depth analysis have long remained fragmented: data engineers must manually stitch data together across multiple systems, ETL pipelines introduce T+1 latency, and AI agents have no way to reach these scattered data sources.

This is exactly the problem EventHouse aims to solve: to give agents a unified data integration bridge and build an AI-oriented data foundation.

In the wave of digital transformation, enterprises have accumulated massive amounts of event data that records valuable information such as user behavior, system status, and transaction flows. For a long time, however, much of this data has been shelved, turning into "dark data" that is difficult to use.

The traditional event bus (EventBus) mainly solves the problem of routing and distributing events, that is, ensuring that each event is delivered accurately from producer to consumer. Once an event is consumed, however, its subsequent storage, governance, and in-depth analysis are often ignored. This model wastes much of the data's value and creates the need for a new generation of data infrastructure.

EventHouse emerged in this context, marking the evolution of data infrastructure from a simple "data pipeline" into an "AI data foundation" that integrates storage, governance, and intelligent analysis.

On the one hand, EventHouse inherits the openness and flexibility of data lakes and can accommodate structured, semi-structured, and even unstructured data from sources such as Kafka, RocketMQ, and MySQL. On the other hand, it brings in the reliability and high performance of data warehouses, with enterprise-grade governance capabilities such as ACID (atomicity, consistency, isolation, durability) transactions, schema management, and permission control. Its core mission is to solve the three major problems of storing, governing, and intelligently analyzing event data, turning events that were once treated as finished after consumption into core assets that can be mined repeatedly and keep growing in value.

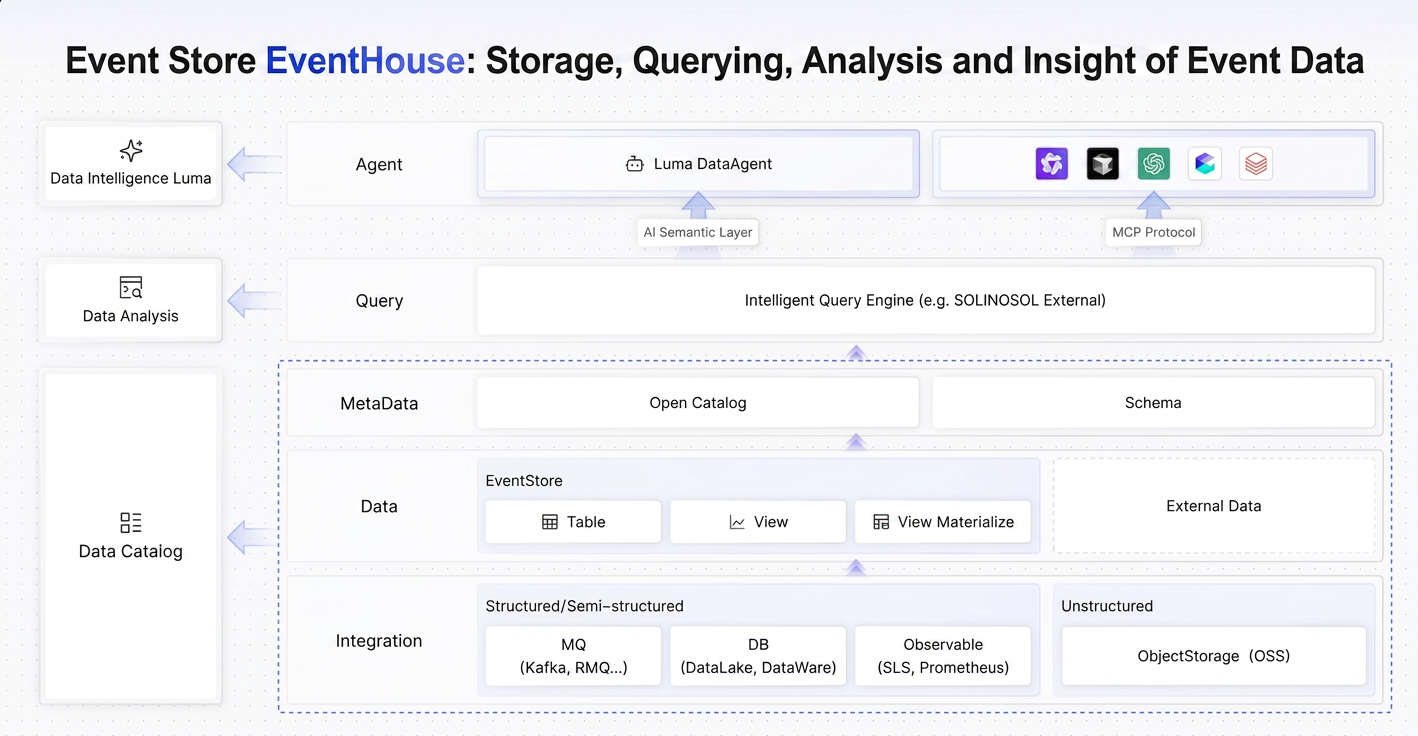

EventHouse is not an isolated storage engine but a complete system made of multiple decoupled layers. Its architecture draws on leading industry ideas such as Data Mesh and Lakehouse to build a data platform designed for the future.

The core architecture of EventHouse uses a layered, decoupled design with five clearly separated layers: the integration layer, the data layer, the metadata layer, the data query layer, and the intelligent analysis layer. Each layer is backed by specific core components, and together they form a closed loop of data processing and analysis. At the same time, each layer is relatively independent and can be used on its own or end to end.

The integration layer is the entry point of the entire system. It acts as a multi-purpose "translator" that connects seamlessly to message queues such as Kafka and RocketMQ, ApsaraDB RDS databases such as MySQL, and Object Storage Service (OSS), providing unified access to data of all types. Whatever form data takes when it is generated, it can be ingested into EventHouse for unified processing.
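As a rough illustration of what "unified access" can mean, the sketch below normalizes records from a message queue, a relational table, and a JSON-lines file in OSS into one common envelope. The `UnifiedEvent` type and the `from_*` helper functions are hypothetical and do not reflect EventHouse's actual connectors or record format.

```python
import json
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class UnifiedEvent:
    """Hypothetical common envelope; not EventHouse's real record format."""
    source: str              # connector type, e.g. "kafka", "mysql", "oss"
    origin: str              # topic, table, or object key the record came from
    payload: dict[str, Any]  # the record's fields


def from_kafka(topic: str, value: dict[str, Any]) -> UnifiedEvent:
    return UnifiedEvent("kafka", topic, dict(value))


def from_mysql_row(table: str, columns: list[str], row: tuple) -> UnifiedEvent:
    # Pair column names with row values so a database row looks like any other event.
    return UnifiedEvent("mysql", table, dict(zip(columns, row)))


def from_oss_json_line(object_key: str, line: str) -> UnifiedEvent:
    # Assumes one JSON object per line (JSON Lines) in the stored log file.
    return UnifiedEvent("oss", object_key, json.loads(line))
```

Once every source is mapped into the same shape, downstream storage and query code can treat a Kafka message, a MySQL row, and an OSS log line identically.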

At the heart of the data layer is EventStore, a storage format designed specifically for event streams. Unlike general-purpose file storage, EventStore applies columnar compression tailored to event data such as JSON, which significantly reduces storage costs; the estimated savings exceed 50% compared with traditional databases.
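Columnar formats save space largely because values of the same field are stored together, where they tend to repeat. The sketch below is a generic illustration and not EventStore's actual format. It shreds JSON events into per-field columns and dictionary-encodes a low-cardinality column so that each repeated string is stored only once.

```python
import json
from typing import Any


def shred(events: list[str]) -> dict[str, list[Any]]:
    """Split JSON event strings into one list per field; absent fields become None."""
    rows = [json.loads(e) for e in events]
    fields = sorted({key for row in rows for key in row})
    return {f: [row.get(f) for row in rows] for f in fields}


def dict_encode(column: list[Any]) -> tuple[list[Any], list[int]]:
    """Store each distinct value once and replace values with small integer codes.

    Assumes scalar (hashable) values; nested JSON objects would need another encoding.
    """
    dictionary: list[Any] = []
    positions: dict[Any, int] = {}
    codes: list[int] = []
    for value in column:
        if value not in positions:
            positions[value] = len(dictionary)
            dictionary.append(value)
        codes.append(positions[value])
    return dictionary, codes


def dict_decode(dictionary: list[Any], codes: list[int]) -> list[Any]:
    return [dictionary[c] for c in codes]
```

A field such as `status` with only a handful of distinct values across millions of events shrinks to a tiny dictionary plus compact integer codes. Real columnar formats typically layer further encodings and general-purpose compression on top of this.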

More importantly, it provides relational-database-style abstractions such as tables, views, and even materialized views, so analysts can run complex queries in familiar SQL while retaining the extensibility of a data lake.
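To make the table, view, and materialized-view abstractions concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in engine. SQLite has no materialized views, so the sketch emulates one by rebuilding a summary table on refresh. The table names and data are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE payment_events (order_id INTEGER, city TEXT, status TEXT, amount REAL);
INSERT INTO payment_events VALUES
  (1, 'Beijing', 'PAID', 30.0),
  (2, 'Beijing', 'FAIL', 12.5),
  (3, 'Hangzhou', 'PAID', 8.0);
-- A plain view stores only the query; it is re-evaluated on every read.
CREATE VIEW failed_payments AS
  SELECT order_id, city FROM payment_events WHERE status = 'FAIL';
""")


def refresh_city_revenue(conn: sqlite3.Connection) -> None:
    """Emulate a materialized view: precompute results into a real table."""
    conn.executescript("""
    DROP TABLE IF EXISTS mv_city_revenue;
    CREATE TABLE mv_city_revenue AS
      SELECT city, SUM(amount) AS revenue
      FROM payment_events WHERE status = 'PAID'
      GROUP BY city;
    """)


refresh_city_revenue(conn)
```

The trade-off is the usual one: the view is always current but recomputes on every read, while the materialized table answers instantly but goes stale until it is refreshed.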

The metadata layer bridges disparate data sources and is the key to unified governance. EventHouse uses an open catalog compatible with the Hive Metastore Thrift API standard to automatically discover and register metadata from different sources, such as Kafka topics and RDS table structures.

When the schema of incoming data changes, the Catalog manages compatible schema evolution so that downstream analysis tasks are not interrupted by schema mismatches. It can also automatically trace the end-to-end lineage of events from generation to analysis, greatly simplifying troubleshooting and impact assessment.
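The compatibility check described above can be sketched as a comparison between the old and new schema. The rule set here is an assumption made for illustration: new fields are allowed, while removed fields and type changes are not, except the `int` to `float` widening. It is not the Catalog's documented policy.

```python
Schema = dict[str, str]  # field name -> type name

# Assumed policy for this sketch; real catalogs define their own rules.
SAFE_WIDENINGS = {("int", "float")}


def compatibility_issues(old: Schema, new: Schema) -> list[str]:
    """List the changes in `new` that would break readers of `old`."""
    issues = []
    for name, old_type in old.items():
        if name not in new:
            issues.append(f"field removed: {name}")
        elif new[name] != old_type and (old_type, new[name]) not in SAFE_WIDENINGS:
            issues.append(f"type changed: {name} {old_type} -> {new[name]}")
    # Fields that exist only in `new` are not checked: existing queries ignore them.
    return issues
```

A catalog applying such a check could accept a compatible change automatically and flag a breaking one before downstream jobs fail on it.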

The data query layer is built around the Intelligent Query Engine, which resolves a fundamental tension: traditional data warehouses cannot handle high-concurrency real-time events, while traditional message queues lack complex correlation analysis capabilities.

The engine's most prominent feature is unified stream and batch processing: with the same SQL syntax, you can query both archived historical data (batch) and real-time event streams as they flow in (streaming), which greatly lowers the barrier to use.
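The idea behind unified stream and batch processing can be shown in plain Python rather than SQL. A single query definition runs over a bounded archive (batch) and over an unbounded iterator of live events (streaming). This is a conceptual sketch with a hypothetical `failed_by_city` query, not how the Intelligent Query Engine is implemented.

```python
from collections import Counter
from typing import Iterable, Iterator


def failed_by_city(events: Iterable[dict]) -> Iterator[dict[str, int]]:
    """The single query definition: count FAIL events per city.

    Emits an updated running result after each matching event.
    """
    counts: Counter = Counter()
    for e in events:
        if e["status"] == "FAIL":
            counts[e["city"]] += 1
            yield dict(counts)


def run_batch(events: Iterable[dict]) -> dict[str, int]:
    """Batch mode: exhaust a bounded input and keep only the final result."""
    result: dict[str, int] = {}
    for result in failed_by_city(events):
        pass
    return result


def live_events() -> Iterator[dict]:
    # Stand-in for an unbounded stream; a real consumer would block on new messages.
    yield {"city": "Beijing", "status": "FAIL"}
    yield {"city": "Beijing", "status": "FAIL"}


if __name__ == "__main__":
    # Streaming mode: each update is available as soon as a matching event arrives.
    for snapshot in failed_by_city(live_events()):
        print(snapshot)
```

Because both modes share one query definition, the logic is written and maintained once. Only the input (bounded or unbounded) and the way results are consumed differ.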

In addition, it supports Zero ETL and Federated Query (to be released). You can directly use SQL JOIN operations in EventHouse to perform joint analysis between internally stored tables and external data sources, such as log files on OSS or dimension tables in another RDS database, without any physical data migration.
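A zero-ETL join can be imitated locally with SQLite's `ATTACH DATABASE`, which lets one connection query tables in a separate database file without copying their rows into the local database. In this sketch, the attached file stands in for an external RDS dimension table. The file, table, and function names are hypothetical.

```python
import os
import sqlite3
import tempfile


def build_external_dimension_db(path: str) -> None:
    """Stand-in for an external RDS database that holds a dimension table."""
    ext = sqlite3.connect(path)
    ext.executescript("""
    CREATE TABLE users (user_id INTEGER PRIMARY KEY, city TEXT);
    INSERT INTO users VALUES (1, 'Beijing'), (2, 'Hangzhou');
    """)
    ext.close()


def join_in_place(ext_path: str) -> list[tuple]:
    """Join local payment events with the external users table, grouped by city."""
    local = sqlite3.connect(":memory:")
    local.executescript("""
    CREATE TABLE payments (order_id INTEGER, user_id INTEGER, amount REAL);
    INSERT INTO payments VALUES (100, 1, 30.0), (101, 2, 8.0), (102, 1, 12.5);
    """)
    # ATTACH exposes the external file under the alias 'ext'; no rows are copied.
    local.execute("ATTACH DATABASE ? AS ext", (ext_path,))
    rows = local.execute("""
        SELECT u.city, SUM(p.amount)
        FROM payments AS p
        JOIN ext.users AS u ON u.user_id = p.user_id
        GROUP BY u.city
        ORDER BY u.city
    """).fetchall()
    local.close()
    return rows


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "rds_standin.db")
        build_external_dimension_db(path)
        print(join_in_place(path))
```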

The intelligent analysis layer is the most forward-looking part of EventHouse. It brings AI capabilities into the data platform through the in-house Luma Agent and the Model Context Protocol (MCP).

The goal of this layer is to achieve "dialogue as analysis" and eventually "autonomous analysis." Instead of writing complex SQL or waiting for reports, users can ask questions directly in natural language and get immediate answers.

Furthermore, the built-in Luma DataAgent can actively monitor data, find problems, plan analysis paths and execute queries like human experts, and finally output a complete report with root cause analysis and action suggestions. This marks a profound change in the paradigm of data analytics: moving from reactive responses to proactive insights.

In summary, through its layered, decoupled architecture, EventHouse not only solves the pain points of traditional data platforms in processing real-time event streams but also builds in AI-native capabilities ahead of demand, with the goal of providing an open, unified, and intelligent data foundation. It offers enterprises a new and compelling way to manage and use massive volumes of event data.

Enterprise event data is scattered across message queues, business databases, Object Storage Service (OSS), and elsewhere. Before any analysis can start, teams often spend a great deal of time just finding the data and understanding it.

EventHouse builds a unified metadata center, the Open Catalog, which is compatible with the Apache Hive Metastore Thrift API standard. When a new Kafka topic or RDS table is connected, Open Catalog automatically captures its schema, partition information, and data types and registers it as a queryable logical table. The time needed to make data discoverable drops from days to seconds.
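The auto-registration step can be sketched as inferring a table schema from sampled messages and recording it under a logical name. The `Catalog` class, the `kafka.<topic>` naming, and the type mapping below are assumptions made for illustration. The real Open Catalog is compatible with the Hive Metastore Thrift API, which this sketch does not implement.

```python
import json
from dataclasses import dataclass, field

# Python type -> Hive-style type name (assumed mapping for the sketch).
_TYPE_NAMES = {bool: "boolean", int: "bigint", float: "double", str: "string"}


@dataclass
class Catalog:
    """Hypothetical in-memory catalog: logical table name -> {field: type}."""
    tables: dict[str, dict[str, str]] = field(default_factory=dict)

    def register_topic(self, topic: str, samples: list[str]) -> dict[str, str]:
        """Infer a schema from sampled JSON messages and register it as a logical table."""
        schema: dict[str, str] = {}
        for raw in samples:
            for key, value in json.loads(raw).items():
                # The first non-null observation decides the type; nulls are skipped.
                # Nested objects and arrays fall back to "string" in this sketch.
                if value is not None and key not in schema:
                    schema[key] = _TYPE_NAMES.get(type(value), "string")
        self.tables[f"kafka.{topic}"] = schema
        return schema
```

Sampling several messages rather than one lets the catalog pick up fields that appear only in some events, such as the `city` field in the second sample of the test below.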

Choosing a Hive-compatible metastore instead of a proprietary closed protocol is a deliberate ecosystem decision. It means that an enterprise's existing compute engines, such as Spark, Flink, and Presto, can connect directly to EventHouse metadata without the risk of vendor lock-in.

More importantly, the Catalog is not only a registry of data but also the command center of governance: it automatically tracks the end-to-end lineage of events from generation to analysis and manages compatible schema evolution, ensuring that downstream analysis tasks are not interrupted when the schema of incoming data changes.
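Lineage tracking is typically modeled as a directed graph from upstream assets to the assets derived from them. The hypothetical `LineageGraph` below answers the impact-assessment question "what is affected if this source changes?" with a breadth-first traversal. The asset names echo the Order_View scenario but are otherwise made up.

```python
from collections import defaultdict, deque


class LineageGraph:
    """Hypothetical lineage store: edges point from an upstream asset to a downstream one."""

    def __init__(self) -> None:
        self.downstream: dict[str, set[str]] = defaultdict(set)

    def add_edge(self, upstream: str, downstream: str) -> None:
        self.downstream[upstream].add(downstream)

    def impacted_by(self, asset: str) -> set[str]:
        """Breadth-first search for every asset that depends on `asset`, directly or transitively."""
        seen: set[str] = set()
        queue = deque([asset])
        while queue:
            for child in self.downstream.get(queue.popleft(), ()):
                if child not in seen:
                    seen.add(child)
                    queue.append(child)
        return seen
```

The same graph walked in the opposite direction would support troubleshooting, tracing a bad report back to the source that fed it.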

Consider an e-commerce platform whose order payment events flow through RocketMQ in real time while user profile data is stored in MySQL. Previously, operations staff had to query the MQ console and the database client separately and then join the results manually in Excel. Now they can create a virtual view named Order_View in Open Catalog that logically joins the real-time payment stream with the user table, and every analyst can query this unified view directly. Catalog automatically handles the underlying metadata mapping, data source connections, and permission checks.

Traditional data warehouses rely on ETL pipelines, which are costly and slow; traditional message queues can process real-time streams but struggle with complex correlation analysis. Two systems, two syntaxes, and two sets of O&M are the daily reality for most enterprise data teams.

The Intelligent Query Engine of EventHouse is built to solve this contradiction. Its core features include:

● Unified SQL for streaming and batch: You can use the same SQL syntax to query archived historical data and real-time event streams as they flow in, so you no longer need to maintain separate code for stream processing and batch processing.

● Zero ETL cross-source query: You can use standard SQL JOIN statements to join internal tables in EventHouse with external data sources, such as Log Service log files and ApsaraDB RDS dimension tables, without the need to migrate physical data.

● Computation pushdown optimization: When a cross-source query runs, the engine pushes filter conditions and computation logic down to the data source and pulls back only the required result set. For example, when you query data for a specific day, the engine instructs the external MySQL database to return only that day's records instead of performing a full table scan. This significantly reduces network traffic and brings query performance close to that of local analysis.

● The Federated Query feature will be available soon to further support real-time analysis across data sources.
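Computation pushdown can be illustrated by comparing two ways of reading the same external table. The sketch uses an in-memory SQLite database as a stand-in for the external MySQL source (an assumption) and counts how many rows each strategy transfers.

```python
import sqlite3


def make_source() -> sqlite3.Connection:
    """Stand-in for an external MySQL orders table spread across three days."""
    src = sqlite3.connect(":memory:")
    src.execute("CREATE TABLE orders (id INTEGER, day TEXT, amount REAL)")
    src.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(i, f"2025-01-0{1 + i % 3}", 10.0) for i in range(9)],
    )
    return src


def fetch_without_pushdown(src: sqlite3.Connection, day: str) -> tuple[list, int]:
    # Full scan: every row crosses the "network", then the filter runs locally.
    rows = src.execute("SELECT id, day, amount FROM orders").fetchall()
    return [r for r in rows if r[1] == day], len(rows)


def fetch_with_pushdown(src: sqlite3.Connection, day: str) -> tuple[list, int]:
    # Pushdown: the filter travels to the source as a parameterized WHERE clause.
    rows = src.execute(
        "SELECT id, day, amount FROM orders WHERE day = ?", (day,)
    ).fetchall()
    return rows, len(rows)
```

Both strategies return the same rows, but the pushed-down version transfers only a third of them here. On a real table with years of history, the gap grows accordingly.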

An IoT enterprise needs to join its real-time device telemetry stream with device records stored in RDS to build device health profiles. In the traditional approach, telemetry data must be loaded into the data warehouse through ETL, with a delay of at least T+1. With EventHouse, the O&M team can perform the cross-source join in a single SQL statement, improving data freshness from T+1 to near real time and significantly speeding up the detection of and response to abnormal devices.

Even with a unified data view and a powerful query engine, the ability to write SQL is still a barrier. Business users have questions and intuitions but lack the technical means to verify them directly.

This is where EventHouse fundamentally differs from traditional data platforms. It follows two paths to integrate AI capabilities deeply into the data foundation.

● Path 1: AI semantic layer (Luma Agent)

Although a large language model (LLM) is knowledgeable, it does not understand an enterprise's internal business domain. When a user asks "How many orders failed to pay in Beijing yesterday?", the LLM may know to look up the order table but not whether "payment failure" corresponds to status_code='FAIL' or payment_result=false. This ambiguity is the core reason for low Text-to-SQL accuracy.

The AI semantic layer of Luma Agent solves this problem. It lets data managers annotate each field in the Catalog with business descriptions, business aliases, and calculation logic. When a user asks a question in natural language, Luma uses these semantic annotations to map the user's intent precisely to the correct data fields and business logic.
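The grounding step can be sketched as follows. A data steward's annotations map business phrases to SQL predicates, and a resolver looks them up before any SQL is written. The `SemanticTerm` structure, the field names, and the naive substring matching are all hypothetical. A production system would presumably feed such annotations to an LLM rather than rely on substring matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SemanticTerm:
    """A business phrase annotated by a data steward (hypothetical structure)."""
    aliases: tuple[str, ...]
    sql_predicate: str


# Example annotations; field names and values are made up for illustration.
TERMS = [
    SemanticTerm(("payment failure", "failed to pay"), "status_code = 'FAIL'"),
    SemanticTerm(("yesterday",), "event_date = date('now', '-1 day')"),
    SemanticTerm(("beijing",), "city = 'Beijing'"),
]


def ground_question(question: str) -> list[str]:
    """Return predicates whose aliases appear in the question (naive substring match)."""
    q = question.lower()
    return [t.sql_predicate for t in TERMS if any(a in q for a in t.aliases)]


def to_sql(question: str, table: str = "orders") -> str:
    """Assemble a COUNT query from the grounded predicates."""
    predicates = ground_question(question)
    where = " AND ".join(predicates) if predicates else "1 = 1"
    return f"SELECT COUNT(*) FROM {table} WHERE {where}"
```

The key point is that "failed to pay" resolves to the steward's definition, status_code = 'FAIL', instead of whatever column the model happens to guess.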

Furthermore, the built-in Luma DataAgent can actively monitor data like a human expert, detect anomalies, plan analysis paths, execute queries, and finally deliver a complete report with root cause analysis and recommended actions. This goes beyond "dialogue as analysis" to "autonomous analysis."
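A single monitor, detect, drill-down, and report cycle can be sketched as follows. The anomaly rule (a z-score against recent failure rates), the `channel` dimension, and the report format are assumptions chosen for illustration, not how Luma DataAgent works internally.

```python
from statistics import mean, stdev
from typing import Optional


def is_anomalous(history: list[float], current: float, z: float = 3.0) -> bool:
    """True if `current` is more than `z` sample standard deviations above the mean."""
    sigma = stdev(history)  # needs at least two historical points
    return sigma > 0 and (current - mean(history)) / sigma > z


def drill_down(events: list[dict], dimension: str) -> tuple[str, float]:
    """Return the dimension value with the highest failure rate, a candidate root cause."""
    counts: dict[str, list[int]] = {}  # value -> [failures, total]
    for e in events:
        c = counts.setdefault(e[dimension], [0, 0])
        c[0] += int(e["status"] == "FAIL")
        c[1] += 1
    worst = max(counts, key=lambda k: counts[k][0] / counts[k][1])
    return worst, counts[worst][0] / counts[worst][1]


def analyze(history: list[float], events: list[dict],
            dimension: str = "channel") -> Optional[str]:
    """Detect, then drill down, then report; returns None when nothing is anomalous."""
    rate = sum(e["status"] == "FAIL" for e in events) / len(events)
    if not is_anomalous(history, rate):
        return None
    culprit, culprit_rate = drill_down(events, dimension)
    return (f"failure rate {rate:.0%} is anomalous; "
            f"worst {dimension}: {culprit} ({culprit_rate:.0%})")
```

An agent would run this cycle on a schedule and choose which dimensions to drill into on its own. The sketch fixes one dimension to keep the control flow visible.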

● Path 2: Native MCP Protocol (Coming Soon)

MCP (Model Context Protocol) is becoming the de facto standard for connecting AI agents to external tools; it can be thought of as the "USB port" of the AI agent world. Since 2025, vendors such as LangChain, Dify, and Coze, along with leading Internet companies, have joined the MCP ecosystem, and adoption is growing rapidly.

EventHouse natively supports MCP and packages its query capabilities, such as streaming queries, materialized view analysis, and alert triggering, as MCP tools. Any AI agent that supports MCP can therefore access EventHouse and retrieve real-time event data as easily as calling a standard API, connecting EventHouse to the broader AI agent ecosystem.
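To show what "packaging query capabilities as MCP tools" involves, here is a minimal request handler for the MCP `tools/list` and `tools/call` methods, which are exchanged as JSON-RPC 2.0 messages. The tool name `eventhouse_query`, its input schema, and the SQLite stand-in database are hypothetical. The transport layer and the MCP initialization handshake are omitted.

```python
import json
import sqlite3
from typing import Any

# In-memory SQLite stands in for EventHouse tables (assumption for the demo).
DB = sqlite3.connect(":memory:")
DB.executescript("""
CREATE TABLE payment_events (order_id INTEGER, status TEXT);
INSERT INTO payment_events VALUES (1, 'PAID'), (2, 'FAIL'), (3, 'FAIL');
""")

# Hypothetical tool definition; not a published EventHouse tool.
TOOLS = [{
    "name": "eventhouse_query",
    "description": "Run a read-only SQL query over event tables.",
    "inputSchema": {
        "type": "object",
        "properties": {"sql": {"type": "string"}},
        "required": ["sql"],
    },
}]


def _ok(req_id: Any, result: dict) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


def _err(req_id: Any, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def _text(text: str, is_error: bool = False) -> dict:
    # Tool results carry a list of content items plus an isError flag.
    return {"content": [{"type": "text", "text": text}], "isError": is_error}


def handle(request: dict) -> dict:
    """Answer one JSON-RPC 2.0 request for the MCP tools/list or tools/call method."""
    req_id, method = request.get("id"), request.get("method")
    if method == "tools/list":
        return _ok(req_id, {"tools": TOOLS})
    if method != "tools/call":
        return _err(req_id, -32601, "Method not found")
    params = request.get("params", {})
    if params.get("name") != "eventhouse_query":
        return _err(req_id, -32602, f"Unknown tool: {params.get('name')}")
    sql = params.get("arguments", {}).get("sql", "")
    # Naive read-only guard for the sketch; a real server would enforce this in the engine.
    if not sql.lstrip().lower().startswith("select"):
        return _ok(req_id, _text("Only SELECT statements are allowed.", is_error=True))
    rows = DB.execute(sql).fetchall()
    return _ok(req_id, _text(json.dumps(rows)))
```

Because the tool describes its input with a JSON Schema, any MCP-capable client can discover it through `tools/list` and call it without custom integration code.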

For example, after detecting an abnormal transaction pattern, a risk control agent built by an enterprise can automatically call EventHouse's query tools over MCP to pull the historical behavior data and real-time transaction flows of the associated users, perform multi-dimensional correlation analysis, generate a risk assessment report, and push it to the risk control team. This greatly reduces manual intervention and can cut risk control response time from hours to minutes.

The convergence of data and AI platforms is moving from an industry prediction to a hard requirement for enterprises. Several forces are driving this at the same time:

Over the past decade, enterprises typically bought one ETL tool, one metadata management tool, one data quality tool, and one BI platform to address different data problems. In the end, just getting these tools to interoperate cost as much time and effort as buying them.

The industry consensus is shifting to a data fabric and AI-driven approach that integrates these decentralized capabilities into an integrated data ecosystem.

EventHouse's integrated design of Open Catalog, the query engine, and Luma Agent applies this idea to the vertical domain of event data: solving data integration problems end to end.

This sounds a bit contradictory: natural language lowers the threshold for data consumption, but if the underlying metadata is messy and the field semantics are unclear, then the SQL generated by LLM is likely to be wrong, and you don't even know where it is wrong.

Gartner's judgment is that poor data quality and insufficient governance will cause a large number of GenAI projects to stay in the proof-of-concept stage and fail to go live.

This is also why EventHouse built the AI semantic layer and Open Catalog before launching Luma Agent. Rather than chasing a flashy natural-language query experience, EventHouse first solidified the infrastructure for semantic annotation, metadata governance, and schema evolution so that Text-to-SQL is accurate enough for production use.

AI agents are more than natural language search. They break down complex tasks, invoke multiple tools, and execute end-to-end analysis workflows autonomously. The data platform must therefore provide standardized, programmatic interfaces rather than just dashboards for people to view. It also needs a governance framework complete enough to keep autonomous agent operations safe, controllable, and auditable.

EventHouse natively supports MCP and has built-in permission control and lineage tracking in Open Catalog, laying the infrastructure for an agentic future.
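The requirement that agents run in a way that is "safe, controllable, and auditable" reduces to a simple pattern: check every table access against a grant list and log each decision. The `Governance` class and the agent and table names below are hypothetical. This is a sketch of the pattern, not Open Catalog's permission model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Governance:
    """Hypothetical policy: tables each agent may read, plus an append-only audit log."""
    grants: dict[str, set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: str, table: str) -> bool:
        """Check a read request against the grants; unknown agents get nothing."""
        allowed = table in self.grants.get(agent, set())
        # Record every decision, allowed or denied, so agent activity can be audited later.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "table": table,
            "allowed": allowed,
        })
        return allowed
```

Logging denials as well as approvals matters here: an agent probing tables it has no access to is exactly the behavior an auditor wants to see.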

As model capabilities continue to improve, data agents are moving beyond the POC stage into enterprise production environments. Data management tools are shifting from fragmentation to integration, and data consumption is shifting from "people writing SQL" to "agents calling data autonomously." The key to connecting the two is solid data governance.

EventHouse is currently under public preview. If your team is facing the following challenges, we look forward to exploring with you:

● Real-time event data has accumulated massive scale, but cannot be efficiently analyzed and utilized

● Cross-data source queries rely on complex ETL pipelines, and the analysis results always lag behind the business

● You want AI agents to access business data directly for autonomous analysis and intelligent decision-making

Let's define the next generation of event data platforms together!

Click here to join the public preview:

https://eventbridge.console.aliyun.com/cn-chengdu/event-house/overview (Currently available in the China (Chengdu) region; support for other regions will be rolled out soon.)

Join our DingTalk group: 44552972
