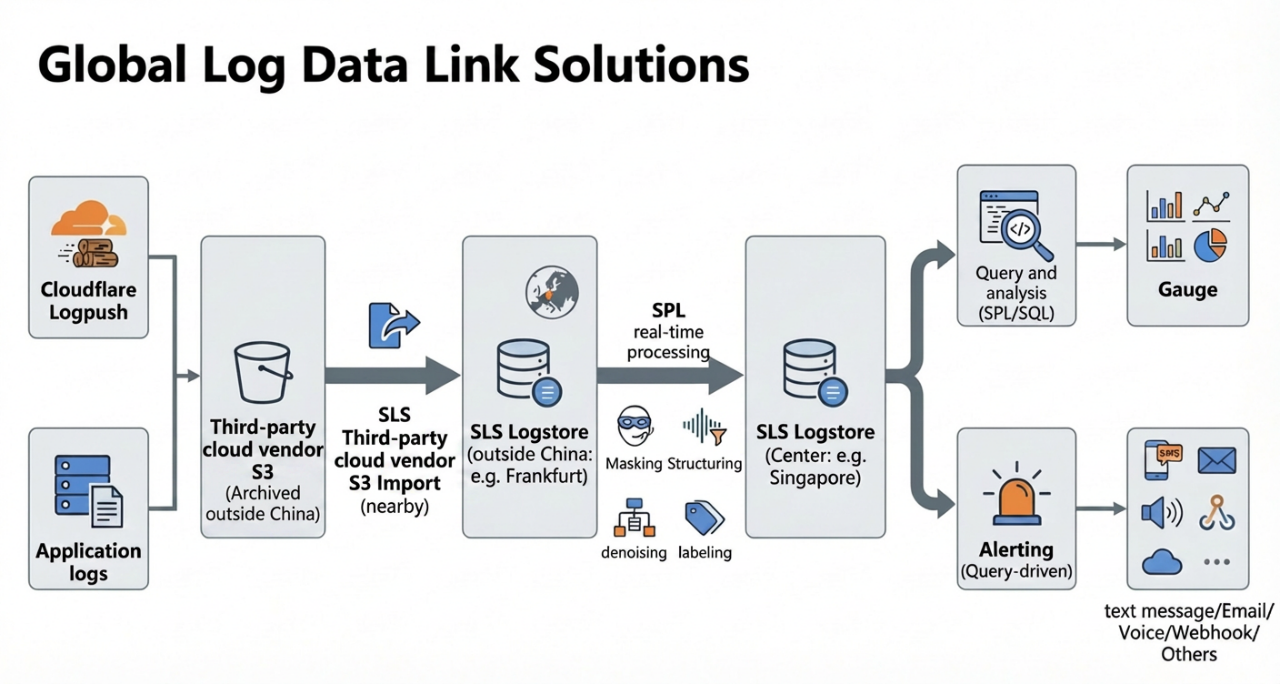

A common multicloud pattern is that edge security and access capabilities outside China are handled by Cloudflare (Web Application Firewall (WAF), Content Delivery Network (CDN), and Access), and the detailed logs are pushed to Amazon Simple Storage Service (S3) through Logpush for low-cost archiving and compliance retention. Meanwhile, the core business and observability systems of the headquarters often run on Alibaba Cloud. For example, application, gateway, and business logs enter Simple Log Service (SLS), and the alerting, on-call, and ticket systems are also built around Alibaba Cloud. As a result, the "chain of evidence" for the same user request, the same attack, or the same release change is split between the third-party cloud vendor and Alibaba Cloud, which makes it difficult to complete unified retrieval, correlation analysis, or closed-loop handling on a single platform.

For the platform engineering team, the core challenge is not the location of log storage, but rather the lack of a unified platform to perform analysis and complete operational tasks.

● Logs are in S3, but troubleshooting, security analytics, and operational analysis are often scattered across multiple systems (Cloudflare console, Athena, Glue, Amazon Elastic MapReduce (EMR), CloudWatch, Business Intelligence (BI), and self-built alerting).

● Metrics cannot be standardized: the same metric (such as 5xx rate, P99 latency, and WAF block ratio) is calculated separately in different systems, so metric definitions are difficult to audit, reuse, or migrate.

● The incident response chain is long: it requires "first querying logs -> then manually summarizing -> then sending notifications -> then dispatching tickets or performing rollback", so the Mean Time To Detect (MTTD) and Mean Time To Resolve (MTTR) are artificially lengthened.

If S3 is used as log storage, actually using the data (query and analysis, visualization, and alerting) usually requires a combination of additional components for querying, ETL, metrics, and alerting. The pipeline becomes longer, configuration and troubleshooting span multiple systems, and O&M complexity increases significantly.

If the data is instead sent directly to CloudWatch, CloudWatch Logs handles collection and storage, Logs Insights handles query and analysis, and Dashboards and Alarms close the loop for dashboards and alerting. The overall cost is usually very high.

The following sections break down the data import, processing, query and analysis, dashboard, and alerting features of the SLS solution step by step.

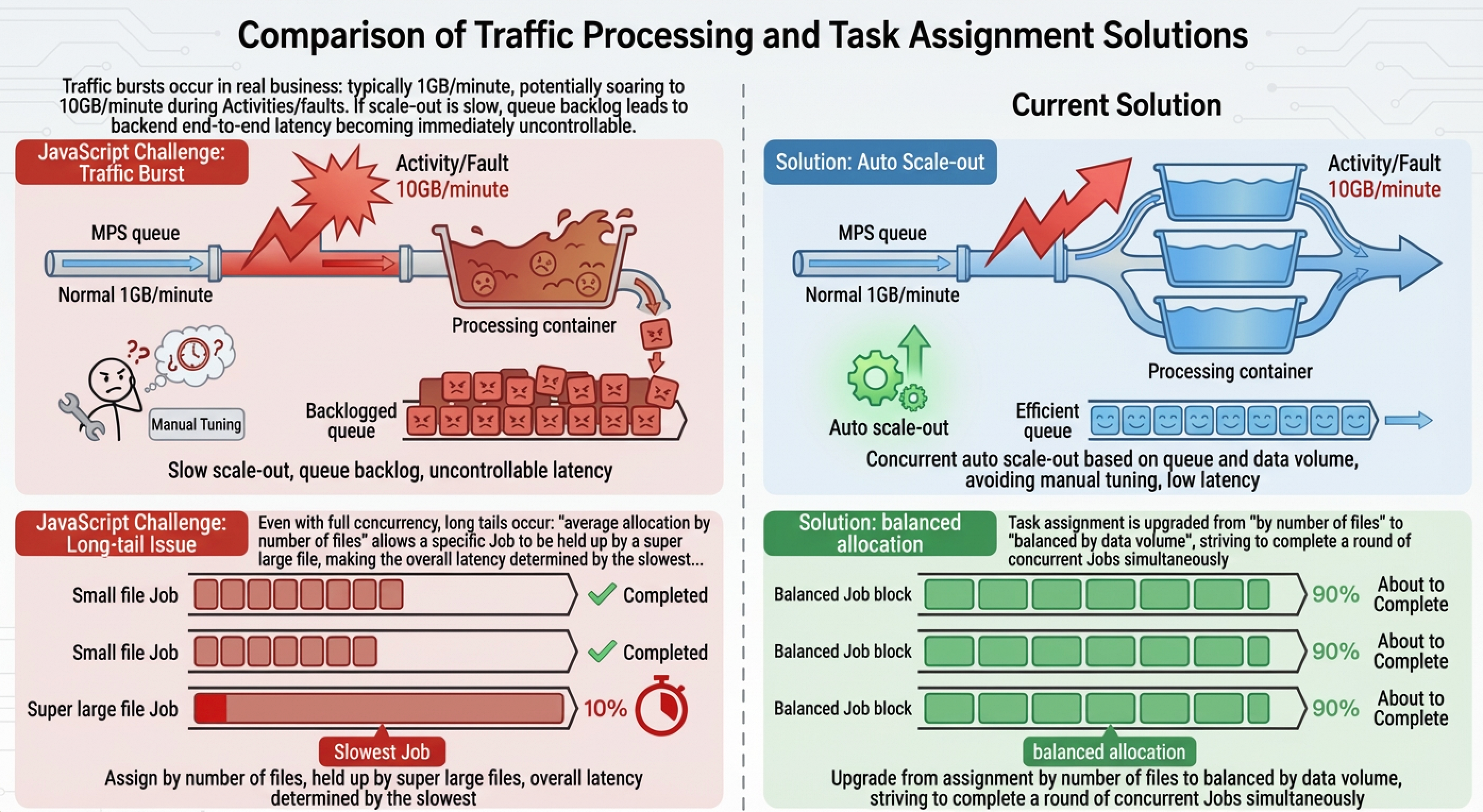

In the eyes of many people, data import is just the three-step procedure of "read-transmit-write". But when you face:

● Logs that generate thousands of files per minute

● Attack and defense traffic that instantly surges from 1 GB to 10 GB

● Various mixed data formats such as gzip, snappy, JavaScript Object Notation (JSON), and Comma-Separated Values (CSV)

You will find that this is by no means a simple "copy and paste" operation.

The following sections first describe the difficulties encountered during import and then explain the corresponding implementation methods:

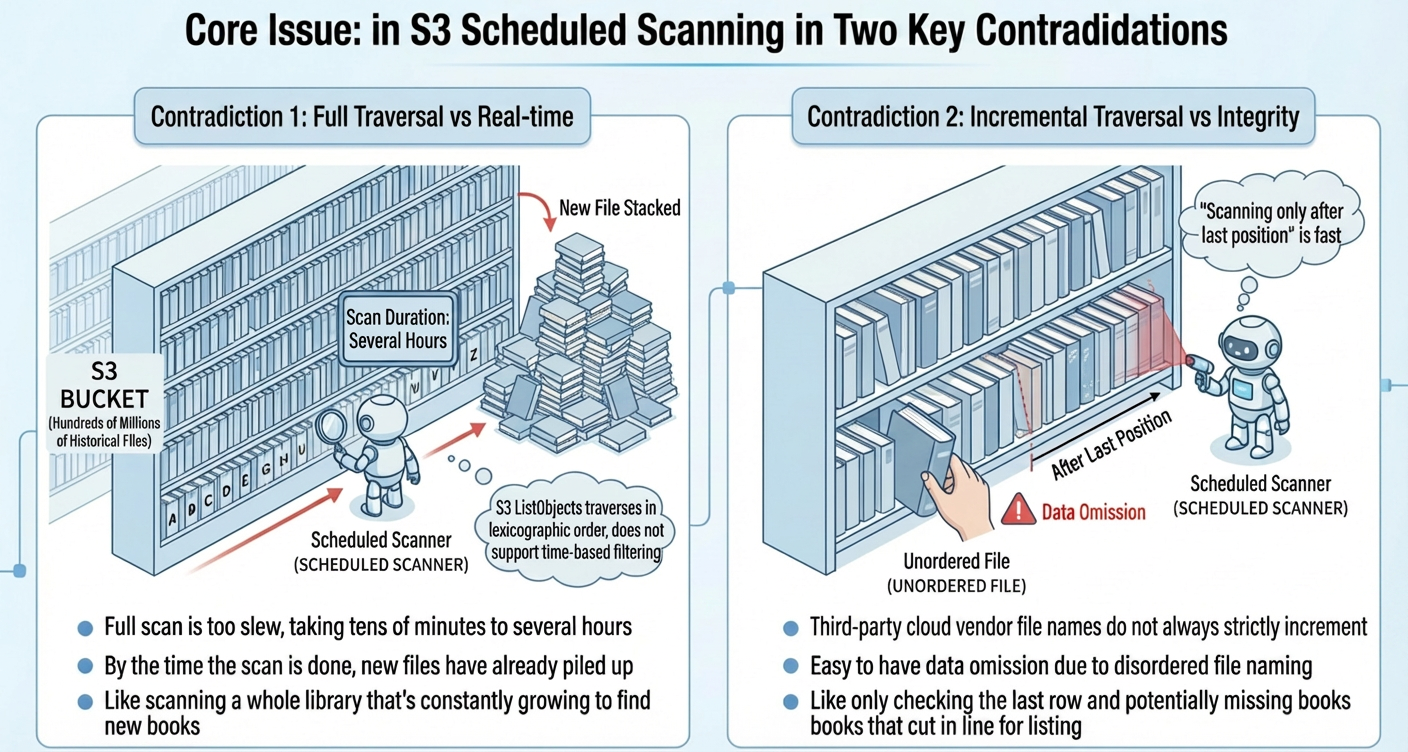

The ListObjects operation of S3 returns keys only in lexicographic order and does not support filtering by time. When a bucket or prefix holds a huge volume of historical files, a full scan may take a long time. However, if only an incremental scan is performed, files may be missed because file names are not guaranteed to arrive in order.

Consequences: New files are not discovered in time (latency increases), or they are missed in extreme cases (a threat to data integrity).

Our design solutions for these difficulties are as follows:

● Design point 1: A "dual-mechanism" for file discovery ensures both timeliness and completeness.

| Comparison dimension | Dual-mode traversal | Simple Queue Service (SQS) event-driven |
|---|---|---|
| New-file discovery latency | Minute-level | Second-level |
| Configuration complexity | Simple, no additional configuration required | Requires S3 event notifications and SQS to be configured |
| Reliability | High (full scan as fallback) | Depends on SQS reliability |
| Cost | S3 API calls only | Additional SQS fees |
| Scenarios | Standard log import | High real-time requirements, irregular file names |
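As a rough illustration of how a dual-mode traversal could combine a cheap incremental scan with a periodic full scan, the following Python sketch simulates a bucket listing in memory. The `Discovery` class, its method names, and the sample keys are hypothetical and are not part of SLS or the S3 API. In a real importer, the incremental pass would likely map to a ListObjectsV2 call with the `StartAfter` parameter, which is an assumption about the implementation rather than something stated here.

```python
from dataclasses import dataclass, field


@dataclass
class Discovery:
    """Tracks which object keys have already been handed to the importer."""

    seen: set = field(default_factory=set)
    marker: str = ""  # largest key observed so far (lexicographic order)

    def incremental_scan(self, listing):
        """Cheap, frequent pass: only keys that sort after the marker."""
        new = [k for k in sorted(listing) if k > self.marker and k not in self.seen]
        self._record(new)
        return new

    def full_scan(self, listing):
        """Expensive, infrequent pass: every key, to catch out-of-order names."""
        new = [k for k in sorted(listing) if k not in self.seen]
        self._record(new)
        return new

    def _record(self, keys):
        self.seen.update(keys)
        if keys:
            self.marker = max(self.marker, keys[-1])


if __name__ == "__main__":
    bucket = ["logs/20241225T1000.gz", "logs/20241225T1005.gz"]
    d = Discovery()
    print(d.incremental_scan(bucket))

    # A late file whose name sorts BEFORE the marker, plus a normal new file.
    bucket += ["logs/20241225T0955.gz", "logs/20241225T1010.gz"]
    print(d.incremental_scan(bucket))  # the late file is not returned here
    print(d.full_scan(bucket))         # the fallback pass recovers it
```

The incremental pass stays cheap because it skips everything at or before the marker, while the periodic full pass is what recovers a file whose name sorts before the marker.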

● Design point 2: Auto Scaling + balanced allocation by data volume to handle traffic peaks and manage long-tail data.

● Design point 3: Auto compression detection and explicit configuration of data formats (no guessing).

● Design point 4: Checkpoint and state management + retry and fencing + file-level tracking to make data backfilling feasible.
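To make "balanced allocation by data volume" from design point 2 more concrete, here is a minimal Python sketch of one possible heuristic: hand out files from largest to smallest, always to the worker with the fewest bytes assigned so far. The function name, the worker model, and the choice of this greedy strategy are assumptions for illustration; the actual SLS scheduler is not described in this article.

```python
import heapq


def allocate_by_volume(file_sizes, workers):
    """Assign files to workers so that total bytes per worker stay balanced.

    file_sizes: dict mapping file name -> size in bytes.
    workers:    number of import workers (hypothetical unit of parallelism).
    Returns a dict mapping worker id -> list of file names.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")

    # Min-heap of (assigned_bytes, worker_id): the least-loaded worker pops first.
    heap = [(0, w) for w in range(workers)]
    heapq.heapify(heap)
    plan = {w: [] for w in range(workers)}

    # Largest files first, so one long-tail file cannot unbalance the plan late.
    for name, size in sorted(file_sizes.items(), key=lambda kv: (-kv[1], kv[0])):
        load, w = heapq.heappop(heap)
        plan[w].append(name)
        heapq.heappush(heap, (load + size, w))
    return plan


if __name__ == "__main__":
    sizes = {"a.gz": 900, "b.gz": 500, "c.gz": 400, "d.gz": 100, "e.gz": 100}
    print(allocate_by_volume(sizes, workers=2))
```

Allocating by bytes rather than by file count matters when file sizes are skewed: a worker that receives one very large file should receive fewer files overall.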

Data import is only the first step. A complete observability closed loop also requires data governance, interactive search, visualization, and intelligent alerting. SLS integrates these capabilities into a unified platform. The core principles of each step are described below.

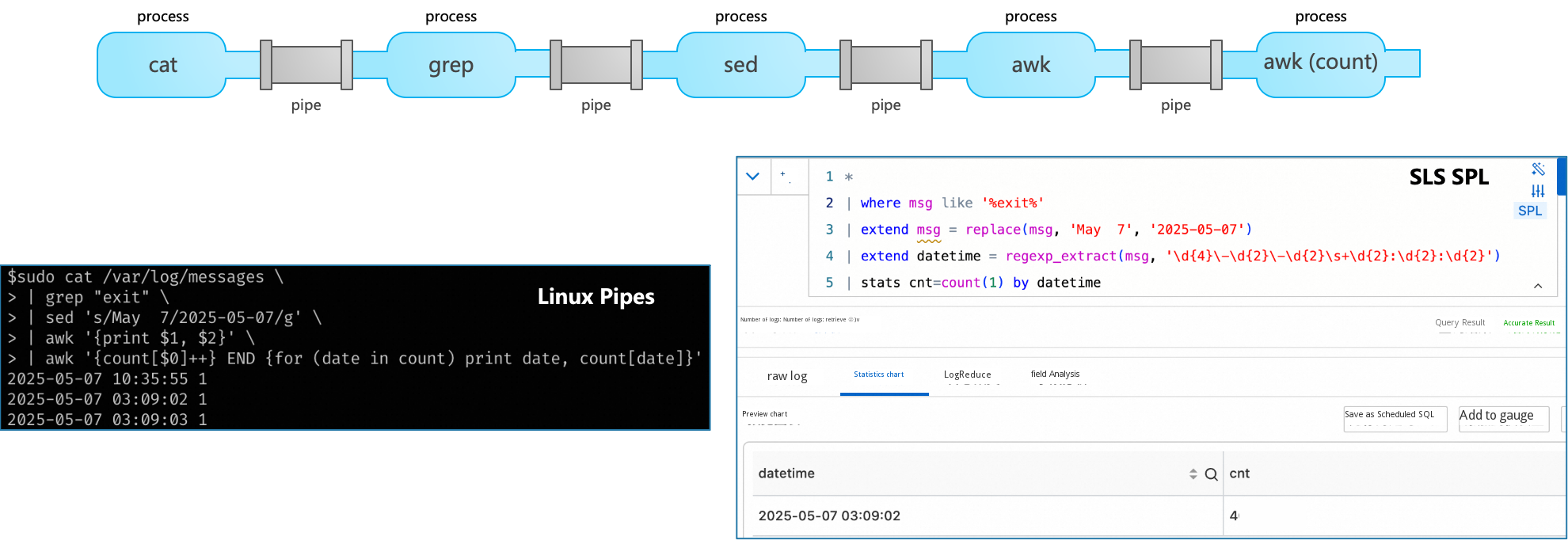

SLS data transformation is based on managed real-time consumption jobs and uses Structured Process Language (SPL) syntax to process logs as streams. It is fully managed, supports auto scaling, and makes data visible within seconds. It also supports line-by-line debugging and code hinting.

SLS uses the SPL engine as the kernel of the log pipeline. The engine benefits from column-oriented computation, single instruction multiple data (SIMD) acceleration, and a C++ implementation. Based on the distributed architecture of the SPL engine, we have redesigned the elasticity mechanism: instead of scaling only at the granularity of an instance (such as a Kubernetes pod or a service compute unit), it can scale quickly at the granularity of a DataBlock (MB level).

Scenario capabilities:

● Compliance preprocessing: IP-to-Geo conversion and desensitization are completed outside China. Only compliant fields are retained for cross-border transfer to meet General Data Protection Regulation (GDPR) and data export requirements.

● Data filtering: Invalid data is removed to reduce downstream index and storage overheads.

● Structured extraction: Raw fields are transformed into analyzable metrics, and nested JSON is parsed to avoid repeated calculations during queries.

● Field projection: Only gold fields are delivered, which can reduce cross-border traffic and index costs by 50% to 80%.

● Field enrichment: Logs (such as order logs) are joined (JOIN) with dimension tables (such as user information tables) to add more dimension information to the logs for data analytics.

● Data forwarding: Logstore data can be forwarded and aggregated to destination Logstores. Data can also be routed flexibly based on field content.
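The filtering, projection, and desensitization steps in the list above can be reasoned about locally with a few lines of plain Python. This is only a sketch of the idea and is not SPL: the `GOLD_FIELDS` set, the "drop records without a RayID" rule, and the function names are hypothetical choices, and the field names are borrowed from Cloudflare-style logs for illustration.

```python
import base64
import hashlib

# Hypothetical allow-list of fields that may leave the region.
GOLD_FIELDS = {"RayID", "ClientRequestURI", "SecurityAction", "OriginResponseStatus"}


def fingerprint(ip: str) -> str:
    """Replace a raw IP with a one-way SHA-256 digest, Base64-encoded."""
    digest = hashlib.sha256(ip.encode("utf-8")).digest()
    return base64.b64encode(digest).decode("ascii")


def transform(record: dict):
    """Filter, project, and desensitize one log record.

    Returns None when the record is considered invalid (assumed rule:
    a record without a RayID cannot be traced and is dropped).
    """
    if not record.get("RayID"):
        return None

    # Field projection: keep only the allow-listed fields.
    out = {k: v for k, v in record.items() if k in GOLD_FIELDS}

    # Desensitization: carry a fingerprint instead of the raw client IP.
    if record.get("ClientIP"):
        out["ClientFingerprint"] = fingerprint(record["ClientIP"])
    return out


if __name__ == "__main__":
    raw = {
        "RayID": "abc123def456",
        "ClientIP": "203.0.113.50",
        "OriginIP": "10.0.0.100",
        "ClientRequestURI": "/api/v1/users?id=123",
        "SecurityAction": "block",
        "OriginResponseStatus": 200,
    }
    print(transform(raw))
```

An allow-list is used here instead of a deny-list so that a newly added upstream field is not transferred by accident. Note that an unsalted hash of an IPv4 address can be reversed by brute force, so whether a fingerprint like this meets a given compliance requirement needs to be assessed separately.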

SLS provides a high-performance query engine that supports an index mode (responses within seconds for tens of billions of data records) and a scan mode (lightweight analysis). Queries run directly on indexes, without the need to pre-build datasets or wait for refresh delays. For ultra-large-scale data analytics scenarios, SLS provides Dedicated SQL, which includes an enhanced mode and a complete accuracy mode.

Query engine and capabilities:

● Nearly a hundred built-in functions: Statistical, aggregation, string, time, window, and geospatial functions are provided out of the box.

● Cross-Logstore federated queries: StoreView supports associated queries across projects and Logstores.

● Dedicated SQL: Provides high-precision analysis capabilities in large data volume scenarios to avoid sampling errors.

● Scheduled SQL: Supports scheduled execution of SQL queries for report generation and metric pre-computation.

SLS dashboards display query and analysis results in a graphical interface. A dashboard usually contains multiple statistical charts that summarize and render key performance metrics, important data, and analysis results.

Visualization capabilities:

● Rich chart types: Multiple statistical charts such as tables, line charts, column charts, pie charts, and maps are supported. The Pro version supports the overlaid display of multiple query results.

● Interaction and drill-down: Supports global time filtering, variable-based filter interaction, and chart drill-down to track from the overall situation to details layer by layer.

● Subscription and sharing: Supports periodically rendering dashboards into images and sending them by email or to DingTalk groups. Supports embedding console pages into third-party systems.

● Third-party integration: Can be integrated with visualization tools such as DataV, Grafana, and Tableau, and supports bidirectional import and export of Grafana dashboards.

SLS alerting is a one-stop artificial intelligence for IT operations (AIOps) platform that covers alert monitoring, denoising, incident management, and notification dispatch. It consists of subsystems such as the alert monitoring system, alert management system, and notification management system. After logs or metrics are ingested, you can create monitoring jobs, notification channels, and alert policies within minutes.

Feature advantages:

● Low cost and fully managed: Provided as Software as a Service (SaaS). Except for text messages and voice calls, no additional fees are charged for alert monitoring, incident management, or other features.

● Denoising and dispatch: Supports grouping, deduplication, suppression, and escalation to avoid alert storms. Supports automatic dispatch to different teams based on rules.

● Rich notification channels: Natively integrates DingTalk, WeCom, Lark, Slack, text messages, voice calls, and webhooks.
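To show what grouping and suppression mean in practice, the following Python sketch keeps at most one notification per (rule, target) group within a quiet window and counts the alerts it suppresses. It is an illustrative model only: the alert dictionary shape, the `denoise` function, and the 300-second window are assumptions, and this is not how SLS implements its alert policies.

```python
from collections import defaultdict


def denoise(alerts, window_seconds=300):
    """Group alerts by (rule, target) and send one notification per window.

    alerts: iterable of dicts with keys "rule", "target", and "ts" (epoch seconds).
    Returns (notifications, suppressed_counts).
    """
    last_sent = {}                  # group key -> timestamp of last notification
    suppressed = defaultdict(int)   # group key -> number of suppressed alerts
    notifications = []

    for alert in sorted(alerts, key=lambda a: a["ts"]):
        key = (alert["rule"], alert["target"])
        prev = last_sent.get(key)
        if prev is not None and alert["ts"] - prev < window_seconds:
            # Same group fired again inside the quiet window: suppress it.
            suppressed[key] += 1
            continue
        last_sent[key] = alert["ts"]
        notifications.append(alert)

    return notifications, dict(suppressed)


if __name__ == "__main__":
    storm = [
        {"rule": "origin-5xx", "target": "api", "ts": 0},
        {"rule": "origin-5xx", "target": "api", "ts": 60},
        {"rule": "waf-block", "target": "api", "ts": 30},
        {"rule": "origin-5xx", "target": "api", "ts": 400},
    ]
    sent, dropped = denoise(storm)
    print(len(sent), dropped)
```

Keeping the suppressed count per group is useful because the first notification can later be updated with how many times the same condition fired.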

Having multiple components is not necessarily bad, but when your requirement is "unified standards, minute-level closed loop, and controllable low cost," multiple components mean:

● Longer pipeline: Data needs to be moved more times (extract, transform, and load (ETL), saving to intermediate tables, and refreshing datasets).

● Larger failure surface: Jitter in any step affects end-to-end timeliness.

● More fragmented billing: Storage, scans, ETL, alerting, visualization, and network traffic are each billed separately, and the costs add up.

In SLS, you can create a reusable engineering template that combines "import + processing + index + query + dashboard + alerting/incident management," use the template to deliver the first version, and then use policies to iterate on costs and results.

A large global enterprise whose business covers regions such as Europe, Asia-Pacific, and North America achieves global access acceleration and web application protection through a mainstream CDN and security service (Cloudflare in this example). To meet data compliance and audit requirements outside China, the enterprise continuously archives its security and access logs to public cloud object storage (Amazon S3) for long-term retention and subsequent analysis through the platform's native log push capability (Logpush).

Currently, the enterprise uses a combination of multiple components on the third-party cloud vendor (AWS) to analyze and monitor logs outside China, and encounters the following problems:

● Scattered data: S3 buckets are distributed across multiple regions such as Frankfurt and Tokyo, and the resulting data silos are difficult to manage and analyze in a unified manner.

● High query and analysis costs: Athena bills based on scan volume. CloudWatch Logs Insights has limited query capabilities and requires separate queries across regions. The costs of daily retrievals and alerting queries increase linearly with frequency.

In addition, ETL jobs that depend on Glue or Lambda must be maintained by the enterprise itself. QuickSight visualization requires additional authorization and has synchronization latency. CloudWatch Alarms configurations are scattered and lack unified denoising capabilities. The multi-product portfolio leads to high O&M complexity and uncontrollable costs.
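The linear relationship between query frequency and scan-based billing mentioned above is simple arithmetic, sketched below in Python. The function name and all numbers in the example are placeholders chosen for illustration; in particular, `price_per_tb` is not a quoted price and must be replaced with the current rate for the service and region in question.

```python
def daily_scan_cost(queries_per_day, tb_scanned_per_query, price_per_tb):
    """Cost of scan-billed queries: frequency x data scanned x unit price."""
    return queries_per_day * tb_scanned_per_query * price_per_tb


if __name__ == "__main__":
    # Placeholder inputs: an alert query that scans 0.01 TB at 5.0 per TB.
    for label, per_day in [("hourly", 24), ("every minute", 24 * 60)]:
        cost = daily_scan_cost(per_day, tb_scanned_per_query=0.01, price_per_tb=5.0)
        print(f"{label}: {cost:.2f} per day")
```

With these placeholder inputs, moving the same alert query from hourly to per-minute evaluation multiplies the daily cost by 60, which is why frequent alerting on scan-billed engines tends to be expensive unless the scanned range is tightly partitioned.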

You can build a unified observability analysis platform based on SLS to achieve the following goals:

● Unified data transformation: You can use Structured Process Language (SPL) to complete data governance outside China (such as field clipping, IP address desensitization, and Geo enrichment). This reduces the costs of cross-border transfer.

● Unified query and analysis: You can aggregate gold data in the central Logstore in China to provide second-level interactive search for hundreds of millions of data records.

● Unified visualization: A one-stop dashboard is provided, and no additional business intelligence (BI) tools are required.

● Unified alerting closed loop: Intelligent alerting based on SLS query and analysis is provided. It supports denoising, dispatching, and multi-channel notifications.

Data is pushed from Cloudflare Logpush to Amazon Web Services (AWS) S3 buckets in various regions outside China for archiving. SLS imports the data into Logstores in the same region through event-driven mechanisms or scheduled scans. After the data is transformed by SPL, it is aggregated into the central Logstore in China to support unified query and analysis, dashboards, and alerting.

Sample raw log (Cloudflare Web Application Firewall (WAF) log)

The sample Cloudflare WAF raw log contains sensitive and security fields such as ClientIP, SecurityAction, and SecuritySources, and covers three security action scenarios: block, allow, and challenge. You can directly use these logs to test SPL data transformation statements.

    {
      "EdgeStartTimestamp": "2024-12-25T10:30:00Z",
      "RayID": "abc123def456",
      "ClientIP": "203.0.113.50",
      "OriginIP": "10.0.0.100",
      "ClientRequestURI": "/api/v1/users?id=123",
      "ClientRequestMethod": "POST",
      "ClientRequestReferer": null,
      "SecurityAction": "block",
      "SecurityRuleID": "rule_001",
      "SecuritySources": "[{\"source\":\"waf\",\"action\":\"block\"}]",
      "OriginResponseStatus": 200,
      "OriginResponseTime": 150,
      "ResponseHeaders": "{\"x-cache\":\"MISS\"}"
    }

The following SPL script completes data governance outside China: time standardization, IP-to-Geo conversion, IP address desensitization to anonymous fingerprints, security metadata parsing, and threat labeling. Finally, sensitive fields such as ClientIP and OriginIP are removed by using project-away, and only gold fields are retained for cross-border transfer.

    -- Core tracking and time standardization
    *
    | extend __time__ = cast(to_unixtime(date_parse(EdgeStartTimestamp, '%Y-%m-%dT%H:%i:%SZ')) as bigint)
    | extend RequestId = RayID
    | extend RequestPath = url_extract_path(ClientRequestURI)
    -- IP -> Geo (completed outside China)
    | extend
        GeoCountry = ip_to_country(ClientIP),
        GeoRegion = ip_to_province(ClientIP),
        GeoCity = ip_to_city(ClientIP)
    -- IP address desensitization: Retain anonymous fingerprints (optional) and do not carry the raw IP address for cross-border transfer
    | extend ClientFingerprint = to_base64(sha256(to_utf8(ClientIP)))
    -- Security metadata parsing and labeling
    | expand-values -keep SecuritySources
    | parse-json -prefix='Security' SecuritySources
    | extend IsHighRisk = if(ClientRequestMethod = 'POST' and (ClientRequestReferer is null or SecurityAction = 'block'), 1, 0)
    -- Final denoising and field projection
    | project-away ClientIP, OriginIP, ResponseHeaders, RayID

Sample data after data transformation

The data after data transformation has completed Geo enrichment, IP masking, and threat labeling. Sensitive fields have been removed, and the data can be directly used for downstream query and analysis and alerting:

    {
      "RequestPath": "/api/v1/users",
      "__time__": "1735122600",
      "RequestId": "abc123def456",
      "ClientFingerprint": "O1zTaFfLyH1ZqEHS03UiLSNMzwMX+4ZW7OsIVsDGgEg=",
      "OriginResponseTime": "150",
      "GeoCity": "Richardson",
      "ClientRequestURI": "/api/v1/users?id=123",
      "IsHighRisk": "1",
      "EdgeStartTimestamp": "2024-12-25T10:30:00Z",
      "SecurityAction": "block",
      "SecurityRuleID": "rule_001",
      "Securityaction": "block",
      "GeoCountry": "United State",
      "GeoRegion": "Texas",
      "OriginResponseStatus": "200",
      "Securitysource": "waf",
      "ClientRequestMethod": "POST"
    }

Sample 1: Web Application Firewall (WAF) rule hit statistics - This sample aggregates the hit count, high-risk hit count, and unique client count by rule.

    * | SELECT
      SecurityRuleID,
      count(*) AS TotalHits,
      count_if(IsHighRisk = 1) AS HighRiskHits,
      approx_distinct(ClientFingerprint) AS UniqueClients
    FROM log
    WHERE SecurityRuleID IS NOT NULL AND SecurityRuleID <> ''
    GROUP BY SecurityRuleID
    ORDER BY TotalHits DESC

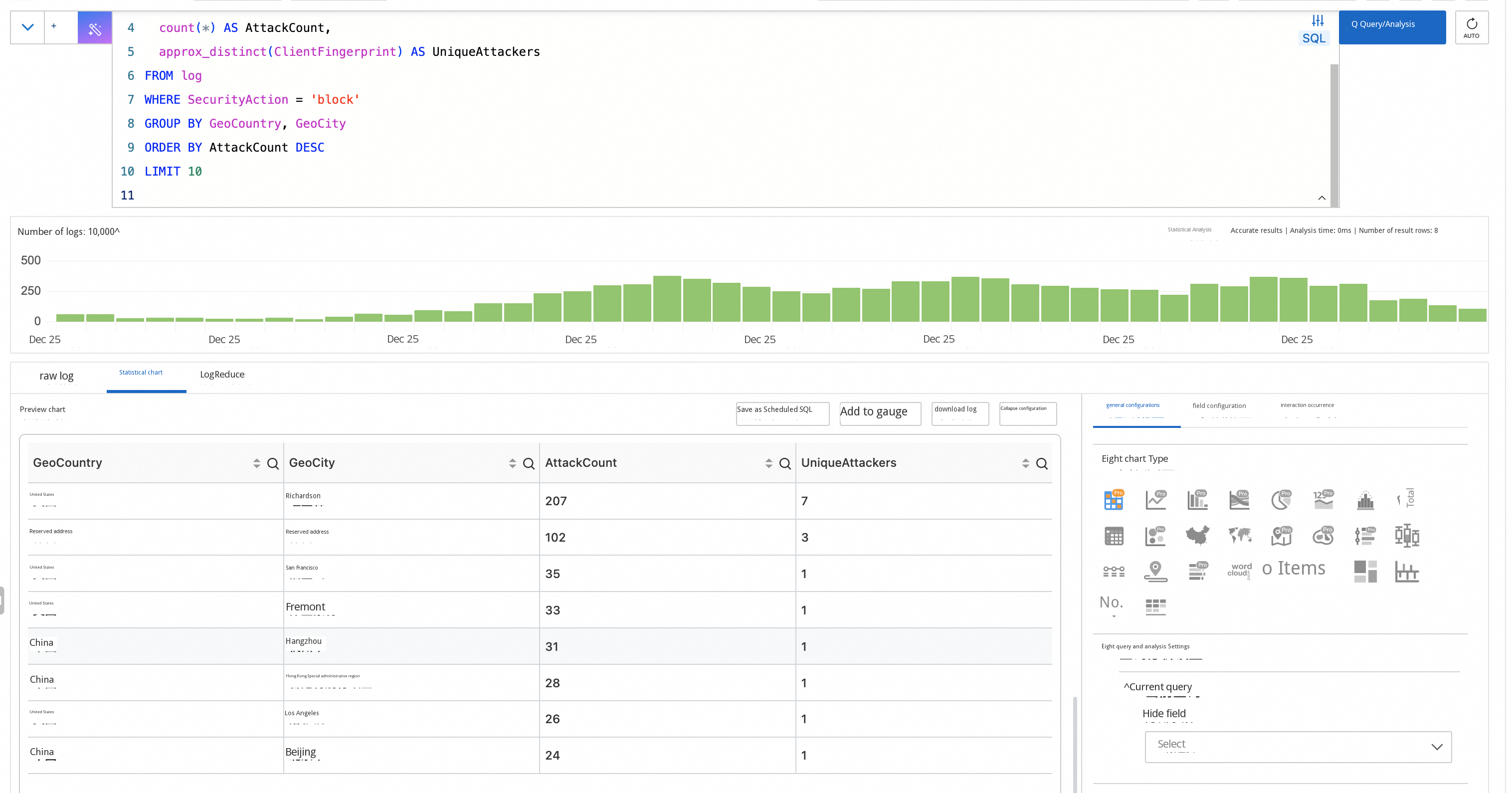

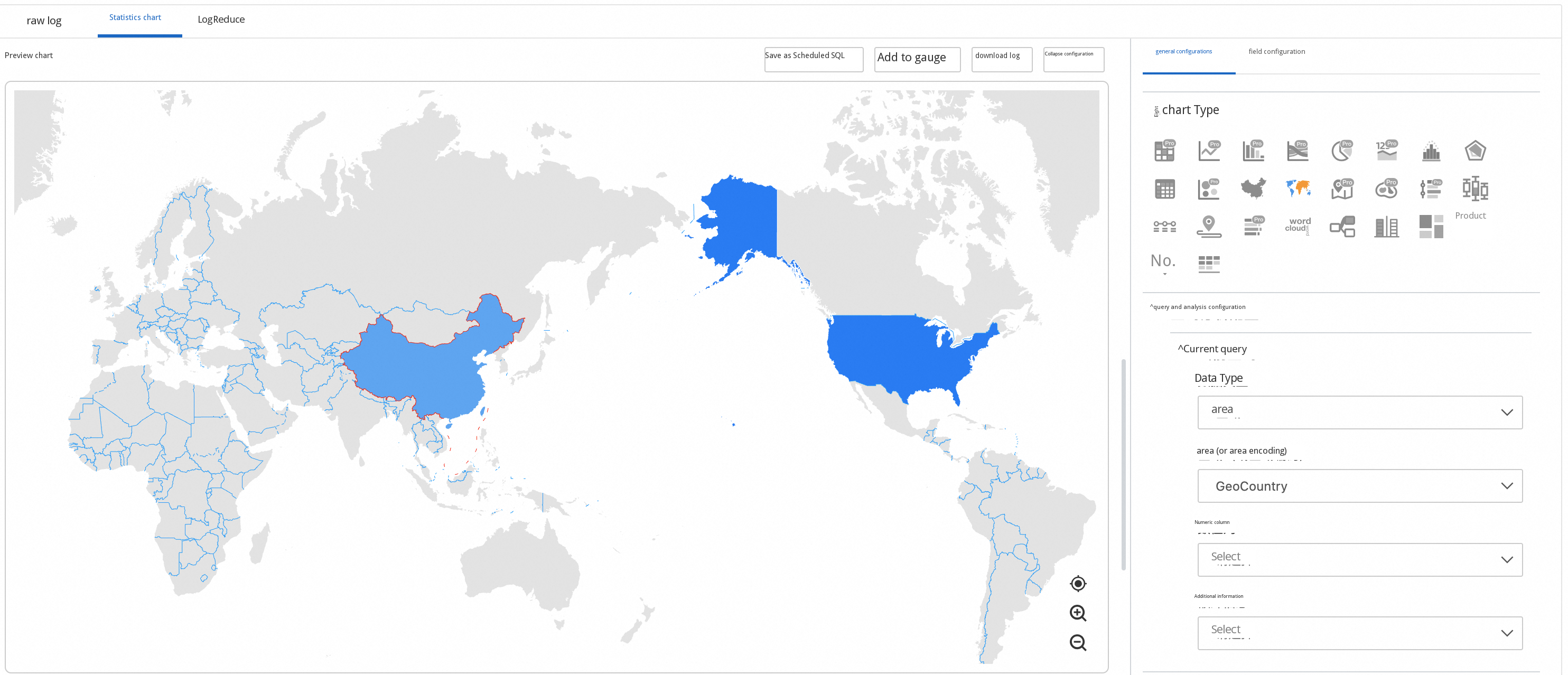

Sample 2: Top 10 attack source regions - This sample aggregates the block count and unique attacker count by country and city.

    * | SELECT
      GeoCountry,
      GeoCity,
      count(*) AS AttackCount,
      approx_distinct(ClientFingerprint) AS UniqueAttackers
    FROM log
    WHERE SecurityAction = 'block'
    GROUP BY GeoCountry, GeoCity
    ORDER BY AttackCount DESC
    LIMIT 10

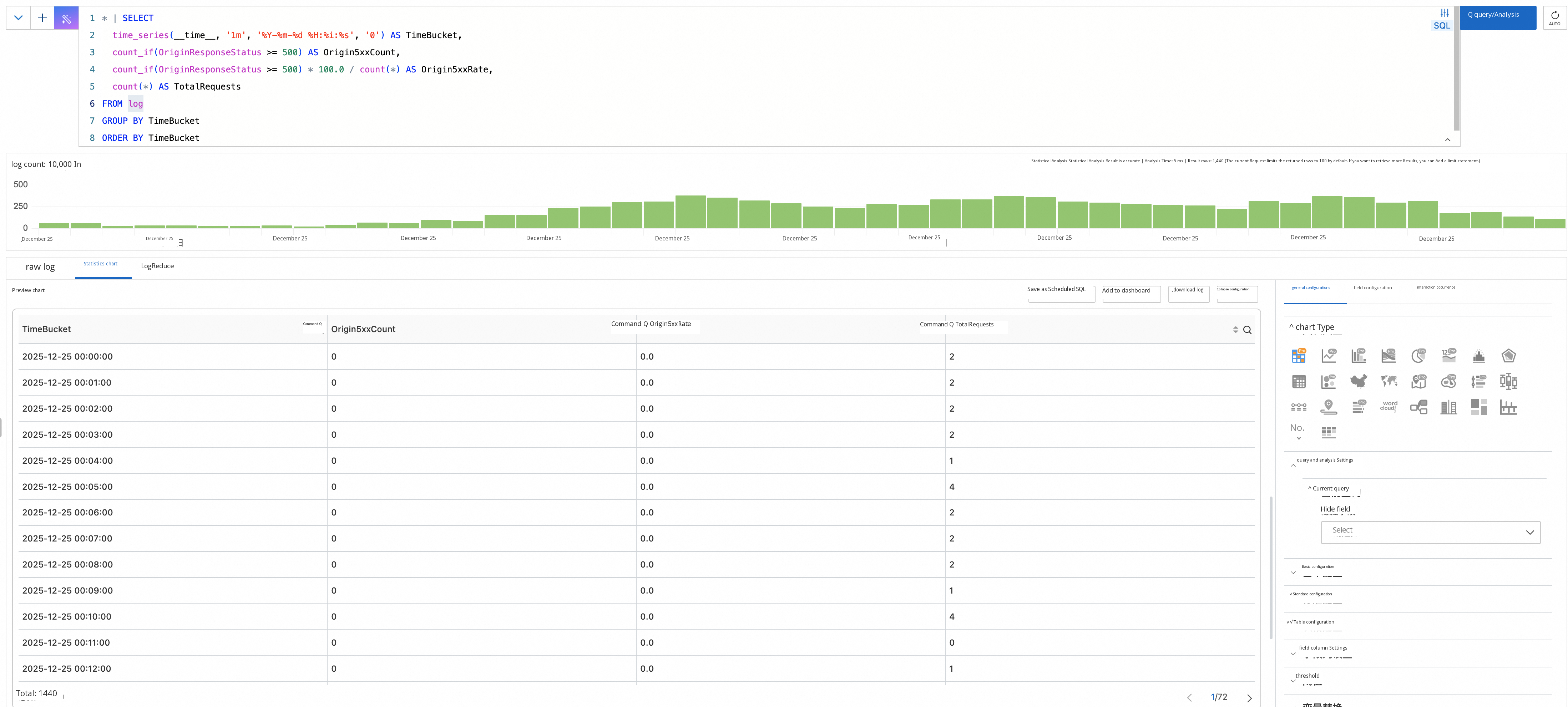

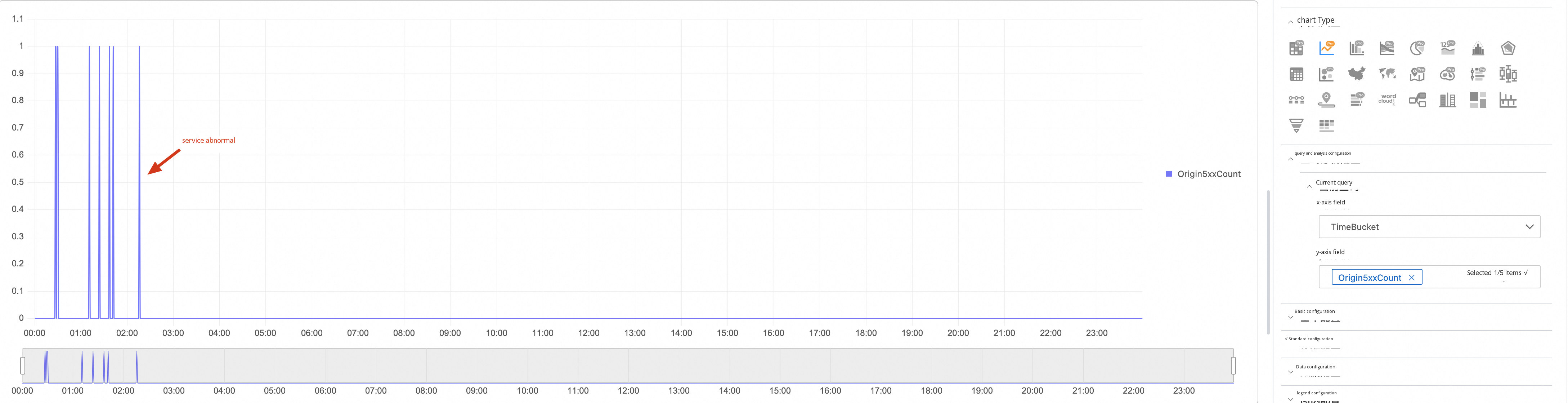

Sample 3: Origin 5xx fault trend - This sample aggregates the fault count, error rate, and total request count by minute.

    * | SELECT
      time_series(__time__, '1m', '%Y-%m-%d %H:%i:%s', '0') AS TimeBucket,
      count_if(OriginResponseStatus >= 500) AS Origin5xxCount,
      count_if(OriginResponseStatus >= 500) * 100.0 / count(*) AS Origin5xxRate,
      count(*) AS TotalRequests
    FROM log
    GROUP BY TimeBucket
    ORDER BY TimeBucket

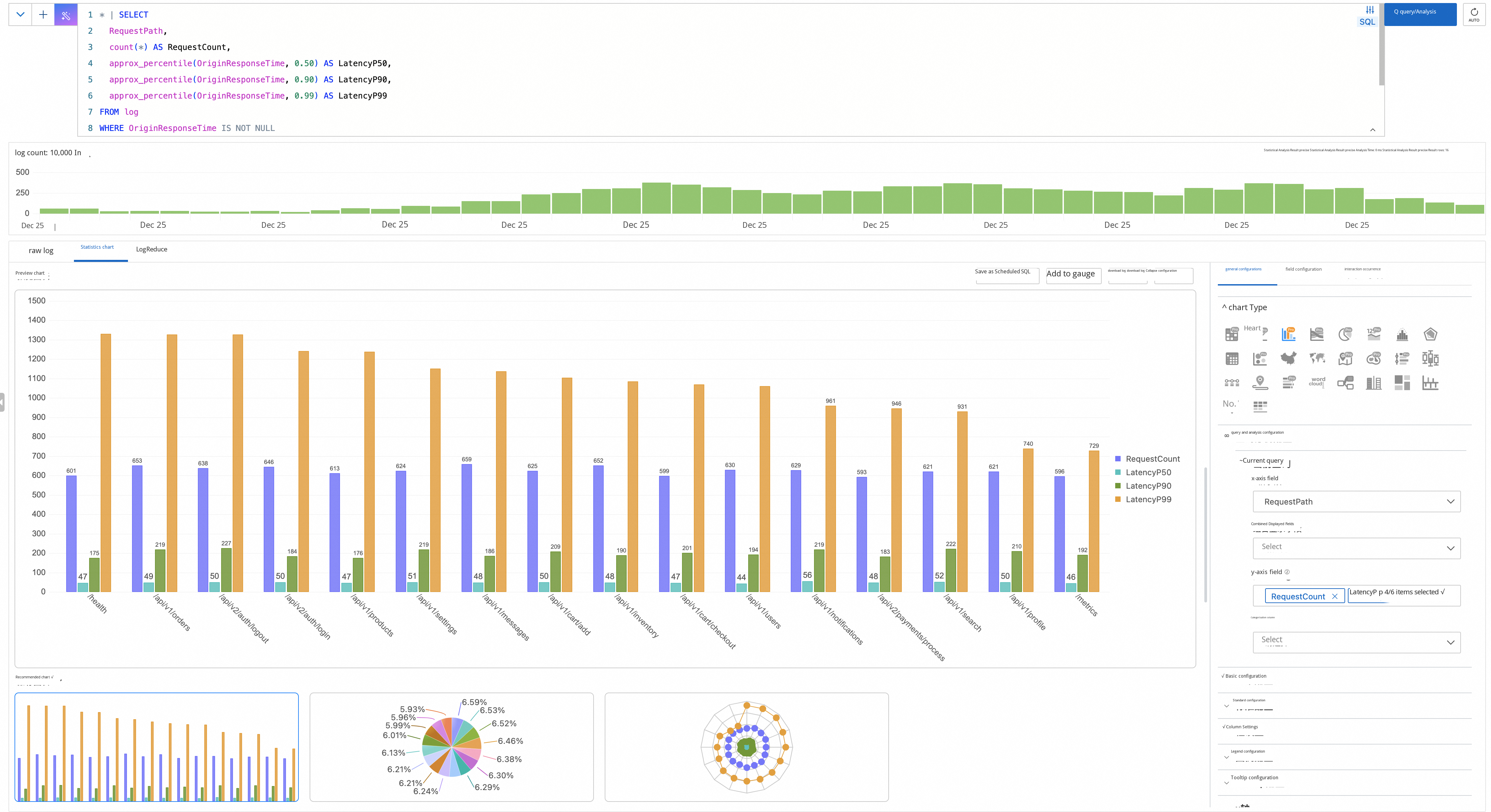

Sample 4: Request latency quantile analysis - This sample aggregates P50/P90/P99 latency by path to locate slow APIs.

    * | SELECT
      RequestPath,
      count(*) AS RequestCount,
      approx_percentile(OriginResponseTime, 0.50) AS LatencyP50,
      approx_percentile(OriginResponseTime, 0.90) AS LatencyP90,
      approx_percentile(OriginResponseTime, 0.99) AS LatencyP99
    FROM log
    WHERE OriginResponseTime IS NOT NULL
    GROUP BY RequestPath
    HAVING count(*) > 100
    ORDER BY LatencyP99 DESC
    LIMIT 20

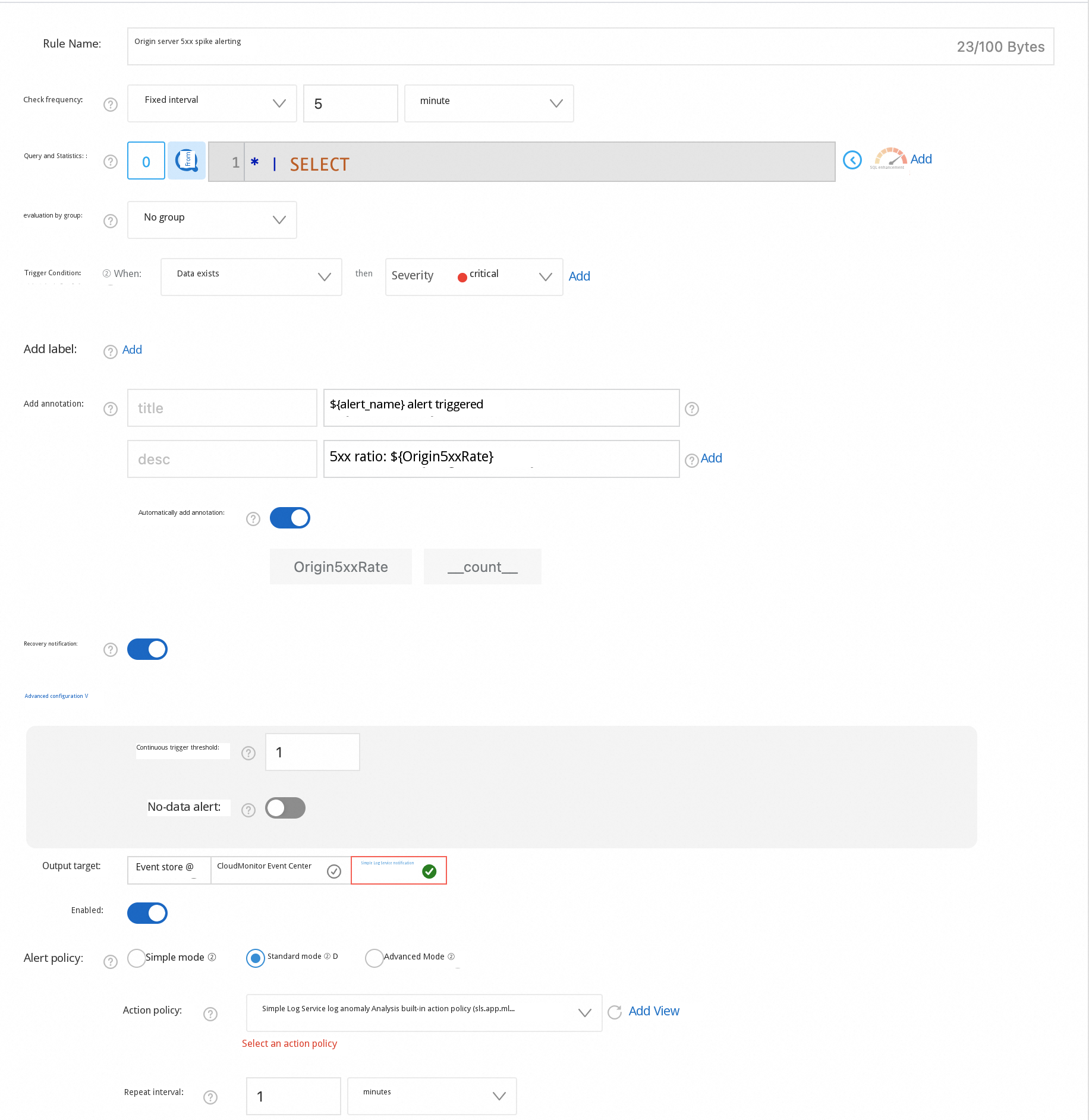
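When validating a latency query such as Sample 4 against a small exported sample, it can help to compute exact percentiles locally and compare them with the approximate values. The Python sketch below uses the nearest-rank method, which is one of several percentile definitions and is an assumption here, so small differences from `approx_percentile` are expected. The function names are hypothetical, and the latency values are assumed to have been converted to numbers already.

```python
from collections import defaultdict


def nearest_rank(values, pct):
    """Exact percentile by the nearest-rank method; pct is an integer 1..100."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, without floating-point rounding.
    rank = max(1, -(-pct * len(ordered) // 100))
    return ordered[rank - 1]


def latency_by_path(records, min_count=100):
    """Group records by RequestPath and compute P50/P90/P99 per path.

    Paths with min_count or fewer samples are skipped, mirroring the
    HAVING count(*) > 100 clause of the query.
    """
    groups = defaultdict(list)
    for r in records:
        if r.get("OriginResponseTime") is not None:
            groups[r["RequestPath"]].append(r["OriginResponseTime"])

    return {
        path: {
            "count": len(v),
            "p50": nearest_rank(v, 50),
            "p90": nearest_rank(v, 90),
            "p99": nearest_rank(v, 99),
        }
        for path, v in groups.items()
        if len(v) > min_count
    }


if __name__ == "__main__":
    sample = [{"RequestPath": "/api/v1/users", "OriginResponseTime": ms}
              for ms in range(1, 201)]
    print(latency_by_path(sample))
```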

Alert 1: Sudden increase in origin 5xx faults - This alert is triggered when the error rate exceeds 5% to rapidly discover origin abnormalities.

    * | SELECT
      count_if(OriginResponseStatus >= 500) * 100.0 / count(*) AS Origin5xxRate
    FROM log
    HAVING Origin5xxRate > 5
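The threshold logic of the 5xx alert can be checked offline with a small Python sketch before the rule is created. The function names are hypothetical, the records are assumed to be dictionaries shaped like the transformed sample (where `OriginResponseStatus` is a string), and treating an empty evaluation window as "no alert" is a design assumption rather than documented SLS behavior.

```python
def origin_5xx_rate(records):
    """Percentage of records whose origin status is 5xx, or None if empty."""
    total = len(records)
    if total == 0:
        return None  # avoid dividing by zero on an empty window
    errors = sum(1 for r in records if int(r["OriginResponseStatus"]) >= 500)
    return errors * 100.0 / total


def should_alert(records, threshold_pct=5.0):
    """True when the 5xx rate is strictly above the threshold."""
    rate = origin_5xx_rate(records)
    return rate is not None and rate > threshold_pct


if __name__ == "__main__":
    window = [{"OriginResponseStatus": "200"}] * 94 + [{"OriginResponseStatus": "502"}] * 6
    print(origin_5xx_rate(window), should_alert(window))
```

The comparison is strict, matching the `> 5` condition in the query, so a window with exactly 5% errors does not fire.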

Alert 2: Sudden increase in high-threat requests - This alert is triggered when the count exceeds 100 or the proportion exceeds 10% to detect potential attacks.

    * | SELECT
      count_if(IsHighRisk = 1) AS HighRiskCount,
      count_if(IsHighRisk = 1) * 100.0 / count(*) AS HighRiskRate
    FROM log
    HAVING HighRiskCount > 100 OR HighRiskRate > 10

Alert 3: Sudden increase in WAF blocks - This alert is triggered when the block count exceeds 1000 or the unique attacker count exceeds 50 to assess the attack posture.

    * | SELECT
      count_if(SecurityAction = 'block') AS BlockCount,
      approx_distinct(ClientFingerprint) AS UniqueAttackers
    FROM log
    HAVING BlockCount > 1000 OR UniqueAttackers > 50

During data migration, the network quality and cost of cross-cloud and cross-border transfer cannot be ignored. Therefore, we provide an optional capability that uses CloudFront to reduce the overhead of cross-cloud and cross-border transfer.

● Import data from Amazon S3 to Simple Log Service
