By Cheng Tan & Wang Chen

As a new entry point for model services, OpenClaw can help you write code, check emails, operate GitHub, set up scheduled tasks, and more. This kind of direct, instruction-driven interaction over IM gives users a sense of "satisfaction." However, as the backlog of historical commands grows and the number of long-horizon projects rises, problems arise:

● Security Spillover: Each Agent must configure its own API Key, leaving GitHub PATs and LLM Keys scattered everywhere. The CVE-2026-25253 vulnerability from January 2026 made us realize that this "self-hackable" architecture, convenient as it is, carries significant security risks.

● Memory Explosion: A single Agent takes on too many roles, doing frontend work, backend work, and documentation. The skills/ directory becomes increasingly chaotic and all kinds of memories are mixed together in MEMORY.md, so a large amount of irrelevant context is loaded every time. This wastes tokens and leads to memory confusion.

● Low Collaboration Efficiency with Multiple Agents: You have to configure each SubAgent, assign tasks, and synchronize progress by hand. Instead of focusing on business directives and outputs, you spend much of your time as the "nanny" of the Agents.

● Poor Mobile Experience: You want to command an Agent from your phone, only to find that integrating Feishu and DingTalk bots can take days or even weeks.

● High Configuration Bar: Even experienced programmers may need half a day to get from installation and configuration to actual use. Some platforms even offer paid OpenClaw installation services, including on-site setup.

If any of this sounds familiar, HiClaw was born for exactly this.

HiClaw = Super Evolution of OpenClaw, which can be understood as the Team version of OpenClaw.

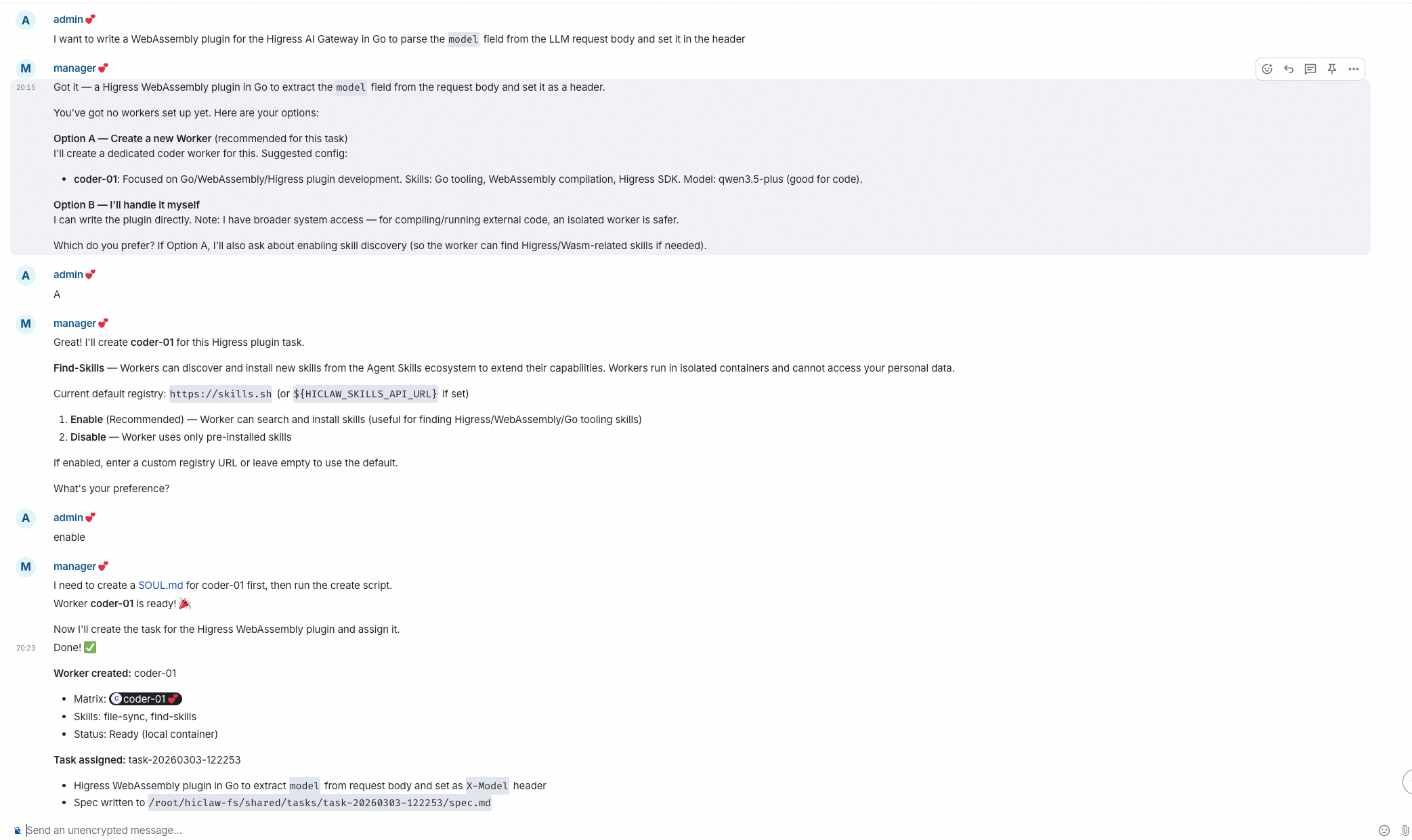

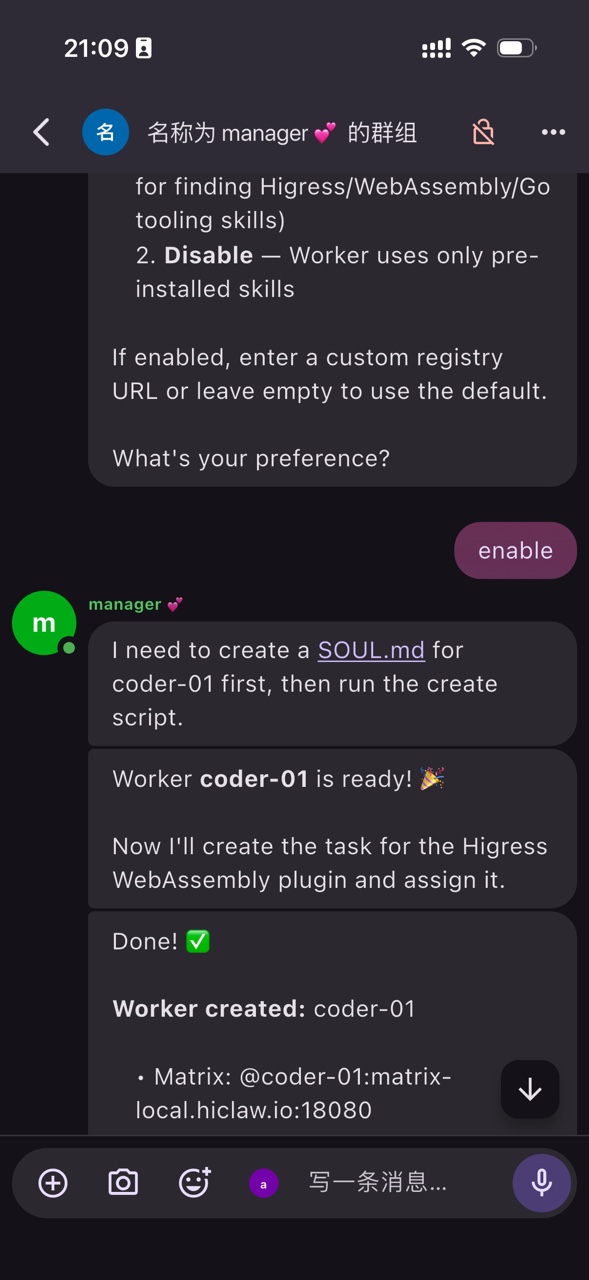

The core innovation is the introduction of the Manager Agent role based on OpenClaw. It does not directly perform tasks but helps you manage the Worker Agent team, just like Iron Man's butler Jarvis.

┌─────────────────────────────────────────────────────┐

│ Your local environment │

│ ┌───────────────────────────────────────────────┐ │

│ │ Manager Agent (AI butler) │ │

│ │ ↓ manages │ │

│ │ Worker Alice Worker Bob Worker ... │ │

│ │ (frontend dev) (backend dev) │ │

│ └───────────────────────────────────────────────┘ │

└─────────────────────────────────────────────────────┘

↑

You (human admin)

Only need to make decisions, not babysit

This management system is enabled on demand, and you can choose between two modes:

Mode 1: Direct Conversation with Manager

● Simple tasks can be directly told to the Manager, and it will handle them.

● Suitable for quick Q&A and simple operations.

Mode 2: Manager Assigns Worker

● Complex tasks are broken down by the Manager and assigned to specialized Workers.

● Each Worker has independent Skills and Memory.

● Skills and memories are completely isolated and do not contaminate each other.

In addition to the Manager Agent role, HiClaw has implemented multiple evolutions, including:

| Dimension | OpenClaw | HiClaw |

|---|---|---|

| Positioning | One or more Claws | A Claw Team managed by Manager |

| Deployment | Single process | Distributed container |

| Topology | Flat peer-to-peer | Manager + Workers |

| Credential management | Each Agent holds | AI Gateway centralized management |

| Model selection | Unified model | Optimal model allocated by Worker task type |

| Cost efficiency | Expensive models used for simple tasks as well | Cheap models used for simple tasks, saving 60-80% |

| Skills | Manual configuration | Manager allocates on demand |

| Memory | Mixed storage | Worker isolated |

| Communication | Internal bus | Matrix protocol |

| Mobile | Enterprise IM | Element + multiple client options |

| Fault isolation | Shared process | Container-level isolation |

| Multi-instance collaboration | Not supported | Interconnected through external IM channels |

| External channels | Must be configured by yourself | Out of the box (Channel plugin) |

We will elaborate on how to solve the challenges of implementing OpenClaw by combining these evolutions.

Under the native OpenClaw architecture, each Agent needs to hold a real API Key, which could be leaked if the Agent is attacked or unintentionally outputs it.

HiClaw's solution is: Workers never hold actual credentials. Actual API Keys, GitHub PATs, and other credentials are stored in the AI Gateway, and Workers call external services through the Gateway proxy. Even if a Worker is attacked, the attacker cannot obtain the actual credentials. The security design of the Manager is equally strict: it knows what tasks the Worker needs to perform but does not know the API Keys or GitHub PATs. The Manager's responsibility is to "manage and coordinate"; it does not directly execute file reads or writes or write code.

| Dimension | OpenClaw Native | HiClaw |

|---|---|---|

| Credential Holding | Each Agent holds its own | Worker only holds Consumer Token |

| Leakage Path | Agents can directly output credentials | Manager cannot access actual credentials |

| Attack Surface | Each Agent is an entry point | Only the Manager needs protection |
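The credential split above can be illustrated with a minimal sketch. It assumes the gateway keeps a table of real keys and a table of consumer tokens, and attaches the real key only on the outbound request. All names here (`REAL_KEYS`, `CONSUMERS`, `proxy_headers`) are hypothetical and are not taken from HiClaw or Higress.

```python
# Hypothetical sketch: none of these names come from HiClaw or Higress.
# Real credentials live only inside the gateway process.
REAL_KEYS = {
    "llm": "sk-real-provider-key",
    "github": "ghp-real-personal-access-token",
}

# Consumer token -> set of upstream services that token may call.
CONSUMERS = {"worker-alice-token": {"llm"}}


def proxy_headers(consumer_token: str, upstream: str) -> dict:
    """Return the headers the gateway would send upstream for this consumer."""
    allowed = CONSUMERS.get(consumer_token)
    if allowed is None:
        raise PermissionError("unknown consumer token")
    if upstream not in allowed:
        raise PermissionError(f"consumer may not call {upstream}")
    # The real key is attached here, after the request has left the Worker,
    # so the Worker never sees it.
    return {"Authorization": f"Bearer {REAL_KEYS[upstream]}"}
```

In this sketch a leaked consumer token can be revoked by deleting one entry from `CONSUMERS`, without rotating the real provider key.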

OpenClaw has a great open skill ecosystem, skills.sh. The community already offers over 80,000 skills that can be installed with a single click, covering crawler tools, mainstream frontend libraries, SEO hacks, and more.

However, in native OpenClaw you may hesitate to use them freely. You cannot fully audit every SKILL.md in a public skill library, and because the Agent itself holds your API Key and other credentials, a skill that induces the Agent to output credentials or execute dangerous commands could have severe consequences.

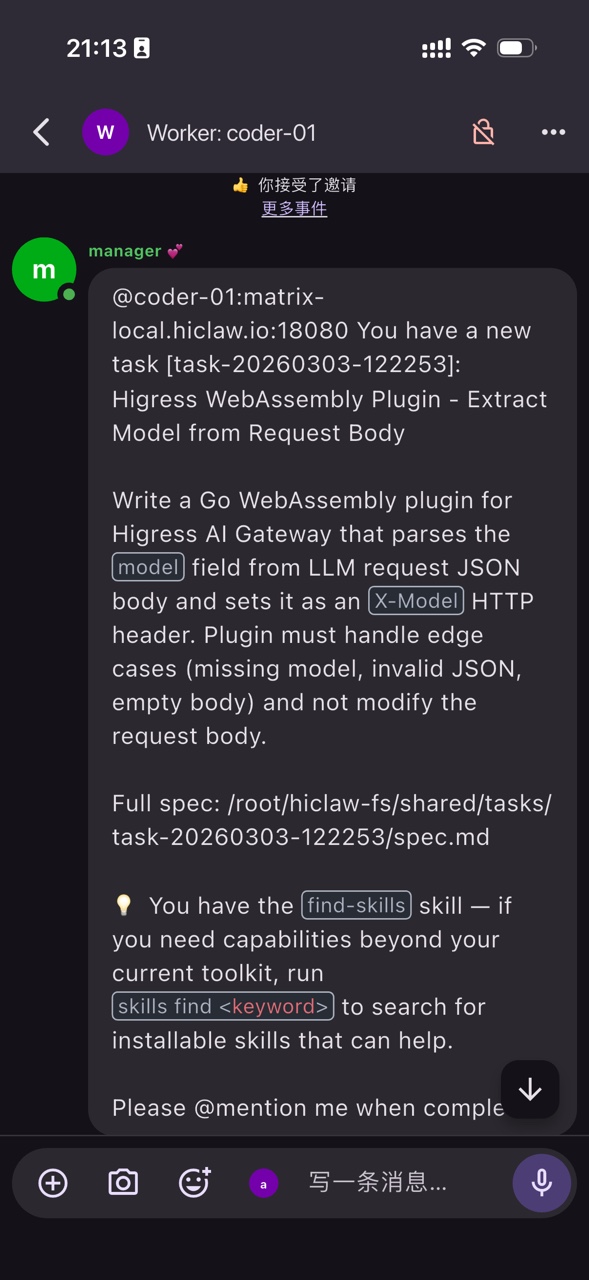

Thanks to HiClaw's design, each Worker runs in a fully isolated container and holds no actual credentials. Developers can confidently let Claw retrieve and autonomously install skills.

What can a Worker access?

✅ Task files, the code repository, and its own working directory

✅ Consumer Token (like an "access badge" that can only call the AI API)

❌ Your LLM API key

❌ Your GitHub PAT

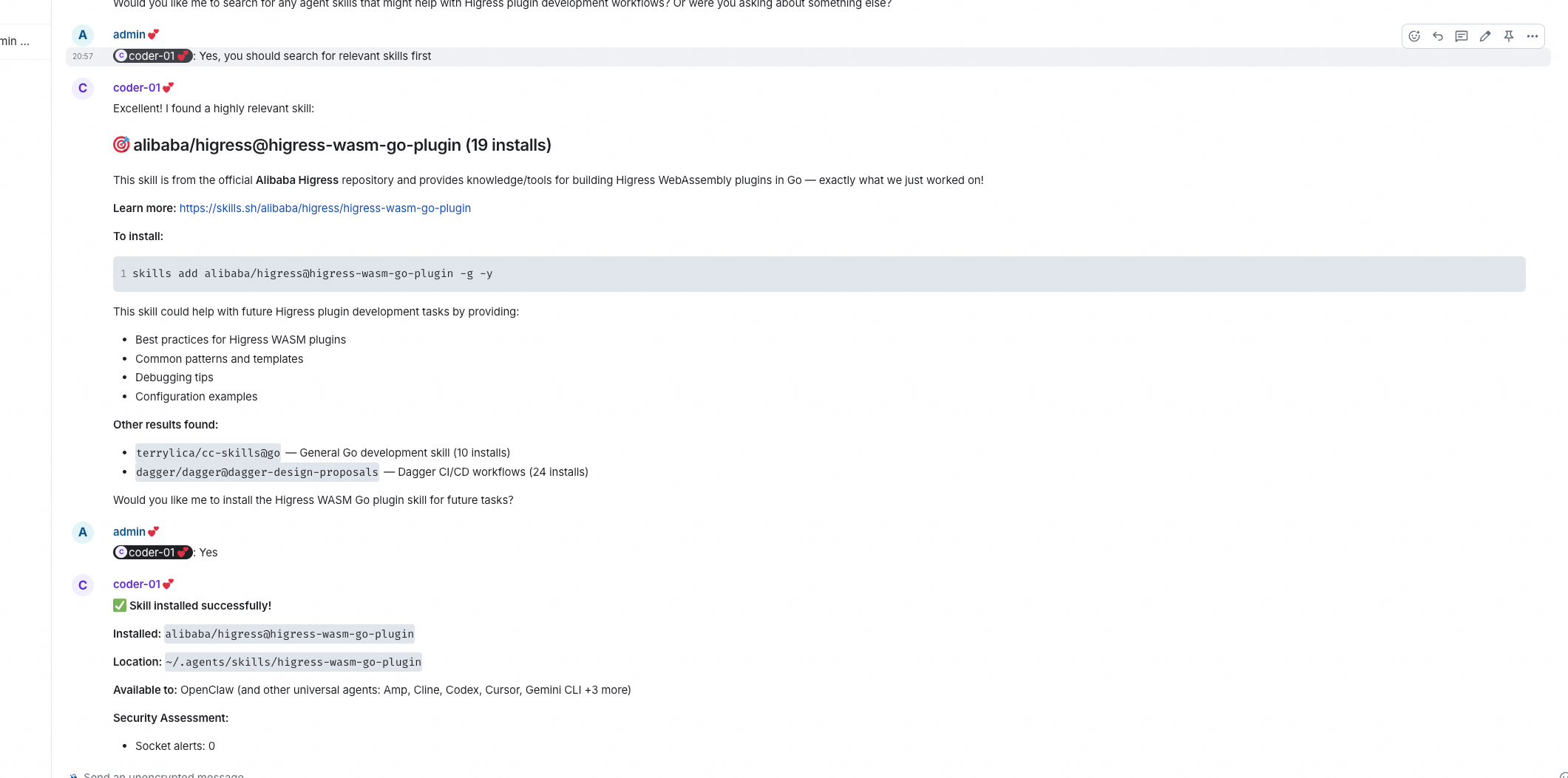

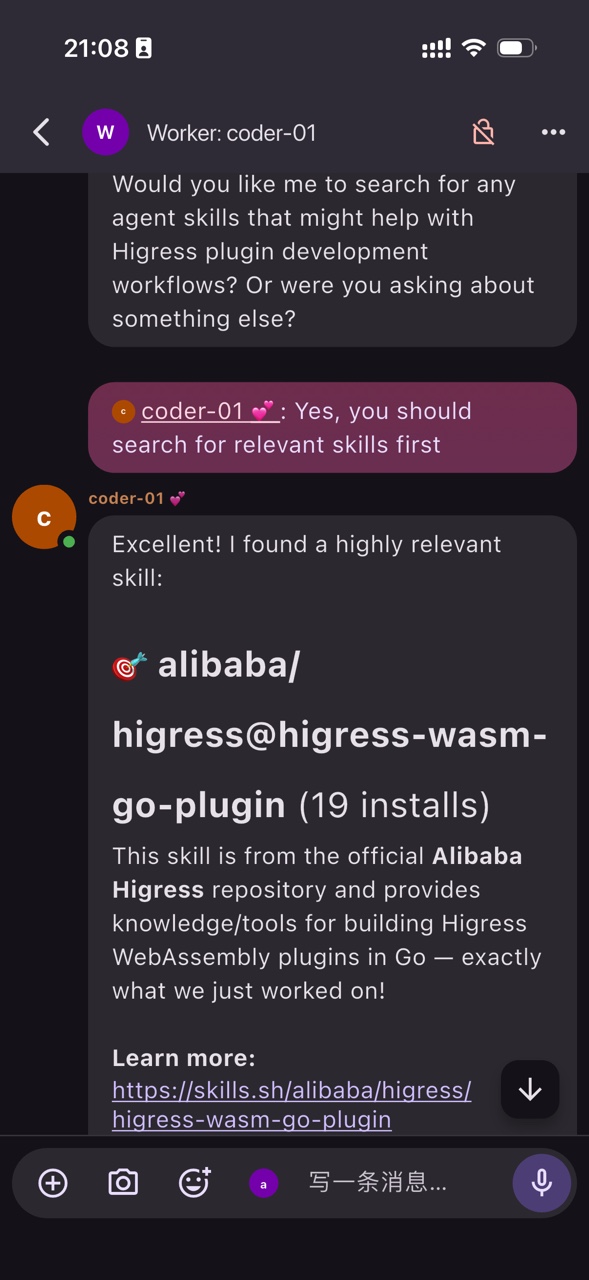

❌ Any encrypted credentialsIn addition, HiClaw has built-in the find-skills skill for Workers. When Workers encounter tasks that require specific domain knowledge, they will automatically search for and install suitable skills:

Manager assigns a task: "Develop a Higress WASM Go plugin"

↓

Worker realizes it’s missing the required tooling

↓

skills find higress wasm

→ alibaba/higress@higress-wasm-go-plugin (3.2K installs)

↓

skills add alibaba/higress@higress-wasm-go-plugin -g -y

↓

Skill installed. The Worker now has a complete plugin dev scaffold and workflow.

If you have concerns or need to accumulate internal skills, HiClaw also supports switching to a self-built private skill library. Just set an environment variable in the Manager:

SKILLS_API_URL=https://your-private-registry.example.com

As long as your private registry implements the same API as skills.sh, the Worker will seamlessly switch to searching it. In both scenarios, the Worker is used in exactly the same way.
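As a rough illustration of how such a switch could be resolved on the client side, the sketch below reads `SKILLS_API_URL` and falls back to a public default. The default base URL and the function name are assumptions made for this example, not HiClaw's actual implementation.

```python
import os

# Assumption: placeholder for the public registry base URL.
DEFAULT_SKILLS_API_URL = "https://skills.sh"


def skills_api_url(env=None) -> str:
    """Return the registry base URL, preferring SKILLS_API_URL when it is set."""
    env = os.environ if env is None else env
    url = env.get("SKILLS_API_URL", "").strip()
    # An empty or missing variable means "use the public registry".
    return (url or DEFAULT_SKILLS_API_URL).rstrip("/")
```

Because only the base URL changes, the search and install calls built on top of it stay identical for public and private registries.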

The mobile experience of OpenClaw is hard to describe: you want to command Agents to work on your phone but find that the bot integration processes for Feishu and DingTalk have to go through company approval processes, and the company has a limit on bot API quotas.

HiClaw has a built-in Matrix server, supporting multiple clients:

● Use it directly after one-click installation: No need to configure Feishu/DingTalk bots.

● Command anytime on your phone: Download the Matrix client, Element Mobile or FluffyChat.

● Messages are pushed in real time: They are not folded away into a "service account" inbox.

● All conversations are visible: You, the Manager, and the Worker are in the same Room, fully transparent.

With native OpenClaw, you configure each SubAgent, assign tasks, and synchronize progress by hand. You want to focus on business instructions and outputs, but you end up spending much of your time as the Agents' "nanny".

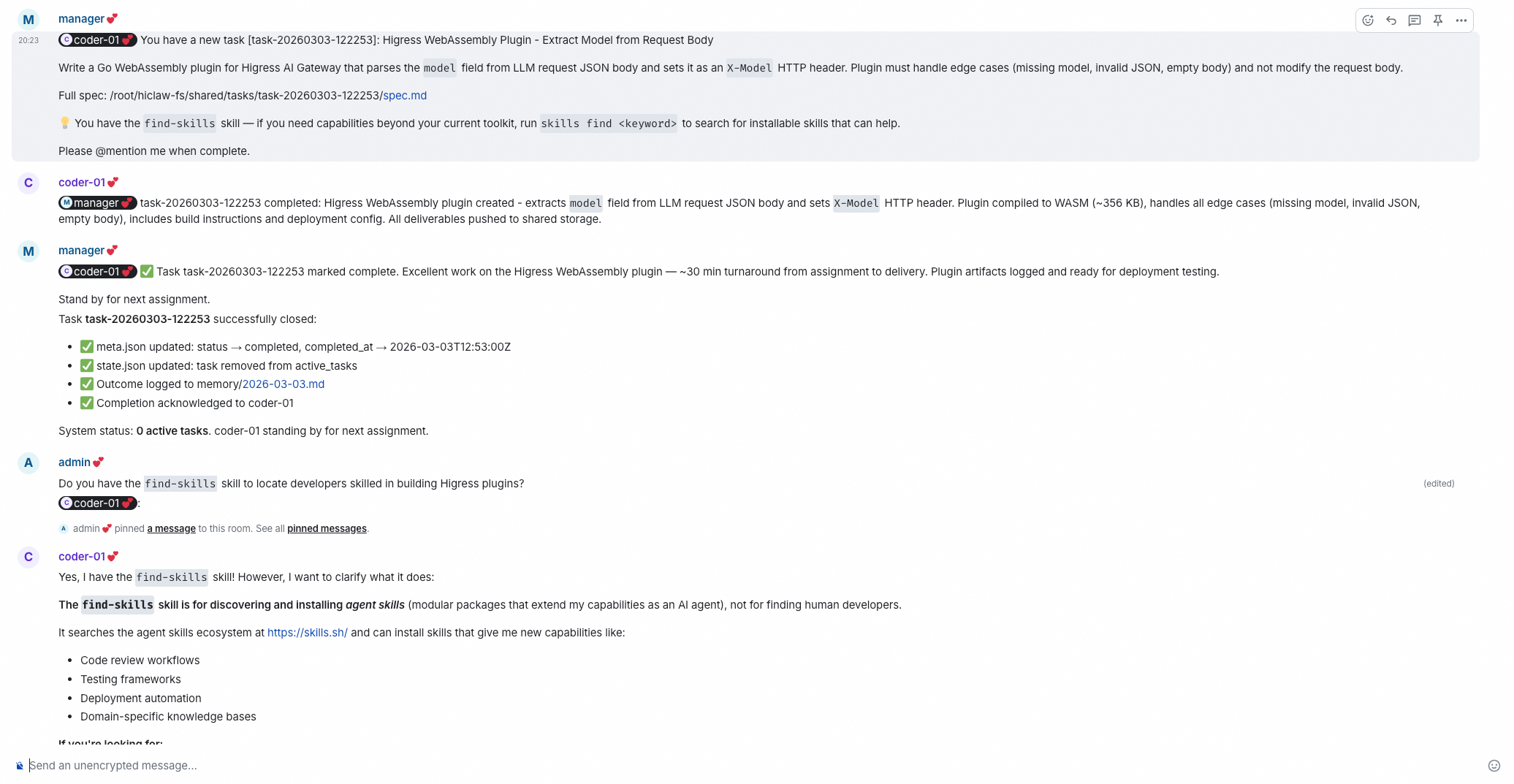

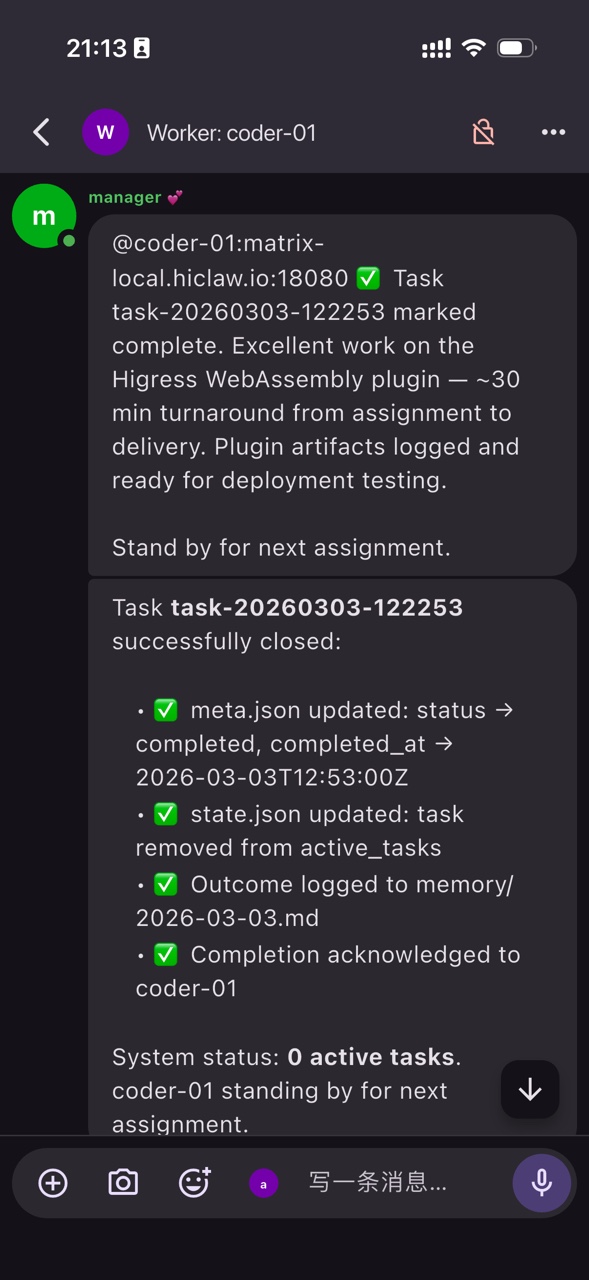

From the perspective of Manager-Worker, HiClaw is a Supervisor architecture: the Manager coordinates all Workers as a central node. But because it collaborates in the Matrix group chat room, it also has characteristics of a Swarm architecture.

In Swarm mode, each Agent can see the complete context in the group chat room:

● Alice says, "I’m working on the login page".

● Bob automatically knows what the frontend is working on and can coordinate during API design.

● No need for the Manager to perform additional information synchronization.

HiClaw has implemented anti-swarming design. It avoids triggering all Agents to call LLM for every message in the group, as the costs and delays would skyrocket.

Rule: An agent only triggers an LLM call when it is @mentioned.

● Group chat messages mainly consist of meaningful communication information.

● Agents will not be awakened by irrelevant messages.

● Costs are controllable, and responses are timely.
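The @mention rule can be sketched as a small filter in front of the LLM call. This example assumes the room event carries the Matrix `m.mentions` field with a `user_ids` list. The function name and the sample user IDs are hypothetical, and HiClaw's real trigger logic may differ.

```python
def should_call_llm(event: dict, agent_user_id: str) -> bool:
    """Wake the agent only when the message explicitly mentions it."""
    mentions = event.get("content", {}).get("m.mentions", {})
    return agent_user_id in mentions.get("user_ids", [])


# Example room events (illustrative data only).
mentioned = {
    "content": {
        "body": "@bob please design the login API",
        "m.mentions": {"user_ids": ["@bob:example.org"]},
    }
}
chatter = {"content": {"body": "I'm working on the login page"}}
```

With this gate, `chatter` stays visible in the room as shared context but costs no tokens, while `mentioned` wakes only Bob.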

Compared to the native OpenClaw Sub Agent system, HiClaw's Multi-Agent system is not only easier to use but also more transparent:

Core advantages:

● Fully visible: All Agents' collaboration processes are in the Matrix group chat.

● Can intervene at any time: If a problem is found, you can directly @ a specific Agent to correct it.

● Natural interaction: Just like collaborating with a group of colleagues in a WeChat group.

HiClaw's Manager can help you do these things:

| Capabilities | Description |

|---|---|

| Worker lifecycle management | "Help me create a frontend Worker" → Automatically completes configuration and skill assignments |

| Automatic task assignment | You state the goal, and the Manager breaks it down and assigns it to the suitable Worker |

| Heartbeat automatic supervision | Regularly checks the Worker status and automatically alerts you if stuck |

| Automatically initiated project groups | Creates Matrix Room for the project and invites relevant personnel |
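The heartbeat supervision row can be pictured as a periodic staleness check. The sketch below assumes the Manager records when each Worker last reported progress. The function name, data shape, and timeout are illustrative assumptions rather than HiClaw's actual mechanism.

```python
from datetime import datetime, timedelta


def stuck_workers(last_seen: dict, now: datetime, timeout: timedelta) -> list:
    """Return the names of Workers whose last report is older than timeout."""
    return sorted(name for name, seen in last_seen.items() if now - seen > timeout)


# Illustrative data: alice reported 5 minutes ago, bob 2 hours ago.
now = datetime(2026, 3, 1, 12, 0, 0)
last_seen = {
    "alice": now - timedelta(minutes=5),
    "bob": now - timedelta(hours=2),
}
alerts = stuck_workers(last_seen, now, timeout=timedelta(minutes=30))
```

Here `alerts` contains only `bob`, which is the Worker the Manager would flag to the human admin.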

An Agent takes on too many roles, doing both frontend and backend work, and writing documentation. The skills/ directory is getting more chaotic, and MEMORY.md is filled with all kinds of memories, loading a lot of irrelevant context each time. This wastes tokens and can cause memory confusion.

A key design of HiClaw: Intermediate work products are not sent to group chat. A large amount of collaboration between Agents (file exchanges, code snippets, temporary data) is accomplished through the underlying MinIO shared file system:

┌─────────────────────────────────────────────────────────────┐

│ Matrix group chat room │

│ Keep only meaningful communication and decisions │

│ (lean, minimal context) │

└─────────────────────────────────────────────────────────────┘

↑ meaningful info

│

┌─────────────────────────────────────────────────────────────┐

│ MinIO shared file system │

│ Large intermediate artifacts like code, docs, temp files │

│ (keeps the chat context uncluttered) │

└─────────────────────────────────────────────────────────────┘

This way, the context in the group chat stays at a reasonable size and does not balloon because of large file exchanges.
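The split between chat and shared storage can be sketched as "write the artifact, post only a pointer". The example below uses a local directory as a stand-in for the MinIO-backed shared file system. The function name and message format are hypothetical, and only the `tasks/<task-id>/result.md` layout mirrors the paths shown elsewhere in this article.

```python
from pathlib import Path


def publish_result(shared_root: Path, task_id: str, body: str) -> str:
    """Write the full result to shared storage and return a short chat message."""
    result_path = shared_root / "tasks" / task_id / "result.md"
    result_path.parent.mkdir(parents=True, exist_ok=True)
    result_path.write_text(body, encoding="utf-8")
    # Only the pointer goes to the group chat, never the artifact itself.
    return f"@manager {task_id} done. Result: {result_path}"
```

However large `body` is, the chat message stays one short line, so the room context grows with the number of decisions rather than with the size of the files.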

Assuming a project requires:

● 3 code development tasks (50k tokens each)

● 10 information collection tasks (100k tokens each)

Native OpenClaw (using Sonnet uniformly):

Code: 3 × 50k × $3/M = $0.45

Information collection: 10 × 100k × $3/M = $3.00

Total: $3.45

HiClaw (models allocated by task type):

Code: 3 × 50k × $3/M = $0.45 (Sonnet)

Information collection: 10 × 100k × $0.25/M = $0.25 (Haiku)

Total: $0.70

This saves about 80% of the cost while preserving code quality.
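The arithmetic above can be reproduced with a few lines of Python. The prices and token counts are the ones used in this example. The function and variable names are only for illustration.

```python
# USD per million tokens, as used in the example above.
PRICE_PER_M = {"sonnet": 3.00, "haiku": 0.25}

# (task kind, number of tasks, tokens per task)
TASKS = [("code", 3, 50_000), ("info", 10, 100_000)]


def total_cost(tasks, model_for) -> float:
    """Sum the cost of all tasks, choosing a model per task kind."""
    return sum(
        count * tokens * PRICE_PER_M[model_for(kind)] / 1_000_000
        for kind, count, tokens in tasks
    )


uniform = total_cost(TASKS, lambda kind: "sonnet")
routed = total_cost(TASKS, lambda kind: "sonnet" if kind == "code" else "haiku")
savings = 1 - routed / uniform

print(f"uniform=${uniform:.2f} routed=${routed:.2f} savings={savings:.0%}")
```

The saving comes entirely from the information collection tasks, which dominate the token volume but do not need the more expensive model.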

The design of OpenClaw is like a complete organism: it has a brain (LLM), a central nervous system (pi-mono), and sensors like eyes and mouth (various Channels). However, in the native design, both the brain and the sensors are "external"; you need to configure the LLM Provider yourself and connect various message channels.

HiClaw has undergone an "organ transplant" that turns these external components into internal organs:

┌────────────────────────────────────────────────────────────────────┐

│ HiClaw All-in-One │

│ ┌──────────────────────────────────────────────────────────────┐ │

│ │ OpenClaw (pi-mono) │ │

│ │ Central nervous system │ │

│ └──────────────────────────────────────────────────────────────┘ │

│ ↑ ↑ │

│ ┌────────────────┐ ┌────────────────┐ │

│ │ Higress AI │ │ Tuwunel │ │

│ │ Gateway │ │ Matrix │ │

│ │ (brain access) │ │ Server │ │

│ │ │ │ (sense organs) │ │

│ │ Flexibly │ │ │

│ │ switch LLM │ │ Element Web │

│ │ providers │ │ FluffyChat │

│ │ and models │ │ (clients built-in) │

│ └────────────────┘ └────────────────┘ │

└────────────────────────────────────────────────────────────────────┘

The brain (LLM) is no longer external but flexibly managed through the AI Gateway:

● A single entry point with multiple models: Switch between different model providers like Qwen, OpenAI, Claude, etc. on the Higress console

● Centralized credential management: API Keys only need to be configured once and are shared among all Agents

● On-demand authorization: Each Worker only receives calling rights and never comes into contact with the actual API Key

Communication access: Built-in Matrix Server

Sensors have also become built-in:

● Tuwunel Matrix Server: An out-of-the-box messaging server with no configuration needed

● Includes Element Web client: Open the browser and you can start chatting

● Mobile-friendly: Supports FluffyChat, Element Mobile, and other cross-platform clients

● Zero integration cost: No need to apply for Feishu/DingTalk bots, no waiting for approvals

💡 To put it another way: Native OpenClaw is like an assembled computer; you need to buy a graphics card (LLM), a monitor (Channel), and install drivers yourself. HiClaw, on the other hand, is like a ready-to-use laptop with all peripherals integrated, ready to work right away.

HiClaw integrates multiple open-source components (Higress, Tuwunel, MinIO, Element Web...), but you don't have to worry about deployment complexity. Following an all-in-one approach, HiClaw packages the configuration for you, which removes the high setup barrier.

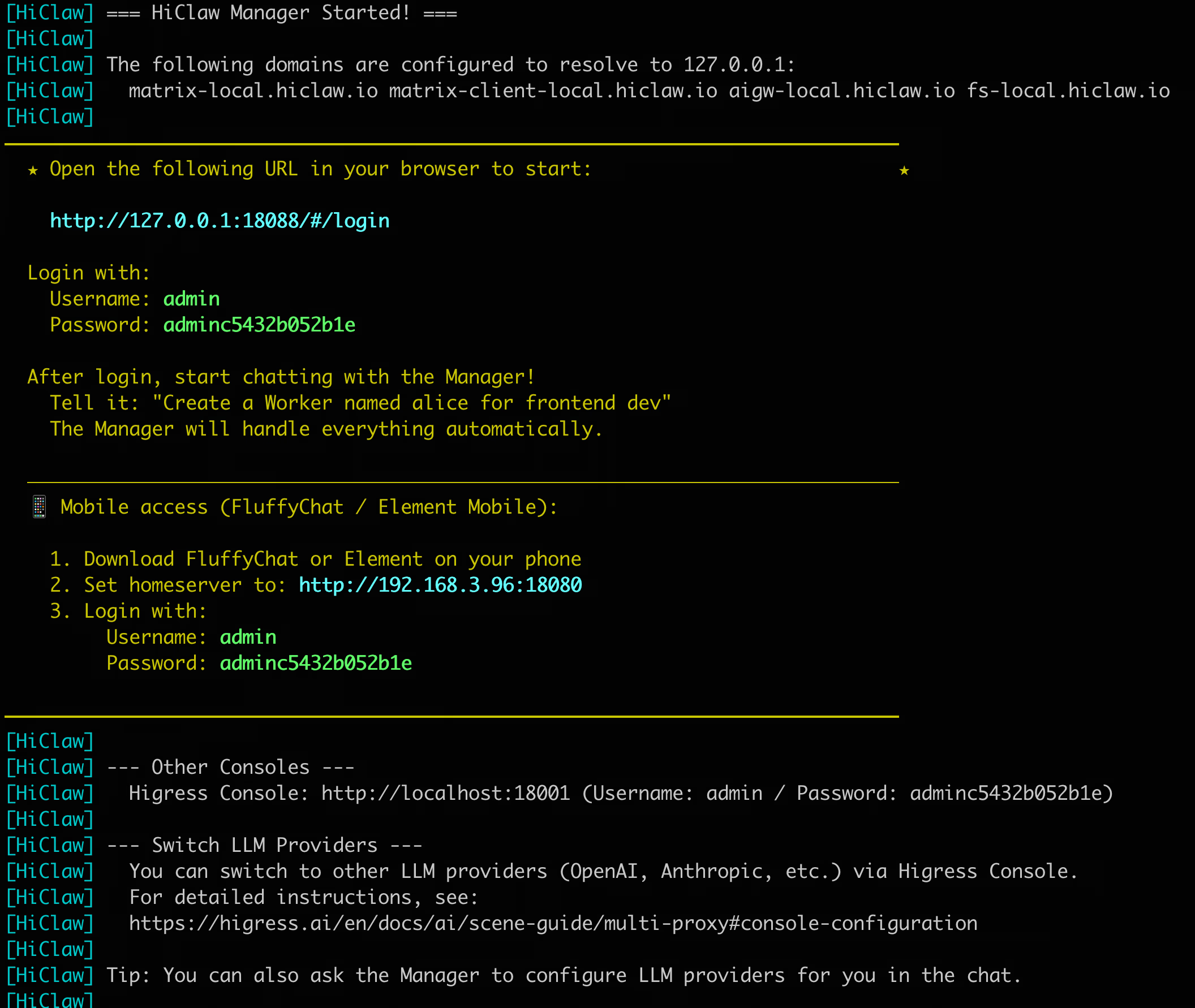

macOS / Linux:

bash <(curl -sSL https://higress.ai/hiclaw/install.sh)

Windows (PowerShell 7+):

Set-ExecutionPolicy Bypass -Scope Process -Force; Invoke-Expression ((New-Object System.Net.WebClient).DownloadString('https://higress.ai/hiclaw/install.ps1'))

The features of this script include:

● Cross-platform compatibility: Supports Mac, Linux, and Windows

● Intelligent detection: Automatically selects the nearest image registry mirror based on timezone

● Docker encapsulation: All components run in containers, eliminating OS differences

● Minimal configuration: Only requires one LLM API Key; everything else is optional

After the installation is complete, you will see:

It is truly out of the box, not the kind of setup that still takes half a day of configuration after installation. It includes the following components:

| Component | Port | Purpose |

|---|---|---|

| Higress Gateway | 18080 | AI Gateway + Reverse Proxy |

| Higress Console | 18001 | Model configuration, routing management |

| Element Web | 18088 | Chat client (browser) |

| MinIO | 9000/9001 | Shared file system |

http://127.0.0.1:18088
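After installation, a quick way to confirm that the containers are listening is to probe the ports from the table. The following is a minimal sketch using plain TCP connections. The helper names are hypothetical, and the port mapping in the usage note is an example taken from the table above, not output from HiClaw itself.

```python
import socket


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_components(ports: dict, host: str = "127.0.0.1") -> dict:
    """Map each component name to whether its port accepts connections."""
    return {name: port_open(host, port) for name, port in ports.items()}
```

For example, `check_components({"Higress Gateway": 18080, "Higress Console": 18001, "MinIO": 9000})` returns one boolean per component. A `False` value only means nothing accepted a TCP connection on that port, so it is a first check rather than a full health probe.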

Suppose you want to create a SaaS product—from idea to launch to growth, traditionally you need product, design, development, testing, operation... but now you can do it this way:

You: Create 4 Workers for me:

- alex: Product Manager

- sam: Full-stack Developer

- taylor: Content Operations

- jordan: Data Analyst

Manager: OK. 4 Workers created, each with independent skills and memory.

You: Start the project: AI Writing Assistant MVP, needs to go live next week

Manager: Got it. I’ll plan the project...

[Create a project group chat and invite all Workers]

[Draft the project plan]

Here’s the plan—please confirm:

Phase 1: Product definition

- task-001: Competitive research & PRD (alex)

Phase 2: Development

- task-002: Tech stack selection & architecture (sam, depends on task-001)

- task-003: Core feature development (sam, depends on task-002)

- task-004: Product acceptance (alex, depends on task-003)

Phase 3: Launch prep

- task-005: Launch asset preparation (taylor, depends on task-001)

Once confirmed, I’ll start assigning tasks.

You: Confirm

Manager: [Update project status to active]

@alex You have a new task [task-001]: Competitive research & PRD

Goal: Deliver a PRD that clearly defines the MVP scope

Spec: ~/hiclaw-fs/shared/tasks/task-001/spec.md

After finishing, report back in the project group and @mention me

[1 hour later]

alex: @manager task-001 done

PRD delivered: core features include AI continuation, multi-model switching, history

Result: ~/hiclaw-fs/shared/tasks/task-001/result.md

Manager: [Read result.md, status: SUCCESS]

[Update project plan, mark task-001 as done]

@sam You have a new task [task-002]: Tech stack selection & architecture

PRD reference: ~/hiclaw-fs/shared/tasks/task-001/result.md

@taylor You have a new task [task-005]: Launch asset preparation

Product positioning reference: ~/hiclaw-fs/shared/tasks/task-001/result.md

[1 hour later]

sam: @manager task-002 done

Stack: Next.js + Vercel + Supabase

Manager: [Update plan, assign task-003]

@sam Continue with core feature development [task-003]

[1 hour later]

sam: @manager task-003 done, deployed to Vercel

Demo URL: https://xxx.vercel.app

Manager: [Update plan, assign task-004]

@alex Please accept [task-004]: Product acceptance

Test URL: https://xxx.vercel.app

Verify feature completeness against the PRD

[An issue found during acceptance]

alex: @manager task-004 needs changes

Issue: There’s no guidance for multi-model switching—users don’t know what to choose

Suggestion: Add a model comparison / guidance page

Result: ~/hiclaw-fs/shared/tasks/task-004/result.md

Status: REVISION_NEEDED → back to task-003

Manager: [Read result.md, status: REVISION_NEEDED]

[Create revision task task-006]

@sam task-004 acceptance found issues that need fixing

Revision task [task-006]: Add a model selection guidance page

Feedback details: ~/hiclaw-fs/shared/tasks/task-004/result.md

Report back and @mention me when done

[Fix completed]

sam: @manager task-006 done, guidance page added

Manager: [task-006 done, task-004 re-accepted and passed]

[All dev tasks completed]

@you MVP development completed!

- Deployed: https://xxx.vercel.app

- Product acceptance passed

- Launch assets are ready

Project plan: ~/hiclaw-fs/shared/projects/proj-xxx/plan.md

You: Prepare to launch. @taylor Product Hunt post tomorrow

taylor: Got it—scheduled. Assets are ready.

[Launch day — Manager automatically checks progress]

Manager: @you Product Hunt launch reminder

Current rank: #3

Upvotes: 423

Comments: 87

@jordan Please set up analytics instrumentation

jordan: Got it. Setting up GA4 + custom events...

[After data is ready]

jordan: @manager Instrumentation complete

Dashboard: https://analytics.google.com/xxx

Day 1 metrics:

- Sign-ups: 1,247

- Day-2 retention: 34%

- AI continuation usage: 78%

- Multi-model switching usage: 23%

Manager: @you Project “AI Writing Assistant MVP” launch daily report

Key metrics:

- Day 1 sign-ups: 1,247

- Day-2 retention: 34%

- Feature usage: continuation 78%, switching 23%

Insight: Multi-model switching usage is low

Recommendation: @alex analyze the cause and improve the guidance flow

[This is how the Manager runs end-to-end: plan → assign → monitor → coordinate → report]

One AI Manager with 4 AI assistants completed the work of a whole team. All you need to do is lie on the couch, check progress on your phone, and step in to guide key decisions.
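The "depends on" relationships in the project plan from the transcript can be modeled as a small dependency table. The sketch below is a hypothetical illustration of how a coordinator could pick the next tasks to assign. It is not HiClaw's actual scheduler, and the function and variable names are made up for this example.

```python
# Dependencies as listed in the example project plan.
DEPENDS_ON = {
    "task-001": [],
    "task-002": ["task-001"],
    "task-003": ["task-002"],
    "task-004": ["task-003"],
    "task-005": ["task-001"],
}


def ready_tasks(done: set, started: set) -> list:
    """Return tasks not yet started whose dependencies are all done."""
    return sorted(
        task
        for task, deps in DEPENDS_ON.items()
        if task not in done
        and task not in started
        and all(dep in done for dep in deps)
    )


first_wave = ready_tasks(done=set(), started=set())
second_wave = ready_tasks(done={"task-001"}, started=set())
```

This matches the transcript: only task-001 can start at first, and once it is done, task-002 and task-005 become assignable at the same time.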

HiClaw retains the core concepts of OpenClaw (natural language conversation, Skills ecosystem, IM Native, etc.), while addressing pain points in security and usability. If you are:

● Independent developer: A person who wants to accomplish the work of a team

● Deep user of OpenClaw: Looking for a more secure and user-friendly experience

● Founder of a one-person company: Need AI assistants to help share the workload

HiClaw is prepared for you.

HiClaw is an open-source project based on the Apache 2.0 license, developed by the Higress team using OpenClaw, Higress AI Gateway, Element IM client + Tuwunel IM server (all based on the Matrix real-time communication protocol), and MinIO shared file system.

● GitHub: https://github.com/higress-group/hiclaw

● Documentation: https://github.com/higress-group/hiclaw/tree/main/docs

● Official website: https://higress.ai/hiclaw

● Ding group: 163855016601

● Discord: https://discord.gg/n6mV8xEYUF
