By Ru Man

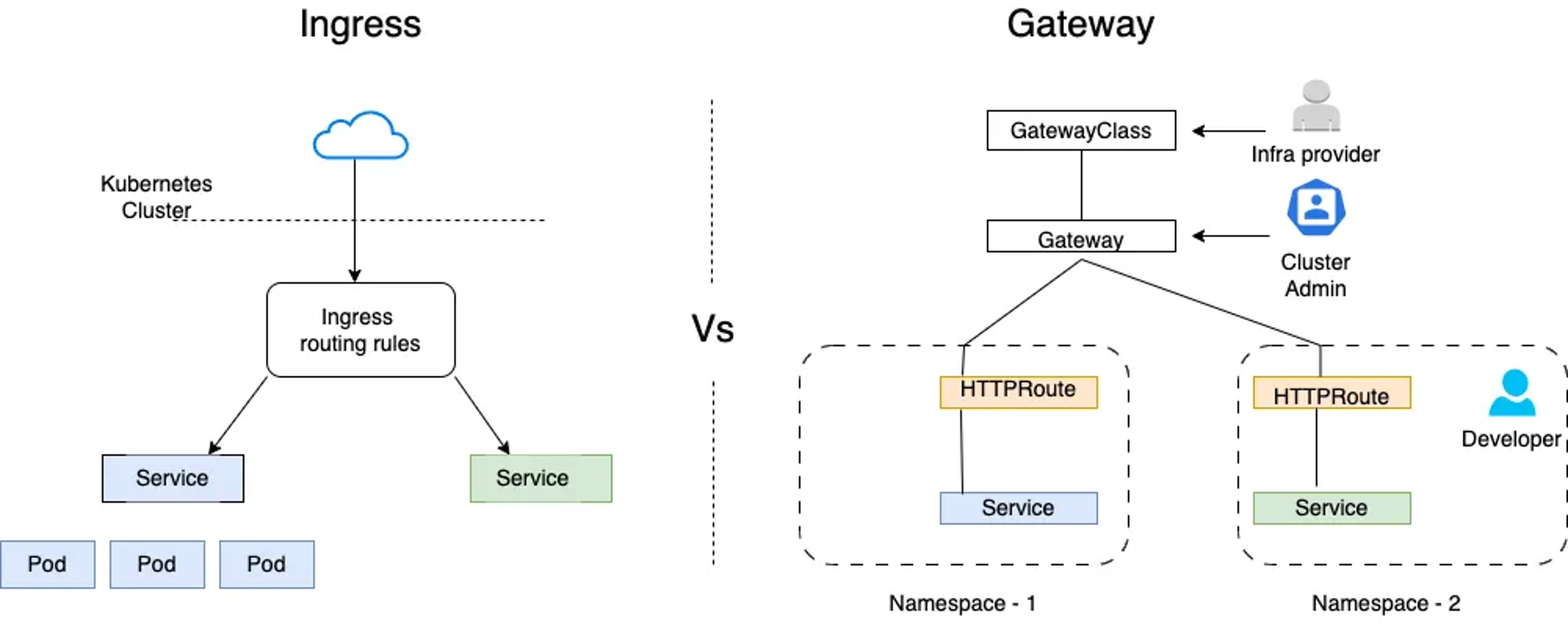

The container service network is currently evolving along two lines, "standard upgrades" and "scenario expansion". On one hand, the Gateway API, with its more complete resource model, is gradually taking over the responsibilities of traditional Ingress. On the other hand, more and more AI inference services are being deployed in containers, so the gateway needs to natively understand the characteristics and governance requirements of model calls.

Furthermore, with Ingress Nginx approaching retirement, its users may look for an alternative Ingress controller or try migrating to the Gateway API.

While maintaining long-term compatibility and support for Ingress, Higress has implemented the main features of the new version of the Gateway API and Gateway API Inference Extension in the recently released version 2.2.0.

This article introduces these feature upgrades in Higress and provides step-by-step configuration guidance.

As the de facto standard entry point for early Kubernetes, Ingress stands out for its simplicity and mature ecosystem, and it covers a large number of classic routing use cases. However, as teams grow and governance requirements multiply, the limitations of a single resource in areas such as multi-role collaboration and policy composability become increasingly apparent. As the Gateway API matures, the Kubernetes project is actively encouraging migration from Ingress to the Gateway API.

Ingress Nginx is the earliest and most mature Ingress controller and the de facto standard implementation. On November 12, 2025, its official maintainer, the Kubernetes community, announced that Ingress Nginx will be retired in March 2026 and will no longer be maintained or updated after that; see "Unfortunately, Ingress NGINX is retiring".

Running an unmaintained product over the long term inevitably increases security risk and maintenance cost. In response, the Ingress Nginx maintainers recommend that users do one of the following:

● Use Gateway API to replace Ingress.

● If it is necessary to use Ingress, other alternative Ingress controllers can be used.

Higress provides a solution for each of these recommendations:

● For users who stay on Ingress (the second recommendation), Higress will provide long-term support for Ingress, ensuring high compatibility and security, and offers a complete migration plan from Ingress Nginx.

● For users who move to the Gateway API (the first recommendation), Higress fully supports configuring network routes with the new version of the Gateway API and configuring intelligent routing for AI inference services with the Gateway API Inference Extension, actively embracing the new generation of protocol standards. Both are explained in detail later in this article.

Higress is currently one of the mainstream Ingress controllers. It has been refined through long evolution and production use, offers mature reliability and high compatibility with Nginx Ingress annotations, and provides a smooth migration path; see "Ingress NGINX Migration Guide | Including Enterprise Migration Plan Distribution".

For teams evaluating a migration from Ingress NGINX, Higress offers a viable alternative that will continue to evolve. It is compatible with mainstream Ingress semantics and commonly used Nginx annotations, covering capabilities such as rewrites, rate limiting, authentication, and TLS. It also supports canary (gray) traffic shifting, traffic mirroring, and one-click rollback, so that both the migration and day-to-day changes remain safe, controllable, and free of interruption.

Looking ahead, we will remain compatible with Ingress and run it alongside the Gateway API as a dual stack, so that existing services can be taken over smoothly and upgraded incrementally as needed.

The Gateway API is the new-generation service networking standard from the Kubernetes community, intended to replace the traditional Ingress API. It offers greater expressiveness and extensibility, with a role-oriented design. With the release of v1.0.0 at the end of 2023, the Gateway API reached GA.

In its latest release, Higress aligns with Gateway API v1.4.0 and supports the main route resources, including HTTPRoute, GRPCRoute, TCPRoute, and UDPRoute. In addition, one of the major changes in Gateway API v1.4.0 is that BackendTLSPolicy has formally reached GA, and Higress has been updated accordingly.

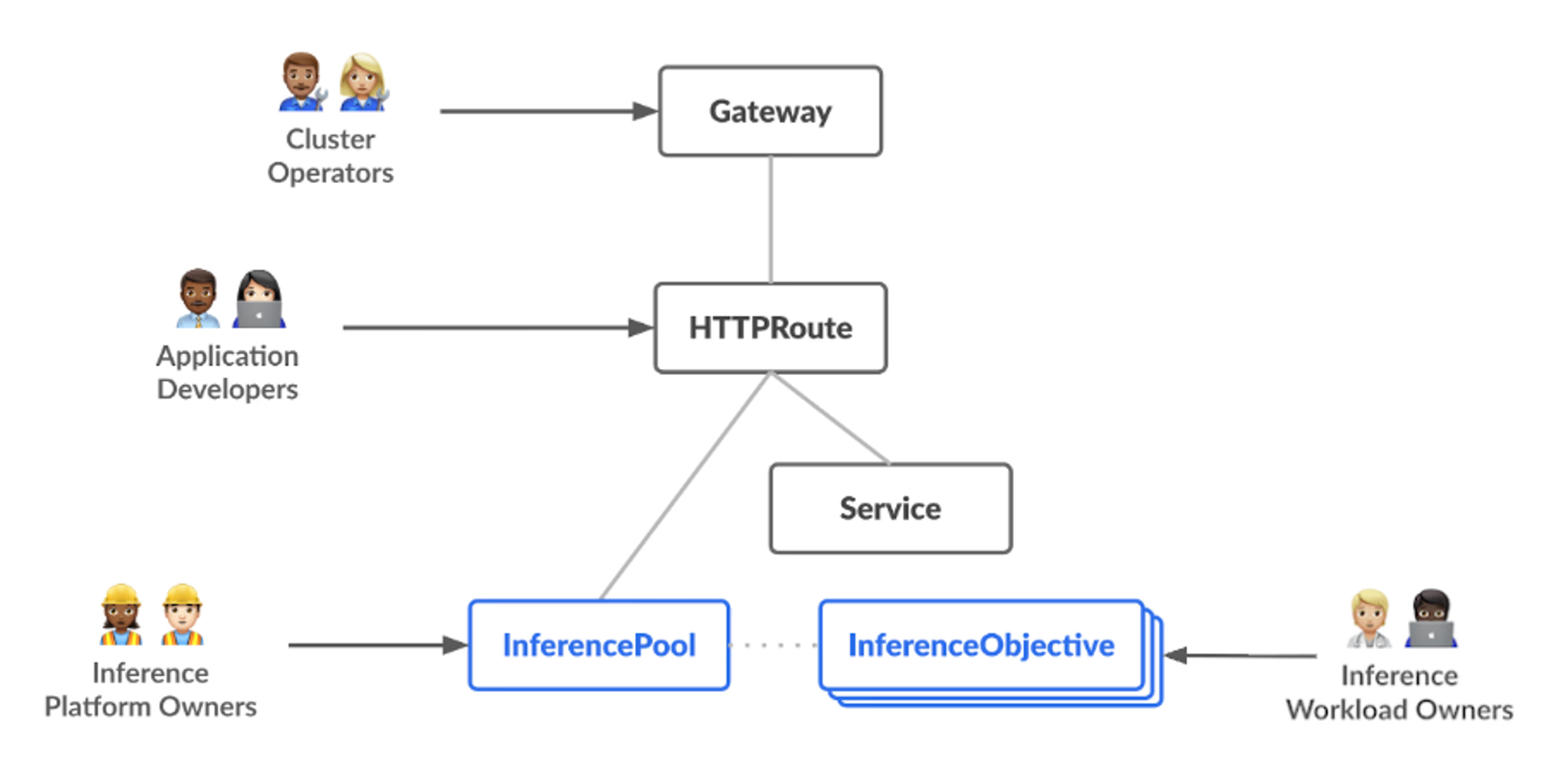

With the rapid development of generative AI and large language model services, AI inference workloads are long-running, resource-intensive, and partly stateful, which traditional load balancers handle poorly. The Gateway API Inference Extension (GIE) is the standard protocol extension proposed by the Kubernetes community for AI inference scenarios. It addresses the traffic management challenges of large language model services on Kubernetes by introducing model-aware routing, priority-based scheduling, smart load balancing, and similar capabilities, providing a standardized solution for AI inference workloads. The Kubernetes community released the GA version in September 2025.

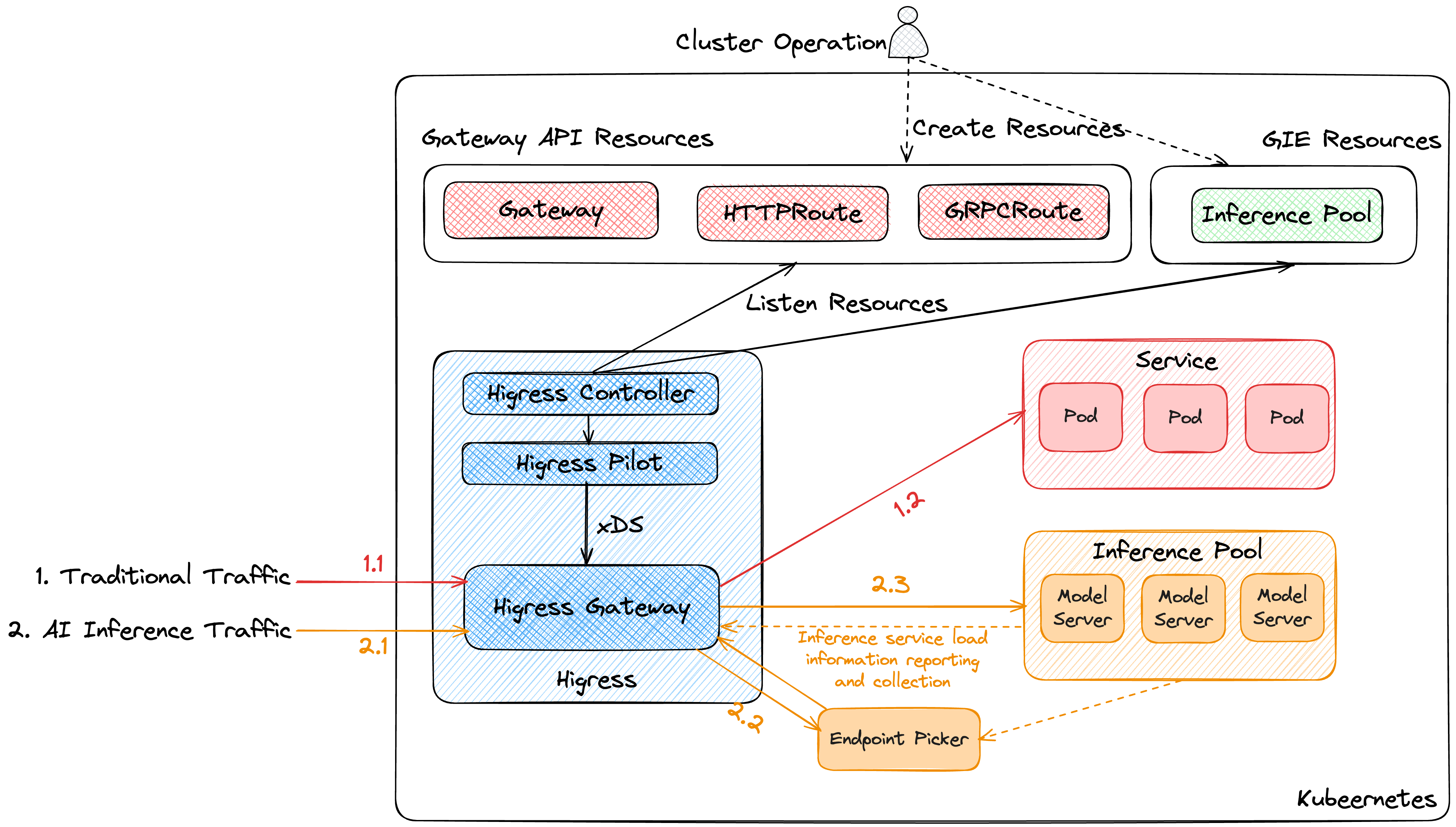

In its latest release, Higress is compatible with the Gateway API Inference Extension: it watches the GA version of the InferencePool resource and works with the GIE community's standard Endpoint Picker, giving users standardized and efficient AI traffic management capabilities.

The data flow that Higress supports for standard GIE is illustrated in the accompanying figure. The Higress control plane watches resources such as the Gateway API objects and InferencePool and translates them into routing and load-balancing configuration, which defines the expected behavior of the data plane. When the data plane recognizes model inference traffic, it calls the standard Endpoint Picker instance over gRPC through the external processing mechanism. The Endpoint Picker selects the most suitable inference node based on signals such as the task queue status in the InferencePool, the KV cache hit rate, and LoRA adapters, and writes the node address into the request's dynamic_metadata. The data plane then prefers the node address given in dynamic_metadata as the load-balancing target. If that node does not exist or cannot be reached, the default fallback is to load balance with the RoundRobin strategy.
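The "prefer the picked node, otherwise fall back to round robin" behavior can be modeled in a few lines of shell. This is only an illustrative sketch, not Higress or Envoy code: the function name `pick_target`, its arguments, and the counter-based round robin are assumptions made for the example.

```shell
# Illustrative model only; the real selection happens inside the gateway data plane.
# Usage: pick_target <picker_choice> <request_counter> <healthy_endpoint>...
pick_target() {
  choice="$1"
  counter="$2"
  shift 2

  # No healthy endpoints: there is nothing to route to.
  [ "$#" -gt 0 ] || return 1

  # Prefer the node chosen by the Endpoint Picker if it is among the healthy endpoints.
  for ep in "$@"; do
    if [ -n "$choice" ] && [ "$ep" = "$choice" ]; then
      printf '%s\n' "$ep"
      return 0
    fi
  done

  # Fallback: round robin, indexing the healthy list by the request counter.
  index=$(( $counter % $# ))
  shift "$index"
  printf '%s\n' "$1"
}
```

Calling `pick_target 10.0.0.2:8000 0 10.0.0.1:8000 10.0.0.2:8000` returns the picked node because it is in the healthy list; if the first argument names a node that is not in the list, the counter decides which healthy node is used instead.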

This section will introduce how to use Gateway API and Gateway API Inference Extension to configure network routing and AI routing policies for Higress.

Before starting the configuration, make sure the Gateway API CRDs are installed in the container cluster. Alibaba Cloud ACK is recommended, as it installs the latest version of the Gateway API by default.
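One way to confirm this prerequisite is to ask the API server for the CRDs directly. The sketch below assumes `kubectl` is already configured for the target cluster; the helper name `missing_gateway_crds` is made up for this example, and the list covers only the Gateway API resources used in the steps that follow.

```shell
# Print the name of every listed Gateway API CRD that is NOT installed.
# Prints nothing when all of them are present.
missing_gateway_crds() {
  for crd in \
    gatewayclasses.gateway.networking.k8s.io \
    gateways.gateway.networking.k8s.io \
    httproutes.gateway.networking.k8s.io
  do
    # `kubectl get crd <name>` exits non-zero when the CRD does not exist.
    if ! kubectl get crd "$crd" >/dev/null 2>&1; then
      printf '%s\n' "$crd"
    fi
  done
}
```

If `missing_gateway_crds` prints nothing, the CRDs needed below are in place; any name it prints still has to be installed before continuing.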

1. Deploy Higress Gateway version 2.2.0 or above using Helm

        helm repo add higress.io https://higress.io/helm-charts
        helm install higress higress.io/higress -n higress-system --create-namespace

2. Create a Gateway in the container cluster, linking it to the Higress deployed in step 1

        apiVersion: gateway.networking.k8s.io/v1
        kind: Gateway
        metadata:
          name: higress-gateway
          namespace: higress-system
        spec:
          gatewayClassName: higress
          listeners:
          - name: default
            hostname: "*.example.com"
            protocol: HTTP
            port: 80
            allowedRoutes:
              namespaces:
                from: All

3. Create an httpbin demo service in the container cluster to simulate the business service for routing verification

        apiVersion: apps/v1
        kind: Deployment
        metadata:
          name: go-httpbin
          namespace: default
        spec:
          replicas: 1
          selector:
            matchLabels:
              app: go-httpbin
          template:
            metadata:
              labels:
                app: go-httpbin
                version: v1
            spec:
              containers:
              - image: registry.cn-hangzhou.aliyuncs.com/mse/go-httpbin
                args:
                - "--port=8090"
                - "--version=v1"
                imagePullPolicy: Always
                name: go-httpbin
                ports:
                - containerPort: 8090
        ---
        apiVersion: v1
        kind: Service
        metadata:
          name: go-httpbin
          namespace: default
        spec:
          type: ClusterIP
          ports:
          - port: 80
            targetPort: 8090
            protocol: TCP
          selector:
            app: go-httpbin

4. Create an HTTPRoute to route traffic to httpbin

apiVersion: gateway.networking.k8s.io/v1

kind: HTTPRoute

metadata:

name: http

namespace: default

spec:

parentRefs:

- group: gateway.networking.k8s.io

kind: Gateway

name: higress-gateway

namespace: higress-system # Note: this must match the namespace where the Gateway resides

hostnames: ["httpbin.example.com"]

rules:

- matches:

- path:

type: PathPrefix

value: /

backendRefs:

- kind: Service

name: go-httpbin

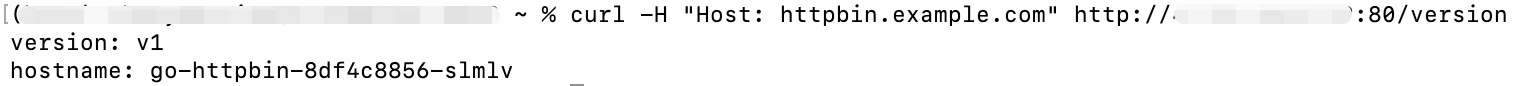

port: 805. Access the application through the Higress gateway to get the following result, indicating that the Gateway API routing configuration has taken effect.

        curl -H "Host: httpbin.example.com" http://{higress_endpoint}:80/version
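If the request does not return the expected response, it can help to check whether the route was accepted by the Gateway. Gateway API implementations are expected to report this in the HTTPRoute status; how quickly and in how much detail Higress fills in these conditions is not covered here, so treat the output as a hint. The helper name `route_accepted_status` is hypothetical, and `kubectl` access to the cluster is assumed.

```shell
# Print the "Accepted" condition status reported for an HTTPRoute,
# one value per parent Gateway the route is attached to.
# Usage: route_accepted_status <route-name> <namespace>
route_accepted_status() {
  kubectl get httproute "$1" -n "$2" \
    -o jsonpath='{.status.parents[*].conditions[?(@.type=="Accepted")].status}'
}
```

For the route created in step 4, `route_accepted_status http default` would be expected to print `True` once the Gateway has picked it up.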

Before starting the configuration of the inference extension route, make sure that the Gateway API CRD has been installed. Next, refer to the following steps for AI inference service route configuration.

1. Install the Gateway API Inference Extension CRD and the Istio CRD in the container cluster

        # Gateway API Inference Extension CRD
        kubectl apply -f https://github.com/kubernetes-sigs/gateway-api-inference-extension/releases/download/v1.1.0/manifests.yaml

        # Istio CRD
        helm repo add istio https://istio-release.storage.googleapis.com/charts
        helm install istio-base istio/base -n istio-system --create-namespace

2. Deploy Higress Gateway version 2.2.0 or above using Helm. Unlike the earlier installation, Higress does not watch InferencePool resources by default, so you need to specify the startup parameter global.enableInferenceExtension=true to enable it.

        helm repo add higress.io https://higress.io/helm-charts
        helm install higress higress.io/higress -n higress-system --create-namespace --set global.enableInferenceExtension=true

3. Create a Gateway in the container cluster, linking it to the Higress deployed in step 2

        apiVersion: gateway.networking.k8s.io/v1
        kind: Gateway
        metadata:
          name: higress-gateway
          namespace: higress-system
        spec:
          gatewayClassName: higress
          listeners:
          - name: default
            hostname: "*.example.com"
            protocol: HTTP
            port: 80
            allowedRoutes:
              namespaces:
                from: All

4. Create a model inference service instance. To save container resources, this example deploys only llm-d-inference-sim, the community's open-source vLLM inference simulator, for verification.

        kubectl apply -f https://github.com/kubernetes-sigs/gateway-api-inference-extension/raw/main/config/manifests/vllm/sim-deployment.yaml

5. Create an InferencePool and an Endpoint Picker for the model inference service in the container cluster.

At runtime, the Endpoint Picker is bound to an InferencePool and monitors the inference service instances in that pool along with their metrics. Before the Higress Envoy instance sends a request to the InferencePool, it first calls the Endpoint Picker through the external processing mechanism. The Endpoint Picker selects the most suitable node based on the current state of the inference services and returns it to Envoy, which then sends the request to that node to run the inference task. This is how intelligent load balancing is achieved.

        export IGW_CHART_VERSION=v1.1.0
        export GATEWAY_PROVIDER=istio
        helm install vllm-llama3-8b-instruct \
          --set inferencePool.modelServers.matchLabels.app=vllm-llama3-8b-instruct \
          --set provider.name=$GATEWAY_PROVIDER \
          --version $IGW_CHART_VERSION \
          oci://registry.k8s.io/gateway-api-inference-extension/charts/inferencepool

6. Create an HTTPRoute to route AI traffic to the corresponding model inference service in the InferencePool.

apiVersion: gateway.networking.k8s.io/v1

kind: HTTPRoute

metadata:

name: http

namespace: default

spec:

parentRefs:

- group: gateway.networking.k8s.io

kind: Gateway

name: higress-gateway

namespace: higress-system

hostnames: ["httpbin.example.com"]

rules:

- matches:

- path:

type: PathPrefix

value: /

backendRefs:

- name: vllm-llama3-8b-instruct

group: inference.networking.k8s.io

kind: InferencePool

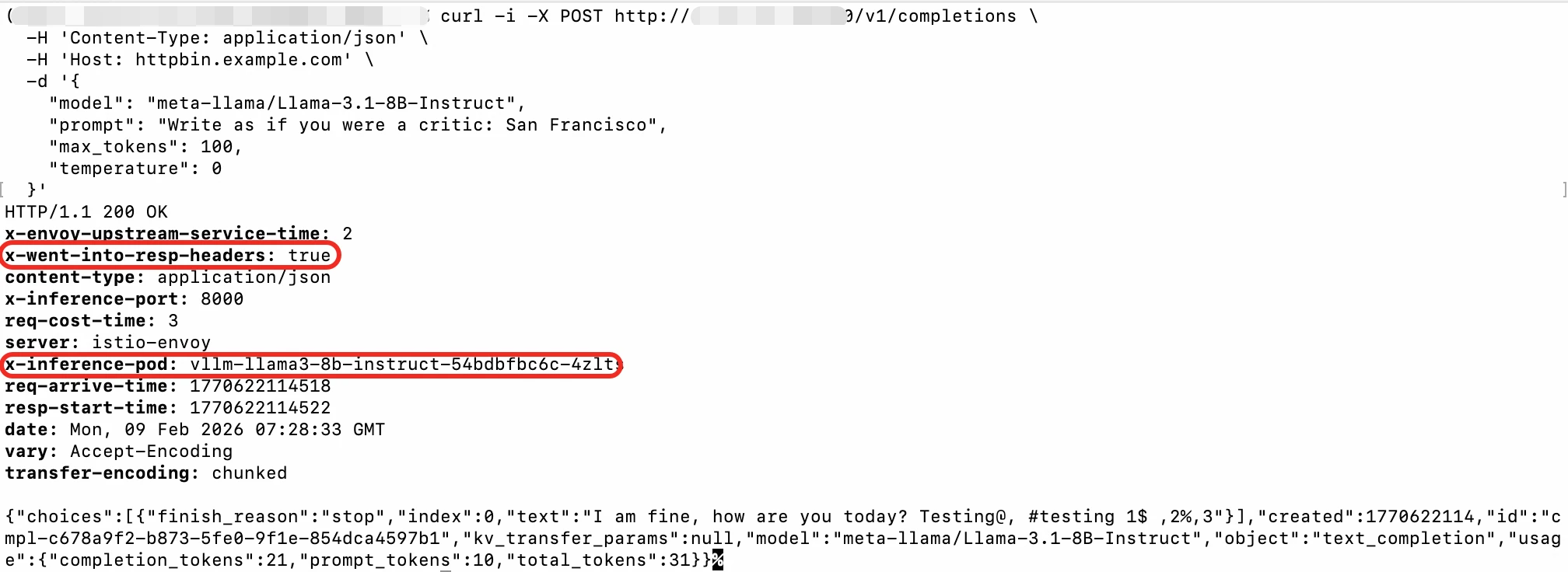

port: 807. Access the model inference service through the Higress gateway. In the response header, observe x-went-into-resp-headers=true, and through x-inference-pod and x-inference-port, see the actual instance and port to which the inference request is routed, indicating that the Gateway API Inference Extension routing configuration has taken effect.

        curl -i -X POST http://{higress-endpoint}:80/v1/completions \
          -H 'Content-Type: application/json' \
          -H 'Host: httpbin.example.com' \
          -d '{
            "model": "meta-llama/Llama-3.1-8B-Instruct",
            "prompt": "Write as if you were a critic: San Francisco",
            "max_tokens": 100,
            "temperature": 0
          }'
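To check the routing headers without scanning the whole response by eye, the output of `curl -i` can be filtered down to the three headers named in step 7. The helper name `inference_headers` is hypothetical; it only reads text from standard input and makes no assumptions about the gateway beyond those header names.

```shell
# Read an HTTP response (as printed by `curl -i`) on stdin and print only the
# routing-related response headers. Matching is case-insensitive, and the
# response body is never inspected.
inference_headers() {
  tr -d '\r' |
    awk '
      /^$/ { exit }   # a blank line ends the header section
      {
        name = tolower($0)
        if (name ~ /^x-inference-pod:/ ||
            name ~ /^x-inference-port:/ ||
            name ~ /^x-went-into-resp-headers:/) print
      }'
}
```

For example, appending `| inference_headers` to the `curl -i` command above (and adding `-s` to silence the progress meter) leaves only the lines that show where the request was routed.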

With support for the latest Gateway API and Inference Extension, Higress unifies traditional north-south traffic and AI inference traffic on the same foundation. It preserves long-term support and compatibility for Ingress while using the standardized Gateway API to accelerate the upgrade of service networking standards and AI-native workflows.

With the Ingress Nginx retirement date approaching, Higress provides a complete solution whether you choose to migrate to the Gateway API or continue using Ingress.

Looking to the future, the Higress community will continue to evolve in the following areas:

If you have any questions about Ingress annotation compatibility and migration details or the functional requirements of Gateway API and its AI inference extension, feel free to leave a message in the comments or contact us directly!
