By Wang Chen

We previously discussed Harness Engineering. Picture a dragon that has walked into your living room: to ride it, you need a complete harness system of reins, saddle, and protective gear. Harness Engineering has in fact existed ever since Agents appeared. But it was the emergence of OpenClaw, which shifted AI sovereignty from model vendors to users, that gave us a deeper appreciation of it and struck a chord across the industry.

But Agents of different eras need different reins.

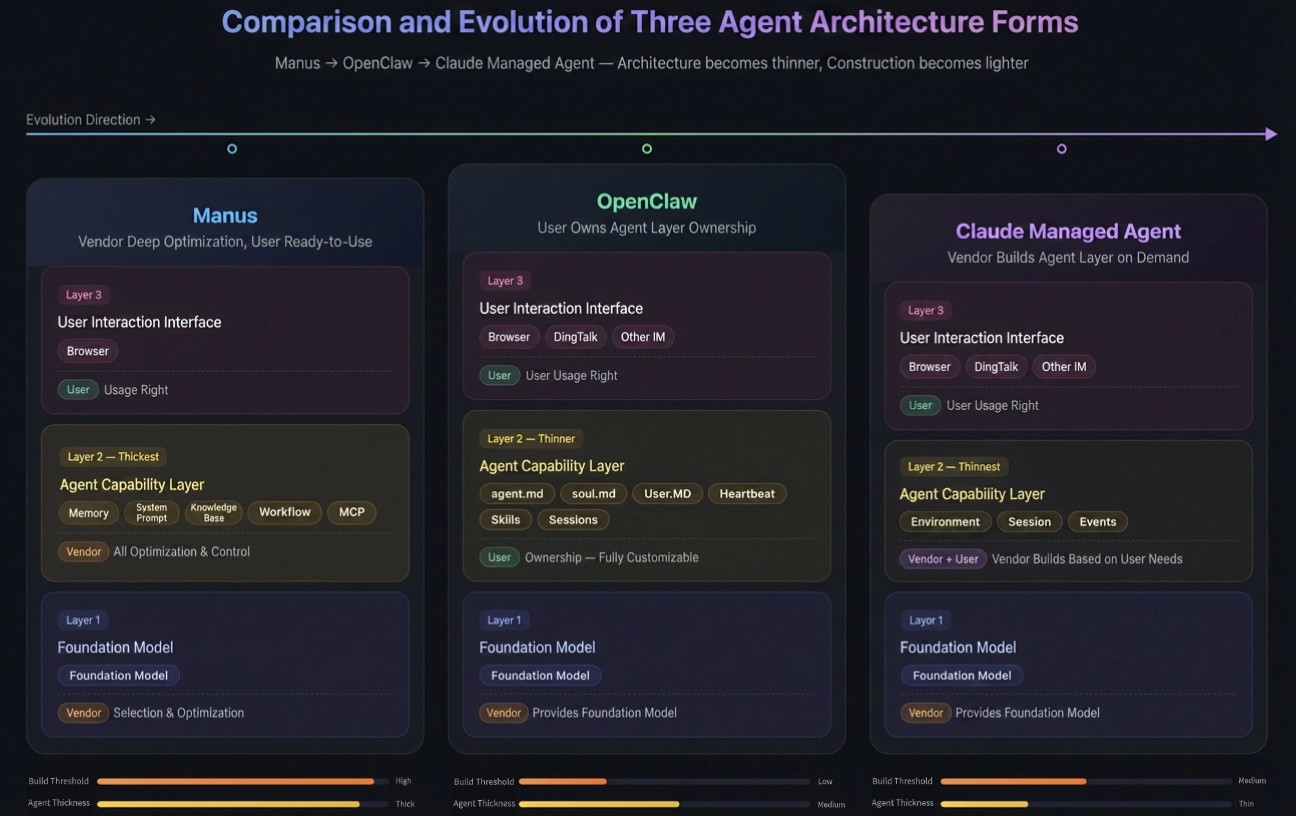

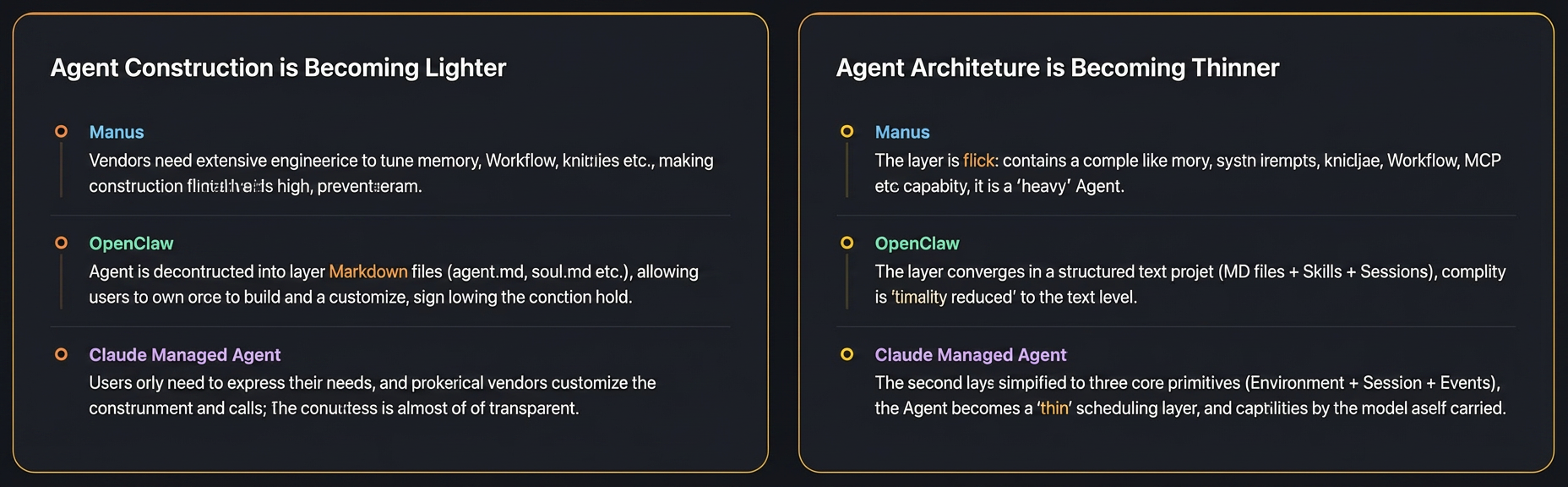

Take three mainstream Agents as examples: Manus, OpenClaw, and Claude Managed Agent. All three follow a three-layer structure: the bottom layer is the foundation model, the middle layer is the Agent capability-building layer, and the top layer is the user interaction interface. But on two questions, 'who builds the middle layer' and 'how thick the middle layer is', the three give different answers.

Manus: a turnkey black-box Agent solution.

The second-layer Agent capability layer (memory, system prompts, knowledge base, Workflow, MCP, and so on) is tuned and controlled by Manus. What the user gets is a finished product, with access only to the third-layer browser interface. It is like buying a branded car: the engine, transmission, and suspension are all calibrated by the manufacturer; you just press the accelerator and turn the steering wheel.

OpenClaw: an open skeleton, with users responsible for optimizing Agent performance.

The second-layer Agent capability layer is decomposed into a set of text protocols: Agent.md defines behavior, Soul.md defines personality, and User.md describes the user profile, alongside the Heartbeat mechanism, Skills, and Sessions management. All of these are owned by the user, who 'trains' the 'lobster' (as OpenClaw Agents are nicknamed) through natural language so that it understands the user better and can do more. The third-layer interaction interface expands from a single browser to IM platforms such as Discord, Feishu, and DingTalk. It is like getting a racing chassis that can be modified: the engine comes from the manufacturer, but the suspension, aero kit, and seats are all assembled by the user.

Claude Managed Agent: managed co-building, customized on demand.

The second-layer Agent capability layer is simplified to just three core primitives: Environment, Session, and Events. Unlike OpenClaw, this extremely minimal second layer is customized and built by Anthropic based on user needs. The third layer likewise supports access from both the browser and IM clients across devices. It is like hiring a top-tier racing engineering team to tune everything to the best possible state.

Manus's second layer is a complete capability stack.

The memory system, system prompts, knowledge base, Workflow orchestration, MCP tool protocol... Manus wraps almost everything needed to make AI work reliably inside the Agent capability layer.

This 'thick Agent' strategy was entirely reasonable when early models were not yet smart enough. Problems such as 'exponential amplification of technical debt' and 'context rot' both stem from the model's limited autonomy when facing broad, general-purpose requirements, which is why a heavy Harness was needed to compensate.

OpenClaw's second layer begins to converge.

The second layer shrinks from five or six kinds of capability modules (memory, knowledge base, Workflow...) to a set of structured text protocols (Agent.md, Soul.md, User.md); hot-swappable Skills are then layered on top to build a highly customized Agent. We can even encode 'taste' as automated rules that constrain it. As a result, the Agent's behavioral complexity drops a dimension, from engineering complexity to text complexity.

What lies behind this is the improvement in our understanding of models and Agents: instead of designing complex orchestration systems to make up for the model's shortcomings, it is better to design a simple constrained environment to bring out the model's strengths.
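To make 'text complexity' concrete, here is a minimal sketch of how a set of protocol documents and hot-swappable Skills could be merged into a single prompt. It is not OpenClaw's actual loader: the function name `build_system_prompt`, the merge order, and the in-memory dictionaries (which stand in for files on disk) are all assumptions made for illustration.

```python
# Protocol file names follow the article; the merge order is an assumption.
PROTOCOL_ORDER = ["Agent.md", "Soul.md", "User.md"]


def build_system_prompt(
    protocol_docs: dict[str, str],
    skills: dict[str, str],
    enabled_skills: list[str],
) -> str:
    """Merge protocol documents and the enabled skills into one prompt string."""
    sections = []
    for name in PROTOCOL_ORDER:
        if name in protocol_docs:  # a missing document is skipped, not an error
            sections.append(f"## {name}\n{protocol_docs[name].strip()}")
    for skill in enabled_skills:
        if skill not in skills:
            raise KeyError(f"unknown skill: {skill}")
        sections.append(f"## skill: {skill}\n{skills[skill].strip()}")
    return "\n\n".join(sections)


# Hypothetical contents, standing in for files the user would edit by hand.
docs = {
    "Agent.md": "Answer briefly. Ask before running destructive commands.",
    "Soul.md": "Calm, direct, a little dry.",
    "User.md": "Backend engineer; prefers Go examples.",
}
skills = {
    "oncall": "How to read our alert dashboard.",
    "review": "Code review checklist.",
}

# Swapping a Skill in or out is just a change to this list.
prompt = build_system_prompt(docs, skills, enabled_skills=["review"])
```

In this sketch, changing the Agent's behavior means editing text or toggling an entry in `enabled_skills`, with no orchestration code involved.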

Claude Managed Agent, meanwhile, compresses the second layer to the extreme.

Only three core primitives remain: Environment defines the execution environment, Session manages session state, and Events handles event-driven execution. There is no explicit memory module, no knowledge base, and no Workflow orchestration. These capabilities are all 'pushed down' into the model layer, where the model autonomously plans, reasons, and executes within an extremely minimal Agent capability framework.
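The shape of such a minimal layer can be sketched in a few lines. The code below is purely illustrative and is not Anthropic's API: the class names mirror the three primitives named above, while every field, the `fake_model` stand-in, and the `run` loop are assumptions. The point is only that planning lives in the model, and the framework does little more than record events.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Environment:
    """Where the agent runs: a working directory and the tools available in it."""
    workdir: str
    tools: dict[str, Callable[[str], str]]


@dataclass
class Event:
    kind: str     # "user_message", "tool_call", "tool_result" or "agent_message"
    payload: str


@dataclass
class Session:
    """State of one conversation: nothing but an append-only event log."""
    env: Environment
    events: list[Event] = field(default_factory=list)

    def emit(self, kind: str, payload: str) -> Event:
        event = Event(kind, payload)
        self.events.append(event)
        return event


def fake_model(session: Session) -> Event:
    """Stand-in for the model: picks the next step from the event log alone."""
    last = session.events[-1]
    if last.kind == "user_message":
        return Event("tool_call", "echo:" + last.payload)
    return Event("agent_message", "done: " + last.payload)


def run(session: Session, user_text: str, max_steps: int = 5) -> str:
    """Event loop: the framework only routes events; the model decides what to do."""
    session.emit("user_message", user_text)
    for _ in range(max_steps):
        decision = fake_model(session)
        session.events.append(decision)
        if decision.kind == "agent_message":
            return decision.payload
        tool_name, _, arg = decision.payload.partition(":")
        session.emit("tool_result", session.env.tools[tool_name](arg))
    return "step limit reached"
```

Note that the sketch contains no memory module or workflow graph; whatever 'memory' exists is simply the event log that the model reads on each step.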

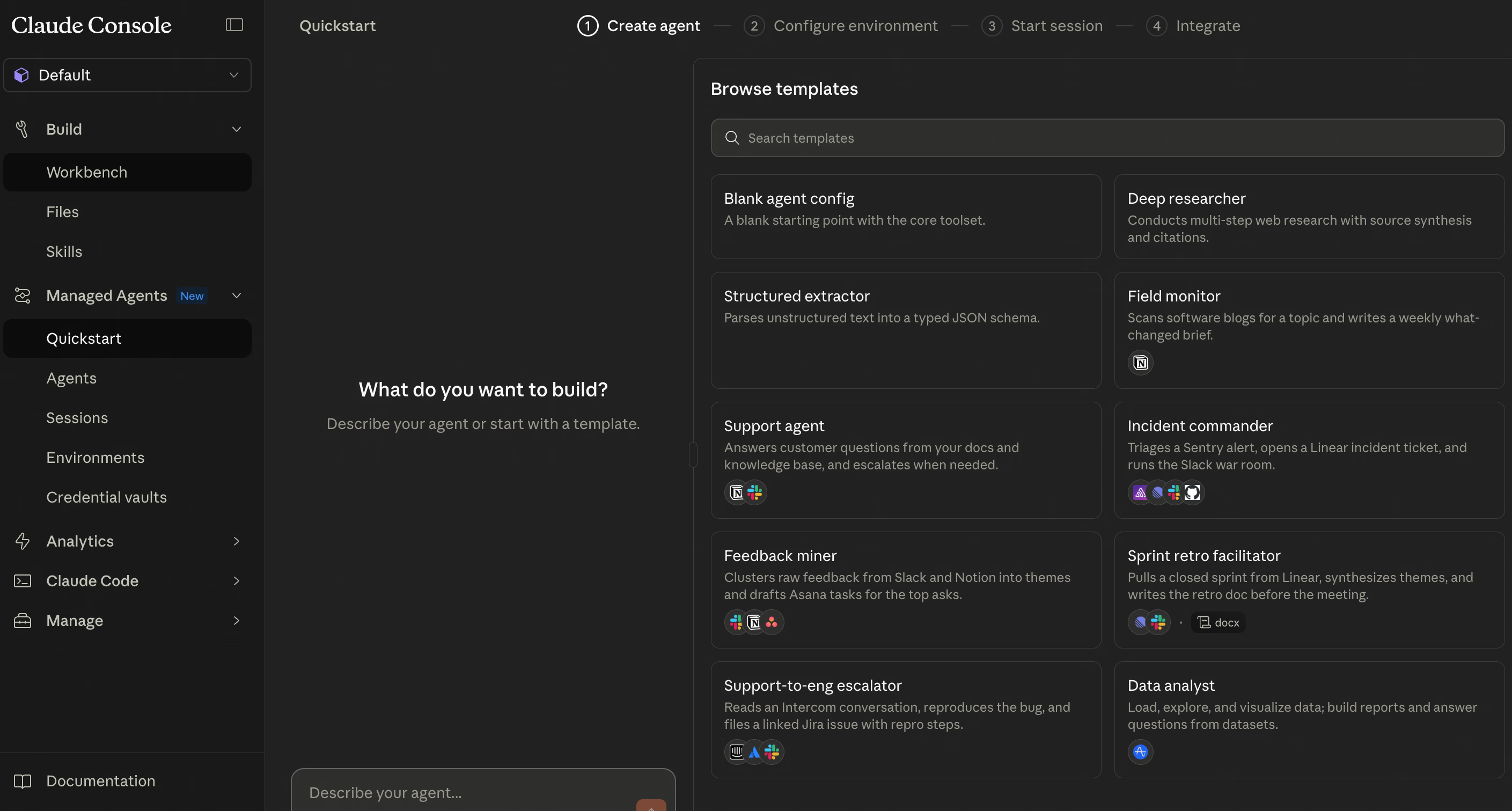

Claude Managed Agent user interface

Getting lighter and thinner is only half the story: while the individual Agent slims down, collaboration among Agents is rapidly getting thicker.

The rise of Agent Teams is driven by complex tasks.

The tasks we hand to Agents are evolving from 'generate an image' to 'help me diagnose an online outage'. For complex, long-horizon tasks, a single Agent is increasingly inadequate: its context rots, and its Skills contaminate one another. A multi-Agent setup isolates both context and Skills. The main Agent handles planning, while sub-agents execute specific tasks in isolated contexts, each doing its own job.
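The isolation described above can be sketched as follows. This is a simplified illustration, not any framework's real API: `SubAgent`, `MainAgent`, and the placeholder 'scratch work' line are hypothetical, and the model call is replaced by a stub. What matters is that each sub-agent keeps a private context and only a short summary crosses back to the main Agent.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    skills: set[str]
    context: list[str] = field(default_factory=list)  # private to this agent

    def run(self, task: str) -> str:
        self.context.append(f"task: {task}")
        # Placeholder for a model call that would see only self.context and self.skills.
        self.context.append(f"scratch work for {task}")
        return f"[{self.name}] finished '{task}'"


@dataclass
class MainAgent:
    workers: list[SubAgent]
    context: list[str] = field(default_factory=list)

    def handle(self, plan: list[tuple[str, str]]) -> list[str]:
        """Run a plan of (required_skill, task) pairs, keeping only summaries."""
        results = []
        for skill, task in plan:
            worker = next(w for w in self.workers if skill in w.skills)
            summary = worker.run(task)
            self.context.append(summary)  # only the summary crosses the boundary
            results.append(summary)
        return results


log_agent = SubAgent("log-reader", {"logs"})
metric_agent = SubAgent("metric-reader", {"metrics"})
main = MainAgent([log_agent, metric_agent])
summaries = main.handle([("logs", "scan error logs"), ("metrics", "check latency")])
```

After the run, the main Agent's context holds two summary lines, while the intermediate scratch work stays inside each sub-agent.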

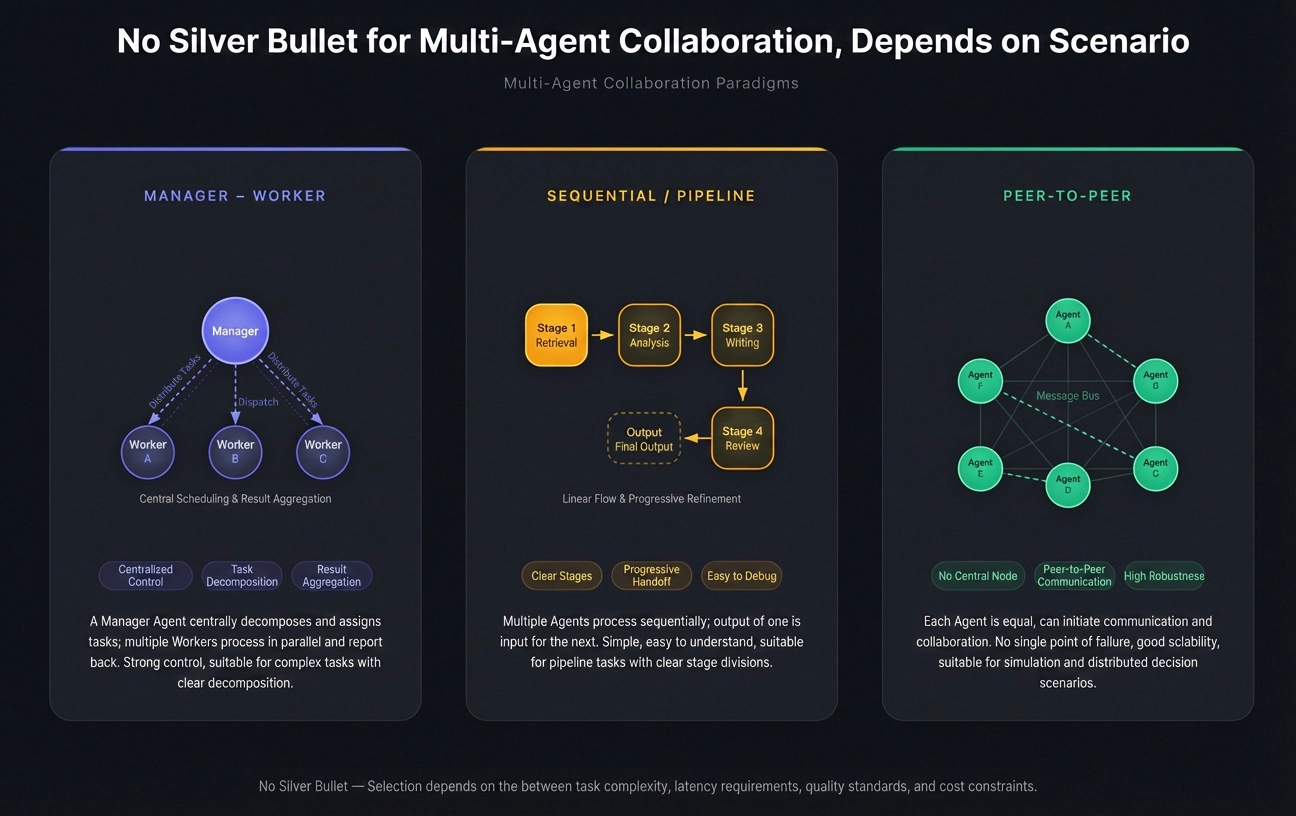

The Manager-Workers architecture used by HiClaw, for example, is a typical Agent Team paradigm. But there is no silver bullet for multi-agent collaboration; the right choice depends on the scenario. Below are three common collaboration paradigms: Manager-Worker, Sequential / Pipeline, and Peer-to-Peer / Decentralized.

Manager-Worker

The core capability of this paradigm is 'divide and conquer'; it is best at scenarios where tasks can be clearly split into independent subtasks and then aggregated at the end.

Deep research and report generation are the most typical scenarios. For example, if you need to produce an industry competitor analysis report, the Manager breaks the task into 'research Company A's product line', 'research Company B's financial data', and 'research Company C's tech stack'; the three Workers do it in parallel, and the Manager finally synthesizes a complete report. There is almost no dependency between the subtasks, so parallel efficiency is high.

Task allocation in complex software projects is another common use case. After the Manager understands the overall requirements, it assigns the frontend, backend, and database design to different expert Agents. But there is a subtle point here: if there are strong dependencies between subtasks (for example, backend API definitions affecting frontend implementation), pure Manager-Worker will struggle, and the Manager needs to coordinate much more.

In short, as long as your task meets three conditions (it is decomposable, its subtasks are independent, and the results need aggregation), Manager-Worker is the first choice.
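The fan-out and fan-in shape of this paradigm can be sketched with the standard library. The `research_worker` function is a stand-in for a real Worker Agent, and the `manager` function name and report format are assumptions chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def research_worker(subtask: str) -> str:
    """Stand-in for a Worker agent; a real one would call a model or tools."""
    return f"findings for {subtask}"


def manager(goal: str, subtasks: list[str], max_workers: int = 3) -> str:
    # Fan out: the subtasks are independent, so they can run in parallel.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in input order, regardless of finish order.
        findings = list(pool.map(research_worker, subtasks))
    # Fan in: aggregate the partial results into one report.
    body = "\n".join(f"- {s}: {f}" for s, f in zip(subtasks, findings))
    return f"Report: {goal}\n{body}"


report = manager(
    "Industry competitor analysis",
    [
        "Company A's product line",
        "Company B's financial data",
        "Company C's tech stack",
    ],
)
```

If the subtasks depended on one another, the single `pool.map` call would no longer be enough, which is exactly the case where the Manager has to take on more coordination.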

Sequential / Pipeline

Pipeline excels at tasks with clear stages, where the next step depends on the output of the previous one. Its mental model is a production line: raw materials go in, finished products come out, and each station performs one processing step.

Data analysis pipelines are a classic scenario. Data cleaning → feature engineering → model inference → result visualization; each step builds on the previous one, and ETL workflows are naturally Pipeline structures. Another example is code generation and testing: requirement understanding → code writing → unit test generation → code review → fixes.

Each Agent focuses on one link in the chain, with very clear responsibility boundaries, so when problems occur it is easy to locate which stage's Agent performed poorly. The core advantages of Pipeline are interpretability and debuggability. We can clearly see the intermediate result of each step. But its limitations are also obvious: once a certain stage needs to trace back and modify earlier output, a pure linear structure becomes awkward and requires feedback loops to handle it.
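A small sketch shows where the debuggability comes from. The stage functions below are toy stand-ins for Agents, and `run_pipeline` is a hypothetical helper; the key idea is that every stage's output is recorded, so a bad result can be traced to the stage that produced it.

```python
from typing import Any, Callable

Stage = tuple[str, Callable[[Any], Any]]


def run_pipeline(stages: list[Stage], data: Any) -> tuple[Any, list[tuple[str, Any]]]:
    """Run stages in order and keep each intermediate result for inspection."""
    trace = []
    for name, stage in stages:
        data = stage(data)          # each stage consumes the previous stage's output
        trace.append((name, data))
    return data, trace


def clean(rows):
    return [r for r in rows if r is not None]


def featurize(rows):
    return [r * r for r in rows]


def infer(features):
    return sum(features) / len(features)


def visualize(score):
    return f"mean squared value: {score:.2f}"


STAGES = [
    ("clean", clean),
    ("features", featurize),
    ("infer", infer),
    ("visualize", visualize),
]

result, trace = run_pipeline(STAGES, [1, None, 2, 3])
```

The `trace` list is what makes each step inspectable. A feedback loop, where a later stage sends work back to an earlier one, would need something beyond this purely linear loop.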

Peer-to-Peer / Decentralized

The distinctive feature of the P2P paradigm is that there is no central controller; each Agent is autonomous, and they collaborate through negotiation, broadcasting, and shared information. This architecture is best for scenarios with no predefined process, where collaboration patterns need to emerge dynamically.

Social simulation and modeling are the most classic P2P applications. One example is Generative Agents (Stanford's 'AI town' experiment): twenty-five Agents each have their own memories, goals, and schedules; they act autonomously in a virtual environment, talk to one another, and form relationships. No Manager tells anyone what to do; all social behavior emerges from peer-to-peer interaction.

In addition, multi-party negotiation and game theory are naturally suited to the P2P paradigm. For example, if you simulate a business negotiation, the buyer, seller, and intermediary each have their own interests and strategies, and they need to probe, bid, and concede to one another. There is no single correct central scheduling logic in this kind of scenario; the outcome of the game depends on the dynamic interactions among the parties.

Self-organizing workflow optimization is also a cutting-edge direction. Multiple Agents discover bottlenecks while executing tasks and autonomously adjust the division of labor. For example, an Agent with a light workload proactively takes over work from an Agent under heavier load. This kind of dynamic load balancing requires a Manager in the Manager-Worker architecture, but in P2P it can emerge spontaneously.
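As a rough sketch of load balancing without a Manager, each peer below applies the same local rule: if another peer's backlog exceeds its own by a threshold, it takes one task. The `Peer` class, the threshold of 2, and the round-based `rebalance` driver are all assumptions for illustration; real systems would exchange messages rather than read each other's queues directly.

```python
from collections import deque


class Peer:
    def __init__(self, name: str, tasks: list[str]):
        self.name = name
        self.queue = deque(tasks)

    def maybe_help(self, peers: list["Peer"], threshold: int = 2) -> bool:
        """Take one task from the busiest peer if its backlog is much larger than ours."""
        busiest = max(peers, key=lambda p: len(p.queue))
        if busiest is not self and len(busiest.queue) - len(self.queue) >= threshold:
            self.queue.append(busiest.queue.pop())  # take from the tail of its queue
            return True
        return False


def rebalance(peers: list[Peer], max_rounds: int = 100) -> int:
    """Let every peer apply its local rule each round; stop when nobody moves."""
    moves = 0
    for _ in range(max_rounds):
        moved = [p.maybe_help(peers) for p in peers]
        moves += sum(moved)
        if not any(moved):
            break
    return moves


peers = [Peer("a", [f"task-{i}" for i in range(6)]), Peer("b", []), Peer("c", [])]
total_moves = rebalance(peers)
```

No peer has a global plan here, yet the backlogs even out. The `max_rounds` cap is a safety net in case the local rule is changed to one that does not settle.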

But the cost of P2P is high coordination complexity and unpredictable behavior. In production environments, pure P2P usually needs good communication protocols and termination-condition design; otherwise it can easily fall into infinite loops or information explosion.
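The need for a protocol and termination conditions can be illustrated with a toy two-party negotiation. Everything here is hypothetical: the `Party` class, the fixed concession step, and the three outcomes are assumptions picked to show the idea. The protocol is 'both sides publish a price each round', and the loop ends on a deal, on a stalemate (nobody can move), or on a round limit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Party:
    name: str
    price: float     # current public offer
    limit: float     # private walk-away price
    step: float      # concession per round
    direction: int   # +1 for a buyer (raises its bid), -1 for a seller (lowers its ask)

    def concede(self) -> None:
        moved = self.price + self.direction * self.step
        # Never move past the private limit.
        self.price = min(moved, self.limit) if self.direction > 0 else max(moved, self.limit)


def negotiate(buyer: Party, seller: Party, max_rounds: int = 20) -> tuple[str, int, Optional[float]]:
    for round_no in range(1, max_rounds + 1):
        before = (buyer.price, seller.price)
        buyer.concede()
        seller.concede()
        if buyer.price >= seller.price:              # termination 1: offers crossed
            return "deal", round_no, (buyer.price + seller.price) / 2
        if (buyer.price, seller.price) == before:    # termination 2: nobody can move
            return "stalemate", round_no, None
    return "timeout", max_rounds, None               # termination 3: round limit


deal = negotiate(Party("buyer", 70.0, 95.0, 5.0, +1), Party("seller", 120.0, 90.0, 5.0, -1))
stuck = negotiate(Party("buyer", 70.0, 80.0, 5.0, +1), Party("seller", 120.0, 100.0, 5.0, -1))
```

Without the stalemate check and the round limit, the second negotiation would loop forever, which is the failure mode described above in miniature.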

Swarm intelligence is already moving from experiment to application, pushing Agent Teams forward from another dimension.

Once a single Agent becomes thin and lightweight enough, the cost of keeping a whole swarm of Agents becomes acceptable. This gives rise to a brand-new pattern: swarm intelligence.

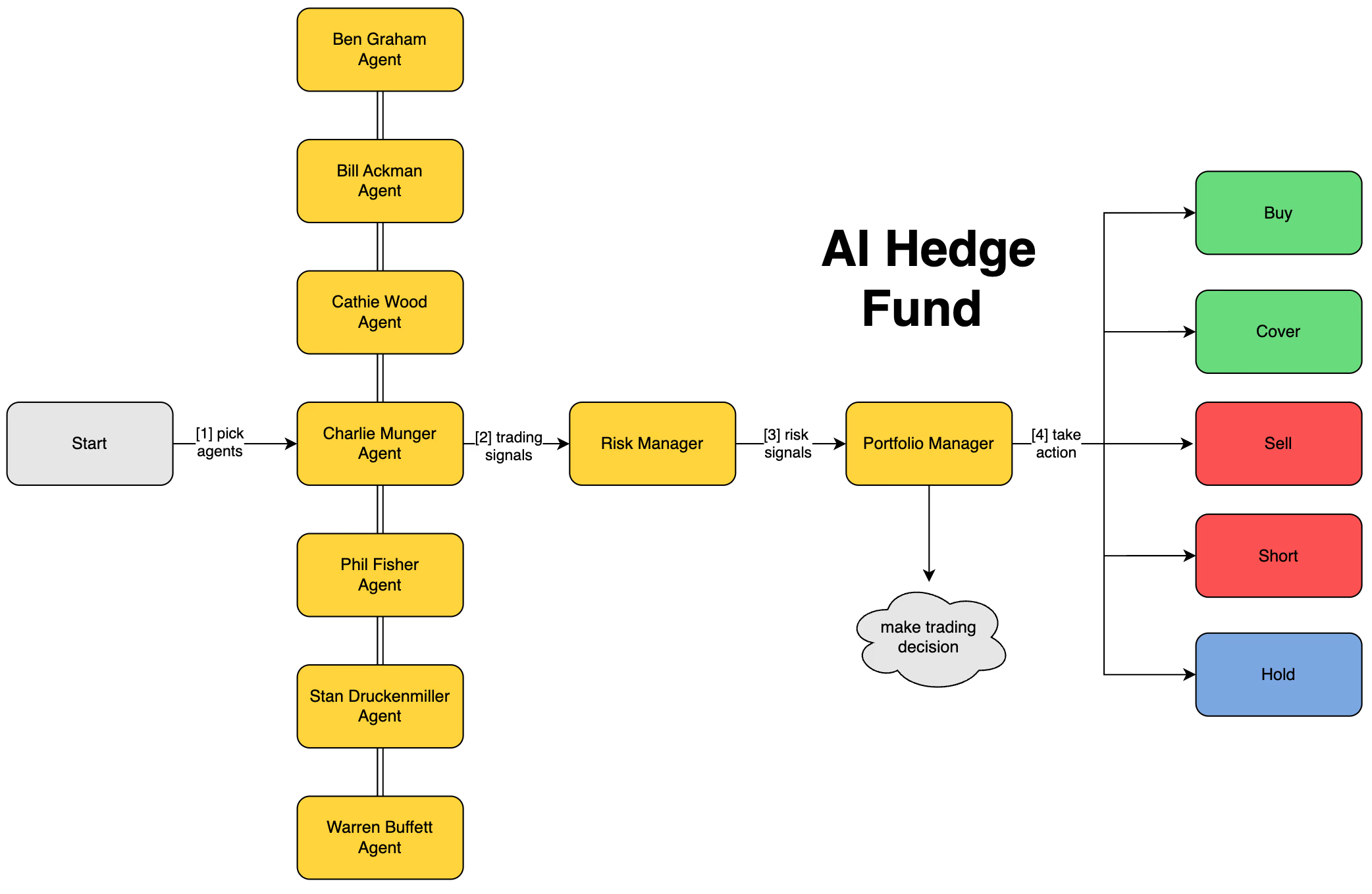

HiClaw's 7-million-yuan luxury car design is a typical case of swarm intelligence: multiple Agents with different roles discuss for 100 rounds and produce a synthesized conclusion. Another recent sensation is AI Hedge Fund, a project that builds a legion of Agents modeled on 19 legendary investors, including a Buffett Agent focused on value investing and moat analysis, a Munger Agent that excels at contrarian thinking and cross-disciplinary models, a Cathie Wood Agent focused on disruptive innovation and long-term tech trends...

When multiple investment-master Agents with very different styles form a legion and collide with one another, the insights that emerge are no longer something any single Agent can reach. That is the value of swarm intelligence: improvements in individual efficiency are linear, while the emergence of swarm intelligence is exponential.
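A multi-round discussion of this kind can be sketched as a loop over role Agents that all read a shared history. This is not how HiClaw or AI Hedge Fund is implemented: the three toy roles, the vote vocabulary, and majority voting as the synthesis step are assumptions for illustration, and real Agents would call a model instead of returning fixed answers.

```python
from collections import Counter
from typing import Callable

# An agent maps (topic, history of earlier rounds) to a position.
Agent = Callable[[str, list[dict[str, str]]], str]


def value_agent(topic: str, history: list[dict[str, str]]) -> str:
    return "hold"


def growth_agent(topic: str, history: list[dict[str, str]]) -> str:
    return "buy"


def contrarian_agent(topic: str, history: list[dict[str, str]]) -> str:
    """Reacts to the group: leans against the previous round's majority."""
    if not history:
        return "hold"
    majority = Counter(history[-1].values()).most_common(1)[0][0]
    return "sell" if majority == "buy" else "buy"


def discuss(topic: str, agents: dict[str, Agent], rounds: int) -> tuple[str, list[dict[str, str]]]:
    if rounds < 1:
        raise ValueError("rounds must be at least 1")
    history: list[dict[str, str]] = []
    for _ in range(rounds):
        # Every agent sees the same history, so order within a round does not matter.
        votes = {name: agent(topic, history) for name, agent in agents.items()}
        history.append(votes)
    # Synthesis step: majority position of the final round.
    conclusion = Counter(history[-1].values()).most_common(1)[0][0]
    return conclusion, history


AGENTS = {"value": value_agent, "growth": growth_agent, "contrarian": contrarian_agent}
conclusion, history = discuss("hypothetical stock", AGENTS, rounds=2)
```

Even in this toy version, the final answer depends on how the roles react to one another across rounds, not on any single Agent's fixed opinion.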

Agent Teams happen to follow the same logic as the development of the internet. End devices have gone from mainframes to phones, becoming lighter and thinner, but human collaboration based on the internet has become more and more diverse, unleashing unparalleled collective intelligence.

The images in this article were created by QoderWork.
