By Que Xian, Wen Ting, Fu Li and Zhi Liu

As capabilities such as large language models (LLMs), Automatic Speech Recognition (ASR), and Text-to-Speech (TTS) mature, AI agents are evolving from text-based interaction to speech interaction. Typical scenarios include AI teachers, AI emotional companions, and AI assistants. Compared to text input, speech is more natural and real-time, allowing users to complete questions, exercises, task triggers, and multi-round dialogues simply by speaking. This transition has truly brought "conversation with agents" into actual business scenarios.

However, when agent-based speech interaction enters high-concurrency scenarios, many teams find that the first bottleneck is often not the model itself but the message link supporting the real-time interaction. Issues such as massive session management, high-frequency small-packet transmission, asynchronous result push-back, and session lifecycle management appear simultaneously. To make agent-based speech interaction truly more stable and faster, the underlying link design is often the key.

This article examines a typical high-concurrency intelligent speech interaction scenario to demonstrate architectural best practices. It introduces how to build a stable, reliable, and efficient real-time speech message link architecture using the LiteTopic feature of ApsaraMQ for RocketMQ.

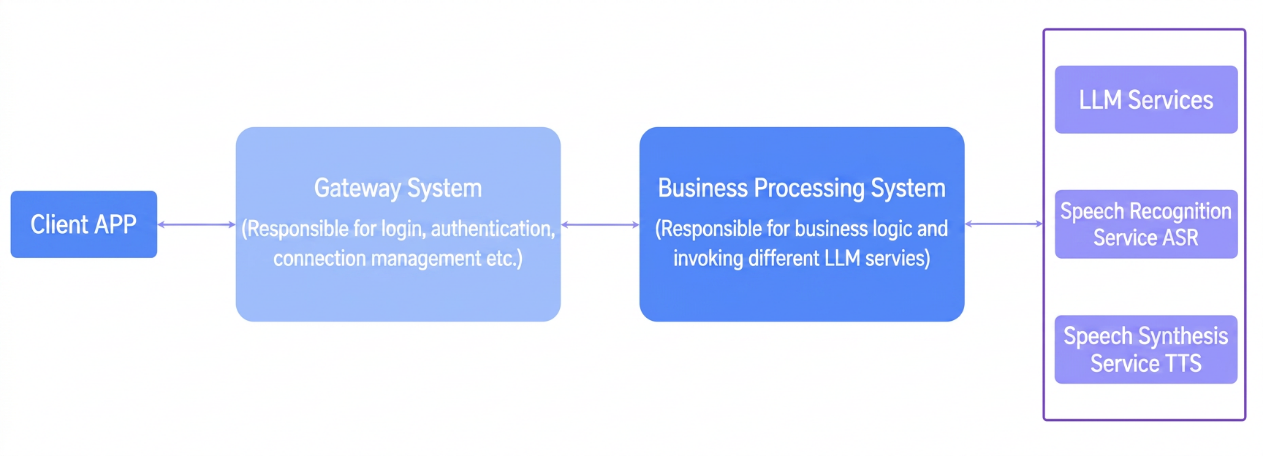

In agent-based speech interaction scenarios, the system does not simply "receive one sentence and return one sentence." Behind a complete interaction lies the synergy between the client, gateway, business processing systems, and multiple services like LLMs, ASR, and TTS.

Therefore, intelligent speech interaction places higher demands on the technical architecture:

● Massive session management: As business scales, concurrent connections and active sessions rise rapidly. Each user interaction is an independent session, requiring the system to maintain tens or even hundreds of thousands of long connections simultaneously.

● High-frequency small-packet transmission: A session is formed from the moment a user presses the record button until they release it. During this period, the client slices the audio stream into small packets for continuous transmission; ensuring these packets are continuous and not lost is essential for business accuracy.

● Strict timeliness: Clients are extremely sensitive to latency. If no response is received for a significant period, the user experience drops noticeably. This places higher demands on LLM throughput in high-concurrency scenarios and the real-time response notification capabilities of the system.

Consequently, many systems that seem to "work" at low concurrency quickly see their underlying message architecture problems magnified in high-concurrency, real-time speech scenarios.

In the practical implementation of intelligent speech interaction, traditional message architectures often expose several typical issues when supporting high-concurrency, low-latency scenarios:

The message flow path for speech interaction typically spans: APP <-> Gateway <-> BizProcessSystem (Route) <-> LLM/ASR/TTS. WebSocket persistent connections are maintained between the APP and Gateway, as well as between the BizProcessSystem and LLM.

In this architecture, the entire link must strictly maintain session stickiness. This means a user's upstream audio stream and downstream feedback must be precisely routed to the specific gateway node they are currently connected to, as well as the corresponding backend processing instance.

The problem is that in a distributed environment, maintaining a dynamic mapping table of "Session ID to Physical Node IP" is inherently complex. If gateway nodes scale out, restart, or experience network fluctuations, route table synchronization delays can easily cause messages to be delivered to the wrong node, leading to disconnected links and data loss, which disrupts interaction continuity.

The inference process for LLMs is usually time-consuming and fluctuates significantly (ranging from seconds to minutes). Using a synchronous waiting mode would occupy gateway and business threads for a long time, causing system throughput to plummet and potentially triggering a "snowball effect" of resource exhaustion.

To improve throughput, LLM calls are usually transformed into asynchronous processing. However, a new challenge arises after asynchronization: how can the final calculated results (such as ASR text or TTS audio) be pushed accurately and in real time back to the specific user connection that initiated the request?

Relying on complex callback polling or status queries is not only difficult to implement but also further increases latency and maintenance costs. This is one of the core difficulties in speech interaction architecture design.
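One way to picture the push-back problem is a single gateway process that parks each session on a future and completes it when the asynchronous result arrives. The following is a minimal in-process sketch, not the implementation described in this article: the `Gateway` class, `slow_inference`, and the delays are all hypothetical, and the sketch deliberately ignores the multi-node case.

```python
import asyncio


class Gateway:
    """Hypothetical gateway that correlates async results by session ID."""

    def __init__(self) -> None:
        # session_id -> future awaited by the connection handler
        self.pending = {}

    def register(self, session_id: str) -> asyncio.Future:
        fut = asyncio.get_running_loop().create_future()
        self.pending[session_id] = fut
        return fut

    def on_result(self, session_id: str, payload: str) -> bool:
        fut = self.pending.pop(session_id, None)
        if fut is None or fut.done():
            return False  # no live connection on this node: the result is dropped
        fut.set_result(payload)
        return True


async def slow_inference(gateway: Gateway, session_id: str, delay: float) -> None:
    # Stand-in for an LLM/ASR/TTS call; the delay is illustrative only.
    await asyncio.sleep(delay)
    gateway.on_result(session_id, f"text-for-{session_id}")


async def demo() -> list:
    gw = Gateway()
    delays = {"s1": 0.03, "s2": 0.01, "s3": 0.02}
    futures = [gw.register(s) for s in delays]
    workers = [asyncio.create_task(slow_inference(gw, s, d)) for s, d in delays.items()]
    results = await asyncio.gather(*futures)  # no thread is blocked while waiting
    await asyncio.gather(*workers)
    return list(results)


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Within one process the lookup by session ID is trivial. The difficulty described above appears once the result is produced on a different node from the one holding the user connection, where `on_result` would simply return `False`.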

From a business logic perspective, establishing an isolated communication channel for each independent speech session is the ideal way to avoid data crosstalk.

However, creating a standard RocketMQ topic for every session would lead to a significant metadata explosion. A massive number of temporary topics would severely consume the memory and CPU resources of name servers and brokers, leading to a sharp decline in cluster performance and even affecting availability.

Previously, some scenarios used broadcast messaging to circumvent this. While simple to implement, it has two major flaws:

● Messages are repeatedly delivered and filtered across all nodes, wasting significant network traffic and computing resources.

● Since all nodes must process all messages, a single node's processing capacity becomes the upper limit for the entire system, which limits horizontal scalability.

Therefore, the broadcast mode struggles to support continuously growing high-concurrency speech services.
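The cost of broadcasting can be made concrete with a little arithmetic. The sketch below assumes that each response message is relevant to exactly one gateway node; the function names and the figures in the example are illustrative, not measurements.

```python
def broadcast_deliveries(messages: int, nodes: int) -> int:
    """Every node receives every message."""
    return messages * nodes


def targeted_deliveries(messages: int) -> int:
    """Each message goes only to the node that owns the session."""
    return messages


def wasted_fraction(nodes: int) -> float:
    """Share of broadcast deliveries that are filtered out and discarded."""
    return (nodes - 1) / nodes


if __name__ == "__main__":
    messages, nodes = 1_000_000, 20  # illustrative numbers
    print(broadcast_deliveries(messages, nodes))  # 20000000
    print(targeted_deliveries(messages))          # 1000000
    print(wasted_fraction(nodes))                 # 0.95
```

Under this assumption, adding nodes does not reduce the load per node in broadcast mode: each node still handles all `messages`, which is why scaling out stops helping.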

Voice sessions are typically highly temporary and are not permanent resources. A session's lifecycle might only be a few minutes of dialogue or exist only within a specific business cycle.

In traditional architectures, resources like routing records, cache states, and temporary channels often rely on scheduled scan tasks or manual cleanup after a session ends. This brings two typical problems:

● Delayed cleanup: Invalid resources accumulate over time, occupying system memory and computing resources.

● Premature cleanup: Legitimate interactions still in progress might be accidentally cut off.

The system needs a mechanism truly suited for temporary session scenarios that can achieve automatic creation, expiration, and destruction of channels.
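The lifecycle described here (create on first use, expire when idle, destroy automatically) can be sketched with a small in-memory registry. This is an illustration under assumptions, not the behavior of any specific broker: `ChannelRegistry` is hypothetical, and the choice of an idle-based TTL that is refreshed on every use is an assumption of the sketch.

```python
class ChannelRegistry:
    """In-memory stand-in for auto-created channels with idle-TTL expiry."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl = ttl_seconds
        self._last_used = {}  # session_id -> timestamp of last activity

    def touch(self, session_id: str, now: float) -> None:
        """Create the channel on first use and refresh its expiry on every use."""
        self._last_used[session_id] = now

    def expire(self, now: float) -> list:
        """Drop channels idle for longer than the TTL and return their IDs."""
        dead = [s for s, t in self._last_used.items() if now - t > self.ttl]
        for s in dead:
            del self._last_used[s]
        return dead

    def active(self) -> set:
        return set(self._last_used)


if __name__ == "__main__":
    reg = ChannelRegistry(ttl_seconds=60)
    reg.touch("session-a", now=0)
    reg.touch("session-b", now=50)
    print(reg.expire(now=100))  # ['session-a']
    print(reg.active())         # {'session-b'}
```

Because activity refreshes the timestamp, a session that is still exchanging messages is never collected, while an abandoned one disappears without a scan job owned by the application.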

To address these issues, a message middleware architecture better suited for high-concurrency intelligent speech interaction can be built using the LiteTopic model of ApsaraMQ for RocketMQ.

LiteTopic supports the dynamic creation of massive lightweight topics, possesses native session isolation capabilities, and includes a built-in TTL automatic cleanup mechanism. These features align perfectly with the requirements of agent-based speech interaction for "high concurrency, low latency, strong isolation, and easy recycling."

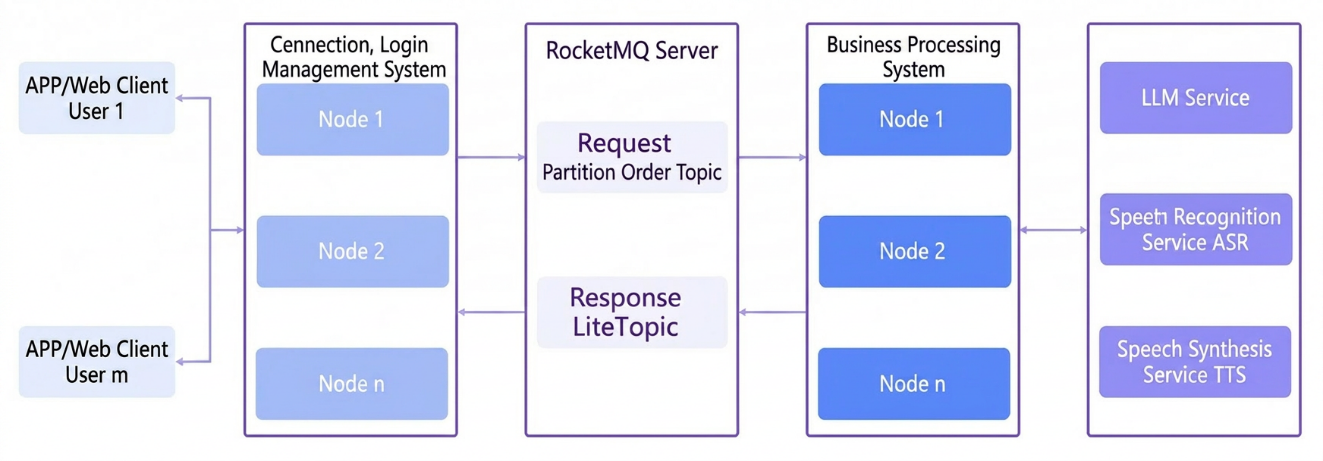

● Request side: sharded audio packets uploaded via partitioned order topics

Audio packets for the same session use the SessionID as the key for partitioning. Messages with the same key are delivered to the business processing system in the order they were sent, ensuring ordered message processing within a single session.

● Response side: model results asynchronously notified via LiteTopic
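For the request side, the effect of keying by SessionID can be illustrated as follows. This is a sketch under assumptions: the CRC32-modulo mapping and the names `pick_queue` and `route` are hypothetical stand-ins, and the mapping a real broker uses may differ. The point is only that a stable key-to-queue mapping keeps all packets of one session in a single FIFO queue.

```python
import zlib


def pick_queue(session_id: str, queue_count: int) -> int:
    """Map a session ID to a queue index with a stable hash (assumed scheme)."""
    return zlib.crc32(session_id.encode("utf-8")) % queue_count


def route(packets, queue_count: int):
    """Append each (session_id, seq) packet to its session's queue in arrival order."""
    queues = [[] for _ in range(queue_count)]
    for session_id, seq in packets:
        queues[pick_queue(session_id, queue_count)].append((session_id, seq))
    return queues


if __name__ == "__main__":
    # Audio slices from two sessions arrive interleaved.
    packets = [("s1", 0), ("s2", 0), ("s1", 1), ("s2", 1), ("s1", 2)]
    for index, queue in enumerate(route(packets, queue_count=4)):
        print(index, queue)
```

Ordering is preserved per session rather than globally, which is what a single speech session needs: different sessions can be consumed in parallel from different queues.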

RocketMQ LiteTopic establishes a comprehensive and fine-grained monitoring, alerting, and troubleshooting system based on Cloud Monitor:

● Intelligent alerting: Configure message accumulation thresholds for LiteTopics. If a session link's latency exceeds expectations, an alert is triggered immediately.

● Fast fault localization: Upon receiving an alert, O&M personnel can view the list of LiteTopics with the highest accumulation and their corresponding consumer IP addresses directly on the group details page of the console. This fine-grained visibility turns "needle-in-a-haystack" troubleshooting into precise, minute-level fixes.
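The logic behind the two items above (alert on a threshold, then rank by accumulation) can be sketched locally. This is not the Cloud Monitor or console API: the function names, the backlog dictionary, and the threshold are hypothetical inputs chosen for illustration.

```python
def over_threshold(backlog: dict, threshold: int) -> list:
    """Return the channels whose accumulated message count exceeds the threshold."""
    return sorted(topic for topic, count in backlog.items() if count > threshold)


def top_accumulated(backlog: dict, n: int) -> list:
    """Return the n channels with the largest backlog, largest first."""
    return sorted(backlog.items(), key=lambda kv: (-kv[1], kv[0]))[:n]


if __name__ == "__main__":
    # Hypothetical per-session backlog snapshot.
    backlog = {"s1": 5, "s2": 120, "s3": 300, "s4": 120}
    print(over_threshold(backlog, threshold=100))  # ['s2', 's3', 's4']
    print(top_accumulated(backlog, n=2))           # [('s3', 300), ('s2', 120)]
```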

Based on the native features of RocketMQ LiteTopic, such as being lightweight, flexible in subscription, automatically created, and automatically deleted via TTL, this solution offers three major architectural benefits:

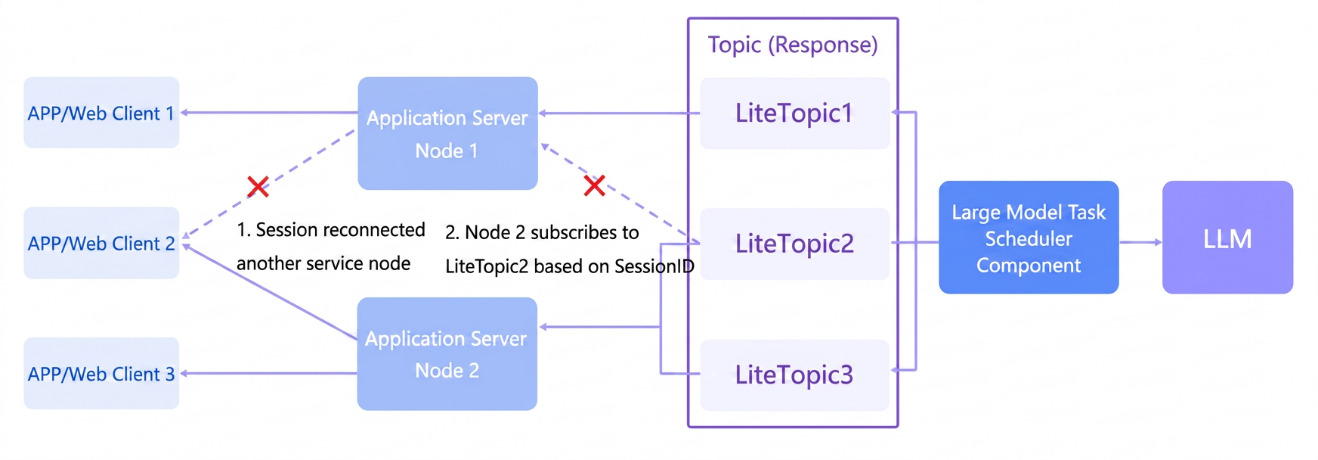

Through LiteTopic's automatic creation, "one-session-one-channel" model, and dynamic subscription mechanism, the system can establish an independent response channel for every speech session. Regardless of how long LLM inference takes, messages flow in order within their dedicated channels, avoiding crosstalk between sessions.

Furthermore, even if backend services scale or network fluctuations occur, response messages can still return to the gateway node where the user is currently connected, preserving session stickiness and continuity across long-lived connections.
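The "one-session-one-channel" idea with dynamic subscription can be modeled in a few lines. The sketch below is a toy in-memory model and not the RocketMQ client API: `LiteBroker`, its methods, and the gateway names are hypothetical. It assumes that the gateway currently holding the user connection subscribes to the session's channel, and that publishers address messages by session ID only.

```python
from collections import defaultdict


class LiteBroker:
    """Toy in-memory model of per-session channels (not a real client API)."""

    def __init__(self) -> None:
        self.subscriber = {}            # session_id -> gateway currently subscribed
        self.inbox = defaultdict(list)  # gateway -> delivered (session_id, payload)

    def subscribe(self, gateway: str, session_id: str) -> None:
        """The gateway holding the connection takes over the session's channel."""
        self.subscriber[session_id] = gateway

    def publish(self, session_id: str, payload: str):
        """Deliver by session ID; the publisher never needs a node address."""
        gateway = self.subscriber.get(session_id)
        if gateway is not None:
            self.inbox[gateway].append((session_id, payload))
        return gateway


if __name__ == "__main__":
    broker = LiteBroker()
    broker.subscribe("gw-a", "s1")
    broker.subscribe("gw-a", "s2")
    broker.publish("s1", "r1")
    broker.subscribe("gw-b", "s1")  # the user reconnects to another gateway
    broker.publish("s1", "r2")
    broker.publish("s2", "r3")
    print(dict(broker.inbox))
```

In this model the backend only knows the session ID, so a reconnection changes the subscriber and nothing else; no application-level route table has to be synchronized.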

Additionally, the LiteTopic asynchronous notification mechanism avoids blocking threads for long periods, further improving overall throughput and providing users with a smooth speech interaction experience even during peak periods.

In traditional solutions, applications often need to maintain "Session ID to Node IP" routing mappings, along with heartbeat and exception cleanup logic, resulting in complex state management and high maintenance costs.

With LiteTopic, routing logic is offloaded to the message middleware layer, and business code only needs to send and receive messages based on the SessionID. Application nodes thus become closer to stateless computing units, no longer heavily dependent on local connection state tables. This not only reduces state management complexity but also makes applications easier to scale elastically and recover from failures, thereby improving overall maintainability and disaster recovery capabilities.

In traditional architectures, "no-response" scenarios caused by message misrouting or timeouts often trigger client retries. This leads to the same audio segment being repeatedly sent for LLM inference, resulting in extra token consumption.

Through more precise session routing and reliable delivery mechanisms, this solution better ensures "one request, guaranteed response," significantly reducing repeated calls caused by link issues and directly lowering the cost of invalid LLM tokens.

From a business perspective, introducing RocketMQ LiteTopic typically yields significant improvements in the following areas:

● More stable user experience: Significantly reduces "no-response" issues caused by inconsistent connection states and improves the success rate of speech interactions. Even in network fluctuation scenarios, it better supports seamless reconnection and interaction continuity.

● Lower system complexity: No longer requires maintenance of complex custom route tables and state synchronization logic. Instead, it leverages the native capabilities of LiteTopic for session management, making the overall architecture simpler and easier to scale.

● More efficient troubleshooting: With fine-grained monitoring and alerting, potential performance bottlenecks can be discovered and handled before they affect users, markedly improving the efficiency of locating and fixing problems.

● More controllable resource costs: By leveraging the elasticity of ApsaraMQ for RocketMQ, businesses can pay by usage without reserving peak capacity in advance, while also reducing extra model consumption from repeated calls.

● Easier business expansion: A more lightweight and scalable link design lays the foundation for expanding into more real-time interactive scenarios, allowing for more confident handling of traffic growth.

When building agents, many teams prioritize model capabilities, inference results, and cost control. However, in high-concurrency real-time speech scenarios, the stability, precision, and scalability of the message link are equally indispensable for delivering these capabilities to users.

The solution based on RocketMQ LiteTopic essentially addresses several key questions:

● How to isolate massive sessions.

● How to maintain session stickiness across long-lived connections.

● How to precisely push back asynchronous results.

● How to automatically manage temporary channels.

● How to maintain observability and scalability under high concurrency.

RocketMQ LiteTopic provides a message architecture approach better suited for high-concurrency real-time interaction. For teams advancing the engineering of agents, real-time interactions, and LLM applications, especially those needing to support massive dynamic sessions, low-latency responses, and flexible expansion, these capabilities are moving from "nice-to-have" to "must-have".

You are welcome to join the RocketMQ for AI user community on DingTalk (group ID: 110085036316) to exchange ideas and discuss with us.
