Prerequisites

Before you begin, ensure that you have:

-

An Alibaba Cloud account. If you don't have one, register for one first.

-

A virtual private cloud (VPC) and a vSwitch. For setup instructions, see Create an IPv4 VPC.

Limitations

-

The source Elasticsearch cluster, Logstash cluster, and destination Elasticsearch cluster must all reside in the same VPC. If they are in different VPCs, configure a NAT gateway for the Logstash cluster so it can reach both clusters over the internet. See Configure a NAT gateway for data transmission over the internet.

-

The versions of all three clusters must meet compatibility requirements. Check Compatibility matrixes before proceeding.

Overview

This guide walks you through the following steps:

-

Prepare — Create source and destination Elasticsearch clusters, enable Auto Indexing on the destination cluster, and load test data.

-

Create a Logstash cluster — Create a Logstash cluster and wait for it to become active.

-

Create and run a pipeline — Configure the Logstash pipeline to sync data between the two clusters.

-

Verify results — Query the destination cluster in Kibana to confirm the data arrived.

Prepare

Create Elasticsearch clusters

-

Log in to the Elasticsearch console.

-

In the left-side navigation pane, click Elasticsearch Clusters.

-

Click Create and create two Elasticsearch clusters — one as the source and one as the destination. This example uses a Logstash V6.7.0 cluster to sync data between two Elasticsearch V6.7.0 Standard Edition clusters. The pipeline configuration in this guide applies to this combination. For other version combinations, verify compatibility in Compatibility matrixes first — if your clusters don't meet requirements, upgrade their versions or create new clusters.

The default user for accessing an Elasticsearch cluster is `elastic`. To use a different user, grant the required permissions to a role and assign that role to the user. See Use the RBAC mechanism provided by Elasticsearch X-Pack to implement access control.

Enable Auto Indexing on the destination cluster

Enable the Auto Indexing feature for the destination Elasticsearch cluster. See Configure the YML file for instructions.

Alibaba Cloud Elasticsearch disables Auto Indexing by default for security reasons. When Logstash writes data to an Elasticsearch cluster, it creates indexes by submitting data directly — not by calling the create index API. Enable Auto Indexing on the destination cluster before running the pipeline, or manually create the index and configure its mappings in advance.
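If you create the destination index yourself instead of enabling Auto Indexing, one approach is to copy the source index's settings and mappings. The following Python sketch assumes you have already retrieved the JSON responses of `GET /my_index/_settings` and `GET /my_index/_mapping` from the source cluster. The function name `build_create_index_body` is hypothetical. The function drops the settings that Elasticsearch generates for an existing index (`uuid`, `creation_date`, `version`, `provided_name`). To our knowledge, a create-index request rejects these settings if you send them back.

```python
# Settings that Elasticsearch generates for an existing index. GET /<index>/_settings
# returns them, but they should not be sent back in a create-index request.
READ_ONLY_SETTINGS = {"uuid", "creation_date", "version", "provided_name"}


def build_create_index_body(index, settings_response, mapping_response):
    """Build a PUT /<index> body from the source cluster's _settings and _mapping responses."""
    # Shallow copy so the caller's response dict is not modified.
    index_settings = dict(settings_response[index]["settings"]["index"])
    for key in READ_ONLY_SETTINGS:
        index_settings.pop(key, None)
    return {
        "settings": {"index": index_settings},
        # In 6.x the mappings are keyed by type name, e.g. {"my_type": {"properties": ...}}.
        "mappings": mapping_response[index]["mappings"],
    }
```

Serialize the result with `json.dumps(body, indent=2)` and send it as the body of `PUT /my_index` on the destination cluster. Repeat this for each index that the pipeline will write to.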

Load test data

Log in to the Kibana console of the source Elasticsearch cluster, go to Dev Tools, and run the following commands on the Console tab.

The code below applies only to Elasticsearch V6.7 clusters and is for testing only. For V7.0 and later clusters, see Getting started with Elasticsearch. For instructions on logging in to the Kibana console, see Log on to the Kibana console.

-

Create an index named `my_index` with type `my_type`.

```
PUT /my_index
{
  "settings": {
    "index": {
      "number_of_shards": "5",
      "number_of_replicas": "1"
    }
  },
  "mappings": {
    "my_type": {
      "properties": {
        "post_date": { "type": "date" },
        "tags": { "type": "keyword" },
        "title": { "type": "text" }
      }
    }
  }
}
```

-

Insert document `1` into `my_index`.

```
PUT /my_index/my_type/1?pretty
{
  "title": "One",
  "tags": ["ruby"],
  "post_date": "2009-11-15T13:00:00"
}
```

-

Insert document `2` into `my_index`.

```
PUT /my_index/my_type/2?pretty
{
  "title": "Two",
  "tags": ["ruby"],
  "post_date": "2009-11-15T14:00:00"
}
```
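Instead of sending a separate `PUT` request for each test document, you can load them all in one `_bulk` request. The following Python sketch builds the newline-delimited body for that request. It assumes the 6.x bulk format, in which each action line carries `_type`. `build_bulk_body` is a hypothetical helper and is not part of any client library.

```python
import json


def build_bulk_body(index, doc_type, docs):
    """Return an NDJSON body for POST /_bulk that indexes each (id, source) pair.

    Each action line and each source line is one JSON object on its own line.
    The _bulk API requires the body to end with a newline.
    """
    lines = []
    for doc_id, source in docs:
        lines.append(json.dumps({"index": {"_index": index, "_type": doc_type, "_id": doc_id}}))
        lines.append(json.dumps(source))
    return "\n".join(lines) + "\n"
```

Pass `"my_index"`, `"my_type"`, and the two `(id, document)` pairs from the steps above. Then paste the returned text below a `POST /_bulk` line in the Kibana Console.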

Step 1: Create a Logstash cluster

-

In the Elasticsearch console, select the region where your destination Elasticsearch cluster resides from the top navigation bar.

-

In the left-side navigation pane, click Logstash Clusters.

-

On the Logstash Clusters page, click Create.

-

On the buy page, configure the cluster. For this example, set Billing Method to Pay-as-you-go and Logstash Version to 6.7, and keep other settings at their defaults. For a full parameter reference, see Create an Alibaba Cloud Logstash cluster.

Pay-as-you-go clusters are suitable for development and testing. Subscription clusters offer discounts for production use.

-

Accept the Logstash (Pay-as-you-go) Terms of Service and click Buy Now.

-

After the cluster is created, click Console. In the top navigation bar, select the region where the Logstash cluster resides. In the left-side navigation pane, click Logstash Clusters and confirm the new cluster appears in the list.

Step 2: Create and run a pipeline

Wait for the Logstash cluster state to change to Active before proceeding.

-

On the Logstash Clusters page, find your cluster and click Manage Pipeline in the Actions column.

-

On the Pipelines page, click Create Pipeline.

-

In the Config Settings step, set a Pipeline ID and enter the pipeline configuration. The following configuration syncs every index except the `.monitoring*`, `.security*`, and `.kibana*` system indexes from the source cluster to the destination cluster. It uses the `elasticsearch` input and output plug-ins and the `file_extend` output plug-in for debug logging.

```
input {
  elasticsearch {
    hosts => ["http://es-cn-0pp1f1y5g000h****.elasticsearch.aliyuncs.com:9200"]
    user => "elastic"
    password => "your_password"
    index => "*,-.monitoring*,-.security*,-.kibana*"
    docinfo => true
  }
}
filter {}
output {
  elasticsearch {
    hosts => ["http://es-cn-mp91cbxsm000c****.elasticsearch.aliyuncs.com:9200"]
    user => "elastic"
    password => "your_password"
    index => "%{[@metadata][_index]}"
    document_type => "%{[@metadata][_type]}"
    document_id => "%{[@metadata][_id]}"
  }
  file_extend {
    path => "/ssd/1/ls-cn-v0h1kzca****/logstash/logs/debug/test"
  }
}
```

Replace the placeholders:

| Placeholder | Description | Example |
|---|---|---|
| `es-cn-0pp1f1y5g000h****` | ID of the source Elasticsearch cluster | `es-cn-0pp1f1y5g000h4udc` |
| `es-cn-mp91cbxsm000c****` | ID of the destination Elasticsearch cluster | `es-cn-mp91cbxsm000c3ugr` |
| `your_password` | Password for the Elasticsearch user | N/A |
| `ls-cn-v0h1kzca****` | ID of the Logstash cluster (used in the `file_extend` path) | `ls-cn-v0h1kzca4ool` |

`input` parameters:

| Parameter | Required | Description |
|---|---|---|
| `hosts` | Yes | Endpoint of the source Elasticsearch cluster, in the format `http://<source-cluster-id>.elasticsearch.aliyuncs.com:9200`. |
| `user` | Yes | Username for the source cluster. The default is `elastic`. |
| `password` | Yes | Password for the source cluster user. To reset a forgotten password, see Reset the access password for an Elasticsearch cluster. |
| `index` | Yes | Indexes to read from. The value `*,-.monitoring*,-.security*,-.kibana*` reads all indexes except those whose names start with `.monitoring`, `.security`, or `.kibana`. These system indexes hold monitoring, security, and Kibana data and do not need to be synced. |
| `docinfo` | No | Set to `true` to extract document metadata (`_index`, `_type`, `_id`) and make it available in `[@metadata]`. Required if the destination index name, type, and document IDs must match the source. |

`output` parameters:

| Parameter | Required | Description |
|---|---|---|
| `hosts` | Yes | Endpoint of the destination Elasticsearch cluster, in the format `http://<destination-cluster-id>.elasticsearch.aliyuncs.com:9200`. |
| `user` | Yes | Username for the destination cluster. The default is `elastic`. |
| `password` | Yes | Password for the destination cluster user. |
| `index` | Yes | Destination index name. The value `%{[@metadata][_index]}` reuses the source index name. This requires `docinfo => true` in `input`. |
| `document_type` | No | Type of the destination index. The value `%{[@metadata][_type]}` matches the source type. |
| `document_id` | No | IDs for documents in the destination index. The value `%{[@metadata][_id]}` preserves the original document IDs. |
| `file_extend` > `path` | No | Path for debug logs. Install the `logstash-output-file_extend` plug-in before using this parameter. See Install and remove a plug-in. To get the correct path, click Start Configuration Debug in the console. Do not change the system-specified default path. |

For more on the Config Settings syntax and supported data types, see Structure of a Config File.

-

Click Next to configure pipeline parameters. Set Pipeline Workers to the number of vCPUs on your Logstash cluster, and keep other settings at their defaults. For details, see Use configuration files to manage pipelines.

-

Click Save and Deploy.

-

Save stores the configuration and triggers a cluster change, but the pipeline does not start running. To start it, find the pipeline on the Pipelines page and click Deploy.

-

Save and Deploy restarts the Logstash cluster immediately and makes the settings take effect.

-

-

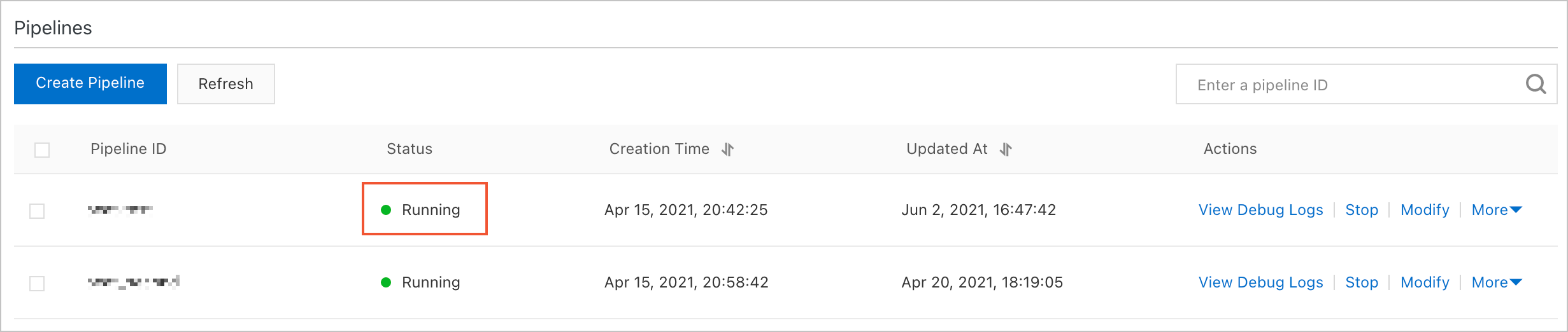

Click OK to confirm. The new pipeline appears in the Pipelines section. Once its state changes to Running, data synchronization begins.
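Before you deploy, you can check offline which indexes the pipeline will read and where it will write them. The following Python sketch is an approximation and does not reproduce Logstash or Elasticsearch code. Both function names are hypothetical.

- `resolve_index_pattern` applies the comma-separated `index` expression from left to right using `fnmatch`. Unlike Elasticsearch, `fnmatch` also treats `?` and `[...]` as wildcards.
- `resolve_field_refs` substitutes bracketed `%{[...]}` references from a nested event. A reference to a missing field is left as literal text. As far as we understand, Logstash handles a missing field the same way, for example `[@metadata][_index]` when `docinfo` is not enabled.

In this sketch, `*` also matches dot-prefixed names. As a result, dot-prefixed indexes that none of the three exclusions cover, such as a hypothetical `.tasks` index, are still selected.

```python
import fnmatch
import re


def resolve_index_pattern(pattern, index_names):
    """Approximate how an expression such as "*,-.monitoring*,-.security*,-.kibana*"
    selects indexes.

    Parts are applied left to right. A plain part adds the indexes it matches.
    A part that starts with "-" removes matching indexes from the current selection.
    """
    selected = []
    for part in pattern.split(","):
        part = part.strip()
        if part.startswith("-"):
            excluded = part[1:]
            selected = [name for name in selected if not fnmatch.fnmatchcase(name, excluded)]
        else:
            for name in index_names:
                if fnmatch.fnmatchcase(name, part) and name not in selected:
                    selected.append(name)
    return selected


# Matches bracketed field references such as %{[@metadata][_index]}.
FIELD_REF = re.compile(r"%\{((?:\[[^\]]+\])+)\}")


def resolve_field_refs(template, event):
    """Replace each %{[a][b]} reference with the value at event["a"]["b"].

    A reference to a missing field is left unchanged in the output.
    """
    def substitute(match):
        value = event
        for key in re.findall(r"\[([^\]]+)\]", match.group(1)):
            if not isinstance(value, dict) or key not in value:
                return match.group(0)
            value = value[key]
        return str(value)

    return FIELD_REF.sub(substitute, template)
```

For example, the configuration's pattern applied to `["my_index", ".kibana_1"]` returns only `my_index`. If the event metadata contains `_index` set to `my_index`, `%{[@metadata][_index]}` resolves to `my_index`.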

Step 3: Verify synchronization results

-

Log in to the Kibana console of the destination Elasticsearch cluster. For instructions, see Log on to the Kibana console.

This example uses an Elasticsearch V6.7.0 cluster. The steps may differ for other versions.

-

In the left-side navigation pane, click Dev Tools.

-

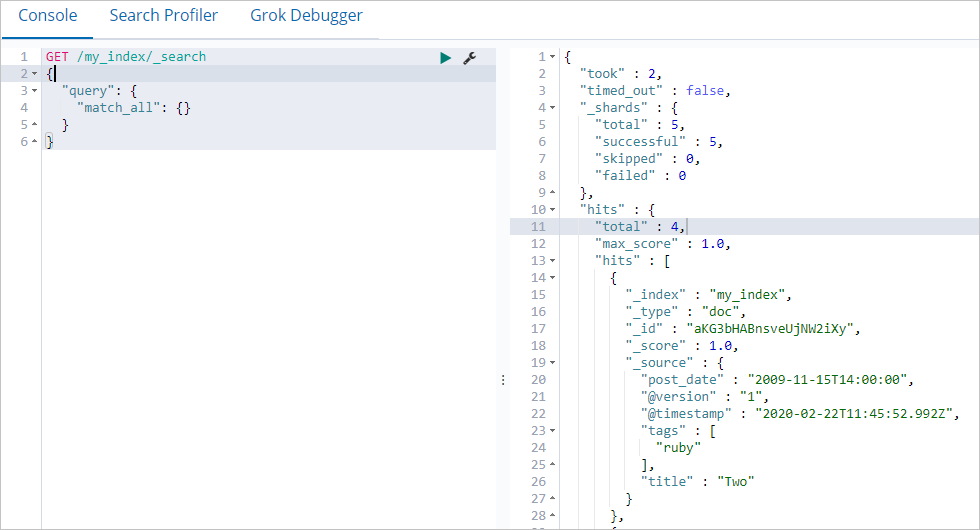

On the Console tab, run the following query:

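```
GET /my_index/_search
{
  "query": {
    "match_all": {}
  }
}
```

If synchronization succeeded, the destination cluster returns the same documents as the source cluster. To compare the indexes, run `GET _cat/indices?v` on both clusters and check that the `docs.count` value of each synced index on the destination cluster matches the value on the source cluster. The `store.size` values can differ even when every document arrived, because each cluster merges segments independently.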
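If you sync many indexes, comparing two `_cat/indices?v` tables by eye is error-prone. The following Python sketch parses the text output from each cluster and reports every index whose `docs.count` differs. The helper names are hypothetical. The parser assumes that every listed index is open. Closed indexes print empty columns, and this simple whitespace split does not handle them.

```python
def parse_cat_indices(text):
    """Parse the plain-text output of GET _cat/indices?v into {index: row_dict}."""
    lines = [line for line in text.strip().splitlines() if line.strip()]
    if not lines:
        return {}
    header = lines[0].split()  # e.g. health, status, index, uuid, pri, rep, docs.count, ...
    rows = {}
    for line in lines[1:]:
        row = dict(zip(header, line.split()))
        rows[row["index"]] = row
    return rows


def compare_doc_counts(source_text, destination_text, indexes):
    """Return {index: (source_count, destination_count)} for indexes whose counts differ.

    An index that is missing on one side is reported with a count of None.
    """
    source = parse_cat_indices(source_text)
    destination = parse_cat_indices(destination_text)
    mismatches = {}
    for name in indexes:
        src = int(source[name]["docs.count"]) if name in source else None
        dst = int(destination[name]["docs.count"]) if name in destination else None
        if src != dst:
            mismatches[name] = (src, dst)
    return mismatches
```

Pass in the output copied from both clusters and the names of the synced indexes, for example `["my_index"]`. An empty result means that all document counts match.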

What's next

-

Set up monitoring for your Logstash cluster:

-

Migrate data from a self-managed Elasticsearch cluster: Use Alibaba Cloud Logstash to migrate data from a self-managed Elasticsearch cluster to an Alibaba Cloud Elasticsearch cluster