This guide shows you how to use the pipeline configuration feature of Alibaba Cloud Logstash to migrate data from a self-managed Elasticsearch cluster to an Alibaba Cloud Elasticsearch cluster.

Logstash migrates data only; it does not migrate index mappings or settings. If you rely on automatic index creation on the destination cluster, data structures may be inconsistent before and after migration. To keep them consistent, manually create empty indexes on the destination cluster before you start the migration: copy the mappings and settings from the source cluster, and allocate an appropriate number of shards.
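As a rough illustration of copying mappings and settings, the sketch below turns one entry of a `GET /<index>` response from the source cluster into a body you could send in a create-index request on the destination cluster. The helper name `build_create_body` and the sample index definition are hypothetical. The list of generated settings to strip (`uuid`, `version`, `creation_date`, `provided_name`) is an assumption based on common Elasticsearch behavior, so verify it against your version.

```python
import copy

# Settings that Elasticsearch generates for each index. They are assumed to be
# rejected when passed back in a create-index request, so they are stripped here.
GENERATED_SETTINGS = {"uuid", "version", "creation_date", "provided_name"}


def build_create_body(index_definition, number_of_shards=None):
    """Build a create-index body from one entry of a `GET /<index>` response."""
    settings = copy.deepcopy(index_definition.get("settings", {}).get("index", {}))
    for key in GENERATED_SETTINGS:
        settings.pop(key, None)
    if number_of_shards is not None:
        # Optionally override the shard count for the destination cluster.
        settings["number_of_shards"] = str(number_of_shards)
    return {
        "settings": {"index": settings},
        "mappings": copy.deepcopy(index_definition.get("mappings", {})),
    }


# Hypothetical source definition, shaped like the value under the index name
# in a `GET /<index>` response.
source_definition = {
    "mappings": {"properties": {"title": {"type": "text"}}},
    "settings": {
        "index": {
            "number_of_shards": "1",
            "number_of_replicas": "1",
            "uuid": "abc123",
            "creation_date": "1700000000000",
            "provided_name": "articles",
            "version": {"created": "8170000"},
        }
    },
}

print(build_create_body(source_definition, number_of_shards=3))
```

The resulting dictionary can be pasted as the JSON body of `PUT /<index>` in the Kibana console of the destination cluster.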

Prerequisites

Before you begin, make sure that:

- The Elastic Compute Service (ECS) instance hosting your self-managed Elasticsearch cluster is in a virtual private cloud (VPC). ECS instances connected through ClassicLink are not supported.
- The security group of that ECS instance allows inbound access from the Logstash cluster node IP addresses on port 9200. Find the node IP addresses on the Basic Information page of your Logstash instance.
- Your Alibaba Cloud Logstash instance and the ECS instance are in the same VPC. If they are in different networks, configure a NAT Gateway for public network access. For details, see Configure a NAT gateway for public data transmission.
- The versions are compatible. This guide uses self-managed Elasticsearch 8.17, Alibaba Cloud Elasticsearch 8.17, and Alibaba Cloud Logstash 8.11.4. The pipeline configuration in this guide applies to this version combination only. For other versions, check Product compatibility before proceeding.
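To sanity-check the port 9200 prerequisite, a small TCP probe such as the sketch below may help. It is only an approximation: it must run from a machine you control in the same VPC (not from the managed Logstash nodes), and security group rules are evaluated per source IP address, so a successful probe from another host does not prove that the Logstash nodes are allowed. The function name `port_is_reachable` is hypothetical.

```python
import socket


def port_is_reachable(host, port=9200, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False
```

For example, `port_is_reachable("<IP address of the self-managed Elasticsearch master node>")` returns `True` when the node accepts connections on port 9200 from the machine running the probe.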

Migration steps

The migration involves three steps:

Step 1: Prepare the environment

- Set up a self-managed Elasticsearch cluster on ECS. This guide uses version 8.17 as an example. For installation instructions, see Install and run Elasticsearch.
- Create an Alibaba Cloud Logstash instance in the same VPC as your ECS instance. For details, see Create an Alibaba Cloud Logstash instance.
- Create a destination Alibaba Cloud Elasticsearch instance and enable automatic index creation.
  - Create an Elasticsearch instance in the same VPC as the Logstash instance, using the same version (8.17 in this example). For details, see Create an Alibaba Cloud Elasticsearch instance.
  - To enable automatic index creation, see Configure YML parameters.

Step 2: Configure and run the Logstash pipeline

- Go to the Logstash Clusters page in the Alibaba Cloud Elasticsearch console.
- In the top navigation bar, select the region where your cluster resides. On the Logstash Clusters page, find the cluster and click its ID.
- In the left-side navigation pane, click Pipelines.
- On the Pipelines page, click Create Pipeline.
- On the Create Pipeline Task page, enter a pipeline ID and configure the pipeline using the following configuration.

      input {
        elasticsearch {
          hosts => ["http://<IP address of the self-managed Elasticsearch master node>:9200"]
          user => "elastic"
          index => "*,-.monitoring*,-.security*,-.kibana*"
          password => "your_password"
          docinfo => true
        }
      }
      filter {
      }
      output {
        elasticsearch {
          hosts => ["http://es-cn-mp91cbxsm000c****.elasticsearch.aliyuncs.com:9200"]
          user => "elastic"
          password => "your_password"
          index => "%{[@metadata][input][elasticsearch][_index]}"
          document_id => "%{[@metadata][input][elasticsearch][_id]}"
        }
        file_extend {
          path => "/ssd/1/ls-cn-v0h1kzca****/logstash/logs/debug/test"
        }
      }

  Replace the following placeholders with actual values:

  | Placeholder | Description |
  | --- | --- |
  | `<IP address of the self-managed Elasticsearch master node>` | The IP address of your self-managed Elasticsearch master node |
  | `es-cn-mp91cbxsm000c****` | Your Alibaba Cloud Elasticsearch instance ID |
  | `your_password` | The password for the respective Elasticsearch service |

  The pipeline reads all indexes from the source cluster (excluding system indexes that start with `.`) and writes them to the destination cluster, preserving index names and document IDs. The following table describes the key parameters.

  | Parameter | Description |
  | --- | --- |
  | `hosts` | The endpoint of the Elasticsearch service. In `input`, use `http://<master node IP>:<port>`. In `output`, use `http://<Alibaba Cloud Elasticsearch instance ID>.elasticsearch.aliyuncs.com:9200`. |
  | `user` | The username for accessing Elasticsearch. `user` and `password` are required. If X-Pack is not installed on your self-managed cluster, leave them blank. The default username for Alibaba Cloud Elasticsearch is `elastic`. To use a custom user, assign the required roles first. See Use Elasticsearch X-Pack role management to control user permissions. |
  | `password` | The password for accessing Elasticsearch. |
  | `index` (input) | Set to `*,-.monitoring*,-.security*,-.kibana*` to sync all indexes except system indexes that start with `.`. |
  | `index` (output) | Set to `%{[@metadata][input][elasticsearch][_index]}` to preserve the source index name on the destination cluster. |
  | `docinfo` | Set to `true` to fetch document metadata from the source cluster, including index, type, and ID. |
  | `document_id` | Set to `%{[@metadata][input][elasticsearch][_id]}` to preserve the source document ID on the destination cluster. |
  | `file_extend` | Optional. Enables the debug log feature. The `path` parameter specifies the log output path. Before using this parameter, install the `logstash-output-file_extend` plugin. See Install and remove a plugin and Use the Logstash pipeline to configure the debug feature. Click Start Configuration Debug to get the default path. Do not change it. |

  Prevent duplicate writes

  The Elasticsearch input plugin stops automatically after reading all data. Because Alibaba Cloud Logstash requires the process to run continuously, it restarts the pipeline when it stops, which can cause duplicate writes. To prevent this, add the `schedule` parameter to the `input` section to run the pipeline once at a specific time using a cron expression, then stop the pipeline manually after the first run.

      schedule => "20 13 5 3 *"

  This example runs the pipeline once at 13:20 on March 5. After the pipeline completes, stop it manually. For more information about cron syntax, see Scheduling in the Logstash documentation.

  For additional configuration options, see Logstash configuration file description.

-

Click Next to configure pipeline parameters.

WarningSaving and deploying the pipeline triggers a restart of the Logstash cluster. Make sure the restart does not affect your business before proceeding.

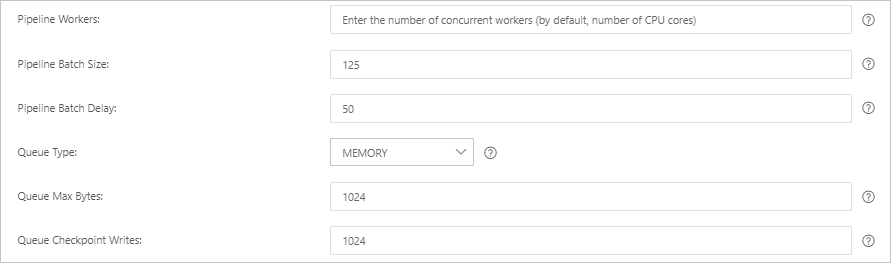

Parameter Description Pipeline Workers The number of worker threads that run the filter and output plugins in parallel. If events are backing up or CPU resources are underused, increase this value. Default: number of vCPUs. Pipeline Batch Size The maximum number of events a single worker thread collects from input plugins before running filter and output plugins. Larger values increase throughput but consume more memory. To support larger batches, increase the JVM heap size using the LS_HEAP_SIZEvariable. Default: 125.Pipeline Batch Delay The wait time (in milliseconds) before assigning a small batch to a pipeline worker thread. Default: 50 ms. Queue Type The internal queue model for buffering events. MEMORY (default): memory-based queue. PERSISTED: disk-based persistent queue. Queue Max Bytes The maximum data size for a queue, in MB. Valid values: integers from 1 to 2⁵³ - 1. Must be less than your total disk capacity. Default: 1024. Queue Checkpoint Writes The maximum number of events written before a checkpoint is enforced (for persistent queues). Set to 0for no limit. Default: 1024.

- Click Save or Save and Deploy.
  - Save: Stores the pipeline settings and triggers a cluster change, but the settings do not take effect immediately. On the Pipelines page, find the pipeline and click Deploy Now in the Actions column. The system then restarts the Logstash cluster to apply the settings.
  - Save and Deploy: Immediately restarts the Logstash cluster and applies the settings.
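The one-time `schedule` value described under "Prevent duplicate writes" is a five-field cron expression (minute, hour, day of month, month, day of week). As a minimal sketch, the hypothetical helper `one_shot_schedule` below derives that string from a planned start time. It assumes the time is expressed in whatever time zone the Logstash nodes use, which you should confirm for your instance.

```python
from datetime import datetime


def one_shot_schedule(run_at):
    """Format a datetime as 'minute hour day-of-month month *' for `schedule`."""
    return f"{run_at.minute} {run_at.hour} {run_at.day} {run_at.month} *"


# The year is arbitrary here: a five-field cron expression has no year field,
# which is why the pipeline still has to be stopped manually after the run.
run_at = datetime(2025, 3, 5, 13, 20)
print(f'schedule => "{one_shot_schedule(run_at)}"')
# prints: schedule => "20 13 5 3 *"
```

Pick a start time a few minutes after the planned deployment so that the cluster restart finishes before the schedule fires.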


Step 3: View the data migration result

-

In your Alibaba Cloud Elasticsearch instance, log on to the Kibana console. In the left navigation pane, click the

icon and choose Management > Developer Tools.

icon and choose Management > Developer Tools.This guide uses Alibaba Cloud Elasticsearch 8.17. Steps may differ for other versions. Refer to the actual console UI.

-

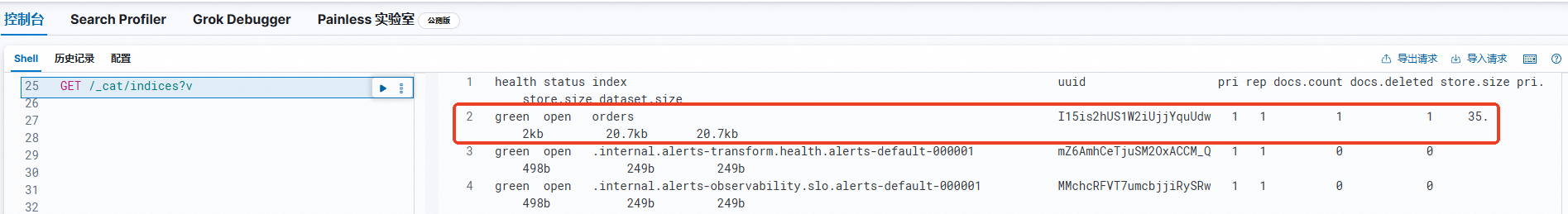

In the Console, run the following command to list all migrated indexes.

GET /_cat/indices?vThe output lists all indexes on the destination cluster. Confirm that the index names and document counts match those on the source cluster.
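Comparing index names and document counts by eye gets tedious with many indexes. The sketch below assumes you have run `GET /_cat/indices?format=json` on both clusters and loaded each response as a list of dictionaries. It reports every non-system source index whose `docs.count` differs on the destination or that is missing there. The function names and sample rows are hypothetical.

```python
def _counts(rows):
    """Map index name to document count, skipping system indexes that start with '.'."""
    return {
        row["index"]: int(row.get("docs.count") or 0)
        for row in rows
        if not row["index"].startswith(".")
    }


def compare_doc_counts(source_rows, dest_rows):
    """Return {index: (source_count, dest_count)} for each mismatching index.

    dest_count is None when the index does not exist on the destination.
    """
    source = _counts(source_rows)
    dest = _counts(dest_rows)
    return {
        name: (count, dest.get(name))
        for name, count in source.items()
        if dest.get(name) != count
    }


# Hypothetical rows, shaped like entries of a `_cat/indices?format=json` response.
source_rows = [
    {"index": "orders", "docs.count": "1200"},
    {"index": "users", "docs.count": "300"},
    {"index": ".kibana_1", "docs.count": "12"},
]
dest_rows = [
    {"index": "orders", "docs.count": "1200"},
    {"index": "users", "docs.count": "280"},
]

print(compare_doc_counts(source_rows, dest_rows))
# prints: {'users': (300, 280)}
```

An empty result suggests the counts line up. A mismatch reported right after the pipeline finishes may simply mean the destination has not refreshed yet, so it is worth rechecking before rerunning the migration.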

FAQ

My self-managed Elasticsearch cluster and Alibaba Cloud Logstash instance are under different accounts. How do I configure network connectivity?

Because the ECS instance and the Logstash instance are under different accounts, they are in different VPCs. Use Cloud Enterprise Network (CEN) to connect the two VPCs. For details, see Step 3: Load network instances.

What should I do if Logstash fails to write data?