By Yangkun Ai (Author of Apache RocketMQ 5.0 Java SDK, CNCF Envoy Contributor, CNCF OpenTelemetry Contributor, and Senior Development Engineer from Alibaba Cloud Intelligence)

The RocketMQ 5.0 SDK uses a new API, implements the communication layer using gRPC, and significantly improves observability.

The API here specifies different methods and behaviors of each interface and clarifies the entire message model.

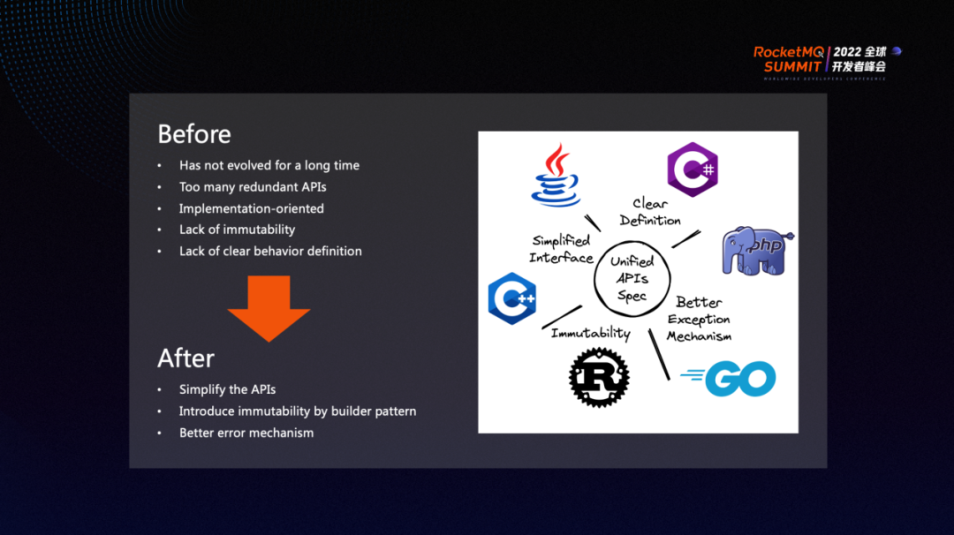

A long time has passed since the first version of the RocketMQ API. Because so many users depend on it, the API has changed little, and some interfaces that were meant to be deprecated or changed never received a follow-up iteration. In addition, some interface definitions are not clear enough. In RocketMQ 5.0, we therefore want to establish a unified specification, streamline the entire API, introduce more immutability through the builder pattern, and handle exceptions consistently, giving developers and users a cleaner interface.

The 5.0 API has already been defined and implemented for C++ and Java, and support for more languages is on the way. We also welcome more developers to participate in the work of the community. Here is the repository link for the 5.0 client

In addition to the interface changes above, RocketMQ 5.0 specifies four different client types: Producer, Push Consumer, Simple Consumer, and Pull Consumer.

Pull Consumer is still under development. The Producer's interfaces have been trimmed and its exception management standardized, but its functionality has not changed in any disruptive way. The same is true of Push Consumer. Simple Consumer hands more control to users: it is a consumer with which users actively control how messages are received and processed. Notably, both Push Consumer and Simple Consumer are implemented with RocketMQ's pop mechanism in the 5.0 SDK, which some members of the community may already be familiar with.

If users do not need to control the message receiving process and only care about how messages are consumed, Push Consumer may be a better choice.

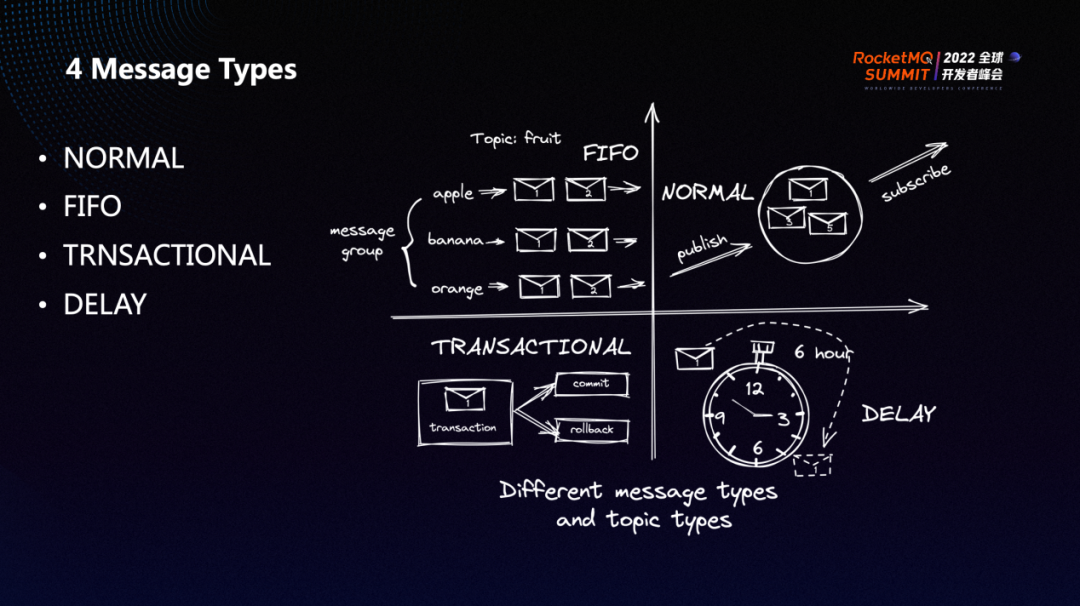

RocketMQ 5.0 defines four different message types. Earlier open-source versions did not emphasize the concept of a message type. It was introduced in 5.0 to meet operation and maintenance needs and to make the model definition complete.

The four types of messages above are mutually exclusive. We will identify their types in the metadata of the topic. In practice, if the message type does not match the topic type, some restrictions are imposed.
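The matching rule can be sketched in a few lines of Java. This is only an illustration: `MessageType` and `TopicTypeChecker` are hypothetical names, not SDK classes, and the four enum values (normal, FIFO, delay, transaction) are an assumption about the types shown in the figure above.

```java
import java.util.Map;

/** Assumed set of mutually exclusive message types (hypothetical enum, not an SDK class). */
enum MessageType { NORMAL, FIFO, DELAY, TRANSACTION }

/** Hypothetical helper that mimics a topic-type check against topic metadata. */
final class TopicTypeChecker {
    // Topic metadata: topic name -> the single message type the topic accepts.
    private final Map<String, MessageType> topicTypes;

    TopicTypeChecker(Map<String, MessageType> topicTypes) {
        this.topicTypes = Map.copyOf(topicTypes);
    }

    /** Returns true only if the topic is known and its type equals the message type. */
    boolean accepts(String topic, MessageType messageType) {
        return topicTypes.get(topic) == messageType;
    }
}
```

With a topic registered as `FIFO`, `accepts("orders", MessageType.FIFO)` is true, while a `NORMAL` message to the same topic is rejected.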

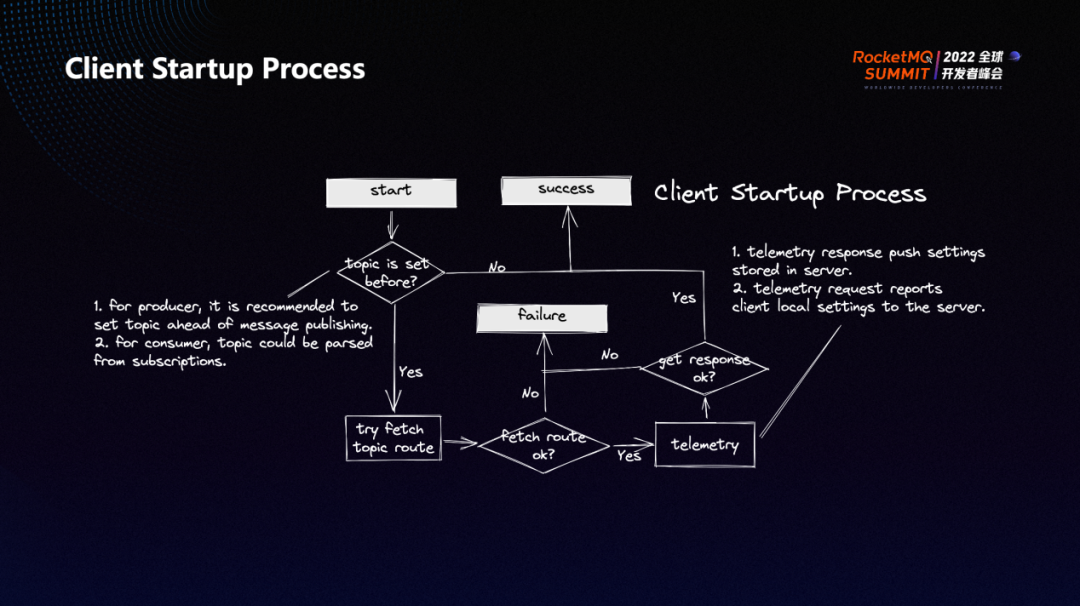

RocketMQ 5.0 does more preparation during client startup. For example, if a user specifies in advance the topics that messages will be sent to, the Producer attempts to fetch the routes of those topics during startup. In the previous client implementation, the route had to be fetched when a message was sent to a topic for the first time, which is similar to a cold start.

Obtaining routing information about a topic in advance has two benefits:

Similarly, the startup of the Consumer also has such a process.

In addition, we have added a Telemetry part between the client and the server, which establishes a channel for two-way data communication between them. The client and the server exchange configurations over this channel. For example, the server can push configuration to the client and manage the client better. The client can also use Telemetry to report its local configuration to the server so the server has a better understanding of the client settings. The client attempts to establish the Telemetry channel during startup. If the channel cannot be established, the client's startup is affected.

In general, the client completes as much preparation as possible during startup and, at the same time, establishes a communication channel (such as Telemetry) with the server.

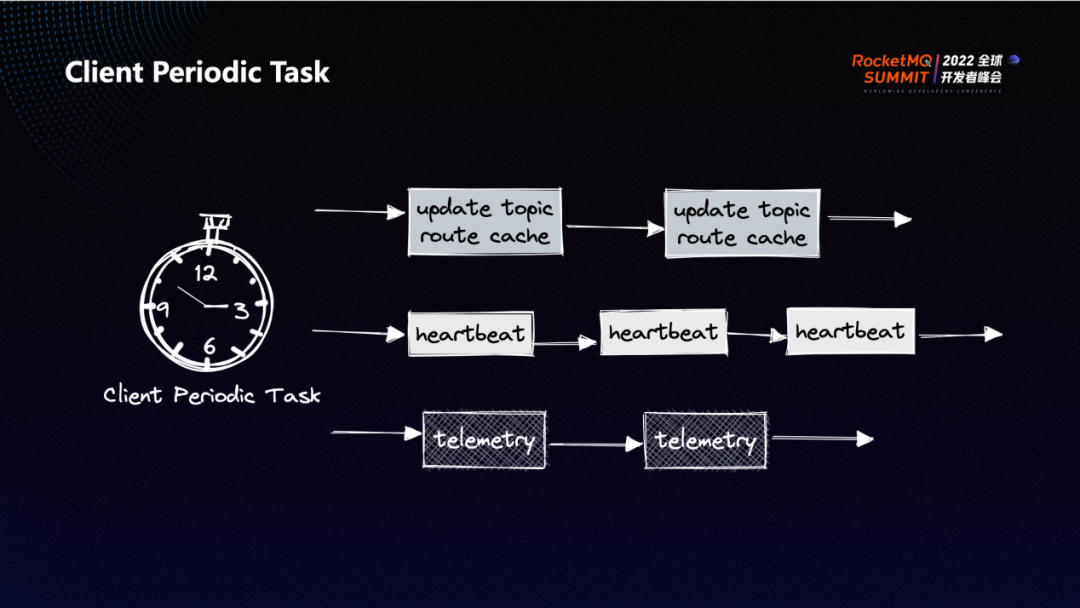

The client also runs some periodic tasks, such as scheduled route updates and heartbeats sent to the server. The client configuration report over Telemetry mentioned above is periodic as well.

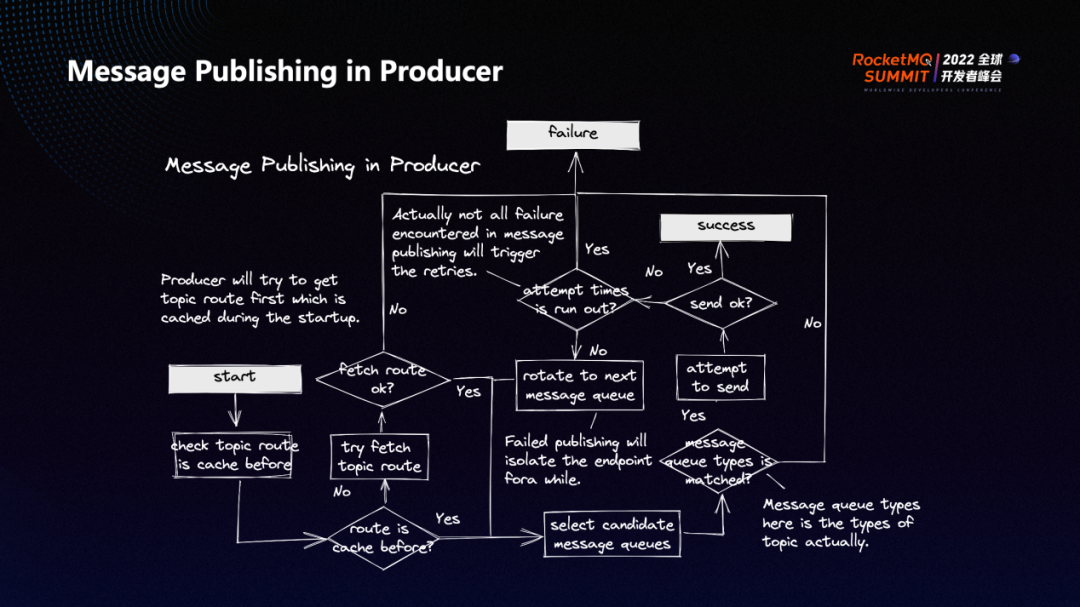

The preceding figure shows the specific workflow of the Producer in RocketMQ 5.0.

When a message is to be sent, the Producer first checks whether the routing information of the corresponding topic has been obtained. If the route was not fetched in advance during startup, the Producer fetches it first and then proceeds. Once the route is available, the Producer selects a queue from the route and checks whether the type of the message to be sent matches the topic type. If so, it sends the message. If the message is sent successfully, the call returns. Otherwise, the Producer checks whether the current retry count exceeds the limit set by the user. If it does, the call returns a failure. Otherwise, the Producer rotates to the next message queue and retries on the new queue, until the retry count reaches the upper limit.
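The retry-and-rotate part of this workflow can be sketched as follows. This is a minimal illustration under stated assumptions, not the SDK's implementation: `SendWithRetrySketch` and its parameters are hypothetical, queues are plain strings, `trySend` stands in for the real RPC, and route fetching and the type check are left out.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.BiPredicate;

/** Hypothetical sketch of "send, and on failure rotate to the next queue". */
final class SendWithRetrySketch {
    /**
     * Tries queues in round-robin order, starting at startIndex.
     * Returns the queue that accepted the message, or empty once maxAttempts sends have failed.
     * trySend is a stand-in for the real RPC: (queue, message) -> success.
     */
    static Optional<String> send(List<String> queues, int startIndex, String message,
                                 int maxAttempts, BiPredicate<String, String> trySend) {
        if (queues.isEmpty()) {
            throw new IllegalArgumentException("route contains no queues");
        }
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            // Rotate to the next queue on every attempt.
            String queue = queues.get(Math.floorMod(startIndex + attempt, queues.size()));
            if (trySend.test(queue, message)) {
                return Optional.of(queue);
            }
        }
        return Optional.empty(); // attempt limit reached: report failure to the caller
    }
}
```

Rotating instead of retrying the same queue means that a single unavailable queue does not consume the whole attempt budget.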

Another big change compared with the old client is that both the underlying RPC interaction and the upper-layer business logic of the new client are implemented asynchronously. The Producer provides a synchronous sending interface and an asynchronous sending interface, but the synchronous method is itself built on the asynchronous one, so the whole logic is uniform and clean.
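One common way to build a synchronous method on top of an asynchronous one in Java is to wait on a `CompletableFuture`. The sketch below shows that idea only; `SyncOverAsyncSketch`, `sendAsync`, and the fake message ID are hypothetical and do not reflect the SDK's actual classes or signatures.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.TimeUnit;

/** Hypothetical sketch: the synchronous send is a thin wrapper around the asynchronous one. */
final class SyncOverAsyncSketch {
    /** Stand-in for the asynchronous send: completes with a fake message ID on another thread. */
    static CompletableFuture<String> sendAsync(String body) {
        return CompletableFuture.supplyAsync(() -> "id-" + body.hashCode());
    }

    /** Waits for the asynchronous send to finish; there is no separate synchronous send logic. */
    static String send(String body, long timeoutMillis) {
        return sendAsync(body)
                .orTimeout(timeoutMillis, TimeUnit.MILLISECONDS)
                .join();
    }
}
```

Because both entry points share one code path, retries, timeouts, and error handling only need to be implemented once.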

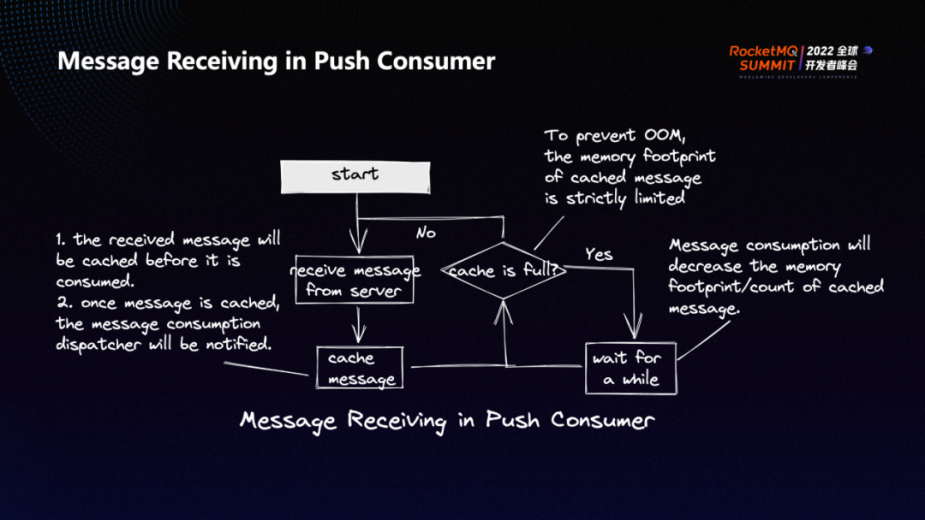

Push Consumer is divided into two parts: message receiving and consumption.

The message receiving process is listed below:

The client continuously pulls messages from the server and caches them locally, and Push Consumer then consumes messages from this cache. Before each pull, Push Consumer therefore checks whether the local cache is full. If it is, Push Consumer waits for a while and checks again, repeating until consumption has freed space in the cache, and only then pulls more messages. The size of the cached messages is also strictly limited to avoid memory problems.
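A bounded cache of this kind can be sketched as below. `BoundedMessageCache` is a hypothetical class, and limiting by both message count and total bytes is an assumption made for illustration; the receive loop would call `offer` and back off whenever it returns false.

```java
import java.util.ArrayDeque;
import java.util.Queue;

/** Hypothetical local cache with a count limit and a byte limit. */
final class BoundedMessageCache {
    private final Queue<byte[]> messages = new ArrayDeque<>();
    private final int maxCount;
    private final long maxBytes;
    private long cachedBytes;

    BoundedMessageCache(int maxCount, long maxBytes) {
        this.maxCount = maxCount;
        this.maxBytes = maxBytes;
    }

    /** The receive loop checks this before pulling more messages from the server. */
    synchronized boolean isFull() {
        return messages.size() >= maxCount || cachedBytes >= maxBytes;
    }

    /** Caches the message only if there is room; on false the caller waits and checks again. */
    synchronized boolean offer(byte[] body) {
        if (isFull()) {
            return false;
        }
        messages.add(body);
        cachedBytes += body.length;
        return true;
    }

    /** Called by the consuming side; removing a message frees room for the next pull. */
    synchronized byte[] poll() {
        byte[] body = messages.poll();
        if (body != null) {
            cachedBytes -= body.length;
        }
        return body;
    }
}
```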

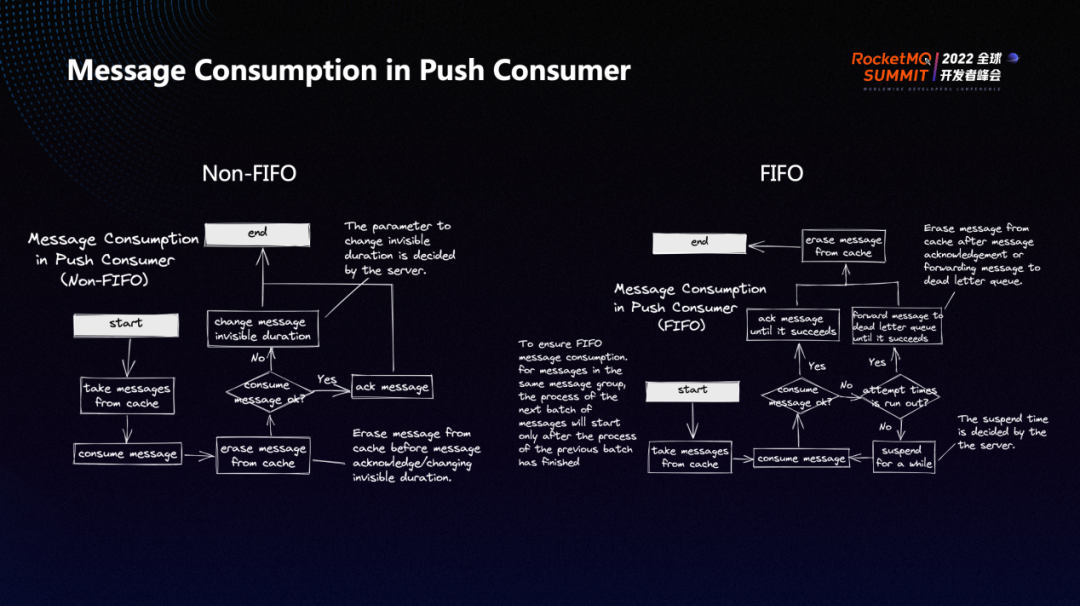

The message consumption process is divided into two types: FIFO consumption and Non-FIFO consumption.

Non-FIFO consumption refers to concurrent consumption. The consumer first obtains the message from the cache, attempts to consume it, and removes it from the cache after consumption. If the message is consumed successfully, it is acknowledged, and the consumption process ends. If the message fails to be consumed, the consumer changes the message's invisible duration, that is, the time at which the message becomes visible again.

FIFO means that a message in a group can only be consumed after the previous message in the same group has been consumed and acknowledged. The consumption process is similar to Non-FIFO consumption. First, the consumer takes the message from the cache and tries to consume it. If the consumption succeeds, the consumer acknowledges the message, and after the acknowledgment succeeds, it removes the message from the cache. If the consumption fails, however, the consumer pauses for a while and then tries to consume the message again. Each time, it checks whether the allowed number of attempts has been used up. If so, it forwards the message to the dead-letter queue.
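The FIFO loop described above can be sketched roughly as follows. Everything here is hypothetical: `FifoConsumeSketch` is not an SDK class, messages are plain strings, acknowledgment and the dead-letter queue are modeled as in-memory lists, and the listener is a simple predicate that returns true on success.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

/** Hypothetical sketch of in-order consumption with bounded retries. */
final class FifoConsumeSketch {
    final List<String> acked = new ArrayList<>();
    final List<String> deadLetters = new ArrayList<>();

    /**
     * Consumes messages strictly in order. A failed message is retried up to maxAttempts
     * times; the next message is not touched until the current one is acked or dead-lettered.
     */
    void consumeInOrder(List<String> messages, Predicate<String> listener,
                        int maxAttempts, long retryDelayMillis) {
        for (String message : messages) {
            boolean consumed = false;
            for (int attempt = 1; attempt <= maxAttempts && !consumed; attempt++) {
                consumed = listener.test(message);
                if (!consumed && attempt < maxAttempts) {
                    pause(retryDelayMillis); // suspend for a while before the next attempt
                }
            }
            if (consumed) {
                acked.add(message);       // stand-in for acknowledging to the server
            } else {
                deadLetters.add(message); // attempts exhausted: forward to the dead-letter queue
            }
        }
    }

    private static void pause(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
        }
    }
}
```

The key property is that a failing message blocks everything behind it until it is either consumed or dead-lettered, which is what preserves the order.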

Compared with Non-FIFO consumption, FIFO consumption is more complicated because it requires the previous message to be successfully consumed before the subsequent message can be consumed. The consumption logic of FIFO consumption is isolated by message group. When a message is sent, its message group is hashed to select a queue, so all messages of the same message group end up in the same queue. The FIFO consumption mode essentially guarantees the order of consumption within a queue.
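The mapping from message group to queue can be illustrated with a simple modulo hash. The hash function the SDK actually uses is not stated here, so `String.hashCode()` and the `GroupToQueueSketch` class are assumptions chosen only to show the idea.

```java
/** Hypothetical sketch: map a message group to a queue index by hashing. */
final class GroupToQueueSketch {
    /** The same group always yields the same index, so its messages stay in one queue. */
    static int queueIndex(String messageGroup, int queueCount) {
        // floorMod keeps the result in [0, queueCount) even for negative hash codes.
        return Math.floorMod(messageGroup.hashCode(), queueCount);
    }
}
```

With four queues, the groups `"a"` and `"e"` both map to index 1 under this hash, which shows how different message groups can share a queue.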

In addition, since ordered messages of different message groups may be mapped to the same queue, consumption of one message group can be blocked by another. Therefore, virtual queues will be implemented on the server in the future, allowing different message groups to be mapped to different virtual queues of the client. This ensures that message groups do not block each other, thus accelerating message consumption.

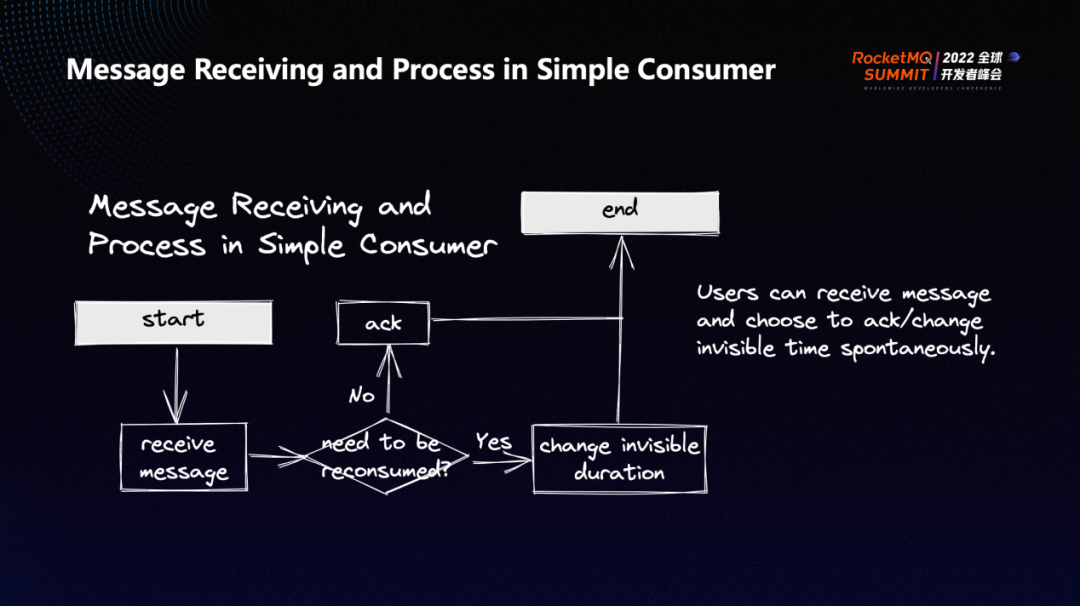

With Simple Consumer, users actively control the process of receiving and acknowledging messages. For example, after receiving a message, users can decide, based on their business needs, whether to consume it after a delay or to stop receiving it altogether. After the consumption succeeds, the message is acknowledged. If the consumption fails, users can change the message's invisible duration to determine when the message becomes visible again. The whole process is controlled by the user.
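The receive, acknowledge, and change-invisible-duration cycle can be modeled with a small in-memory queue. This is a sketch of the semantics only: `InvisibleQueueSketch` is hypothetical, time is passed in explicitly as milliseconds, and the method names are chosen for illustration rather than taken from the SDK.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;

/** Hypothetical in-memory model of invisible-duration semantics. */
final class InvisibleQueueSketch {
    // message -> earliest time (ms) at which it may be delivered again
    private final Map<String, Long> visibleAt = new LinkedHashMap<>();

    void put(String message, long nowMillis) {
        visibleAt.put(message, nowMillis);
    }

    /** Returns the first visible message and hides it for invisibleMillis. */
    Optional<String> receive(long nowMillis, long invisibleMillis) {
        for (Map.Entry<String, Long> entry : visibleAt.entrySet()) {
            if (entry.getValue() <= nowMillis) {
                entry.setValue(nowMillis + invisibleMillis);
                return Optional.of(entry.getKey());
            }
        }
        return Optional.empty();
    }

    /** Successful consumption: the message is removed and never delivered again. */
    void ack(String message) {
        visibleAt.remove(message);
    }

    /** Failed consumption: the user decides when the message becomes visible again. */
    void changeInvisibleDuration(String message, long nowMillis, long invisibleMillis) {
        visibleAt.computeIfPresent(message, (key, old) -> nowMillis + invisibleMillis);
    }
}
```

A message received at time 0 with a 30-second invisible duration is hidden from other receive calls; shortening the duration to 5 seconds at time 1000 makes it visible again at time 6000.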

For historical reasons, the old RocketMQ client is programmed directly against logback rather than SLF4J. The purpose of this is to obtain logs conveniently and quickly, without requiring users to configure anything manually. The old client contains a special logging module that handles logging. If a user's application uses logback and the RocketMQ SDK also used logback directly, various conflicts would occur, so this logging module is used to ensure isolation.

However, the implementation of the logging module is not simple, and it brings certain maintenance costs. Therefore, we shade logback to achieve the isolation mentioned above. A shaded logback avoids conflicts between the user's logback and RocketMQ's logback while remaining easy to maintain. It will be much easier to make changes to logging in the future.

Specifically, the user's logback uses the configuration file logback.xml, while the RocketMQ 5.0 client, with the shaded logback, uses the configuration file rocketmq.logback.xml, so the configuration is isolated. In addition, some environment variables and system properties used by the native logback are renamed during shading, which ensures complete isolation of the two.

In addition, with the shaded logback in place, all logging code in the RocketMQ 5.0 client is written against SLF4J. This way, if we want to give users full control over logging in the future, it is very convenient to provide an SDK without logback.

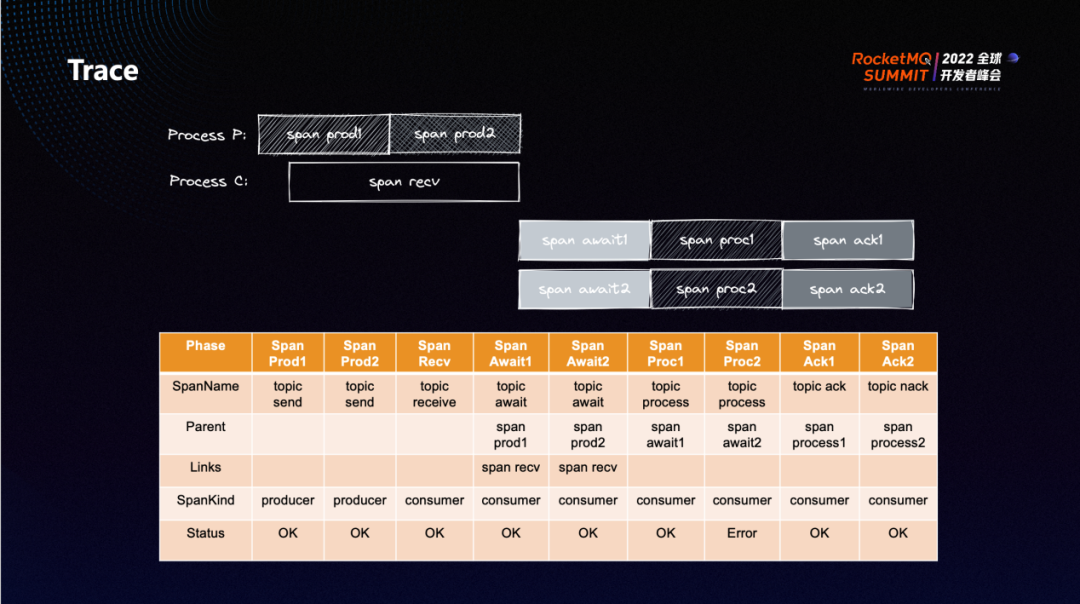

The message traces of RocketMQ 5.0 are defined and implemented based on the OpenTelemetry model. Each step of sending or receiving a message is defined as an individual span. This set of span specifications follows the Messaging definition in OpenTelemetry. In the figure, Process P indicates the Producer, and Process C indicates the Consumer. The full lifecycle of a message (from sending to receiving to consumption) can be visualized as a series of such spans.

For example, Push Consumer has a receive span that represents the process of obtaining a message from the server. The period from receiving a message to the start of its processing is represented by the await span. The processing of a message corresponds to the process span in the figure. After the message consumption is completed, a separate span describes the reporting of the processing result to the server.

We can associate all these spans through parent and link, so all spans in the full lifecycle of a message can be obtained through any span of the message.

In addition, users are allowed to set a span context to associate the message trace with their own business traces and embed the message trace of RocketMQ 5.0 into their end-to-end observability system.

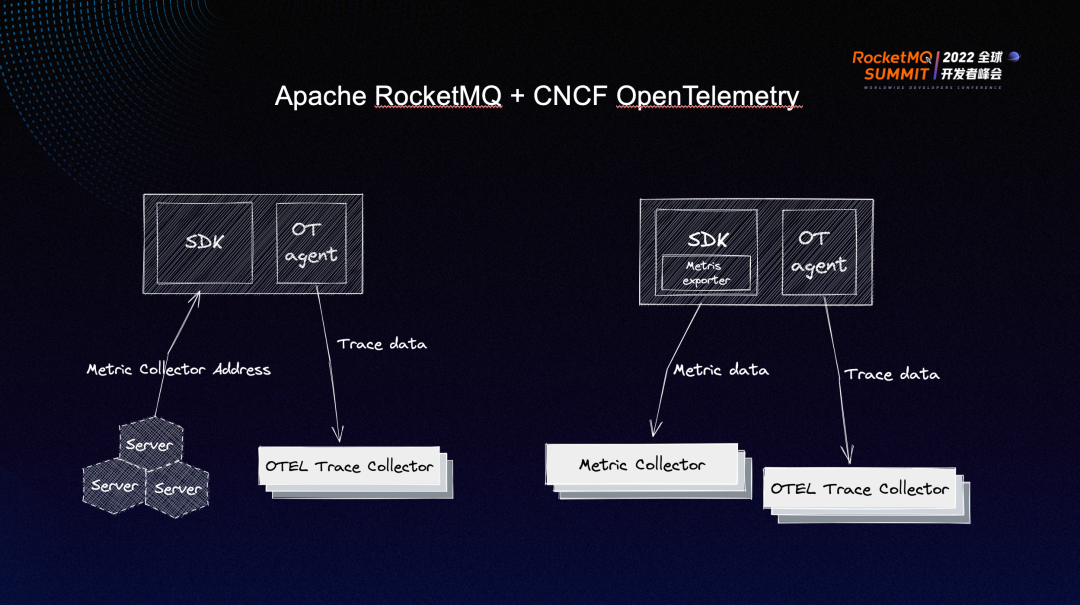

Tracing costs are relatively high because a message may go through many steps from sending to receiving, each accompanied by spans, which leads to relatively high storage and query costs for tracing data. We want to diagnose the health status of the entire SDK without collecting so much tracing information that costs increase. In this case, providing metrics data can meet our needs.

We have added many metrics to the SDK, including (but not limited to) the message sending latency in the Producer, the consumption duration of messages, and the number of cached messages in the Push Consumer. These help users and operations staff find exceptions faster.

The SDK metrics in 5.0 are also implemented based on OpenTelemetry. Let’s take a Java program as an example. OpenTelemetry provides an agent for Java. The agent collects tracing and metrics data from the SDK at runtime through instrumentation points and reports them to the corresponding collector. This non-intrusive way of collecting data through the agent is called automatic instrumentation, while implementing the collection by hand through instrumentation points in the code is called manual instrumentation. For metrics, we still use manual instrumentation to collect and report data. The server informs the client of the corresponding collector address, and the client then uploads the metrics data to that collector.

Join the Apache RocketMQ community: https://github.com/apache/rocketmq
