Over the past two years, the automation of e-commerce customer service, ticket handling, and logistics has advanced rapidly. An intelligent assistant can process hundreds of refund requests, logistics queries, and bill-tracking requests at the same time. It converses with users while invoking APIs, recording logs, and aggregating reports in the backend. These automated interactions carry a large amount of sensitive data:

● User queries may contain phone numbers, order IDs, and shipping addresses.

● Backend service logs often contain bank card numbers, API IP addresses, and account IDs.

● The ticket forwarding process may even include internal tokens and usernames.

If this information is forwarded, stored, or exported within the system without being processed, it not only violates the data minimization principle but also risks accidental leakage when logs are debugged, shared, or exported. However, in real-world scenarios, we cannot simply "log less" or "remove fields". Logs are tools for O&M troubleshooting, the basis for operational analysis, and the evidence for security audits.

This article uses an e-commerce Copilot demo to show how the data masking function of Alibaba Cloud Simple Log Service (SLS) protects the privacy and security of sensitive data in the system without changing the business logic.

flowchart LR

subgraph UserFlow[User Interaction Layer]

U[User Question]

D[Dify Platform Orchestration <br/>(Intent Recognition / Node Scheduling)]

end

subgraph Backend[Business Service Layer]

B1[Refund Service]

B2[Order Service]

B3[Logistics Service]

B4[Product Consultation]

end

subgraph LogPipeline[Log Link]

L1[LoongCollector<br/> Log Collection]

L2[SLS Logstore<br/>Data Masking]

end

subgraph Teams[Usage Layer]

O1[O&M Analysis]

O2[Operations Analysis]

O3[Security Audit]

end

U --> D --> B1 & B2 & B3 & B4

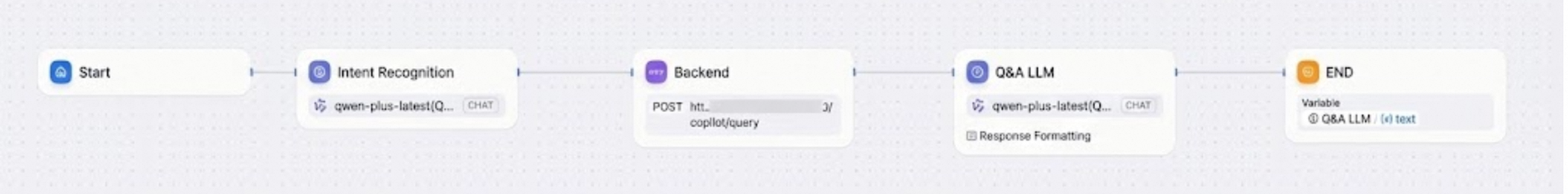

B1 & B2 & B3 & B4 --> L1 --> L2 --> O1 & O2 & O3The upper-layer orchestration of the system is handled by the Dify platform. Dify is responsible for coordinating user input, intent recognition, invoking backend services, and generating replies. It is the central hub of the entire Copilot system.

However, we found that Dify's native observability is not granular enough. Specifically:

● The platform mainly provides node-level execution logs.

● It offers limited visibility into downstream API calls, exception stacks, and latency distributions.

● When a failure occurs, Dify's built-in logs are often insufficient to support troubleshooting and auditing.

Therefore, we chose to collect service logs on the Dify service deployment side by using LoongCollector, which uniformly pushes the logs to an SLS logstore.

● Data Stream: The collection source is unified. It simultaneously collects Dify orchestration logs, backend service logs, and system standard outputs, and outputs them in a fixed log format. The complete data flow is as follows:

sequenceDiagram

participant U as User

participant C as Copilot (Built on the Dify platform)

participant B as E-commerce backend

participant L as LoongCollector

participant S as SLS Logstore

participant M as Business side

U->>C: Submits a query request (Refund / logistics / Product)

C->>B: Triggers an API call

B-->>C: Returns an API response

C-->>U: Generates an intelligent reply

L->>B: Collects logs

L->>S: Writes logs

S->>S: The write-side data processor masks data

M-->>S: O&M/security/operations query

● Write-side masking: You can configure the Structured Process Language (SPL) mask function through a write processor to ensure that sensitive fields are masked when they are stored.

● Usage layer: O&M, operations, or security personnel can perform relevant business analysis based on the masked logstore data.
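To make the ordering concrete, the following is a minimal local sketch of the write-side idea, not of SLS itself: a processor runs on every record before it is stored, so every later query only ever sees masked values. The class `InMemoryLogstore`, the function `mask_phone`, and the field names are hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]
Processor = Callable[[Record], Record]


class InMemoryLogstore:
    """Toy stand-in for a logstore with a write processor (illustration only)."""

    def __init__(self, write_processor: Processor) -> None:
        self._write_processor = write_processor
        self._rows: List[Record] = []

    def write(self, record: Record) -> None:
        # The processor runs before storage, so plaintext is never persisted.
        self._rows.append(self._write_processor(dict(record)))

    def query(self, **filters: str) -> List[Record]:
        return [r for r in self._rows
                if all(r.get(k) == v for k, v in filters.items())]


def mask_phone(record: Record) -> Record:
    """Keep the first three and last four characters of the phone field."""
    phone = record.get("phone")
    if phone and len(phone) > 7:
        record["phone"] = phone[:3] + "*" * (len(phone) - 7) + phone[-4:]
    return record


store = InMemoryLogstore(write_processor=mask_phone)
store.write({"trace_id": "t-001", "phone": "13800005678"})
print(store.query(trace_id="t-001"))
```

Because masking happens in `write`, no reader role needs special handling: O&M, operations, and security all query the same masked rows.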

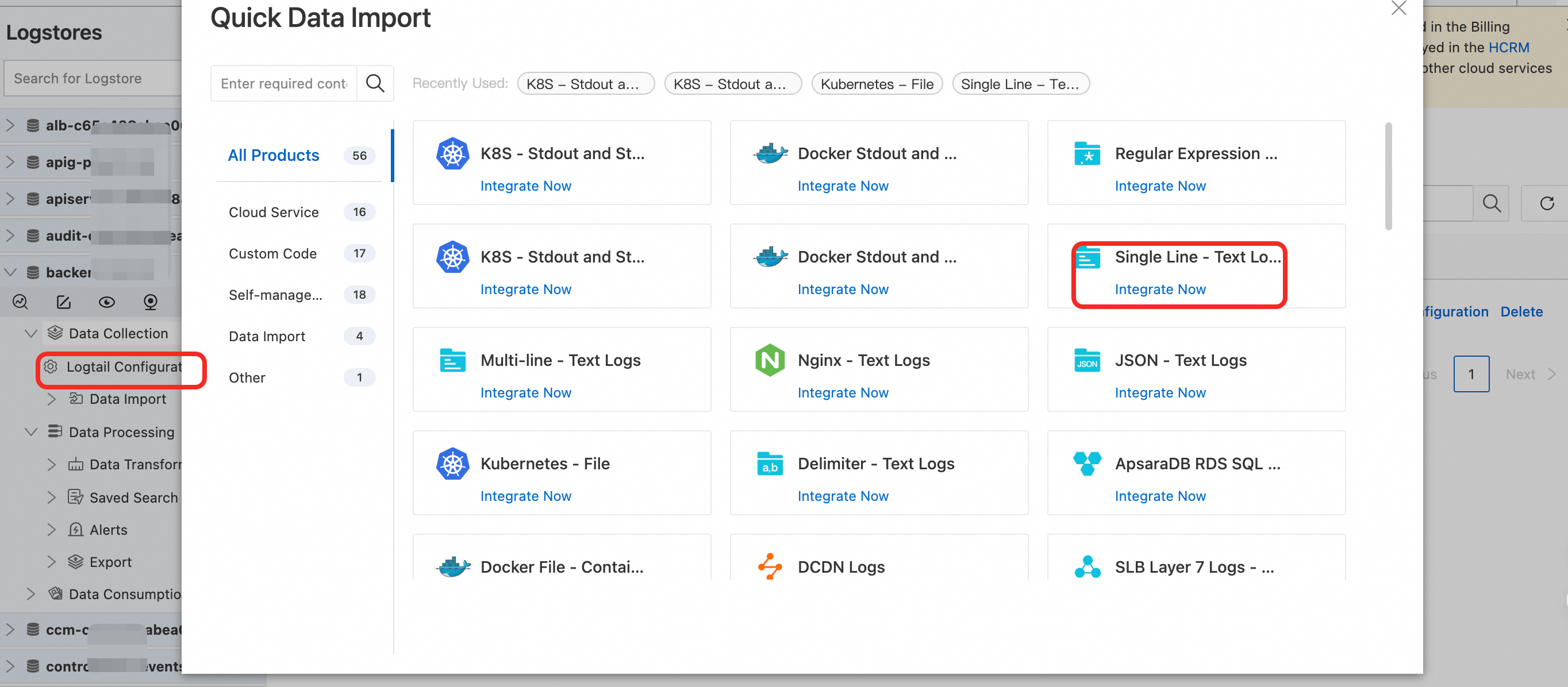

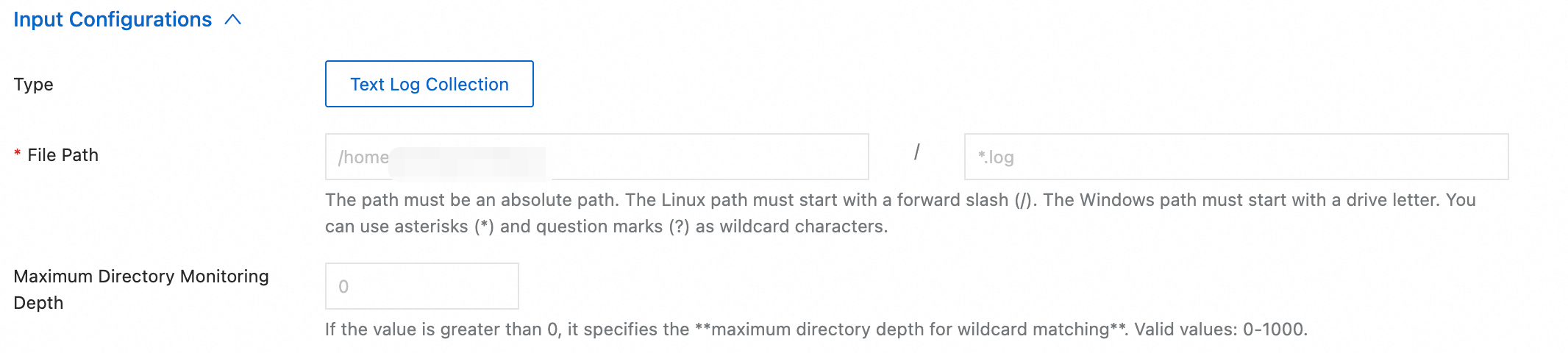

As a lightweight log collection tool, LoongCollector supports collecting data from different data sources such as host text logs, Kubernetes cluster container logs, and HTTP data. Currently, the Copilot demo logs are printed in JSON format in the host log folder. In this case, you only need to connect to the text logs:

Configure the file path of the logs:

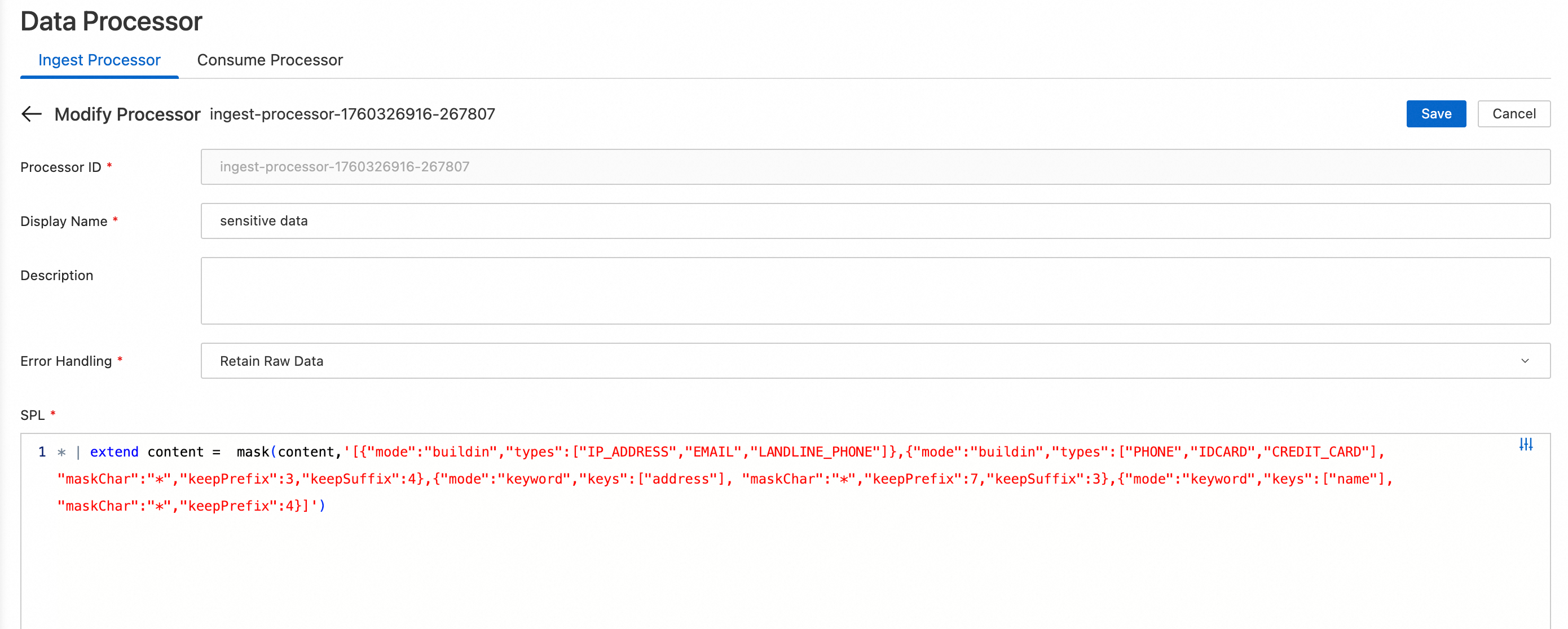
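For reference, a service can produce one JSON object per line with the Python standard library alone. The sketch below is an assumption about what such a logger might look like; the field names (`trace_id`, `phone`, `order_id`) and the service name are hypothetical and are not taken from the demo.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonLineFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "service": record.name,
            "message": record.getMessage(),
        }
        # Fields passed through `extra=` become attributes on the record.
        for key in ("trace_id", "phone", "order_id"):
            if hasattr(record, key):
                payload[key] = getattr(record, key)
        return json.dumps(payload, ensure_ascii=False)


def build_logger(name: str, stream=sys.stdout) -> logging.Logger:
    # In a real deployment the handler would be a logging.FileHandler that
    # writes into the directory watched by the collector; a stream keeps
    # this sketch portable.
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonLineFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


if __name__ == "__main__":
    log = build_logger("refund-service")
    log.info("refund requested", extra={"trace_id": "t-001", "phone": "13800005678"})
```

Note that the service still logs the raw phone number here. That matches the approach in this article: the business code stays unchanged and masking happens later, on the write side.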

The SLS mask function supports two patterns for detecting and masking sensitive information: built-in matching and keyword matching.

● Built-in matching (buildin): This pattern works out of the box and detects six common types of sensitive information: phone numbers, ID card numbers, email addresses, IP addresses, landline numbers, and bank card numbers.

● Keyword matching (keyword): It intelligently detects sensitive information in any text that matches common key-value (KV) pair formats such as "key":"value", 'key':'value', or key=value.
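To illustrate what keyword matching does to a log line, here is a small local approximation in Python. It is not the SLS implementation and does not use the SLS API; it exists only to show the three key-value formats listed above. It is built on regular expressions purely for demonstration, which is exactly the work that the mask function saves you from. The names `keep_ends` and `mask_keyword` are hypothetical.

```python
import re


def keep_ends(value: str, prefix: int, suffix: int, mask_char: str = "*") -> str:
    """Mask the middle of `value`, keeping `prefix` leading and `suffix` trailing characters."""
    if len(value) <= prefix + suffix:
        return mask_char * len(value)
    hidden = len(value) - prefix - suffix
    return value[:prefix] + mask_char * hidden + value[len(value) - suffix:]


def mask_keyword(text: str, key: str, prefix: int = 0, suffix: int = 0) -> str:
    """Mask the value that follows `key` in "key":"value", 'key':'value', or key=value form."""
    k = re.escape(key)
    patterns = [
        rf'("{k}"\s*:\s*")([^"]*)',              # "key":"value"
        rf"('{k}'\s*:\s*')([^']*)",              # 'key':'value'
        rf"((?<!\w){k}=)([^\s,;&\x22\x27]+)",    # key=value (\x22 and \x27 are the quote characters)
    ]
    for pattern in patterns:
        text = re.sub(
            pattern,
            lambda m: m.group(1) + keep_ends(m.group(2), prefix, suffix),
            text,
        )
    return text


print(mask_keyword('{"phone":"13800005678"}', "phone", prefix=3, suffix=4))
print(mask_keyword("user=alice&token=abc123", "token"))
```

The first call keeps the first three and last four digits of the phone number; the second masks the whole token because no prefix or suffix is retained.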

For the e-commerce Copilot logs in this article, you can create a data processor in the corresponding project, using the SPL configuration shown in the following figure, to detect and mask sensitive content such as IP addresses and email addresses. For phone numbers, ID card numbers, credit card numbers, names, and addresses, you can customize how many prefix and suffix characters are retained. For more information about the configuration, see No Complex Regular Expressions Required: The New SLS Data Masking Function Makes Privacy Protection Simpler and More Efficient.

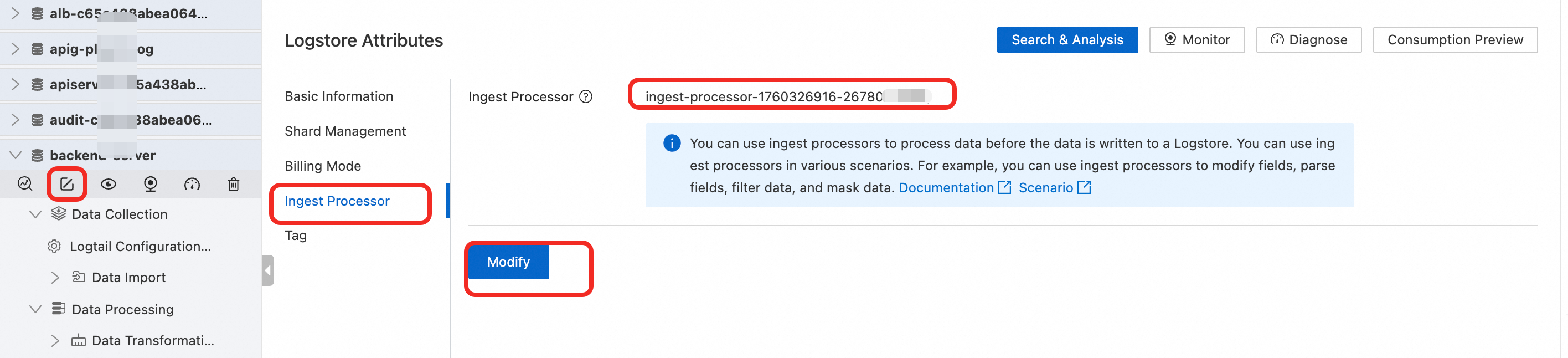

● Configure a write processor to activate the job: Select the logstore to which you want to apply the job, and apply the newly created processing job on the Write Processor tab.

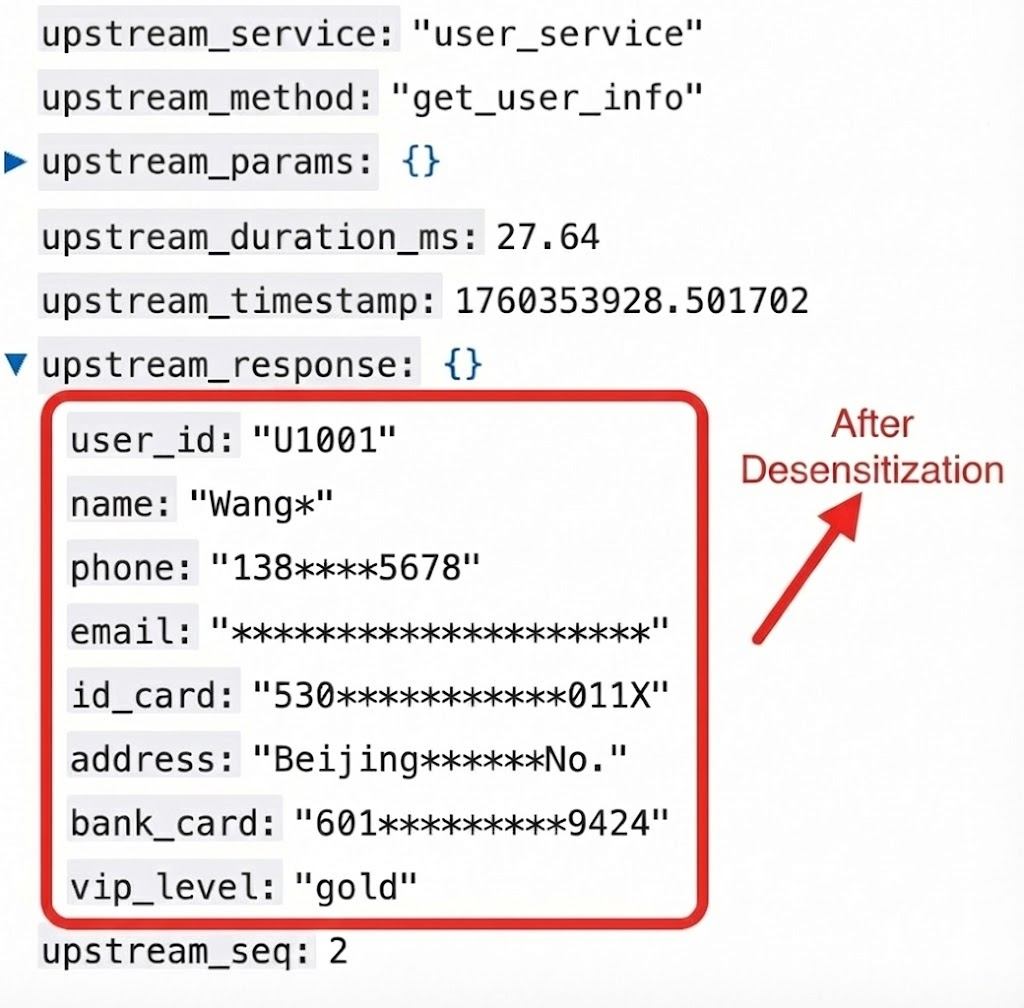

A comparison with the data before masking shows the following:

● On-demand retention for both security and availability: For different sensitive fields, you can customize the retention of prefix and suffix characters. For phone numbers, the first three and last four digits are retained. This not only protects user privacy but also facilitates problem troubleshooting and user identity verification for O&M engineers. It ensures security while maintaining data availability.

● Minimal configuration without regular expressions: The keyword matching pattern can accurately mask values even in nested JSON structures. You only need to configure the innermost key, so you do not have to write complex regular expressions to cover the various key-value formats, which greatly reduces the configuration difficulty.

● Precise masking for Chinese content: Names and addresses are precisely masked according to the configured rules. This prevents masking failures caused by encoding issues.
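The behavior described above, masking by the innermost key and handling Chinese text by character rather than by byte, can be approximated locally as follows. This is a sketch under assumptions, not the SLS implementation; the helper names and the sample record are hypothetical.

```python
from typing import Any, Dict, Tuple


def keep_ends(value: str, prefix: int, suffix: int, mask_char: str = "*") -> str:
    """Mask the middle of `value`; slicing works on characters, not bytes."""
    if len(value) <= prefix + suffix:
        return mask_char * len(value)
    hidden = len(value) - prefix - suffix
    return value[:prefix] + mask_char * hidden + value[len(value) - suffix:]


def mask_nested(node: Any, rules: Dict[str, Tuple[int, int]]) -> Any:
    """Walk a parsed JSON value and mask string values whose key appears in `rules`.

    `rules` maps an innermost key to (prefix_to_keep, suffix_to_keep).
    """
    if isinstance(node, dict):
        masked = {}
        for key, value in node.items():
            if key in rules and isinstance(value, str):
                prefix, suffix = rules[key]
                masked[key] = keep_ends(value, prefix, suffix)
            else:
                masked[key] = mask_nested(value, rules)
        return masked
    if isinstance(node, list):
        return [mask_nested(item, rules) for item in node]
    return node


record = {
    "trace_id": "t-001",
    "order": {"receiver": {"name": "张三丰", "phone": "13800005678"}},
}
rules = {"name": (1, 0), "phone": (3, 4)}
print(mask_nested(record, rules))
```

Only the keys `name` and `phone` are configured, regardless of how deeply they are nested. Because Python strings are sequences of code points, the three-character name keeps its first character intact instead of being cut in the middle of a multi-byte sequence.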

In addition, compared to using regular expressions for data masking, the mask function has significant performance advantages. It can effectively reduce log processing latency and improve overall performance. The performance advantage is more pronounced in complex scenarios or scenarios with large data volumes.

Data Masking allows the same log to be presented from three "perspectives":

● O&M personnel see the call chain and performance bottlenecks, but they do not see privacy data.

● Operations personnel see trends, efficiency, and user experience, but they do not see information about individual users.

● Security personnel see policy execution and audit trails, and they do not need to worry about omissions.

With this system, data is no longer isolated but becomes a managed asset with clear security boundaries. Data compliance, analysis, and troubleshooting can be performed in parallel.

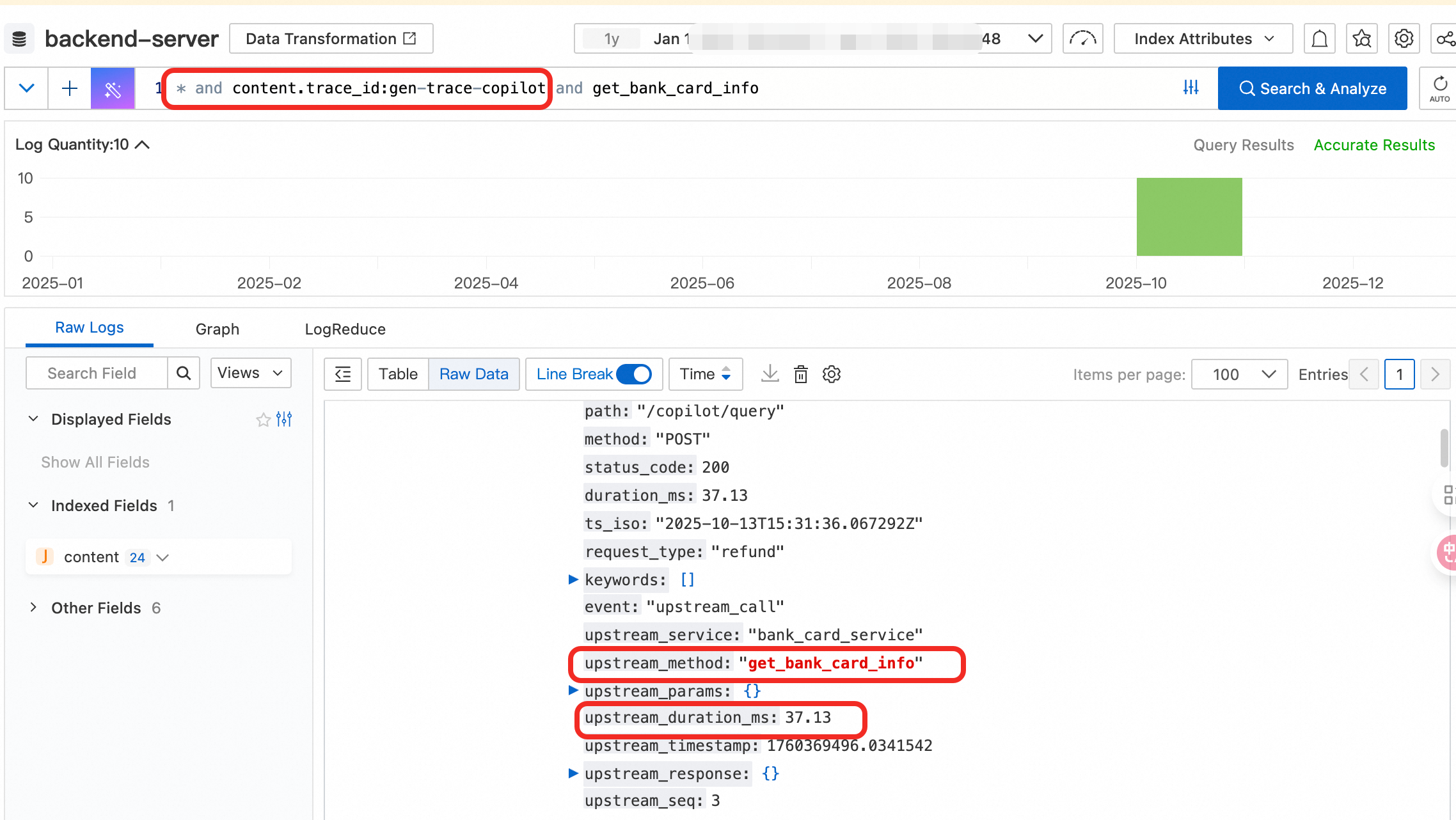

For O&M teams, troubleshooting used to rely on plaintext logs that contained user phone numbers, addresses, and account numbers, which posed a high compliance risk. Masked logs now make this process secure from the source. In troubleshooting scenarios, you can retrieve the entire call chain by searching for the trace_id:

● Starting from intent recognition by Copilot.

● To the order service → refund service → third-party payment gateway.

● And then to the return result and duration.
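As a rough illustration of this lookup, the sketch below reconstructs a call chain from already-masked records by filtering on trace_id and ordering by start time. The field names `service`, `start_ms`, and `duration_ms`, as well as the sample values, are assumptions made for the example and are not the demo's actual log schema.

```python
from typing import Dict, List

Record = Dict[str, object]


def call_chain(records: List[Record], trace_id: str) -> List[Record]:
    """Return the records of one trace, ordered by start time."""
    spans = [r for r in records if r.get("trace_id") == trace_id]
    return sorted(spans, key=lambda r: r["start_ms"])


def summarize(chain: List[Record]) -> List[str]:
    """Render each hop as 'service (duration ms)'."""
    return [f'{r["service"]} ({r["duration_ms"]} ms)' for r in chain]


records = [
    {"trace_id": "t-001", "service": "refund-service", "start_ms": 120, "duration_ms": 310},
    {"trace_id": "t-001", "service": "copilot-intent", "start_ms": 0, "duration_ms": 45},
    {"trace_id": "t-002", "service": "order-service", "start_ms": 10, "duration_ms": 20},
    {"trace_id": "t-001", "service": "order-service", "start_ms": 50, "duration_ms": 60},
    {"trace_id": "t-001", "service": "payment-gateway", "start_ms": 140, "duration_ms": 280},
]

chain = call_chain(records, "t-001")
print(" -> ".join(summarize(chain)))
slowest = max(chain, key=lambda r: r["duration_ms"])
print("slowest hop:", slowest["service"])
```

None of the fields used here is sensitive, which is why the call chain and the bottleneck remain fully visible after masking.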

When user identity needs to be verified, the logs only retain masked information such as bank card numbers and phone numbers. This information is sufficient to correlate with user records on the business side without exposing the raw data. Even during cross-team investigations, problems can be located directly in the masked logs, which avoids the risk of data leakage.
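One way to picture this correlation is a position-by-position comparison that runs on the business side, where the raw records already live. The sketch below is illustrative only; `matches_masked`, `candidates`, and the user table are hypothetical.

```python
from typing import Dict, List


def matches_masked(masked: str, raw: str, mask_char: str = "*") -> bool:
    """True if `raw` agrees with `masked` at every position that is not masked."""
    if len(masked) != len(raw):
        return False
    return all(m == mask_char or m == r for m, r in zip(masked, raw))


def candidates(masked_phone: str, users: List[Dict[str, str]]) -> List[str]:
    """Return the IDs of users whose phone number is compatible with the masked value."""
    return [u["user_id"] for u in users if matches_masked(masked_phone, u["phone"])]


users = [
    {"user_id": "u1", "phone": "13800005678"},
    {"user_id": "u2", "phone": "13900001234"},
]
print(candidates("138****5678", users))
```

A masked value can be compatible with more than one user, so in practice the match would typically be narrowed with another field from the same log record, such as the order ID.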

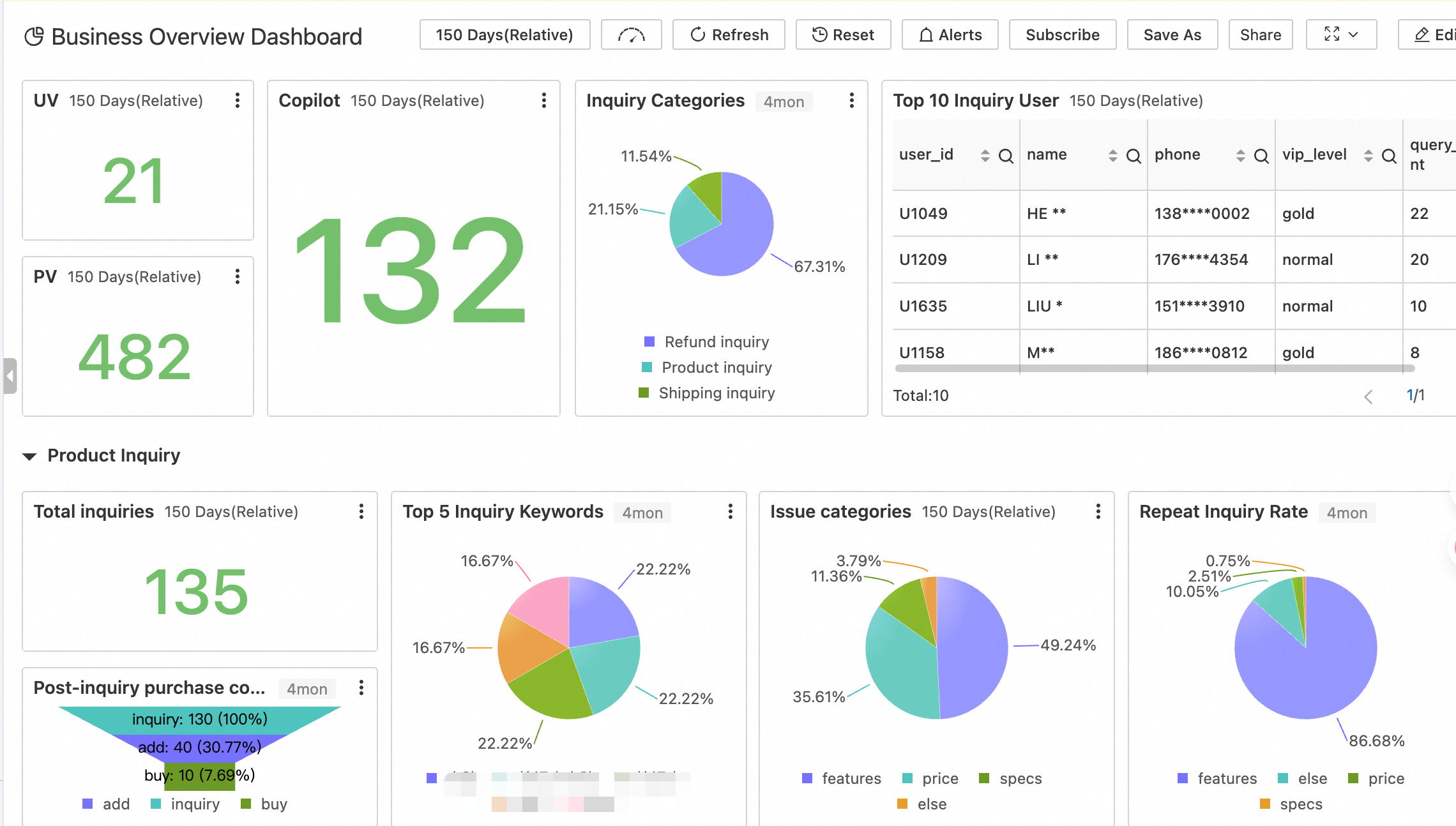

The value of reports lies in discovering overall trends, not in examining individual user data. In masked operations reports, user information is anonymized. Only key business metrics are retained to help the team discover insights from the data.

From this report, the operations team can quickly understand the following:

● Overall overview: Key metrics such as UV and PV, the number of interactions with Copilot, and the total number of inquiries provide a quick overview of operations.

● Inquiry categorization: The proportions of inquiries about refunds, products, and logistics provide a clear understanding of user focus points.

● Question categorization: Understand the focus of user questions, such as questions about features, prices, and specifications.

● Repeat inquiry rate: Measure service quality and quickly locate areas that require optimization.

● User behavior: The post-inquiry purchase conversion funnel and popular inquiry keywords help optimize product and marketing strategies.

● Key users: Top 10 inquiring users. Although user information is masked, you can develop differentiated service policies based on VIP levels and inquiry counts.
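Several of these metrics need nothing more than counting over masked records. The sketch below assumes hypothetical fields `user` (an already-masked identifier) and `category`, and it uses one plausible definition of the repeat inquiry rate: the share of users with more than one inquiry. The definition used in the actual report may differ.

```python
from collections import Counter
from typing import Dict, List, Tuple

Record = Dict[str, str]


def category_share(records: List[Record]) -> Dict[str, float]:
    """Proportion of inquiries per category."""
    counts = Counter(r["category"] for r in records)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


def repeat_inquiry_rate(records: List[Record]) -> float:
    """Share of users who asked more than once (assumed definition)."""
    per_user = Counter(r["user"] for r in records)
    if not per_user:
        return 0.0
    repeaters = sum(1 for n in per_user.values() if n > 1)
    return repeaters / len(per_user)


def top_users(records: List[Record], n: int = 10) -> List[Tuple[str, int]]:
    """The n masked user identifiers with the most inquiries."""
    return Counter(r["user"] for r in records).most_common(n)


records = [
    {"user": "138****5678", "category": "refund"},
    {"user": "138****5678", "category": "logistics"},
    {"user": "139****1234", "category": "refund"},
    {"user": "150****0001", "category": "product"},
]
print(category_share(records))
print(repeat_inquiry_rate(records))
print(top_users(records, n=1))
```

One caveat of grouping by a masked identifier is that two different users can share the same masked value. If exact per-user counts matter, a non-sensitive identifier that survives masking would be a better grouping key.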

All personal information in the report, such as phone numbers and names, is masked so that it cannot be reverse-engineered to identify specific users, which protects user privacy.

For the security and compliance team, the biggest risk associated with logs is plaintext data that already exists in storage. The masking solution described in this article masks data upfront: data is processed before it is written. This removes the risk that sensitive fields are missed by masking applied later or that plaintext data is exported. SLS also provides comprehensive compliance support capabilities:

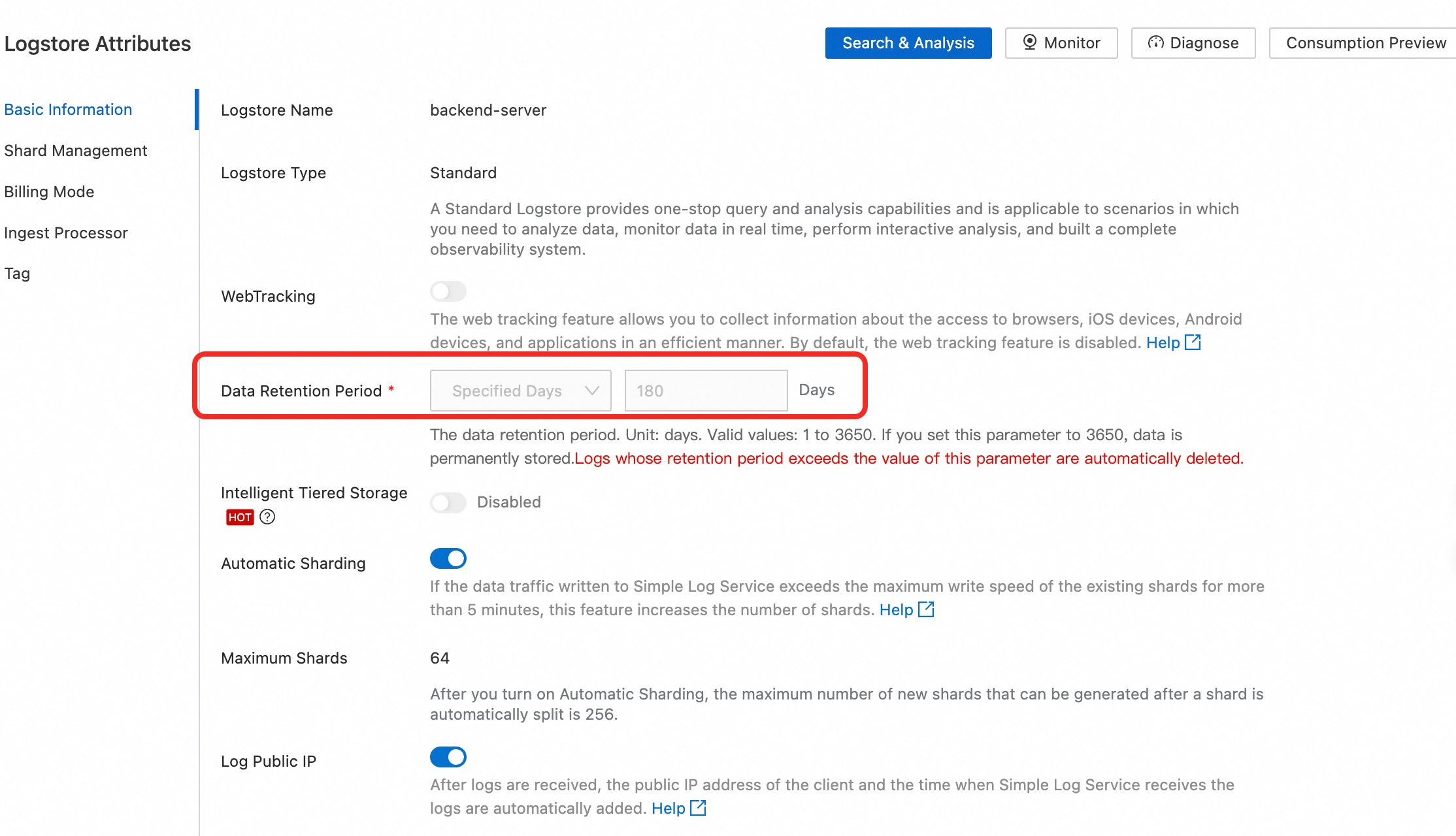

● Data storage: You can customize the log storage duration. For network audit-related logs, you can set the storage duration to more than 180 days to meet security audit requirements.

● Data operation audit: Every use of logs is a user-level operation. Whether it is a console operation or an OpenAPI call on the control plane, or business log usage on the data plane, anyone who views, analyzes, or exports logs can see only the content that they are authorized to view. In addition, CloudLens for SLS provides asset usage monitoring for projects and logstores.

When log collection by LoongCollector and data masking in logstores form a closed loop, logs are secured as they are ingested. O&M personnel can perform troubleshooting, operations personnel can perform analysis, and security personnel can perform audits. This is not a one-time fix but a reusable pattern: masking on the write side, storing masked data by default, and providing role-based access. By adopting this pattern as a baseline, enterprises can confidently expand the business coverage of Copilot, enabling them to reap the benefits of AI efficiency while maintaining robust compliance.
