By Wuzhe

In the process of building modern data and business systems, observability has become essential. Simple Log Service (SLS) provides a large-scale, low-cost, and high-performance one-stop platform service for Log/Trace/Metric data, supporting features such as data processing, delivery, analysis, alerting, and visualization. SLS significantly enhances the digital capabilities of enterprises across various scenarios such as research and development, operations and maintenance, business operations, and security.

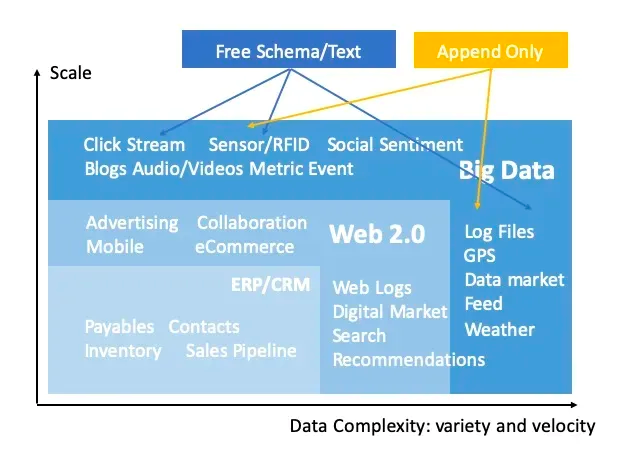

As one of the most basic data types in observability scenarios, log data is inherently unstructured, for several reasons:

• Diversity of sources: There are many types of log data, and it is difficult to have a unified schema for data from different sources.

• Data randomness: For example, anomalous event logs and user behavior logs are often random and unpredictable.

• Business complexity: Different participants have different understandings of data. For example, developers usually print logs in the development process, while operations and data engineers often analyze logs, making it challenging to foresee specific analysis needs during the logging process.

As a result, there is rarely an ideal data model into which log data can be preprocessed. A more common approach is to store the raw data directly, which is known as Schema-on-Read or the Sushi Principle (raw data is better than cooked, since you can cook it in as many different ways as you like). This untidy raw log data poses a challenge for analysts, who often need some familiarity with the data model before they can conduct a more structured analysis.

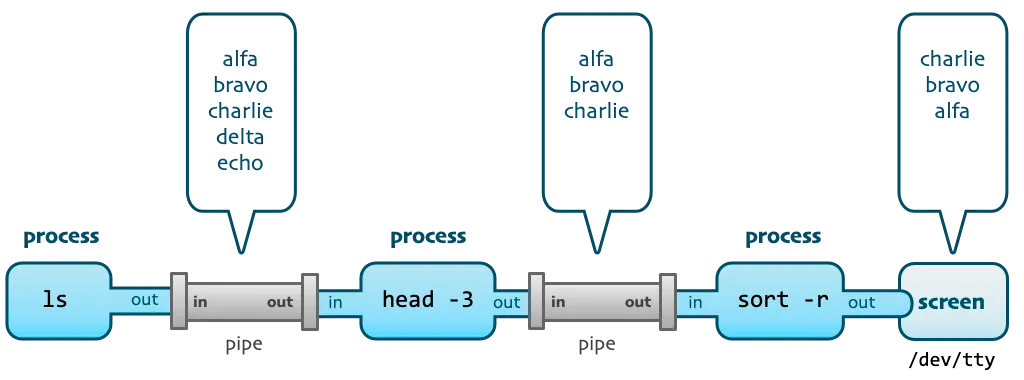

Before log analysis systems and platforms emerged, the traditional way for developers and O&M personnel to analyze logs was to log on to the machine that held the log file, grep the log, and process the output with a series of Unix commands.

For example, to view the source hosts of 404 responses in the access log, you might use the following command:

grep 404 access.log | tail -n 10 | awk '{print $2}' | tr a-z A-Z

This command connects four Unix command-line tools (keyword search, log truncation, field extraction, and case conversion) into a complete processing pipeline through three pipe operators.

Note that we rarely write such a command in one go. Instead, we write one command, press Enter, observe the output, and then append the next command through a pipe and run it again.

This process fully embodies the design philosophy of Unix: combining small and elegant tools into powerful programs through pipelines. From the perspective of log analysis, we can also draw the following lessons:

1) Exploration is interactive and iterative: each step builds on the result of the previous one.

2) Exploration usually runs on a small sample of the data rather than the full dataset.

3) The processing operations performed during exploration affect only the output of the current query and do not change the original data.
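The three observations above can be sketched in Python. This is only a toy model of the Unix pipeline shown earlier: the sample log lines, their layout (source host in the second column), and the helper names are assumptions made for illustration.

```python
from collections import deque

# Hypothetical access-log lines; the second column is assumed to be the source host.
ACCESS_LOG = [
    "2024-01-01T00:00:01 web-a GET /index.html 200",
    "2024-01-01T00:00:02 web-b GET /missing 404",
    "2024-01-01T00:00:03 web-c GET /old-page 404",
    "2024-01-01T00:00:04 web-a GET /about 200",
]

def grep(lines, keyword):
    """Keep lines that contain the keyword (like `grep keyword`)."""
    return (line for line in lines if keyword in line)

def tail(lines, n):
    """Keep only the last n lines (like `tail -n n`)."""
    return iter(deque(lines, maxlen=n))

def field(lines, index):
    """Emit one whitespace-separated column, 1-based (like `awk '{print $index}'`)."""
    return (line.split()[index - 1] for line in lines)

def upper(lines):
    """Upper-case every line (like `tr a-z A-Z`)."""
    return (line.upper() for line in lines)

# Build the pipeline one stage at a time, inspecting the output after each step.
step1 = list(grep(ACCESS_LOG, "404"))   # keyword search
step2 = list(tail(step1, 10))           # work on a small sample
step3 = list(field(step2, 2))           # field extraction
step4 = list(upper(step3))              # case conversion
print(step4)
```

Each stage returns a new sequence, so `ACCESS_LOG` itself is never modified, which mirrors the third observation.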

Interactive exploration is therefore a good way to handle log data. On cloud logging platforms such as SLS, faced with vast amounts of raw log data, we want to query in a way similar to Unix pipelines. Iterative exploration helps uncover patterns in chaotic logs, after which we can carry out purposeful operations such as processing, cleaning, delivery, and SQL aggregation and analysis.

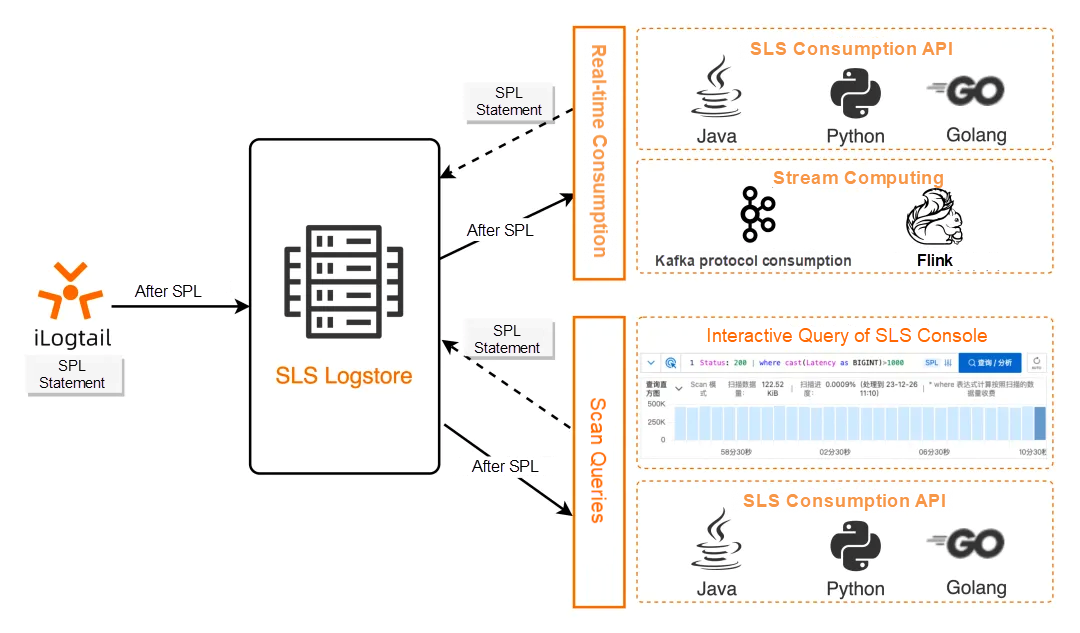

SPL, or SLS Processing Language, is a unified data processing syntax provided by SLS for scenarios requiring data processing such as log querying, streaming consumption, data processing, Logtail collection, and data ingestion. This uniformity enables SPL to achieve a Write Once, Run Anywhere effect throughout the entire lifecycle of log processing. For more information about SPL, see SPL overview [1].

The basic syntax of SPL is as follows:

<data-source> | <spl-expr> ... | <spl-expr> ...

<data-source> indicates the data source. For log query scenarios, it refers to the index query statement.

<spl-expr> is an SPL instruction. Instructions support multiple operations, such as regular expression extraction, field splitting, field projection, and numerical calculation. For more information, see SPL instructions [2].

As you can see from the syntax definition, SPL naturally supports multi-level pipelines. For log query scenarios, you can keep appending SPL instructions after the index query statement by using pipe characters as needed. You can run the query at each step to view the current processing result, which is similar to the Unix pipeline experience. Compared with Unix commands, SPL offers more operators and functions and allows more flexible debugging, exploration, and analysis of logs.
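To make the shape of the syntax concrete, here is a minimal Python sketch that splits a query into its data source and instructions and lists the query as it would look after each step. The helper names are hypothetical, the splitter only understands single-quoted strings without escapes, and it is not how SLS parses queries.

```python
def split_pipeline(query):
    """Split a query on pipe characters that are outside single-quoted strings."""
    parts, current, in_quote = [], [], False
    for ch in query:
        if ch == "'":
            in_quote = not in_quote
        if ch == "|" and not in_quote:
            parts.append("".join(current).strip())
            current = []
        else:
            current.append(ch)
    parts.append("".join(current).strip())
    return parts

def prefixes(query):
    """Return the query as it looks after each successive instruction is appended."""
    parts = split_pipeline(query)
    return [" | ".join(parts[: i + 1]) for i in range(len(parts))]

query = "Status:200 | where UserAgent like '%Chrome%' | extend urlParam=split_part(Uri, '/', 3)"

# The first segment is the data source (index query); the rest are instructions.
data_source, *instructions = split_pipeline(query)

# Each prefix is a query that could be run on its own to inspect the result so far.
for step in prefixes(query):
    print(step)
```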

In log query scenarios, SPL works in scan mode and can directly process unstructured raw data, regardless of whether indexes have been created or which index types are used. During scanning, you are billed based on the actual amount of data scanned. For more information, see Scan query overview [3].

Although scan queries and index queries have different working principles, they are completely unified in the user interface (console query, GetLogs API).

When you enter only an index query statement, the query runs through the index.

If you then append a pipe operator and SPL instructions, the results of the index filter are automatically processed in scan mode (you do not need to click the scan mode button), and the console indicates that you are now in SPL input mode.

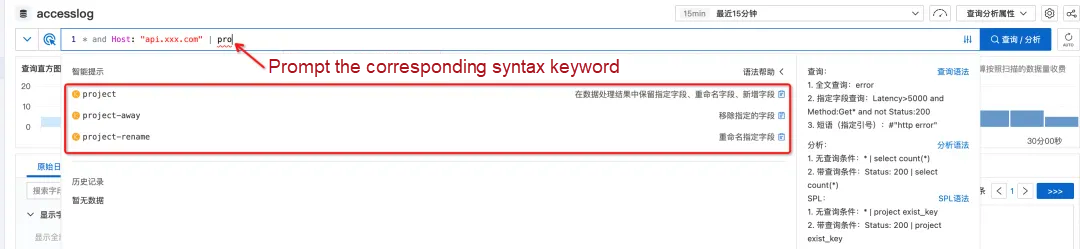

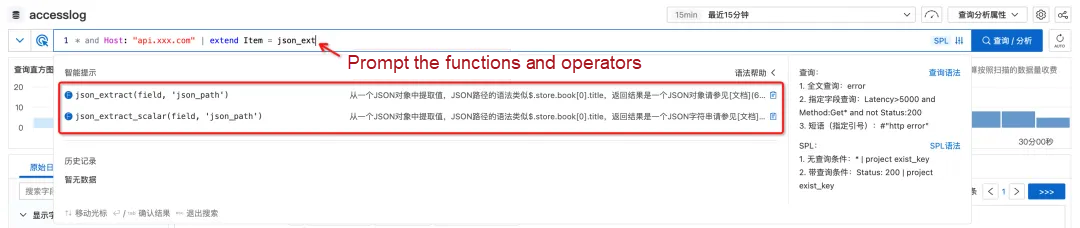

In addition, when you query data in the console, the system automatically identifies the current syntax mode and provides intelligent prompts for SPL-related commands and functions.

As you type, the drop-down list automatically suggests matching syntax keywords and functions.

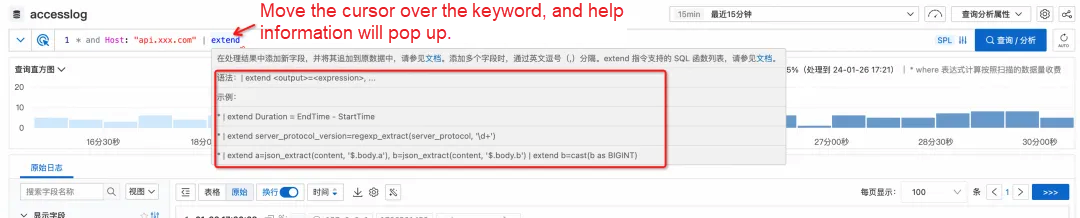

If you forget the syntax, you do not have to leave the current interface to look up the documentation. Simply move the cursor over the keyword, and detailed help information pops up.

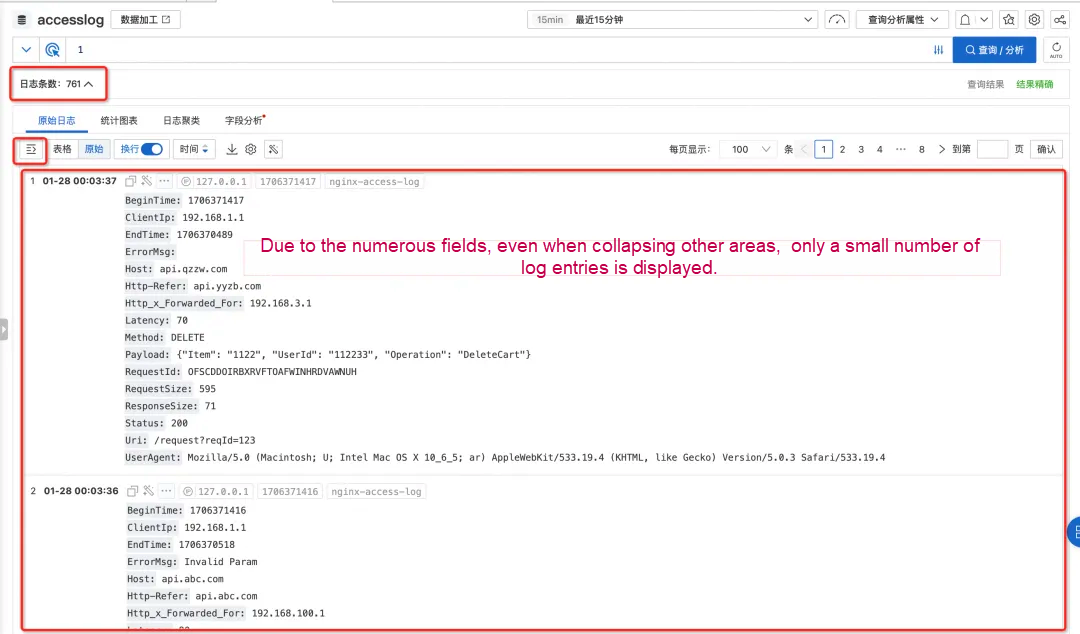

When writing logs, we usually record as much relevant information as possible to meet potential future analysis requirements, so a single log often ends up with many fields.

In this case, a single log takes up too much space in the query results of the SLS console. Even with the bar chart at the top and the quick analysis bar on the side collapsed, only one or two logs are visible in the raw log area at a time. You have to keep scrolling and paging to view other logs, which is inconvenient.

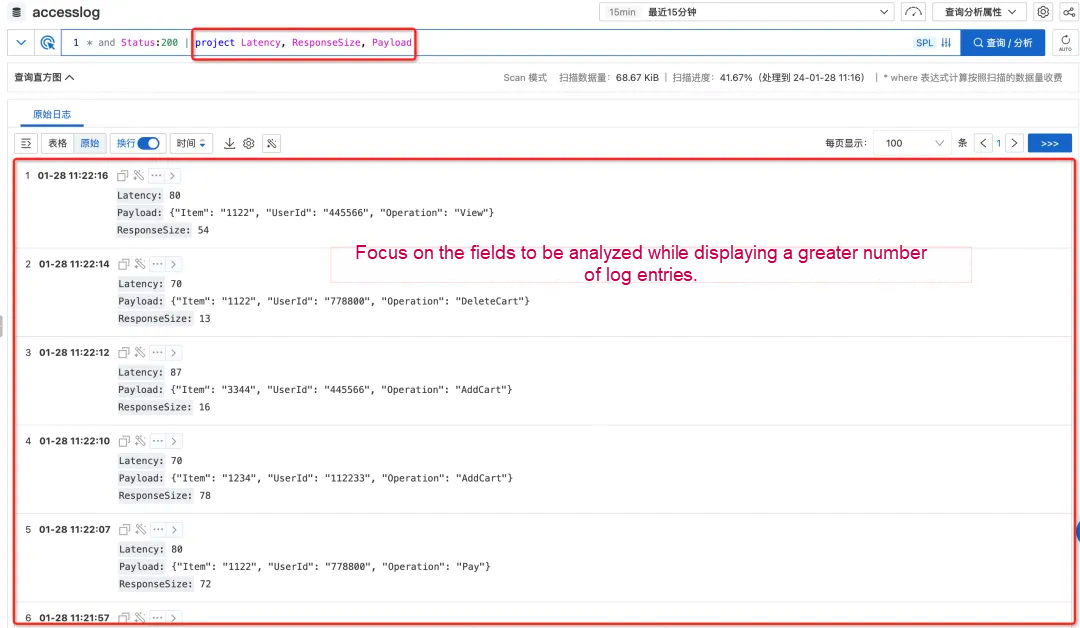

However, when we query logs, we usually search with a specific purpose in mind, which means we only care about some of the fields. In this case, you can use the project instruction in SPL to keep only the fields you care about (or use the project-away instruction to remove the fields you do not need to see). This not only removes distractions and keeps the focus on the relevant fields, but also lets you preview more logs at the same time because each log has fewer fields.
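The effect of these two instructions on a log record can be modeled in a few lines of Python. This is a sketch of the semantics only, not SLS code: the sample record is hypothetical, and silently skipping a field that is absent from a record is an assumption of this model.

```python
def project(records, *fields):
    """Keep only the named fields of each record (a model of `project`)."""
    return [{k: r[k] for k in fields if k in r} for r in records]

def project_away(records, *fields):
    """Drop the named fields from each record (a model of `project-away`)."""
    return [{k: v for k, v in r.items() if k not in fields} for r in records]

# Hypothetical log record with more fields than we want to look at.
logs = [
    {
        "Status": "200",
        "Uri": "/api/users/42",
        "UserAgent": "Mozilla/5.0 Chrome/120.0",
        "BeginTime": "1700000000",
        "EndTime": "1700000050",
        "RequestId": "abc",
    }
]

kept = project(logs, "Status", "Uri")
trimmed = project_away(logs, "UserAgent", "RequestId")
print(kept)
print(trimmed)
```

Both helpers build new records, so the stored logs are left untouched, and the narrower records are what make more logs fit on one screen.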

As mentioned earlier, analysis requirements cannot be fully foreseen when logs are written. Therefore, when analyzing logs, it is often necessary to process existing fields and extract new ones. This can be achieved with the extend instruction of SPL.

With the extend instruction, you can call a wide range of functions (most of which are shared with SQL syntax) for scalar processing.

Status:200 | extend urlParam=split_part(Uri, '/', 3)

You can also calculate new fields from multiple fields, such as the difference between two numeric fields. (Note: Fields are treated as varchar by default, so you must convert them with cast before performing numeric calculations.)

Status:200 | extend timeRange = cast(BeginTime as bigint) - cast(EndTime as bigint)

Index queries can only search by keywords, phrases composed of multiple keywords, and fuzzy matching on the end of a keyword. In scan mode, you can filter on a variety of conditions by using a where clause, which scan queries already support. After the upgrade to SPL, where can be placed at any level of the pipeline to filter on newly calculated fields, providing more flexible and powerful filtering capabilities.

For example, after timeRange is calculated from BeginTime and EndTime, you can filter on the calculated value.

Status:200

| where UserAgent like '%Chrome%'

| extend timeRange = cast(BeginTime as bigint) - cast(EndTime as bigint)

| where timeRange > 86400

Sometimes, logs contain semi-structured data such as JSON and CSV. We can use the extend instruction to extract one of the sub-fields. However, if there are many sub-fields to analyze, we need to write a large number of field extraction calls such as json_extract_scalar or regexp_extract, which is inconvenient.

SPL provides commands such as parse-json and parse-csv, which can directly expand JSON and CSV fields into independent fields, and then directly operate on these fields. This method eliminates the overhead of writing the field extraction function, which is more convenient in interactive query scenarios.
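What "expanding a field into independent fields" means can be illustrated with a small Python model. This is a sketch under stated assumptions rather than the SLS implementation: the field names `Payload` and `Row` are hypothetical, the model keeps the source field, and it stores every expanded value as text.

```python
import csv
import json

def parse_json(record, field):
    """Expand the top-level keys of a JSON string field into separate fields."""
    expanded = dict(record)
    for key, value in json.loads(record[field]).items():
        # Assumed: values are kept as text; non-string values stay JSON-encoded.
        expanded[key] = value if isinstance(value, str) else json.dumps(value)
    return expanded

def parse_csv(record, field, names):
    """Split a CSV string field into the given output field names."""
    values = next(csv.reader([record[field]]))
    return {**record, **dict(zip(names, values))}

# Hypothetical log record carrying one JSON field and one CSV field.
record = {
    "Status": "200",
    "Payload": '{"user": "alice", "latency": 35, "tags": ["a", "b"]}',
    "Row": 'alice,"Hangzhou, China",35',
}

after_json = parse_json(record, "Payload")
after_csv = parse_csv(record, "Row", ["name", "city", "age"])
print(after_json["user"], after_json["latency"])
print(after_csv["city"])
```

A single call replaces one extraction function per sub-field, which is the convenience the paragraph above describes.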

Let's see this in action. During log exploration, the data is processed step by step through the pipeline as SPL instructions are entered one after another. At each step, the query result page shows the effect of the processing you have in mind, and through this step-by-step interactive exploration you finally extract the structured information you need to analyze.
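The same step-by-step flow can be mimicked in Python to show how each stage feeds the next. This is an illustrative model only: the sample records are hypothetical, `where` and `extend` are stand-ins for the SPL instructions of the same name, and `split_part` is a simplified 1-based splitter that returns `None` when the part does not exist.

```python
def where(records, predicate):
    """Keep the records for which the predicate holds."""
    return [r for r in records if predicate(r)]

def extend(records, **new_fields):
    """Add computed fields; each value is a function of the record."""
    return [{**r, **{name: fn(r) for name, fn in new_fields.items()}} for r in records]

def split_part(text, delimiter, index):
    """Return the index-th part (1-based) of text, or None if it does not exist."""
    parts = text.split(delimiter)
    return parts[index - 1] if 0 < index <= len(parts) else None

# Hypothetical logs that already matched the index query Status:200.
logs = [
    {"Status": "200", "Uri": "/api/users/42", "UserAgent": "Mozilla/5.0 Chrome/120.0"},
    {"Status": "200", "Uri": "/api/orders/7", "UserAgent": "Mozilla/5.0 Chrome/120.0"},
    {"Status": "200", "Uri": "/api/users/9", "UserAgent": "curl/8.4.0"},
]

# Step 1: filter on an existing field.
step1 = where(logs, lambda r: "Chrome" in r["UserAgent"])
# Step 2: derive a new field from an existing one.
step2 = extend(step1, urlParam=lambda r: split_part(r["Uri"], "/", 3))
# Step 3: filter again, this time on the field computed in step 2.
step3 = where(step2, lambda r: r["urlParam"] == "users")

for label, result in (("step1", step1), ("step2", step2), ("step3", step3)):
    print(label, result)
```

Printing every intermediate result corresponds to running the query after each appended instruction, and filtering on `urlParam` in the last step is only possible because the filter can sit after the stage that computes it.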

Due to the diversity of data sources and the uncertainty of analysis requirements, log data is often stored directly as unstructured raw data, which brings certain challenges to query and analysis.

SLS provides the unified log processing language SPL. In log query scenarios, you can use multi-level pipelines to explore data in an interactive and progressive way. This makes it easier to discover data features and better perform subsequent structured analysis, processing, and consumption.

SPL support for log queries is now available in all regions, and you are welcome to try it. If you have any questions or requirements, submit a ticket to let us know. SLS will continue to work hard to build an easier-to-use, more stable, and more powerful observability analysis platform.

[1] SPL overview

https://www.alibabacloud.com/help/en/sls/user-guide/spl-overview

[2] SPL instructions

https://www.alibabacloud.com/help/en/sls/user-guide/spl-instruction?spm=a2c4g.11186623.0.0.197c59d4pRrjml

[3] Scan query overview

https://www.alibabacloud.com/help/en/sls/user-guide/scan-based-query-overview

[1] The Sushi Principle

https://www.datasapiens.co.uk/blog/the-sushi-principle

[2] Unix Commands, Pipes, and Processes

https://itnext.io/unix-commands-pipes-and-processes-6e22a5fbf749

[3] SPL overview

https://www.alibabacloud.com/help/en/sls/user-guide/spl-overview

[4] Scan query overview

https://www.alibabacloud.com/help/en/sls/user-guide/scan-based-query-overview

[5] SLS architecture upgrade-lower cost, higher performance, more stable, and easier to use https://login.alibaba-inc.com/ssoLogin.htm?APP_NAME=ata&BACK_URL=https%3A%2F%2Fata.atatech.org%2Farticles%2F11020158038&CONTEXT_PATH=%2F
