By Xinsheng, an Alibaba Cloud Technical Expert

Since OpenYurt was open-sourced, its non-intrusive architecture for integrating cloud-native and edge computing has attracted attention from many developers. Alibaba Cloud launched the open-source OpenYurt project to share its experience in cloud-native edge computing with the open-source community, accelerate the extension of cloud computing to the edge, and work with the community to define unified standards for future cloud-native edge computing architectures. We published the Deep-Dive Into OpenYurt article series to help the community understand OpenYurt. The third article in this series describes the extended capabilities of YurtHub.


OpenYurt is a cloud-native edge computing solution that was made open-source one year after ACK@Edge was released. OpenYurt is different from other open-source containerized edge computing solutions, as it adheres to the design philosophy of extending your native Kubernetes to the edge without requiring any modifications to Kubernetes. It can instantly convert Kubernetes clusters to OpenYurt clusters to give native Kubernetes clusters edge cluster capabilities.

OpenYurt will adhere to the following development concepts during evolution:

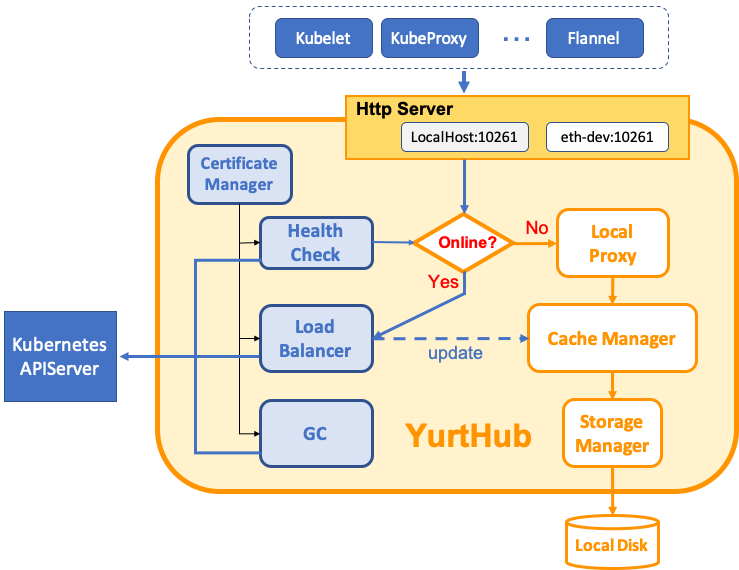

In the previous article, we introduced the OpenYurt edge autonomy design and discussed the YurtHub component. The following figure shows the YurtHub architecture:

One of YurtHub's advantages is its compatibility with the Kubernetes design, which makes it easier for YurtHub to extend more capabilities. Next, we will describe YurtHub's extended capabilities in detail.

Edge network autonomy keeps cross-node communication working so that edge workloads can continue running, or recover automatically, when the edge is disconnected from the cloud and service containers or edge nodes are restarted.

To ensure edge network autonomy, OpenYurt needs to meet the following requirements (here, a Flannel VXLAN overlay network is used as an example):

According to requirement 1, kube-proxy, Flannel, CoreDNS, and other network components must themselves be autonomous so that network configurations survive a disconnection. If edge autonomy were implemented by reconstructing the kubelet, achieving edge network autonomy would be very difficult. Forcibly migrating the autonomy capabilities of the reconstructed kubelet into each network component, such as kube-proxy, Flannel, and CoreDNS, would be a nightmare for the entire architecture.

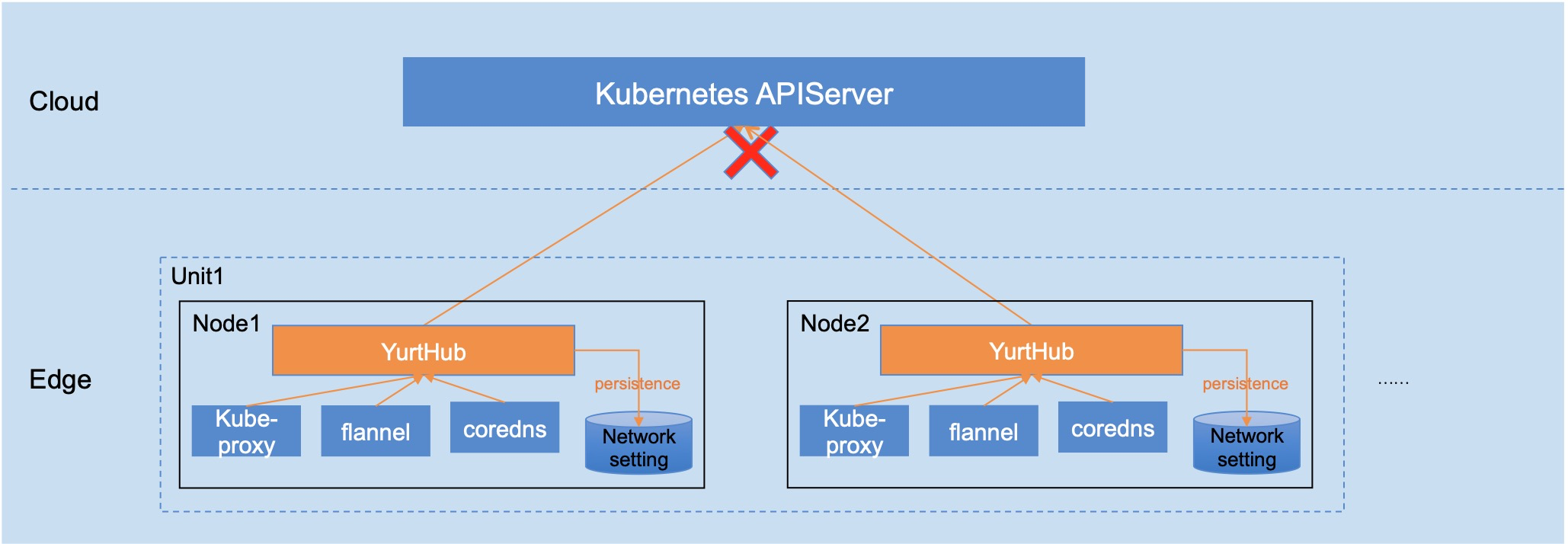

In OpenYurt, YurtHub is an independent component, so network components such as kube-proxy, Flannel, and CoreDNS can easily use YurtHub to achieve autonomous network configurations. YurtHub caches network configuration resources, such as Services, in local storage. When the network is disconnected or nodes are restarted, network components can still obtain the state and configuration of objects as they were before the network interruption, as shown in the following figure:

Requirements 2 and 3 are independent of the Kubernetes core and involve Container Network Interface (CNI) plugins and flanneld enhancement. We will describe them in detail in subsequent articles.
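The caching behavior described for requirement 1 can be illustrated with a minimal sketch. This is not YurtHub's implementation (YurtHub is written in Go); the class name `CachingProxy`, the `fetch_from_cloud` callback, and the JSON-file cache layout are all hypothetical and exist only to show the idea: write every successful cloud response to local disk, and answer from disk when the cloud is unreachable, even after a restart.

```python
import json
import os
from typing import Callable


class CachingProxy:
    """Toy stand-in for a caching proxy between node components and the cloud.

    Hypothetical illustration only; names and storage layout are assumptions.
    """

    def __init__(self, cache_dir: str, fetch_from_cloud: Callable[[str], dict]):
        self.cache_dir = cache_dir
        self.fetch_from_cloud = fetch_from_cloud
        os.makedirs(cache_dir, exist_ok=True)

    def _cache_path(self, key: str) -> str:
        # Flatten a request path such as "/api/v1/services/web" into a file name.
        return os.path.join(self.cache_dir, key.strip("/").replace("/", "_") + ".json")

    def get(self, key: str) -> dict:
        try:
            obj = self.fetch_from_cloud(key)
        except ConnectionError:
            # Cloud unreachable: fall back to the last state saved on disk.
            path = self._cache_path(key)
            if not os.path.exists(path):
                raise  # Nothing cached for this object, so the error is surfaced.
            with open(path, encoding="utf-8") as f:
                return json.load(f)
        # Cloud reachable: refresh the on-disk copy before returning.
        with open(self._cache_path(key), "w", encoding="utf-8") as f:
            json.dump(obj, f)
        return obj
```

Because the cache lives on disk rather than in memory, a new `CachingProxy` created after a node restart can still serve the objects fetched before the disconnection, which is the property the network components rely on.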

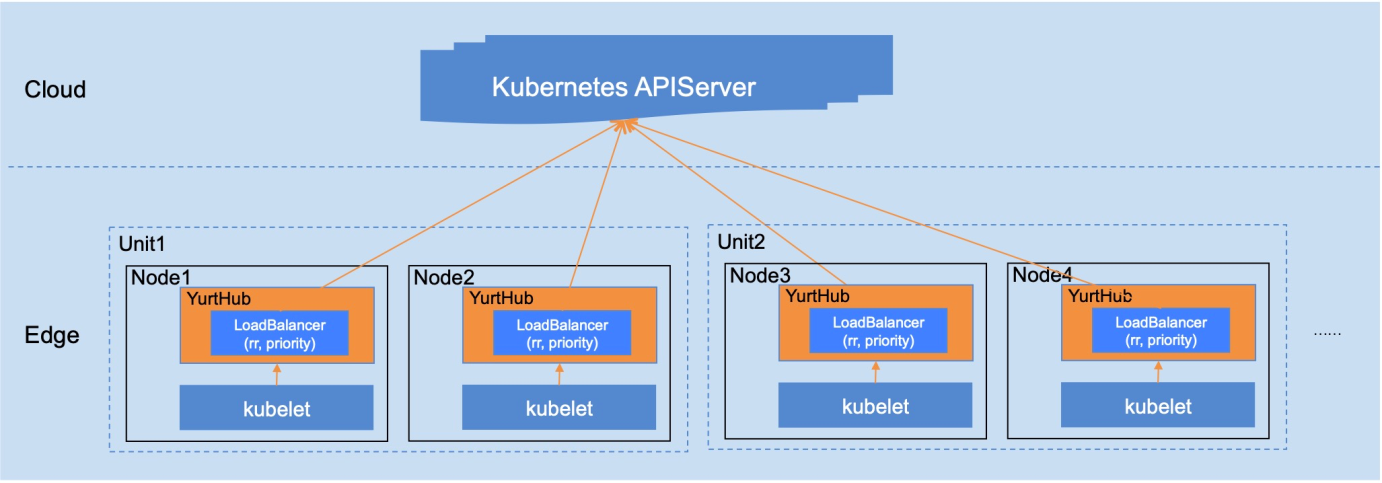

When a Kubernetes cluster is deployed on a public cloud in high availability (HA) mode, a Server Load Balancer (SLB) instance sits between the multiple kube-apiserver instances and the edge. However, in private cloud or edge computing scenarios, edge nodes need to use different cloud addresses to access the kube-apiserver.

YurtHub supports cloud access with different IP addresses to meet the preceding requirements. You can use either of the following load balancing modes for cloud access addresses:

For more information, please see the LB module of YurtHub, as shown in the following figure:
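To make the idea of balancing across several cloud addresses concrete, here is a small sketch of two common selection strategies. The text above does not name the two modes, so round-robin and priority are used here as an assumption, and the class names, the `is_healthy` callback, and the example addresses are hypothetical rather than YurtHub's actual API.

```python
from typing import Callable, Iterable


class RoundRobinBalancer:
    """Rotates through healthy addresses so load is spread evenly."""

    def __init__(self, addresses: Iterable[str], is_healthy: Callable[[str], bool]):
        self.addresses = list(addresses)
        self.is_healthy = is_healthy
        self._next = 0  # Index of the address to try first on the next pick.

    def pick(self) -> str:
        n = len(self.addresses)
        for offset in range(n):
            idx = (self._next + offset) % n
            if self.is_healthy(self.addresses[idx]):
                self._next = (idx + 1) % n
                return self.addresses[idx]
        raise ConnectionError("no healthy cloud address")


class PriorityBalancer:
    """Always prefers the earliest healthy address in the configured order."""

    def __init__(self, addresses: Iterable[str], is_healthy: Callable[[str], bool]):
        self.addresses = list(addresses)
        self.is_healthy = is_healthy

    def pick(self) -> str:
        for addr in self.addresses:
            if self.is_healthy(addr):
                return addr
        raise ConnectionError("no healthy cloud address")


if __name__ == "__main__":
    down = set()  # Addresses currently considered unreachable.
    servers = ["https://10.0.0.1:6443", "https://10.0.0.2:6443"]
    rr = RoundRobinBalancer(servers, lambda a: a not in down)
    print([rr.pick() for _ in range(3)])
```

Round-robin suits a set of equivalent kube-apiserver instances, while a priority order suits a primary address with fallbacks. In both cases an unhealthy address is skipped, and a caller that gets `ConnectionError` can treat the node as disconnected from the cloud.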

Throttling is essential in a distributed system. Native Kubernetes implements throttling in the kube-apiserver from the cluster's perspective and in the client-go library from the client's perspective. In edge computing scenarios, client-go throttling is decentralized, because each client limits only itself, and it is somewhat intrusive to application code. Therefore, it cannot solve the throttling problem on its own.

At the edge, YurtHub can take over the cloud access traffic from both system components and service containers and apply node-level throttling to requests sent to the cloud. When the number of concurrent requests to the cloud from a single node exceeds 250, YurtHub rejects new requests.
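A node-level limit on concurrent requests can be sketched as an in-flight counter. The 250 threshold comes from the text above; everything else (the `InFlightLimiter` class, the `TooManyRequests` exception, and the context-manager interface) is a hypothetical illustration, not YurtHub's code.

```python
import threading
from contextlib import contextmanager


class TooManyRequests(Exception):
    """Raised when the node already has the maximum number of requests in flight."""


class InFlightLimiter:
    def __init__(self, max_in_flight: int = 250):
        self.max_in_flight = max_in_flight
        self._in_flight = 0
        self._lock = threading.Lock()

    @contextmanager
    def slot(self):
        # Admit the request only if doing so keeps the node at or under the limit.
        with self._lock:
            if self._in_flight >= self.max_in_flight:
                raise TooManyRequests("node-level concurrency limit reached")
            self._in_flight += 1
        try:
            yield
        finally:
            # Free the slot whether the forwarded request succeeded or failed.
            with self._lock:
                self._in_flight -= 1
```

A proxy would wrap each forwarded request in `with limiter.slot(): ...` and translate `TooManyRequests` into a rejection for the caller. Because every component on the node goes through the same limiter, the limit applies to the node as a whole rather than to each client separately.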

Kubernetes supports automatic node certificate rotation. When a node certificate is about to expire, the kubelet automatically requests a new node certificate from the cloud. However, in edge computing scenarios, the kubelet may fail to rotate node certificates while the edge is disconnected from the cloud. If the connection with the cloud is restored only after a certificate has expired, the certificate may not be rotated automatically, and the kubelet restarts repeatedly.

Because YurtHub takes over the traffic between edge nodes and the cloud, it can also take over node certificate management. This avoids the inconsistencies in node certificate management that arise from using different installation tools, and ensures that expired certificates are rotated automatically after the network recovers. Currently, YurtHub works together with the kubelet for node certificate management. YurtHub will provide an independent node certificate management feature in the near future.
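The rotation behavior described above can be reduced to a small decision function. This is a sketch under stated assumptions, not YurtHub's logic: the `Action` names, the `next_action` function, and the 80% rotation point are hypothetical. The point it shows is that an offline node defers rotation instead of failing, and still rotates once the cloud is reachable again, even if the certificate has expired in the meantime.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class Action(Enum):
    KEEP = "keep"                      # Certificate is still fresh enough.
    ROTATE = "rotate"                  # Request a new certificate from the cloud now.
    WAIT_FOR_CLOUD = "wait_for_cloud"  # Rotation is due, but the cloud is unreachable.


def next_action(
    not_before: datetime,
    not_after: datetime,
    now: datetime,
    cloud_reachable: bool,
    rotate_fraction: float = 0.8,  # Assumed: rotate after 80% of the lifetime.
) -> Action:
    lifetime = not_after - not_before
    rotate_at = not_before + lifetime * rotate_fraction
    if now < rotate_at:
        return Action.KEEP
    # Rotation is due, or the certificate has already expired.
    return Action.ROTATE if cloud_reachable else Action.WAIT_FOR_CLOUD


if __name__ == "__main__":
    issued = datetime(2021, 1, 1, tzinfo=timezone.utc)
    expires = issued + timedelta(days=365)
    after_expiry = issued + timedelta(days=400)
    print(next_action(issued, expires, after_expiry, cloud_reachable=False))
    print(next_action(issued, expires, after_expiry, cloud_reachable=True))
```

A caller would evaluate `next_action` periodically and again whenever connectivity to the cloud changes, so that a node that was offline past the expiry date rotates its certificate as soon as the network recovers.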

In addition to the preceding extended capabilities, YurtHub also provides other valuable capabilities, including:

With the preceding extended capabilities, YurtHub serves as a reverse proxy on edge nodes that supports data caching. It also adds an encapsulation layer to the application lifecycle management of Kubernetes nodes and provides core control capabilities required for edge computing.

YurtHub is designed for edge computing scenarios, but it can also function as a common node component in any Kubernetes scenario. We believe that broader use of these extended capabilities will drive YurtHub to become more stable and provide better performance. We invite you to participate in the project.
