Following the launch of Qwen3.6-Plus, we are excited to open-source Qwen3.6-35B-A3B, a sparse yet remarkably capable mixture-of-experts (MoE) model with 35 billion total parameters and only 3 billion active parameters. Despite its efficiency, Qwen3.6-35B-A3B delivers outstanding agentic coding performance, surpassing its predecessor Qwen3.5-35B-A3B by a wide margin and rivaling dense models with far more active parameters, such as Qwen3.5-27B and Gemma4-31B. It continues to support both multimodal thinking and non-thinking modes, making it one of the most versatile open-source models available today. Qwen3.6-35B-A3B is now live on Qwen Studio, available through our API, and released as open weights for the community.

Qwen3.6-35B-A3B is a fully open-source MoE model (35B total / 3B active), featuring:

Qwen3.6-Flash on Alibaba Cloud Model Studio API (coming soon), or download weights from Hugging Face and ModelScope.

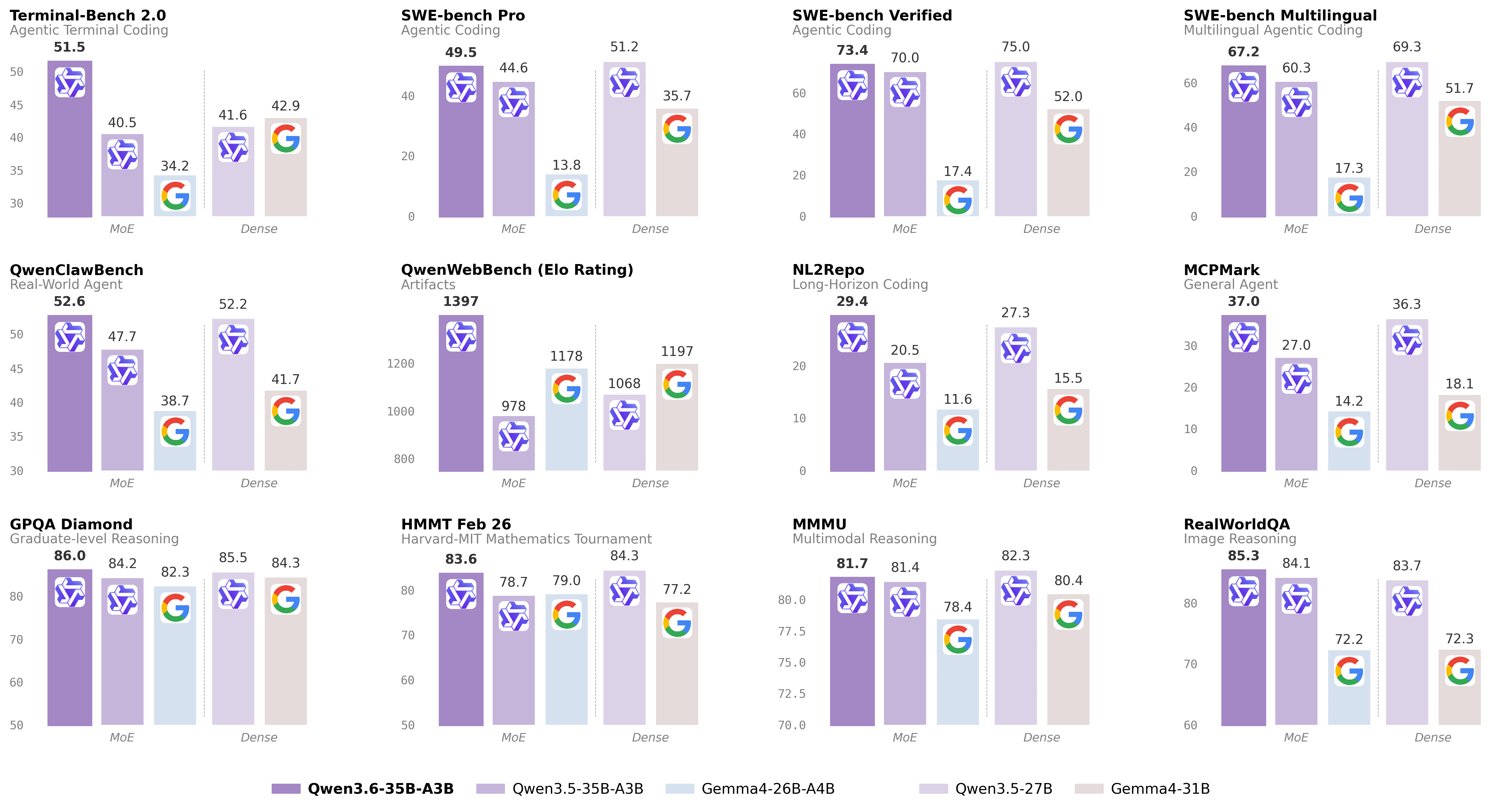

Below we present comprehensive evaluations of Qwen3.6-35B-A3B against peer-scale models across a wide range of tasks and modalities.

With only 3B active parameters, Qwen3.6-35B-A3B outperforms the dense 27B-parameter Qwen3.5-27B on several key coding benchmarks and dramatically surpasses its direct predecessor Qwen3.5-35B-A3B, especially on agentic coding and reasoning tasks.

| | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| **Coding Agent** | | | | | |
| SWE-bench Verified | 75.0 | 52.0 | 70.0 | 17.4 | 73.4 |
| SWE-bench Multilingual | 69.3 | 51.7 | 60.3 | 17.3 | 67.2 |
| SWE-bench Pro | 51.2 | 35.7 | 44.6 | 13.8 | 49.5 |
| Terminal-Bench 2.0 | 41.6 | 42.9 | 40.5 | 34.2 | 51.5 |
| Claw-Eval Avg | 64.3 | 48.5 | 65.4 | 58.8 | 68.7 |
| Claw-Eval Pass^3 | 46.2 | 25.0 | 51.0 | 28.0 | 50.0 |
| SkillsBench Avg5 | 27.2 | 23.6 | 4.4 | 12.3 | 28.7 |
| QwenClawBench | 52.2 | 41.7 | 47.7 | 38.7 | 52.6 |
| NL2Repo | 27.3 | 15.5 | 20.5 | 11.6 | 29.4 |
| QwenWebBench | 1068 | 1197 | 978 | 1178 | 1397 |
| **General Agent** | | | | | |
| TAU3-Bench | 68.4 | 67.5 | 68.9 | 59.0 | 67.2 |
| VITA-Bench | 41.8 | 43.0 | 29.1 | 36.9 | 35.6 |
| DeepPlanning | 22.6 | 24.0 | 22.8 | 16.2 | 25.9 |
| Tool Decathlon | 31.5 | 21.2 | 28.7 | 12.0 | 26.9 |
| MCPMark | 36.3 | 18.1 | 27.0 | 14.2 | 37.0 |
| MCP-Atlas | 68.4 | 57.2 | 62.4 | 50.0 | 62.8 |
| WideSearch | 66.4 | 35.2 | 59.1 | 38.3 | 60.1 |
| **Knowledge** | | | | | |
| MMLU-Pro | 86.1 | 85.2 | 85.3 | 82.6 | 85.2 |
| MMLU-Redux | 93.2 | 93.7 | 93.3 | 92.7 | 93.3 |
| SuperGPQA | 65.6 | 65.7 | 63.4 | 61.4 | 64.7 |
| C-Eval | 90.5 | 82.6 | 90.2 | 82.5 | 90.0 |
| **STEM & Reasoning** | | | | | |
| GPQA | 85.5 | 84.3 | 84.2 | 82.3 | 86.0 |
| HLE | 24.3 | 19.5 | 22.4 | 8.7 | 21.4 |
| LiveCodeBench v6 | 80.7 | 80.0 | 74.6 | 77.1 | 80.4 |
| HMMT Feb 25 | 92.0 | 88.7 | 89.0 | 91.7 | 90.7 |
| HMMT Nov 25 | 89.8 | 87.5 | 89.2 | 87.5 | 89.1 |
| HMMT Feb 26 | 84.3 | 77.2 | 78.7 | 79.0 | 83.6 |
| IMOAnswerBench | 79.9 | 74.5 | 76.8 | 74.3 | 78.9 |
| AIME26 | 92.6 | 89.2 | 91.0 | 88.3 | 92.7 |

* SWE-Bench Series: Internal agent scaffold (bash + file-edit tools); temp=1.0, top_p=0.95, 200K context window. We correct some problematic tasks in the public set of SWE-bench Pro and evaluate all baselines on the refined benchmark.

* Terminal-Bench 2.0: Harbor/Terminus-2 harness; 3h timeout, 32 CPU/48 GB RAM; temp=1.0, top_p=0.95, top_k=20, max_tokens=80K, 256K ctx; avg of 5 runs.

* SkillsBench: Evaluated via OpenCode on 78 tasks (self-contained subset, excluding API-dependent tasks); avg of 5 runs.

* NL2Repo: Others are evaluated via Claude Code (temp=1.0, top_p=0.95, max_turns=900).

* QwenClawBench: An internal real-user-distribution Claw agent benchmark (open-sourcing soon); temp=0.6, 256K ctx.

* QwenWebBench: An internal front-end code generation benchmark; bilingual (EN/CN), 7 categories (Web Design, Web Apps, Games, SVG, Data Visualization, Animation, and 3D); auto-render + multimodal judge (code/visual correctness); BT/Elo rating system.

* TAU3-Bench: We use the official user model (gpt-5.2, low reasoning effort) + default BM25 retrieval.

* VITA-Bench: Average of subdomain scores, judged by claude-4-sonnet because the official judge (claude-3.7-sonnet) is no longer available.

* MCPMark: GitHub MCP v0.30.3; Playwright responses truncated at 32K tokens.

* MCP-Atlas: Public set score; gemini-2.5-pro as judge.

* AIME 26: We use the full AIME 2026 (I & II), so the scores may differ from those in the Qwen3.5 notes.
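The QwenWebBench row is a rating rather than a percentage, so it helps to translate rating gaps into expected head-to-head outcomes. The sketch below is only illustrative: it assumes the conventional Elo logistic curve with a 400-point scale, which the notes above do not confirm for QwenWebBench, and the helper names `elo_expected_score` and `expected_vs_all` are hypothetical. The ratings are copied from the table above.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the standard Elo curve.

    ASSUMPTION: the 400-point scale is the chess convention; the benchmark's
    actual BT/Elo scale is not stated in this post.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# QwenWebBench ratings copied from the table above.
ratings = {
    "Qwen3.5-27B": 1068,
    "Gemma4-31B": 1197,
    "Qwen3.5-35B-A3B": 978,
    "Gemma4-26BA4B": 1178,
    "Qwen3.6-35B-A3B": 1397,
}


def expected_vs_all(target: str, table: dict) -> dict:
    """Expected score of `target` against every other model in `table`."""
    return {
        name: elo_expected_score(table[target], rating)
        for name, rating in table.items()
        if name != target
    }


for name, p in expected_vs_all("Qwen3.6-35B-A3B", ratings).items():
    print(f"Qwen3.6-35B-A3B vs {name}: expected score {p:.2f}")
```

Under these assumptions, a gap of a few hundred rating points corresponds to one model being preferred in the large majority of pairwise comparisons; a different scale would change the exact probabilities but not the ordering.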

Qwen3.6 is natively multimodal, and Qwen3.6-35B-A3B showcases perception and multimodal reasoning capabilities that far exceed what its size would suggest, with only around 3 billion activated parameters. Across most vision-language benchmarks, its performance matches Claude Sonnet 4.5, and even surpasses it on several tasks. Its strengths are particularly evident in spatial intelligence, where it achieves 92.0 on RefCOCO and 50.8 on ODInW13.

| | Qwen3.5-27B | Claude-Sonnet-4.5 | Gemma4-31B | Gemma4-26BA4B | Qwen3.5-35B-A3B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|---|
| **STEM and Puzzle** | | | | | | |
| MMMU | 82.3 | 79.6 | 80.4 | 78.4 | 81.4 | 81.7 |
| MMMU-Pro | 75.0 | 68.4 | 76.9* | 73.8* | 75.1 | 75.3 |
| Mathvista(mini) | 87.8 | 79.8 | 79.3 | 79.4 | 86.2 | 86.4 |
| ZEROBench_sub | 36.2 | 26.3 | 26.0 | 26.3 | 34.1 | 34.4 |
| **General VQA** | | | | | | |
| RealWorldQA | 83.7 | 70.3 | 72.3 | 72.2 | 84.1 | 85.3 |
| MMBenchEN-DEV-v1.1 | 92.6 | 88.3 | 90.9 | 89.0 | 91.5 | 92.8 |
| SimpleVQA | 56.0 | 57.6 | 52.9 | 52.2 | 58.3 | 58.9 |
| HallusionBench | 70.0 | 59.9 | 67.4 | 66.1 | 67.9 | 69.8 |
| **Text Recognition and Document Understanding** | | | | | | |
| OmniDocBench1.5 | 88.9 | 85.8 | 80.1 | 74.4 | 89.3 | 89.9 |
| CharXiv(RQ) | 79.5 | 67.2 | 67.9 | 69.0 | 77.5 | 78.0 |
| CC-OCR | 81.0 | 68.1 | 75.7 | 74.5 | 80.7 | 81.9 |
| AI2D_TEST | 92.9 | 87.0 | 89.0 | 88.3 | 92.6 | 92.7 |
| **Spatial Intelligence** | | | | | | |
| RefCOCO(avg) | 90.9 | -- | -- | -- | 89.2 | 92.0 |
| ODInW13 | 41.1 | -- | -- | -- | 42.6 | 50.8 |
| EmbSpatialBench | 84.5 | 71.8 | -- | -- | 83.1 | 84.3 |
| RefSpatialBench | 67.7 | -- | -- | -- | 63.5 | 64.3 |
| **Video Understanding** | | | | | | |
| VideoMME(w sub.) | 87.0 | 81.1 | -- | -- | 86.6 | 86.6 |
| VideoMME(w/o sub.) | 82.8 | 75.3 | -- | -- | 82.5 | 82.5 |
| VideoMMMU | 82.3 | 77.6 | 81.6 | 76.0 | 80.4 | 83.7 |
| MLVU | 85.9 | 72.8 | -- | -- | 85.6 | 86.2 |
| MVBench | 74.6 | -- | -- | -- | 74.8 | 74.6 |
| LVBench | 73.6 | -- | -- | -- | 71.4 | 71.4 |

* Empty cells (--) indicate scores not available or not applicable.
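The comparison with Claude Sonnet 4.5 made before the table can be checked directly from the reported numbers. The sketch below is a simple tally, not part of the evaluation pipeline: the score pairs are copied from the table above, rows where Claude-Sonnet-4.5 has no reported score (`--`) are left out, and the helper name `margins` is hypothetical.

```python
# (Claude-Sonnet-4.5, Qwen3.6-35B-A3B) scores copied from the table above.
# Rows with "--" for Claude-Sonnet-4.5 are omitted because no comparison is possible.
scores = {
    "MMMU": (79.6, 81.7),
    "MMMU-Pro": (68.4, 75.3),
    "Mathvista(mini)": (79.8, 86.4),
    "ZEROBench_sub": (26.3, 34.4),
    "RealWorldQA": (70.3, 85.3),
    "MMBenchEN-DEV-v1.1": (88.3, 92.8),
    "SimpleVQA": (57.6, 58.9),
    "HallusionBench": (59.9, 69.8),
    "OmniDocBench1.5": (85.8, 89.9),
    "CharXiv(RQ)": (67.2, 78.0),
    "CC-OCR": (68.1, 81.9),
    "AI2D_TEST": (87.0, 92.7),
    "EmbSpatialBench": (71.8, 84.3),
    "VideoMME(w sub.)": (81.1, 86.6),
    "VideoMME(w/o sub.)": (75.3, 82.5),
    "VideoMMMU": (77.6, 83.7),
    "MLVU": (72.8, 86.2),
}


def margins(table: dict) -> list:
    """Return (benchmark, qwen_minus_claude) pairs, smallest margin first."""
    diffs = [(name, round(qwen - claude, 1)) for name, (claude, qwen) in table.items()]
    return sorted(diffs, key=lambda item: item[1])


ranked = margins(scores)
ahead = sum(1 for _, diff in ranked if diff > 0)
print(f"Qwen3.6-35B-A3B is ahead on {ahead} of {len(ranked)} comparable benchmarks")
print("Smallest margin:", ranked[0])
print("Largest margin:", ranked[-1])
```

A per-benchmark margin like this says nothing about statistical significance, so small gaps are best read as parity.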

Qwen3.6-35B-A3B is coming soon to Alibaba Cloud Model Studio. Please stand by until we are fully ready.

Qwen3.6-35B-A3B is available as open weights on Hugging Face and ModelScope for self-hosting, and through the Alibaba Cloud Model Studio API as qwen3.6-flash. You can also try it instantly on Qwen Studio.

The model can be seamlessly integrated with popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code, to streamline development workflows and enable efficient, context-aware coding experiences.

This release supports the `preserve_thinking` option, which keeps the thinking content from all preceding turns in the message history. We recommend enabling it for agentic tasks.

Alibaba Cloud Model Studio supports industry-standard protocols, including chat completions and responses APIs compatible with OpenAI’s specification, as well as an API interface compatible with Anthropic.

Example code for the chat completions API is provided below:

```python
"""
Environment variables (per official docs):
  DASHSCOPE_API_KEY: Your API Key from https://modelstudio.console.alibabacloud.com
  DASHSCOPE_BASE_URL: (optional) Base URL for compatible-mode API.
    - Beijing: https://dashscope.aliyuncs.com/compatible-mode/v1
    - Singapore: https://dashscope-intl.aliyuncs.com/compatible-mode/v1
    - US (Virginia): https://dashscope-us.aliyuncs.com/compatible-mode/v1
  DASHSCOPE_MODEL: (optional) Model name; override for different models.
"""
from openai import OpenAI
import os

api_key = os.environ.get("DASHSCOPE_API_KEY")
if not api_key:
    raise ValueError(
        "DASHSCOPE_API_KEY is required. "
        "Set it via: export DASHSCOPE_API_KEY='your-api-key'"
    )

client = OpenAI(
    api_key=api_key,
    base_url=os.environ.get(
        "DASHSCOPE_BASE_URL",
        "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
    ),
)

messages = [{"role": "user", "content": "Introduce vibe coding."}]

model = os.environ.get(
    "DASHSCOPE_MODEL",
    "qwen3.6-flash",
)

completion = client.chat.completions.create(
    model=model,
    messages=messages,
    extra_body={
        "enable_thinking": True,
        # "preserve_thinking": True,
    },
    stream=True
)

reasoning_content = ""  # Full reasoning trace
answer_content = ""  # Full response
is_answering = False  # Whether we have entered the answer phase

print("\n" + "=" * 20 + "Reasoning" + "=" * 20 + "\n")

for chunk in completion:
    # A chunk without choices carries only usage statistics
    if not chunk.choices:
        print("\nUsage:")
        print(chunk.usage)
        continue

    delta = chunk.choices[0].delta

    # Collect reasoning content only
    if hasattr(delta, "reasoning_content") and delta.reasoning_content is not None:
        if not is_answering:
            print(delta.reasoning_content, end="", flush=True)
        reasoning_content += delta.reasoning_content

    # Received content, start answer phase
    if hasattr(delta, "content") and delta.content:
        if not is_answering:
            print("\n" + "=" * 20 + "Answer" + "=" * 20 + "\n")
            is_answering = True
        print(delta.content, end="", flush=True)
        answer_content += delta.content
```

For more information, please visit the API doc.
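For multi-turn agentic use, the reasoning and answer text collected from one response can be carried into the next request when `preserve_thinking` is turned on. The following is a minimal sketch of that bookkeeping, not the official client logic. It makes no network calls, the helper names `append_assistant_turn` and `build_request` are hypothetical, and passing earlier reasoning back as a `reasoning_content` field on assistant messages is an assumption about the request format that should be checked against the API doc.

```python
from typing import Any, Dict, List, Optional

Message = Dict[str, Any]


def append_assistant_turn(
    messages: List[Message],
    answer: str,
    reasoning: Optional[str] = None,
    keep_reasoning: bool = True,
) -> List[Message]:
    """Return a new history with the assistant turn appended (input is not mutated)."""
    turn: Message = {"role": "assistant", "content": answer}
    if keep_reasoning and reasoning:
        # ASSUMPTION: the field name mirrors the streamed `delta.reasoning_content`.
        turn["reasoning_content"] = reasoning
    return [*messages, turn]


def build_request(
    model: str,
    messages: List[Message],
    enable_thinking: bool = True,
    preserve_thinking: bool = False,
) -> Dict[str, Any]:
    """Build keyword arguments for `client.chat.completions.create(**kwargs)`."""
    extra_body: Dict[str, Any] = {"enable_thinking": enable_thinking}
    if preserve_thinking:
        extra_body["preserve_thinking"] = True
    return {
        "model": model,
        "messages": messages,
        "extra_body": extra_body,
        "stream": True,
    }


# Placeholder strings stand in for the text collected from a streamed response.
history = [{"role": "user", "content": "Introduce vibe coding."}]
history = append_assistant_turn(
    history,
    answer="(answer text from the first response)",
    reasoning="(reasoning text from the first response)",
)
history.append({"role": "user", "content": "Give a short example."})

request = build_request("qwen3.6-flash", history, preserve_thinking=True)
print(request["extra_body"])
```

Keeping the history update in a pure function makes it easy to switch `keep_reasoning` off for plain chat, where replaying earlier reasoning mostly adds tokens.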

Qwen3.6-35B-A3B features excellent agentic coding capabilities and can be seamlessly integrated into popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code.

Qwen3.6-35B-A3B is compatible with OpenClaw (formerly Moltbot / Clawdbot), a self-hosted open-source AI coding agent. Connect it to Model Studio to get a full agentic coding experience in the terminal. Get started with the following script:

```bash
# Node.js 22+
curl -fsSL https://molt.bot/install.sh | bash   # macOS / Linux

# Set your API key
export DASHSCOPE_API_KEY=<your_api_key>

# Launch OpenClaw
openclaw dashboard   # web browser
# openclaw tui       # Open a new terminal and start the TUI
```

On first use, edit `~/.openclaw/openclaw.json` to point OpenClaw at Model Studio. Find or create the following fields and merge them. To preserve your existing settings, do not overwrite the entire file:

```json
{
  "models": {
    "mode": "merge",
    "providers": {
      "modelstudio": {
        "baseUrl": "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
        "apiKey": "DASHSCOPE_API_KEY",
        "api": "openai-completions",
        "models": [
          {
            "id": "qwen3.6-flash",
            "name": "qwen3.6-flash",
            "reasoning": true,
            "input": ["text", "image"],
            "contextWindow": 131072,
            "maxTokens": 16384
          }
        ]
      }
    }
  },
  "agents": {
    "defaults": {
      "model": {
        "primary": "modelstudio/qwen3.6-flash"
      },
      "models": {
        "modelstudio/qwen3.6-flash": {}
      }
    }
  }
}
```

Qwen3.6-35B-A3B is compatible with Qwen Code, an open-source AI agent designed for the terminal and deeply optimized for the Qwen Series. Get started with the following script:

```bash
# Node.js 20+
npm install -g @qwen-code/qwen-code@latest

# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you’ll be prompted to sign in. You can run `/auth` anytime to switch authentication methods.

The Qwen APIs also support the Anthropic API protocol, so you can use them with tools like Claude Code:

```bash
# Install Claude Code
npm install -g @anthropic-ai/claude-code

# Configure environment
export ANTHROPIC_MODEL="qwen3.6-flash"
export ANTHROPIC_SMALL_FAST_MODEL="qwen3.6-flash"
export ANTHROPIC_BASE_URL=https://dashscope-intl.aliyuncs.com/apps/anthropic
export ANTHROPIC_AUTH_TOKEN=<your_api_key>

# Launch the CLI
claude
```

Qwen3.6-35B-A3B demonstrates that sparse MoE models can achieve remarkable agentic coding and reasoning capability. With only 3B active parameters, it delivers performance that rivals dense models several times its active size, while also excelling across multimodal benchmarks. As a fully open-source checkpoint, it sets a new standard for what’s possible at its scale.

Looking ahead, we will continue to expand the Qwen3.6 open-source family and push the boundaries of what efficient, open models can accomplish. We are grateful for the community’s feedback and look forward to seeing what you build with Qwen3.6-35B-A3B. Stay tuned for our future releases!

Feel free to cite the following article if you find Qwen3.6-35B-A3B helpful:

```bibtex
@misc{qwen36_35b_a3b,
    title = {{Qwen3.6-35B-A3B}: Agentic Coding Power, Now Open to All},
    url = {https://qwen.ai/blog?id=qwen3.6-35b-a3b},
    author = {{Qwen Team}},
    month = {April},
    year = {2026}
}
```
