Following the launch of Qwen3.6-Plus and Qwen3.6-35B-A3B, we are excited to open-source Qwen3.6-27B, a dense 27-billion-parameter multimodal model at the scale the community has asked for most. It still supports both multimodal thinking and non-thinking modes, and it delivers flagship-level agentic coding performance, surpassing the previous-generation open-source flagship Qwen3.5-397B-A17B (397B total / 17B active MoE) across all major coding benchmarks. As a dense architecture, it is straightforward to deploy without MoE routing complexity, making it an ideal choice for developers who need top-tier coding capabilities at a practical, widely deployable scale. Qwen3.6-27B is now live on Qwen Studio, available through our API, and released as open weights for the community.

Qwen3.6-27B is a fully open-source dense model (27B parameters), featuring:

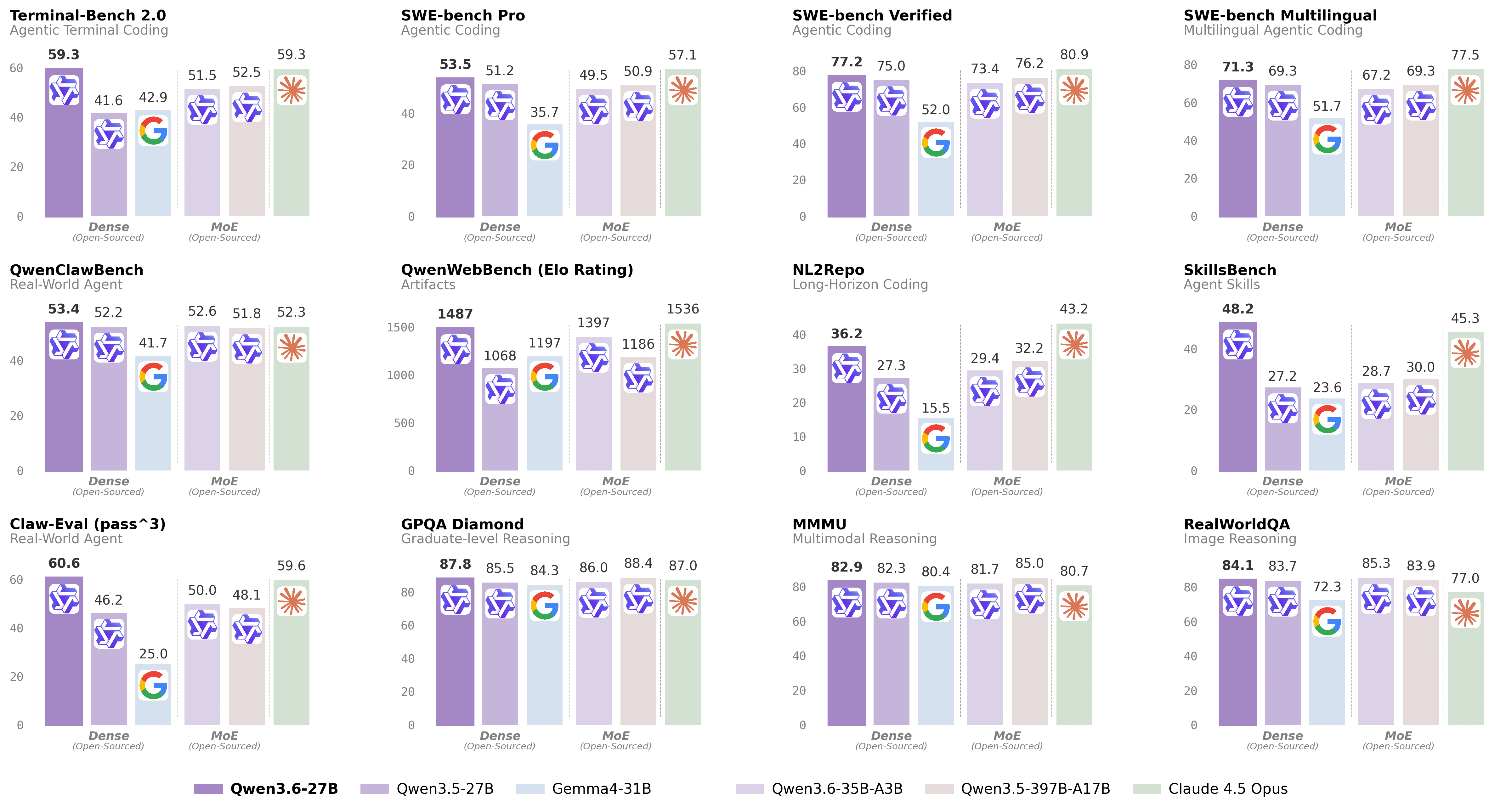

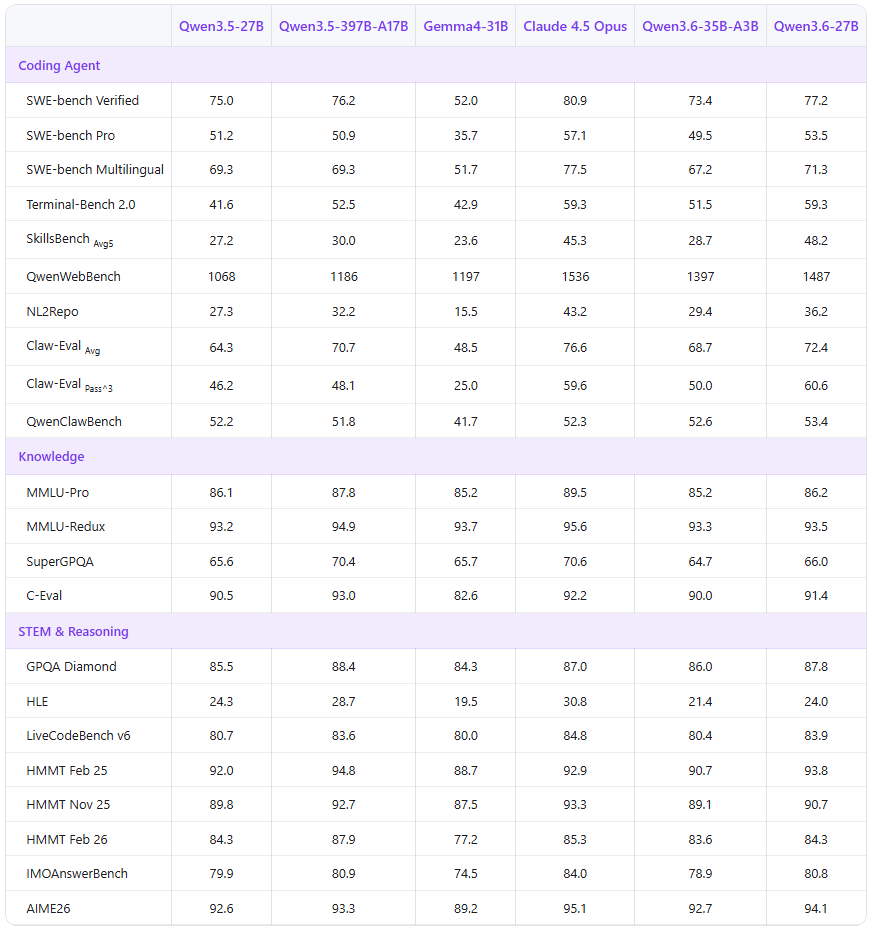

Below we present comprehensive evaluations of Qwen3.6-27B against both dense and MoE baselines, including our previous-generation open-source flagship Qwen3.5-397B-A17B. Qwen3.6-27B delivers remarkable improvements across agentic coding benchmarks, surpassing models with up to 15x its total parameter count.

Qwen3.6-27B achieves a breakthrough in agentic coding for dense models. With only 27B parameters, it outperforms the Qwen3.5-397B-A17B (397B total / 17B active) on every major coding benchmark — including SWE-bench Verified (77.2 vs. 76.2), SWE-bench Pro (53.5 vs. 50.9), Terminal-Bench 2.0 (59.3 vs. 52.5), and SkillsBench (48.2 vs. 30.0). It also surpasses all peer-scale dense models by a wide margin. On reasoning tasks, Qwen3.6-27B achieves 87.8 on GPQA Diamond, competitive with models several times its size.
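To make the size of these gaps concrete, the short sketch below recomputes the point margins from the scores quoted above, along with the total-parameter ratio between the two models. The numbers are copied from this post; the helper name `margins` is only illustrative.

```python
# Scores quoted in the paragraph above: (Qwen3.6-27B, Qwen3.5-397B-A17B).
scores = {
    "SWE-bench Verified": (77.2, 76.2),
    "SWE-bench Pro": (53.5, 50.9),
    "Terminal-Bench 2.0": (59.3, 52.5),
    "SkillsBench": (48.2, 30.0),
}


def margins(table):
    """Return the absolute point gap (new minus old) per benchmark."""
    return {name: round(new - old, 1) for name, (new, old) in table.items()}


gaps = margins(scores)
for name, gap in gaps.items():
    print(f"{name}: +{gap} points")

# Total-parameter ratio between the two models (397B vs. 27B).
param_ratio = 397 / 27
print(f"Parameter ratio: {param_ratio:.1f}x")
```

The largest margin is on SkillsBench, and the ratio of roughly 14.7 is what the "up to 15x" figure rounds from.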

* SWE-Bench Series: Internal agent scaffold (bash + file-edit tools); temp=1.0, top_p=0.95, 200K context window. We correct some problematic tasks in the public set of SWE-bench Pro and evaluate all baselines on the refined benchmark.

* Terminal-Bench 2.0: Harbor/Terminus-2 harness; 3h timeout, 32 CPU/48 GB RAM; temp=1.0, top_p=0.95, top_k=20, max_tokens=80K, 256K ctx; avg of 5 runs.

* SkillsBench: Evaluated via OpenCode on 78 tasks (self-contained subset, excluding API-dependent tasks); avg of 5 runs.

* NL2Repo: Others are evaluated via Claude Code (temp=1.0, top_p=0.95, max_turns=900).

* QwenClawBench: A real-user-distribution Claw agent benchmark; temp=0.6, 256K ctx.

* QwenWebBench: An internal front-end code generation benchmark; bilingual (EN/CN), 7 categories (Web Design, Web Apps, Games, SVG, Data Visualization, Animation, and 3D); auto-render + multimodal judge (code/visual correctness); BT/Elo rating system.

* AIME 26: We use the full AIME 2026 (I & II), so the scores may differ from those in the Qwen3.5 notes.
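The QwenWebBench note mentions a BT/Elo rating system without giving details. As a rough illustration of how pairwise judge verdicts can be turned into ratings, here is a generic Elo update. It is a sketch of the textbook formula, not the benchmark's actual procedure; the model names, the starting rating of 1000, and the K-factor of 32 are all assumptions.

```python
def expected_score(rating_a, rating_b):
    """Probability that A beats B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(ratings, winner, loser, k=32.0):
    """Apply one pairwise verdict in place and return the ratings dict."""
    exp_win = expected_score(ratings[winner], ratings[loser])
    delta = k * (1.0 - exp_win)  # the less expected the win, the bigger the move
    ratings[winner] += delta
    ratings[loser] -= delta      # zero-sum: total rating mass is conserved
    return ratings


# Hypothetical judge verdicts: (winner, loser) for each rendered comparison.
verdicts = [("model_a", "model_b"), ("model_a", "model_c"), ("model_b", "model_c")]
ratings = {"model_a": 1000.0, "model_b": 1000.0, "model_c": 1000.0}
for winner, loser in verdicts:
    update_elo(ratings, winner, loser)

print(sorted(ratings.items(), key=lambda kv: -kv[1]))
```

A Bradley-Terry fit would estimate all strengths jointly from the full set of comparisons rather than updating sequentially, so the two approaches can rank close competitors differently.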

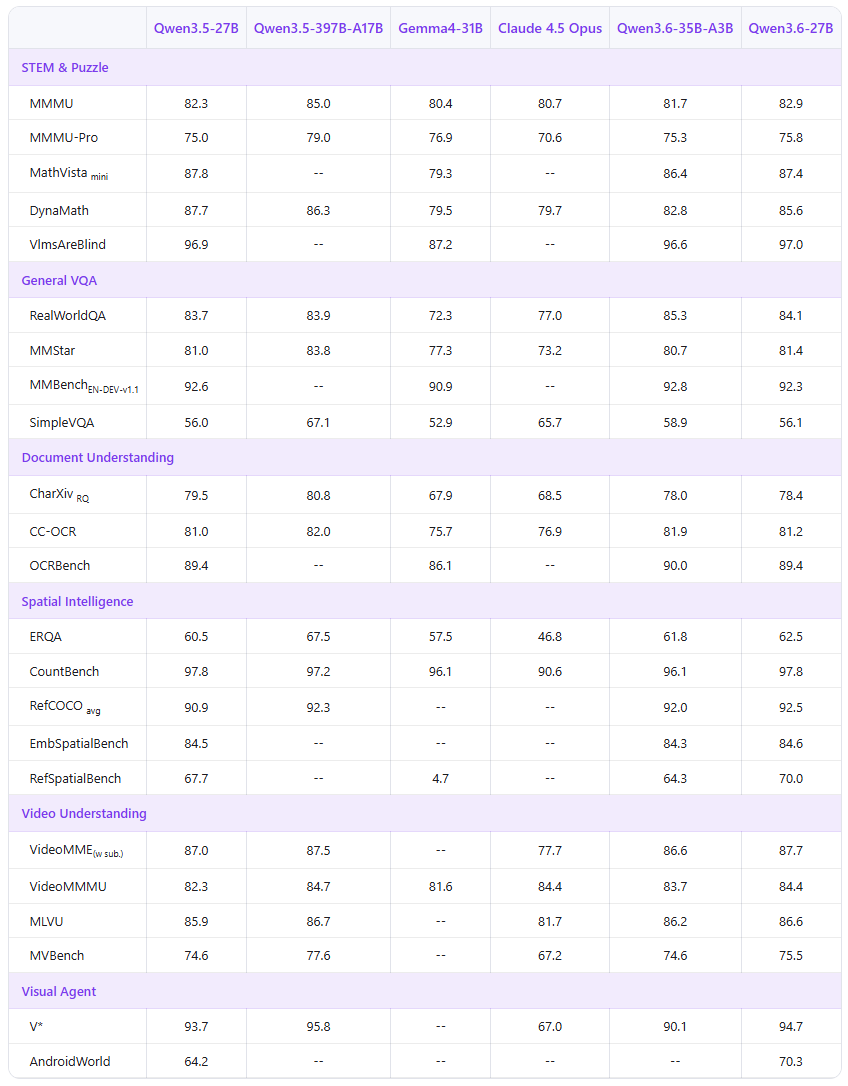

Qwen3.6-27B is natively multimodal, supporting both vision-language thinking and non-thinking modes in a single unified checkpoint — the same as Qwen3.6-35B-A3B. It handles images and video alongside text, enabling multimodal reasoning, document understanding, and visual question answering.

* Empty cells (--) indicate scores not yet available or not applicable.

Qwen3.6-27B is coming soon to Alibaba Cloud Model Studio. Please stand by until we are fully ready.

Qwen3.6-27B is available as open weights on Hugging Face and ModelScope for self-hosting, and through the Alibaba Cloud Model Studio API. You can also try it instantly on Qwen Studio.

The model can be seamlessly integrated with popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code, to streamline development workflows and enable efficient, context-aware coding experiences.

This release supports the preserve_thinking feature, which keeps the thinking content from all preceding turns in the message history. Enabling it is recommended for agentic tasks.
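A minimal sketch of how a multi-turn request might be assembled with this feature turned on is shown below. The `enable_thinking` and `preserve_thinking` flags are the ones used in this post's chat completions example; the idea that earlier assistant turns carry their thinking in a `reasoning_content` field on the request side is an assumption, as is the helper `build_request`, so check the API doc for the exact message format.

```python
def build_request(history, user_message, model="qwen3.6-27b", preserve_thinking=True):
    """Return keyword arguments for client.chat.completions.create().

    history: prior message dicts. Assistant entries may carry a
    "reasoning_content" key (assumed request-side field name).
    """
    messages = []
    for msg in history:
        kept = dict(msg)  # copy so the caller's history is not mutated
        if not preserve_thinking:
            # Drop earlier thinking when the feature is off.
            kept.pop("reasoning_content", None)
        messages.append(kept)
    messages.append({"role": "user", "content": user_message})
    return {
        "model": model,
        "messages": messages,
        "extra_body": {
            "enable_thinking": True,
            "preserve_thinking": preserve_thinking,
        },
    }


history = [
    {"role": "user", "content": "List the files in the repo."},
    {
        "role": "assistant",
        "content": "I found three files.",
        "reasoning_content": "The user wants a listing; call the file tool.",
    },
]
request = build_request(history, "Now open the largest one.")
print(request["extra_body"])
```

The returned dictionary can be unpacked into the client call, for example `client.chat.completions.create(**request, stream=True)`.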

Alibaba Cloud Model Studio supports industry-standard protocols, including chat completions and responses APIs compatible with OpenAI’s specification, as well as an API interface compatible with Anthropic.

Example code for the chat completions API is provided below:

```python
"""
Environment variables (per official docs):
  DASHSCOPE_API_KEY: Your API Key from https://modelstudio.console.alibabacloud.com
  DASHSCOPE_BASE_URL: (optional) Base URL for compatible-mode API.
    - Beijing: https://dashscope.aliyuncs.com/compatible-mode/v1
    - Singapore: https://dashscope-intl.aliyuncs.com/compatible-mode/v1
    - US (Virginia): https://dashscope-us.aliyuncs.com/compatible-mode/v1
  DASHSCOPE_MODEL: (optional) Model name; override for different models.
"""
from openai import OpenAI
import os

api_key = os.environ.get("DASHSCOPE_API_KEY")
if not api_key:
    raise ValueError(
        "DASHSCOPE_API_KEY is required. "
        "Set it via: export DASHSCOPE_API_KEY='your-api-key'"
    )

client = OpenAI(
    api_key=api_key,
    base_url=os.environ.get(
        "DASHSCOPE_BASE_URL",
        "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
    ),
)

messages = [{"role": "user", "content": "Introduce vibe coding."}]

model = os.environ.get(
    "DASHSCOPE_MODEL",
    "qwen3.6-27b",
)

completion = client.chat.completions.create(
    model=model,
    messages=messages,
    extra_body={
        "enable_thinking": True,
        # "preserve_thinking": True,
    },
    stream=True
)

reasoning_content = ""  # Full reasoning trace
answer_content = ""  # Full response
is_answering = False  # Whether we have entered the answer phase

print("\n" + "=" * 20 + "Reasoning" + "=" * 20 + "\n")

for chunk in completion:
    if not chunk.choices:
        print("\nUsage:")
        print(chunk.usage)
        continue

    delta = chunk.choices[0].delta

    # Collect reasoning content only
    if hasattr(delta, "reasoning_content") and delta.reasoning_content is not None:
        if not is_answering:
            print(delta.reasoning_content, end="", flush=True)
        reasoning_content += delta.reasoning_content

    # Received content, start answer phase
    if hasattr(delta, "content") and delta.content:
        if not is_answering:
            print("\n" + "=" * 20 + "Answer" + "=" * 20 + "\n")
            is_answering = True
        print(delta.content, end="", flush=True)
        answer_content += delta.content
```

For more information, please visit the API doc.
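The chat completions example lists three regional base URLs and lets `DASHSCOPE_BASE_URL` override the default. One way to make that choice explicit is a small resolver like the sketch below. The URLs are the ones listed in the example; the region keys (`beijing`, `singapore`, `us-virginia`) and the helper `resolve_base_url` are hypothetical names introduced for illustration.

```python
import os

# Regional compatible-mode endpoints listed in the example's docstring.
BASE_URLS = {
    "beijing": "https://dashscope.aliyuncs.com/compatible-mode/v1",
    "singapore": "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
    "us-virginia": "https://dashscope-us.aliyuncs.com/compatible-mode/v1",
}


def resolve_base_url(region="singapore", env=None):
    """Pick a base URL: an explicit DASHSCOPE_BASE_URL wins, else the region default."""
    env = os.environ if env is None else env
    override = env.get("DASHSCOPE_BASE_URL")
    if override:
        return override
    try:
        return BASE_URLS[region.lower()]
    except KeyError:
        raise ValueError(
            f"Unknown region {region!r}; expected one of {sorted(BASE_URLS)}"
        ) from None


# Passing env={} ignores any variable set in the real environment.
print(resolve_base_url("beijing", env={}))
```

The result can be passed as `base_url=` when constructing the `OpenAI` client. The default region here mirrors the Singapore endpoint that the example falls back to.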

Qwen3.6-27B features excellent agentic coding capabilities and can be seamlessly integrated into popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code.

Qwen3.6-27B is compatible with OpenClaw (formerly Moltbot / Clawdbot), a self-hosted open-source AI coding agent. Connect it to Model Studio to get a full agentic coding experience in the terminal. Get started with the following script:

```bash
# Node.js 22+
curl -fsSL https://molt.bot/install.sh | bash # macOS / Linux

# Set your API key
export DASHSCOPE_API_KEY=<your_api_key>

# Launch OpenClaw
openclaw dashboard # web browser
# openclaw tui # Open a new terminal and start the TUI
```

On first use, edit ~/.openclaw/openclaw.json to point OpenClaw at Model Studio. Find or create the following fields and merge them into the file. To preserve your existing settings, do not overwrite the entire file:

```json
{
  "models": {
    "mode": "merge",
    "providers": {
      "modelstudio": {
        "baseUrl": "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
        "apiKey": "DASHSCOPE_API_KEY",
        "api": "openai-completions",
        "models": [
          {
            "id": "qwen3.6-27b",
            "name": "qwen3.6-27b",
            "reasoning": true,
            "input": ["text", "image"],
            "contextWindow": 131072,
            "maxTokens": 16384
          }
        ]
      }
    }
  },
  "agents": {
    "defaults": {
      "model": {
        "primary": "modelstudio/qwen3.6-27b"
      },
      "models": {
        "modelstudio/qwen3.6-27b": {}
      }
    }
  }
}
```

Qwen3.6-27B is compatible with Qwen Code, an open-source AI agent designed for the terminal and deeply optimized for the Qwen Series. Get started with the following script:

```bash
# Node.js 20+
npm install -g @qwen-code/qwen-code@latest

# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you’ll be prompted to sign in. You can run /auth anytime to switch authentication methods.

Qwen APIs also support the Anthropic API protocol, so you can use them with tools like Claude Code for an elevated coding experience:

```bash
# Install Claude Code
npm install -g @anthropic-ai/claude-code

# Configure environment
export ANTHROPIC_MODEL="qwen3.6-27b"
export ANTHROPIC_SMALL_FAST_MODEL="qwen3.6-27b"
export ANTHROPIC_BASE_URL=https://dashscope-intl.aliyuncs.com/apps/anthropic
export ANTHROPIC_AUTH_TOKEN=<your_api_key>

# Launch the CLI
claude
```

Qwen3.6-27B demonstrates that a well-trained dense model can surpass much larger predecessors on the tasks that matter most for developers. At 27 billion parameters, the most widely deployed open-source scale, it outperforms the 397B-parameter Qwen3.5-397B-A17B on every major agentic coding benchmark, while remaining straightforward to deploy and serve. With Qwen3.6-27B joining the roster, the Qwen3.6 open-source family now offers a comprehensive range of models, underscoring a generation where agentic coding achieved breakthroughs across every scale, from the 3B-active Qwen3.6-35B-A3B to the API-accessible Qwen3.6-Plus and Qwen3.6-Max-Preview. We are grateful for the community’s feedback and look forward to seeing what you build with these models. Stay tuned for more from the Qwen team!

Feel free to cite the following article if you find Qwen3.6-27B helpful:

```bibtex
@misc{qwen36_27b,
  title  = {{Qwen3.6-27B}: Flagship-Level Coding in a 27B Dense Model},
  url    = {https://qwen.ai/blog?id=qwen3.6-27b},
  author = {{Qwen Team}},
  month  = {April},
  year   = {2026}
}
```
