Agentic SOC collects logs from Kafka, Amazon S3, and Alibaba Cloud Object Storage Service (OSS) into a unified log analysis and security operations center, giving you consistent visibility across cloud environments.

How it works

The import flow has four stages:

Choose an import channel (Kafka, S3, or OSS) based on your latency requirements and data source.

Deliver source logs to that channel.

Grant Agentic SOC permission to read from the channel.

Create a data import task and configure parsing rules.

Prerequisites

Before you begin, ensure that you have:

An active Security Center subscription with the Agentic SOC feature enabled

Access credentials (AccessKey pairs or passwords) for your log source

Endpoint and bucket or topic information for the chosen import channel

Choose an import channel

Select the channel that matches your data source, latency needs, and cost requirements.

| Channel | Best for | Latency | Supported formats | Cost notes |

|---|---|---|---|---|

| Kafka | Real-time log stream analysis; self-hosted platforms exporting via Kafka | Low | JSON, text (raw log) | Configuration is more complex than S3 or OSS |

| S3 (or S3-compatible storage) | Sources where minute-level latency is acceptable; any source supporting the S3 protocol | Minute-level | CSV, JSON, text, multi-line text | Simple setup; works with AWS S3, Tencent Cloud COS, Huawei Cloud OBS |

| OSS | Log data already stored in Alibaba Cloud OSS | Minute-level | CSV, single-line JSON, text, multi-line text, ORC, Parquet, OSS access log, Alibaba Cloud CDN download log | Tightly integrated with the Alibaba Cloud ecosystem; same-region access is free |

Deliver logs to the import channel

Agentic SOC pulls data from Kafka, S3, or OSS — it does not reach into your source systems directly. Route your source logs to the chosen channel first.

Common delivery methods:

OSS: Store log data in an Alibaba Cloud OSS bucket. See OSS Quick Start.

Huawei Cloud: Use the log dump feature in Huawei Cloud Log Tank Service (LTS) to forward logs to Kafka or Object Storage Service (OBS). See Import Huawei Cloud log data.

Tencent Cloud: Use the log dump feature in Tencent Cloud Log Service (CLS) to forward logs to Kafka or Cloud Object Storage (COS). See Import Tencent Cloud log data.

Azure: Azure Event Hubs is compatible with the Apache Kafka protocol. Agentic SOC can read from an Event Hub as if it were a Kafka service. See Import Azure log data.

Save the access credentials, endpoints, and bucket or topic names from this step — you'll need them when configuring the import task.

Grant Security Center access

Before creating an import task, grant Agentic SOC permission to read from the channel.

Kafka or S3 → Grant access for Kafka and S3

OSS → Grant access for OSS

Grant access for Kafka and S3

Log on to the Security Center console and navigate to System Settings > Feature Settings. In the upper-left corner, select the region where your assets are located: Chinese Mainland or Outside Chinese Mainland.

On the Multi-cloud Configuration Management tab, select Multi-cloud Assets, then click Grant Permission.

In the panel that appears, select IDC from the drop-down list.

When configuring a generic Kafka or S3-compatible service (such as AWS S3, Tencent Cloud COS, or Huawei Cloud OBS), select IDC. This is a UI classification only and does not affect functionality.

Configure the connection parameters based on your channel type.

Kafka parameters

| Parameter | Description |

|---|---|

| Service Provider | Select Apache |

| Service | Select Kafka |

| Endpoint | The public endpoint for Kafka. For Azure Event Hubs, use <YOUR-NAMESPACE>.servicebus.windows.net:9093 |

| Username/Password | Kafka credentials. For Azure Event Hubs, the default username is $ConnectionString and the password is the primary connection string |

| Communication Protocol | Maps to security.protocol in Kafka config. Options: plaintext (default), sasl_plaintext, sasl_ssl, ssl |

| SASL Authentication Mechanism | Maps to sasl.mechanism in Kafka config. Options: plain, SCRAM-SHA-256, SCRAM-SHA-512 |
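
Because Communication Protocol and SASL Authentication Mechanism map to standard Kafka client properties, it can help to test the same values with a Kafka client before entering them in the console. The sketch below builds a librdkafka-style property dictionary for the Azure Event Hubs case. The helper name, the `contoso` namespace, and the placeholder connection string are hypothetical, and the choice of `SASL_SSL` with `PLAIN` is an assumption based on how the Event Hubs Kafka endpoint is normally accessed, not a console requirement.

```python
def build_kafka_properties(endpoint, username=None, password=None,
                           security_protocol="plaintext", sasl_mechanism=None):
    """Mirror the console fields as Kafka client properties (illustrative helper)."""
    props = {
        "bootstrap.servers": endpoint,
        # Communication Protocol -> security.protocol
        "security.protocol": security_protocol.upper(),
    }
    if props["security.protocol"].startswith("SASL"):
        if sasl_mechanism is None:
            raise ValueError("a SASL protocol requires a SASL mechanism")
        # SASL Authentication Mechanism -> sasl.mechanism
        props["sasl.mechanism"] = sasl_mechanism.upper()
        props["sasl.username"] = username
        props["sasl.password"] = password
    return props


# Hypothetical Event Hubs namespace and placeholder secret.
event_hubs_props = build_kafka_properties(
    endpoint="contoso.servicebus.windows.net:9093",
    username="$ConnectionString",
    password="<primary-connection-string>",
    security_protocol="sasl_ssl",
    sasl_mechanism="plain",
)
```

The resulting dictionary can be passed to a Kafka client that accepts librdkafka property names to confirm that the endpoint and credentials work.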

S3 parameters

| Parameter | Description |

|---|---|

| Service Provider | Select AWS-S3 |

| Service | Select S3 |

| Endpoint | The endpoint for the object storage service |

| Access Key Id / Secret Access Key | The AccessKey pair used to access S3 |

Configure the synchronization policy. AK Service Status Check: Sets the interval at which Security Center checks the validity of your AccessKey pair. Select Disable to turn off this check.

Grant access for OSS

Go to the Cloud Resource Access Authorization page and click Confirm Authorization to grant the system role permission to access OSS resources.

If you are a Resource Access Management (RAM) user, attach the following custom policy to your RAM user. For instructions, see Create a custom policy and Manage RAM user permissions.

{
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "ram:PassRole",
      "Resource": "acs:ram:*:*:role/aliyunlogimportossrole"
    },
    {
      "Effect": "Allow",
      "Action": "oss:GetBucketWebsite",
      "Resource": "*"
    },
    {
      "Effect": "Allow",
      "Action": "oss:ListBuckets",
      "Resource": "*"
    }
  ],
  "Version": "1"
}

Create a data import task

Step 1: Create a data source

Skip this step if a data source already exists.

Log on to the Security Center console and navigate to Agentic SOC > Integration Center. In the upper-left corner, select the region where your assets are located: Chinese Mainland or Outside Chinese Mainland.

On the Data Source tab, click to create a data source. For detailed steps, see Create a data source for Simple Log Service (SLS). Configure the following parameters:

| Parameter | Description |

|---|---|

| Source Data Source Type | Select Agentic SOC Dedicated Collection Channel (recommended) or User Log Service |

| Add Instances | Create a new Logstore to isolate this data from other sources |

Step 2: Configure the import source

On the Data Import tab, click Add Data, then configure the parameters for your channel type.

For parameter values such as endpoints and bucket names, refer to the official documentation of your cloud provider.

Kafka

| Parameter | Description |

|---|---|

| Endpoint | Select the Kafka public endpoint you entered when granting access |

| Topics | The Kafka topic where logs are stored. For Azure Event Hubs, the Event Hub name is the Kafka topic |

| Value Type | The log storage format |

Value Type options:

| Log format | Value Type |

|---|---|

| JSON | json |

| Raw log | text |
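
As a rough illustration of the difference between the two Value Type options, the sketch below decodes one Kafka message value either as a JSON object or as raw text. It is not Agentic SOC's parser: the function name and the `content` field used for raw text are hypothetical, and UTF-8 encoding is assumed.

```python
import json


def decode_value(raw: bytes, value_type: str) -> dict:
    """Turn one Kafka message value into log fields (illustrative only)."""
    text = raw.decode("utf-8")  # assumption: values are UTF-8 encoded
    if value_type == "json":
        record = json.loads(text)
        if not isinstance(record, dict):
            raise ValueError("expected a JSON object")
        return record
    if value_type == "text":
        # Hypothetical field name for the unparsed raw log line.
        return {"content": text}
    raise ValueError(f"unsupported value type: {value_type}")


json_log = decode_value(b'{"user": "alice", "action": "login"}', "json")
text_log = decode_value(b"Jan  1 00:00:00 host sshd[1]: session opened", "text")
```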

S3

Always set File Path Prefix Filter. If left blank, the import task traverses the entire S3 bucket, which can severely degrade performance when the bucket contains many files.

| Parameter | Description |

|---|---|

| Endpoint | The endpoint for the S3 bucket |

| S3 Bucket | The ID of the S3 bucket |

| File Path Prefix Filter | Filters objects by path prefix. For example, set to csv/ to import only objects stored in the csv/ directory |

| File Path Regex Filter | Filters files using a regular expression. Default is blank (no filtering). Example: (testdata/csv/)(.*) for a file at testdata/csv/bill.csv. Evaluated with a logical AND together with File Path Prefix Filter. See How to debug a regular expression |

| Data Format | The parsing format for the file. See S3 data formats |

| Encoding Format | Encoding of the S3 file. Options: GBK, UTF-8 |

| Compression format | Auto-detected based on the S3 data compression settings |

| Modified Time | Sets the start time to 30 minutes before the task begins. All files modified after that time, including newly added files, are imported |

| New File Check Cycle | How often the import task scans for new files |
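
The sketch below shows how the prefix and regex filters combine with a logical AND. It assumes the regular expression must match the whole object key, which the parameter table does not state, and the function name is hypothetical. Treat it as a way to test filter values locally, not as the service's matching logic.

```python
import re


def is_selected(key: str, prefix: str = "", regex: str = "") -> bool:
    """Return True when an object key passes both filters (blank means no filtering)."""
    if prefix and not key.startswith(prefix):
        return False
    # Assumption: the regex is matched against the full object key.
    if regex and re.fullmatch(regex, key) is None:
        return False
    return True


keys = ["testdata/csv/bill.csv", "testdata/json/bill.json", "archive/csv/bill.csv"]
selected = [k for k in keys if is_selected(k, prefix="testdata/", regex=r"(testdata/csv/)(.*)")]
```

Here only `testdata/csv/bill.csv` passes: the second key fails the regex and the third fails the prefix.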

S3 data formats

CSV: A separator-delimited text file. Specify the first row as field names or define them manually. Each data row is parsed as log field values.

JSON: Reads the file line by line and parses each line as a JSON object. JSON fields map to log fields.

Text: Parses each line as a single log entry.

Multi-line Text: Parses multi-line log entries using a regular expression for the first or last line.

If you select CSV or Multi-line Text, configure the additional parameters below.

CSV parameters:

| Parameter | Description |

|---|---|

| Separator | Delimiter for log fields. Default: comma (,) |

| Quote character | Quote character used in the CSV string |

| Escape Character | Escape character for log fields. Default: backslash (\) |

| Max Line Span | Maximum number of lines for a log entry that spans multiple lines. Default: 1 |

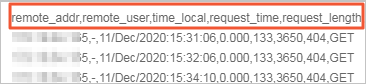

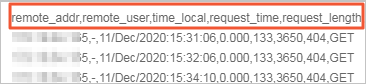

| First Row as Header | When enabled, the first row of the CSV file is used as field names. For example, the first row in the following figure is extracted as the names of the log fields. |

| Skip rows | Number of rows to skip at the start of the file. For example, 1 starts collection from the second row |
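
To preview how the CSV parameters affect parsing, the following sketch applies them with Python's standard `csv` module. It is an approximation, not the import task's parser: the function name is hypothetical, the double-quote default for the quote character is an assumption, and so is the order of operations (rows are skipped first, then the header is read).

```python
import csv
import io


def parse_csv_logs(text, separator=",", quote='"', escape="\\",
                   first_row_as_header=True, skip_rows=0, field_names=None):
    """Parse CSV text into a list of log dictionaries (illustrative only)."""
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=separator,
                        quotechar=quote, escapechar=escape)
    rows = list(reader)[skip_rows:]          # Skip rows
    if first_row_as_header and rows:         # First Row as Header
        field_names, rows = rows[0], rows[1:]
    if field_names is None:
        raise ValueError("provide field_names when the header row is not used")
    return [dict(zip(field_names, row)) for row in rows]


sample = 'exported by billing tool\ntime,user,action\n2024-01-01T00:00:00Z,alice,"login, console"\n'
logs = parse_csv_logs(sample, skip_rows=1)
```

With `skip_rows=1`, the banner line is dropped, the second line supplies the field names, and the quoted comma stays inside the `action` value.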

Multi-line Text parameters:

| Parameter | Description |

|---|---|

| Regex Match Position | Prefix Regex: Matches the first line of a log entry; the non-matching lines that follow belong to that entry until Max Lines is reached. Suffix Regex: Matches the last line of a log entry; the non-matching lines that follow belong to the next entry until Max Lines is reached |

| Regex | The regular expression based on your log content. See How to debug a regular expression |

| Max Lines | Maximum number of lines in a single log entry |
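
The Prefix Regex behavior can be sketched as follows. The function name is hypothetical, and closing the current entry when Max Lines is reached is an assumption about how the limit is enforced. The sample groups a stack trace under the timestamped line that starts it.

```python
import re


def group_by_prefix(lines, regex, max_lines):
    """Group lines into entries; a line matching `regex` starts a new entry."""
    pattern = re.compile(regex)
    entries, current = [], []
    for line in lines:
        starts_new = pattern.match(line) is not None
        # Close the open entry on a new first line or when Max Lines is reached.
        if current and (starts_new or len(current) >= max_lines):
            entries.append("\n".join(current))
            current = []
        current.append(line)
    if current:
        entries.append("\n".join(current))
    return entries


raw_lines = [
    "2024-01-01 12:00:00 ERROR request failed",
    "  at app.Handler.run(Handler.java:42)",
    "  at app.Main.main(Main.java:7)",
    "2024-01-01 12:00:01 INFO request ok",
]
entries = group_by_prefix(raw_lines, r"\d{4}-\d{2}-\d{2} ", max_lines=10)
```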

OSS

OSS import supports files up to 5 GB. For compressed files, the limit applies to the compressed size.

Always set File Path Prefix Filter. If left blank, the import task traverses the entire OSS bucket, which can degrade performance and incur unnecessary costs.

| Parameter | Description |

|---|---|

| OSS Region | The region where the OSS data is stored. Cross-region access incurs data transfer costs charged by OSS. See Billing details |

| Bucket | The OSS bucket that contains the files to import |

| File Path Prefix Filter | Filters objects by path prefix. For example, set to csv/ to import only files in the csv/ directory |

| File Path Regex Filter | Filters files using a regular expression. Default is blank (no filtering). Example: (testdata/csv/)(.*) for a file at testdata/csv/bill.csv. Evaluated with a logical AND together with File Path Prefix Filter. See How to debug a regular expression |

| Modified Time | Sets the start time to 30 minutes before the task is initiated. All files modified after that time, including newly added files, are imported |

| Data Format | The format used to parse the file. See OSS data formats |

| Encoding Format | Encoding of the OSS file. Options: GBK, UTF-8 |

| Compression format | Auto-detected based on the OSS data compression settings |

| New File Check Cycle | How often the import task scans for new files |
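
The Modified Time rule can be pictured as a cutoff 30 minutes before the task starts. The sketch below applies that cutoff to a list of object keys and modification times. The function name and sample keys are hypothetical, and treating a file modified exactly at the cutoff as included is an assumption.

```python
from datetime import datetime, timedelta, timezone


def files_to_import(objects, task_start, lookback=timedelta(minutes=30)):
    """Return keys of objects modified at or after (task_start - lookback)."""
    cutoff = task_start - lookback
    return [key for key, modified in objects if modified >= cutoff]


task_start = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
objects = [
    ("csv/old.csv", task_start - timedelta(minutes=45)),     # before the cutoff
    ("csv/recent.csv", task_start - timedelta(minutes=10)),  # inside the window
    ("csv/new.csv", task_start + timedelta(minutes=5)),      # added after start
]
picked = files_to_import(objects, task_start)
```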

OSS data formats

CSV: A separator-delimited text file. Specify the first row as field names or define them manually. Each data row is parsed as log field values.

Single-Line JSON: Reads the file line by line and parses each line as a JSON object. JSON fields map to log fields.

Text: Parses each line as a single log entry.

Multi-line Text: Parses multi-line log entries using a regular expression for the first or last line.

ORC: Apache ORC format. Automatically parsed into log format with no additional configuration.

Parquet: Apache Parquet format. Automatically parsed into log format with no additional configuration.

OSS Access Log: Format for Alibaba Cloud OSS access logs. See Log storage.

Download Alibaba Cloud CDN logs: Format for Alibaba Cloud CDN download logs. See Quick Start.

If you select CSV or Multi-line Text, configure the additional parameters below.

CSV parameters:

| Parameter | Description |

|---|---|

| Separator | Delimiter for log fields. Default: comma (,) |

| Quote character | Quote character used in the CSV string |

| Escape Character | Escape character for log fields. Default: backslash (\) |

| Max Line Span | Maximum number of lines for a log entry that spans multiple lines. Default: 1 |

| First Row as Header | When enabled, the first row of the CSV file is used as field names. For example, the first row in the following figure is extracted as the names of the log fields. |

| Skip rows | Number of rows to skip at the start of the file. For example, 1 starts collection from the second row |

Multi-line Text parameters:

| Parameter | Description |

|---|---|

| Regex Match Position | Prefix Regex: Matches the first line of a log entry; the non-matching lines that follow belong to that entry until Max Lines is reached. Suffix Regex: Matches the last line of a log entry; the non-matching lines that follow belong to the next entry until Max Lines is reached |

| Regex | The regular expression based on your log content. See How to debug a regular expression |

| Max Lines | Maximum number of lines in a single log entry |
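
For the Suffix Regex position, a matching line closes the current entry instead of opening a new one. The sketch below illustrates this under the same caveats as any local approximation: the function name is hypothetical, and closing an entry when Max Lines is reached is an assumption.

```python
import re


def group_by_suffix(lines, regex, max_lines):
    """Group lines into entries; a line matching `regex` ends the current entry."""
    pattern = re.compile(regex)
    entries, current = [], []
    for line in lines:
        current.append(line)
        # Close the entry on a matching last line or when Max Lines is reached.
        if pattern.match(line) or len(current) >= max_lines:
            entries.append("\n".join(current))
            current = []
    if current:
        entries.append("\n".join(current))
    return entries


raw_lines = ["BEGIN tx 1", "  step a", "END tx 1", "BEGIN tx 2", "END tx 2"]
entries = group_by_suffix(raw_lines, r"END ", max_lines=10)
```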

Step 3: Configure the destination

| Parameter | Description |

|---|---|

| Data Source Name | Select the data source created in Step 1 |

| Target Logstore | The Logstore configured in Step 1 |

Step 4: Save and start

Click OK to save the configuration. Security Center automatically starts pulling logs from the import channel.

Analyze imported data

After logs are ingested, configure parsing and detection rules so Agentic SOC can analyze them.

Create an integration policy

Create a new integration policy in Product integration with the following settings:

| Parameter | Description |

|---|---|

| Data Source | Select the target data source configured in the import task |

| Standardized Rule | Agentic SOC provides built-in standardized rules for some cloud products. Select one if available for your source |

| Standardization Method | When converting access logs to alert logs, only Real-time Consumption is supported |

Configure threat detection rules

Enable or create log detection rules in rule management to generate alerts and security events from the ingested logs. See Detection Rules.

Billing

Costs vary by the data source type you selected and the cloud providers you import from.

Agentic SOC and Simple Log Service (SLS) costs

| Data source type | Agentic SOC billable items | SLS billable items | Notes |

|---|---|---|---|

| Agentic SOC Dedicated Collection Channel | Log ingestion fees; log storage and write fees (consuming Log Ingestion Traffic) | Fees other than log storage and writes (such as Internet traffic) | Agentic SOC creates and manages SLS resources and is billed for Logstore storage and write fees |

| User Log Service | Log ingestion fees (consuming Log Ingestion Traffic) | All log-related fees (storage, writes, data transfer, and more) | All log resources are managed by SLS, which is billed for all log fees |

For billing details, see Agentic SOC subscription, Agentic SOC pay-as-you-go, and SLS billing overview.

OSS import costs

When importing from OSS, OSS charges for traffic and API requests. Same-region access uses the internal network (free); cross-region access uses the Internet (charged).

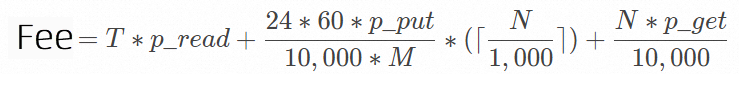

Cost formula:

| Field | Description |

|---|---|

| N | Number of files imported per day |

| T | Total data volume imported per day, in GB |

| p_read | Traffic fee per GB. Same-region: internal network outbound (free). Cross-region: Internet outbound (charged) |

| p_put | Fee per 10,000 PUT requests. SLS uses the ListObjects API to list files in the bucket (max 1,000 entries per call), which OSS bills as PUT requests |

| p_get | Fee per 10,000 GET requests |

| M | New file check interval in minutes, set by the New File Check Cycle parameter |

For OSS pricing, see OSS Pricing.
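
For a rough budget check, the fields above can be combined in a short script. The sketch below is an assumed reading of those fields, not the official formula: it assumes traffic costs T × p_read, one GET request per imported file, and one ListObjects call per 1,000 files on every scan, with 1440 / M scans per day. The function name is hypothetical and the prices in the example are placeholders, so substitute the values from OSS Pricing.

```python
import math


def estimate_daily_oss_cost(n_files, total_gb, p_read, p_put, p_get, check_minutes):
    """Estimate daily OSS charges for an import task (assumed model, see lead-in)."""
    scans_per_day = 1440 / check_minutes                 # M-minute check cycle
    list_calls = scans_per_day * math.ceil(n_files / 1000)
    traffic_fee = total_gb * p_read                      # T * p_read
    get_fee = n_files / 10_000 * p_get                   # one GET per file (assumption)
    put_fee = list_calls / 10_000 * p_put                # ListObjects billed as PUT
    return traffic_fee + get_fee + put_fee


# Placeholder prices; same-region access, so traffic is free.
daily_cost = estimate_daily_oss_cost(
    n_files=10_000, total_gb=100, p_read=0.0, p_put=1.0, p_get=1.0, check_minutes=1
)
```

Under this model, a longer New File Check Cycle reduces only the listing component, and a narrow File Path Prefix Filter reduces the number of files each scan has to list.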

Third-party channel costs

Tencent Cloud: CLS billing, COS billing

Huawei Cloud: LTS billing, Kafka billing, OBS billing

Azure: Event Hubs pricing